Essence

The synchronization between external price discovery and on-chain state finality represents the primary friction in decentralized derivative markets. Verification Delta quantifies the financial leakage occurring when the speed of market movement outpaces the latency of cryptographic confirmation. This metric defines the exposure of a liquidity pool to stale-price arbitrage, where the ledger state lags behind the global spot reality.

High volatility environments expand this delta, creating windows for toxic order flow to extract value from passive liquidity providers.

The integrity of a decentralized margin engine depends on the mathematical certainty that verification latency remains lower than the time required for price-action to invalidate collateral ratios.

Adversarial actors exploit the gap between the off-chain reality and the on-chain verified state. Verification Delta serves as a measure of “price entropy” within a protocol. When the delta is positive, the system remains solvent.

A negative delta indicates that the cost of verifying a state change exceeds the value protected by that change, leading to systemic insolvency. This relationship dictates the maximum leverage a protocol can safely offer without risking a total collapse during rapid market deleveraging events.

Origin

The genesis of this concept lies in the early failures of automated market makers and lending protocols during the “Black Thursday” event of March 2020. Ethereum congestion caused oracle updates to stall, leading to a situation where the “on-chain price” was significantly higher than the “market price.” This discrepancy allowed users to withdraw collateral that should have been liquidated, effectively exploiting a massive Verification Delta.

Oracle Latency and Block Space Competition

Early developers viewed oracles as neutral data feeds, ignoring the game-theoretic implications of block space competition. The realization that miners (now validators) could reorder transactions to profit from delayed price updates shifted the focus toward quantifying the cost of certainty. Verification Delta emerged as a formal way to describe the risk associated with “optimistic” assumptions in financial settlement.

The Shift to Proactive Risk Management

As decentralized finance matured, the need for a rigorous sensitivity analysis of verification times became apparent. Professional market makers demanded a metric that could account for the probability of a “stale state” exploit. This led to the integration of Verification Delta into the risk engines of modern perpetual and options platforms, moving beyond simple slippage calculations toward a holistic view of settlement risk.

Theory

In the rigorous framework of quantitative finance, Verification Delta is the partial derivative of the protocol’s net equity $E$ with respect to the verification time $T$. Mathematically, it is expressed as $V_\delta = \partial E / \partial T$. This value represents how much value is lost for every additional second of latency in the state transition process.

The Solvency Constraint

A protocol remains robust only if the Verification Delta is managed within specific bounds. If the price of an underlying asset $S$ moves at a velocity $dS/dt$, the verification mechanism must satisfy the condition $T < M / |dS/dt|$, where $M$ is the maintenance margin, read here as a buffer in the same price units as $S$ so that the ratio is a time. Failure to meet this condition results in a “Verification Gap,” where the system is mathematically unable to liquidate underwater positions before they reach negative equity.
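The solvency constraint can be turned into a quick numerical check. The following is a minimal sketch, not a production risk engine: the function names are hypothetical, the price velocity is read as a speed (its absolute value), and the margin `M` is assumed to be a buffer expressed in the same price units as `S`.

```python
def max_verification_latency(margin_buffer: float, price_velocity: float) -> float:
    """Upper bound on T (seconds) implied by T < M / |dS/dt|.

    margin_buffer  -- M, assumed to be in price units (illustrative assumption)
    price_velocity -- dS/dt in price units per second; only its magnitude matters
    """
    speed = abs(price_velocity)
    if speed == 0.0:
        # A static price never invalidates the margin, so any latency is tolerable.
        return float("inf")
    return margin_buffer / speed


def has_verification_gap(latency_s: float, margin_buffer: float, price_velocity: float) -> bool:
    """True when verification is too slow to liquidate before equity turns negative."""
    return latency_s >= max_verification_latency(margin_buffer, price_velocity)


# Example: a 50-unit margin buffer with the price falling 5 units per second
t_max = max_verification_latency(50.0, -5.0)  # 10.0 seconds
```

With these illustrative numbers, a 12-second confirmation time would open a Verification Gap, while a 2-second one would not.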

| Verification Type | Latency Profile | Delta Sensitivity | Solvency Risk |
|---|---|---|---|
| Optimistic Finality | High (Minutes) | Extreme | High during Volatility |
| ZK-Proof Finality | Medium (Seconds) | Moderate | Low to Moderate |
| Sequencer Pre-conf | Low (Milliseconds) | Low | Minimal |

Solvency in decentralized derivatives is a function of the race between market volatility and the computational overhead of state finality.

Adversarial Information Asymmetry

Adversarial agents monitor the mempool to identify pending price updates. By injecting trades before the verification of a new price, they capture the Verification Delta as profit. This is a form of structural arbitrage that treats the protocol’s latency as a free option.

The value of this option increases with the volatility of the underlying asset and the congestion of the underlying network.
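One rough way to see why the option grows with volatility and congestion is to estimate the expected size of the price move that accumulates during the verification window. The sketch below assumes a driftless Brownian price model, which is a simplification introduced here and not part of the original argument; `expected_stale_move` is a hypothetical helper name.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def expected_stale_move(spot: float, annual_vol: float, latency_s: float) -> float:
    """Expected absolute price move over the latency window.

    Assumes the move is normally distributed with standard deviation
    spot * annual_vol * sqrt(latency in years); for a zero-mean normal
    variable, E|X| = sigma * sqrt(2 / pi).
    """
    sigma_window = spot * annual_vol * math.sqrt(latency_s / SECONDS_PER_YEAR)
    return sigma_window * math.sqrt(2.0 / math.pi)


# Congestion shows up as a longer latency window; volatility as a larger annual_vol.
calm = expected_stale_move(spot=2000.0, annual_vol=0.6, latency_s=12.0)
stressed = expected_stale_move(spot=2000.0, annual_vol=1.2, latency_s=48.0)
```

Under this model the edge available to an arbitrageur scales linearly with volatility and with the square root of latency, so doubling volatility while quadrupling the confirmation time multiplies the expected stale move by four.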

Approach

Modern derivative systems architect their margin engines to minimize the impact of Verification Delta through a combination of off-chain computation and on-chain verification. The current standard involves using high-frequency oracle pull mechanisms rather than passive push models. Under a pull model, the price is fetched and verified at the moment a trade is initiated, which narrows the window for stale-state exploitation.

- Real-time Margin Recalculation: Protocols calculate the Verification Delta for every active position, adjusting liquidation thresholds based on current network congestion levels.

- Dynamic Fee Scaling: Trading fees increase when the Verification Delta expands, compensating liquidity providers for the heightened risk of toxic arbitrage.

- Cross-chain Synchronization: Advanced architectures use state-root bridging to verify prices across multiple layers, ensuring that the Verification Delta remains consistent across the entire liquidity network.
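The first two items in the list above can be sketched as simple parameter adjustments driven by observed verification latency. Everything here is an illustrative assumption: the `RiskParams` fields, the default values, the linear fee scaling, and the square-root margin buffer are not taken from any specific protocol.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskParams:
    # All defaults are hypothetical values chosen for illustration.
    base_fee_bps: float = 5.0               # fee under normal conditions
    max_fee_bps: float = 50.0               # cap so fees cannot grow without bound
    base_maintenance_margin: float = 0.05   # 5% of notional
    reference_latency_s: float = 2.0        # latency treated as "normal"


def scaled_fee_bps(params: RiskParams, latency_s: float) -> float:
    """Scale the trading fee linearly with latency above the reference, up to the cap."""
    ratio = max(latency_s / params.reference_latency_s, 1.0)
    return min(params.base_fee_bps * ratio, params.max_fee_bps)


def adjusted_maintenance_margin(params: RiskParams, latency_s: float,
                                per_second_vol: float) -> float:
    """Add a one-sigma price-move buffer for latency in excess of the reference."""
    excess = max(latency_s - params.reference_latency_s, 0.0)
    return params.base_maintenance_margin + per_second_vol * math.sqrt(excess)


params = RiskParams()
fee_congested = scaled_fee_bps(params, latency_s=4.0)  # 10.0 bps
margin_congested = adjusted_maintenance_margin(params, latency_s=6.0, per_second_vol=0.001)
```

The cap on fees and the floor at the base margin are design choices in this sketch: they keep the adjustments one-directional, so congestion can only make the protocol more conservative.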

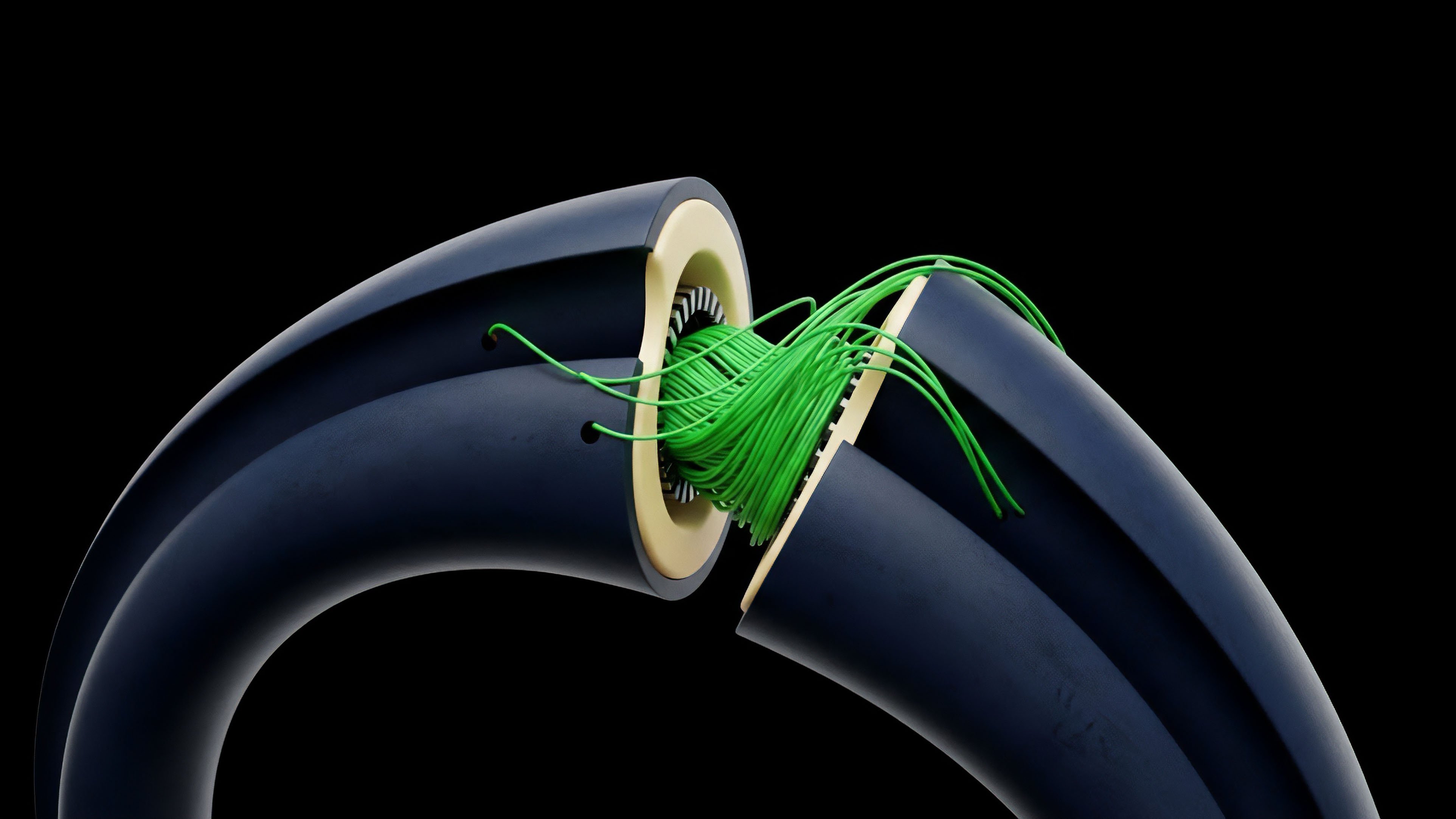

Implementing Zero-Knowledge Safeguards

The integration of Zero-Knowledge (ZK) proofs allows for the verification of complex margin requirements without the need for full on-chain state updates. This reduces the Verification Delta by decoupling the “proof of solvency” from the “finality of settlement.” By submitting a succinct proof that a position is still above the liquidation threshold, the protocol can maintain high-speed trading while ensuring mathematical integrity.

| Strategy Component | Functional Impact | Risk Mitigation Target |
|---|---|---|
| Adaptive Oracles | Reduces T | Stale Price Arbitrage |
| ZK-Margin Proofs | Decouples Verification | Liquidation Latency |
| Priority Sequencing | Guarantees Finality | Mempool Front-running |

Evolution

The transition from monolithic blockchains to modular stacks has transformed the nature of Verification Delta. In the early days, the delta was a byproduct of base-layer throughput. Today, it is a managed variable within the sequencer logic of Layer 2 networks.

The move toward App-Chains has allowed protocols to customize their consensus mechanisms specifically to minimize this delta, prioritizing financial settlement over general-purpose computation.

The maturation of decentralized finance is marked by the transition from reactive state updates to predictive verification models that anticipate market volatility.

The current state of the market reflects a sophisticated understanding of these dynamics. We see the rise of “pre-confirmations” where sequencers provide a cryptographic guarantee of inclusion before the block is even produced. This effectively reduces the Verification Delta to near-zero for the duration of the trade execution.

However, this introduces a new risk: the centralization of the sequencer. The trade-off has shifted from technical latency to trust-based finality, where the architect must decide between a decentralized but slow system and a centralized but efficient one. This tension is the defining characteristic of the current architectural era, where the pursuit of efficiency often clashes with the foundational principles of censorship resistance.

Our inability to solve this without compromise remains the primary obstacle to achieving true parity with centralized exchanges. The industry has moved beyond the naive belief that “code is law” is sufficient on its own and now acknowledges that “speed is liquidity.” As we build more complex instruments like exotic options and multi-leg strategies, the Verification Delta becomes even more volatile, requiring a level of precision in risk modeling that was previously unnecessary.

Horizon

The future of Verification Delta lies in the total abstraction of the verification process. We are moving toward a world where “Continuous Verification” is handled by decentralized AI agents that monitor global liquidity and adjust protocol parameters in real-time.

This will eliminate the “gap” by making the protocol’s state a predictive reflection of the market rather than a reactive one.

ZK-Coprocessors and Off-Chain Intelligence

The deployment of ZK-coprocessors will allow protocols to offload the heavy lifting of margin calculations to specialized hardware. This will enable the verification of thousands of positions per second with millisecond latency, effectively neutralizing the Verification Delta as a source of risk. The protocol will function as a high-speed engine with the security of a decentralized ledger.

The Convergence of Traditional and Crypto Finance

As traditional financial institutions enter the space, the demand for “Deterministic Finality” will drive the next wave of innovation. Verification Delta will become a standardized metric in institutional risk reports, used to compare the efficiency of different blockchain networks. The protocols that can maintain the lowest delta during periods of extreme stress will become the foundational layers of the global financial system.

Systemic Resilience and Self-Healing Protocols

The ultimate goal is the creation of self-healing protocols that can detect an expanding Verification Delta and automatically enter a “Safety Mode.” In this state, the protocol would limit new positions and prioritize liquidation transactions, preventing the contagion of insolvency. This level of automated risk management will be the hallmark of the next generation of decentralized derivatives, providing a level of stability that exceeds current centralized models.
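The “Safety Mode” idea can be sketched as a small state machine with hysteresis. This is a hypothetical illustration: the class name, thresholds, and transaction labels are assumptions, and “limit new positions” is simplified here to rejecting them outright while the mode is active.

```python
from enum import Enum


class Mode(Enum):
    NORMAL = "normal"
    SAFETY = "safety"


class SafetyController:
    """Switches modes based on an observed Verification Delta reading."""

    def __init__(self, enter_threshold: float, exit_threshold: float) -> None:
        # A lower exit threshold (hysteresis) avoids flapping near the boundary.
        if exit_threshold > enter_threshold:
            raise ValueError("exit_threshold must not exceed enter_threshold")
        self.enter_threshold = enter_threshold
        self.exit_threshold = exit_threshold
        self.mode = Mode.NORMAL

    def update(self, verification_delta: float) -> Mode:
        """Feed the latest delta reading and return the resulting mode."""
        if self.mode is Mode.NORMAL and verification_delta >= self.enter_threshold:
            self.mode = Mode.SAFETY
        elif self.mode is Mode.SAFETY and verification_delta <= self.exit_threshold:
            self.mode = Mode.NORMAL
        return self.mode

    def allows(self, tx_kind: str) -> bool:
        """In Safety Mode only risk-reducing transactions are accepted."""
        if self.mode is Mode.NORMAL:
            return True
        return tx_kind in {"liquidate", "reduce"}


def prioritize(tx_kinds: list[str]) -> list[str]:
    """Order a batch so liquidations come first; the sort is stable otherwise."""
    return sorted(tx_kinds, key=lambda kind: kind != "liquidate")
```

For example, a controller created with `SafetyController(enter_threshold=1.0, exit_threshold=0.5)` would enter Safety Mode on a reading of 1.2, stay there on a reading of 0.8, and return to normal operation only once the reading falls to 0.5 or below.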

Glossary

Delta Hedging

Market Microstructure

Vega Exposure

Adversarial Game Theory

Order Flow Toxicity

Cryptographic Finality

Consensus Mechanism

Slippage Management

High Frequency Trading