Essence

Validium Solutions are a scaling architecture for decentralized networks that decouples state verification from the storage of transaction data on the primary blockchain. Transactions are executed off-chain, and, unlike rollups, which publish transaction data on-chain, these protocols also keep that data off-chain, relying on cryptographic proofs to guarantee that every state transition is valid.

The primary utility of this approach is high-throughput financial activity that does not compromise the underlying consensus rules. Zero-Knowledge Proofs ensure that every off-chain operation adheres to established network logic. Participants gain cost-efficient settlement while the base layer still verifies every state transition cryptographically; what they do not inherit from the base layer is a guarantee that the underlying transaction data stays available.

Origin

The architectural necessity for these solutions stems from the inherent throughput limitations of early distributed ledgers.

As demand for decentralized financial applications surged, the cost of block space became a bottleneck for scaling. Developers sought methods to move computation away from the main chain, leading to the adoption of Validity Proofs.

- Scalability constraints necessitated a departure from monolithic chain architectures toward modular designs.

- Cryptographic advancements in succinct non-interactive arguments of knowledge allowed a large computation to be verified with a short proof that is cheap to check.

- Modular design principles separated the execution environment from the data availability layer to enhance network performance.

These developments shifted the focus toward protocols that could compress batches of complex financial transactions into a single verifiable proof. This evolution mirrors historical efforts in traditional finance to move clearing and settlement into optimized, specialized environments while anchoring finality in a central authority; here, that authority is replaced by immutable code.

Theory

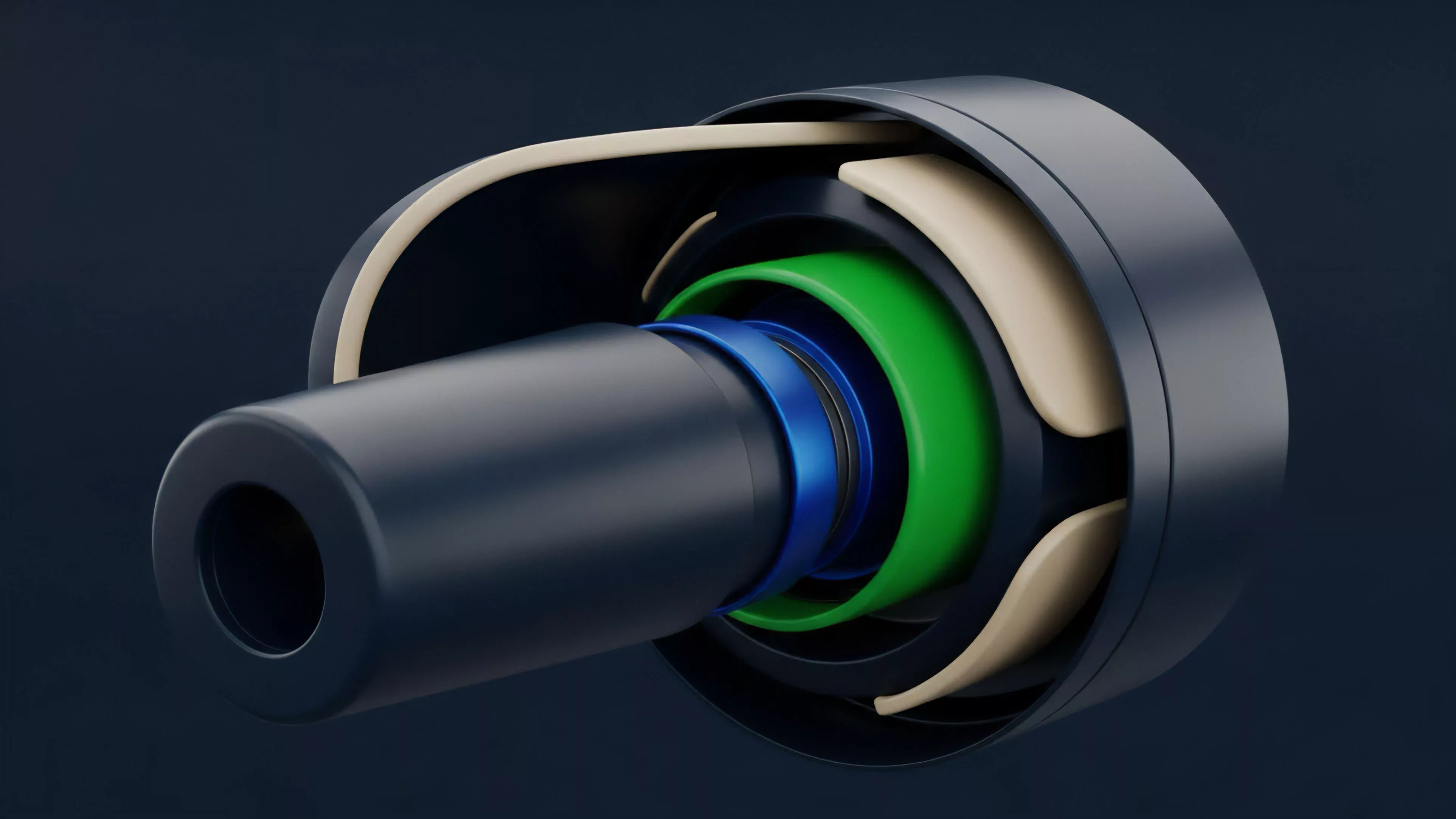

The mechanics of these systems rely on the interaction between an off-chain operator and an on-chain contract. The operator manages the ledger, executing transactions and generating Validity Proofs for each batch.

These proofs are submitted to the on-chain contract, which verifies the mathematical correctness of the batch without requiring the underlying transaction data.
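To make the operator/verifier split concrete, here is a minimal Python sketch of the on-chain side, assuming a batch exposes its old state root, its new state root, a hash committing to the off-chain data, and an opaque proof. The names `Batch`, `VerifierContract`, and `mock_verify` are hypothetical, and `mock_verify` is a forgeable placeholder standing in for a real SNARK or STARK verifier; the sketch shows control flow only, not cryptographic security.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable


def h(*parts: bytes) -> bytes:
    """SHA-256 over the concatenation of the given byte strings."""
    return hashlib.sha256(b"".join(parts)).digest()


@dataclass(frozen=True)
class Batch:
    old_root: bytes   # state root the operator claims to start from
    new_root: bytes   # state root after applying the batch off-chain
    data_hash: bytes  # commitment to the transaction data, which stays off-chain
    proof: bytes      # validity proof, opaque to this sketch


# Hypothetical verifier interface: (old_root, new_root, data_hash, proof) -> accepted?
ProofVerifier = Callable[[bytes, bytes, bytes, bytes], bool]


class VerifierContract:
    """Toy model of the on-chain contract: it stores roots and hashes, never transaction data."""

    def __init__(self, genesis_root: bytes, verify_proof: ProofVerifier) -> None:
        self.state_root = genesis_root
        self.verify_proof = verify_proof
        self.data_hashes: list[bytes] = []

    def submit_batch(self, batch: Batch) -> bool:
        # A batch must build on the root the contract currently holds.
        if batch.old_root != self.state_root:
            return False
        # The proof must check out against the batch's public inputs.
        if not self.verify_proof(batch.old_root, batch.new_root, batch.data_hash, batch.proof):
            return False
        self.state_root = batch.new_root
        self.data_hashes.append(batch.data_hash)
        return True


def mock_verify(old_root: bytes, new_root: bytes, data_hash: bytes, proof: bytes) -> bool:
    # PLACEHOLDER ONLY: anyone can compute this value, so it proves nothing.
    # A real deployment would run a SNARK/STARK verifier here.
    return proof == h(b"MOCK-PROOF", old_root, new_root, data_hash)


# Usage: one valid batch is accepted; replaying it or forging its proof is rejected.
genesis = h(b"genesis")
contract = VerifierContract(genesis, mock_verify)
root_1, data_1 = h(b"state-1"), h(b"batch-1 transaction data")
batch_1 = Batch(genesis, root_1, data_1, h(b"MOCK-PROOF", genesis, root_1, data_1))
accepted = contract.submit_batch(batch_1)
replayed = contract.submit_batch(batch_1)  # old_root no longer matches the stored root
forged = contract.submit_batch(Batch(root_1, h(b"state-2"), h(b"batch-2"), b"bogus"))
```

Passing the verifier in as a function keeps the contract logic independent of the proof system. Note that the contract never sees a single transaction, only 32-byte hashes.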

| Component | Functional Role |
| --- | --- |
| Operator | Executes transactions and generates proofs |
| Verifier Contract | Validates each proof against the current state root |
| Data Availability Committee | Ensures data remains accessible off-chain |

The integrity of the state transition is maintained through mathematical proof rather than public access to every individual transaction record.
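Relying on proofs rather than public records works because the entire state is committed to as a single hash. A common way to build that commitment is a Merkle tree; the minimal Python sketch below, assuming a hypothetical `account:balance` leaf encoding and SHA-256 hashing, builds a root over account balances and checks an inclusion proof against it. Production systems often use different tree layouts and hash functions, so treat this as an illustration of the idea only.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf(account: str, balance: int) -> bytes:
    # Hypothetical leaf encoding; the 0x00 prefix separates leaves from internal nodes.
    return h(b"\x00" + f"{account}:{balance}".encode())


def node(left: bytes, right: bytes) -> bytes:
    return h(b"\x01" + left + right)


def _next_level(level: list[bytes]) -> list[bytes]:
    # Hash adjacent pairs; the caller has already padded odd-sized levels.
    return [node(level[i], level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    if not leaves:
        raise ValueError("need at least one leaf")
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling sits on the right."""
    proof = []
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1  # pair partner: index + 1 if index is even, index - 1 if odd
        proof.append((level[sibling], sibling > index))
        level = _next_level(level)
        index //= 2
    return proof


def verify_inclusion(root: bytes, leaf_hash: bytes, proof: list[tuple[bytes, bool]]) -> bool:
    acc = leaf_hash
    for sibling, sibling_on_right in proof:
        acc = node(acc, sibling) if sibling_on_right else node(sibling, acc)
    return acc == root


# Usage: only `root` would live on-chain; `balances` stays off-chain.
balances = {"alice": 100, "bob": 250, "carol": 75}
leaves = [leaf(name, amount) for name, amount in balances.items()]
root = merkle_root(leaves)
bob_proof = merkle_proof(leaves, 1)
```

`merkle_proof` needs the full list of leaves: a user who cannot obtain the off-chain data cannot build a proof of their own balance, which is why data availability matters when users want to withdraw.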

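The main adversarial pressure comes from the operator. A validity proof prevents the operator from posting an invalid state transition, but it cannot force the operator to publish the data behind that transition. If the operator withholds data, the Data Availability Committee acts as a secondary layer of trust; if the committee also withholds it, users cannot construct the proofs of their balances needed to exit, so their funds can be frozen even though they cannot be stolen through an invalid transition. This design trades some censorship resistance for performance, so evaluating a deployment requires weighing how trust is distributed among committee members against the cryptographic security of the proofs themselves.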
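The committee's role can be sketched as a quorum check: the contract accepts a batch's data hash only if enough committee members attest that they hold the corresponding data. This minimal Python sketch rests on stated assumptions: the five-member committee, the 3-of-5 threshold, and all names are hypothetical, and HMAC stands in for real public-key signatures (an on-chain contract could not keep HMAC secrets). It illustrates the trust model only: a quorum of attestations shows the data was held when signed, not that it will be served later.

```python
import hashlib
import hmac

# Hypothetical committee: member id -> secret key. HMAC is a stand-in for real
# public-key signatures; a contract could not keep these keys secret.
COMMITTEE_KEYS = {f"member-{c}": f"key-{c}".encode() for c in "abcde"}
THRESHOLD = 3  # hypothetical 3-of-5 quorum


def attest(member: str, data_hash: bytes) -> bytes:
    """A member signs the data hash after storing the batch data it commits to."""
    return hmac.new(COMMITTEE_KEYS[member], data_hash, hashlib.sha256).digest()


def has_quorum(data_hash: bytes, attestations: dict[str, bytes], threshold: int = THRESHOLD) -> bool:
    """Count valid attestations from known members and compare against the threshold."""
    valid = 0
    for member, signature in attestations.items():
        key = COMMITTEE_KEYS.get(member)
        if key is None:
            continue  # ignore attestations from non-members
        expected = hmac.new(key, data_hash, hashlib.sha256).digest()
        if hmac.compare_digest(expected, signature):
            valid += 1
    return valid >= threshold


# Usage: three valid attestations meet the quorum; two do not.
data_hash = hashlib.sha256(b"batch-42 transaction data").digest()
sigs = {m: attest(m, data_hash) for m in ("member-a", "member-b", "member-c")}
print(has_quorum(data_hash, sigs))  # True
print(has_quorum(data_hash, {m: sigs[m] for m in ("member-a", "member-b")}))  # False
```

The threshold is the lever behind the trade-off described above: raising it makes a dishonest quorum harder to assemble, but it also means fewer unavailable members are enough to stall new batches.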

Approach

Current implementation strategies focus on maximizing capital efficiency for derivative trading platforms.

By utilizing these solutions, protocols reduce the gas costs associated with margin updates and position liquidations. Market makers leverage this environment to execute high-frequency strategies that the latency and expense of traditional on-chain operations would make impractical.

- Margin management is performed off-chain, allowing for near-instantaneous updates to collateral requirements.

- Liquidation engines operate with reduced latency, closing under-collateralized positions before losses can spread through the system during periods of high volatility.

- Capital efficiency increases as traders lock less liquidity into on-chain escrow contracts.
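The gas savings on margin updates come from amortization: each batch pays a fixed on-chain cost for proof verification and committee checks, shared by every update inside it, and posts no per-update data. A minimal Python sketch of that arithmetic follows; every gas figure is a hypothetical placeholder rather than a measurement of any real network, and off-chain proving costs are ignored.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostModel:
    # All figures are hypothetical placeholders, not measurements of any real network.
    proof_verify_gas: int    # fixed gas to verify one batch proof on-chain
    dac_check_gas: int       # fixed gas to check committee attestations for one batch
    onchain_update_gas: int  # gas for one margin update executed directly on-chain


def validium_gas_per_update(m: CostModel, updates_per_batch: int) -> float:
    """Fixed per-batch costs are shared by every update; no per-update data is posted."""
    if updates_per_batch < 1:
        raise ValueError("a batch must contain at least one update")
    return (m.proof_verify_gas + m.dac_check_gas) / updates_per_batch


def breakeven_batch_size(m: CostModel) -> int:
    """Smallest batch size at which validium settlement is cheaper per update than on-chain."""
    fixed = m.proof_verify_gas + m.dac_check_gas
    return fixed // m.onchain_update_gas + 1


# Usage with hypothetical figures.
model = CostModel(proof_verify_gas=500_000, dac_check_gas=100_000, onchain_update_gas=50_000)
per_update = validium_gas_per_update(model, updates_per_batch=1_000)  # 600.0
breakeven = breakeven_batch_size(model)  # 13
```

Because the per-update cost falls as the batch grows, the benefit concentrates in venues with steady, high update volume, such as the margin and liquidation activity described above.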

My assessment of these implementations suggests that while the throughput gains are significant, the reliance on committee-based data availability introduces a specific risk profile that participants must evaluate. The proof guarantees correct state transitions under its cryptographic assumptions, but the accessibility of the data remains a variable that dictates the true level of decentralization in any given setup.

Evolution

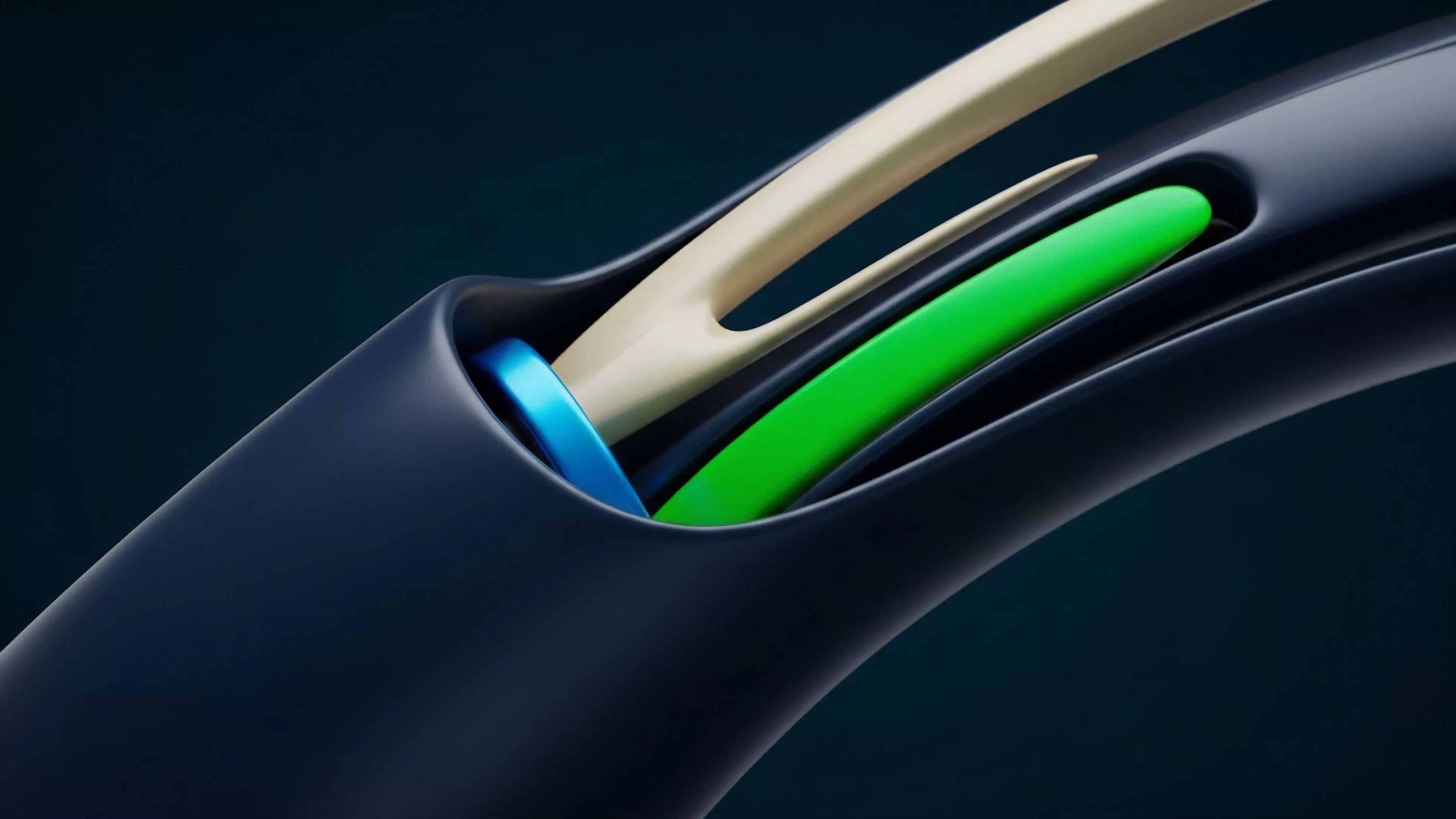

The transition from early, centralized off-chain scaling to more decentralized Validium models marks a shift toward resilient infrastructure. The earliest off-chain scaling systems were simple sidechains with limited cryptographic oversight.

The introduction of ZK-Rollup technologies pushed the industry toward more secure proof-based systems, eventually leading to the current state where data availability is treated as a distinct, configurable parameter.

Systemic resilience has grown as protocols have moved from single-operator models to multi-party committee structures for data management.

This trajectory reflects a broader maturation of the financial stack, where the focus has moved from raw transaction speed to the preservation of user sovereignty. Recursive Proofs, in which one proof verifies other proofs, now let these solutions fold many batch proofs, each covering thousands of transactions, into a single proof that is verified on-chain once, significantly lowering the marginal cost of settlement.
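Recursive aggregation can be pictured as a tree: each recursive proof verifies up to a fixed number of child proofs, and only the root proof is checked on-chain. The minimal Python sketch below, assuming a hypothetical uniform fan-in, counts the tree levels and the recursive proofs an aggregator would generate; real provers choose tree shapes per proof system, so the numbers are illustrative only.

```python
def aggregation_tree(num_proofs: int, fan_in: int) -> tuple[int, int]:
    """Return (levels, recursive proofs generated) to fold num_proofs proofs into one root proof."""
    if num_proofs < 1 or fan_in < 2:
        raise ValueError("need at least one proof and a fan-in of at least 2")
    levels = 0
    generated = 0
    count = num_proofs
    while count > 1:
        # Ceiling division: one parent proof per group of up to fan_in children.
        count = (count + fan_in - 1) // fan_in
        generated += count
        levels += 1
    return levels, generated


# Usage with hypothetical figures: 1,024 batch proofs of 1,000 transactions each.
levels, generated = aggregation_tree(1_024, fan_in=2)  # (10, 1023)
covered = 1_024 * 1_000  # transactions settled by the single on-chain verification
```

Proving work grows with the number of batches, but the on-chain cost stays at one verification, which is where the lower marginal settlement cost comes from.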

Horizon

The future of this technology lies in the automation of data availability through decentralized networks. Moving away from static committees toward permissionless, cryptoeconomic data availability layers will likely define the next phase of development.

These advancements will permit the creation of derivative exchanges that possess the performance characteristics of centralized venues while maintaining the transparency and security of permissionless protocols.

- Decentralized sequencers will replace current operator models to eliminate single points of failure.

- Interoperability protocols will enable seamless movement of collateral between different scaling solutions.

- Automated governance will manage the parameters of data availability committees to ensure long-term stability.

We are approaching a point where the distinction between centralized and decentralized performance will vanish. The real challenge remains the synchronization of global liquidity across these fragmented, high-speed environments. The successful protocol will not just scale transaction volume but will provide the necessary infrastructure to maintain unified order flow in an increasingly modular ecosystem.