Essence

Data Availability Solutions function as the structural verification layer for modular blockchain architectures. These systems ensure that transaction data, which is essential for reconstructing state and validating block integrity, remains accessible to all network participants without requiring each participant to download every block in full. By decoupling execution and settlement from data availability, these protocols address the scalability trilemma, allowing for increased throughput while maintaining decentralized security guarantees.

Data availability protocols provide cryptographic assurance that transaction data exists and remains retrievable for network verification.

The core utility lies in preventing malicious block producers from withholding data, which would otherwise leave the chain unverifiable and could hide unauthorized state transitions. Data Availability Solutions introduce mechanisms such as erasure coding and sampling, which make withholding detectable: data is published in a redundant format that lets light clients confirm, with high statistical confidence, that the full block is available. This shift from full-node reliance to probabilistic verification represents a transition toward scalable, verifiable decentralized ledgers.

Origin

The necessity for Data Availability Solutions emerged from the inherent limitations of monolithic blockchain designs, where every node must process every transaction to ensure validity.

As network demand increased, the cost of participation grew, threatening decentralization. Early research into sharding and light client security identified that verifying the existence of data was as critical as verifying the execution of transactions.

- Data availability sampling techniques allow nodes to query random portions of block data to confirm availability.

- Erasure coding enables the reconstruction of missing data chunks from a larger, redundant set.

- KZG commitments provide compact cryptographic proofs that specific data fragments belong to the original block.
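KZG commitments depend on pairing-friendly elliptic curves and a trusted setup, which do not fit in a short example. As a plainly swapped-in stand-in, the minimal sketch below uses a binary SHA-256 Merkle tree to show the role a commitment plays in sampling: one 32-byte root binds the producer to every chunk, and a logarithmic-size proof lets a light client check that a single sampled chunk belongs to that root. Unlike KZG, a Merkle root says nothing about whether the chunks form a correct erasure-coded extension. The names `commit`, `prove`, and `verify` are hypothetical, not a library API.

```python
import hashlib
from typing import List


def _leaf(chunk: bytes) -> bytes:
    # Prefix 0x00 separates leaf hashes from internal-node hashes.
    return hashlib.sha256(b"\x00" + chunk).digest()


def _node(left: bytes, right: bytes) -> bytes:
    # Prefix 0x01 marks an internal node.
    return hashlib.sha256(b"\x01" + left + right).digest()


def _levels(chunks: List[bytes]) -> List[List[bytes]]:
    """All tree levels from leaves to root; odd levels are padded by duplicating the last node."""
    if not chunks:
        raise ValueError("cannot commit to an empty block")
    level = [_leaf(c) for c in chunks]
    levels = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level = level + [level[-1]]
        levels.append(level)
        level = [_node(level[i], level[i + 1]) for i in range(0, len(level), 2)]
    levels.append(level)  # final level holds only the root
    return levels


def commit(chunks: List[bytes]) -> bytes:
    """Hypothetical helper: the 32-byte root a producer would publish in the block header."""
    return _levels(chunks)[-1][0]


def prove(chunks: List[bytes], index: int) -> List[bytes]:
    """Sibling hashes on the path from chunks[index] up to the root."""
    proof = []
    for level in _levels(chunks)[:-1]:
        proof.append(level[index ^ 1])  # sibling sits in the neighbouring even/odd slot
        index //= 2
    return proof


def verify(root: bytes, chunk: bytes, index: int, proof: List[bytes]) -> bool:
    """Light-client check: recompute the root from one chunk and its proof."""
    acc = _leaf(chunk)
    for sibling in proof:
        acc = _node(acc, sibling) if index % 2 == 0 else _node(sibling, acc)
        index //= 2
    return acc == root


if __name__ == "__main__":
    chunks = [b"tx-a", b"tx-b", b"tx-c", b"tx-d", b"tx-e"]
    root = commit(chunks)
    print(verify(root, b"tx-c", 2, prove(chunks, 2)))    # True
    print(verify(root, b"forged", 2, prove(chunks, 2)))  # False
```

Duplicating the last node on odd-sized levels keeps the sketch short; hardened implementations avoid this shortcut because it lets two different chunk lists (for example, one ending in `c` and one ending in `c, c`) share a root.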

This domain evolved through the study of fraud proofs and data availability committees, which sought to bridge the gap between performance and security. By formalizing the data availability requirement as a distinct protocol phase, architects established a framework where security scales with the number of participants, rather than being constrained by the hardware requirements of a single, monolithic entity.

Theory

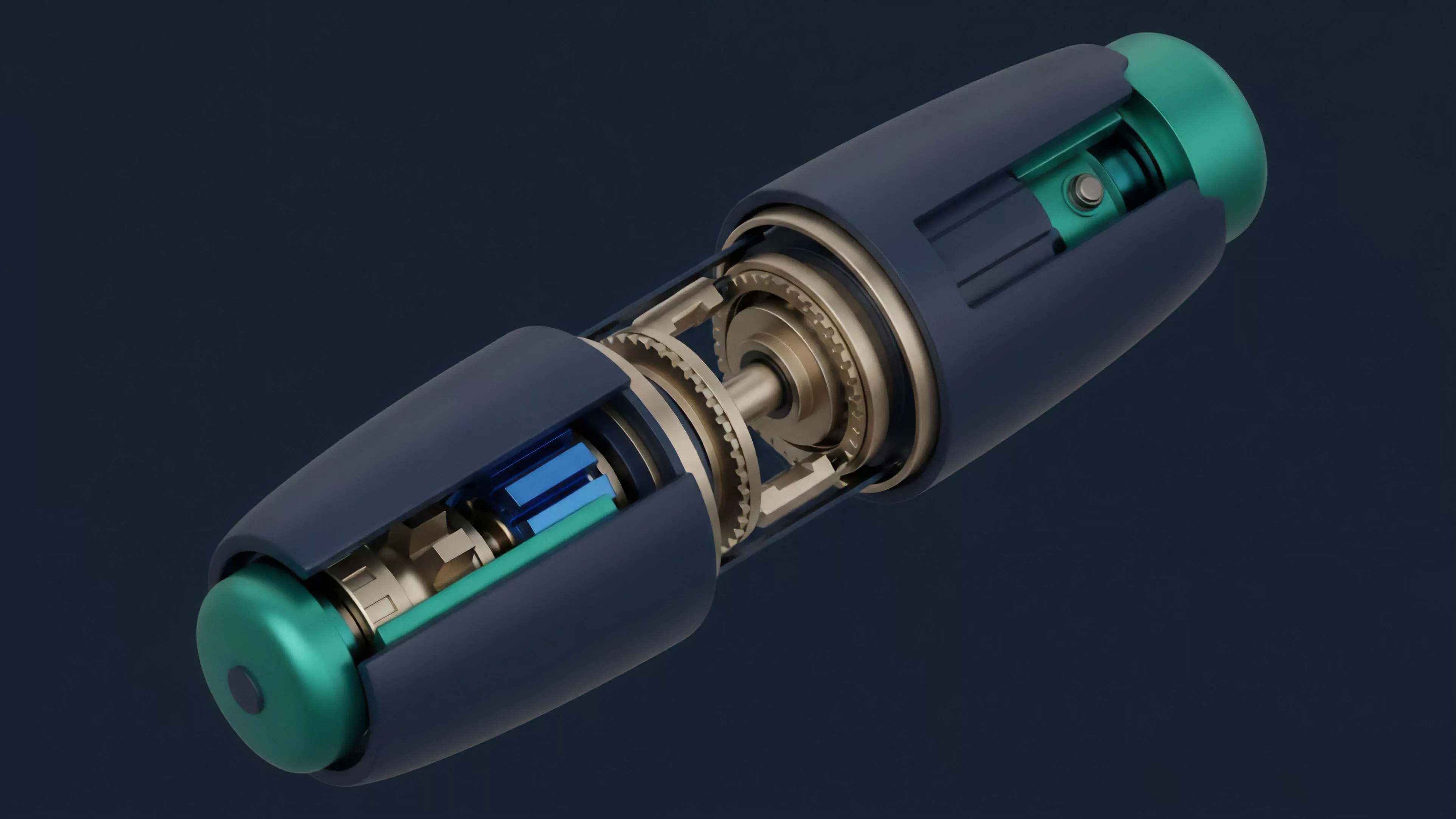

The mechanical integrity of Data Availability Solutions relies on the interaction between commitment schemes and statistical sampling. Block producers commit to data using structures that allow for efficient verification of individual pieces.

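Erasure coding extends the data with redundancy so that any sufficiently large subset of chunks (with a 2x extension, any half of them) is enough to reconstruct the whole block. A producer who wants to make the block unrecoverable must therefore withhold a large share of the chunks, and withholding on that scale is exactly what random sampling detects with high probability, thereby exposing the fraud.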
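The reconstruction threshold can be illustrated with a toy Reed-Solomon code. In this sketch, k data symbols are read as evaluations of a polynomial of degree less than k at positions 0..k-1, and the code extends them to 2k evaluations; any k of the 2k then determine the polynomial by Lagrange interpolation. Because any half rebuilds the block, a producer who wants the data to be unrecoverable must withhold more than half of the extended symbols. The field modulus, the 2x factor, and the helper names are illustrative assumptions, and the quadratic-time interpolation is chosen for clarity rather than speed.

```python
from typing import Dict, List, Sequence, Tuple

# Toy prime field: 2**31 - 1 is a Mersenne prime. Symbols must lie in [0, P).
P = 2**31 - 1


def _eval_interpolant(points: Sequence[Tuple[int, int]], x: int) -> int:
    """Evaluate at x the unique polynomial of degree < len(points) through `points` (mod P)."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # Fermat's little theorem gives the modular inverse of den.
        total = (total + yi * num * pow(den, P - 2, P)) % P
    return total


def extend(data: Sequence[int]) -> List[int]:
    """Systematic 2x extension: positions 0..k-1 keep the data, k..2k-1 are parity symbols."""
    k = len(data)
    base = list(enumerate(data))
    return list(data) + [_eval_interpolant(base, x) for x in range(k, 2 * k)]


def recover(available: Dict[int, int], k: int) -> List[int]:
    """Rebuild all 2k symbols from any k surviving {position: value} pairs."""
    if len(available) < k:
        raise ValueError("fewer than k symbols survive: the block cannot be rebuilt")
    points = list(available.items())[:k]
    return [_eval_interpolant(points, x) for x in range(2 * k)]


if __name__ == "__main__":
    data = [11, 22, 33, 44]                          # k = 4 data symbols
    coded = extend(data)                             # 8 symbols after 2x extension
    survivors = {i: coded[i] for i in (1, 4, 6, 7)}  # any 4 of the 8 suffice
    print(recover(survivors, k=4)[:4] == data)       # True
```

Production encoders use fast transforms rather than naive interpolation, and some designs arrange chunks in a two-dimensional grid with row and column codes, which changes the withholding threshold.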

Randomized sampling lets light clients approach full-node assurance about data availability without downloading entire blocks.

From a quantitative finance perspective, these solutions act as a risk-mitigation layer against systemic failure. The protocol physics here are governed by the probability of detection: each additional chunk a light client samples shrinks the chance of accepting a block with withheld data, and that chance falls exponentially toward zero as the sample count grows. This mechanism introduces a quantifiable security bound, transforming the binary state of data availability into a measurable variable that dictates the trust assumptions of the entire execution environment.
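The detection bound can be made concrete under simplifying assumptions. If a fraction f of the extended chunks is withheld and a light client draws s chunk indices uniformly at random with replacement, the chance that every draw lands on an available chunk is `(1 - f)^s`. With a one-dimensional 2x code, an unrecoverable block has f above one half, so f = 0.5 gives an upper bound on that chance. The sketch below computes the bound and the smallest sample count that meets a target miss probability; the function names are hypothetical.

```python
import math


def miss_probability(withheld_fraction: float, samples: int) -> float:
    """Chance that `samples` independent uniform draws all land on available chunks."""
    return (1.0 - withheld_fraction) ** samples


def samples_needed(withheld_fraction: float, target_miss: float) -> int:
    """Smallest s with (1 - f)**s <= target_miss, for 0 < f < 1 and 0 < target_miss < 1."""
    if not (0.0 < withheld_fraction < 1.0 and 0.0 < target_miss < 1.0):
        raise ValueError("fractions must lie strictly between 0 and 1")
    s = math.ceil(math.log(target_miss) / math.log(1.0 - withheld_fraction))
    # Nudge s to absorb floating-point error in the logarithms.
    while s > 0 and miss_probability(withheld_fraction, s - 1) <= target_miss:
        s -= 1
    while miss_probability(withheld_fraction, s) > target_miss:
        s += 1
    return s


if __name__ == "__main__":
    # 1D 2x code: f = 0.5 bounds the miss probability for any unrecoverable block.
    print(samples_needed(0.5, 1e-9))  # 30
    print(miss_probability(0.5, 30))
```

Two-dimensional layouts let an adversary block reconstruction with a smaller withheld fraction, a little over a quarter of the extended shares, so the same target needs more samples; under this model `samples_needed(0.25, 1e-9)` returns 73.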

| Mechanism | Function | Security Impact |
| --- | --- | --- |
| Erasure Coding | Data Redundancy | Resilience against data withholding |
| Sampling | Verification | Low-cost light client validation |
| Commitments | Integrity | Proof that each fragment belongs to the committed block |

The architectural choice to separate data availability from computation creates an adversarial environment where the cost of withholding data is decoupled from the value of the transaction. This separation is fundamental to the stability of decentralized markets, as it prevents localized network congestion from compromising the global state of the ledger.

Approach

Current implementations of Data Availability Solutions use specialized data availability networks or integrated protocol upgrades to handle large volumes of transaction data. These systems prioritize high-throughput data propagation while keeping validator entry permissionless.

By using advanced cryptographic primitives, these networks are designed to keep the data availability layer resilient under high load or adversarial behavior.

- Modular blockchains offload storage and availability to specialized networks.

- Light clients perform continuous background sampling to maintain network health.

- State commitment structures enable rapid verification of transaction histories.
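The continuous background sampling described in the list above can be sketched as a loop that draws random chunk indices and rejects the block on the first chunk the network fails to serve. `ChunkStore` and `sample_block` are hypothetical names, and the store's withheld set simulates a misbehaving producer; a real light client would also check each returned chunk against the block commitment, which this sketch leaves out.

```python
import random
from typing import Optional, Set


class ChunkStore:
    """Hypothetical stand-in for the network: serves every chunk except the withheld ones."""

    def __init__(self, num_chunks: int, withheld: Set[int]):
        self.num_chunks = num_chunks
        self.withheld = withheld

    def get(self, index: int) -> Optional[bytes]:
        if index in self.withheld:
            return None
        return b"chunk-%d" % index


def sample_block(store: ChunkStore, samples: int, rng: random.Random) -> bool:
    """Accept the block only if every randomly chosen chunk is served."""
    for _ in range(samples):
        index = rng.randrange(store.num_chunks)
        if store.get(index) is None:
            return False  # one missing chunk is enough to reject the block
    return True


if __name__ == "__main__":
    rng = random.Random(42)
    honest = ChunkStore(256, withheld=set())
    attacker = ChunkStore(256, withheld=set(range(129)))  # 129 of 256 withheld: more than half
    print(sample_block(honest, 30, rng))                  # True
    accepted = sum(sample_block(attacker, 30, rng) for _ in range(1000))
    print(f"attacker blocks accepted: {accepted} / 1000")
```

A seeded `random.Random` keeps runs reproducible here; a deployed client would draw indices from an unpredictable source so that a producer cannot anticipate which chunks will be requested.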

Market participants now view these solutions as foundational infrastructure for decentralized finance. The ability to verify transaction integrity without heavy infrastructure allows for a more diverse range of participants in the settlement process, which in turn reduces the risk of centralized failure points. This approach mirrors the evolution of traditional financial clearinghouses, where the objective is to ensure that the record of truth is immutable and accessible to all parties involved in the trade.

Evolution

The trajectory of Data Availability Solutions has moved from theoretical constructs in early whitepapers to production-grade infrastructure powering modular rollups.

Initially, the industry relied on simple data availability committees: groups of trusted entities responsible for storing the data. This approach lacked the trustless guarantees required for decentralized finance, prompting the development of current, cryptographically secured protocols.

The shift toward trustless data verification marks a transition from committee-based models to cryptographically enforced protocol designs.

Technological advancements in polynomial commitments and zero-knowledge proofs have significantly increased the efficiency of these systems. As these protocols mature, they are being integrated into the core stack of various layer-two scaling solutions. The transition from monolithic to modular has necessitated this evolution, as the demand for verifiable, high-throughput data storage exceeds the capacity of single-chain architectures.

| Phase | Primary Characteristic | Security Assumption |
| --- | --- | --- |
| Early Stage | Data Availability Committees | Trusted entities |
| Intermediate | Fraud Proofs | At least one honest full node |
| Current | Erasure Coding and Sampling | Sound cryptographic commitments and enough honest samplers |

The design of these systems is no longer just about storage; it is about providing the cryptographic primitives necessary for secure, high-frequency decentralized derivatives. The current focus is on reducing the latency of data availability proofs to ensure that settlement can occur in real-time, matching the performance requirements of centralized trading venues.

Horizon

The future of Data Availability Solutions lies in the seamless integration of verifiable data storage with cross-chain communication protocols. As decentralized markets become more interconnected, the ability to prove the state of one chain to another will depend entirely on the reliability of the underlying data availability layer. This will facilitate the creation of unified liquidity pools across disparate networks, reducing fragmentation and increasing capital efficiency.

The next phase will involve the hardening of these protocols against sophisticated adversarial attacks, including long-range data withholding attempts and network-level partition attacks.

We are moving toward a state where data availability is a commoditized service, allowing developers to build complex derivative instruments that are as secure as the underlying settlement layer, regardless of the chain on which they operate. The ultimate goal is a global, decentralized ledger where the cost of data verification is negligible, enabling the next generation of financial products to scale to billions of participants without compromising on security.