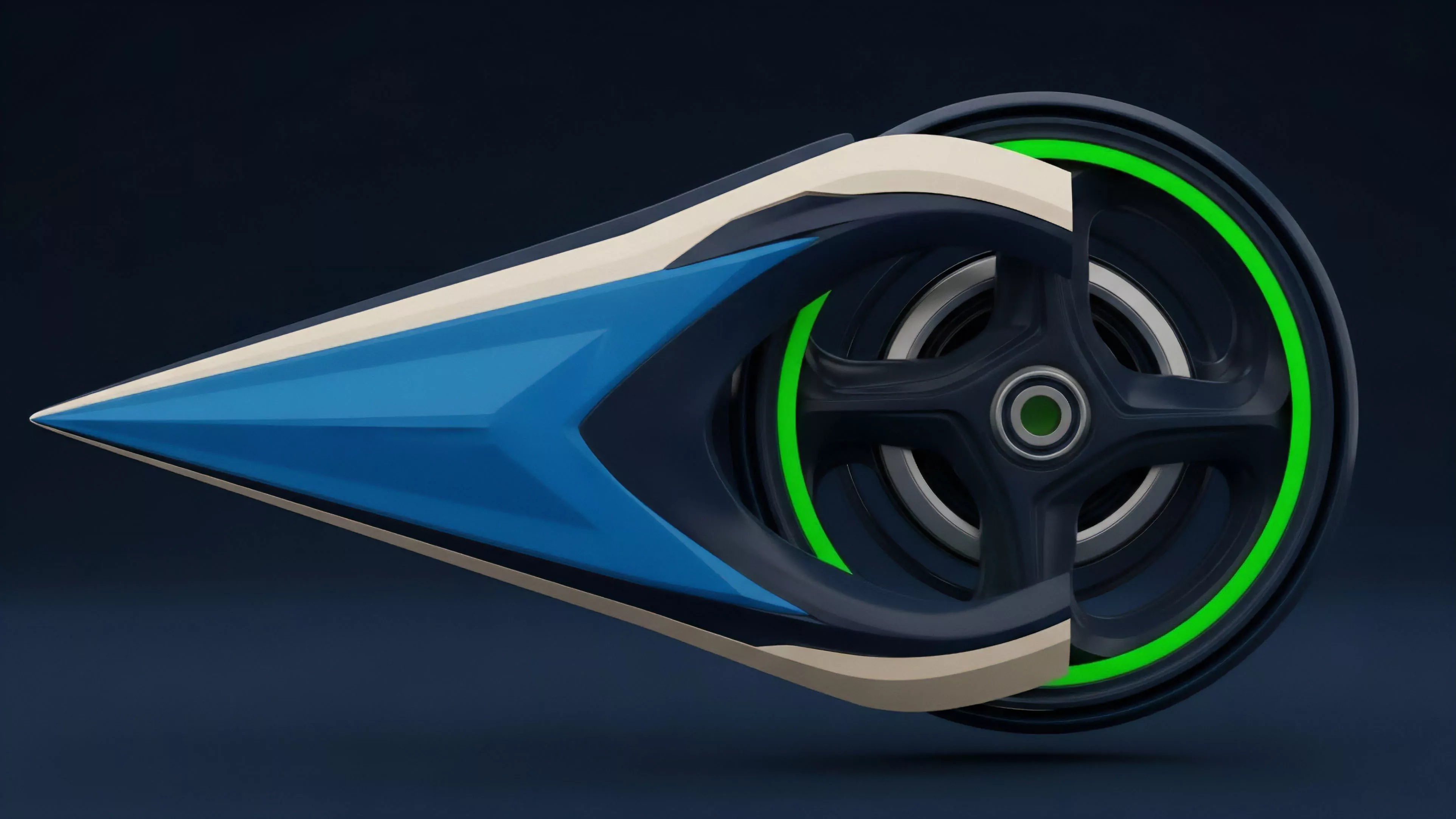

Essence

Validator Performance measures the aggregate technical and economic reliability of a network participant responsible for transaction ordering, block production, and state consensus. In decentralized markets, it serves as a primary indicator of operational integrity, shaping the risk profile of staked assets and the efficiency of derivative pricing models that depend on the underlying protocol's finality.

Validator Performance serves as the fundamental reliability benchmark that governs the risk-adjusted returns of staked capital and derivative instruments.

The systemic relevance of Validator Performance extends beyond simple uptime statistics. It encompasses latency profiles, signature efficiency, and adherence to consensus rules, all of which directly influence the cost of capital for liquidity providers. When performance degrades, longer block propagation times and missed attestations make settlement timing and staking yields less predictable. That added uncertainty creates a direct feedback loop into the pricing of options written against the protocol's native token.

Origin

The necessity for monitoring Validator Performance originated from the shift toward proof-of-stake consensus mechanisms, where the security of the financial state machine relies on distributed, incentivized actors.

Early iterations of these protocols lacked granular performance telemetry, forcing market participants to rely on rudimentary proxies like simple availability. As decentralized finance matured, the demand for precise risk management necessitated a more sophisticated understanding of how validator-level behavior translates into systemic risk.

- Consensus latency provides the technical foundation for understanding block finality and settlement speed.

- Attestation efficiency measures the alignment between validator actions and protocol requirements.

- Slashing exposure represents the binary risk state in which provable misbehavior, such as double signing, and on some protocols extended downtime, triggers a direct loss of staked capital.
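The three measures above can be computed from per-duty attestation records. The sketch below assumes a hypothetical record layout (`AttestationDuty` with assigned slot, target epoch, signed vote root, and inclusion slot); it is not the schema of any particular client or explorer. It reports attestation efficiency as the included share of assigned duties, uses mean inclusion delay as a latency proxy, and flags Casper FFG-style double votes (two different vote roots for the same target epoch). Surround votes, the other Ethereum slashing condition for attestations, are not checked here.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass(frozen=True)
class AttestationDuty:
    # Hypothetical per-duty record; field names are illustrative only.
    validator: int
    slot: int                     # slot the validator was assigned to attest in
    target_epoch: int             # epoch the vote targets
    vote_root: str                # hash of the attestation data that was signed
    included_slot: Optional[int]  # slot where the vote landed on chain; None if missed


def attestation_efficiency(duties):
    """Share of assigned duties whose vote was included on chain."""
    return sum(d.included_slot is not None for d in duties) / len(duties)


def mean_inclusion_delay(duties):
    """Average slots from assignment to inclusion, over included votes only."""
    delays = [d.included_slot - d.slot for d in duties if d.included_slot is not None]
    return mean(delays) if delays else float("inf")


def double_votes(duties):
    """(validator, target_epoch) pairs signed with two different vote roots."""
    first_root = {}
    conflicts = set()
    for d in duties:
        key = (d.validator, d.target_epoch)
        if key in first_root and first_root[key] != d.vote_root:
            conflicts.add(key)
        first_root.setdefault(key, d.vote_root)
    return conflicts


# Illustrative data: validator 7 has one vote that was never included
# and a second, conflicting vote for target epoch 5.
duties = [
    AttestationDuty(7, 100, 3, "0xaa", 101),
    AttestationDuty(7, 132, 4, "0xbb", 134),
    AttestationDuty(7, 164, 5, "0xcc", None),
    AttestationDuty(7, 165, 5, "0xdd", 166),
]
print(attestation_efficiency(duties))  # 0.75
print(mean_inclusion_delay(duties))
print(double_votes(duties))            # {(7, 5)}
```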

This evolution reflects the broader transition from permissioned, centralized order books to permissionless, decentralized venues. Keeping state consistent across thousands of nodes required standardized performance metrics, which let market participants quantify the probability of chain reorganizations or extended downtime and so added a new dimension to pricing risk in crypto derivatives.

Theory

The theoretical framework for Validator Performance relies on the interaction between protocol physics and behavioral game theory. At its core, the performance of a validator is a function of its technical stack (including hardware optimization, network topology, and software implementation) and its economic incentives, which dictate the trade-offs between aggressive performance and conservative security practices.

| Metric | Financial Implication | Systemic Impact |
| --- | --- | --- |
| Attestation Latency | Option Theta Decay | Consensus Finality Speed |
| Block Inclusion Rate | Yield Volatility | Network Throughput |
| Slashing Risk | Tail Risk Pricing | Protocol Stability |

Validator Performance functions as a stochastic variable that dictates the pricing of tail risk and liquidity efficiency within decentralized markets.

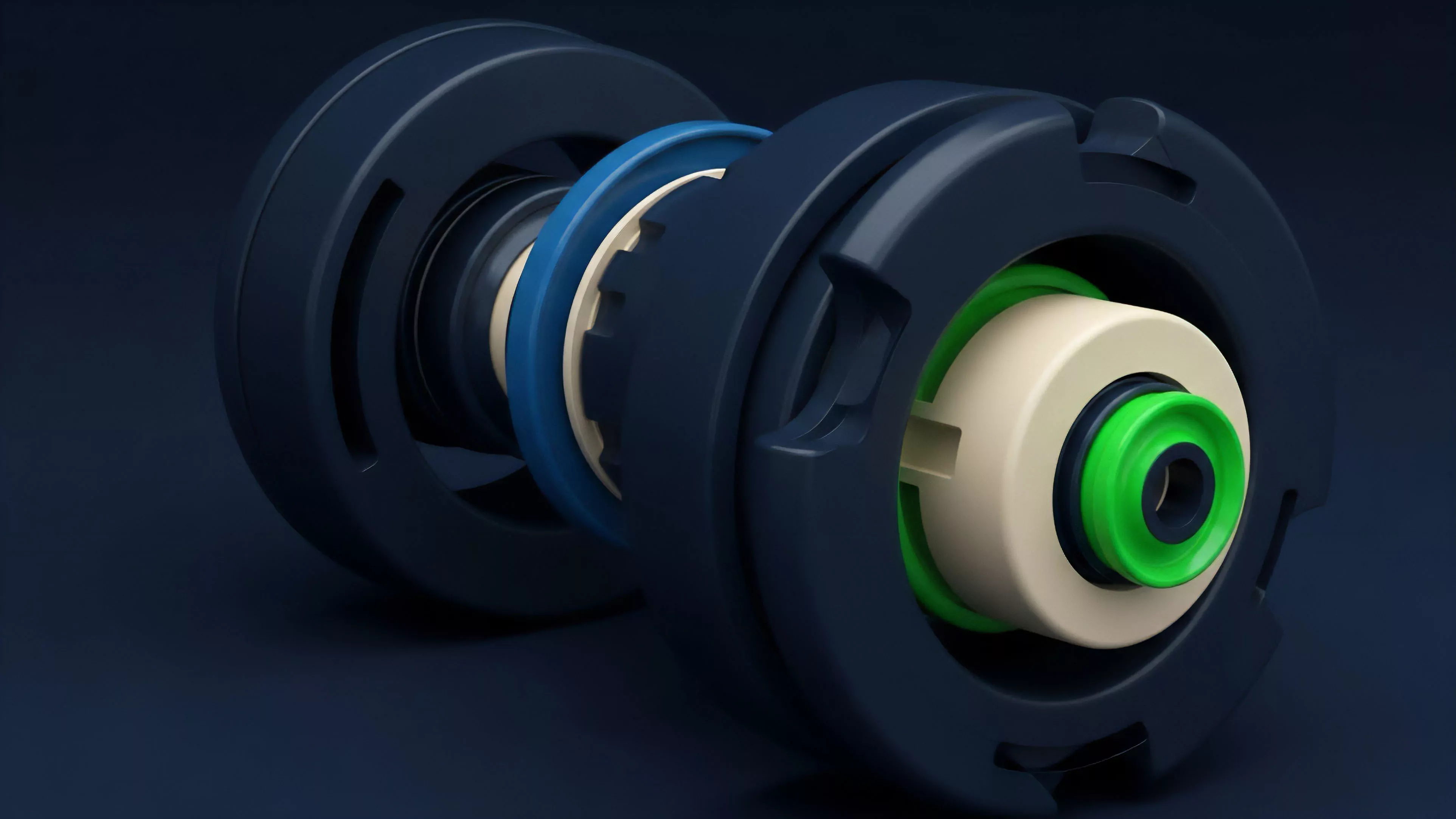

From a quantitative perspective, we model Validator Performance as a probability distribution rather than a deterministic value. In adversarial environments, validators must balance maximizing rewards against minimizing the risk of protocol penalties. This tension generates distinctive volatility patterns: systemic stress often shows up as a rapid degradation in validator consensus, which can trigger cascading liquidations in correlated derivative positions.
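One way to treat performance as a distribution is a Beta-Binomial model of missed duties. The sketch below is an illustrative assumption, not a method this article prescribes: it places a uniform Beta(1, 1) prior on a validator's per-duty miss probability, updates it with observed misses, and then simulates misses over a forward horizon. The 2,000-duty history, 12 misses, and 225-duty horizon (roughly one day of once-per-epoch attestations on Ethereum) are hypothetical inputs.

```python
import random


def posterior_params(missed, assigned, prior_a=1.0, prior_b=1.0):
    """Beta posterior for the per-duty miss probability (uniform prior by default)."""
    return prior_a + missed, prior_b + (assigned - missed)


def simulate_misses(a, b, horizon, n_paths=5_000, seed=0):
    """Sample a miss probability from Beta(a, b), then count misses over `horizon` duties."""
    rng = random.Random(seed)
    paths = []
    for _ in range(n_paths):
        p = rng.betavariate(a, b)
        paths.append(sum(rng.random() < p for _ in range(horizon)))
    return sorted(paths)


def quantile(sorted_values, q):
    """Empirical quantile by index; adequate for a sketch."""
    return sorted_values[min(int(q * len(sorted_values)), len(sorted_values) - 1)]


a, b = posterior_params(missed=12, assigned=2_000)
posterior_mean = a / (a + b)  # 13 / 2002, about 0.0065
paths = simulate_misses(a, b, horizon=225)
print(f"posterior mean miss rate: {posterior_mean:.4f}")
print(f"median misses/day: {quantile(paths, 0.5)}, 99th percentile: {quantile(paths, 0.99)}")
```

The gap between the median and the 99th percentile is what a single uptime figure hides, and it is that tail, rather than the mean, that feeds into pricing yield shortfall.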

This mirrors how institutional high-frequency traders monitor exchange matching-engine latency during periods of extreme market stress.

Approach

Current strategies for evaluating Validator Performance prioritize real-time telemetry and predictive analytics to manage exposure. Market participants use high-frequency monitoring of node behavior to inform hedging strategies, particularly when holding large positions in liquid staking derivatives. This approach shifts the focus from historical uptime to forward-looking indicators of potential consensus failure.

- Telemetry aggregation allows for the identification of systemic performance degradation before it impacts finality.

- Risk sensitivity modeling quantifies how specific validator failures influence the Greeks of associated option portfolios.

- Adversarial simulation tests the resilience of staked assets against varying degrees of network stress.
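One common way to implement telemetry aggregation is a smoothed statistic that ignores isolated blips but reacts to sustained drift. The sketch below applies an exponentially weighted moving average (EWMA) to a per-epoch missed-duty rate across a validator set; the function name, smoothing weight, baseline rate, and alert multiple are hypothetical tuning choices, not values taken from any protocol.

```python
def ewma_alerts(miss_rates, alpha=0.2, baseline=0.01, multiple=3.0):
    """Return (epoch_index, smoothed_rate) pairs where the EWMA exceeds baseline * multiple.

    miss_rates: fraction of assigned duties missed in each epoch (hypothetical input).
    alpha: weight on the newest observation; larger values react faster but are noisier.
    """
    level = baseline  # start the average at the expected steady-state rate
    alerts = []
    for epoch, rate in enumerate(miss_rates):
        level = alpha * rate + (1 - alpha) * level
        if level > baseline * multiple:
            alerts.append((epoch, level))
    return alerts


# A single bad epoch is absorbed by the smoothing...
blip = [0.01] * 5 + [0.10] + [0.01] * 5
# ...while a sustained degradation is flagged on its first epoch.
degradation = [0.01] * 10 + [0.20] * 5

print(ewma_alerts(blip))                                 # []
print([epoch for epoch, _ in ewma_alerts(degradation)])  # [10, 11, 12, 13, 14]
```

Lowering `alpha` suppresses more false alarms at the cost of slower detection; the right trade-off depends on how quickly a hedge can be adjusted.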

The current market architecture rewards validators that maximize revenue through complex MEV extraction strategies, which in turn introduces new dimensions of risk. This behavior forces derivative desks to adjust their volatility surfaces, as the potential for sudden validator-driven network instability becomes a priced component of the asset's overall risk profile.
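Instability risk depends heavily on correlation between validators, not only on each validator's individual reliability. As a rough sketch, the Monte Carlo estimate below asks how often more than one third of stake is offline in a period, the point at which BFT-style finality (for example, Ethereum's Casper FFG, which needs a two-thirds supermajority) can no longer proceed. The function name, operator list, outage probabilities, and single shared-infrastructure shock are hypothetical modeling assumptions.

```python
import random


def prob_finality_stall(operators, shared_shock_p, n_paths=20_000, seed=1):
    """Estimate P(offline stake share > 1/3) for one period.

    operators: list of (stake_share, idiosyncratic_outage_p, on_shared_infra) tuples.
    shared_shock_p: chance the shared infrastructure fails, taking down every operator on it.
    """
    rng = random.Random(seed)
    stalls = 0
    for _ in range(n_paths):
        shock = rng.random() < shared_shock_p
        offline = sum(
            share
            for share, p, shared in operators
            if (shared and shock) or rng.random() < p
        )
        if offline > 1 / 3:
            stalls += 1
    return stalls / n_paths


# Hypothetical set: two large operators (35% of stake) share one provider; the rest are independent.
operators = [(0.20, 0.01, True), (0.15, 0.01, True), (0.10, 0.01, False)]
operators += [(0.01, 0.01, False)] * 55

independent = prob_finality_stall(operators, shared_shock_p=0.0)
correlated = prob_finality_stall(operators, shared_shock_p=0.02)
print(f"no shared shock: {independent:.4f}, 2% shared shock: {correlated:.4f}")
```

Each operator's 1% outage rate is identical in both runs; the jump in stall probability comes entirely from the shared dependency, which is the kind of validator-driven instability a volatility surface would need to reflect.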

Evolution

The trajectory of Validator Performance has moved from basic uptime monitoring to the integration of complex cryptographic proofs and decentralized identity frameworks. Initially, validators were evaluated solely on their ability to remain online; today, the discourse centers on the quality of block construction and the mitigation of validator-induced censorship.

This evolution mirrors the broader maturation of financial markets, where the focus shifts from simple access to the optimization of execution quality and the mitigation of systemic externalities.

Validator Performance metrics have transitioned from simple availability checks to sophisticated measures of protocol-level risk and execution quality.

The rise of liquid staking has accelerated this evolution, as capital becomes increasingly decoupled from specific validators. This shift forces a commoditization of Validator Performance, where protocols compete for stake based on their ability to provide superior risk-adjusted returns. The resulting market structure is highly competitive, yet fragile, as the concentration of stake in a small number of high-performing entities creates systemic points of failure that demand constant vigilance from the derivatives market.
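Stake concentration can be quantified directly. The sketch below computes two standard concentration measures over hypothetical per-entity stake: a Nakamoto-style coefficient (the fewest entities whose combined stake exceeds a threshold, one third by default, enough to stall BFT-style finality) and the Herfindahl-Hirschman index (the sum of squared stake shares). The entity stakes are illustrative, not real network data.

```python
def nakamoto_coefficient(stakes, threshold=1 / 3):
    """Fewest entities whose combined stake exceeds `threshold` of the total."""
    total = sum(stakes)
    running = 0.0
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running > threshold * total:
            return count
    return len(stakes)  # only reached if threshold >= 1


def herfindahl_index(stakes):
    """Sum of squared stake shares: 1/n for an even split across n entities, 1.0 for one entity."""
    total = sum(stakes)
    return sum((s / total) ** 2 for s in stakes)


# Hypothetical stake (arbitrary units) held by seven entities.
stakes = [30, 20, 10, 10, 10, 10, 10]
print(nakamoto_coefficient(stakes))                 # 2
print(nakamoto_coefficient(stakes, threshold=0.5))  # 3
print(round(herfindahl_index(stakes), 4))           # 0.18
```

A falling coefficient or a rising index signals the fragility described above even when every individual entity performs well.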

Horizon

Future developments in Validator Performance will likely focus on the automated, programmatic mitigation of validator-level risks through decentralized, trustless protocols.

We expect the integration of zero-knowledge proofs to provide verifiable, real-time audits of validator performance, eliminating the reliance on centralized data providers and reducing the information asymmetry currently present in the market.

| Future Trend | Technological Driver | Market Impact |
| --- | --- | --- |
| Automated Slashing Protection | Zero Knowledge Proofs | Lower Risk Premiums |
| Performance-Linked Yields | Smart Contract Oracles | Efficient Capital Allocation |
| Decentralized Validator Scoring | Governance Tokens | Reduced Systemic Risk |

The convergence of Validator Performance metrics with automated derivative clearinghouses will allow for the creation of new financial instruments that hedge against specific network-level risks. These advancements will move the industry toward a more resilient, transparent, and efficient financial system where the underlying protocol integrity is directly quantifiable and tradable.