Essence

Trustless Data Sources function as the verifiable bridge between external market reality and on-chain execution environments. These mechanisms replace traditional trusted intermediaries with cryptographic proofs, ensuring that the input data for derivative contracts remains accurate, tamper-proof, and accessible without reliance on a centralized authority.

Trustless data sources provide the cryptographic foundation for decentralized financial agreements by ensuring input integrity without central intermediaries.

The systemic value lies in eliminating the counterparty risk inherent in reporting data. When an option contract settles against an asset price, the mechanism supplying that price becomes a single point of failure. Decentralized Oracle Networks and Zero-Knowledge Proof systems address this by requiring either consensus among diverse, independent nodes or mathematical verification of the reported data.

This architecture transforms data from a subjective, vulnerable input into a verifiable one whose integrity can be checked rather than assumed.

Origin

The necessity for Trustless Data Sources arose from the fundamental architectural constraint of blockchains. Smart contracts exist in isolated environments, unable to access off-chain data natively without breaking consensus. Early attempts relied on centralized data feeds, which introduced massive systemic risk.

- Centralized Oracles: These early models failed because a single malicious or compromised data provider could trigger mass liquidations across entire protocols.

- Decentralized Oracle Networks: The development of Chainlink and similar protocols introduced multi-node aggregation to mitigate the risk of individual data corruption.

- Cryptographic Proofs: Advances in Zero-Knowledge Succinct Non-Interactive Arguments of Knowledge (zk-SNARKs) enabled data providers to prove the correctness of their computations without revealing the underlying private data.

This evolution reflects a transition from trusting human operators to trusting mathematical certainty. The historical failures of early exchange data feeds highlighted the danger of relying on proprietary APIs, driving the industry toward the current standard of verifiable, decentralized inputs.

Theory

The mechanics of Trustless Data Sources rely on distributed consensus or cryptographic verification. When multiple nodes provide data, the protocol applies an aggregation function, such as taking the median, weighted averaging, or outlier filtering, to determine the final accepted value.

This process mimics market price discovery, aggregating dispersed information into a single, reliable state.

Mathematical aggregation of data across independent nodes minimizes the impact of individual malicious actors on derivative settlement.
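The aggregation step can be illustrated with a minimal sketch. The function names, the 5% deviation band, and the sample prices below are assumptions chosen for illustration, not the parameters of any particular oracle network.

```python
from statistics import median

def filter_outliers(reports, max_deviation=0.05):
    """Keep reports within max_deviation (a fraction) of the raw median."""
    mid = median(reports)
    return [r for r in reports if abs(r - mid) <= max_deviation * abs(mid)]

def weighted_average(reports, weights):
    """Average in which each report counts in proportion to its weight."""
    return sum(r * w for r, w in zip(reports, weights)) / sum(weights)

def aggregate(reports, max_deviation=0.05):
    """Outlier filtering followed by a median over the surviving reports."""
    return median(filter_outliers(reports, max_deviation))

# Hypothetical round: four honest nodes and one wildly wrong report.
reports = [100.2, 99.8, 100.0, 100.1, 250.0]
print("naive mean:", sum(reports) / len(reports))
print("aggregated:", aggregate(reports))
```

The naive mean is pulled far from the honest cluster by the single bad report, while the filtered median stays inside it, which is why median-style aggregation is a common choice for settlement prices.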

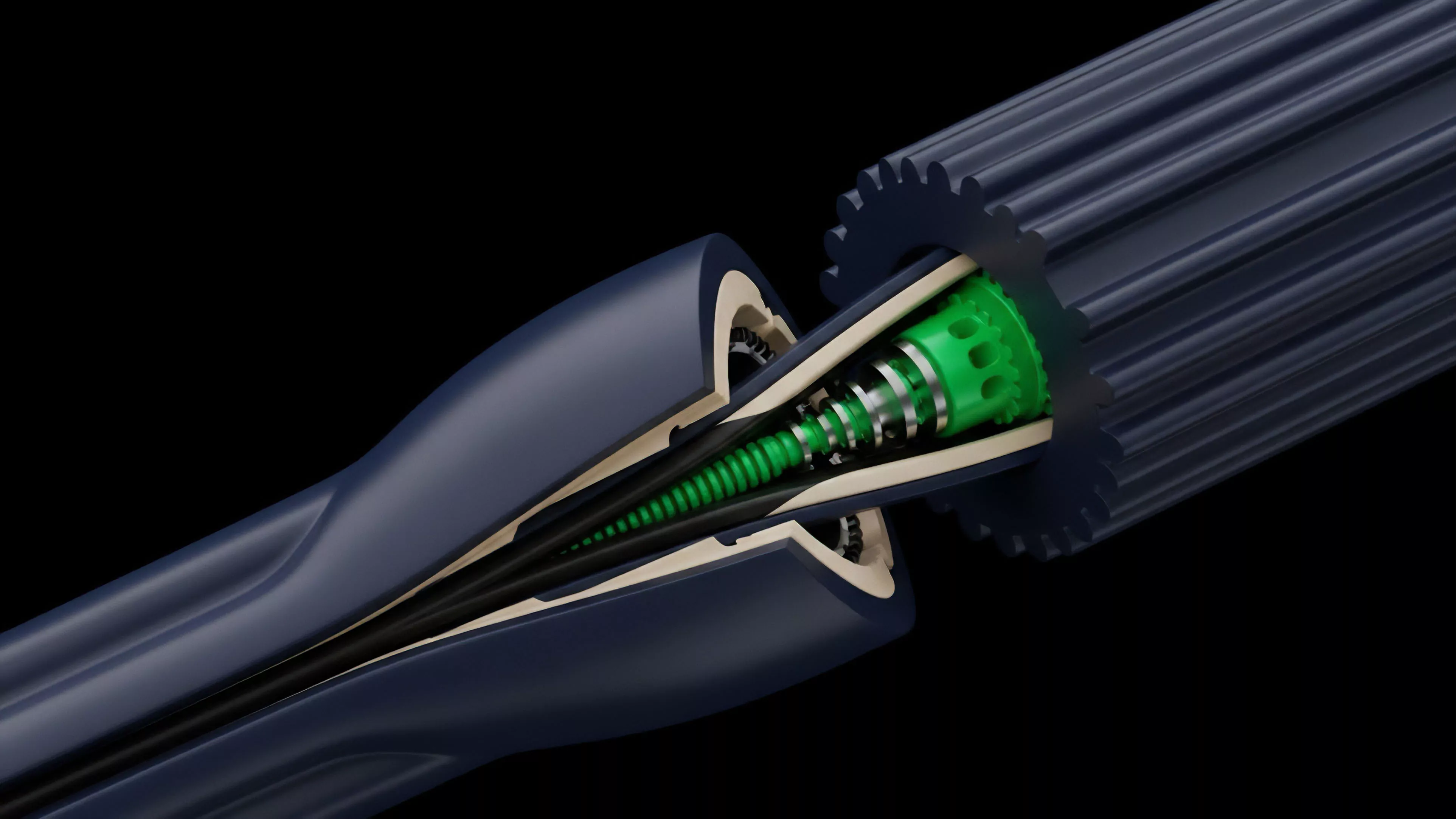

The technical architecture involves several critical components:

| Component | Functional Role |
| --- | --- |
| Data Provider Nodes | Fetch raw market data from various off-chain sources. |
| Consensus Engine | Validates and aggregates data to reach a single truth. |
| On-chain Registry | Maintains the current, verified state accessible by smart contracts. |
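How the three components in the table fit together can be sketched as a toy pipeline. Everything here is hypothetical: `Registry`, `run_round`, the quorum of three, and the 60-second staleness limit are illustrative assumptions, and the "registry" is an in-memory stand-in rather than a real contract.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional

@dataclass
class Registry:
    """In-memory stand-in for the on-chain registry."""
    value: Optional[float] = None
    updated_at: float = 0.0

    def publish(self, value, now):
        self.value, self.updated_at = value, now

    def read(self, now, max_age=60.0):
        # Consumers refuse values that are missing or too old.
        if self.value is None or now - self.updated_at > max_age:
            raise RuntimeError("stale or missing value")
        return self.value

def run_round(providers, registry, now, quorum=3):
    """Consensus engine: collect reports, require a quorum, publish the median."""
    reports = []
    for fetch in providers:
        try:
            reports.append(fetch())
        except Exception:
            continue  # a failed provider contributes no report this round
    if len(reports) < quorum:
        return False
    registry.publish(median(reports), now)
    return True

# Hypothetical provider nodes, each returning a price from its own source.
providers = [lambda: 100.0, lambda: 101.0, lambda: 99.0]
registry = Registry()
run_round(providers, registry, now=1000.0)
print(registry.read(now=1010.0))
```

Timestamps are passed in explicitly so the sketch stays deterministic; a real deployment would take them from block time.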

Behavioral game theory dictates the design of these systems. Staking mechanisms and slashing penalties force node operators to act honestly. If a node reports data that deviates significantly from the median, the protocol penalizes the operator, effectively creating an adversarial environment where honesty is the most profitable strategy.
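The incentive logic described above can be sketched in a few lines. The 2% tolerance, 10% slash fraction, flat reward, and node names are assumptions for illustration only; real protocols define their own penalty schedules.

```python
from statistics import median

def settle_round(reports, stakes, tolerance=0.02, slash_fraction=0.10, reward=1.0):
    """Reward nodes near the median and slash those that deviate too far.

    reports: {node: reported value}; stakes: {node: staked amount}.
    Returns (accepted value, updated stakes).
    """
    accepted = median(reports.values())
    new_stakes = dict(stakes)
    for node, value in reports.items():
        if abs(value - accepted) > tolerance * abs(accepted):
            new_stakes[node] -= slash_fraction * stakes[node]  # penalty
        else:
            new_stakes[node] += reward  # payment for an in-band report
    return accepted, new_stakes

# Hypothetical round: node "d" reports a price far from the others.
reports = {"a": 100.0, "b": 100.5, "c": 99.5, "d": 120.0}
stakes = {node: 1000.0 for node in reports}
accepted, stakes_after = settle_round(reports, stakes)
print(accepted, stakes_after)
```

Under these assumed parameters a deviating node loses far more per round than an honest node earns, so misreporting only pays if the attacker's gain elsewhere exceeds the stake at risk.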

Approach

Current implementations prioritize speed and cost-efficiency while maintaining security.

Protocols often use Off-chain Computation to handle complex data processing, pushing only the final, verified result on-chain to minimize gas expenditures. This hybrid approach balances the high throughput requirements of active derivatives markets with the security demands of decentralized finance. The strategy for ensuring data integrity involves:

- Reputation Scoring: Assigning weight to nodes based on historical accuracy and uptime.

- Latency Minimization: Utilizing high-frequency polling to ensure that the on-chain data remains synchronized with rapidly shifting spot markets.

- Adversarial Testing: Regularly subjecting data feeds to stress tests to identify potential failure modes in volatile market conditions.
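Reputation scoring from the list above can be illustrated with a reputation-weighted median. This is a sketch under stated assumptions: the integer weights, the exponential-moving-average update, and its `alpha` value are hypothetical choices, not a documented scheme of any specific protocol.

```python
def weighted_median(values, weights):
    """Smallest value at which cumulative weight reaches half the total weight."""
    pairs = sorted(zip(values, weights))
    half = sum(weights) / 2
    running = 0.0
    for value, weight in pairs:
        running += weight
        if running >= half:
            return value
    raise ValueError("no reports")

def update_reputation(score, was_accurate, alpha=0.1):
    """Move the score toward 1 after an accurate report, toward 0 otherwise."""
    target = 1.0 if was_accurate else 0.0
    return (1 - alpha) * score + alpha * target

# Hypothetical round: three low-reputation nodes report the same inflated price.
values = [100.0, 101.0, 150.0, 150.0, 150.0]
weights = [8, 7, 1, 1, 1]
print(weighted_median(values, weights))
```

An unweighted median of these five reports would land on the inflated price, because the three colluding nodes form a majority by count. Weighting by track record means they would first need to accumulate reputation, which raises the cost of the attack.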

Verifiable data feeds allow for the construction of sophisticated financial instruments that operate with the same reliability as traditional centralized counterparts.

This design is elegant, and dangerous if its consequences are ignored. By decoupling the data source from the settlement logic, protocols achieve modularity. However, this creates a new systemic dependency: the security of the derivative is bounded by the integrity of the oracle mechanism.

Evolution

The transition from simple data feeds to Zero-Knowledge Oracles marks a significant shift in protocol capability.

Early systems focused on providing basic price data for liquid assets. Modern frameworks now support complex, multi-layered data inputs, including volatility indices, cross-chain state proofs, and private data verification. This evolution is driven by the demand for higher capital efficiency.

By using Cryptographic Proofs, protocols can now verify data directly from the source (such as an exchange’s internal ledger) without having to trust an intermediary oracle provider. This removes the middleman entirely, moving closer to a truly permissionless financial system. One might consider the parallel to historical financial clearinghouses.

Just as clearinghouses were established to guarantee settlement in fragmented markets, decentralized protocols are building their own automated, trustless clearing mechanisms. The shift toward Modular Data Layers suggests that the future of derivatives will not rely on one single, monolithic data provider, but on a constellation of specialized, verifiable sources.
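To make the idea of verifying data directly against its source more concrete, the sketch below uses a Merkle inclusion proof. This is a deliberately simpler technique than a zk-SNARK and hides nothing about the data; it only shows how a consumer can check that a record belongs to a committed dataset without trusting whoever relayed it. The ledger entries and function names are hypothetical, and production trees usually add domain separation between leaf and internal hashes, which is omitted here.

```python
import hashlib

def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def _next_level(level):
    """Hash adjacent pairs; the caller guarantees an even number of nodes."""
    return [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]

def merkle_root(leaves):
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # duplicate the last node on odd levels
        level = _next_level(level)
    return level[0]

def merkle_proof(leaves, index):
    """Return sibling hashes from leaf to root as (hash, sibling_is_left)."""
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return proof

def verify(leaf, proof, root):
    """Recompute the root from the leaf and its sibling path."""
    node = h(leaf)
    for sibling, sibling_is_left in proof:
        node = h(sibling + node) if sibling_is_left else h(node + sibling)
    return node == root

# Hypothetical ledger entries committed to by the data source.
ledger = [b"BTC:64000", b"ETH:3100", b"SOL:150"]
root = merkle_root(ledger)          # the only value that must be published
proof = merkle_proof(ledger, 2)     # supplied alongside the entry
print(verify(b"SOL:150", proof, root), verify(b"SOL:999", proof, root))
```

Only the root needs to live on-chain; any party can then relay an entry with its proof, and a tampered entry fails verification regardless of who delivered it.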

Horizon

The next phase involves the integration of Real-time Proofs that allow for instantaneous, risk-adjusted margin calculations. As protocols move toward sub-second latency, the reliance on Trustless Data Sources will increase, making the security of these feeds the most important factor in protocol stability.

Future developments will likely focus on:

- Cross-chain Data Aggregation: Unifying liquidity states across disparate networks to prevent fragmentation.

- Privacy-preserving Oracles: Enabling the use of proprietary or sensitive financial data without exposing the underlying information.

- Autonomous Governance: Allowing protocol parameters to adjust automatically based on real-time data inputs from these trustless sources.

The convergence of high-frequency data and smart contract execution will redefine market microstructure. The winners in this space will be those who successfully balance the technical complexity of cryptographic verification with the practical needs of global, 24/7 liquidity providers.