Essence

Data Aggregation Efficiency is the speed, in both computation and data delivery, at which fragmented liquidity signals, order books, and trade histories are unified into a single, actionable representation of market state. In decentralized derivatives, it is the functional backbone of price discovery. The latency between an event on a decentralized exchange and its incorporation into a margin engine determines the integrity of liquidation thresholds and the precision of risk management models.

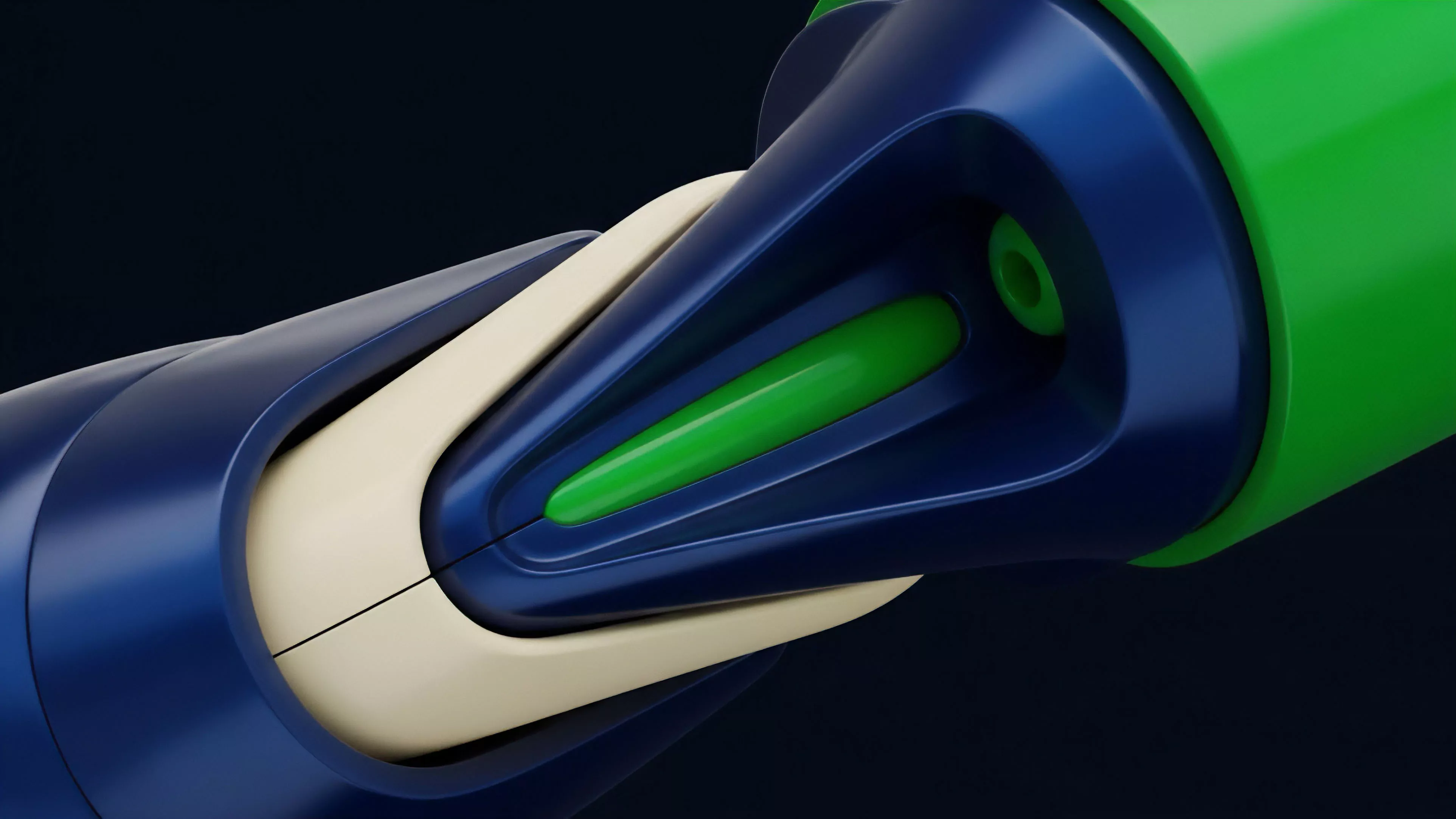

Data Aggregation Efficiency dictates the speed at which disparate liquidity signals converge into a unified, actionable market state for derivatives.

This construct functions as the sensory system for protocol-level risk. When data arrives fragmented or delayed, the derivative pricing mechanism operates on outdated information, leading to toxic flow and suboptimal capital allocation. True efficiency minimizes this temporal gap, ensuring that every participant views the same market truth simultaneously, which is the prerequisite for stable collateral management and accurate volatility estimation.

Origin

The necessity for Data Aggregation Efficiency surfaced alongside the proliferation of automated market makers and cross-chain liquidity fragmentation.

Early decentralized finance iterations relied on single-source price feeds, which created systemic vulnerabilities when those sources failed or suffered from oracle manipulation. Market participants required a mechanism to synthesize data from multiple venues to create a resilient, composite index price.

- Liquidity Fragmentation forced developers to seek methods for pooling order flow across distinct smart contract environments.

- Oracle Vulnerabilities drove the development of decentralized price aggregation to prevent single-point failures in liquidation engines.

- Latency Arbitrage pushed engineers to optimize data pipelines to ensure local protocol state kept pace with global market volatility.

This evolution mirrors the history of traditional electronic communication networks, where the consolidation of order books became the standard for fair price discovery. In decentralized systems, however, the challenge involves not just speed but cryptographic verification of the aggregated data, necessitating complex consensus mechanisms to ensure the truthfulness of the final price output.

Theory

The theoretical framework rests on the reduction of information asymmetry through computational optimization. At the protocol level, Data Aggregation Efficiency is modeled as the minimization of the error function between the realized market price and the synthetic index used for derivative valuation.

When this error grows, the index drifts from the tradable price and the protocol becomes susceptible to predatory liquidation strategies.
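One way to write this error down, as a sketch only: the source does not specify a functional form, so mean squared error is assumed here. The symbols are illustrative: $P_t$ is the realized market price at time $t$, $I_t$ is the synthetic index, $w_i$ is the weight of venue $i$, $p_{i,t}$ is that venue's price, and $\tau_i$ is the latency with which its data reaches the aggregator.

```latex
E = \frac{1}{T} \sum_{t=1}^{T} \left( P_t - I_t \right)^2,
\qquad
I_t = \sum_{i=1}^{N} w_i \, p_{i,\, t - \tau_i},
\qquad
\sum_{i=1}^{N} w_i = 1
```

Under these assumptions, latency enters through $\tau_i$: the larger the delays, the further $I_t$ lags $P_t$ during fast moves, and the larger $E$ becomes.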

Mathematical Modeling

The valuation of complex options requires high-frequency inputs. If the aggregation process introduces jitter, the calculated Greeks, specifically Delta and Gamma, become unreliable.

| Metric | Impact of Inefficiency |
| --- | --- |
| Latency | Delayed liquidation triggers |
| Jitter | Inaccurate volatility surface |
| Throughput | Stale order book updates |
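To illustrate how a stale input distorts the Greeks, the sketch below compares Delta and Gamma computed from a fresh price and from a lagged one. It assumes Black-Scholes pricing of a European call, which the source does not name, and every number (spot, strike, volatility, expiry) is made up for illustration.

```python
import math


def _norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_delta_gamma(spot, strike, vol, t_years, rate=0.0):
    """Black-Scholes Delta and Gamma of a European call (assumed model)."""
    sigma_sqrt_t = vol * math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / sigma_sqrt_t
    delta = _norm_cdf(d1)
    gamma = _norm_pdf(d1) / (spot * sigma_sqrt_t)
    return delta, gamma


# Hypothetical one-day option with 80% annualized volatility.
STRIKE, VOL, T_YEARS = 100.0, 0.8, 1.0 / 365.0

# The market has moved to 101, but the aggregator still reports 100.
fresh_delta, fresh_gamma = call_delta_gamma(101.0, STRIKE, VOL, T_YEARS)
stale_delta, stale_gamma = call_delta_gamma(100.0, STRIKE, VOL, T_YEARS)

delta_error = abs(fresh_delta - stale_delta)
gamma_error = abs(fresh_gamma - stale_gamma)
print(f"Delta error from a 1% stale price: {delta_error:.4f}")
print(f"Gamma error from a 1% stale price: {gamma_error:.4f}")
```

Short-dated, near-the-money options are used here because their Greeks change fastest with the underlying price, which is where aggregation lag would be expected to hurt most.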

Protocol integrity depends on minimizing the gap between real-time global price action and the internal state of the derivative margin engine.

The physics of these systems involves a constant struggle between decentralization and speed. Aggregating data across decentralized nodes introduces inherent overhead. The goal is to reach a threshold where the overhead does not compromise the validity of the margin calculations, effectively balancing the speed of execution with the safety of the consensus layer.

Sometimes, I consider whether our obsession with decentralization blinds us to the raw engineering reality that information must travel, and travel carries a cost in time.

Approach

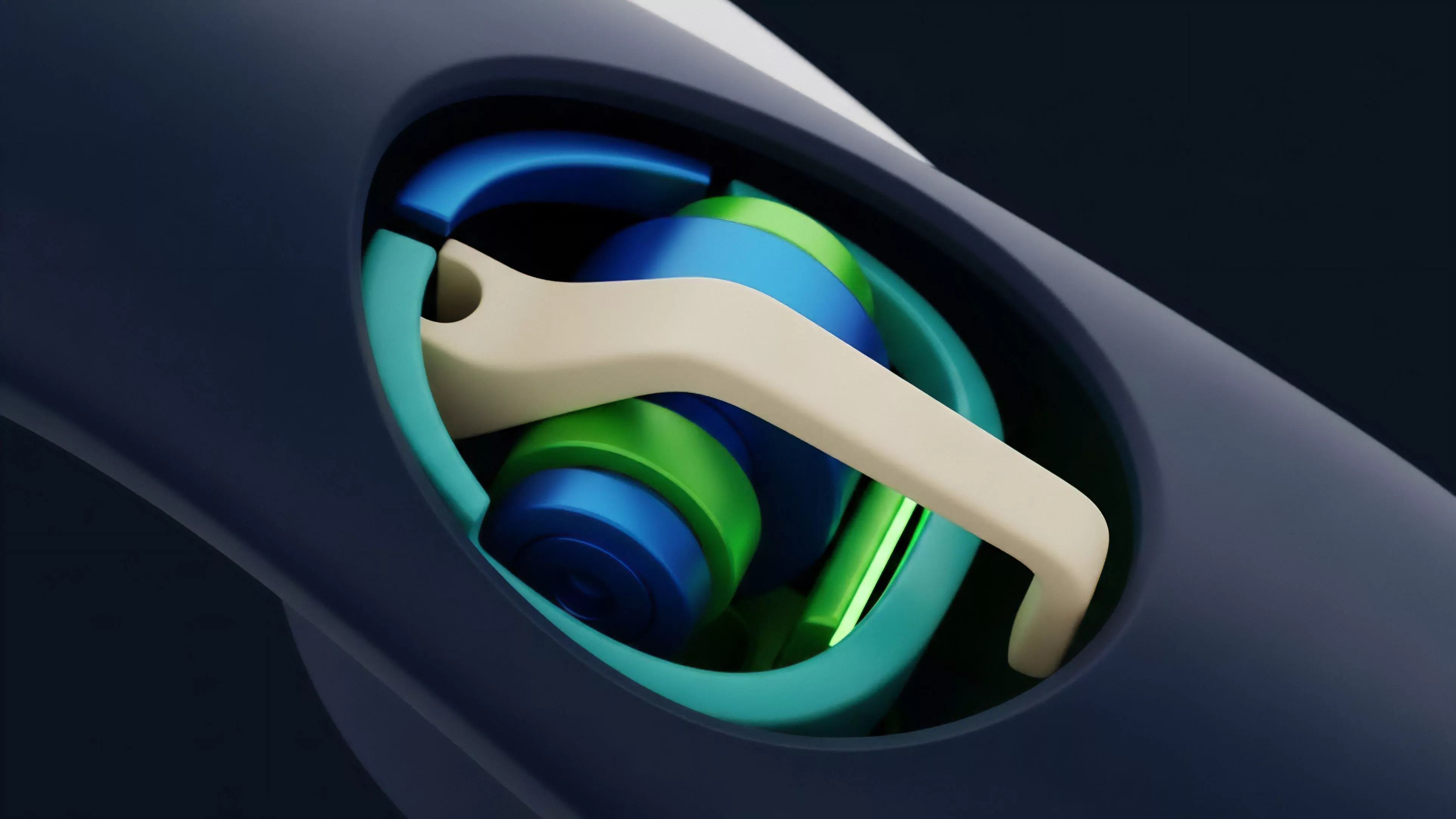

Current methodologies emphasize off-chain computation and optimistic verification to bypass the limitations of on-chain processing. Protocols now use decentralized oracle networks that perform the heavy lifting of data cleaning and normalization before submitting the finalized state to the blockchain. This improves Data Aggregation Efficiency without overwhelming the base layer with raw data processing.

- Preprocessing Layers filter noise from volatile, low-liquidity exchanges before data reaches the margin engine.

- Optimistic Updates allow for near-instantaneous price changes, assuming the integrity of the data source until proven otherwise.

- Redundancy Protocols ensure that if one data stream fails, the aggregate remains stable by dynamically reweighting the inputs.
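The preprocessing and redundancy steps above can be sketched as follows. This is a minimal illustration, not any specific protocol's implementation: the median-deviation filter, the 2% threshold, and the venue names are all assumptions chosen for the example.

```python
from statistics import median


def filter_outliers(prices, max_deviation=0.02):
    """Drop venues whose price is more than max_deviation (as a fraction)
    away from the cross-venue median. The threshold is an assumed value."""
    mid = median(prices.values())
    return {v: p for v, p in prices.items() if abs(p - mid) / mid <= max_deviation}


def reweight(weights, live_venues):
    """Renormalize weights over the venues still reporting, so they sum to 1."""
    live = {v: w for v, w in weights.items() if v in live_venues}
    total = sum(live.values())
    if total == 0:
        raise ValueError("no live venues with nonzero weight")
    return {v: w / total for v, w in live.items()}


def aggregate(prices, weights, max_deviation=0.02):
    """Filter noisy venues, reweight the survivors, return the composite price."""
    clean = filter_outliers(prices, max_deviation)
    live_weights = reweight(weights, clean.keys())
    return sum(clean[v] * w for v, w in live_weights.items())


# Hypothetical snapshot: venue_d has stopped reporting, venue_c is anomalous.
prices = {"venue_a": 100.0, "venue_b": 100.2, "venue_c": 112.0}
weights = {"venue_a": 0.4, "venue_b": 0.3, "venue_c": 0.2, "venue_d": 0.1}

index_price = aggregate(prices, weights)
print(f"composite price: {index_price:.4f}")
```

In this snapshot the missing stream (`venue_d`) and the filtered outlier (`venue_c`) both drop out, and the remaining weights are rescaled so the composite stays a proper weighted average.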

The strategy focuses on creating a hierarchy of data trust. By assigning weights to different exchanges based on their historical volume and reliability, protocols construct a more accurate composite price. This weighted aggregation prevents anomalous spikes on a single, illiquid venue from cascading into unnecessary liquidations across the entire derivative ecosystem.
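A minimal sketch of such a trust-weighted composite, assuming the weight is simply the product of historical volume and a reliability score between 0 and 1. That formula, the `Venue` type, and the sample numbers are illustrative assumptions; the source does not specify how the two factors are combined.

```python
from dataclasses import dataclass


@dataclass
class Venue:
    price: float        # latest reported price
    volume: float       # historical traded volume (any consistent unit)
    reliability: float  # assumed score in [0, 1], e.g. uptime share


def trust_weights(venues):
    """Weight each venue by volume * reliability, normalized to sum to 1."""
    raw = {name: v.volume * v.reliability for name, v in venues.items()}
    total = sum(raw.values())
    if total == 0:
        raise ValueError("all venues have zero trust weight")
    return {name: r / total for name, r in raw.items()}


def composite_price(venues):
    """Trust-weighted average of venue prices."""
    weights = trust_weights(venues)
    return sum(weights[name] * v.price for name, v in venues.items())


# Hypothetical venues: one deep and reliable, one illiquid and spiking.
venues = {
    "deep_venue": Venue(price=100.0, volume=9000.0, reliability=0.99),
    "thin_venue": Venue(price=130.0, volume=100.0, reliability=0.60),
}

weighted = composite_price(venues)
simple_mean = sum(v.price for v in venues.values()) / len(venues)
print(f"weighted: {weighted:.2f}, simple mean: {simple_mean:.2f}")
```

The simple mean is pulled halfway toward the spike, while the weighted composite barely moves, which is the behaviour that keeps a single illiquid venue from triggering liquidations.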

Evolution

The path from simple moving averages to current multi-dimensional, weight-adjusted price discovery marks a shift toward institutional-grade infrastructure.

Early systems struggled with the basic task of reading data from two sources simultaneously. The current state utilizes sophisticated filtering that accounts for exchange-specific liquidity depth, ensuring that the aggregate is not merely a mean, but a representation of the actual cost to execute a trade.
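The difference between a mean of quotes and the actual cost to execute can be sketched by walking an order book. The example below assumes ask levels given as `(price, quantity)` pairs and merges two hypothetical venues into one book; the function name and data are illustrative.

```python
def execution_price(asks, size):
    """Average price paid to buy `size` units by walking ask levels from the
    cheapest upward. `asks` is a list of (price, quantity) pairs.
    Returns None when the book is too shallow to fill the order."""
    remaining = size
    cost = 0.0
    for price, quantity in sorted(asks):
        fill = min(remaining, quantity)
        cost += fill * price
        remaining -= fill
        if remaining <= 0:
            break
    if remaining > 0:
        return None
    return cost / size


# Hypothetical books: the thin venue shows the best quote but almost no depth.
deep_book = [(100.0, 5.0), (100.1, 5.0)]
thin_book = [(99.9, 0.1), (105.0, 1.0)]

merged_book = deep_book + thin_book
order_size = 2.0

merged_fill = execution_price(merged_book, order_size)
thin_fill = execution_price(thin_book, order_size)

print(f"merged book fill price: {merged_fill}")
print(f"thin book alone: {thin_fill}")
```

The thin venue's top-of-book quote looks best, yet it cannot fill the order on its own. A depth-aware aggregate reflects that by pricing the trade against the liquidity that is actually available.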

Institutional-grade derivative platforms now prioritize data integrity as the primary defense against systemic market manipulation.

As these systems matured, they moved away from static thresholds toward adaptive models that respond to market volatility. During high-volatility events, the aggregation mechanism automatically shifts to prioritize sources with the highest volume, effectively ignoring low-liquidity venues that might exhibit erratic price action. This adaptability is the defining characteristic of modern, resilient derivative protocols.
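One possible shape for such an adaptive model, offered as a sketch: weights are proportional to volume raised to an exponent that grows with recent realized volatility, so weight concentrates on the largest venues as markets become turbulent. The exponent rule, the `sensitivity` parameter, and the sample data are assumptions, not a documented protocol mechanism.

```python
import math
from statistics import pstdev


def realized_vol(prices):
    """Standard deviation of log returns over a recent price window."""
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return pstdev(returns)


def adaptive_weights(volumes, vol, sensitivity=50.0):
    """Weights proportional to (relative volume) ** alpha, where
    alpha = 1 + sensitivity * vol. Higher volatility gives a larger alpha
    and more weight on high-volume venues. `sensitivity` is a tuning
    parameter assumed for this sketch."""
    alpha = 1.0 + sensitivity * vol
    top = max(volumes.values())
    # Scale by the largest volume first to keep the powers numerically small.
    raw = {name: (v / top) ** alpha for name, v in volumes.items()}
    total = sum(raw.values())
    return {name: r / total for name, r in raw.items()}


# Hypothetical venues and two recent price windows.
volumes = {"major": 900.0, "minor": 100.0}
calm_window = [100.0, 100.1, 100.0, 100.1]
stressed_window = [100.0, 104.0, 98.0, 105.0]

calm = adaptive_weights(volumes, realized_vol(calm_window))
stressed = adaptive_weights(volumes, realized_vol(stressed_window))

print(f"calm:     {calm}")
print(f"stressed: {stressed}")
```

In the calm window the weights stay close to plain volume shares. In the stressed window the low-volume venue is effectively ignored without being hard-coded out, so it regains influence once volatility subsides.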

Horizon

Future developments will likely focus on the integration of zero-knowledge proofs to verify the aggregation process itself.

If a protocol can prove the mathematical correctness of its aggregated data without revealing the raw inputs, it achieves a new standard of trustless efficiency. This will enable the creation of highly leveraged derivative instruments that currently remain impossible due to the risks associated with data transparency and manipulation.

- ZK-Aggregation will allow for the verification of multi-source data integrity at the protocol layer.

- Predictive Aggregation models will utilize machine learning to anticipate data gaps before they occur.

- Cross-Chain Synthesis will unify liquidity across disparate blockchain environments, creating a truly global derivative market.

The next frontier involves the move toward native, on-chain order books that eliminate the need for external oracles. By processing data aggregation directly within the execution environment, we remove the final barrier between the market state and the derivative contract. This represents the logical endpoint of the current architectural trajectory, where the protocol is its own source of truth and is far less exposed to external data degradation.