Essence

Protocol solvency hinges on the accuracy of internal supply telemetry rather than external market sentiment. Tokenomics Oracle Systems represent the architectural layer responsible for broadcasting the internal economic variables of a network ⎊ circulating supply, emission velocity, and staking participation ⎊ to the smart contract environment. These systems transform static whitepaper promises into live, verifiable data streams.

Tokenomics oracles provide the verifiable economic state required for trustless settlement of supply-contingent derivatives.

The nature of these systems involves a shift from price-centric data to state-centric data. While traditional oracles report what an asset is worth in an external currency, Tokenomics Oracle Systems report what the asset is within its own system. This includes the precise measurement of token sinks, the velocity of burn mechanisms, and the real-time distribution of governance power.

By providing this transparency, they enable the creation of derivatives that hedge against protocol-specific risks, such as unexpected inflation or changes in staking rewards.

The functional significance of this technology lies in its ability to facilitate “Economic Proofs.” A derivative contract can now execute based on the verified fact that a specific percentage of the total supply is locked in a vault, or that the daily emission rate has crossed a certain threshold. This level of granular economic data allows for a more sophisticated class of financial instruments that was previously impossible to settle on-chain without centralized intervention.

Origin

The transition from simple price discovery to complex state-contingent logic necessitated a new class of data provider.

Early algorithmic stablecoins suffered from latency in supply adjustments ⎊ a failure of telemetry ⎊ which led to the development of dedicated modules for reporting protocol-specific metrics. These predecessors were often hardcoded or relied on centralized multisig updates, creating a single point of failure that contradicted the decentralized ethos. As the complexity of decentralized finance grew, the limitations of price-only feeds became apparent.

When a protocol undergoes a “rebase” or a “hard fork,” the price alone does not convey the full economic reality for a derivative holder. The genesis of Tokenomics Oracle Systems can be traced to the need for “State-Awareness” in automated market makers and lending protocols. They emerged to bridge the gap between the isolated logic of a single smart contract and the broader economic state of the entire network.

The historical progression of these systems reflects a broader trend toward modularity. Instead of every protocol building its own internal monitoring tools, specialized Tokenomics Oracle Systems began to offer standardized feeds. This standardization allowed developers to build cross-protocol derivatives, such as options on the “Staking Yield” of one network settled in the “Stablecoin” of another, creating a more interconnected and resilient financial web.

Theory

We define the Greek “Sigma-T” as the volatility of the token supply itself ⎊ a metric often ignored by those blinded by price action.
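One way to make “Sigma-T” concrete is to treat it like price volatility and take the standard deviation of log changes in supply. The text gives no formula, so this definition, the function name `supply_volatility`, and the sample snapshots are assumptions made for illustration.

```python
import math
import statistics


def supply_volatility(supplies: list[float]) -> float:
    """Sample standard deviation of log changes between consecutive supply snapshots.

    Assumed definition of "Sigma-T": the source names the metric but gives no formula.
    """
    if len(supplies) < 3:
        raise ValueError("need at least three snapshots to estimate volatility")
    if any(s <= 0 for s in supplies):
        raise ValueError("supply snapshots must be positive")
    log_changes = [math.log(later / earlier) for earlier, later in zip(supplies, supplies[1:])]
    return statistics.stdev(log_changes)


# Hypothetical daily circulating-supply snapshots.
steady_emission = [1_000_000 * 1.001 ** day for day in range(10)]  # constant 0.1% daily growth
lumpy_emission = [1_000_000, 1_001_000, 1_050_000, 1_049_000, 1_100_000]  # unlocks and burns

sigma_t_steady = supply_volatility(steady_emission)
sigma_t_lumpy = supply_volatility(lumpy_emission)
```

A perfectly predictable emission schedule yields a Sigma-T close to zero under this definition, while irregular unlocks and burns push it up, which is the risk a supply-contingent derivative would need to price.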

In the conceptual architecture of Tokenomics Oracle Systems, the primary focus is the mathematical modeling of supply-elastic assets. Traditional Black-Scholes models assume constant volatility and log-normally distributed prices, but they fail to account for the reflexive relationship between supply expansion and market liquidity.

The delta of a tokenomics-based option measures the sensitivity of the contract value to changes in the protocol emission rate.

The theoretical structure of these oracles relies on “State-Root Verification.” Just as a physical system moves toward higher entropy, a blockchain moves toward a more complex state with every block. Tokenomics Oracle Systems must sample this state without introducing bias. This requires a rigorous application of quantitative finance to determine the “True Inflation Adjusted Price” (TIAP).

If a token price remains stable while the supply doubles, each existing token represents half its former share of the network, so the oracle must signal a fifty percent economic dilution, which is the decisive data point for setting a derivative strike price.
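The dilution arithmetic can be sketched in a few lines. The `dilution` function follows directly from the supply-doubling example. The `inflation_adjusted_price` function is only one possible reading of TIAP (scaling the quoted price by the supply ratio), since the text does not define the formula. Both function names are hypothetical.

```python
def dilution(supply_before: float, supply_after: float) -> float:
    """Fraction of a holder's network share lost when supply grows."""
    if supply_before <= 0 or supply_after <= 0:
        raise ValueError("supplies must be positive")
    return 1.0 - supply_before / supply_after


def inflation_adjusted_price(price: float, supply_before: float, supply_after: float) -> float:
    """Assumed TIAP reading: quoted price scaled by the pre/post supply ratio."""
    if supply_before <= 0 or supply_after <= 0:
        raise ValueError("supplies must be positive")
    return price * supply_before / supply_after


# Supply doubles while the quoted price stays at 2.0.
share_lost = dilution(100.0, 200.0)                        # half of each holder's share
adjusted = inflation_adjusted_price(2.0, 100.0, 200.0)     # price per pre-expansion share
```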

| Parameter | Tokenomics Derivative Application |
|---|---|
| Emission Rate | Pricing inflation-protected swaps |
| Staking Yield | Valuing yield-bearing call options |
| Burn Velocity | Determining deflationary strike prices |
| Lock-up Ratio | Assessing liquidity-at-risk for margin engines |

Consider the implications of a “Supply-Squeeze” derivative. Unlike a traditional short squeeze driven by market buying, a supply-squeeze occurs when protocol logic removes tokens from circulation. Tokenomics Oracle Systems provide the data to price the probability of such an event.

This involves calculating the “Economic Delta” ⎊ the rate at which the derivative’s value changes relative to the protocol’s internal burn rate.
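A minimal sketch of the “Economic Delta” as a numerical derivative follows. The payoff model is a toy assumption, not something the text specifies: a supply-squeeze contract that pays the shortfall of projected supply below a strike supply, with a constant per-period burn rate. All names and parameter values are hypothetical.

```python
from typing import Callable


def projected_supply(supply_now: float, burn_rate: float, periods: int) -> float:
    """Supply after `periods` of burning a constant fraction each period."""
    return supply_now * (1.0 - burn_rate) ** periods


def squeeze_payoff(
    burn_rate: float,
    supply_now: float = 1_000_000.0,
    strike_supply: float = 950_000.0,
    periods: int = 30,
) -> float:
    """Toy payoff: amount by which projected supply falls below the strike supply."""
    return max(strike_supply - projected_supply(supply_now, burn_rate, periods), 0.0)


def economic_delta(value_fn: Callable[[float], float], burn_rate: float, bump: float = 1e-6) -> float:
    """Central finite difference of contract value with respect to the burn rate."""
    return (value_fn(burn_rate + bump) - value_fn(burn_rate - bump)) / (2.0 * bump)


delta_out_of_money = economic_delta(squeeze_payoff, 0.001)  # strike not reached, no sensitivity
delta_in_the_money = economic_delta(squeeze_payoff, 0.003)  # payoff grows as burning accelerates
```

The same finite-difference helper works for any valuation function of one parameter, so the emission rate could be substituted for the burn rate without changing the approach.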

Approach

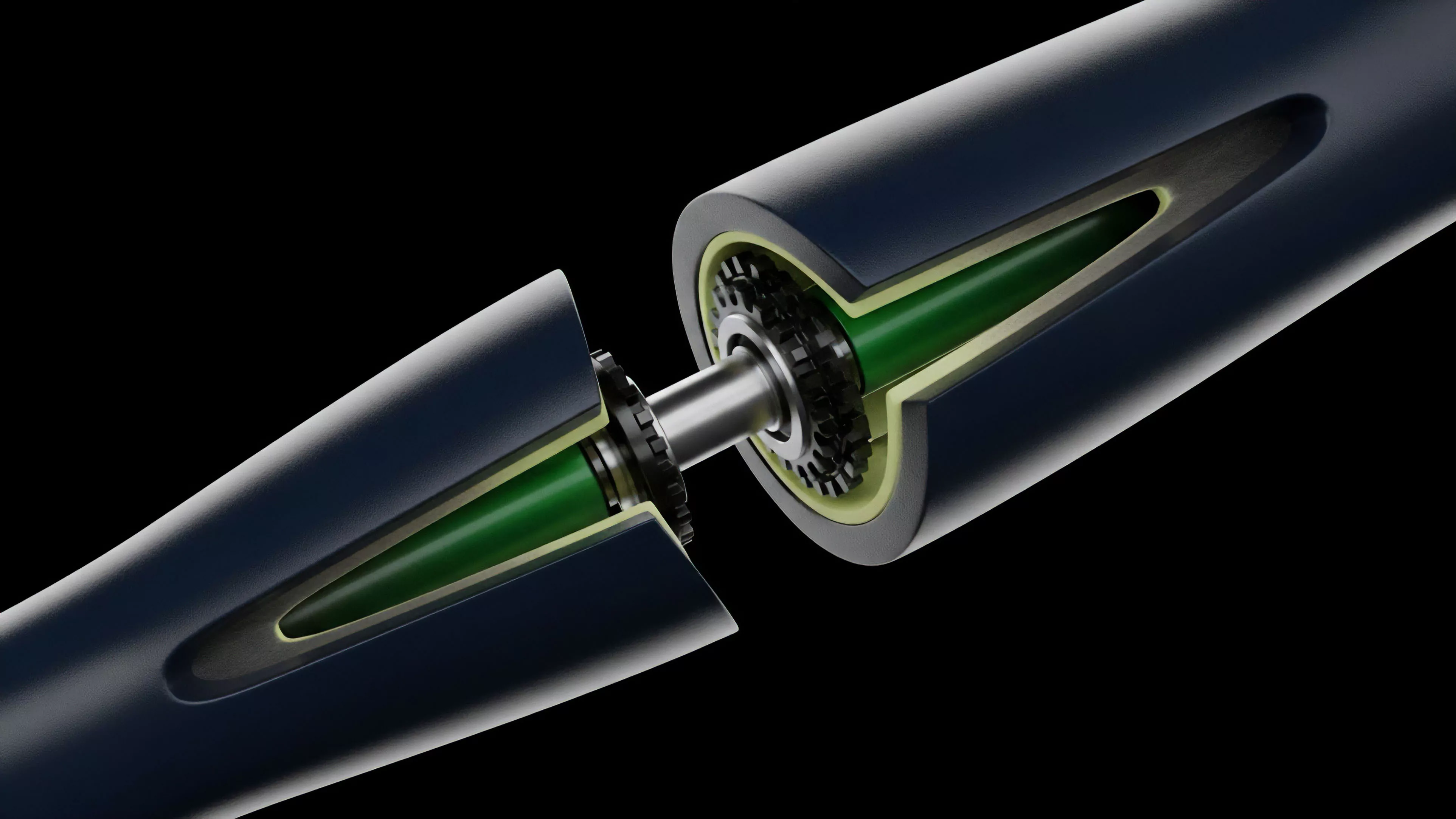

Implementation of these systems requires a multi-layered verification sequence to ensure data integrity in adversarial environments. The operational logic begins with node operators who fetch raw state data from archival nodes, so that they observe not merely the current block but the historical trajectory of the economic variables.

- Circulating supply figures derived from subtracting known protocol-owned and locked addresses from the total mint.

- Protocol-owned liquidity depth measured across multiple decentralized exchanges to assess the true exit liquidity.

- Staking participation ratios used to calculate the “Security-to-Value” metric of the network.

- Burn rate velocities tracked via event logs to provide a real-time deflationary signal.
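The first and third metrics above reduce to simple arithmetic once the raw balances are known. The sketch below assumes balances are already fetched as integers in base units. The address labels, the choice of total mint as the denominator for participation, and both function names are illustrative assumptions.

```python
def circulating_supply(total_minted: int, excluded_balances: dict[str, int]) -> int:
    """Total mint minus balances held by known protocol-owned and locked addresses."""
    excluded_total = sum(excluded_balances.values())
    if excluded_total > total_minted:
        raise ValueError("excluded balances exceed total mint")
    return total_minted - excluded_total


def participation_ratio(staked: int, total_minted: int) -> float:
    """Share of the total mint that is currently staked (assumed denominator)."""
    if total_minted <= 0:
        raise ValueError("total mint must be positive")
    return staked / total_minted


# Hypothetical snapshot in token base units.
excluded = {"treasury": 200_000, "vesting_contract": 150_000}
circulating = circulating_supply(1_000_000, excluded)
staking_share = participation_ratio(400_000, 1_000_000)
```

Integer base units are used for supply so that the subtraction is exact, and only the ratio is converted to a float.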

Once the data is gathered, it passes through an aggregation layer. This layer uses medianizing algorithms to filter out malicious data from compromised nodes. The final payload is then wrapped in a cryptographic proof ⎊ often a Zero-Knowledge Proof ⎊ to allow the consuming smart contract to verify the data without re-executing the entire supply calculation.

This efficiency is what allows Tokenomics Oracle Systems to scale across multiple chains.

- Node operators fetch on-chain state data from archival nodes to establish a baseline.

- Aggregation layers compute the median value to filter outliers and prevent manipulation.

- Cryptographic proofs verify the authenticity of the state transition for the consuming contract.

- The smart contract consumes the verified payload for derivative settlement or parameter adjustment.
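The aggregation step in this sequence can be sketched as a median over node reports. The node names, the quorum size, and the function name are hypothetical, and the proof-generation step is deliberately left out. `statistics.median_low` is used so that the result is always a value some node actually reported.

```python
import statistics


def aggregate_reports(reports: dict[str, int], min_reports: int = 3) -> int:
    """Return the low median of node-reported values, requiring a minimum quorum."""
    if len(reports) < min_reports:
        raise ValueError("not enough reports to aggregate")
    return statistics.median_low(reports.values())


# Three honest nodes roughly agree; one compromised node reports an inflated supply.
node_reports = {
    "node_a": 1_000,
    "node_b": 1_002,
    "node_c": 999,
    "node_d": 5_000_000,
}
aggregated = aggregate_reports(node_reports)
```

The single outlier has no influence on the result. A median only fails once an adversary controls roughly half of the reporting nodes, which is why it is usually paired with an economic cost for misreporting.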

Evolution

If you think your protocol is safe because you have a multi-sig, you haven’t met a determined market maker with a balance sheet and a grudge. The historical progression of Tokenomics Oracle Systems has moved from static, governance-dependent constants to autonomous, censorship-resistant streams. Early iterations were vulnerable to “Governance Attacks,” where participants would vote to change the reported supply figures to benefit their own derivative positions.

This was a catastrophic failure of design that led to the current focus on “Hard-Coded Telemetry.”

The second generation of these systems introduced “Time-Weighted Average Tokenomics” (TWAT). Similar to a time-weighted average price (TWAP), TWAT smooths out temporary spikes in supply or burn rates caused by flash loans or one-time protocol events. This prevents “Economic Flash Attacks” where an adversary briefly manipulates the protocol’s internal state to trigger a massive liquidation on a derivative platform.
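The smoothing behind TWAT can be illustrated with a plain time-weighted average, in which each observed value counts in proportion to how long it was in effect. The observation format (timestamp in seconds, value) and the function name are assumptions made for this sketch.

```python
def time_weighted_average(observations: list[tuple[int, float]], window_end: int) -> float:
    """Average of a step function: each value holds from its timestamp until the next one."""
    if not observations:
        raise ValueError("no observations")
    duration = window_end - observations[0][0]
    if duration <= 0:
        raise ValueError("window must end after the first observation")
    # Pair each observation with the timestamp at which it stops being in effect.
    end_times = [t for t, _ in observations[1:]] + [window_end]
    weighted_sum = 0.0
    for (start, value), end in zip(observations, end_times):
        if end < start:
            raise ValueError("observations must be in chronological order")
        weighted_sum += value * (end - start)
    return weighted_sum / duration


# Burn rate sits at 100 tokens per block, then spikes to 10,000 for the last 12 seconds of the hour.
burn_observations = [(0, 100.0), (3_588, 10_000.0)]
smoothed_burn_rate = time_weighted_average(burn_observations, window_end=3_600)
```

A spot reading at the end of the hour would report 10,000, while the time-weighted figure stays near the baseline. A longer window dampens manipulation further at the cost of slower reaction to genuine changes.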

The current state of the art involves “Cross-Chain State Synchronizers,” which allow a protocol on Ethereum to react to the tokenomics of a sovereign chain on Cosmos or Polkadot.

| Phase | Oracle Model | Risk Vector |
|---|---|---|
| First Generation | Hardcoded Constants | Governance Rigidity |
| Second Generation | Governance Voting | Latency and Bribery |
| Third Generation | Autonomous TOS | Smart Contract Exploits |
| Fourth Generation | ZK-State Proofs | Computational Complexity |

The most significant shift has been the move toward “Adversarial Resistance.” In the current digital asset environment, an oracle is only as good as its cost of corruption. Tokenomics Oracle Systems now incorporate “Economic Bonds” for data providers. If a provider reports an incorrect supply figure ⎊ verified by a subsequent challenge period ⎊ their bond is slashed.

This creates a game-theoretic equilibrium where the cost of lying exceeds the potential profit from manipulating a derivative settlement.
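The equilibrium condition can be written as a simple inequality: the expected slashing loss must exceed the profit from a manipulated settlement. The sketch below assumes a risk-neutral provider and treats the slash fraction and the probability that a challenge succeeds as known inputs. None of these parameters or function names come from the text.

```python
def expected_penalty(bond: float, slash_fraction: float, detection_probability: float) -> float:
    """Expected loss from misreporting: the slashed part of the bond, weighted by detection odds."""
    return bond * slash_fraction * detection_probability


def lying_is_unprofitable(
    bond: float,
    slash_fraction: float,
    detection_probability: float,
    manipulation_profit: float,
) -> bool:
    """True when the expected penalty exceeds the profit from a manipulated settlement."""
    return expected_penalty(bond, slash_fraction, detection_probability) > manipulation_profit


def minimum_bond(manipulation_profit: float, slash_fraction: float, detection_probability: float) -> float:
    """Bond size at which expected penalty equals profit; the bond must exceed this value."""
    if slash_fraction <= 0 or detection_probability <= 0:
        raise ValueError("slash fraction and detection probability must be positive")
    return manipulation_profit / (slash_fraction * detection_probability)


# Hypothetical figures: 1,000-unit bond, half slashed on a successful challenge, 80% detection odds.
deterred = lying_is_unprofitable(1_000.0, 0.5, 0.8, manipulation_profit=300.0)
not_deterred = lying_is_unprofitable(1_000.0, 0.5, 0.8, manipulation_profit=500.0)
required_bond = minimum_bond(300.0, 0.5, 0.8)
```

The inequality shows why bond sizes must scale with the open interest of the derivatives that settle against the feed: as the potential profit grows, a fixed bond eventually stops deterring misreports.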

Horizon

The future of these systems belongs to the ruthless. As we move toward a world of “Hyper-Tokenization,” every physical and digital asset will have its own internal economy. Tokenomics Oracle Systems will evolve into “Universal State Engines” that track the metabolism of the entire global financial system.

We are moving toward a terminal state in which the distinction between “Price” and “State” disappears, as price becomes a secondary derivative of the verifiable economic state.

Adversarial environments necessitate oracles that prioritize censorship resistance over sub-second latency.

We will see the integration of machine learning to predict “Tokenomics Anomalies.” Instead of just reporting the current burn rate, future Tokenomics Oracle Systems will provide a “Probabilistic Forecast” of future supply based on current network activity. This will allow for the creation of “Volatility Swaps” on the protocol’s own economic health. If a network’s activity drops, the oracle will signal an increased risk of inflation, allowing holders to hedge their exposure automatically.

Ultimately, these systems will become the “Lies-to-Truth” converters of the decentralized world. They strip away the marketing hype of “infinite scalability” and “deflationary pressure” and replace it with cold, hard, verifiable numbers. In the terminal state of crypto-finance, the protocols that survive will be those with the most transparent and robust Tokenomics Oracle Systems, as they will be the only ones capable of attracting deep, institutional-grade liquidity for their derivative markets.