Essence

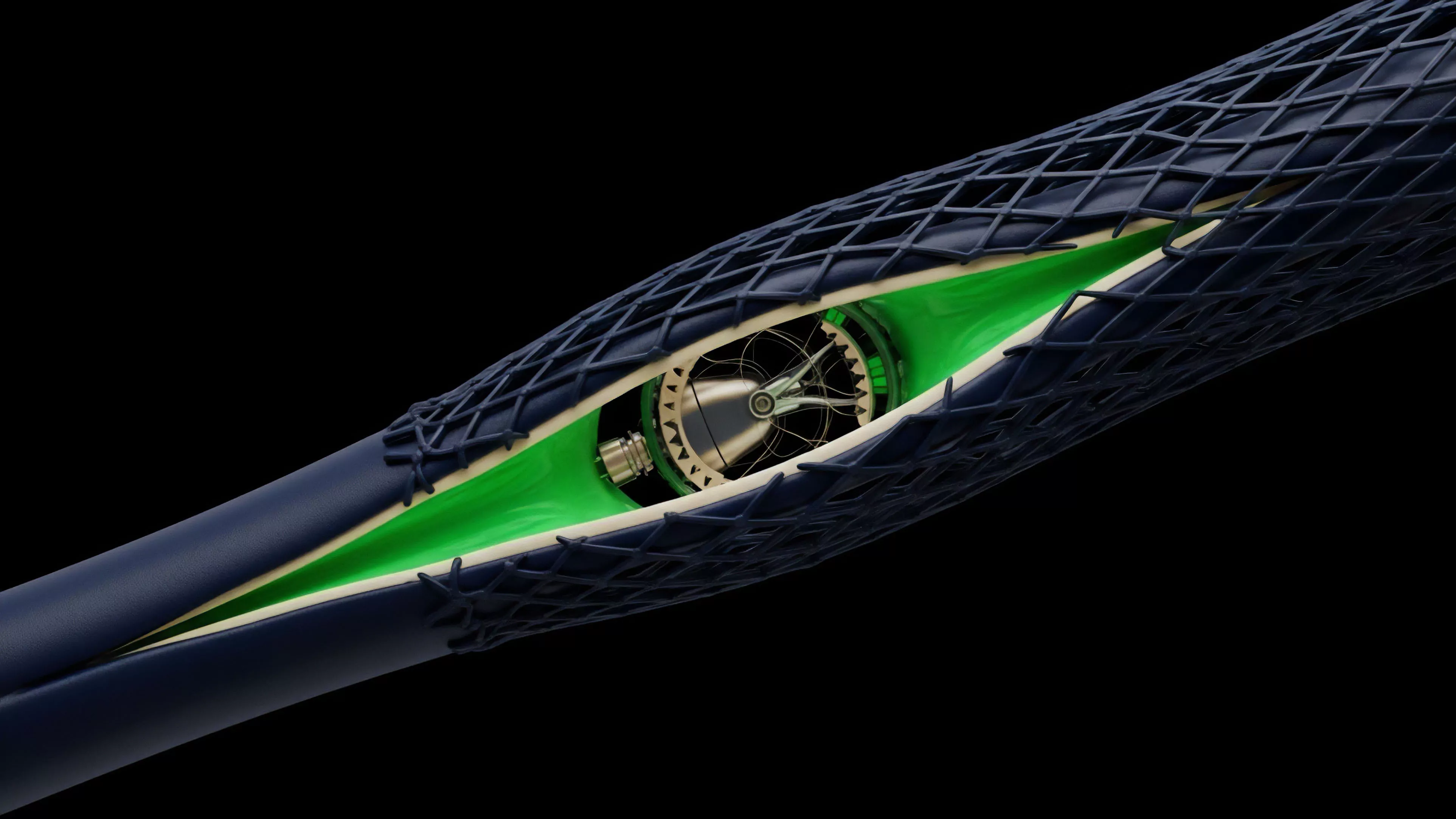

Signal Processing Techniques in decentralized derivatives markets provide the mathematical framework for extracting actionable intelligence from high-frequency market data. These methods transform raw order flow and trade execution streams into structured indicators of volatility, liquidity depth, and potential price trajectory. By applying time-series analysis and spectral decomposition, participants separate transient market noise from persistent structural trends.

Signal processing techniques enable the extraction of latent market information from noisy, high-frequency decentralized exchange data streams.

The primary objective is to quantify the underlying state of market sentiment and liquidity health. Practitioners use these tools to estimate the probability distribution of future asset prices, which directly informs the pricing of complex options and the management of collateralized positions. The efficacy of these techniques depends on maintaining low latency while preserving high signal fidelity amid the rapid, often chaotic updates inherent to blockchain-based order books.

Origin

The roots of these methodologies reside in classical electrical engineering and control theory, adapted to the specific constraints of distributed ledger environments.

Early implementations focused on simple moving averages to smooth price action, eventually progressing to more sophisticated filters such as Kalman and Butterworth filters. These adaptations emerged from the need to address the inherent latency and asynchronous nature of decentralized trade settlement.
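As a minimal illustration of moving-average smoothing and the lag it introduces, the sketch below implements a simple and an exponential moving average in plain Python. The function names, the `window` length, and the smoothing factor `alpha` are illustrative assumptions, not part of any specific protocol.

```python
from collections import deque
from typing import Deque, Iterable, List


def simple_moving_average(prices: Iterable[float], window: int) -> List[float]:
    """Trailing mean of the last `window` prices (uses fewer at the start)."""
    if window < 1:
        raise ValueError("window must be >= 1")
    buf: Deque[float] = deque(maxlen=window)
    total = 0.0
    out: List[float] = []
    for p in prices:
        if len(buf) == window:
            total -= buf[0]  # this value is evicted by the append below
        buf.append(p)
        total += p
        out.append(total / len(buf))
    return out


def exponential_moving_average(prices: Iterable[float], alpha: float) -> List[float]:
    """EMA s_t = alpha * x_t + (1 - alpha) * s_{t-1}, seeded with the first price."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    out: List[float] = []
    s = None
    for p in prices:
        s = p if s is None else alpha * p + (1.0 - alpha) * s
        out.append(s)
    return out


# A step from 100 to 110 exposes the lag each filter introduces.
step = [100.0] * 5 + [110.0] * 5
sma = simple_moving_average(step, window=4)
ema = exponential_moving_average(step, alpha=0.5)
print(sma)
print(ema)
```

With `window=4` the SMA needs four samples to fully reflect the step, while the EMA with `alpha=0.5` closes half of the remaining gap on each sample; a smaller `alpha` smooths more but lags longer.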

- Kalman Filtering provides a recursive approach to estimating the true state of a system from a series of incomplete or noisy observations.

- Spectral Analysis identifies periodic components within price data to detect cyclic patterns that traditional linear models overlook.

- Wavelet Transforms allow for the multi-resolution analysis of market data, capturing both short-term shocks and long-term trend shifts.
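A minimal scalar Kalman filter illustrates the recursive state estimation listed first above. Purely for illustration, it assumes that the latent price follows a random walk and that each observed trade price adds independent Gaussian noise. The noise variances `q` and `r`, the class and function names, and the sample prices are placeholders, not calibrated values.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ScalarKalman:
    """1-D Kalman filter for a random-walk state observed with noise.

    Assumed model (illustrative only):
        x_t = x_{t-1} + w_t,  w_t ~ N(0, q)   # latent "true" price
        z_t = x_t + v_t,      v_t ~ N(0, r)   # observed trade price
    """
    q: float        # process-noise variance: how fast the latent price drifts
    r: float        # measurement-noise variance: how noisy each print is
    x: float = 0.0  # current state estimate
    p: float = 1.0  # variance of the current estimate

    def update(self, z: float) -> float:
        # Predict: a random walk keeps the mean and accumulates process variance.
        p_pred = self.p + self.q
        # Gain in (0, 1): weights the new observation against the prior estimate.
        k = p_pred / (p_pred + self.r)
        # Correct: move the estimate toward the observation by the gain.
        self.x += k * (z - self.x)
        self.p = (1.0 - k) * p_pred
        return self.x


def kalman_smooth(observations: List[float], q: float, r: float) -> List[float]:
    """Run the filter over a series, seeding the state with the first observation."""
    kf = ScalarKalman(q=q, r=r, x=observations[0], p=r)
    return [kf.update(z) for z in observations]


noisy = [100.0, 101.2, 99.1, 100.6, 100.3, 99.7, 100.9, 100.1]
estimates = kalman_smooth(noisy, q=0.01, r=1.0)
print([round(e, 3) for e in estimates])
```

Raising `q` relative to `r` makes the filter trust new prints more and track faster; lowering it smooths more aggressively.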

Market participants recognized that blockchain environments create unique data artifacts, such as front-running attempts and MEV-related price distortions. Developing techniques to filter these artifacts became a priority for sophisticated liquidity providers. The shift toward these methods reflects the transition from reactive trading strategies to predictive systems that anticipate order book imbalances before they materialize in the spot price.

Theory

Mathematical rigor defines the application of these techniques.

The system operates on the assumption that market price is a composite signal composed of a fundamental trend, periodic seasonal components, and stochastic noise. Advanced modeling employs Fourier Transforms to convert time-domain price series into frequency-domain representations, which allows specific cycles to be isolated.
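To make the trend, cycle, and noise decomposition concrete, the sketch below removes a least-squares linear trend, computes a discrete Fourier transform using only the standard library, and reports the strongest periodic component. The synthetic series, its 16-sample cycle, and the function names are assumptions for illustration. A production pipeline would normally use a fast Fourier transform such as `numpy.fft.rfft` rather than the O(n²) direct sum used here.

```python
import cmath
import math
import random
from typing import List, Tuple


def detrend(series: List[float]) -> List[float]:
    """Subtract the least-squares straight line (the 'trend' component)."""
    n = len(series)
    t_mean = (n - 1) / 2
    y_mean = sum(series) / n
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(series))
    slope = sxy / sxx
    return [y - (y_mean + slope * (t - t_mean)) for t, y in enumerate(series)]


def power_spectrum(series: List[float]) -> List[float]:
    """|X_k|^2 for k = 0..n//2 using the direct DFT sum (O(n^2), fine for a sketch)."""
    n = len(series)
    return [
        abs(sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(series))) ** 2
        for k in range(n // 2 + 1)
    ]


def dominant_cycle(series: List[float]) -> Tuple[int, float]:
    """Return (frequency bin k, period n / k in samples) of the strongest cycle."""
    power = power_spectrum(detrend(series))
    k = max(range(1, len(power)), key=lambda i: power[i])  # skip the k = 0 (mean) bin
    return k, len(series) / k


# Synthetic series: linear trend + 16-sample cycle + bounded random noise.
rng = random.Random(42)
n = 128
prices = [
    100 + 0.05 * t + 2.0 * math.sin(2 * math.pi * t / 16) + rng.uniform(-0.3, 0.3)
    for t in range(n)
]
print(dominant_cycle(prices))
```

Detrending matters here: a linear drift leaks energy into the lowest frequency bins, and without removing it the trend could outrank the genuine cycle.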

| Technique | Primary Application | Systemic Impact |
| --- | --- | --- |
| Moving Averages | Trend Identification | Lag-based risk management |
| Kalman Filter | State Estimation | Real-time volatility tracking |
| Wavelet Analysis | Multi-scale Decomposition | Regime change detection |
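The multi-scale decomposition in the last row of the table can be sketched with the Haar wavelet, the simplest orthonormal wavelet. Each level splits a series into a smoothed approximation and a detail band, and here a sudden rise in fine-scale detail energy serves as a crude regime-change flag. The block size, energy ratio, function names, and synthetic return series are illustrative assumptions; a real system would more likely use a wavelet library such as PyWavelets and a statistically calibrated threshold.

```python
import math
from typing import List, Tuple


def haar_step(signal: List[float]) -> Tuple[List[float], List[float]]:
    """One level of the orthonormal Haar transform: (approximation, detail)."""
    s = 1.0 / math.sqrt(2.0)
    pairs = range(0, len(signal) - 1, 2)
    approx = [(signal[i] + signal[i + 1]) * s for i in pairs]
    detail = [(signal[i] - signal[i + 1]) * s for i in pairs]
    return approx, detail


def haar_decompose(signal: List[float], levels: int) -> Tuple[List[float], List[List[float]]]:
    """Multi-level decomposition; details[0] is the finest (shortest-horizon) scale."""
    approx, details = list(signal), []
    for _ in range(levels):
        if len(approx) % 2:
            raise ValueError("signal length must be divisible by 2**levels")
        approx, d = haar_step(approx)
        details.append(d)
    return approx, details


def first_energy_jump(detail: List[float], block: int, ratio: float) -> int:
    """Start index (in coefficients) of the first block whose energy exceeds
    `ratio` times the previous block's energy, or -1 if there is none."""
    energies = [sum(c * c for c in detail[i:i + block]) for i in range(0, len(detail), block)]
    for j in range(1, len(energies)):
        if energies[j] > ratio * energies[j - 1]:
            return j * block
    return -1


# Synthetic returns: a calm regime followed by a volatile one at sample 32.
returns = [0.001 * (-1) ** t for t in range(32)] + [0.02 * (-1) ** t for t in range(32)]
approx, details = haar_decompose(returns, levels=2)
coef = first_energy_jump(details[0], block=4, ratio=10.0)
print(coef, coef * 2)  # each level-1 coefficient spans 2 samples
```

Because the transform is orthonormal, total energy is preserved across levels, so energy shifting between detail bands reflects a genuine change in the series rather than an artifact of scaling.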

The application of frequency-domain analysis allows for the precise isolation of cyclic market behaviors from transient stochastic noise.

Risk management frameworks rely on these signals to adjust dynamic delta-hedging strategies. When the signal-to-noise ratio decreases, the model automatically increases the safety buffer for margin requirements. This creates a feedback loop where the technical architecture of the derivative protocol itself enforces stability through the automated application of these mathematical filters.
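The SNR-to-margin feedback loop described above can be sketched in a few lines. Everything here is a hypothetical rule chosen for illustration rather than any protocol's formula: SNR is taken as the variance of an EMA-smoothed price divided by the variance of the residual, and the buffer rises smoothly from `base` toward `base + max_extra` as SNR falls. The parameter values are placeholders, not calibrated margin settings.

```python
from statistics import pvariance
from typing import List


def ema(xs: List[float], alpha: float) -> List[float]:
    """Exponential moving average seeded with the first value."""
    out, s = [], xs[0]
    for x in xs:
        s = alpha * x + (1 - alpha) * s
        out.append(s)
    return out


def signal_to_noise(prices: List[float], alpha: float = 0.2) -> float:
    """Variance of the smoothed series divided by variance of the residual noise."""
    smooth = ema(prices, alpha)
    residual = [p - s for p, s in zip(prices, smooth)]
    noise_var = pvariance(residual)
    return float("inf") if noise_var == 0 else pvariance(smooth) / noise_var


def margin_buffer(snr: float, base: float = 0.05, max_extra: float = 0.15,
                  snr_ref: float = 4.0) -> float:
    """Hypothetical rule: the buffer rises from `base` toward `base + max_extra`
    as SNR falls; at snr == snr_ref it sits halfway between the two."""
    return base + max_extra * snr_ref / (snr_ref + snr)


trending = [100.0 + t for t in range(50)]
choppy = [100.0 + (1.0 if t % 2 else -1.0) for t in range(50)]
for name, series in (("trending", trending), ("choppy", choppy)):
    snr = signal_to_noise(series)
    print(name, round(snr, 3), round(margin_buffer(snr), 4))
```

The choppy series has almost no persistent component, so its SNR is low and the sketch assigns it a larger buffer than the trending series.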

Approach

Current implementations prioritize computational efficiency and robustness against adversarial data manipulation.

Practitioners use Adaptive Filtering to adjust model parameters in real time as market conditions shift from low-volatility regimes to high-stress, liquidation-prone environments. This dynamic adjustment is necessary to prevent the model from overfitting to stale historical data.
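Adaptive filtering of this kind can be sketched with a normalized least-mean-squares (NLMS) filter, one standard adaptive algorithm; it is named here as an illustrative choice, not as the method any particular protocol uses. The filter forecasts the next sample from the previous `order` samples and nudges its coefficients after every observation, normalizing the step by input power. The step size `mu`, the filter order, and the synthetic cycle are assumptions.

```python
import math
from typing import List, Tuple


def nlms_predict(series: List[float], order: int = 3, mu: float = 0.5,
                 eps: float = 1e-8) -> Tuple[List[float], List[float]]:
    """One-step-ahead prediction with a normalized LMS (NLMS) adaptive filter.

    preds[i] forecasts series[i + order] from the `order` samples before it,
    and is produced *before* that sample is used to update the weights.
    Returns (preds, final_weights).
    """
    if not 0.0 < mu < 2.0:
        raise ValueError("NLMS is stable for 0 < mu < 2")
    w = [0.0] * order
    preds: List[float] = []
    for t in range(order, len(series)):
        x = series[t - order:t][::-1]          # most recent sample first
        y_hat = sum(wi * xi for wi, xi in zip(w, x))
        err = series[t] - y_hat
        norm = eps + sum(xi * xi for xi in x)  # input power
        # Step size scaled by input power, so updates stay bounded when volatility jumps.
        w = [wi + mu * err * xi / norm for wi, xi in zip(w, x)]
        preds.append(y_hat)
    return preds, w


# A noiseless cycle is exactly predictable by an order-2 linear filter,
# so the weights should approach [2 * cos(omega), -1].
omega = 2 * math.pi / 20
cycle = [math.sin(omega * t) for t in range(2000)]
preds, weights = nlms_predict(cycle, order=2)
print([round(wi, 4) for wi in weights], round(2 * math.cos(omega), 4))
```

Because the update runs per sample with fixed memory, the same loop keeps tracking when the underlying relationship shifts; a larger `mu` adapts faster but reacts more to noise.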

- Adaptive Filtering recalibrates internal coefficients based on the incoming data stream to maintain prediction accuracy.

- Feature Extraction reduces high-dimensional order book data into lower-dimensional signals that represent market depth and pressure.

- Latency Minimization ensures that signal processing occurs within the timeframe of block confirmation, preserving the utility of the output.
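The feature-extraction step can be sketched as collapsing many price levels into a handful of scalars. The features chosen here (mid, spread, depth imbalance over the top levels, and the microprice) are common illustrative choices, not a set prescribed by any protocol, and the `(price, size)` tuple layout, the `depth` cutoff, and the function names are assumptions.

```python
from dataclasses import dataclass
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size)


@dataclass(frozen=True)
class BookFeatures:
    mid: float
    spread: float
    depth_imbalance: float  # in [-1, 1]; positive means more resting bid size
    microprice: float


def extract_features(bids: List[Level], asks: List[Level], depth: int = 5) -> BookFeatures:
    """Compress the top `depth` levels of each side into a few scalar signals.

    `bids` must be sorted best (highest) first and `asks` best (lowest) first.
    """
    if not bids or not asks:
        raise ValueError("both sides of the book need at least one level")
    best_bid, bid_sz = bids[0]
    best_ask, ask_sz = asks[0]
    if bid_sz <= 0 or ask_sz <= 0:
        raise ValueError("top-of-book sizes must be positive")
    bid_depth = sum(size for _, size in bids[:depth])
    ask_depth = sum(size for _, size in asks[:depth])
    # Microprice: a size-weighted mid that leans toward the ask when bid size
    # dominates at the touch, and toward the bid when ask size dominates.
    microprice = (best_bid * ask_sz + best_ask * bid_sz) / (bid_sz + ask_sz)
    return BookFeatures(
        mid=(best_bid + best_ask) / 2,
        spread=best_ask - best_bid,
        depth_imbalance=(bid_depth - ask_depth) / (bid_depth + ask_depth),
        microprice=microprice,
    )


bids = [(99.5, 10.0), (99.0, 20.0), (98.5, 30.0)]
asks = [(100.5, 5.0), (101.0, 5.0), (101.5, 10.0)]
print(extract_features(bids, asks, depth=3))
```

Reducing each book snapshot to a fixed-size record like this keeps downstream filters cheap enough to run within the latency budget described in the list above.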

These approaches must account for the specific mechanics of decentralized protocols, including the impact of gas price spikes on transaction ordering. The most resilient systems treat the mempool as a primary input, using signal processing to identify pending transactions that indicate institutional movement or potential liquidation cascades. This level of technical depth distinguishes sophisticated market participants from those relying on lagging indicators.
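One simple way to treat the mempool as a signal is to flag pending transactions whose size is anomalous relative to the current baseline. The sketch below uses a robust z-score based on the median absolute deviation (MAD). The `PendingTx` record, its notional estimate, and the threshold `k` are hypothetical, and retrieving pending transactions from a node is outside its scope.

```python
from statistics import median
from typing import List, NamedTuple


class PendingTx(NamedTuple):
    """Hypothetical, simplified view of a pending mempool transaction."""
    tx_hash: str
    notional: float  # estimated value moved, in the quote asset


def flag_outliers(pending: List[PendingTx], k: float = 5.0) -> List[PendingTx]:
    """Return transactions whose notional is a robust outlier (modified z-score > k).

    The median absolute deviation (MAD) is used instead of the standard
    deviation because a few very large transactions would inflate the latter
    and mask themselves.
    """
    if not pending:
        return []
    values = [tx.notional for tx in pending]
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        # Degenerate baseline (at least half the values identical): flag any deviation.
        return [tx for tx in pending if tx.notional != med]
    scale = 1.4826 * mad  # makes MAD comparable to a standard deviation for Gaussian data
    return [tx for tx in pending if abs(tx.notional - med) / scale > k]


sizes = [1_000, 1_200, 950, 1_100, 1_050, 250_000, 980]
pending = [PendingTx(f"0x{i:02x}", float(v)) for i, v in enumerate(sizes)]
print([tx.tx_hash for tx in flag_outliers(pending)])
```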

Evolution

The trajectory of these techniques tracks the maturation of decentralized financial infrastructure.

Early efforts relied on centralized data feeds, but current designs prioritize on-chain signal extraction. This shift reduces reliance on external oracles and shrinks the attack surface for data manipulation. The integration of Zero-Knowledge Proofs now allows signal processing computations to be verified without revealing the underlying proprietary algorithms.

On-chain signal processing minimizes oracle dependence and enhances the integrity of automated derivative pricing mechanisms.

Protocol design now incorporates these techniques directly into smart contracts to automate risk mitigation. For instance, some platforms employ on-chain volatility filters to adjust interest rates for margin loans dynamically. This creates a self-regulating ecosystem in which the protocol responds to market stress without requiring governance intervention, which can reduce the systemic risk of contagion during periods of extreme volatility.
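A volatility filter feeding a margin-loan interest-rate curve can be sketched with integer fixed-point arithmetic, since contract languages such as Solidity have no native floating point. The EWMA-of-squared-returns filter, the linear rate curve, the 18-decimal `WAD` scale, the class name, and every parameter value below are assumptions for illustration, not the design of any particular platform; Python is used to keep the examples in one language.

```python
from typing import Optional

WAD = 10**18  # 18-decimal fixed-point scale (a common on-chain convention; assumed here)


class VolatilityRateModel:
    """Hypothetical rate model using only integer fixed-point arithmetic.

    var_t = lam * var_{t-1} + (1 - lam) * r_t^2     (EWMA of squared returns)
    rate  = min(base_rate + slope * var_t, max_rate)
    All quantities are WAD-scaled integers.
    """

    def __init__(self, lam: int, base_rate: int, slope: int, max_rate: int) -> None:
        self.lam = lam              # decay factor, e.g. 94 * WAD // 100
        self.base_rate = base_rate  # borrow rate when variance is zero
        self.slope = slope          # rate added per unit of variance
        self.max_rate = max_rate    # hard cap on the borrow rate
        self.var = 0
        self.last_price: Optional[int] = None

    def observe(self, price: int) -> int:
        """Ingest a WAD-scaled price and return the updated borrow rate."""
        if self.last_price is not None:
            # Python's // floors toward -inf; Solidity division truncates toward zero.
            ret = (price - self.last_price) * WAD // self.last_price
            sq = ret * ret // WAD
            self.var = (self.lam * self.var + (WAD - self.lam) * sq) // WAD
        self.last_price = price
        return self.current_rate()

    def current_rate(self) -> int:
        return min(self.base_rate + self.slope * self.var // WAD, self.max_rate)


model = VolatilityRateModel(lam=94 * WAD // 100, base_rate=2 * WAD // 100,
                            slope=50 * WAD, max_rate=WAD // 2)
for p in (100, 110, 110, 110):
    print(model.observe(p * WAD) / WAD)  # rate as a fraction, for display only
```

Because the variance term decays geometrically after a shock, the rate drifts back toward `base_rate` without any governance action, and the `max_rate` cap bounds how far a single extreme print can push borrowing costs.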

Horizon

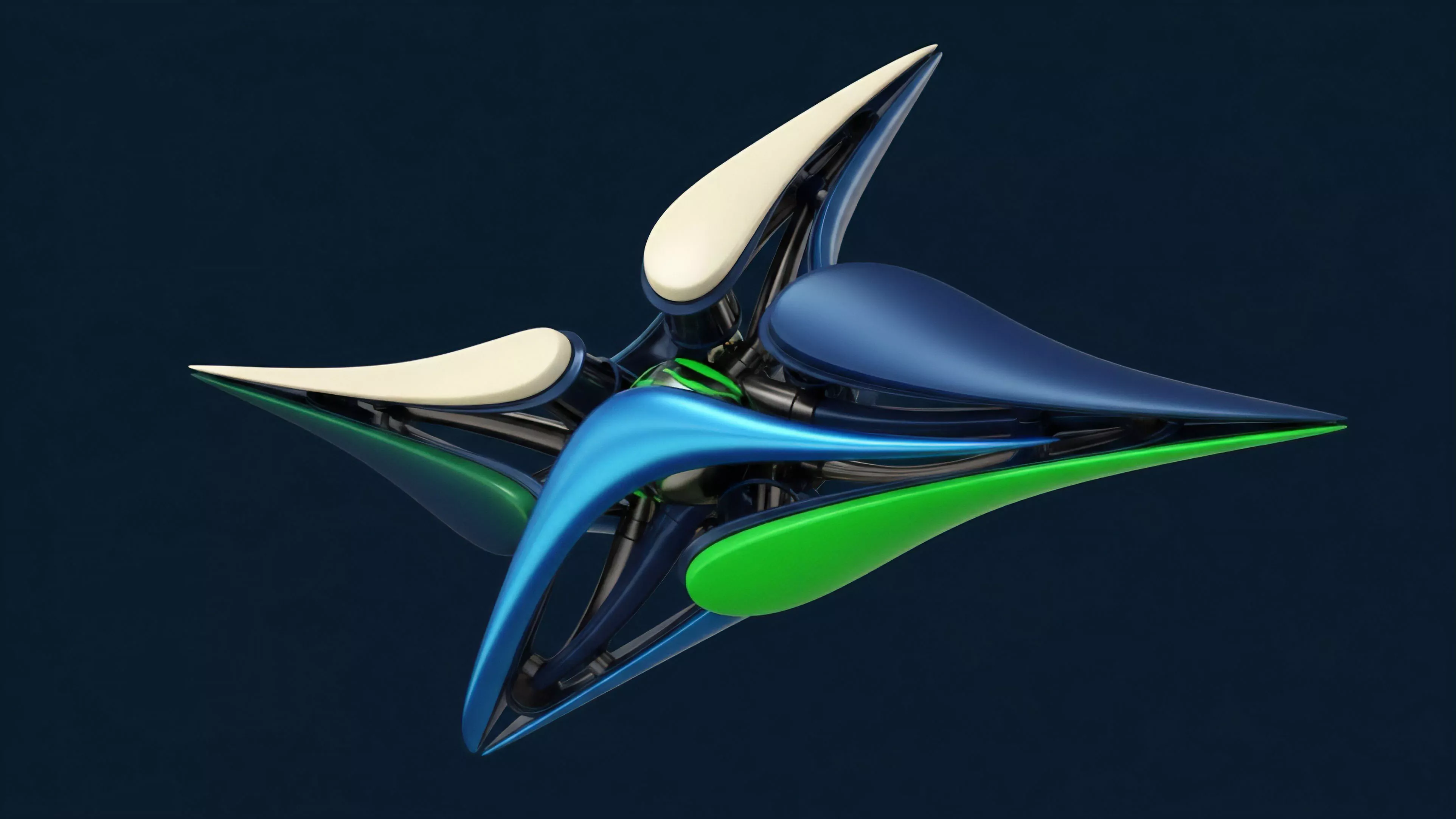

Future development will likely involve machine-learning-augmented signal processing, in which neural networks learn to optimize filter parameters dynamically.

This transition will enable the system to identify complex, non-linear relationships within market data that remain opaque to traditional linear filters. The objective remains the creation of autonomous, resilient derivative protocols capable of self-correction.

- Machine Learning Integration enables automated optimization of signal processing parameters to adapt to evolving market regimes.

- Decentralized Compute will provide the infrastructure to perform complex spectral analysis without centralized bottleneck risks.

- Cross-Chain Signals will allow for the aggregation of liquidity data across disparate protocols to create a unified view of market health.

The next iteration of decentralized finance will center on the ability to process global market information at the protocol level. As protocols become more intelligent, the distinction between a trading strategy and a financial instrument will continue to blur, leading to the creation of autonomous, self-hedging derivative assets. This represents a fundamental shift toward truly programmable, self-sustaining financial systems.