Essence

Statistical Analysis Applications within crypto derivatives represent the mathematical framework used to quantify uncertainty and extract actionable signals from high-frequency market data. These systems transform raw order book updates and trade execution logs into probability distributions, allowing participants to price risk and identify misalignments in decentralized liquidity pools. The primary function involves distilling chaotic price action into structured parameters that govern option valuation and portfolio management.

This domain sits at the intersection of stochastic calculus and real-time data ingestion, and it helps keep prices efficient. By applying rigorous statistical metrics to the non-linear behavior of crypto assets and their derivatives, these applications let participants hedge exposure against extreme tail events while optimizing capital allocation within permissionless protocols.

Origin

The genesis of these applications lies in the adaptation of traditional financial econometrics to the unique constraints of blockchain-based settlement. Early implementations utilized basic historical volatility calculations, which failed to account for the discontinuous nature of decentralized order books and the impact of rapid liquidation cycles.
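For reference, the basic calculation those early systems relied on can be sketched as annualized close-to-close volatility. This is a minimal sketch, assuming daily closing prices and a 365-day year (crypto markets trade every day); the function name `historical_volatility` and the sample prices are hypothetical. Because every squared log return carries equal weight, a single discontinuous move dominates the estimate without revealing that it was a jump.

```python
import math


def historical_volatility(prices, periods_per_year=365):
    """Annualized close-to-close volatility from a price series.

    Uses log returns and the sample standard deviation. periods_per_year=365
    assumes daily closes on a market that trades every day of the year.
    """
    if len(prices) < 3:
        raise ValueError("need at least three prices for a sample std dev")
    log_returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(log_returns) / len(log_returns)
    var = sum((r - mean) ** 2 for r in log_returns) / (len(log_returns) - 1)
    return math.sqrt(var * periods_per_year)


# Hypothetical daily closes; one gap (e.g. a liquidation cascade) dominates the estimate.
closes = [100.0, 101.0, 99.5, 100.5, 80.0, 82.0, 81.5]
print(round(historical_volatility(closes), 3))
```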

- Black-Scholes adaptation served as the initial baseline for pricing decentralized vanilla options.

- Variance risk premium analysis emerged to quantify the gap between implied volatility (the market's expectation of future volatility) and the volatility actually realized.

- Automated market maker data streams provided the first transparent, on-chain datasets for granular microstructure investigation.
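The two quantitative ideas in this list can be sketched in a few lines: the textbook Black-Scholes price of a European call, and a variance risk premium computed as implied variance minus realized variance (one common sign convention; some authors use the reverse). This assumes no dividends, a constant risk-free rate, and constant volatility; the function names and inputs are illustrative, not taken from any particular protocol.

```python
import math


def norm_cdf(x):
    # Standard normal CDF via the error function (avoids a SciPy dependency).
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes_call(spot, strike, t, rate, vol):
    """European call price under Black-Scholes with no dividends.

    t is time to expiry in years; rate and vol are annualized.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def variance_risk_premium(implied_vol, realized_vol):
    # Implied variance minus realized variance: positive when options priced
    # in more volatility than the market subsequently delivered.
    return implied_vol ** 2 - realized_vol ** 2


# Hypothetical 30-day at-the-money call on an asset trading at 30,000.
price = black_scholes_call(spot=30_000, strike=30_000, t=30 / 365, rate=0.0, vol=0.60)
vrp = variance_risk_premium(implied_vol=0.60, realized_vol=0.50)
print(round(price, 2), round(vrp, 4))
```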

These early efforts prioritized simplicity and often ignored protocol-specific risks such as gas price fluctuations and oracle latency. As the market matured, the focus shifted toward incorporating these technical variables into more robust models, in recognition that crypto derivatives must respond to protocol-level shocks more quickly than their traditional counterparts.

Theory

The structural integrity of derivative pricing rests upon the accurate estimation of volatility surfaces and the sensitivity of these surfaces to underlying asset movements. Quantitative models must account for the distinct characteristics of crypto assets, specifically their tendency toward high kurtosis and frequent, sudden regime shifts.
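The high kurtosis mentioned here can be measured directly. A minimal sketch of sample excess kurtosis, using population moments without a small-sample correction: normally distributed data gives roughly zero, and fat-tailed return series give large positive values. The function name and the return series are hypothetical.

```python
def excess_kurtosis(returns):
    """Excess kurtosis from population moments (no small-sample correction).

    Roughly 0 for normally distributed data; positive for fat tails.
    """
    n = len(returns)
    if n < 2:
        raise ValueError("need at least two observations")
    mean = sum(returns) / n
    m2 = sum((r - mean) ** 2 for r in returns) / n
    m4 = sum((r - mean) ** 4 for r in returns) / n
    if m2 == 0:
        raise ValueError("returns have zero variance")
    return m4 / m2 ** 2 - 3.0


# Hypothetical hourly returns: small oscillations, then the same series plus one 20% jump.
quiet = [0.01, -0.01] * 50
jumpy = quiet + [0.20]
print(round(excess_kurtosis(quiet), 2), round(excess_kurtosis(jumpy), 2))
```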

Quantitative Finance and Greeks

Mathematical modeling of crypto options requires constant recalibration of Delta, Gamma, and Vega, since hedges lose effectiveness quickly in volatile environments. Because these markets trade continuously, with no daily close, the model must process incoming order flow as it arrives and adjust for local volatility spikes rather than assume, as many traditional models do, that such spikes quickly revert to the mean.
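A minimal sketch of that recalibration step, assuming Black-Scholes dynamics for a European call with no dividends: each time a new volatility estimate arrives, Delta, Gamma, and Vega are recomputed from it. The function name `call_greeks` and the volatility values in the loop are hypothetical stand-ins for successive estimates derived from live order flow.

```python
import math


def norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot, strike, t, rate, vol):
    """Black-Scholes delta, gamma and vega for a European call (no dividends).

    Vega is per 1.00 change in volatility (divide by 100 for per vol point).
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    delta = norm_cdf(d1)
    gamma = norm_pdf(d1) / (spot * vol * sqrt_t)
    vega = spot * norm_pdf(d1) * sqrt_t
    return {"delta": delta, "gamma": gamma, "vega": vega}


# Recalibration loop: each new volatility estimate produces a fresh set of Greeks.
for vol in (0.5, 0.8, 1.2):  # hypothetical successive volatility estimates
    g = call_greeks(spot=30_000, strike=32_000, t=7 / 365, rate=0.0, vol=vol)
    print(vol, {k: round(val, 6) for k, val in g.items()})
```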

Market Microstructure

The technical architecture of decentralized exchanges influences price discovery through specific mechanisms:

| Metric | Impact on Analysis |
|---|---|
| Latency | Affects the accuracy of real-time volatility estimates |
| Slippage | Distorts the effective price used for delta calculations |
| Liquidity Depth | Determines the validity of mid-price as a true signal |

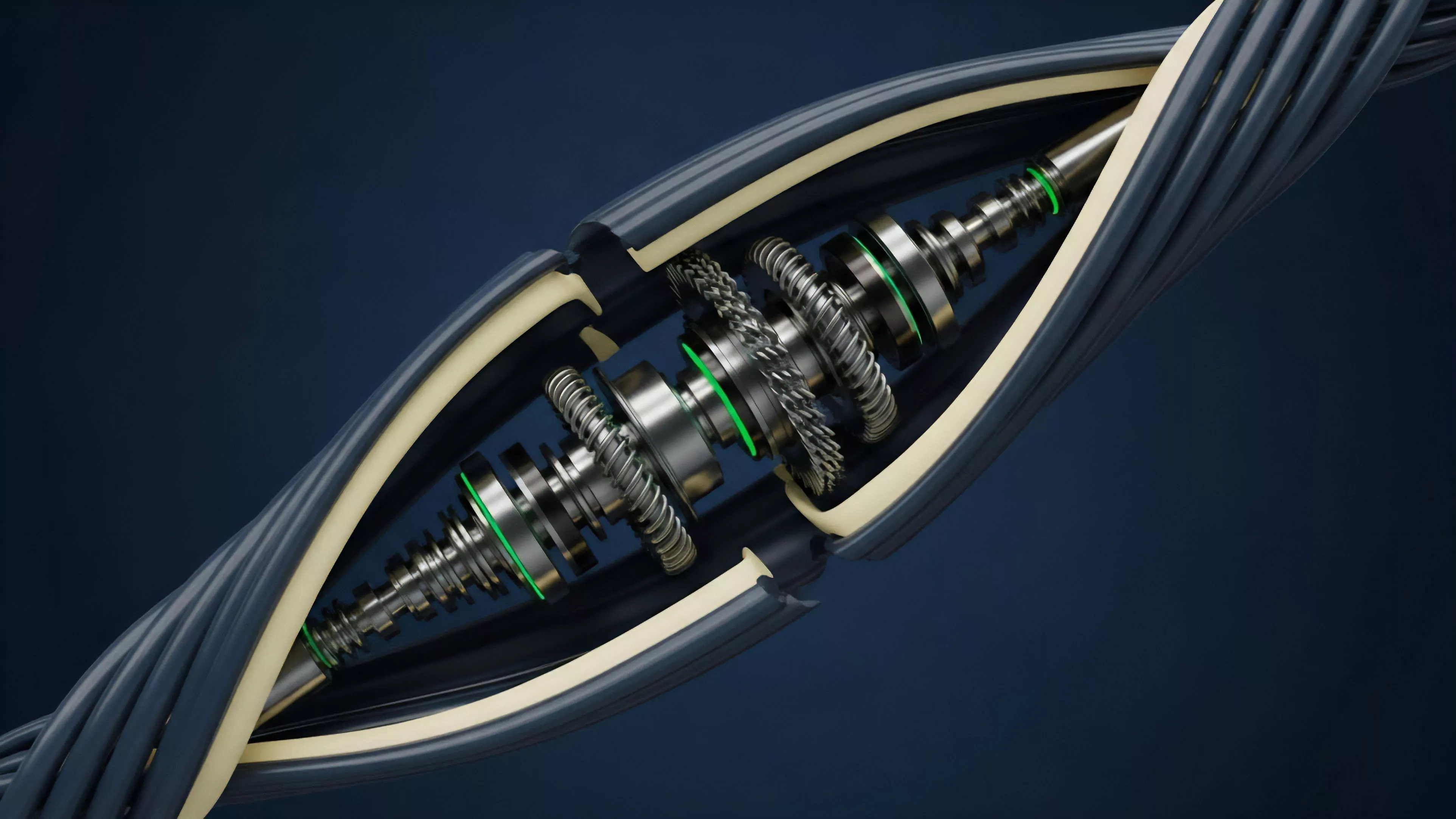
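To make the slippage and liquidity-depth rows concrete, the sketch below walks a hypothetical ask ladder to find the average fill price of a market buy and compares it with the mid-price. The order book levels and the function name `fill_price` are illustrative assumptions; a real feed would also need timestamps to address the latency row.

```python
def fill_price(levels, quantity):
    """Average execution price for a market buy that walks the ask side.

    levels: list of (price, size) tuples sorted from best to worst.
    Raises ValueError if the book lacks enough depth to fill the order.
    """
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    remaining = quantity
    cost = 0.0
    for price, size in levels:
        take = min(size, remaining)
        cost += take * price
        remaining -= take
        if remaining <= 0:
            return cost / quantity
    raise ValueError("insufficient depth to fill order")


# Hypothetical top-of-book snapshot.
bids = [(29_990.0, 1.5), (29_980.0, 3.0)]
asks = [(30_010.0, 0.5), (30_020.0, 2.0), (30_050.0, 5.0)]
mid = (bids[0][0] + asks[0][0]) / 2
avg = fill_price(asks, 2.0)
slippage_bps = (avg - mid) / mid * 10_000  # cost of execution relative to mid
print(mid, avg, round(slippage_bps, 2))
```

Using `avg` rather than `mid` as the hedge price is what keeps the delta calculation honest when the book is thin.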

The interplay between order flow and consensus mechanisms introduces a distinctive source of noise. A sudden increase in transaction volume can trigger a spike in base-layer fees. Higher fees raise the cost basis for arbitrageurs, so prices on a venue can drift further from fair value before anyone corrects them, which can shift the entire volatility surface. This structural dependency requires models that treat the blockchain as an active participant rather than a passive ledger.
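The fee effect can be illustrated with a deliberately simplified break-even calculation: an arbitrageur only closes a gap between a pool price and a reference price when the gap covers the proportional trading fee plus a fixed gas cost. This sketch ignores the pool's own price impact and assumes the whole trade fills at the pool price; the function names and numbers are hypothetical. Raising the gas cost widens the band of prices that nobody has an incentive to correct.

```python
def arbitrage_profit(pool_price, reference_price, trade_size, fee_rate, gas_cost):
    """Simplified profit (quote currency) from closing a price gap.

    Assumes the whole trade fills at pool_price (no price impact), pays a
    proportional fee on notional, and a fixed gas cost per transaction.
    """
    gross = abs(reference_price - pool_price) * trade_size
    fees = fee_rate * pool_price * trade_size
    return gross - fees - gas_cost


def min_profitable_gap(pool_price, trade_size, fee_rate, gas_cost):
    # Price gap at which arbitrage_profit is exactly zero.
    return fee_rate * pool_price + gas_cost / trade_size


# Hypothetical: 10-unit trade, 0.3% fee, gas rising from 5 to 50 during congestion.
for gas in (5.0, 50.0):
    print(gas, min_profitable_gap(pool_price=2_000.0, trade_size=10.0, fee_rate=0.003, gas_cost=gas))
```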

One might compare this dependence of price discovery on network load to fluid flowing through a pipe whose viscosity changes with the speed of the flow itself: as the system nears capacity, the rules of motion shift, and static models stop describing it.

Automated liquidators add a further feedback loop. Forced sales push prices toward other positions' liquidation thresholds, which can trigger further forced sales and amplify volatility. Analysts therefore need to incorporate liquidation thresholds directly into their probability density functions.
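One way to fold a liquidation threshold into the distribution is to ask for the probability that the price path touches the threshold at any point before the horizon, not just whether it ends below it. A minimal sketch, assuming geometric Brownian motion and using the standard first-passage formula for Brownian motion with drift applied to log price. GBM has no jumps and no liquidation feedback, so under the fat-tailed, reflexive dynamics described above this figure is likely to understate the risk; the function names and inputs are hypothetical.

```python
import math


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def touch_probability(spot, liq_price, t, mu, vol):
    """P(price touches liq_price at any time within t years), liq_price < spot.

    Log price follows X_t = nu*t + vol*W_t with nu = mu - vol**2 / 2, and the
    barrier in log space is b = ln(liq_price / spot) < 0. First-passage formula:
    P = N((b - nu*t)/(vol*sqrt(t))) + exp(2*nu*b/vol**2) * N((b + nu*t)/(vol*sqrt(t)))
    """
    if liq_price >= spot:
        return 1.0
    b = math.log(liq_price / spot)
    nu = mu - 0.5 * vol ** 2
    s = vol * math.sqrt(t)
    return norm_cdf((b - nu * t) / s) + math.exp(2.0 * nu * b / vol ** 2) * norm_cdf((b + nu * t) / s)


def terminal_probability(spot, liq_price, t, mu, vol):
    # P(price is below liq_price at time t), ignoring the path in between.
    nu = mu - 0.5 * vol ** 2
    return norm_cdf((math.log(liq_price / spot) - nu * t) / (vol * math.sqrt(t)))


# Hypothetical leveraged long: liquidation 20% below spot, 30-day horizon, 80% vol.
args = dict(spot=30_000, liq_price=24_000, t=30 / 365, mu=0.0, vol=0.80)
print(round(touch_probability(**args), 4), round(terminal_probability(**args), 4))
```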

Approach

Current methodologies combine on-chain telemetry with off-chain computation to keep pricing competitive.

Sophisticated actors now use Machine Learning architectures to predict short-term volatility trends rather than relying on static historical averages.
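Machine learning forecasters vary too widely to sketch faithfully here, but the basic step away from static historical averages can be shown with a much simpler technique: an exponentially weighted moving average (EWMA) of squared returns, in the style of RiskMetrics, which weights recent moves more heavily. This is a substitute baseline, not an ML model; the decay factor of 0.94, the zero-mean assumption, and the function names are choices made for this sketch.

```python
import math


def ewma_volatility(returns, lam=0.94, periods_per_year=365):
    """Annualized EWMA volatility forecast (zero-mean returns assumed).

    Recursion: var_t = lam * var_{t-1} + (1 - lam) * r_t**2, seeded with the
    first squared return. The final var is the one-step-ahead forecast.
    """
    if not returns:
        raise ValueError("need at least one return")
    var = returns[0] ** 2
    for r in returns[1:]:
        var = lam * var + (1.0 - lam) * r ** 2
    return math.sqrt(var * periods_per_year)


def equal_weight_volatility(returns, periods_per_year=365):
    # Static benchmark: every squared return gets the same weight.
    return math.sqrt(sum(r ** 2 for r in returns) / len(returns) * periods_per_year)


# Hypothetical daily returns: a calm month followed by a 10% shock yesterday.
recent_shock = [0.01] * 30 + [0.10]
print(round(ewma_volatility(recent_shock), 3), round(equal_weight_volatility(recent_shock), 3))
```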

- Real-time ingestion of order book snapshots enables the calculation of instantaneous implied volatility.

- Cross-protocol correlation mapping identifies systemic risks originating from collateral reuse across decentralized finance platforms.

- Automated stress testing simulates portfolio responses to rapid price de-pegging or protocol-wide liquidity drains.
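The first item above, instantaneous implied volatility, amounts to inverting an option pricing model at the current quote. A minimal sketch, assuming Black-Scholes as the pricing model and the mid of the best bid and ask from an options order book snapshot as the target price: because the call price increases monotonically with volatility, bisection converges whenever the target lies inside the bracket. The quotes and function names are hypothetical.

```python
import math


def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, strike, t, rate, vol):
    # Black-Scholes European call, no dividends.
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price, spot, strike, t, rate, lo=1e-4, hi=5.0, tol=1e-10, max_iter=200):
    """Solve bs_call(..., vol) == price for vol by bisection on [lo, hi]."""
    if not bs_call(spot, strike, t, rate, lo) <= price <= bs_call(spot, strike, t, rate, hi):
        raise ValueError("price is outside the range spanned by [lo, hi]")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, t, rate, mid) < price:
            lo = mid  # model price too low: volatility must be higher
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Hypothetical snapshot of the book for a 30-day 32,000 call on a 30,000 underlying.
best_bid, best_ask = 1_150.0, 1_190.0
mid_quote = 0.5 * (best_bid + best_ask)
print(round(implied_vol(mid_quote, spot=30_000, strike=32_000, t=30 / 365, rate=0.0), 4))
```

Bisection is slower than Newton's method but never diverges, which matters when quotes are stale or the option is far out of the money and vega is tiny.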

The primary challenge remains the fragmentation of liquidity across multiple chains and protocols. This dispersion creates artificial volatility that need not reflect true market demand; instead, it may reflect the technical friction of moving capital between venues. Practitioners must therefore distinguish genuine price discovery from noise generated by cross-chain arbitrage attempts.
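One rough way to separate the two, offered here as an assumed heuristic rather than a standard estimator, is to compare each venue's realized variance with that of a cross-venue average price. Independent venue-level noise partially cancels in the average, so the share of a venue's variance that disappears after averaging is a crude proxy for friction-driven noise. The simulated prices, noise levels, and function names below are all hypothetical.

```python
import math
import random


def realized_variance(prices):
    # Sum of squared log returns over the sample.
    return sum(math.log(b / a) ** 2 for a, b in zip(prices, prices[1:]))


def friction_noise_share(venue_prices):
    """Share of each venue's realized variance absent from the cross-venue average.

    venue_prices: dict of venue name -> list of prices on a common time grid.
    """
    names = list(venue_prices)
    n = len(venue_prices[names[0]])
    consolidated = [sum(venue_prices[v][i] for v in names) / len(names) for i in range(n)]
    base = realized_variance(consolidated)
    return {v: 1.0 - base / realized_variance(venue_prices[v]) for v in names}


# Simulation: one shared "efficient" price path plus independent noise on each venue.
random.seed(7)
efficient = [100.0]
for _ in range(2000):
    efficient.append(efficient[-1] * math.exp(random.gauss(0.0, 0.001)))
venues = {
    name: [p * math.exp(random.gauss(0.0, 0.002)) for p in efficient]
    for name in ("venue_a", "venue_b", "venue_c")
}
print({k: round(v, 3) for k, v in friction_noise_share(venues).items()})
```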

Evolution

The transition from simple, centralized-exchange-inspired models to protocol-native, decentralized analysis has been driven by the requirement for higher capital efficiency.

Earlier cycles were characterized by a reliance on external data feeds, which introduced significant vulnerability to oracle failure and price manipulation.

| Era | Focus | Primary Constraint |
|---|---|---|
| Early | Replication of traditional models | Oracle dependence |
| Intermediate | On-chain data integration | Liquidity fragmentation |
| Current | Systemic risk and feedback loop analysis | Protocol-level volatility |

The shift toward Automated Market Maker structures has forced a redesign of how participants perceive and hedge risk. Instead of relying on a central order book, analysts now examine the invariant curves of liquidity pools, treating them as dynamic surfaces that react to every trade. This change has made the understanding of pool-specific mechanics a prerequisite for any meaningful statistical analysis.
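The best-known invariant curve is the constant-product rule x * y = k used by Uniswap v2-style pools. A minimal sketch, assuming that invariant and a 0.3% fee taken from the input amount (the fee level is an assumption here; pools differ), shows why every trade moves the pool's marginal price and why larger trades receive worse average prices. Reserves, trade sizes, and function names are hypothetical.

```python
def constant_product_swap(x_reserve, y_reserve, dx, fee=0.003):
    """Sell dx of token X into an x * y = k pool; return (dy_out, new_x, new_y).

    The fee is deducted from the input before applying the invariant and stays
    in the pool, so k grows slightly with each fee-paying trade.
    """
    if dx <= 0:
        raise ValueError("dx must be positive")
    k = x_reserve * y_reserve
    effective_dx = dx * (1.0 - fee)
    new_y = k / (x_reserve + effective_dx)
    dy_out = y_reserve - new_y
    return dy_out, x_reserve + dx, new_y


def marginal_price(x_reserve, y_reserve):
    # Price of X in units of Y at the current point on the curve.
    return y_reserve / x_reserve


# Hypothetical pool: 100 X against 200,000 Y (spot price 2,000 Y per X).
x, y = 100.0, 200_000.0
for dx in (1.0, 10.0):
    dy, nx, ny = constant_product_swap(x, y, dx)
    print(dx, round(dy / dx, 2), round(marginal_price(nx, ny), 2))
```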

Horizon

The future of these applications lies in high-fidelity data oracles that deliver sub-second updates without sacrificing decentralization. As cross-chain interoperability increases, statistical models will evolve to treat the entire crypto market as a single, unified pool of liquidity, allowing for more precise cross-asset hedging strategies.

Zero-Knowledge Proofs, which can make trade data verifiable while keeping it private, will likely transform how market makers assess counterparty risk, giving a more nuanced view of institutional order flow without compromising the privacy of individual participants. The ultimate trajectory points toward autonomous, self-correcting risk engines that adjust margin requirements and hedging strategies in real time, effectively automating the entire lifecycle of a derivative position within a secure, trustless environment.