Essence

Settlement Efficiency Analysis is the rigorous evaluation of the time and capital costs of finalizing derivative transactions on decentralized networks. It quantifies the friction between trade execution and the irreversible transfer of assets. The analysis examines blockchain latency, the liquidity depth of collateral pools, and the systemic overhead required to validate margin positions.

Settlement efficiency measures the velocity at which a derivative contract transitions from a committed order to an immutable, settled state within a distributed ledger.

At the architectural level, Settlement Efficiency Analysis addresses the trade-offs between speed, cost, and security. Protocols on high-throughput chains often give up some decentralization or security for faster settlement. Protocols anchored to robust Layer-1 networks prioritize finality at the cost of higher latency and gas expenditure. Understanding this balance is critical for market makers and liquidity providers who allocate capital across fragmented venues.

Origin

The genesis of Settlement Efficiency Analysis lies in the transition from traditional, centralized clearinghouses to permissionless, automated market structures.

Legacy finance relies on intermediaries, such as clearing banks and central counterparties, to manage counterparty risk through multi-day settlement cycles. Decentralized finance (DeFi) collapses this timeline. It replaces human intermediaries with autonomous clearing engines whose guarantees rest on Smart Contract Security.

- Automated Clearing replaced manual reconciliation, using on-chain state updates to confirm ownership transfers within seconds or minutes rather than days.

- Collateralized Debt Positions introduced a requirement for continuous, real-time settlement monitoring to maintain solvency.

- Liquidity Fragmentation emerged as a consequence of multi-chain deployments, necessitating new metrics for assessing cross-venue settlement costs.

Early decentralized exchanges faced severe inefficiencies, characterized by high slippage and delayed order matching. These technical bottlenecks forced developers to architect specialized margin engines capable of near-instantaneous liquidation triggers. The discipline of analyzing these systems grew from the need to predict how protocol-level constraints impact the profitability of derivative strategies in adversarial market conditions.

Theory

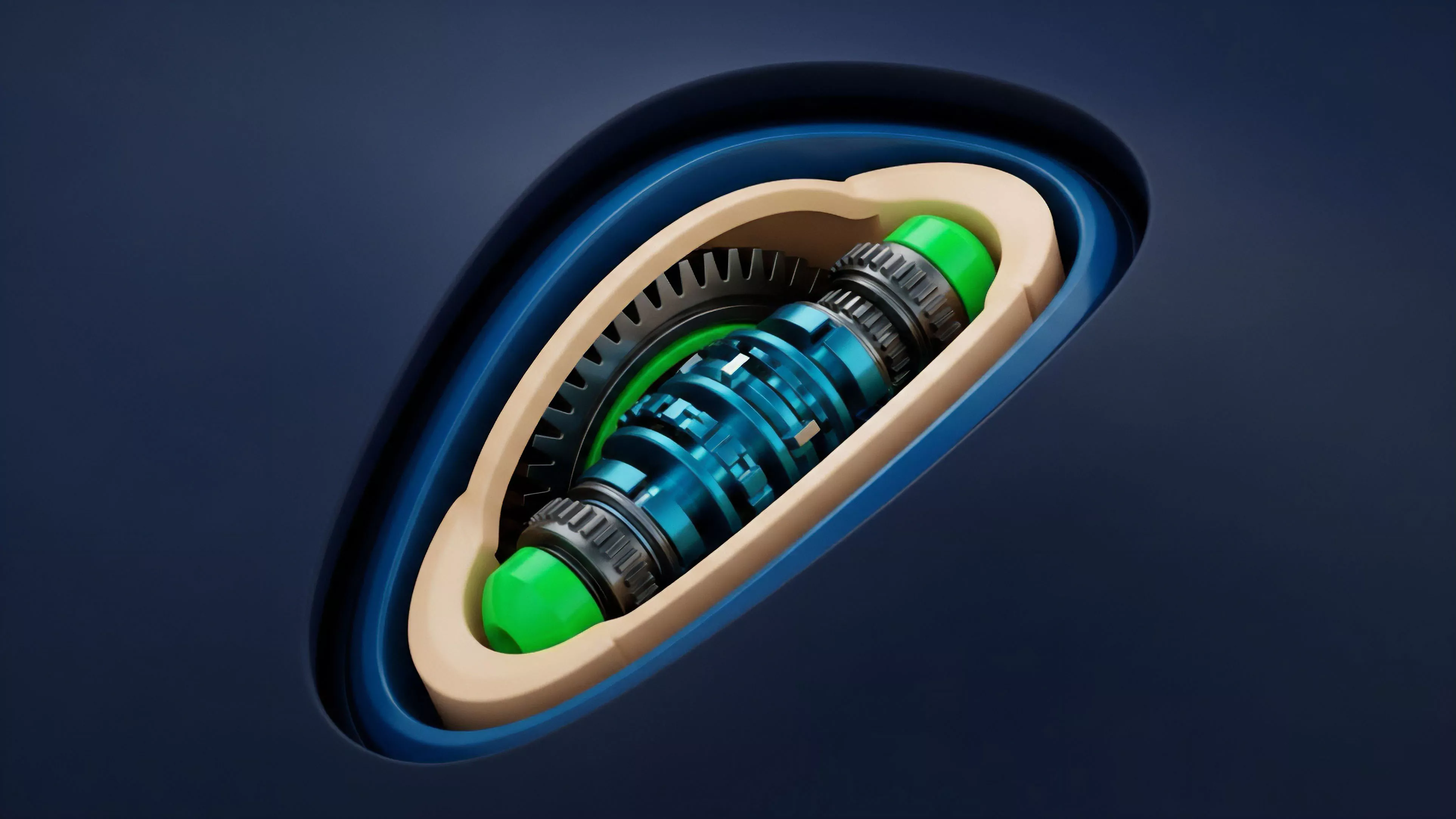

The structure of Settlement Efficiency Analysis relies on the interaction between Protocol Physics and Quantitative Finance.

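The model compares the time-to-finality, the duration required for a transaction to become irreversible, against the volatility of the underlying asset. Suppose the price move the asset can plausibly make within that window exceeds the volatility-adjusted risk threshold, that is, the collateral buffer backing a position. Then positions can become undercollateralized before settlement completes, and the protocol faces potential insolvency.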
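
The comparison above can be sketched numerically. This is a minimal sketch, not a prescribed model. It assumes returns scale with the square root of time, and it uses a hypothetical z-score of 2.33 (roughly a one-sided 99% normal quantile). It also assumes a margin buffer expressed as a fraction of position value. The helper names `finality_window_move` and `exceeds_risk_threshold` are illustrative.

```python
import math

# Crypto markets trade continuously, so annualize over a full calendar year.
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def finality_window_move(annual_vol: float, time_to_finality_s: float, z: float = 2.33) -> float:
    """Hypothetical helper: fractional price move at z standard deviations
    over the finality window, assuming i.i.d. returns (sqrt-of-time scaling)."""
    return z * annual_vol * math.sqrt(time_to_finality_s / SECONDS_PER_YEAR)


def exceeds_risk_threshold(annual_vol: float, time_to_finality_s: float,
                           margin_buffer: float, z: float = 2.33) -> bool:
    """True if the plausible move during the finality window is larger than
    the collateral buffer, i.e. a position could go underwater before
    settlement becomes irreversible."""
    return finality_window_move(annual_vol, time_to_finality_s, z) > margin_buffer


if __name__ == "__main__":
    # Illustrative inputs only: 80% annualized volatility, 0.5% margin buffer.
    for ttf in (12, 900):
        move = finality_window_move(0.80, ttf)
        breach = exceeds_risk_threshold(0.80, ttf, 0.005)
        print(f"{ttf:>4}s window: {move:.4%} move, breach={breach}")
```

With these illustrative inputs, a 12-second window implies a move of roughly 0.1%, comfortably inside a 0.5% buffer. A 15-minute window implies roughly 1% and breaches it. Fat-tailed returns would likely make real exposure worse than this normal approximation suggests.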

| Metric | Technical Implication |
| --- | --- |
| Time-to-Finality | Determines the window of counterparty exposure. |
| Gas-to-Collateral Ratio | Evaluates the cost of maintaining active positions. |
| Liquidation Latency | Measures the responsiveness of the risk engine. |
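
The three metrics in the table could be computed from per-position event data roughly as follows. This is a sketch under assumptions. The `PositionEvents` record and its field names are hypothetical. The Gas-to-Collateral Ratio is assumed here to be cumulative maintenance gas cost divided by collateral value, since the table does not fix a formula.

```python
from dataclasses import dataclass


@dataclass
class PositionEvents:
    """Hypothetical per-position record. Timestamps in seconds, values in USD."""
    submitted_at: float    # order broadcast to the network
    finalized_at: float    # transaction considered irreversible
    breached_at: float     # price crossed the liquidation threshold
    liquidated_at: float   # liquidation transaction included on-chain
    gas_cost_usd: float    # cumulative gas spent opening and maintaining the position
    collateral_usd: float  # collateral backing the position


def time_to_finality(p: PositionEvents) -> float:
    # Window of counterparty exposure for the opening trade.
    return p.finalized_at - p.submitted_at


def liquidation_latency(p: PositionEvents) -> float:
    # How long the risk engine took to act once the position became unsafe.
    return p.liquidated_at - p.breached_at


def gas_to_collateral(p: PositionEvents) -> float:
    # Fraction of collateral consumed by gas (assumed definition).
    return p.gas_cost_usd / p.collateral_usd


if __name__ == "__main__":
    # Illustrative numbers, not measurements.
    p = PositionEvents(submitted_at=0, finalized_at=768, breached_at=5_000,
                       liquidated_at=5_024, gas_cost_usd=50.0, collateral_usd=10_000.0)
    print(time_to_finality(p), liquidation_latency(p), gas_to_collateral(p))
```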

Settlement efficiency also shapes how the Greeks, specifically Gamma and Theta, can be managed in practice. In a high-latency environment, the effective cost of hedging increases. A delta-neutral position drifts away from neutrality (at a rate set by Gamma) before the rebalancing trade settles. This latency acts as a hidden tax on market makers, which is often priced into the bid-ask spread.
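
The staleness effect can be approximated with a second-order Taylor expansion. This sketch makes several assumptions. The position is delta-neutral when the rebalancing trade is submitted. The price makes a one-standard-deviation move over the settlement latency (square-root-of-time scaling). The Greek values are illustrative, and theta and fees are ignored. The function `stale_hedge_exposure` is hypothetical.

```python
import math
from typing import Tuple

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def stale_hedge_exposure(spot: float, gamma: float, annual_vol: float,
                         latency_s: float) -> Tuple[float, float]:
    """Hypothetical helper. For a position that is delta-neutral at submission,
    return (delta_drift, unhedged_pnl) after a one-sigma move over the latency.

    delta_drift  = gamma * dS          (first-order change in delta)
    unhedged_pnl = 0.5 * gamma * dS^2  (second-order P&L the stale hedge misses)
    """
    d_s = spot * annual_vol * math.sqrt(latency_s / SECONDS_PER_YEAR)  # one-sigma price move
    return gamma * d_s, 0.5 * gamma * d_s ** 2


if __name__ == "__main__":
    # Illustrative inputs: spot 3000, gamma 0.01 per unit, 80% annualized vol.
    for latency in (2, 12, 60):
        drift, pnl = stale_hedge_exposure(3000.0, 0.01, 0.80, latency)
        print(f"latency {latency:>3}s: delta drift {drift:.4f}, unhedged P&L {pnl:.4f}")
```

Both quantities grow with latency: the delta drift with the square root of time and the unhedged P&L term linearly in time. This is one way to see why quotes on slower venues carry wider spreads.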

Sometimes I contemplate the intersection of these financial systems with thermodynamics; the way energy dissipates in a physical engine mirrors the loss of capital efficiency in a high-latency blockchain.

Efficient settlement minimizes the duration of counterparty risk and optimizes the utilization of collateral within decentralized derivative protocols.

Adversarial agents exploit settlement delays through front-running and sandwich attacks. A protocol with poor settlement efficiency invites these agents to extract value from legitimate participants, eroding the economic design of the platform.
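
To make sandwich-style value extraction concrete, here is a toy sandwich on a constant-product pool (x * y = k) with no fees. The pool model, reserves, and trade sizes are assumptions chosen for illustration, and the function names are hypothetical. Derivative venues differ in detail, but the mechanism is the same: an attacker trades on both sides of a pending order before it settles.

```python
from typing import Tuple


def swap_out(reserve_in: float, reserve_out: float, amount_in: float) -> float:
    """Output of a fee-less constant-product swap (keeps reserve_in * reserve_out fixed)."""
    return reserve_out * amount_in / (reserve_in + amount_in)


def sandwich(x: float, y: float, victim_in: float, attacker_in: float) -> Tuple[float, float, float]:
    """Simulate front-run, victim swap, and back-run on a pool with reserves
    x (token A) and y (token B). Returns (victim_out_without_attack,
    victim_out_with_attack, attacker_profit_in_A)."""
    baseline = swap_out(x, y, victim_in)

    # 1. Attacker buys B with A ahead of the pending victim order.
    atk_b = swap_out(x, y, attacker_in)
    x, y = x + attacker_in, y - atk_b

    # 2. Victim's order executes at the now-worse price.
    victim_b = swap_out(x, y, victim_in)
    x, y = x + victim_in, y - victim_b

    # 3. Attacker sells the B back for A immediately afterwards.
    atk_a = swap_out(y, x, atk_b)
    return baseline, victim_b, atk_a - attacker_in


if __name__ == "__main__":
    base, hit, profit = sandwich(1_000.0, 1_000.0, victim_in=100.0, attacker_in=100.0)
    print(f"victim gets {hit:.2f} B instead of {base:.2f}; attacker earns {profit:.2f} A")
```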

Approach

Current methodologies for Settlement Efficiency Analysis involve high-frequency monitoring of mempool activity and block production times. Practitioners utilize on-chain analytics to map the relationship between transaction priority fees and the speed of position finality.

By modeling the Order Flow, analysts can determine the optimal gas pricing strategies for large-scale liquidations.
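
One simple way to relate priority fees to inclusion speed is sketched below, under stated assumptions. The samples are hypothetical (priority fee in gwei, blocks until inclusion) pairs gathered by the analyst, and fees are bucketed at 1 gwei. The median serves as the latency statistic. A real liquidation bot would also condition on base fee and current congestion. Both function names are illustrative.

```python
from collections import defaultdict
from statistics import median
from typing import Dict, Iterable, Optional, Tuple


def delay_by_fee_bucket(samples: Iterable[Tuple[float, float]],
                        bucket_gwei: float = 1.0) -> Dict[float, float]:
    """Group (priority_fee_gwei, inclusion_delay_blocks) pairs into fee buckets
    and return {bucket_floor: median_delay}, ordered by fee."""
    buckets = defaultdict(list)
    for fee, delay in samples:
        floor = (fee // bucket_gwei) * bucket_gwei
        buckets[floor].append(delay)
    return {b: median(d) for b, d in sorted(buckets.items())}


def cheapest_fee_for_target(samples: Iterable[Tuple[float, float]], target_blocks: float,
                            bucket_gwei: float = 1.0) -> Optional[float]:
    """Lowest fee bucket whose median inclusion delay meets the target, or None."""
    for floor, med in delay_by_fee_bucket(samples, bucket_gwei).items():
        if med <= target_blocks:
            return floor
    return None


if __name__ == "__main__":
    # Hypothetical observations, not real mempool data.
    obs = [(0.5, 6), (0.8, 4), (1.2, 2), (1.5, 3), (2.1, 1), (2.7, 1)]
    print(delay_by_fee_bucket(obs))
    print(cheapest_fee_for_target(obs, target_blocks=1))
```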

- Mempool Latency Auditing quantifies the delay between order broadcast and block inclusion.

- Liquidation Threshold Stress Testing simulates market crashes to observe the protocol’s response under high network congestion.

- Capital Throughput Evaluation measures the velocity of collateral moving through the clearing engine during peak volatility.
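
A stripped-down version of liquidation threshold stress testing replays a simulated crash against one position. It then measures how much bad debt appears as liquidation latency grows. Several inputs are illustrative: the position parameters, the 2%-per-block crash path, and the assumption that all collateral sells at the settlement-block price. Real engines add auctions, partial liquidations, and slippage. `liquidation_shortfall` is a hypothetical name.

```python
from typing import Sequence


def liquidation_shortfall(prices: Sequence[float], debt: float, collateral_units: float,
                          liq_ratio: float, latency_blocks: int) -> float:
    """Hypothetical stress test for one collateralized position.

    prices: simulated collateral price per block (e.g. a crash scenario)
    debt: amount owed, in quote currency
    collateral_units: units of collateral backing the debt
    liq_ratio: liquidation triggers when collateral value < liq_ratio * debt
    latency_blocks: blocks between the trigger and the liquidation settling

    Returns the bad debt left when the liquidation settles, or 0.0 if the
    position is never liquidated or is fully covered.
    """
    for i, price in enumerate(prices):
        if collateral_units * price < liq_ratio * debt:
            settle = min(i + latency_blocks, len(prices) - 1)  # clamp to end of scenario
            proceeds = collateral_units * prices[settle]       # sell all collateral at that price
            return max(0.0, debt - proceeds)
    return 0.0


if __name__ == "__main__":
    crash = [100.0 * 0.98 ** i for i in range(30)]  # 2% drop per block (illustrative)
    for latency in (0, 5, 10, 20):
        bad_debt = liquidation_shortfall(crash, debt=800.0, collateral_units=10.0,
                                         liq_ratio=1.1, latency_blocks=latency)
        print(f"latency {latency:>2} blocks -> bad debt {bad_debt:.2f}")
```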

This approach requires deep integration with Smart Contract Security frameworks to ensure that settlement engines remain robust under stress. Analysts focus on the trade-offs between synchronous and asynchronous settlement models. Synchronous models offer immediate finality but risk network congestion, while asynchronous models provide better scalability but introduce complex synchronization challenges.
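
The scalability side of this trade-off can be illustrated by modeling asynchronous settlement as periodic batch netting. That is one common design, assumed here for illustration, and not the only way to build an asynchronous engine. Synchronous settlement performs one transfer per trade. The batch settles only each account's net balance. Both function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Trade = Tuple[str, str, float]  # (payer, payee, amount)


def synchronous_transfers(trades: List[Trade]) -> List[Trade]:
    """Each trade settles on its own: one on-chain transfer per trade."""
    return list(trades)


def netted_balances(trades: List[Trade]) -> Dict[str, float]:
    """Asynchronous batch: accumulate trades, then settle only each account's
    nonzero net balance (positive = receives, negative = pays)."""
    net: Dict[str, float] = defaultdict(float)
    for payer, payee, amount in trades:
        net[payer] -= amount
        net[payee] += amount
    return {acct: bal for acct, bal in net.items() if bal != 0}


if __name__ == "__main__":
    trades = [("A", "B", 100.0), ("B", "C", 100.0), ("C", "A", 50.0)]
    print(len(synchronous_transfers(trades)), "transfers vs net", netted_balances(trades))
```

The synchronization challenge shows up between trade and batch. Balances are provisional until the batch settles, so the engine must decide what happens if an account cannot cover its net debit.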

Evolution

The trajectory of Settlement Efficiency Analysis has shifted from simple block-time observation to sophisticated, multi-layer risk modeling.

Initial protocols relied on simple time-weighted average prices (TWAPs) for price feeds, which proved vulnerable to manipulation during periods of high volatility. Modern systems have transitioned to decentralized oracle networks that aggregate prices from many independent sources. These systems also incorporate Macro-Crypto Correlation data to preemptively adjust liquidation parameters.
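
The sketch below shows why short time-weighted averaging windows are easy to skew, and how a median over independent feeds limits a single outlier. Both functions are hypothetical simplifications. The observations are assumed to form a step function, and production oracle networks add further safeguards not modeled here.

```python
from statistics import median
from typing import List, Sequence, Tuple


def twap(observations: Sequence[Tuple[float, float]], window_start: float, window_end: float) -> float:
    """Time-weighted average price over [window_start, window_end).

    observations: (timestamp, price) pairs sorted by timestamp; each price is
    assumed to hold until the next observation (step function).
    """
    total, weighted = 0.0, 0.0
    for i, (t, p) in enumerate(observations):
        t_next = observations[i + 1][0] if i + 1 < len(observations) else window_end
        start, end = max(t, window_start), min(t_next, window_end)
        if end > start:
            weighted += p * (end - start)
            total += end - start
    if total == 0:
        raise ValueError("no observations overlap the window")
    return weighted / total


def aggregate_feeds(prices: List[float]) -> float:
    """Median of independent feeds: one outlier cannot move the result far."""
    return median(prices)


if __name__ == "__main__":
    # Steady price of 100, then a 10-second manipulated spike to 150.
    obs = [(0.0, 100.0), (590.0, 150.0)]
    print("10-minute TWAP:", twap(obs, 0.0, 600.0))
    print("30-second TWAP:", twap(obs, 570.0, 600.0))
    print("median of feeds:", aggregate_feeds([100.0, 100.2, 150.0]))
```

The same 10-second spike moves the 30-second TWAP far more than the 10-minute TWAP, while the median of three feeds ignores the outlier entirely.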

The evolution of settlement infrastructure moves from monolithic, slow-finality systems toward modular, high-speed architectures optimized for complex derivative instruments.

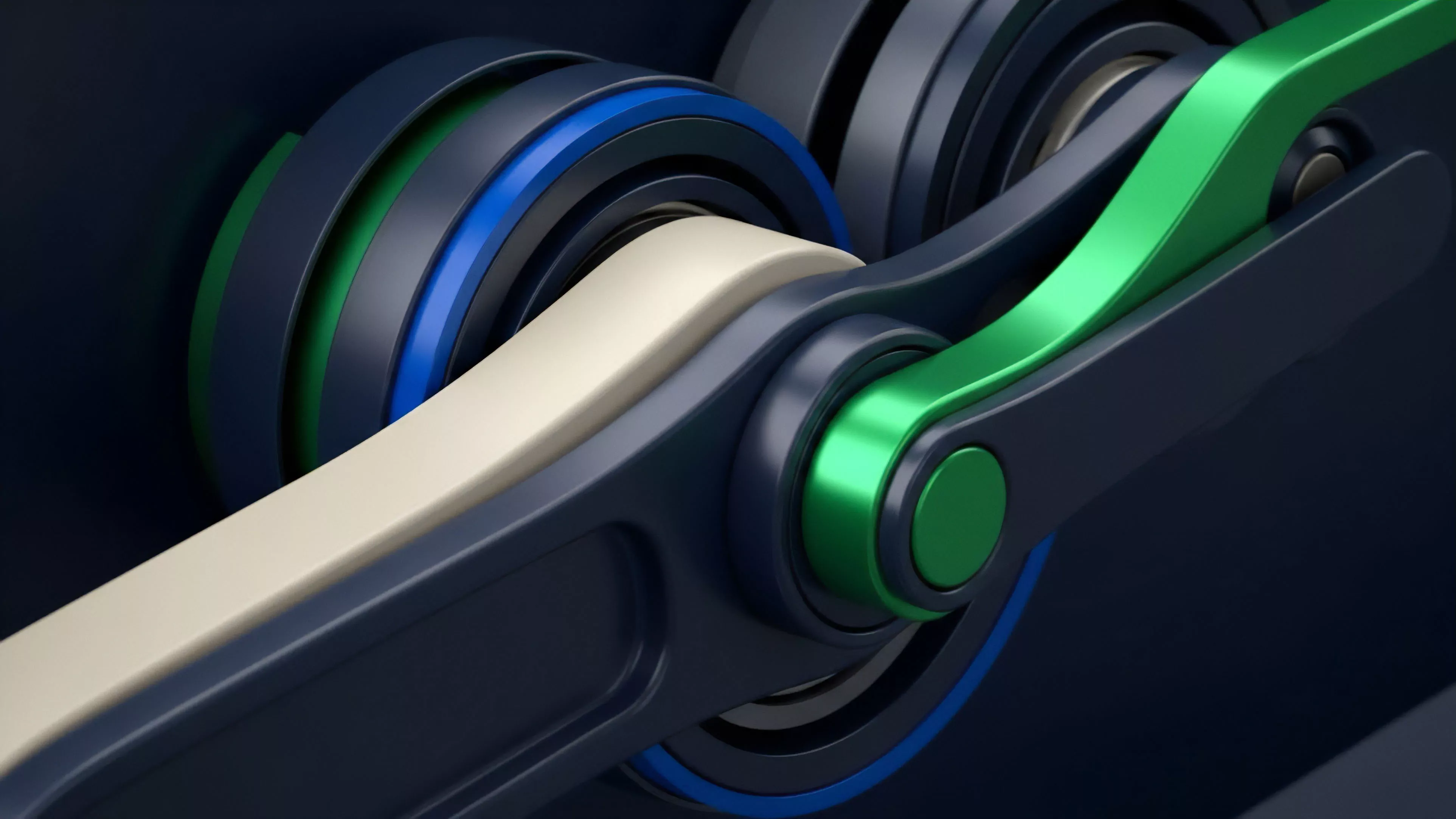

The integration of Layer-2 scaling solutions has fundamentally altered the landscape. These systems execute transactions off-chain and anchor the results to Layer 1. They deliver near-instantaneous confirmation while inheriting Layer-1 security for final settlement. This makes more complex instruments practical, such as path-dependent options and exotic derivatives, which were previously uneconomical because of high gas costs and latency.
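
The cost side of this shift can be sketched as amortization. A rollup pays a fixed Layer-1 cost per batch and spreads it across every trade in that batch. The gas figures below are placeholders, not measurements of any particular rollup. The model also ignores data-availability pricing details such as blob fees. `per_trade_l1_cost` is a hypothetical helper.

```python
def per_trade_l1_cost(batch_overhead_gas: int, per_trade_gas: int,
                      trades_in_batch: int, gas_price_gwei: float) -> float:
    """Amortized Layer-1 cost per trade, in gwei, when a rollup posts a batch.

    batch_overhead_gas: fixed gas to submit one batch (placeholder figure)
    per_trade_gas: marginal gas per trade, mostly data (placeholder figure)
    """
    total_gas = batch_overhead_gas + per_trade_gas * trades_in_batch
    return total_gas * gas_price_gwei / trades_in_batch


if __name__ == "__main__":
    for n in (1, 100, 1_000):
        cost = per_trade_l1_cost(200_000, 300, n, gas_price_gwei=20)
        print(f"{n:>5} trades per batch -> {cost:,.0f} gwei per trade")
```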

Horizon

Future developments in Settlement Efficiency Analysis will likely focus on predictive settlement engines that utilize machine learning to anticipate network congestion and dynamically adjust collateral requirements. The shift toward cross-chain interoperability will necessitate standardized settlement protocols that function across heterogeneous ledger environments. As institutional capital enters the space, the demand for deterministic, sub-millisecond settlement will drive the next generation of protocol architecture. The ultimate goal remains the creation of a global, permissionless clearing system where the cost of finality is negligible and the speed is limited only by the speed of light. Achieving this requires overcoming the inherent tensions between Protocol Physics and the economic reality of decentralized market participation.