Essence

Network Bandwidth Capacity is the fundamental throughput limit governing the execution of decentralized derivative contracts. In on-chain finance, it defines the maximum volume of state updates, transaction sequencing, and oracle data propagation that a blockchain network can support per unit of time. When market volatility spikes, demand for margin updates and liquidations surges and pushes directly against this limit.

The throughput ceiling of a decentralized ledger dictates the speed and reliability of derivative settlement during periods of extreme market stress.

This constraint operates as a silent tax on participants. High demand for block space forces competitive bidding for inclusion, often resulting in failed liquidations or delayed order execution. Traders view this capacity as the physical infrastructure layer upon which financial risk is managed, meaning that architectural limitations often manifest as systemic slippage or increased protocol-level risk.
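The bidding dynamic described above can be sketched with a toy block builder. This is a minimal illustration, assuming a greedy highest-fee-first packing rule; the `PendingTx` type, the transaction labels, the gas figures, and the fee bids are all hypothetical and do not describe any specific chain's block-building algorithm.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    label: str        # hypothetical identifier for the transaction
    gas: int          # gas units the transaction would consume
    fee_per_gas: int  # fee bid, in arbitrary units


def build_block(pending, gas_limit):
    """Greedy fee-priority packing: take the highest bids first and
    skip any transaction that no longer fits in the remaining gas."""
    included, used = [], 0
    for tx in sorted(pending, key=lambda t: t.fee_per_gas, reverse=True):
        if used + tx.gas <= gas_limit:
            included.append(tx)
            used += tx.gas
    return included


# Hypothetical mempool during a volatility spike.
pending = [
    PendingTx("swap_a", 150_000, 90),
    PendingTx("liquidation_1", 400_000, 40),
    PendingTx("swap_b", 150_000, 80),
    PendingTx("liquidation_2", 400_000, 85),
    PendingTx("oracle_update", 100_000, 95),
]

block = build_block(pending, gas_limit=800_000)
included_labels = [tx.label for tx in block]
print(included_labels)
```

In this toy run, `liquidation_1` carries the lowest bid and is left out once the block is full, which is the "failed or delayed liquidation" outcome in miniature.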

Origin

Concerns about Network Bandwidth Capacity trace back to the scalability trilemma, under which early protocols prioritized security and decentralization over high-frequency transaction processing.

Early protocols operated under rigid throughput limits, assuming that low-frequency settlement would suffice for rudimentary asset transfers. However, the introduction of complex derivative products transformed block space from a commodity into a critical financial resource.

- Protocol Physics: Early designs prioritized consensus finality over rapid data propagation, creating bottlenecks during high-volume trading.

- Resource Competition: Decentralized exchanges and lending protocols began competing for limited execution slots, turning network congestion into a volatility amplifier.

- Systemic Fragility: The inability of networks to scale bandwidth in tandem with derivative market growth forced developers to build secondary scaling layers.

These origins highlight the transition from simple peer-to-peer payments to complex, state-dependent financial environments where the network itself acts as the clearinghouse.

Theory

The mathematical modeling of Network Bandwidth Capacity relies on understanding the relationship between block gas limits, transaction frequency, and state bloat. As the complexity of derivative contracts increases, the amount of data required for validation grows, consuming a larger portion of the available throughput. This creates a feedback loop where increased market activity leads to higher network fees, potentially rendering certain arbitrage strategies unprofitable.

| Metric | Financial Impact |
| --- | --- |
| Latency | Increases execution risk and slippage |
| Throughput | Determines maximum liquidations per block |
| Gas Cost | Directly influences cost of hedging |
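The throughput row can be made concrete with simple arithmetic. The sketch below assumes a block gas limit, a fixed gas cost per liquidation, and some gas reserved for other traffic; all three figures and both function names are illustrative assumptions, not measurements from any protocol.

```python
def max_liquidations_per_block(block_gas_limit, gas_per_liquidation, reserved_gas=0):
    """Upper bound on liquidations that fit in one block after
    setting aside gas consumed by other traffic."""
    usable = max(block_gas_limit - reserved_gas, 0)
    return usable // gas_per_liquidation


def blocks_to_clear(backlog, per_block):
    """Number of blocks needed to process a backlog (ceiling division)."""
    if per_block <= 0:
        raise ValueError("per_block must be positive")
    return -(-backlog // per_block)


# Hypothetical figures: 30M gas limit, 500k gas per liquidation,
# 10M gas consumed by unrelated transactions.
per_block = max_liquidations_per_block(30_000_000, 500_000, reserved_gas=10_000_000)
blocks_needed = blocks_to_clear(1_000, per_block)
print(per_block, blocks_needed)
```

Under these assumed numbers, a backlog of 1,000 liquidations would take 25 blocks to clear, during which prices can continue to move against undercollateralized positions.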

Financial derivative protocols must calibrate their operational logic to match the deterministic throughput constraints of their underlying settlement layer.

From a quantitative perspective, the network acts as a queuing system. When the arrival rate of derivative orders exceeds the service rate of the blockchain, the queue grows, increasing the probability of stale price data. This latency is particularly dangerous for margin engines, as it creates a temporal gap between market price discovery and the execution of collateral requirements.
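A small deterministic simulation illustrates the queuing behavior described above. It assumes a first-in, first-out queue and a fixed per-block capacity, which is a simplification (real mempools order by fee, not arrival time); the arrival figures and function names are hypothetical.

```python
def simulate_backlog(arrivals_per_block, capacity_per_block):
    """Return the queue length remaining after each block, for a FIFO
    queue that serves at most `capacity_per_block` orders per block."""
    backlog, history = 0, []
    for arrivals in arrivals_per_block:
        backlog += arrivals
        backlog -= min(backlog, capacity_per_block)
        history.append(backlog)
    return history


def wait_in_blocks(backlog, capacity_per_block):
    """Full blocks that pass before a newly arriving order is included,
    given the backlog already ahead of it."""
    return backlog // capacity_per_block


# Arrival rate at or below the service rate: the queue stays empty.
calm = simulate_backlog([30, 35, 40, 30], capacity_per_block=40)

# Arrival rate above the service rate: the queue grows block after block.
stressed = simulate_backlog([30, 90, 120, 100, 40], capacity_per_block=40)

print(calm, stressed, wait_in_blocks(stressed[-1], 40))
```

In the stressed run, an order submitted at the end waits four full blocks before inclusion, so any price it references is at least that many blocks old when it executes.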

The behavior resembles water pressure in a pipe network: if pressure rises too quickly, the system fails abruptly rather than degrading into a smoothly reduced flow. This physical limitation is the ultimate boundary for any decentralized derivative system.

Approach

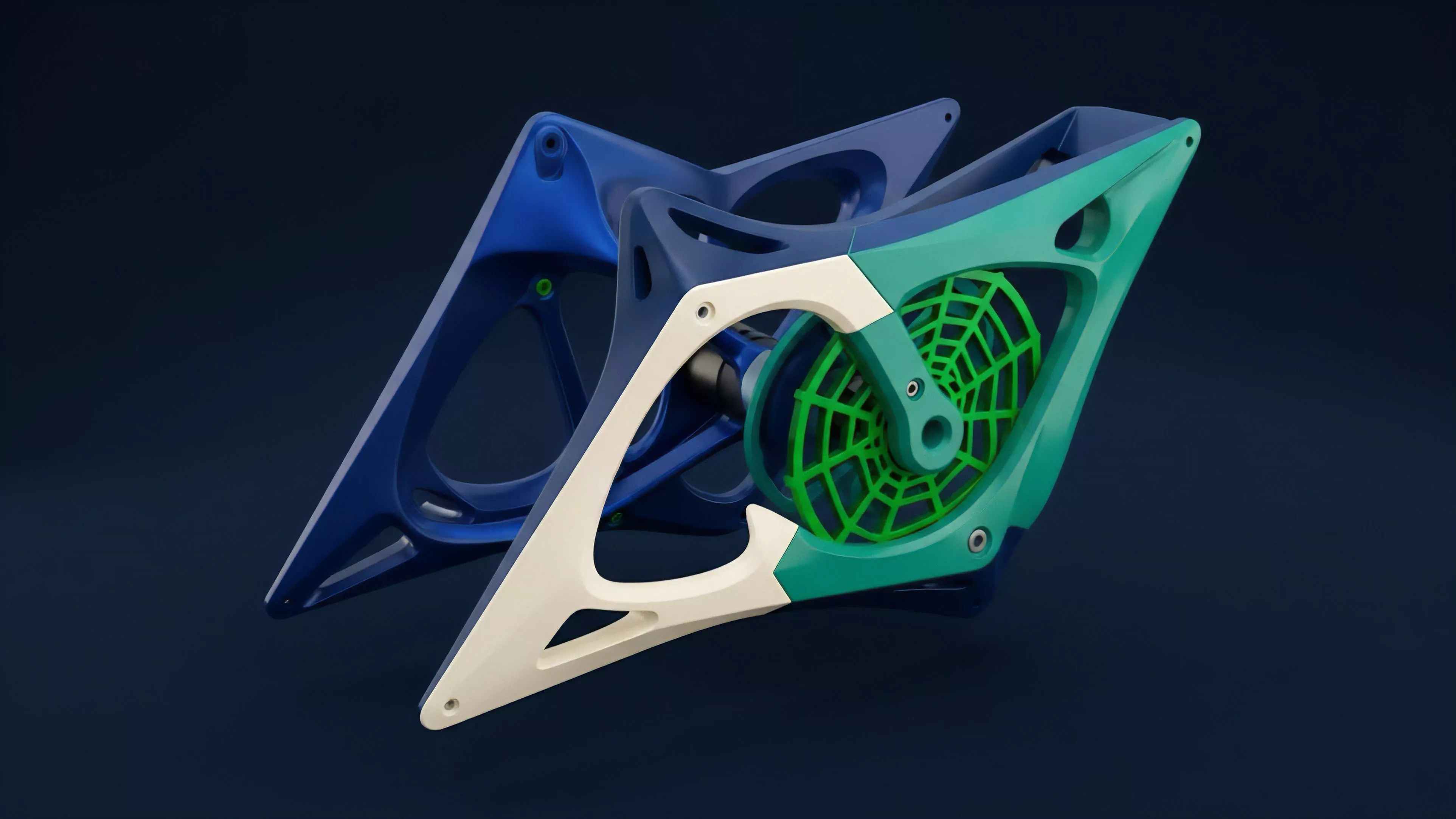

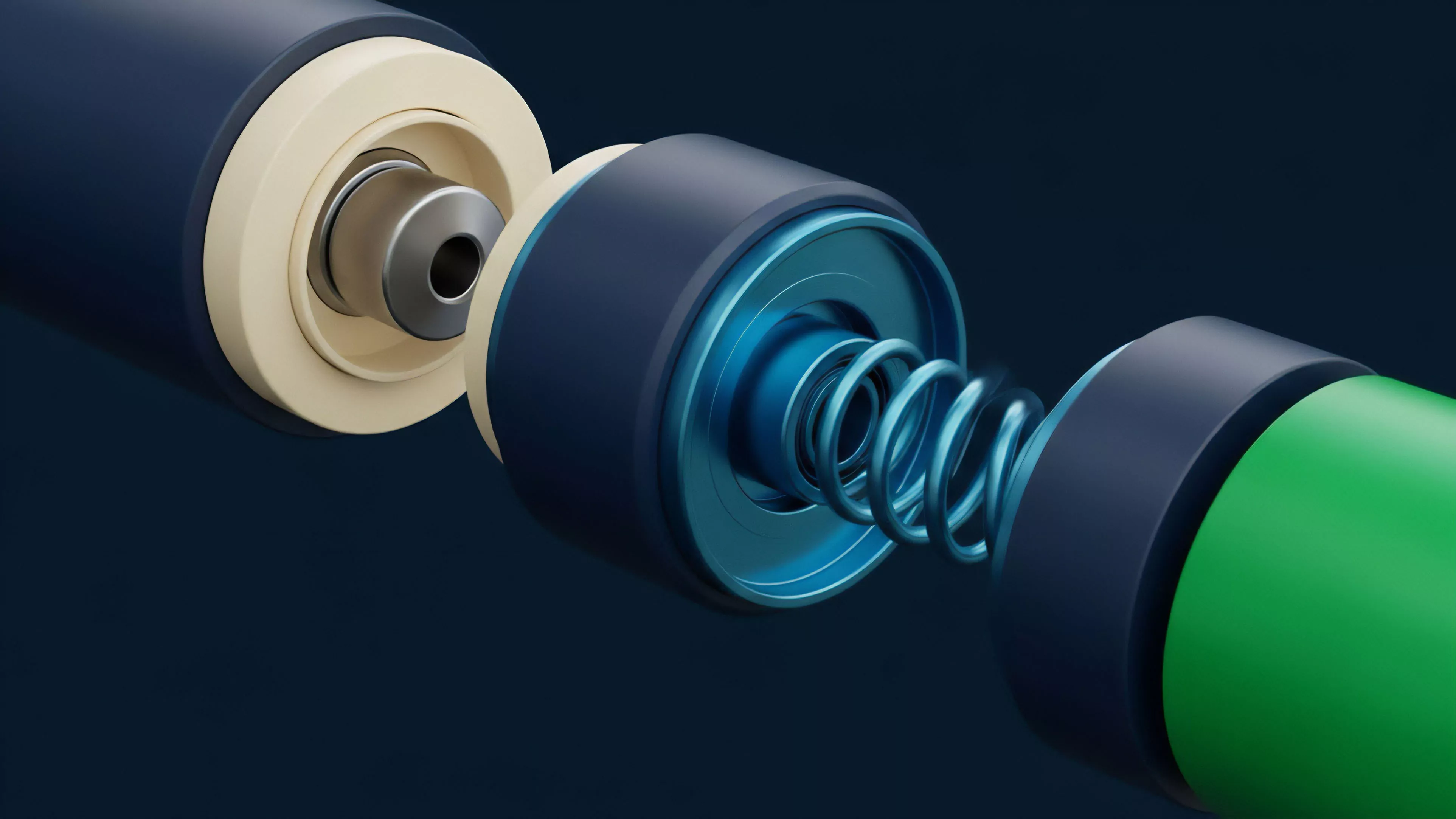

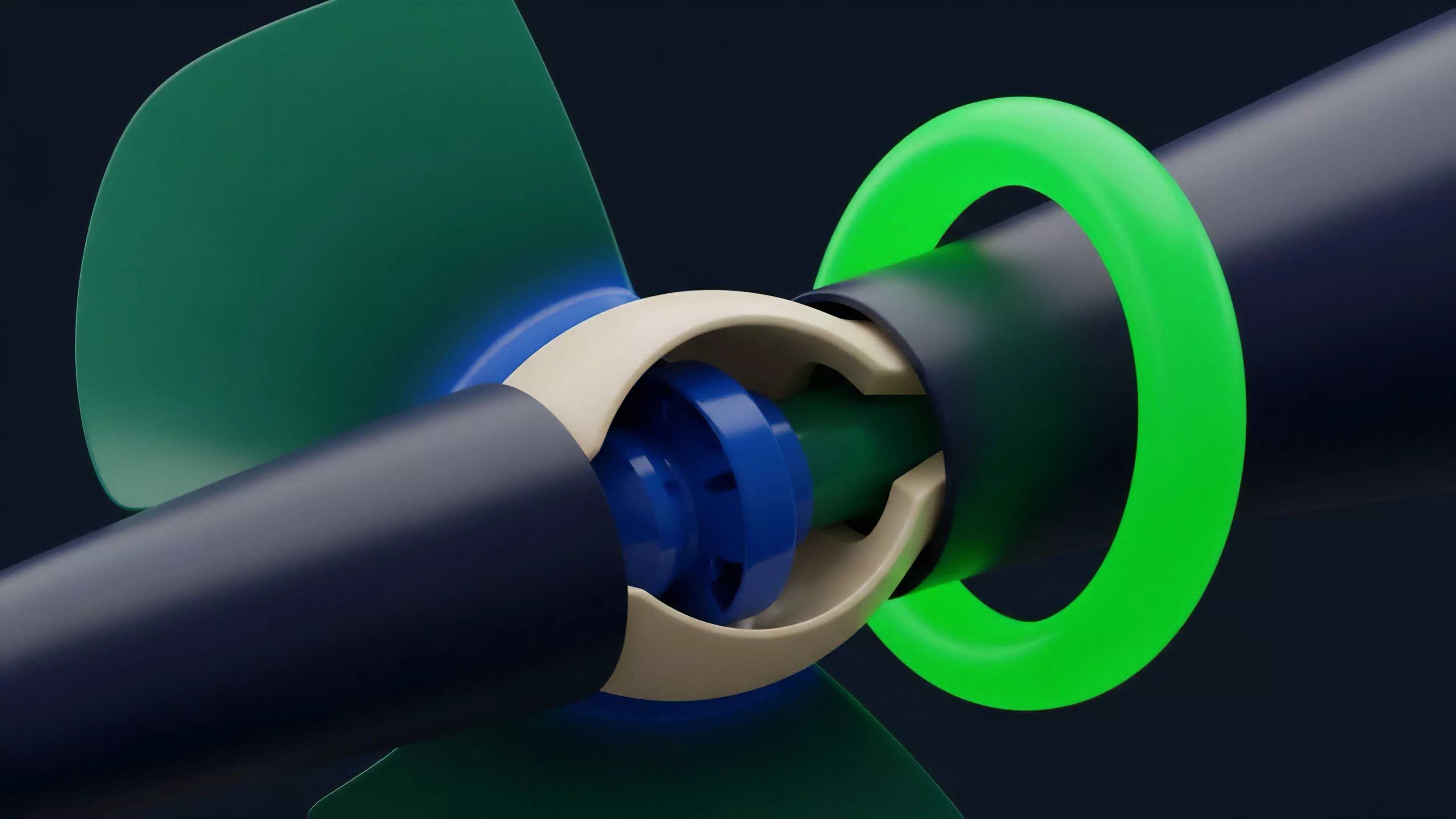

Current strategies to mitigate Network Bandwidth Capacity limitations involve a shift toward modular architectures and off-chain execution environments. Developers now decouple the consensus layer from the execution layer, allowing for higher throughput without compromising the security guarantees of the base chain.

These designs utilize rollups and state channels to batch transactions, thereby reducing the burden on the primary network bandwidth.
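The effect of batching can be sketched as amortization of a fixed per-submission overhead across many trades. The function names and all gas figures below are illustrative assumptions; actual rollup cost models include further terms (proof verification, data availability pricing) that are ignored here.

```python
def gas_per_trade_unbatched(fixed_overhead, execution_gas):
    """Each trade is its own base-layer transaction and pays the full overhead."""
    return fixed_overhead + execution_gas


def gas_per_trade_batched(fixed_overhead, data_gas_per_trade, batch_size):
    """One base-layer transaction carries the whole batch, so the fixed
    overhead is shared and each trade pays only for its posted data."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return fixed_overhead / batch_size + data_gas_per_trade


# Hypothetical figures: 21,000 gas fixed overhead, 100,000 gas to execute a
# trade on the base layer, 2,000 gas of posted data per trade inside a batch.
unbatched = gas_per_trade_unbatched(21_000, 100_000)
batched = gas_per_trade_batched(21_000, 2_000, batch_size=100)
print(unbatched, batched)
```

With these assumed inputs the per-trade footprint on the base layer drops from 121,000 to 2,210 gas, which is the sense in which batching frees primary network bandwidth.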

- State Compression: Reducing the data footprint of individual option contracts to maximize block space efficiency.

- Parallel Execution: Implementing sharding or multi-threaded virtual machines to process non-conflicting derivative trades simultaneously.

- Optimistic Sequencing: Moving order matching off-chain while relying on the base layer for final settlement to preserve capacity.
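The parallel execution idea can be sketched as a scheduling problem: trades that touch disjoint state may share a batch, while a trade that conflicts with an earlier one must run in a later batch. The following is a minimal sketch under that assumption; the account and pool names are hypothetical, and production schedulers use more detailed read/write sets than the single "touched accounts" set used here.

```python
def schedule_parallel_batches(trades):
    """Assign each trade to the earliest batch that comes after every
    batch containing a conflicting trade, preserving the relative order
    of trades that touch the same state."""
    batches = []  # each entry: (accounts touched so far, trade ids)
    for trade_id, accounts in trades:
        accounts = set(accounts)
        last_conflict = -1
        for i, (touched, _) in enumerate(batches):
            if not touched.isdisjoint(accounts):
                last_conflict = i
        target = last_conflict + 1
        if target == len(batches):
            batches.append((set(), []))
        batches[target][0].update(accounts)
        batches[target][1].append(trade_id)
    return [ids for _, ids in batches]


# Hypothetical trades with the state each one touches.
trades = [
    ("t1", {"alice", "pool_eth"}),
    ("t2", {"bob", "pool_btc"}),
    ("t3", {"carol", "pool_eth"}),
    ("t4", {"dave", "pool_sol"}),
    ("t5", {"alice", "pool_btc"}),
]

schedule = schedule_parallel_batches(trades)
print(schedule)
```

Here five trades collapse into two sequential steps: `t1`, `t2`, and `t4` can run side by side, while `t3` and `t5` wait because they share state with earlier trades.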

The evolution of derivative protocols is driven by the necessity to move execution off-chain while maintaining the integrity of on-chain settlement.

Market participants now use specialized indexers and private mempools to bypass public network congestion. This creates a two-tiered system where sophisticated actors secure priority access to execution, while retail participants bear the brunt of network latency and fee volatility.

Evolution

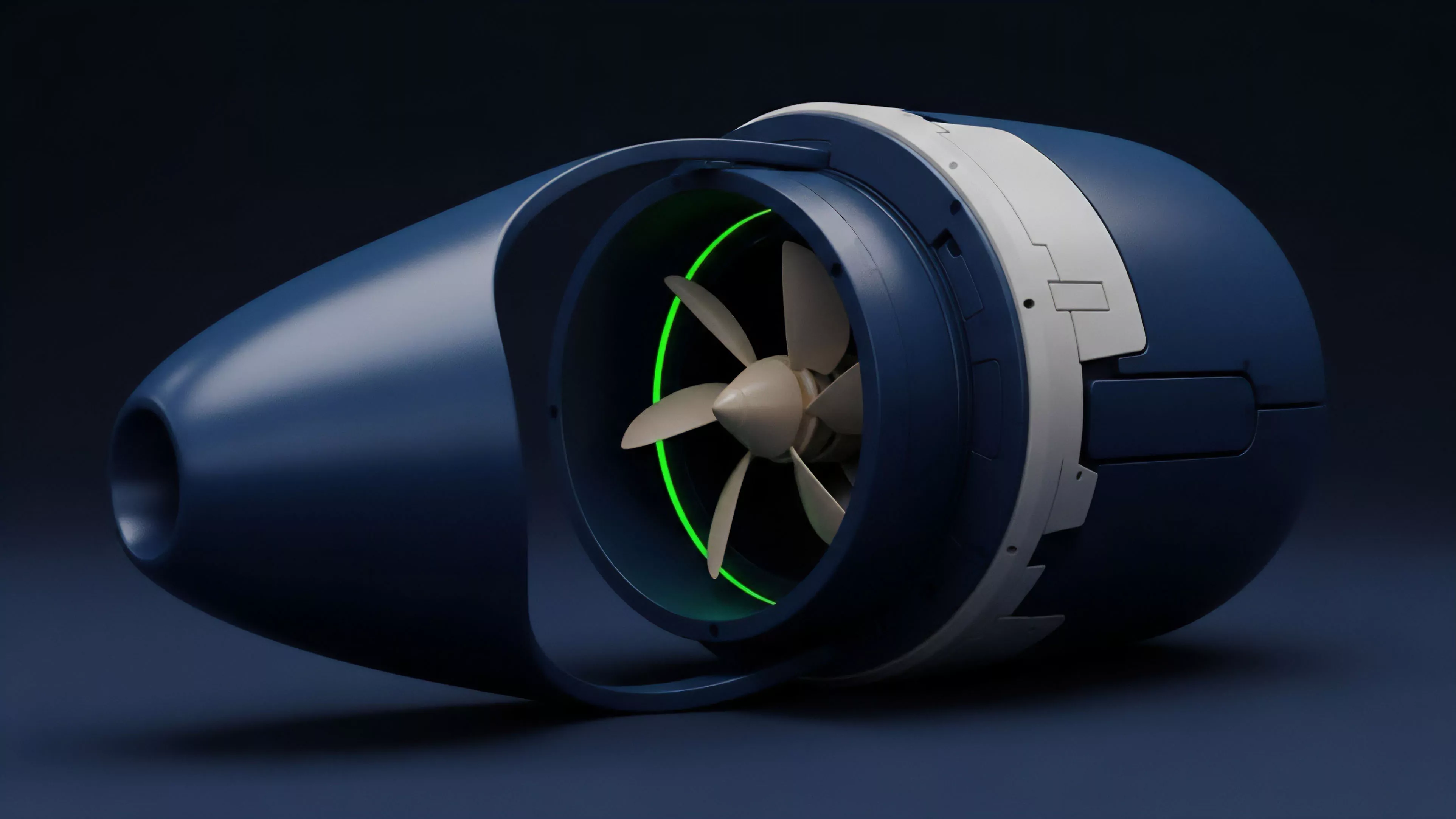

The path from monolithic, congested chains to specialized, high-bandwidth execution environments defines the recent history of decentralized finance. Early iterations of derivative platforms suffered from chronic downtime during market crashes, forcing a rapid maturation of infrastructure.

The industry moved from naive, single-chain designs to sophisticated, multi-layered topologies that prioritize predictable execution throughput over simple, broadcast-based validation.

| Era | Primary Constraint | Scaling Solution |
| --- | --- | --- |
| Early DeFi | Gas price volatility | Limited derivative adoption |
| Middle Stage | Base layer congestion | Introduction of Layer 2 rollups |
| Current | Sequencer centralization | Decentralized sequencing protocols |

This progression demonstrates a shift toward viewing the blockchain as a base settlement utility, with the heavy lifting of high-frequency order flow delegated to specialized, high-bandwidth environments.

Horizon

Future developments in Network Bandwidth Capacity will center on the integration of zero-knowledge proofs to verify massive amounts of derivative data without overwhelming the base layer. The focus is shifting toward verifiable computation, where the network bandwidth is no longer consumed by the transaction data itself, but by the compact proof of the transaction’s validity. This shift will allow for order books that rival centralized exchanges in speed while retaining the censorship resistance of decentralized protocols.
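The footprint argument can be illustrated with rough arithmetic. The sketch below compares posting every trade's data against posting one proof plus a state commitment per batch; the byte sizes and function names are purely hypothetical, and the model deliberately ignores any data-availability payload that a real design may still need to publish.

```python
def onchain_bytes_raw(num_trades, bytes_per_trade):
    """Footprint when every trade's data is posted to the base layer."""
    return num_trades * bytes_per_trade


def onchain_bytes_with_proof(proof_bytes, commitment_bytes):
    """Footprint when only a validity proof and a state commitment are
    posted; in this simplified model it does not grow with trade count."""
    return proof_bytes + commitment_bytes


# Hypothetical sizes: 200 bytes per trade, a 1,000-byte proof,
# and a 64-byte state commitment for the whole batch.
raw = onchain_bytes_raw(10_000, 200)
proved = onchain_bytes_with_proof(1_000, 64)
print(raw, proved, raw // proved)
```

Under these assumptions the proof-based footprint stays constant while the raw footprint scales linearly with order flow, which is why verifiable computation is attractive for high-frequency derivative venues.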

The future of decentralized derivatives depends on the ability to verify complex financial states with minimal on-chain data footprint.

As these protocols mature, we will see the emergence of purpose-built application-specific chains that optimize their entire stack for the unique throughput requirements of derivative markets. This will eventually lead to a landscape where network bandwidth is dynamically allocated based on the real-time volatility of the derivative assets being traded.