Essence

Risk Parameter Standardization is the process of establishing consistent, verifiable, and interoperable rulesets for managing leverage and collateral within decentralized financial protocols. The core challenge in crypto options markets is the absence of a central clearing counterparty. This absence means each protocol must independently define its risk engine, creating a fragmented landscape where collateral factors, margin requirements, and liquidation thresholds vary significantly between platforms.

Standardization aims to mitigate systemic risk by providing a common language for risk assessment, ensuring that a unit of collateral on one platform is treated similarly on another. This consistency reduces information asymmetry and allows for more reliable risk calculations across the broader market. The objective is to move from a collection of isolated risk silos to an interconnected system where a single point of failure cannot trigger a cascading contagion effect.

Risk parameter standardization is the architectural process of establishing consistent rulesets for collateral and leverage to mitigate systemic risk across decentralized protocols.

A lack of standardization leads directly to Liquidity Fragmentation, where capital cannot move efficiently between protocols because each one assesses risk differently. A protocol with looser parameters may attract more liquidity in a bull market, but it becomes a point of weakness during volatility spikes. The goal of standardization is to define a baseline for market safety without stifling innovation.

This baseline provides a foundation for more complex financial engineering, allowing for the creation of new products built on top of standardized risk primitives. It transforms the market from a series of individual experiments into a coherent, resilient financial system.

Systemic Risk and Interoperability

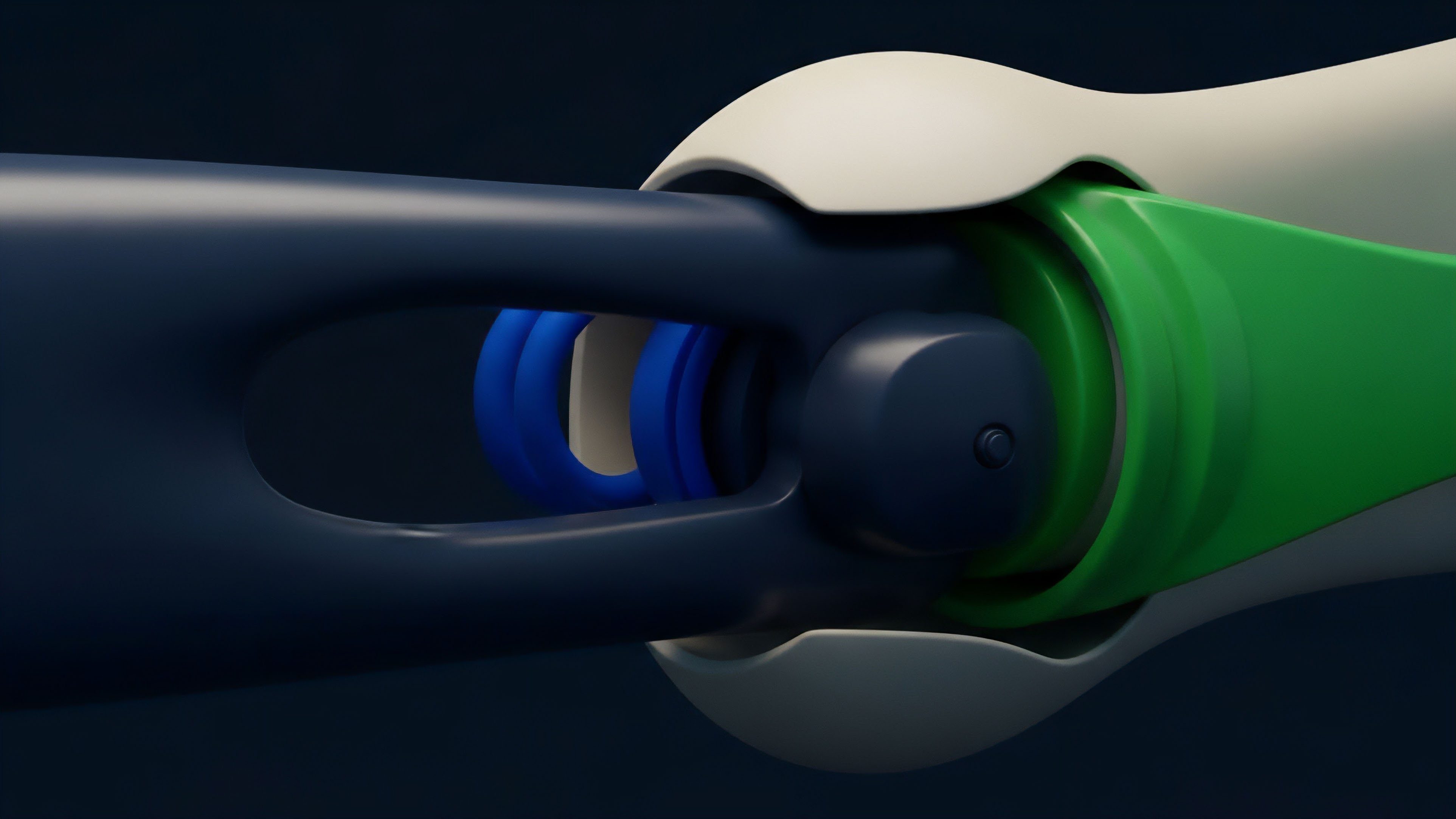

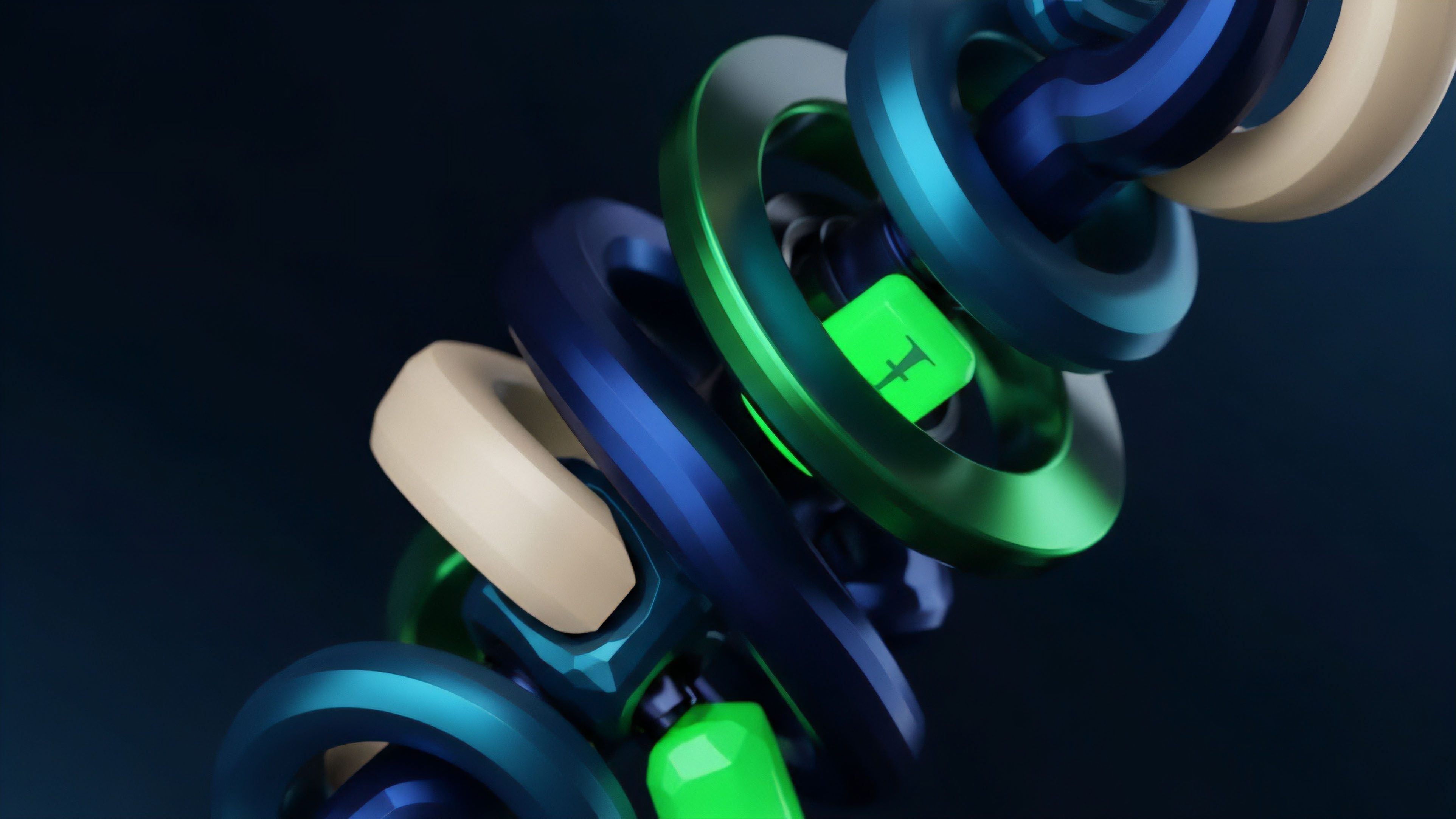

The primary driver for standardization is the interconnected nature of decentralized finance. When a user deposits collateral on Protocol A, and Protocol A’s risk parameters differ from Protocol B’s, a liquidation event on Protocol A can have unexpected consequences for Protocol B if they share collateral types or liquidity pools. This creates a hidden layer of systemic risk.

Standardization provides a mechanism for Interoperable Risk Management, where a change in parameters on one protocol can be easily understood and accounted for by others. This allows for the development of composable financial products that can move between different venues without their risk treatment changing unexpectedly.

Origin

The concept of risk parameter standardization originates in traditional finance (TradFi) clearing houses.

Institutions like the Options Clearing Corporation (OCC) or CME Clearing (the clearing house of CME Group) act as central counterparties, setting and enforcing uniform margin requirements and risk calculations for all participants. This centralized approach is designed to protect market integrity and keep individual failures from causing widespread collapse. In the early days of crypto derivatives, centralized exchanges like BitMEX and Deribit adopted similar models, using proprietary risk engines to manage collateral and liquidations.

The challenge intensified with the advent of decentralized finance (DeFi). DeFi protocols are designed to be permissionless and trustless, replacing central authorities with smart contracts and community governance. Early DeFi protocols, such as MakerDAO and Compound, introduced Automated Risk Engines that used on-chain data to calculate collateral factors and liquidation thresholds.

However, each protocol developed its own specific methodology, leading to a proliferation of differing risk models. This created a competitive environment where protocols often optimized for capital efficiency and user experience rather than systemic safety. The need for standardization became apparent during major market stress events, particularly in 2020 and 2021.

Liquidation cascades highlighted the vulnerabilities inherent in fragmented risk parameters. When one protocol’s parameters were too aggressive, it triggered liquidations that flooded the market with collateral, causing price drops that in turn triggered liquidations on other protocols. This demonstrated that risk parameters are not isolated to a single protocol; they are a systemic concern for the entire ecosystem.

The community began to recognize that a shared framework for risk management was essential for long-term sustainability.

Theory

Risk parameter standardization requires a deep understanding of quantitative finance and protocol physics. The theoretical foundation relies on modeling the probability distribution of asset prices, specifically focusing on tail risk.

In traditional finance, models like Black-Scholes-Merton (BSM) are used to price options and inform margin requirements. However, BSM assumes that log returns are normally distributed with constant volatility, an assumption that consistently fails to capture the “fat tails” characteristic of crypto assets. Crypto returns exhibit high excess kurtosis, meaning extreme price movements are far more likely than BSM predicts.
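The fat-tail claim can be checked on any return series by measuring excess kurtosis, which is zero for a normal distribution. The following is a minimal sketch using only the standard library; the function name `excess_kurtosis` and the sample return series are illustrative assumptions, not data from any protocol.

```python
from statistics import fmean


def excess_kurtosis(returns):
    """Population excess kurtosis: fourth standardized moment minus 3.

    A normal distribution scores 0; fat-tailed series score well above 0.
    """
    if len(returns) < 2:
        raise ValueError("need at least two returns")
    mean = fmean(returns)
    m2 = fmean((r - mean) ** 2 for r in returns)  # variance
    m4 = fmean((r - mean) ** 4 for r in returns)  # fourth central moment
    if m2 == 0:
        raise ValueError("returns have zero variance")
    return m4 / (m2 ** 2) - 3.0


# Hypothetical daily log returns: twenty quiet days, then one crash day.
calm = [0.01, -0.01] * 10
with_crash = calm + [-0.30]

calm_kurtosis = excess_kurtosis(calm)         # thin-tailed: below zero
crash_kurtosis = excess_kurtosis(with_crash)  # one outlier dominates the tail
```

A single extreme day is enough to push the statistic far above zero, which is why margin rules calibrated to a normal distribution tend to understate tail losses.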

The theoretical solution requires moving beyond BSM to models that account for Jump Diffusion Processes and stochastic volatility. Standardization efforts focus on creating consensus around the inputs to these models, specifically the Volatility Surface and the Volatility Skew. The volatility surface plots implied volatility across different strike prices and maturities.

The skew, the slope of implied volatility across strikes at a given maturity, reflects the market’s expectation of downside risk. When different protocols use different methodologies to calculate the volatility surface, their risk parameters will diverge significantly, leading to inconsistencies in pricing and margin calls.
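To illustrate how methodology alone can change the measured skew, the sketch below computes a simple downside-skew number from two hypothetical smiles for the same maturity. The 90%/110% moneyness convention, the linear interpolation, and every quoted volatility are assumptions chosen for the example; real venues use a variety of conventions.

```python
from bisect import bisect_left


def interp_iv(smile, moneyness):
    """Linearly interpolate implied vol at a moneyness (strike / spot)."""
    points = sorted(smile.items())
    xs = [m for m, _ in points]
    if not xs[0] <= moneyness <= xs[-1]:
        raise ValueError("moneyness outside quoted range")
    i = bisect_left(xs, moneyness)
    if xs[i] == moneyness:
        return points[i][1]
    (x0, y0), (x1, y1) = points[i - 1], points[i]
    return y0 + (y1 - y0) * (moneyness - x0) / (x1 - x0)


def downside_skew(smile, low=0.9, high=1.1):
    """Implied vol at the low strike minus implied vol at the high strike."""
    return interp_iv(smile, low) - interp_iv(smile, high)


# Hypothetical smiles for one maturity, keyed by moneyness.
# Protocol A quotes five strikes; protocol B quotes three and interpolates.
smile_a = {0.8: 0.95, 0.9: 0.85, 1.0: 0.75, 1.1: 0.72, 1.2: 0.70}
smile_b = {0.8: 0.90, 1.0: 0.75, 1.2: 0.72}

skew_a = downside_skew(smile_a)
skew_b = downside_skew(smile_b)
```

Both protocols see the same at-the-money volatility, yet they report different skews, so any margin rule driven by skew would diverge between them.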

Quantitative Risk Metrics and Standardization

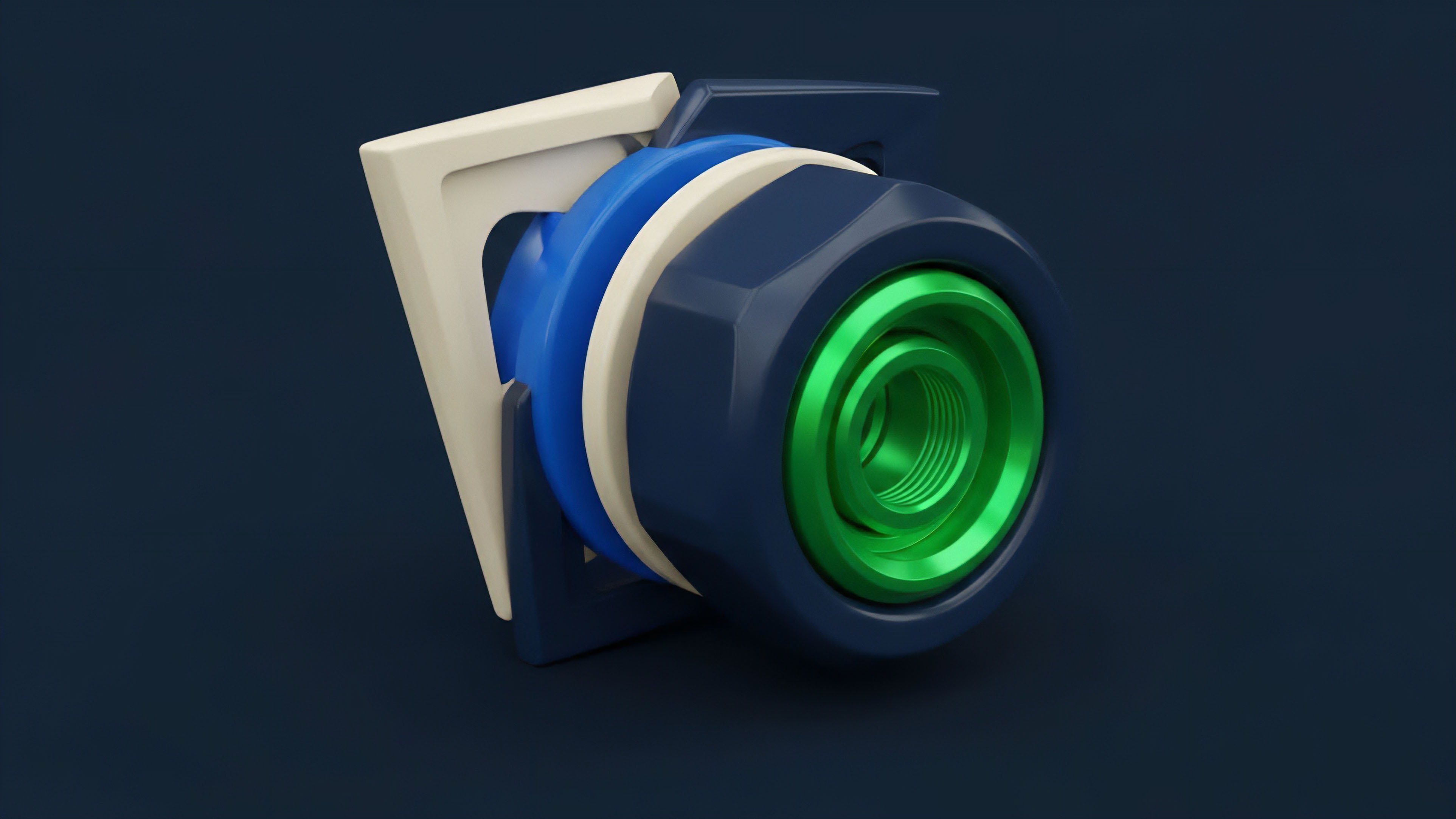

The standardization process involves aligning key risk metrics and their calculation methods. The goal is to ensure that a risk parameter like Initial Margin Requirement represents the same level of risk across protocols. This requires agreement on:

- Collateral Haircuts: The percentage reduction applied to the value of collateral to account for its volatility. A standardized approach ensures that different protocols assign similar haircuts to the same asset.

- Liquidation Thresholds: The ratio of collateral value to outstanding debt at which a position is automatically liquidated. Standardization ensures that a position is liquidated at a similar risk level across platforms, preventing competitive “race to the bottom” behavior where protocols lower thresholds to attract leverage-seeking users.

- Greeks Calculation: The sensitivities of an option’s price to changes in underlying variables, such as Delta, Gamma, and Vega. Standardization ensures that a protocol’s risk engine calculates these sensitivities using consistent methodologies, allowing for accurate risk management and hedging strategies.
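The three metrics above can be sketched in a few lines. This is a minimal illustration, not any protocol's actual risk engine: the function names are hypothetical, the liquidation check follows the collateral-to-debt definition given in the list, and the Greeks use the plain BSM formulas for a European call despite the model's known limitations for crypto assets.

```python
from math import erf, exp, log, pi, sqrt


def haircut_value(market_value, haircut):
    """Collateral value after applying a haircut in [0, 1)."""
    if not 0 <= haircut < 1:
        raise ValueError("haircut must be in [0, 1)")
    return market_value * (1 - haircut)


def is_liquidatable(collateral_value, debt, liquidation_ratio):
    """True when collateral / debt has fallen below the liquidation ratio."""
    if debt <= 0:
        return False  # nothing borrowed, nothing to liquidate
    return collateral_value / debt < liquidation_ratio


def norm_cdf(x):
    return 0.5 * (1 + erf(x / sqrt(2)))


def norm_pdf(x):
    return exp(-0.5 * x * x) / sqrt(2 * pi)


def bsm_call_greeks(spot, strike, vol, t, rate=0.0):
    """Delta, gamma, and vega of a European call under BSM.

    vol is annualized, t is time to expiry in years, and vega is
    expressed per 1.00 change in volatility.
    """
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt(t))
    return {
        "delta": norm_cdf(d1),
        "gamma": norm_pdf(d1) / (spot * vol * sqrt(t)),
        "vega": spot * norm_pdf(d1) * sqrt(t),
    }


# Hypothetical inputs: an at-the-money call, 80% vol, three months to expiry.
greeks = bsm_call_greeks(spot=2000, strike=2000, vol=0.8, t=0.25)
```

If two protocols agree on these formulas and on the inputs, the same position yields the same haircut-adjusted value, the same liquidation decision, and the same hedge ratios on both venues.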

Protocol Physics and Settlement Risk

The theoretical challenge in DeFi is further complicated by Protocol Physics, specifically the block time and transaction finality of the underlying blockchain. Liquidation mechanisms are time-sensitive; a protocol must be able to liquidate a position before the collateral value drops below the debt value. If a protocol’s risk parameters are standardized, but its underlying blockchain has high latency or variable gas fees, the theoretical risk model may fail in practice.

Standardization must therefore extend beyond pure financial theory to include operational parameters like liquidation engine design and oracle update frequency.
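One way to reason about this operational dependency is to estimate how far the price could move during the window between an oracle update and a finalized liquidation. The sketch below is a rough estimate under stated assumptions: it scales annualized volatility by the square root of time and applies a hypothetical multiplier `z`, which treats returns as normal and therefore understates the fat tails discussed earlier. The function names and all inputs are illustrative.

```python
from math import sqrt

SECONDS_PER_YEAR = 365 * 24 * 3600


def liquidation_latency_seconds(oracle_interval_s, block_time_s, confirmations):
    """Worst-case delay: a stale oracle price plus blocks needed to finalize."""
    return oracle_interval_s + block_time_s * confirmations


def price_buffer(annual_vol, latency_s, z=3.0):
    """Fractional adverse price move to budget for over the latency window."""
    return z * annual_vol * sqrt(latency_s / SECONDS_PER_YEAR)


# Hypothetical chains sharing one asset with 80% annualized volatility.
fast_chain = liquidation_latency_seconds(60, 12, 5)      # 120 seconds
slow_chain = liquidation_latency_seconds(600, 600, 6)    # 4200 seconds

fast_buffer = price_buffer(0.8, fast_chain)
slow_buffer = price_buffer(0.8, slow_chain)
```

Under these assumptions the slower chain needs a noticeably larger safety buffer for the same asset, which is why identical financial parameters do not imply identical risk across chains.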

Approach

The current approach to risk parameter standardization is fragmented, with different protocols employing various methods to set and manage risk. This section outlines the primary approaches and the challenges inherent in each.

Decentralized Autonomous Organizations (DAOs) and Governance

Many protocols rely on DAO Governance to set and adjust risk parameters. This involves community members voting on proposals to change collateral factors, interest rate models, and liquidation thresholds. While decentralized, this approach is often slow, reactive, and prone to political or behavioral biases.

The process of proposing, debating, and implementing changes can take days or weeks, making it difficult to respond quickly to sudden market volatility. Furthermore, a protocol’s risk parameters can become subject to “governance attacks” where a large token holder votes for parameters that benefit their specific position at the expense of overall protocol health.

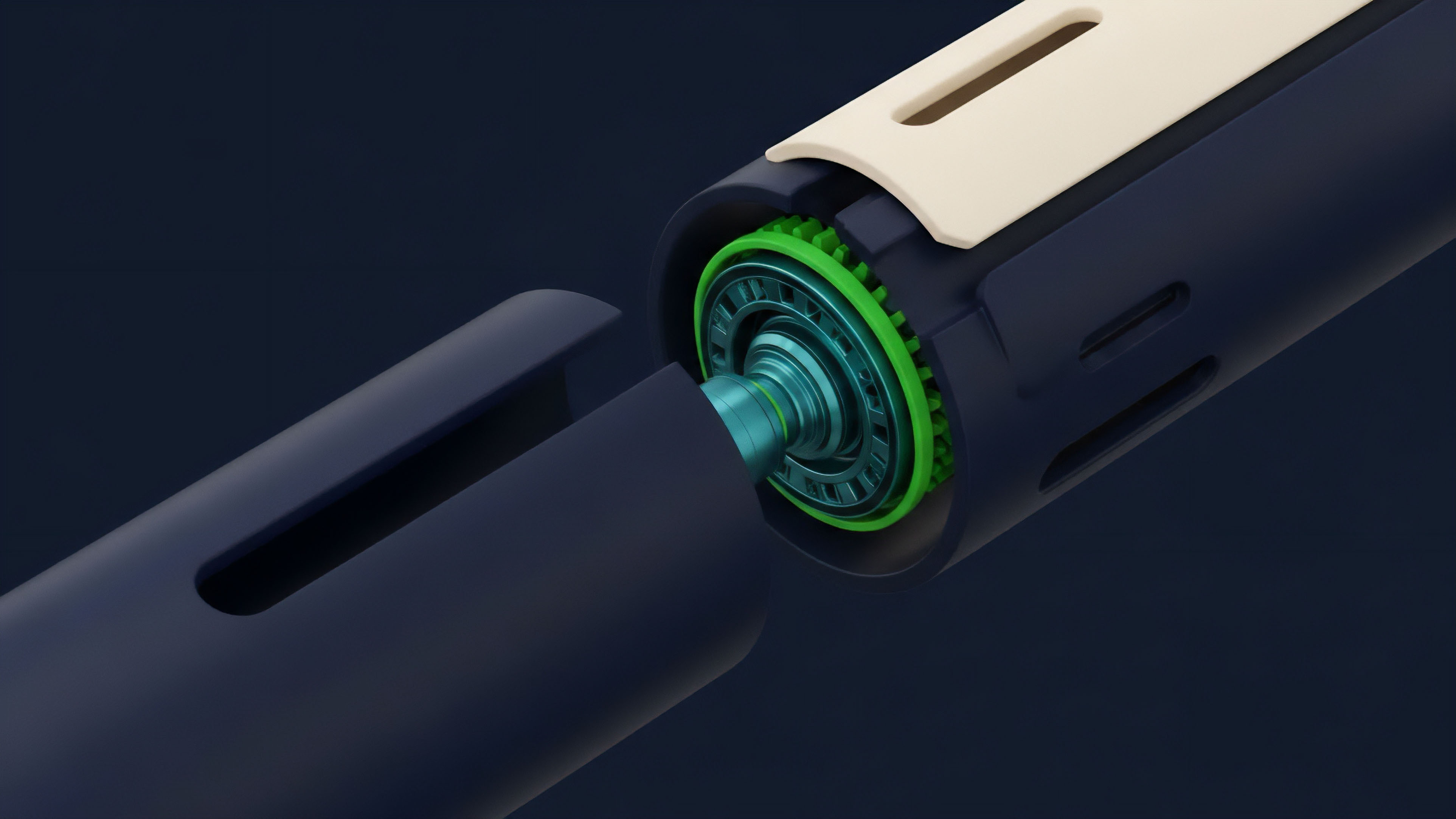

Algorithmic Risk Engines and Automated Systems

An alternative approach involves Algorithmic Risk Engines that dynamically adjust parameters based on market data. These systems use machine learning models or predefined formulas to automatically update parameters like collateral factors in response to changes in volatility, liquidity, and asset correlation. While faster and more objective than human governance, these engines are only as good as their underlying models.

If a model fails to account for a novel market event, it can lead to large liquidations. Standardization efforts here focus on creating shared, open-source models that protocols can adopt, rather than each building a proprietary system.
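A predefined-formula engine of the kind described above might look like the following. This is a deliberately simple sketch, not a model used by any named protocol: the rule (one minus a volatility-scaled expected drop, clamped to a floor and cap), the default horizon, and the multiplier `z` are all assumptions for illustration.

```python
from math import log, sqrt
from statistics import pstdev


def realized_vol(prices, periods_per_year=365):
    """Annualized volatility from a series of evenly spaced prices."""
    if len(prices) < 2:
        raise ValueError("need at least two prices")
    rets = [log(b / a) for a, b in zip(prices, prices[1:])]
    return pstdev(rets) * sqrt(periods_per_year)


def collateral_factor(annual_vol, horizon_days=1.0, z=3.0, floor=0.0, cap=0.9):
    """Share of collateral value that may be borrowed against.

    Falls as volatility rises, so the allowance shrinks automatically
    when markets become more turbulent.
    """
    expected_drop = z * annual_vol * sqrt(horizon_days / 365)
    return max(floor, min(cap, 1.0 - expected_drop))


# Hypothetical daily closes for a quiet and a turbulent asset.
quiet = [100, 101, 100, 101, 100, 101]
turbulent = [100, 120, 95, 125, 90, 130]

quiet_cf = collateral_factor(realized_vol(quiet))
turbulent_cf = collateral_factor(realized_vol(turbulent))
```

Because the rule is a pure function of public price data, any protocol running the same open-source code on the same feed would arrive at the same collateral factor.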

Risk Committee Frameworks and Data Oracles

A hybrid approach involves creating specialized Risk Committees composed of financial experts and quantitative analysts. These committees propose parameter changes based on data-driven analysis, and the DAO votes on their recommendations. This balances expert insight with decentralized governance.

The committee’s recommendations often rely on Risk Oracles that provide standardized data feeds. The goal is to standardize the data inputs and the analytical frameworks used by these committees.

| Methodology | Primary Decision Maker | Response Time | Risk of Bias |
|---|---|---|---|
| DAO Governance | Token Holders | Slow (Days/Weeks) | Political/Behavioral Bias |
| Algorithmic Engine | Smart Contract Logic | Fast (Minutes/Hours) | Model Risk/Black Box Risk |
| Risk Committee Hybrid | Experts/Token Holders | Medium (Hours/Days) | Expert Bias/Information Asymmetry |

Evolution

The evolution of risk parameter standardization has progressed from isolated, proprietary systems toward a collaborative, data-driven framework. Initially, protocols competed by offering the most aggressive risk parameters, resulting in a race to the bottom that maximized short-term yield but created long-term instability. The market has since learned from high-profile failures, leading to a shift toward shared risk frameworks.

The current stage of evolution focuses on Cross-Protocol Standardization Initiatives. These initiatives aim to define a common set of risk parameters that protocols can voluntarily adopt. The key challenge here is the behavioral game theory involved: convincing protocols to cede a competitive advantage (loose parameters) for the collective benefit of systemic stability.

The long-term success of standardization depends on a critical mass of protocols agreeing to use a shared risk model.

From Reactive to Proactive Risk Management

Early protocols were reactive; they adjusted parameters after a market event occurred. The evolution toward standardization allows for proactive risk management. By aligning parameters, protocols can anticipate and mitigate systemic risk before it materializes.

This allows for the development of Automated Risk Arbitrage, where market makers can profit from inconsistencies in risk parameters between different platforms, incentivizing protocols to converge on a standardized model.

Standardization efforts have shifted from isolated, proprietary risk engines to collaborative frameworks that prioritize systemic stability over short-term competitive advantage.

The Role of Data Providers and Risk Modeling Services

The evolution of standardization is heavily reliant on external data providers and risk modeling services. These services provide protocols with objective data on asset volatility, correlation, and liquidity. They act as neutral third parties, offering standardized inputs that protocols can use to set their parameters.

This reduces the reliance on internal governance processes and provides a more robust, objective foundation for risk assessment.

Horizon

The horizon for risk parameter standardization involves a move toward truly interoperable, systemic risk management. The future of decentralized finance depends on creating a resilient infrastructure that can withstand extreme market conditions.

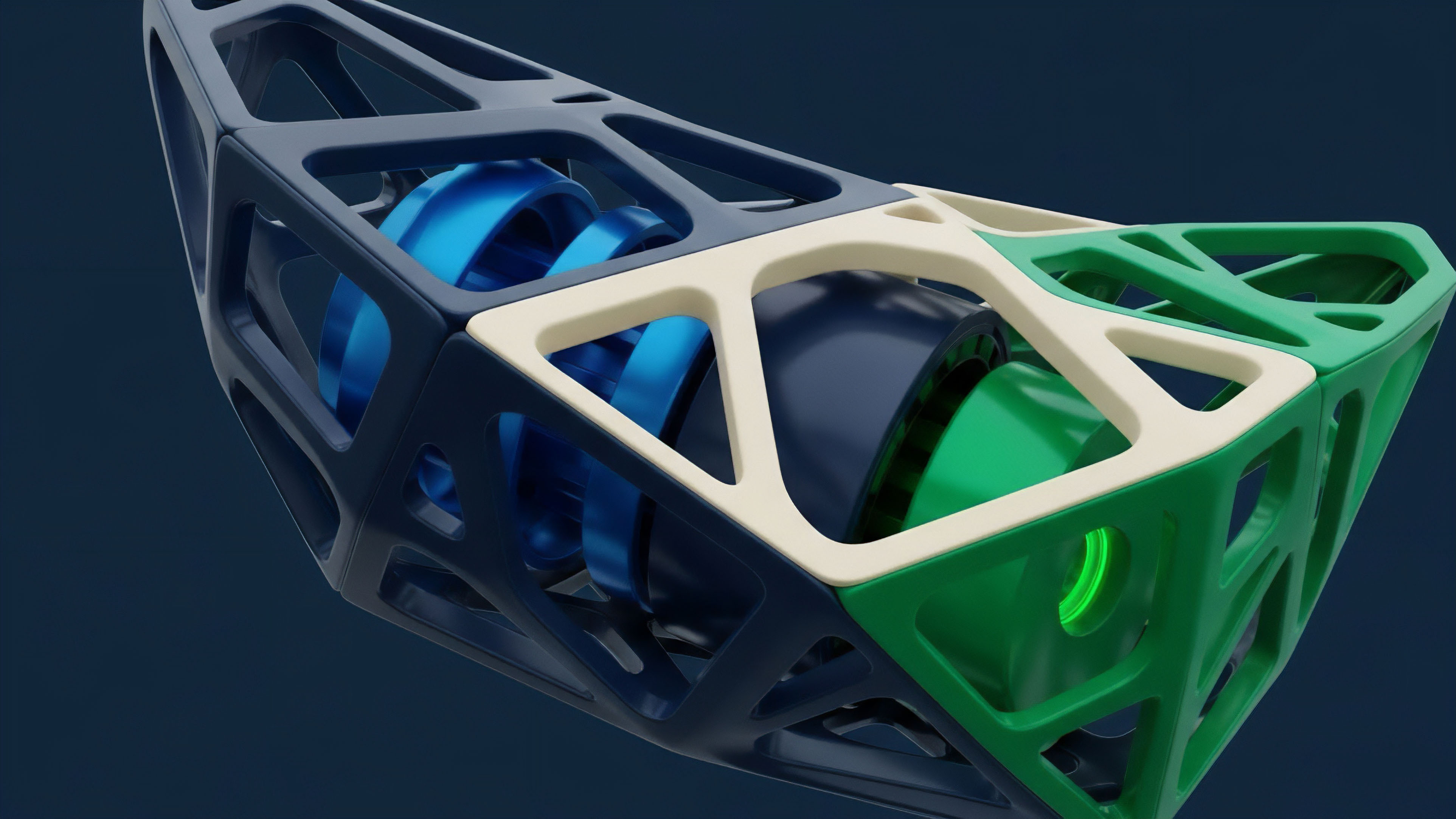

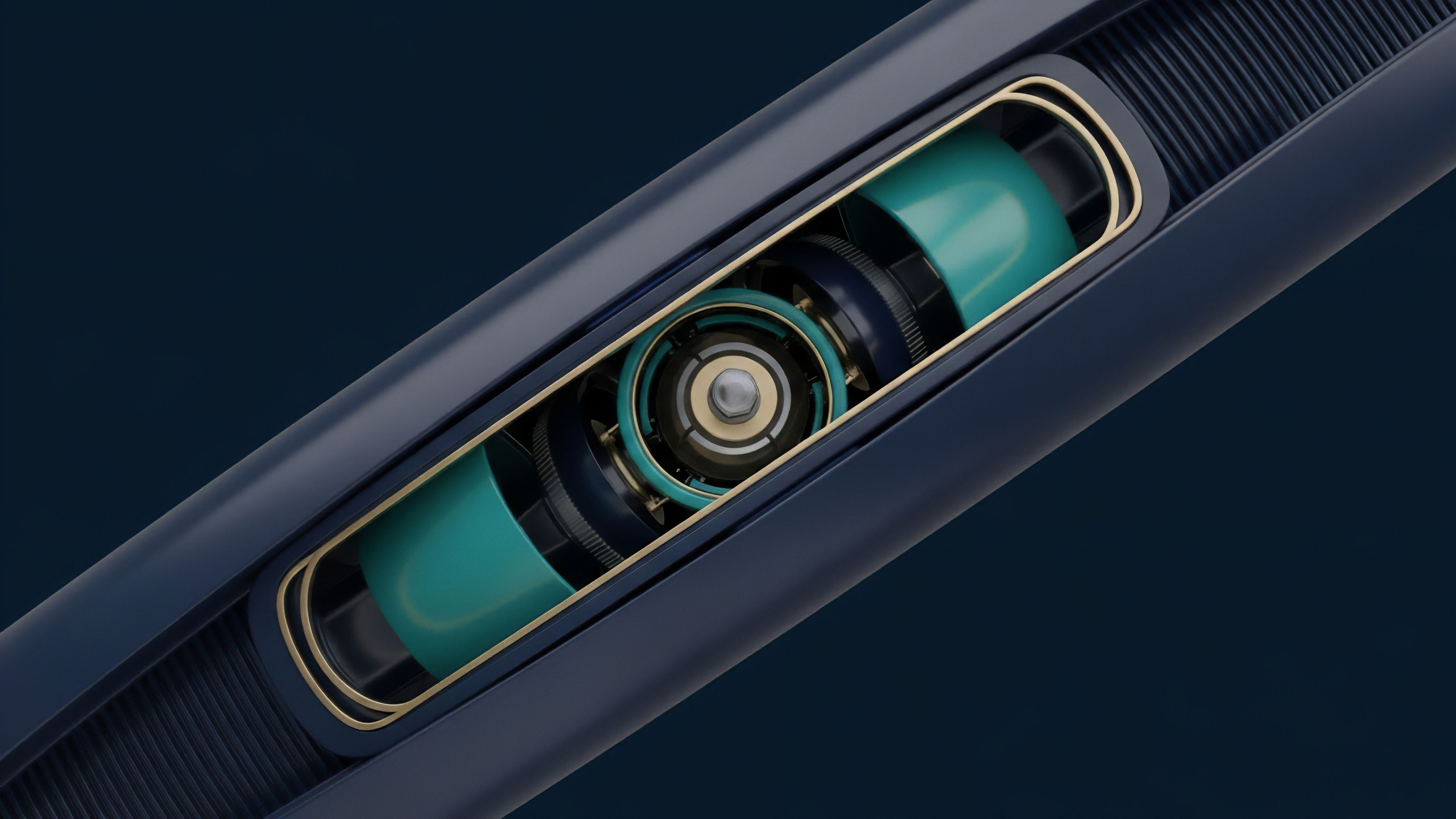

Standardization is the foundation upon which this infrastructure will be built. The next phase will likely see the development of Standardized Risk Primitives. These primitives will be a set of smart contracts that define risk parameters for different assets and market conditions.

Protocols will be able to plug into these primitives, automatically adopting a standardized risk model without needing to implement their own. This creates a “Risk-as-a-Service” model where protocols can focus on their core product offerings while outsourcing risk management to a standardized, community-vetted system.
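The plug-in relationship can be sketched with an in-memory stand-in for the shared contract. Everything here is hypothetical: `RiskParams`, `RiskPrimitive`, and `LendingProtocol` are illustrative names, and a real primitive would live on-chain with governance and access control rather than in a Python dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskParams:
    haircut: float            # fraction of collateral value ignored
    liquidation_ratio: float  # minimum collateral / debt before liquidation


class RiskPrimitive:
    """In-memory stand-in for a shared, community-vetted parameter source."""

    def __init__(self):
        self._params = {}

    def publish(self, asset, params):
        if not 0 <= params.haircut < 1 or params.liquidation_ratio <= 1:
            raise ValueError("implausible risk parameters")
        self._params[asset] = params

    def params_for(self, asset):
        return self._params[asset]


class LendingProtocol:
    """A protocol that outsources its parameters instead of defining them."""

    def __init__(self, name, primitive):
        self.name = name
        self.primitive = primitive

    def max_borrow(self, asset, collateral_value):
        p = self.primitive.params_for(asset)
        return collateral_value * (1 - p.haircut) / p.liquidation_ratio


shared = RiskPrimitive()
shared.publish("ETH", RiskParams(haircut=0.2, liquidation_ratio=1.5))

protocol_a = LendingProtocol("A", shared)
protocol_b = LendingProtocol("B", shared)
```

Both protocols return the same borrowing limit for the same collateral, and a single `publish` call updates them together, which is the property the Risk-as-a-Service model relies on.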

Systemic Contagion Mitigation and Standardized Stress Testing

Standardization will enable new forms of Systemic Stress Testing. By aligning risk parameters, a market-wide simulation can be run to determine the impact of a large price shock on all participating protocols simultaneously. This allows protocols to proactively adjust their parameters to mitigate potential contagion.

This shift from individual protocol risk management to market-wide risk management is critical for the long-term viability of decentralized finance.
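A market-wide simulation of this kind can be sketched as a loop: apply the shock, liquidate every position that falls below the shared threshold, let the sold collateral push the price down, and repeat until no further positions fail. The linear price-impact rule, the `Position` type, and all figures below are assumptions made for illustration; a production stress test would need order-book depth and per-protocol liquidation mechanics.

```python
from dataclasses import dataclass


@dataclass
class Position:
    protocol: str
    collateral_units: float
    debt: float


def stress_test(positions, price, shock, liquidation_ratio,
                impact_per_unit=0.0, max_rounds=50):
    """Return (final_price, liquidated_positions) after a shock and cascade."""
    price = price * (1 - shock)
    live, liquidated = list(positions), []
    for _ in range(max_rounds):
        hit, safe = [], []
        for p in live:
            under = p.debt > 0 and p.collateral_units * price / p.debt < liquidation_ratio
            (hit if under else safe).append(p)
        if not hit:
            break
        # Collateral sold in this round depresses the price for the next round.
        sold = sum(p.collateral_units for p in hit)
        price *= max(0.0, 1 - impact_per_unit * sold)
        live = safe
        liquidated.extend(hit)
    return price, liquidated


# Hypothetical book: one position on each of two protocols, same asset.
book = [Position("A", 10, 10_000), Position("B", 10, 8_000)]

isolated_price, isolated = stress_test(book, 2000, 0.30, 1.5)
cascade_price, cascade = stress_test(book, 2000, 0.30, 1.5, impact_per_unit=0.02)
```

With no price impact only the position on protocol A fails; once liquidation sales are allowed to move the price, the position on protocol B fails as well, which is the contagion channel the stress test is meant to surface.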

New Financial Products and Risk Arbitrage

A standardized risk environment will unlock new financial products that are currently too complex or risky to build. For example, Standardized Credit Default Swaps (CDS) could be created on the solvency of protocols. If risk parameters are standardized, the probability of protocol insolvency can be modeled more accurately, allowing for a liquid market in risk transfer. This allows for more sophisticated risk management strategies and attracts institutional capital seeking predictable risk exposure. The future state of a standardized system will look less like a collection of isolated islands and more like a single, interconnected continent.
