Cryptographic Solvency Assurance

Risk Calculation Verification represents the transition from reputational trust to computational certainty within decentralized finance. In legacy markets, the assessment of counterparty risk remains a black box, managed by clearinghouses that rely on non-public data and human discretion. This protocol-level verification replaces those opaque structures with a transparent, mathematical audit of every participant’s ability to meet their obligations.

By embedding risk parameters directly into the execution layer, the system ensures that solvency is a provable state rather than a promise. The systemic relevance of this verification lies in its ability to prevent the cascading failures seen in traditional over-the-counter markets. When volatility spikes, the Risk Calculation Verification engine acts as a neutral arbiter, triggering liquidations or margin calls based on immutable logic.

This creates a market environment where the architecture itself enforces financial stability, removing the need for a central lender of last resort.

Risk Calculation Verification serves as the automated ledger of protocol solvency by validating collateral adequacy against real-time market liabilities.

The focus shifts from monitoring participants to auditing the code that governs them. This shift is vital for the scaling of decentralized derivatives, as it allows for the creation of complex instruments without introducing the systemic fragility inherent in centralized systems. The protocol does not rely on the goodwill of a broker; it relies on the verifiable integrity of its margin engine.

Evolution of Trustless Settlement

The genesis of Risk Calculation Verification lies in the post-2008 demand for real-time transparency in financial settlement. The crisis showed how traditional finance could hide the true extent of leverage and risk within complex, off-balance-sheet vehicles. Blockchain technology provided the tools to move these calculations into the public domain, allowing for a Deterministic Risk Model that operates without intermediaries.

Early decentralized protocols lacked the computational efficiency to perform complex risk audits on-chain. They relied on high collateralization ratios to buffer against uncertainty. As the technical architecture matured, the need for capital efficiency drove the development of more sophisticated verification methods.

This allowed protocols to offer Crypto Options and other derivatives with lower margin requirements while maintaining a high degree of safety.

| Feature | Traditional Risk Management | Risk Calculation Verification |
|---|---|---|
| Transparency | Opaque, proprietary models | Public, verifiable code |
| Settlement Speed | T+2 or T+1 cycles | Near-instantaneous on-chain |
| Counterparty Risk | Managed by clearinghouses | Eliminated via smart contracts |
| Data Source | Internal bank databases | Decentralized price oracles |

The shift toward verifiable risk computation eliminates the information asymmetry that historically led to systemic financial contagion.

The transition was accelerated by the rise of Automated Market Makers and the subsequent demand for professional-grade hedging tools. Traders required a guarantee that their gains would be paid out even during extreme tail-risk events. Risk Calculation Verification provided this guarantee by making the solvency of the liquidity pool visible to all participants at all times.

Mathematical Verification Protocols

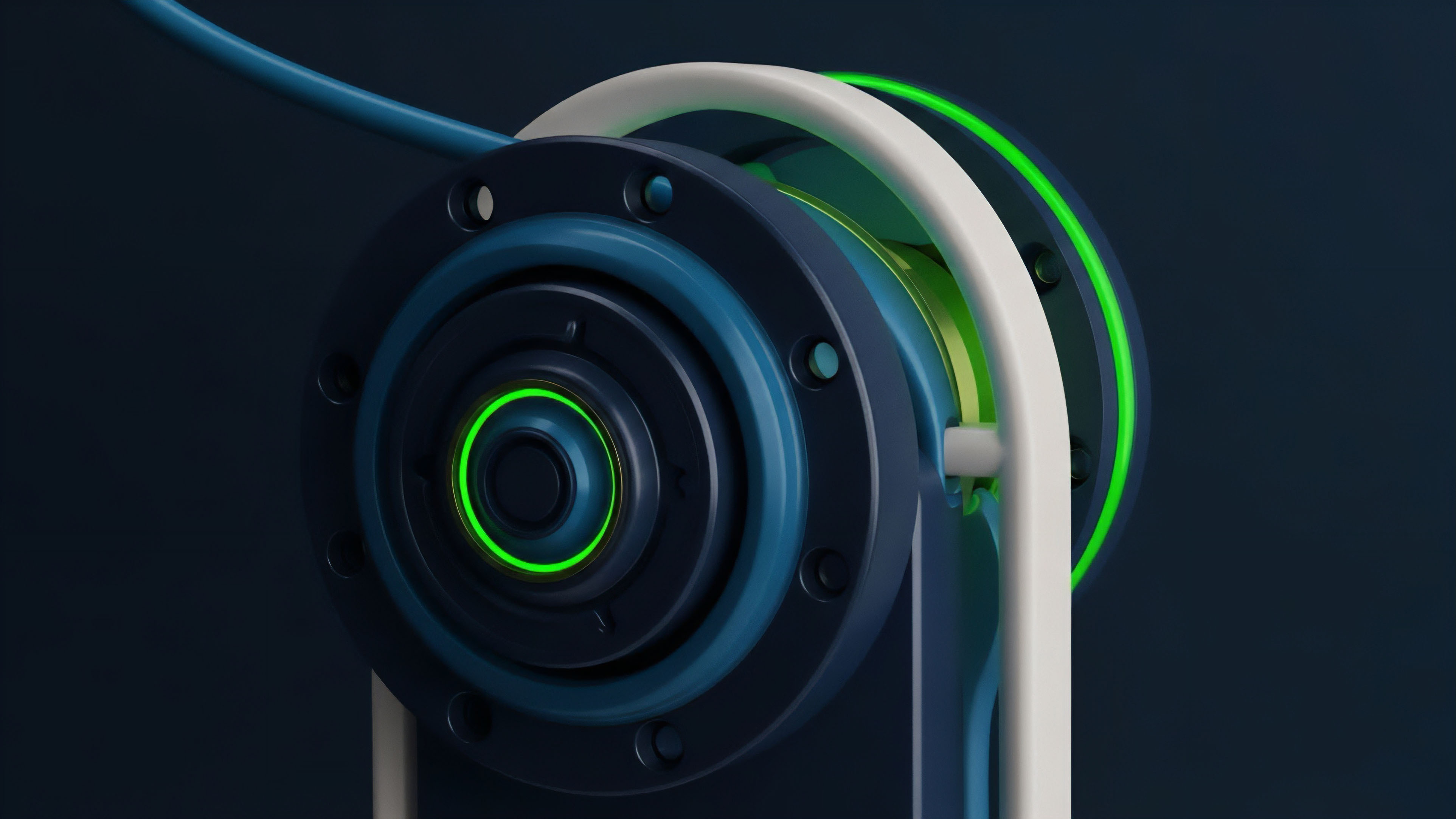

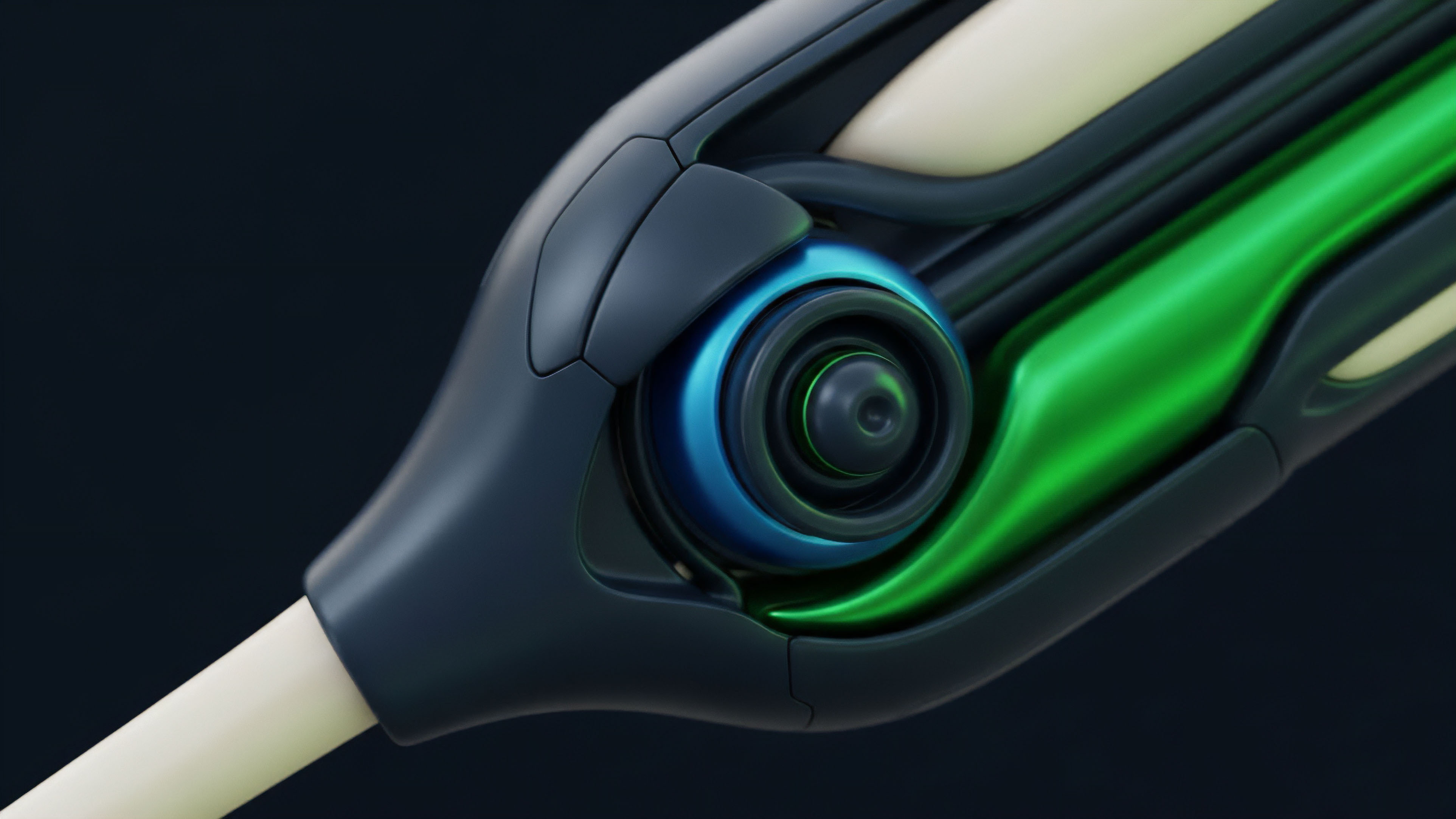

At the theoretical level, Risk Calculation Verification is the continuous application of Quantitative Finance models to a distributed ledger. It involves the calculation of Option Greeks (specifically Delta, Gamma, and Vega) to determine the sensitivity of a portfolio to price and volatility shifts. The verification process ensures that the margin held by the protocol is always greater than or equal to the Value at Risk (VaR) calculated by these models.
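As a minimal sketch of the margin check described above, the following Python approximates portfolio P&L with a second-order Taylor expansion in Delta, Gamma, and Vega, then compares posted margin against the worst loss over a small set of shocks. The shock set is a simplified stand-in for a full VaR model, and the names `Greeks`, `approx_pnl`, `worst_case_loss`, and `margin_is_sufficient` are illustrative assumptions rather than any protocol's actual interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Greeks:
    """Aggregate portfolio sensitivities (hypothetical container)."""
    delta: float  # value change per unit move in the underlying price
    gamma: float  # delta change per unit move in the underlying price
    vega: float   # value change per unit (absolute) move in implied volatility


def approx_pnl(g: Greeks, d_price: float, d_vol: float) -> float:
    """Second-order Taylor approximation of P&L for a price and volatility shock."""
    return g.delta * d_price + 0.5 * g.gamma * d_price ** 2 + g.vega * d_vol


def worst_case_loss(g: Greeks, shocks: list[tuple[float, float]]) -> float:
    """Largest approximated loss over the shock set, floored at zero."""
    return max(0.0, max(-approx_pnl(g, dp, dv) for dp, dv in shocks))


def margin_is_sufficient(margin: float, g: Greeks, shocks: list[tuple[float, float]]) -> bool:
    """The invariant from the text: margin >= modeled worst-case loss."""
    return margin >= worst_case_loss(g, shocks)


book = Greeks(delta=2.0, gamma=-0.5, vega=40.0)
shocks = [(-10.0, 0.25), (10.0, -0.25)]  # (price move, volatility move), assumed scenarios
print(worst_case_loss(book, shocks))             # 35.0
print(margin_is_sufficient(35.0, book, shocks))  # True
```

A production engine would replace the hand-picked shocks with a calibrated loss distribution, but the invariant being verified keeps the same shape.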

The protocol must account for the Volatility Smile and the skew of the market to price risk accurately. Unlike static models, Risk Calculation Verification must be fluid, adjusting its requirements as market conditions change. This requires a robust connection between the Smart Contract and high-frequency price feeds.

- Delta Neutrality Verification ensures that the aggregate exposure of the protocol remains balanced against market movements.

- Gamma Scalping Audits validate that liquidity providers are properly compensated for the risks of rapid price changes.

- Vega Sensitivity Checks monitor the impact of implied volatility shifts on the total collateral pool.

- Margin Haircut Validation applies mathematical discounts to volatile collateral to maintain a safety buffer.
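The haircut check in the last item can be sketched in a few lines. This is a hedged illustration: the function names, asset symbols, and haircut levels are assumptions chosen for the example, not values taken from any specific protocol.

```python
def haircut_value(
    holdings: dict[str, float],
    prices: dict[str, float],
    haircuts: dict[str, float],
) -> float:
    """Collateral value after discounting each asset by its haircut."""
    total = 0.0
    for asset, amount in holdings.items():
        haircut = haircuts[asset]
        if not 0.0 <= haircut < 1.0:
            raise ValueError(f"haircut for {asset} must be in [0, 1)")
        total += amount * prices[asset] * (1.0 - haircut)
    return total


def collateral_is_adequate(discounted_value: float, liabilities: float) -> bool:
    """Solvency condition: discounted collateral must cover liabilities."""
    return discounted_value >= liabilities


holdings = {"USDC": 1000.0, "ETH": 2.0}
prices = {"USDC": 1.0, "ETH": 2000.0}    # assumed oracle prices
haircuts = {"USDC": 0.0, "ETH": 0.25}    # assumed: volatile collateral is discounted 25%

value = haircut_value(holdings, prices, haircuts)
print(value)                                  # 4000.0
print(collateral_is_adequate(value, 4500.0))  # False
```

Without the haircut the same holdings would be worth 5000 and would appear to cover the 4500 liability, which is exactly the buffer the discount is meant to enforce.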

Quantitative models embedded in smart contracts provide a mathematical ceiling on the maximum allowable leverage within a derivative protocol.

The logic of the system is designed to be adversarial. It assumes that market participants will seek to maximize their gearing and that price movements will be violent. Therefore, the Risk Calculation Verification must be more resilient than the market it monitors.

It uses Stress Testing parameters to simulate extreme scenarios, ensuring the protocol remains solvent even when liquidity dries up.
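One way to picture such a stress test is to replay a collateralized position through a list of shock scenarios, each pairing a price move with a liquidation slippage that models thin liquidity. The sketch below is an assumption-laden illustration: the scenario values and the names `stressed_equity` and `failing_scenarios` are hypothetical.

```python
def stressed_equity(
    collateral_units: float,
    price: float,
    debt: float,
    price_shock: float,
    slippage: float,
) -> float:
    """Equity left after a price shock, selling collateral at a slippage discount."""
    stressed_price = price * (1.0 + price_shock)
    liquidation_value = collateral_units * stressed_price * (1.0 - slippage)
    return liquidation_value - debt


def failing_scenarios(
    collateral_units: float,
    price: float,
    debt: float,
    scenarios: list[tuple[float, float]],
) -> list[tuple[float, float]]:
    """Scenarios (price_shock, slippage) under which equity turns negative."""
    return [
        (shock, slip)
        for shock, slip in scenarios
        if stressed_equity(collateral_units, price, debt, shock, slip) < 0.0
    ]


# Assumed scenarios: a 25% drop, a 50% drop, and a 50% drop with 25% slippage.
scenarios = [(-0.25, 0.0), (-0.5, 0.0), (-0.5, 0.25)]
print(failing_scenarios(10.0, 100.0, 500.0, scenarios))  # [(-0.5, 0.25)]
```

The position survives a 50% price drop on its own and only fails once illiquidity is layered on top, which is the case the text singles out.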

Execution of Real Time Audits

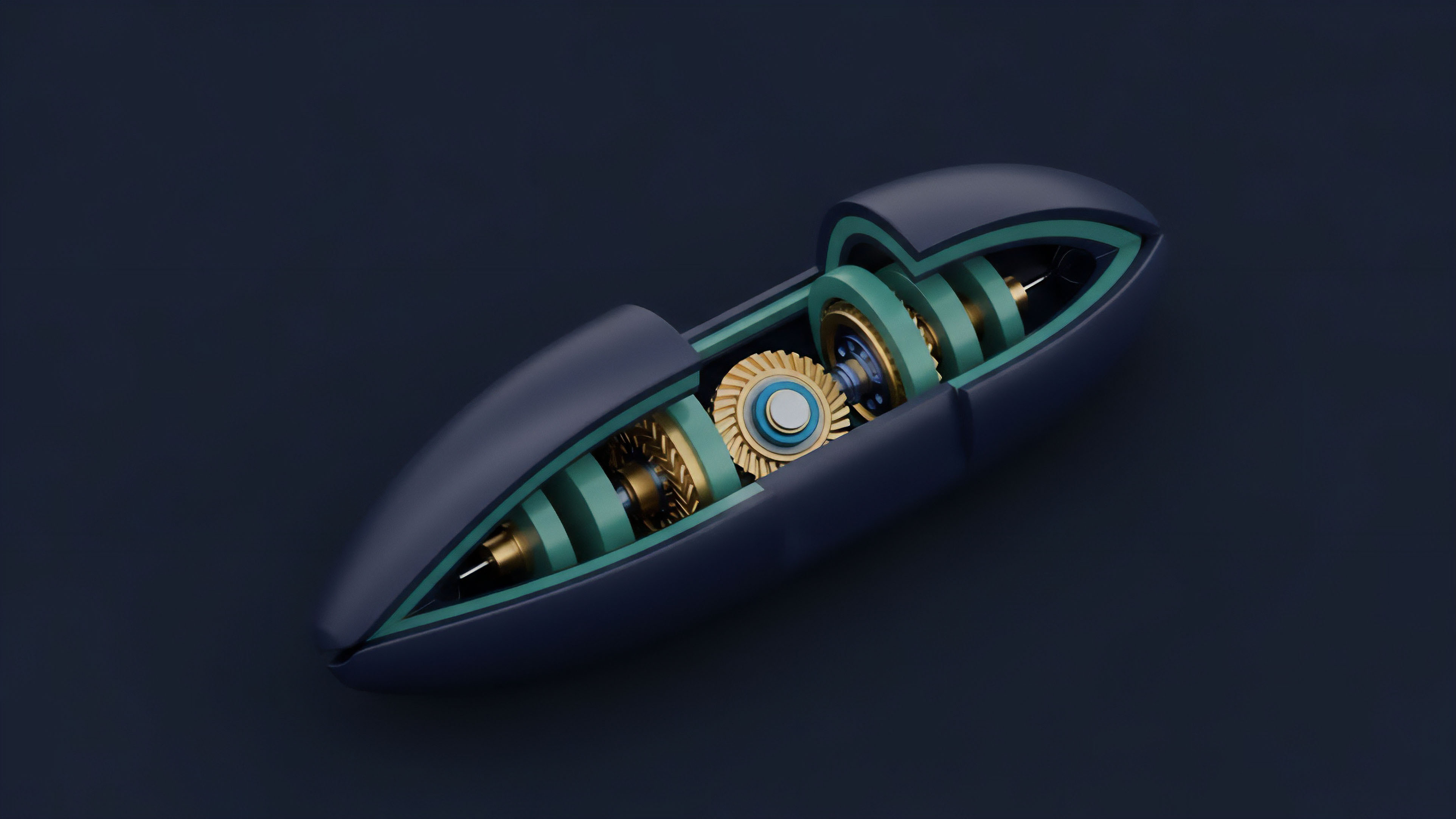

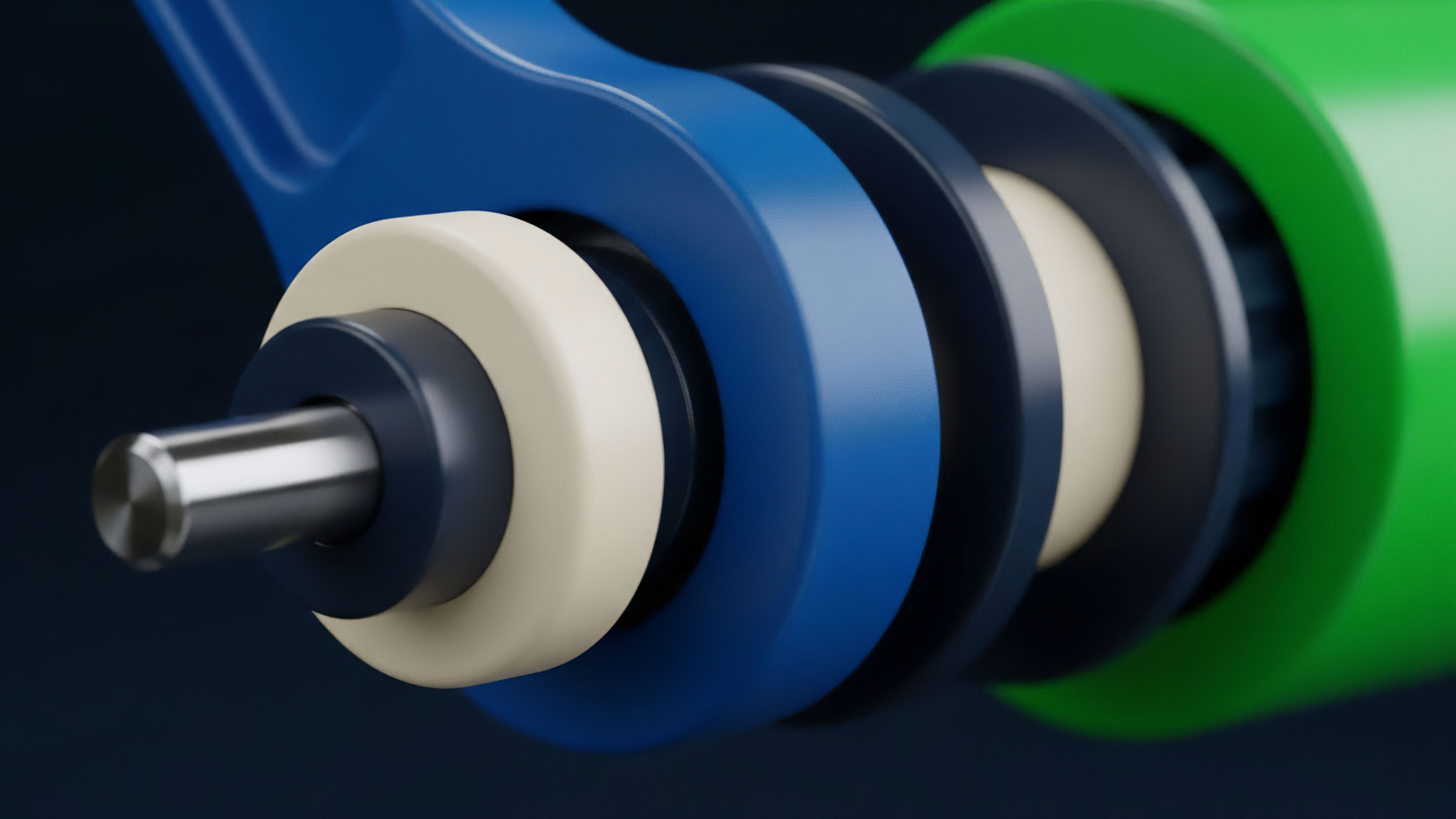

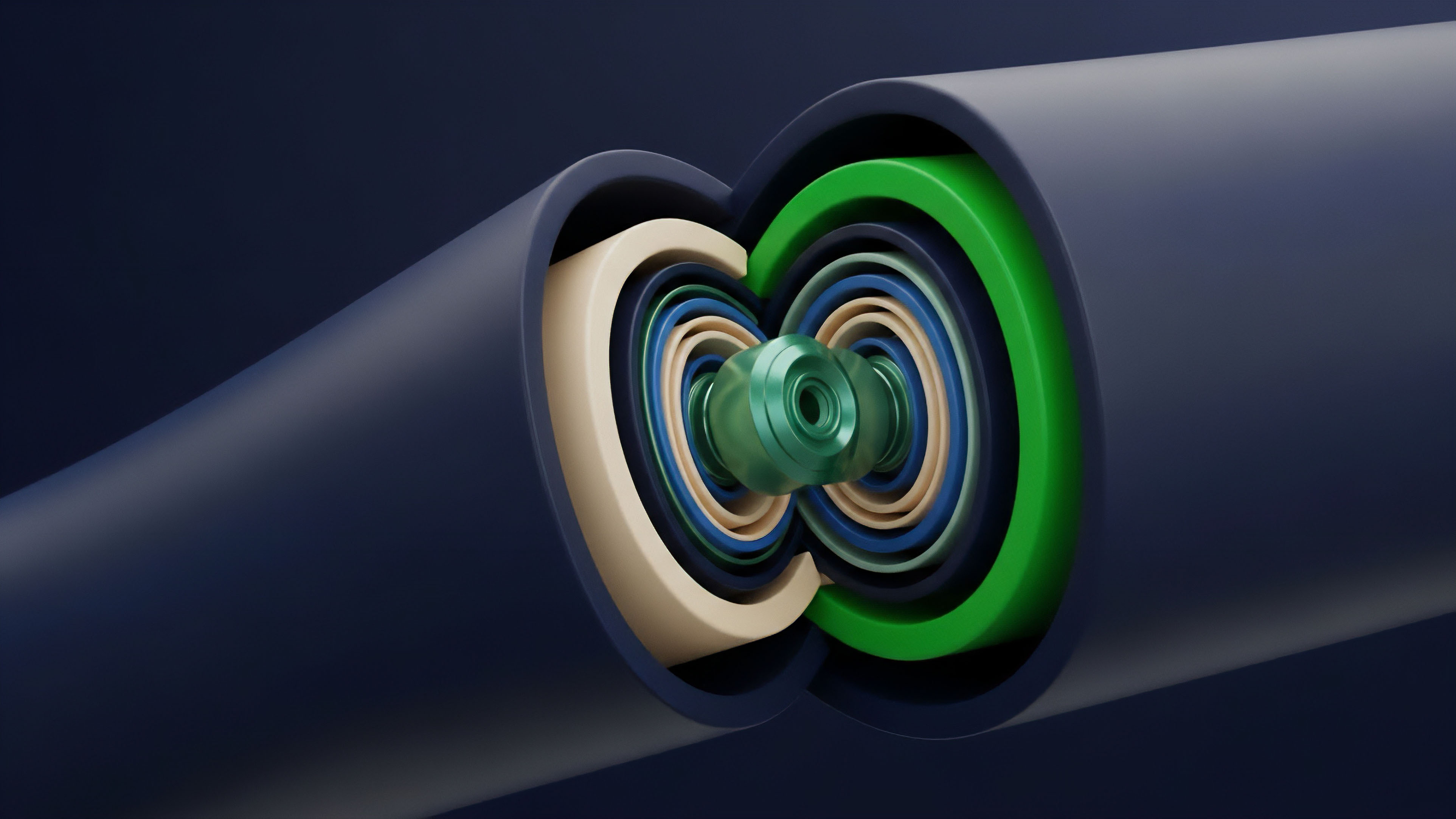

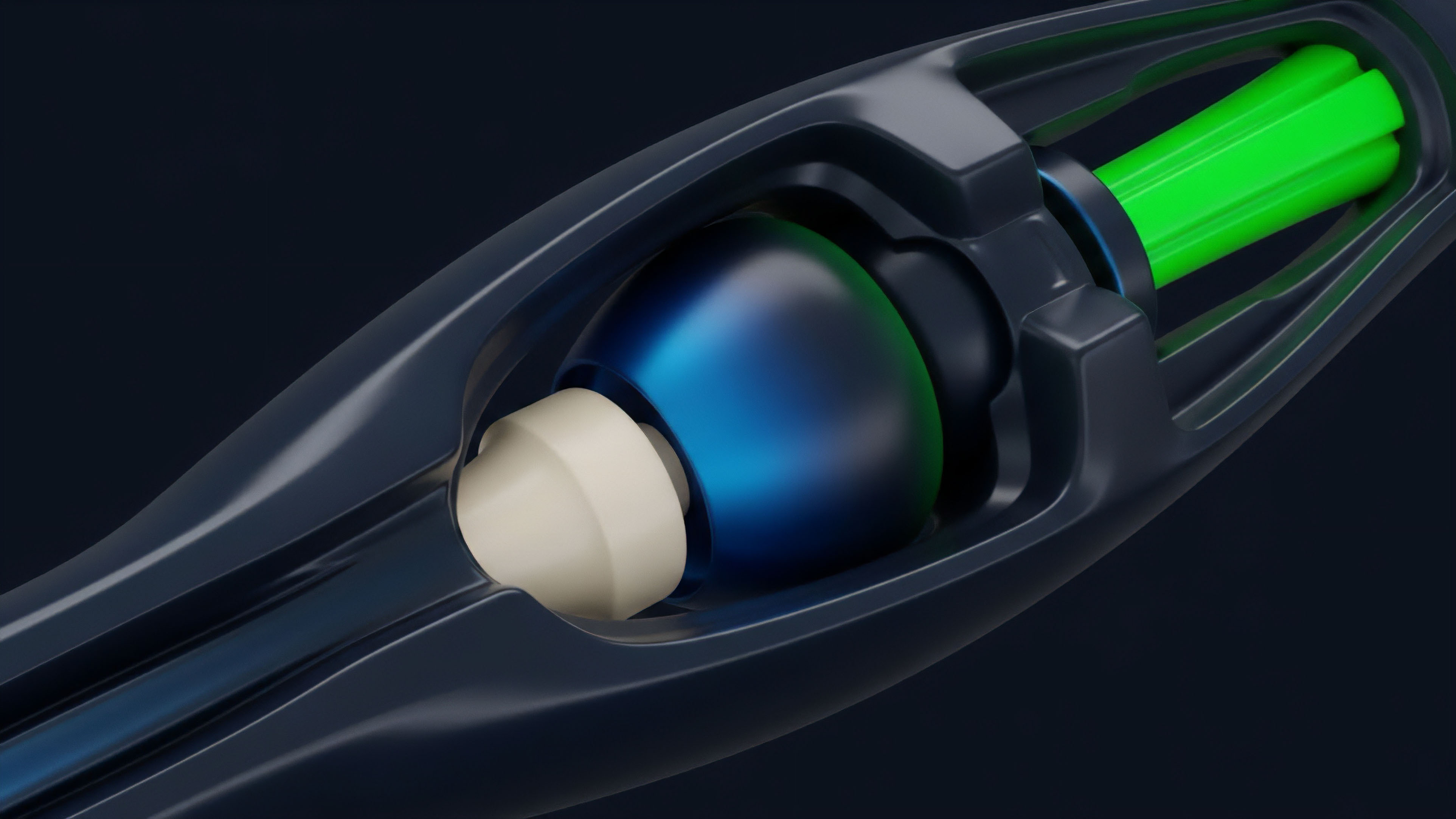

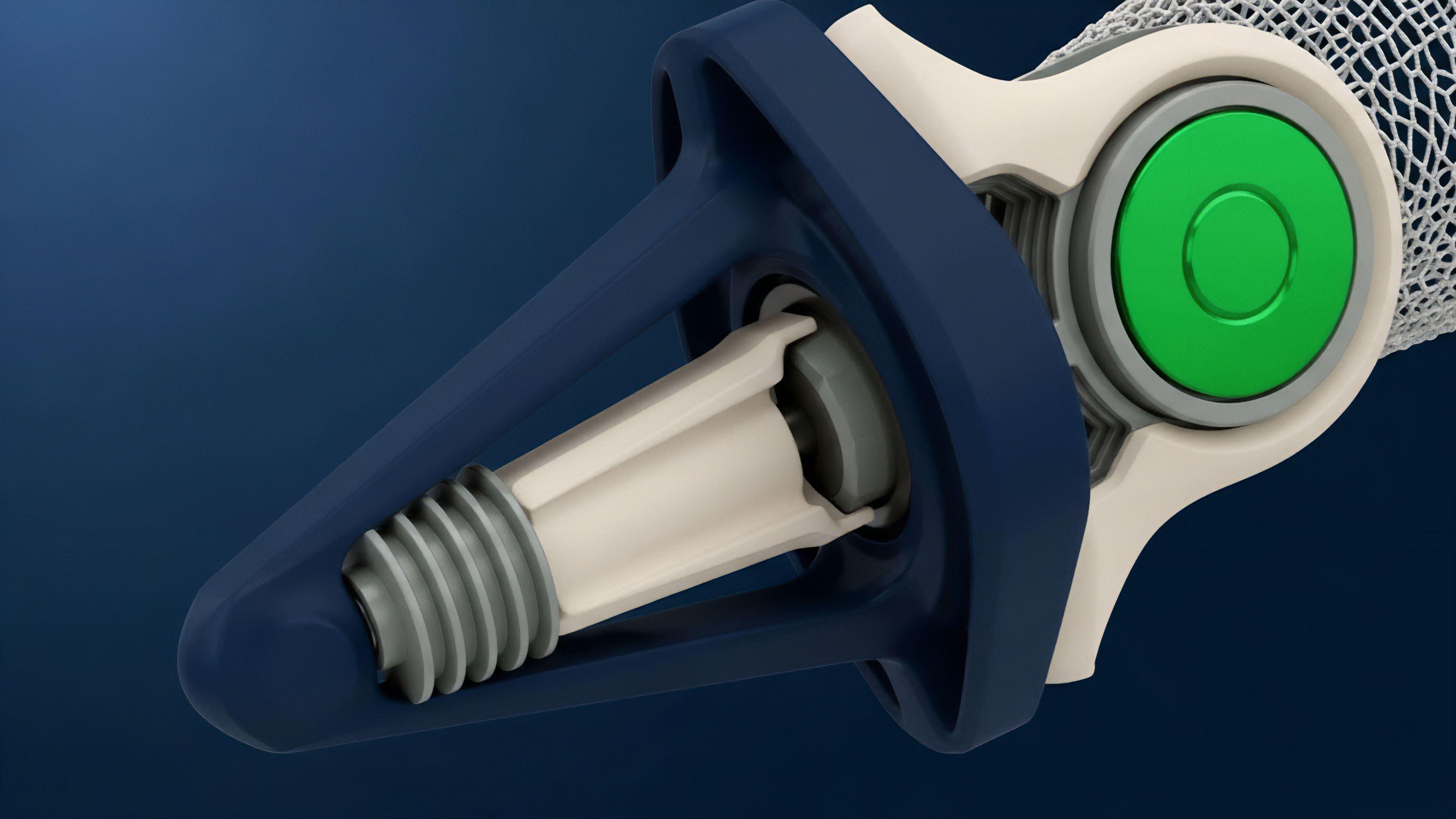

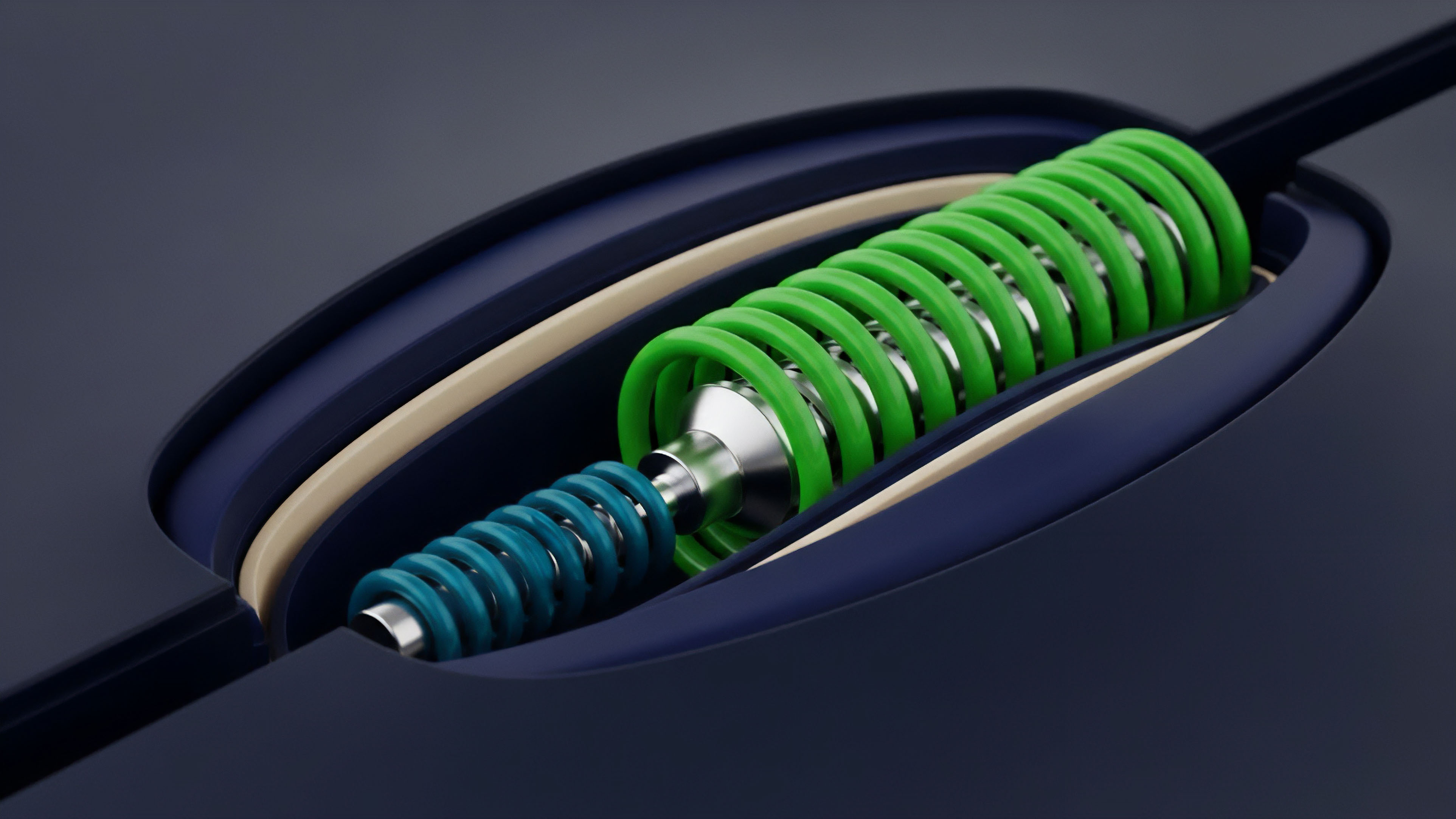

The current implementation of Risk Calculation Verification relies on a multi-layered architecture that combines on-chain logic with off-chain computation. High-performance Risk Engines calculate the necessary margin requirements off-chain to save on gas costs, while the Smart Contract verifies these calculations before executing any trade or withdrawal.

This hybrid method allows for the speed of centralized exchanges with the security of decentralized settlement. Verification is performed through a series of checks that occur before a transaction is finalized. If the proposed trade would put the user or the protocol at risk, the Risk Calculation Verification engine rejects the transaction.

This proactive approach prevents the accumulation of “toxic” debt within the system.
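The pre-finalization check can be sketched as a function that recomputes the margin requirement claimed by the off-chain engine and rejects the trade if either the claim or the collateral falls short. This is a simplified model written in Python rather than contract code; the flat `margin_rate`, the `Account` shape, and the function name `check_trade` are assumptions, and the sketch ignores offsetting between positions.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    collateral: float  # posted collateral, in quote currency
    notional: float    # absolute open exposure, in quote currency


def check_trade(
    account: Account,
    trade_notional: float,
    claimed_margin: float,
    margin_rate: float = 0.125,  # assumed flat initial margin rate
) -> tuple[bool, str]:
    """Verify an off-chain margin claim before the trade is finalized."""
    post_notional = account.notional + abs(trade_notional)
    required = post_notional * margin_rate
    # Step 1: the settlement layer recomputes the requirement independently.
    if not math.isclose(claimed_margin, required, rel_tol=1e-9):
        return False, "claimed margin does not match recomputation"
    # Step 2: the post-trade state must remain fully margined.
    if account.collateral < required:
        return False, "insufficient collateral"
    return True, "ok"


acct = Account(collateral=100.0, notional=400.0)
print(check_trade(acct, 400.0, claimed_margin=100.0))  # (True, 'ok')
print(check_trade(acct, 480.0, claimed_margin=110.0))  # (False, 'insufficient collateral')
```

Because the rejection happens before state changes, an under-margined position is never created in the first place.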

| Verification Layer | Primary Function | Technical Mechanism |
|---|---|---|
| Oracle Layer | Price and Volatility Data | Decentralized Data Feeds |
| Computation Layer | Margin Requirement Logic | Off-chain Solvers / ZK-Proofs |
| Settlement Layer | Collateral Enforcement | On-chain Smart Contracts |

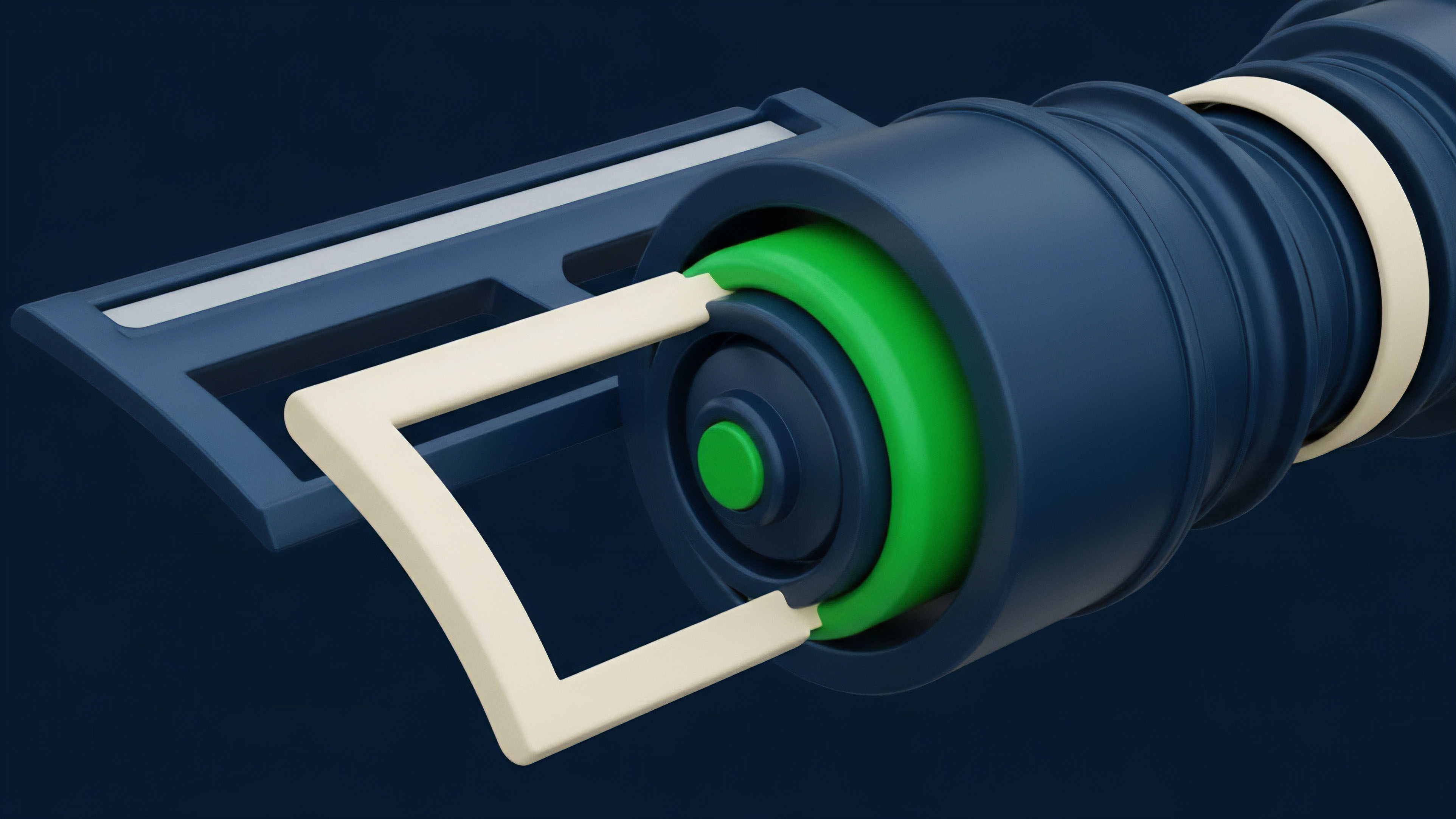

The use of Cross-Margin Engines has become the standard for professional-grade platforms. These engines allow for the offsetting of risks across different positions, increasing capital efficiency. The Risk Calculation Verification process must therefore be able to handle complex correlations between different assets and instrument types.

Structural Shifts in Risk Architecture

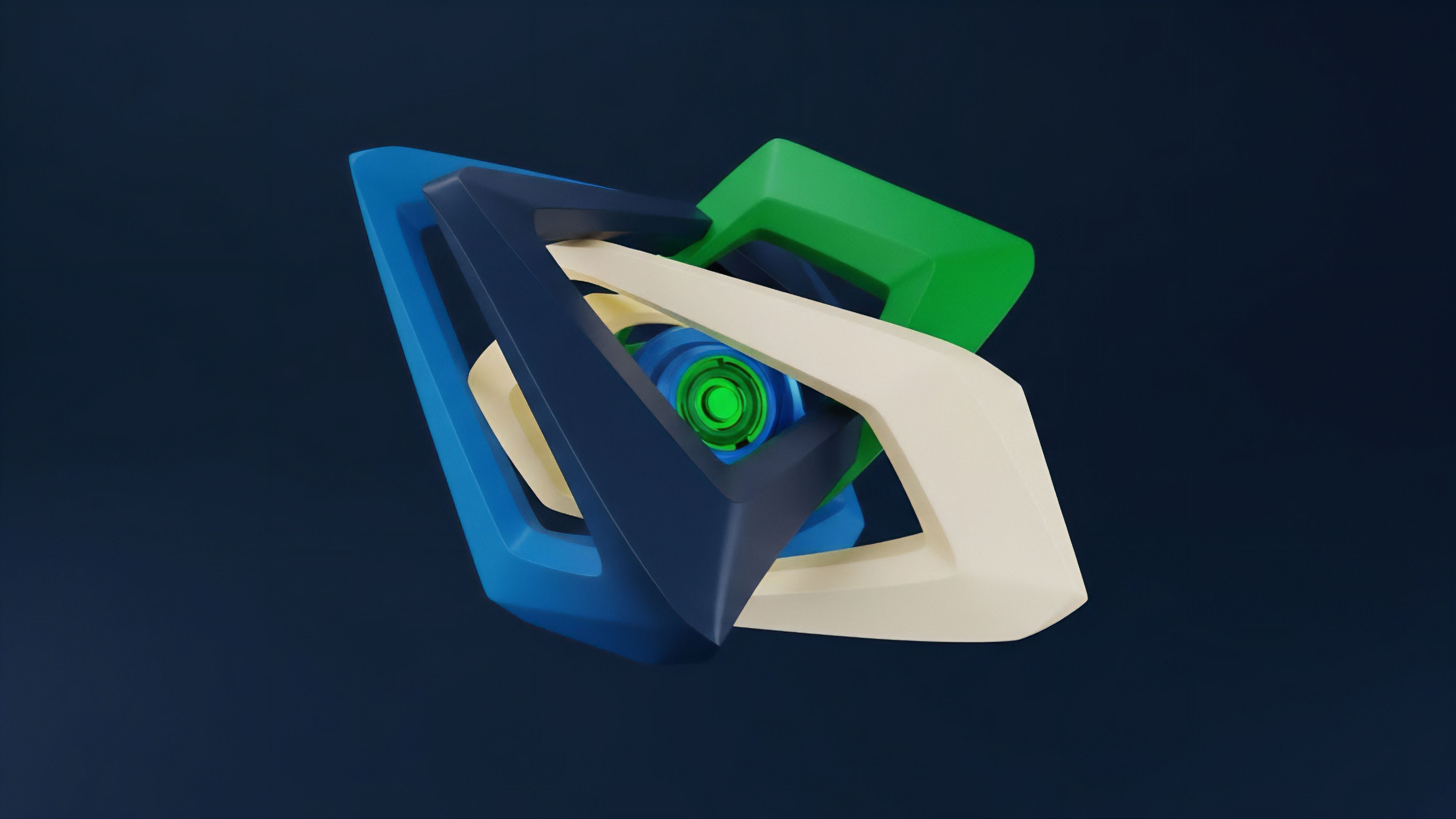

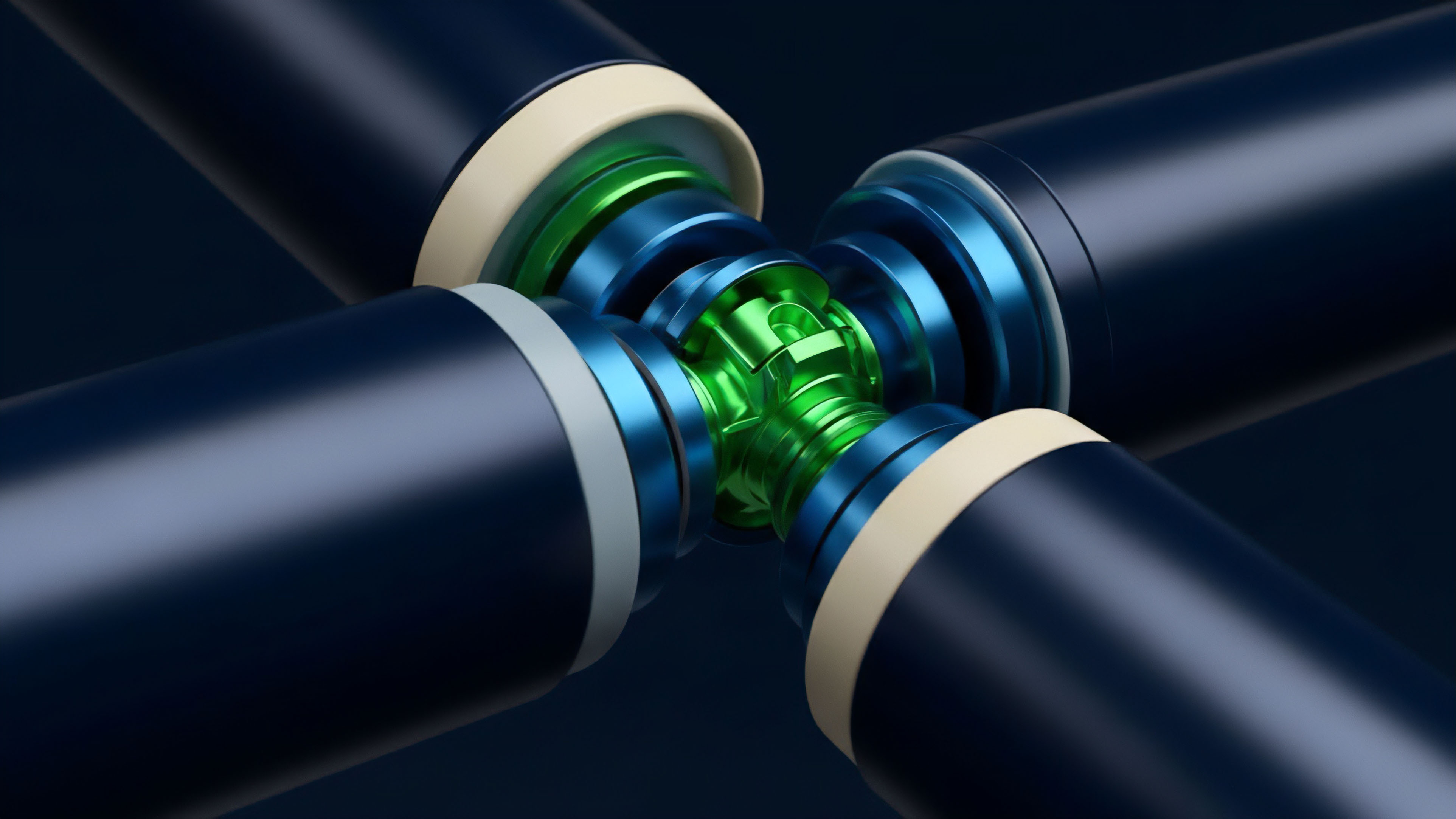

The progression of Risk Calculation Verification has moved from Isolated Margin models to Portfolio Margin systems. In the early stages, risk was siloed; a loss in one position could lead to liquidation even if the trader had profitable positions elsewhere. This was inefficient and led to unnecessary market volatility.

Modern systems verify risk at the portfolio level, allowing for a more accurate assessment of a trader’s total exposure. This shift required a large increase in the complexity of the verification logic. The protocol must now calculate the Correlation Matrix between different assets in real time.

This is where the Derivative Systems Architect must balance the need for precision with the constraints of blockchain latency.
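The difference between isolated and portfolio margin can be illustrated with a small calculation. The sketch below assumes a simple variance-based risk measure, where portfolio risk is the square root of the sum over all pairs of exposure times volatility times correlation; the function names and input numbers are hypothetical, and real engines typically use richer models.

```python
import math


def isolated_risk(exposures: list[float], vols: list[float]) -> float:
    """Sum of stand-alone risks: no offsetting between positions."""
    return sum(abs(x) * v for x, v in zip(exposures, vols))


def portfolio_risk(
    exposures: list[float],
    vols: list[float],
    corr: list[list[float]],
) -> float:
    """Risk of the combined book, using the correlation matrix."""
    n = len(exposures)
    variance = 0.0
    for i in range(n):
        for j in range(n):
            variance += exposures[i] * vols[i] * exposures[j] * vols[j] * corr[i][j]
    return math.sqrt(max(variance, 0.0))  # guard against tiny negative rounding


exposures = [60.0, -80.0]           # long one asset, short another (assumed)
vols = [0.5, 0.5]                   # assumed volatilities
perfectly_correlated = [[1.0, 1.0], [1.0, 1.0]]
uncorrelated = [[1.0, 0.0], [0.0, 1.0]]

print(isolated_risk(exposures, vols))                          # 70.0
print(portfolio_risk(exposures, vols, perfectly_correlated))   # 10.0
print(portfolio_risk(exposures, vols, uncorrelated))           # 50.0
```

The nested loop is quadratic in the number of assets, which hints at why recomputing this on every block strains the latency budget the paragraph above mentions.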

- Collateral Tokenization allows for a wider variety of assets to be used as margin, requiring more complex valuation proofs.

- Dynamic Liquidation Thresholds adjust based on market liquidity, ensuring that liquidations do not cause a price collapse.

- Socialized Loss Mitigation mechanisms are verified to ensure that the protocol can handle “black swan” events without failing.
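To make the idea of a liquidity-sensitive liquidation threshold concrete, here is a minimal sketch. The linear formula, the `sensitivity` and `cap` parameters, and the function names are purely illustrative assumptions; the source does not specify how thresholds are adjusted.

```python
def liquidation_threshold(
    base_ratio: float,
    position_size: float,
    market_depth: float,
    sensitivity: float = 0.5,  # assumed weight on market impact
    cap: float = 0.5,          # assumed upper bound on the ratio
) -> float:
    """Maintenance margin ratio that rises as the position outgrows market depth."""
    if market_depth <= 0.0:
        return cap  # no measurable liquidity: apply the most conservative ratio
    impact = position_size / market_depth
    return min(cap, base_ratio + sensitivity * impact)


def should_liquidate(equity: float, position_size: float, threshold: float) -> bool:
    """Liquidate when equity falls below the required fraction of the position."""
    return equity < threshold * position_size


deep = liquidation_threshold(0.05, position_size=1000.0, market_depth=10000.0)
thin = liquidation_threshold(0.05, position_size=1000.0, market_depth=1000.0)
print(deep, thin)
print(should_liquidate(80.0, 1000.0, deep))
```

Raising the threshold in thin markets triggers liquidation earlier, while the position is still small relative to what the order book can absorb.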

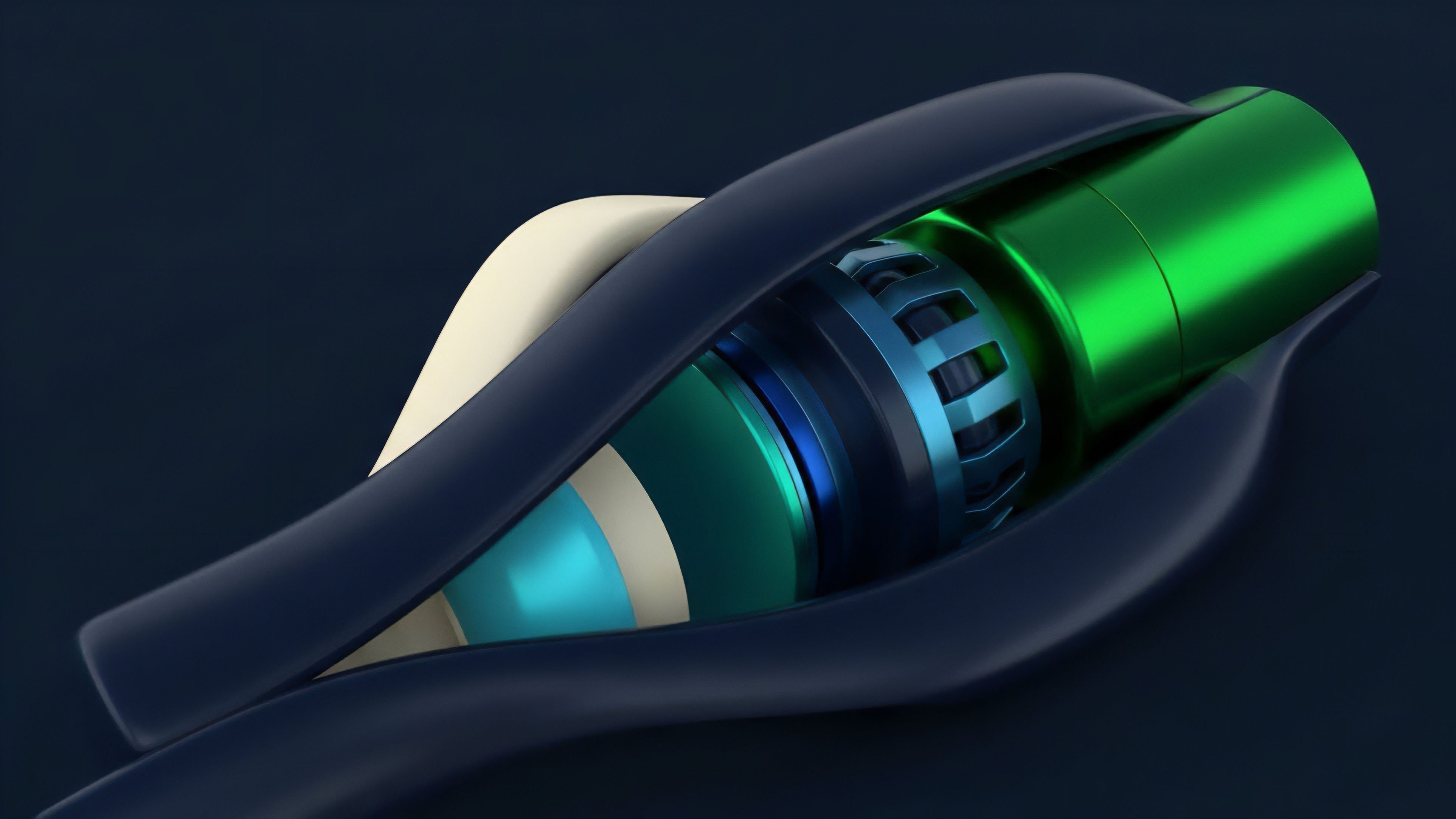

The current state of the art involves Optimistic Verification, where calculations are assumed to be correct unless challenged by a watcher within a fixed window. This increases throughput while maintaining a high level of security. However, Zero-Knowledge Proofs are expected to replace this approach, providing immediate, mathematical certainty without the need for a challenge period.
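The optimistic pattern can be sketched as a claim that becomes final only after a challenge window passes without a successful dispute. This is a simplified Python model under stated assumptions: block heights are plain integers, the watcher recomputes the margin through a supplied callback, and the names `Claim` and `OptimisticVerifier` are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Claim:
    margin: float        # margin requirement asserted by the off-chain solver
    submitted_at: int    # block height at submission
    invalidated: bool = False


class OptimisticVerifier:
    def __init__(self, recompute: Callable[[], float], challenge_period: int) -> None:
        self._recompute = recompute
        self._period = challenge_period

    def challenge(self, claim: Claim, now: int) -> bool:
        """Recompute during the window; invalidate the claim if it is wrong."""
        if now >= claim.submitted_at + self._period:
            return False  # window closed, the claim can no longer be disputed
        if abs(self._recompute() - claim.margin) > 1e-9:
            claim.invalidated = True
        return claim.invalidated

    def is_final(self, claim: Claim, now: int) -> bool:
        """A claim is final once the window has passed without invalidation."""
        return not claim.invalidated and now >= claim.submitted_at + self._period


verifier = OptimisticVerifier(recompute=lambda: 100.0, challenge_period=10)
honest, bad = Claim(100.0, submitted_at=0), Claim(80.0, submitted_at=0)
print(verifier.challenge(honest, now=5))  # False: recomputation matches
print(verifier.challenge(bad, now=5))     # True: mismatch, claim invalidated
print(verifier.is_final(honest, now=10))  # True
print(verifier.is_final(bad, now=10))     # False
```

The model also shows the trade-off: a wrong claim that nobody challenges inside the window would still become final, which is the gap a validity proof is meant to close.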

Future Vectors of Mathematical Security

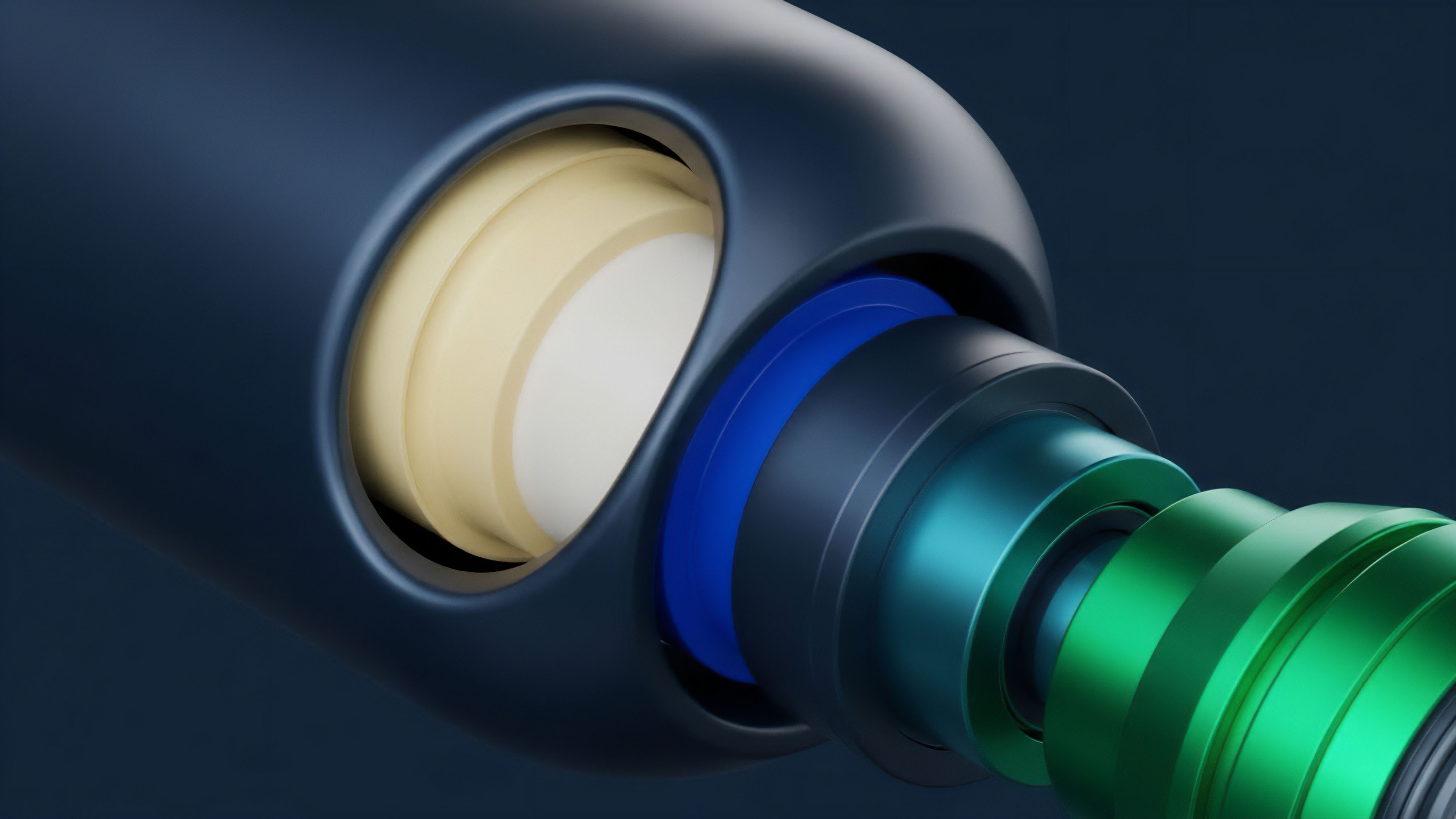

The future of Risk Calculation Verification lies in the full integration of Privacy Preserving Computation. Future protocols will likely use Zero-Knowledge Succinct Non-Interactive Arguments of Knowledge (zk-SNARKs) to verify that a trader is solvent without revealing their specific positions or strategies. This will attract institutional capital that requires confidentiality while still needing to prove its risk management standards to regulators.

We are also seeing the rise of AI-Driven Risk Parameters. These systems use machine learning to identify emerging risks before they manifest in the price data. The Risk Calculation Verification engine will then audit these AI suggestions to ensure they remain within the bounds of the protocol’s safety mandates.

- Multi-Chain Risk Aggregation will allow for the verification of solvency across different blockchain networks simultaneously.

- Programmable Risk Policies will enable DAOs to adjust the verification logic in response to changing macro conditions.

- Real-Time Solvency Attestations will provide a continuous, public proof of a protocol’s health, accessible to any external auditor.

The ultimate goal is a financial system where risk is not managed through human oversight but through Computational Governance. In this future, Risk Calculation Verification is the silent foundation of global trade, providing the certainty needed for a truly permissionless and resilient financial operating system. The complexity of the math is hidden behind the simplicity of the proof, allowing for a world where value can move as freely as information.

Glossary

State Verification Protocol

Position Verification

Logarithmic Verification

Data Verification Protocols

Regulatory Compliance Verification

Oracle Price Verification

Risk Calculation Privacy

Decentralized VaR Calculation

Risk-Weighted Asset Calculation