Essence

Public Input Verification is the cryptographic assurance mechanism that ensures data ingested by decentralized financial protocols from external sources has not been tampered with and reflects actual market conditions. It bridges off-chain reality and on-chain execution and protects the integrity of derivative pricing engines. Without robust verification, the oracle systems feeding these protocols become vectors for manipulation, allowing malicious actors to distort asset prices or trigger illegitimate liquidations.

Public Input Verification functions as the foundational gatekeeper that prevents external data corruption from compromising the settlement logic of decentralized derivatives.

The need for this mechanism stems from the opacity of traditional data feeds: a protocol cannot inspect how an off-chain price was produced. When a protocol relies on a single source or an unverified stream, it exposes its margin engines to systemic risk. Effective verification requires that incoming data points pass multi-party validation or carry a cryptographic proof, so that each input meets a predefined consensus threshold before the smart contract accepts it as the basis for financial transactions.

Origin

The genesis of Public Input Verification lies in the limitations of early decentralized exchange architectures that relied on simplistic, centralized price feeds.

As the complexity of crypto options increased, the reliance on single-point-of-failure oracles became untenable, necessitating a shift toward decentralized data aggregation. Early developers realized that the security of a derivative contract depends entirely on the accuracy of its reference asset price.

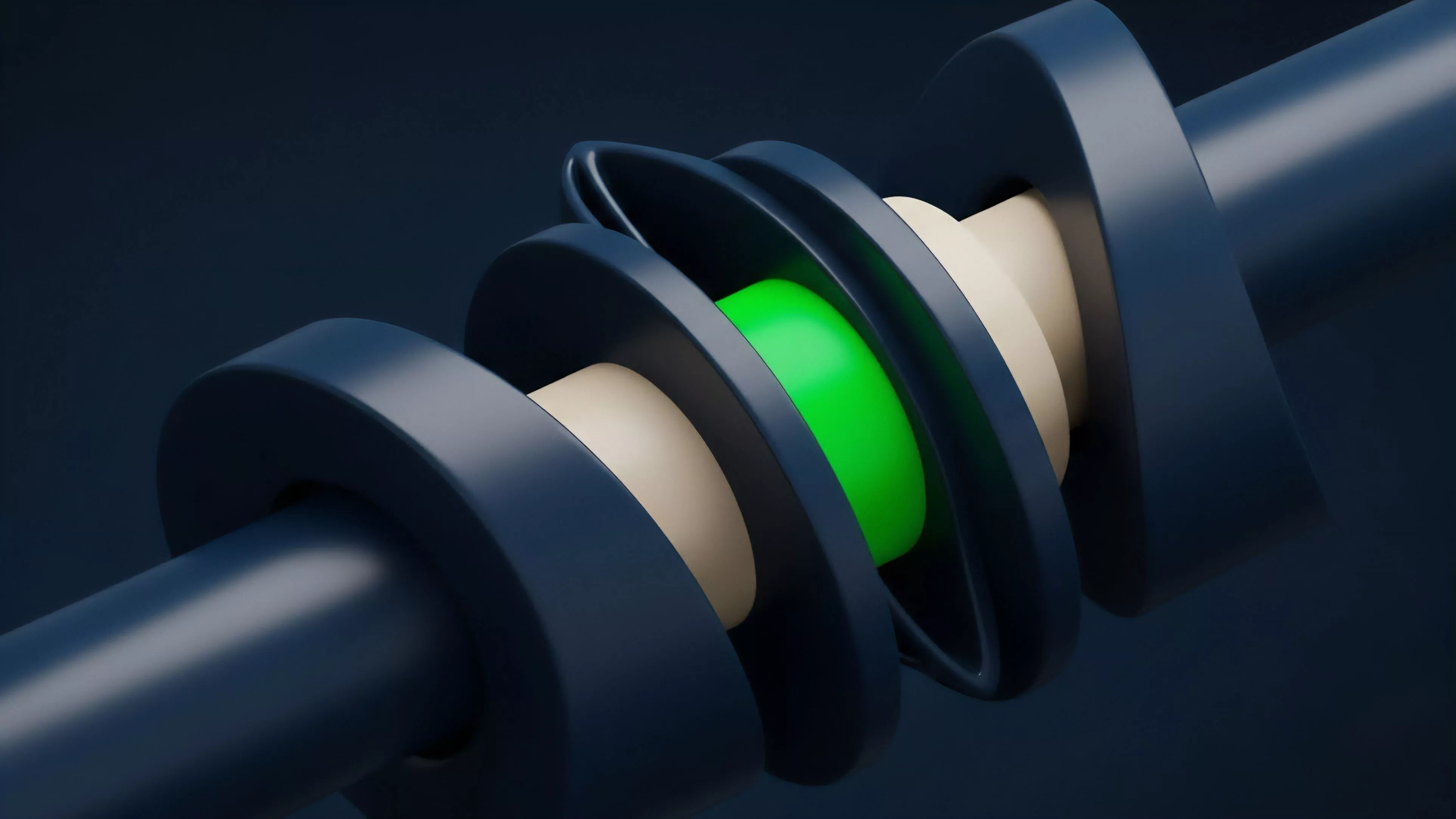

- Deterministic Execution requires that every validator in a network agrees on the input data before processing a trade or liquidation.

- Cryptographic Commitment involves publishers signing their data, allowing the protocol to verify the source identity and the integrity of the information package.

- Consensus Aggregation combines multiple independent data streams to derive a weighted median or a statistically sound price, reducing the impact of outliers.
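The commitment and aggregation steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production oracle design: the names `Report`, `sign_report`, `verify_report`, and `aggregate` are hypothetical, and HMAC-SHA256 with a per-publisher shared key stands in for the public-key signatures a real network would use, so that the example runs on the standard library alone.

```python
import hashlib
import hmac
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    publisher: str
    price: float
    timestamp: int
    tag: bytes  # authentication tag over (publisher, price, timestamp)


def _message(publisher: str, price: float, timestamp: int) -> bytes:
    # Canonical encoding so the signer and the verifier hash identical bytes.
    return f"{publisher}|{price:.8f}|{timestamp}".encode()


def sign_report(key: bytes, publisher: str, price: float, timestamp: int) -> Report:
    # ASSUMPTION: HMAC is a stand-in for a real digital signature scheme.
    tag = hmac.new(key, _message(publisher, price, timestamp), hashlib.sha256).digest()
    return Report(publisher, price, timestamp, tag)


def verify_report(keys: dict[str, bytes], report: Report) -> bool:
    key = keys.get(report.publisher)
    if key is None:
        return False  # unknown publisher
    expected = hmac.new(
        key, _message(report.publisher, report.price, report.timestamp), hashlib.sha256
    ).digest()
    return hmac.compare_digest(expected, report.tag)


def aggregate(keys: dict[str, bytes], reports: list[Report], quorum: int) -> float:
    # Keep at most one price per publisher, and only from reports that verify.
    prices: dict[str, float] = {}
    for report in reports:
        if verify_report(keys, report):
            prices[report.publisher] = report.price
    if len(prices) < quorum:
        raise ValueError("not enough verified reports to reach quorum")
    # The median limits the influence of a single outlying publisher.
    return statistics.median(prices.values())
```

With three verified reports of 100.0, 101.0, and 500.0, `aggregate` returns 101.0: the outlier changes which report sits in the middle, but its magnitude has no effect on the result. A report whose price is altered after signing fails `verify_report` and is dropped before aggregation.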

This evolution reflects the broader transition from experimental smart contract design to rigorous financial engineering. By moving away from centralized reliance, the industry developed methods to force external inputs through a gauntlet of decentralized checks, ensuring that market data is not merely reported but checked against independent sources and accepted only when it falls within a specified margin of tolerance.

Theory

The mechanics of Public Input Verification rest upon the application of game theory to prevent data collusion. By requiring validators to stake collateral against the accuracy of their inputs, the system creates an adversarial environment where the cost of providing false data outweighs the potential profit from market manipulation.

This is the application of economic incentives to solve a technical problem of data integrity.
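One way to make the incentive condition concrete is a simple expected-value inequality. The symbols are assumptions introduced here for illustration and do not come from any specific protocol: $S$ is the validator's stake, $\phi$ the fraction of the stake slashed on detection, $p$ the probability that a false report is detected, and $G$ the profit from a successful manipulation, assumed to be realized only if the report goes undetected.

$$
\mathbb{E}[\text{payoff}] = (1 - p)\,G - p\,\phi\,S < 0
\quad\Longleftrightarrow\quad
S > \frac{(1 - p)\,G}{p\,\phi}
$$

For example, with $p = 0.9$, $\phi = 1$, and $G = 1{,}000{,}000$, a false report has negative expected payoff once the stake exceeds roughly $111{,}111$. Under this simplified model, the required stake grows quickly as the detection probability falls.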

Data accuracy in decentralized systems relies on the economic disincentive for validators to report false prices rather than on the inherent trustworthiness of the source.

Quantitative Framework

The mathematical model for verification often employs a Bayesian Update approach or a Weighted Median Calculation to process inputs. If a validator reports a price significantly deviating from the consensus, the protocol automatically flags the submission for review or penalizes the actor. This structure effectively filters out noise and malicious actors, maintaining a stable price signal for complex derivative instruments.
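The weighted median and the deviation check can be sketched as follows. This is a minimal illustration, assuming each report is weighted by the validator's stake and that a submission is flagged when it differs from the reference price by more than a relative tolerance; the function names and the 2% default are hypothetical.

```python
def weighted_median(reports: list[tuple[float, float]]) -> float:
    """Return the lower weighted median of (price, weight) pairs."""
    if not reports:
        raise ValueError("no reports supplied")
    total = sum(weight for _, weight in reports)
    if total <= 0:
        raise ValueError("total weight must be positive")
    cumulative = 0.0
    for price, weight in sorted(reports):
        cumulative += weight
        # The first price at which half of the total weight is covered.
        if cumulative >= total / 2:
            return price
    raise AssertionError("unreachable when total weight is positive")


def flag_deviations(
    reports: dict[str, tuple[float, float]], tolerance: float = 0.02
) -> tuple[float, list[str]]:
    """Return the reference price and the validators outside the tolerance.

    `reports` maps a validator name to (price, stake). ASSUMPTION: tolerance
    is a relative deviation from the weighted median, e.g. 0.02 for 2%.
    """
    reference = weighted_median(list(reports.values()))
    flagged = [
        name
        for name, (price, _) in reports.items()
        if abs(price - reference) / reference > tolerance
    ]
    return reference, flagged
```

For reports `{"v1": (100.0, 5.0), "v2": (101.0, 3.0), "v3": (150.0, 1.0)}`, the reference price is 100.0 and only `v3` is flagged. Weighting by stake means a low-stake validator cannot move the reference price by submitting an extreme value.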

| Validation Mechanism | Economic Incentive | Systemic Outcome |
| --- | --- | --- |
| Proof of Stake | Collateral Slashing | High Cost of Attack |
| Multi-Party Computation | Reputation Weighting | Data Integrity |
| Zero Knowledge Proofs | Computational Verification | Privacy and Accuracy |

The internal logic assumes that participants act rationally to maximize their own utility, which in this case means protecting their stake by providing truthful data. A deviation from consensus that exceeds the protocol's tolerance is treated as a potential attack and triggers a defensive response, such as the flagging or penalty described above.

Approach

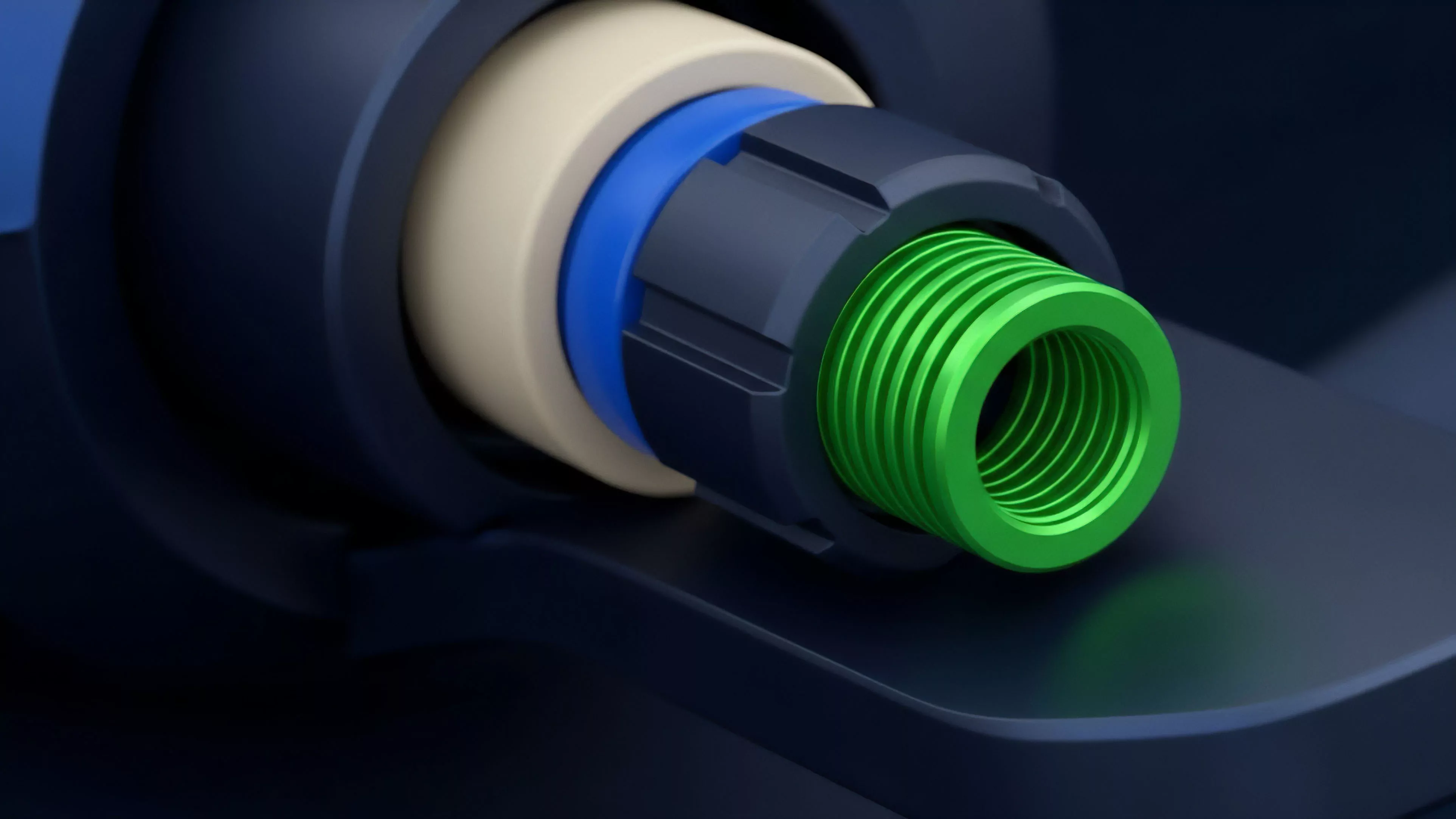

Current strategies for Public Input Verification prioritize modularity and speed. Protocols now utilize decentralized oracle networks that allow for the verification of data across multiple layers of the blockchain stack.

This modularity ensures that the verification layer remains distinct from the core settlement engine, allowing for updates without disrupting the derivative contracts themselves.

- Decentralized Oracle Networks distribute the risk of data failure across a wide pool of independent node operators.

- On-chain Verification Logic embeds the validation rules directly into the smart contract, ensuring that the protocol remains self-executing.

- Latency Mitigation employs off-chain computation to aggregate data, which is then verified on-chain via succinct cryptographic proofs to minimize gas consumption.

This architecture creates a system where the protocol does not need to know the specific identity of the data provider, only that the provided data has passed the required cryptographic checks. This is a significant shift in how we handle financial data, moving the burden of proof from a trusted institution to the code itself.

Evolution

The trajectory of Public Input Verification has moved from simple, manual reporting to automated, high-frequency verification systems. Early iterations were vulnerable to simple flash loan attacks that manipulated spot prices.

Modern systems now incorporate time-weighted average price calculations and cross-exchange verification to ensure that the input represents a sustainable market price rather than a temporary anomaly.
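A time-weighted average price can be sketched as below. This is a minimal illustration, assuming observations arrive as `(timestamp, price)` pairs in ascending time order and that each price holds until the next observation; the function name `twap` and its parameters are hypothetical.

```python
def twap(observations: list[tuple[int, float]], window_end: int) -> float:
    """Time-weighted average price over [first timestamp, window_end]."""
    if not observations:
        raise ValueError("no observations supplied")
    duration = window_end - observations[0][0]
    if duration <= 0:
        raise ValueError("window must end after the first observation")
    # Pair each observation with the timestamp at which its price stops holding.
    end_times = [t for t, _ in observations[1:]] + [window_end]
    weighted_sum = 0.0
    for (start, price), end in zip(observations, end_times):
        if end < start:
            raise ValueError("observations must be in ascending time order")
        weighted_sum += price * (end - start)
    return weighted_sum / duration
```

Suppose the price sits at 100.0 for 590 seconds and then doubles to 200.0 for the final 10 seconds of a 600-second window. The spot price has moved by 100%, but `twap([(0, 100.0), (590, 200.0)], 600)` is about 101.67, so a short-lived spike of the kind a flash loan can produce has only a small effect on the input the protocol sees.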

Robust verification protocols must account for high-frequency market volatility to prevent false liquidation triggers in derivative contracts.

The evolution also includes the integration of Zero Knowledge Proofs, which allow for the verification of complex data sets without revealing the underlying raw data. This is a critical development for privacy-focused derivative markets, where users require assurance that the data is accurate without exposing their trading strategies or source information to the public ledger. The complexity of these systems has increased as the market demands faster settlement times and lower slippage for large-scale derivative positions.

Horizon

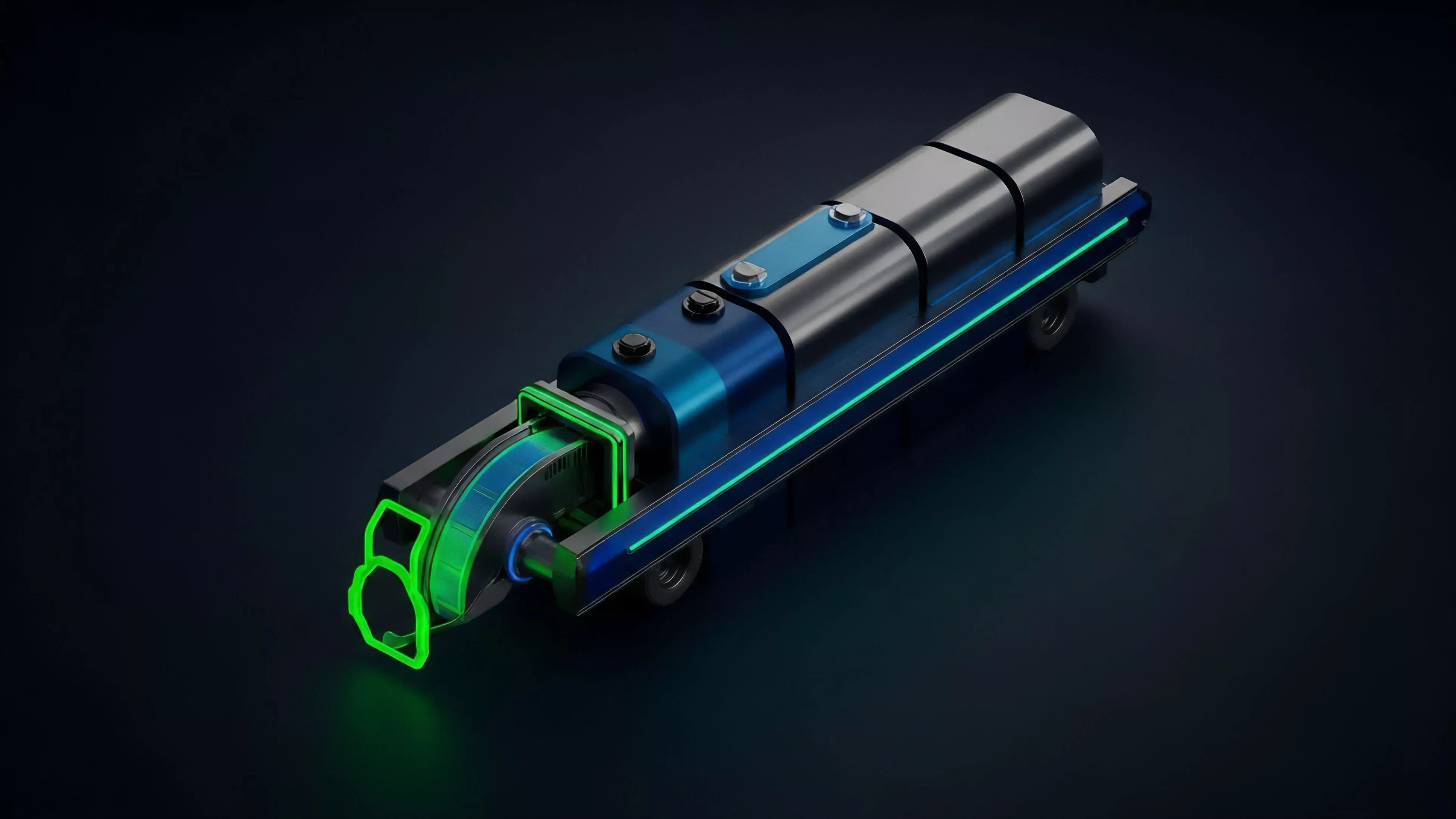

Future developments in Public Input Verification will likely focus on the integration of artificial intelligence for real-time anomaly detection.

These systems would autonomously monitor data feeds, identifying and neutralizing manipulation attempts before they affect the protocol. As decentralized finance matures, reliance on human-governed parameters may decrease in favor of autonomous, self-healing verification engines that adapt to changing market conditions.

- Automated Anomaly Detection utilizes machine learning to identify suspicious patterns in incoming data streams.

- Cross-Chain Verification enables protocols to verify data from disparate blockchains, creating a unified global market price.

- Governance-Free Validation removes the need for DAO-based intervention by encoding all risk parameters into the verification logic.

The shift toward fully automated verification should reduce the latency between market events and protocol response, improving capital efficiency. This progression could enable complex derivatives that were previously difficult to sustain in a decentralized environment, provided the verification infrastructure can handle large data volumes without compromising integrity.