Essence

Cryptographic Data Validation is the mechanism that ensures state integrity within decentralized financial environments. It uses mathematical proofs to confirm that transaction data, state transitions, and derivative pricing parameters conform to the governing protocol logic. This removes the reliance on centralized intermediaries for verification, replacing human judgment with deterministic code execution.

Cryptographic data validation provides the mathematical guarantee that all state transitions within a decentralized financial system are both accurate and authorized.

The significance of this validation lies in its ability to establish trust in adversarial settings. Participants interact with derivative protocols without needing to verify the underlying accounting practices of a counterparty, as the protocol itself mandates compliance through cryptographic primitives. This architectural choice shifts the burden of proof from legal contracts to algorithmic verification, creating a resilient foundation for automated market making and decentralized clearing.

Origin

The genesis of Cryptographic Data Validation traces back to the fundamental design requirements of distributed ledger technology, specifically the necessity to achieve consensus on the state of a network without a central authority. Early implementations focused on simple transaction verification, utilizing digital signatures and hash functions to confirm sender authenticity and data integrity. The evolution toward complex financial instruments demanded more sophisticated methods.

As decentralized exchanges and derivative platforms emerged, verifying off-chain data, such as asset price feeds, became critical. This led to the development of decentralized oracle networks, which remove the need to trust a single data provider, and zero-knowledge proof systems, which allow statements about large datasets to be validated without exposing the raw information.

- Digital Signatures establish the non-repudiation of transaction requests.

- Merkle Trees facilitate the efficient verification of large datasets within a block.

- Zero-Knowledge Proofs enable the validation of state transitions while maintaining data privacy.
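The Merkle tree item in the list above can be made concrete with a minimal sketch. It assumes SHA-256 hashing and a binary tree in which an unpaired node is hashed with itself; the function names are hypothetical and not taken from any particular protocol. Production designs usually add domain separation between leaf and interior hashes, which is omitted here for brevity.

```python
import hashlib


def sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _next_level(level: list[bytes]) -> list[bytes]:
    # Hash adjacent pairs; the caller guarantees an even number of nodes.
    return [sha256(level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: list[bytes]) -> bytes:
    """Root of a binary Merkle tree (an unpaired node is paired with itself)."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [sha256(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True if the sibling sits on the right."""
    if not 0 <= index < len(leaves):
        raise IndexError("leaf index out of range")
    level = [sha256(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1  # flip the lowest bit to find the paired node
        proof.append((level[sibling], sibling > index))
        level = _next_level(level)
        index //= 2
    return proof


def verify_inclusion(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the path to the root using only the leaf and its sibling hashes."""
    node = sha256(leaf)
    for sibling, sibling_is_right in proof:
        node = sha256(node + sibling) if sibling_is_right else sha256(sibling + node)
    return node == root
```

A verifier that holds only the root can check one transaction with a proof whose length grows logarithmically with the number of leaves, which is why the structure suits large blocks.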

Theory

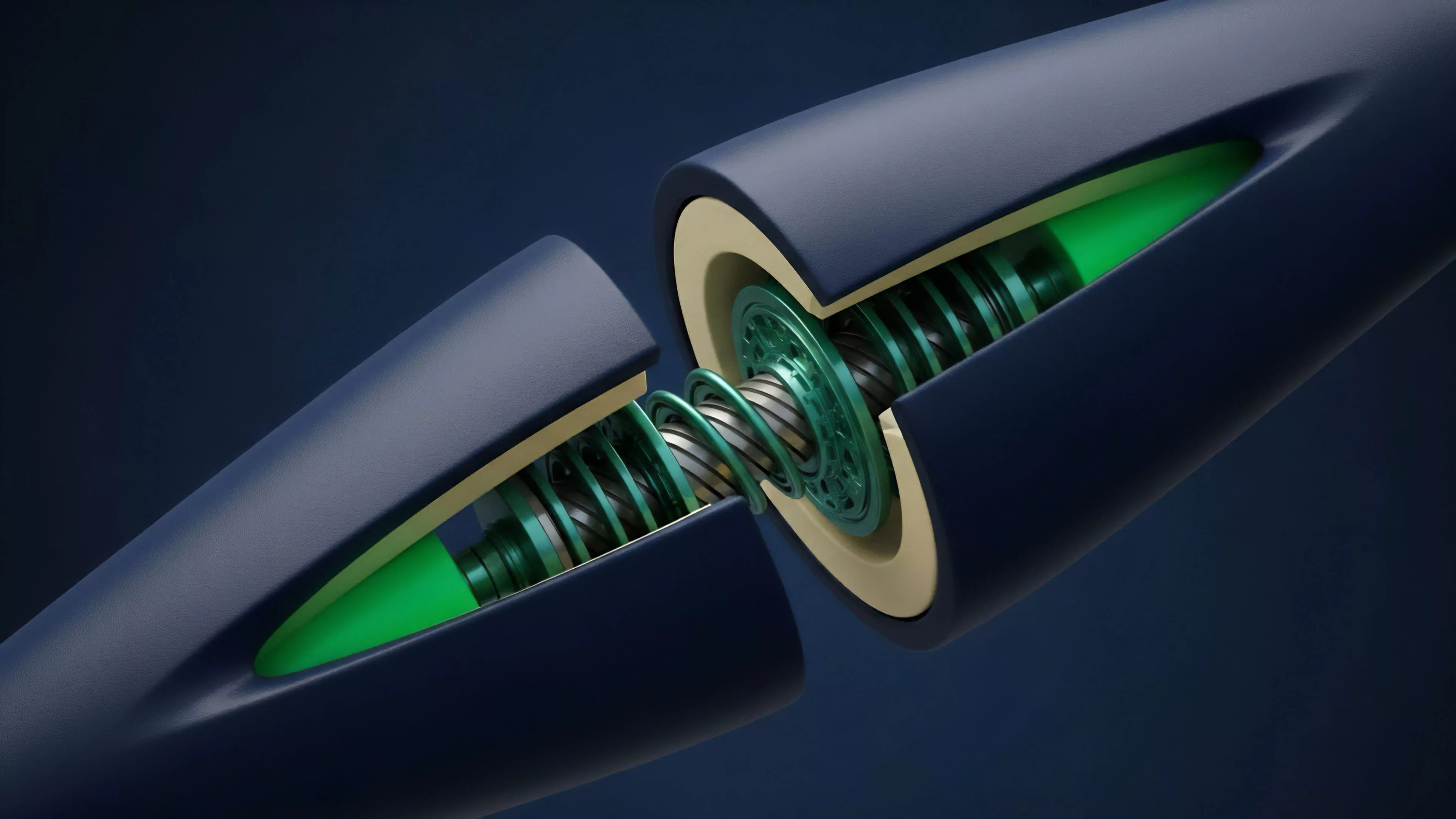

The structural integrity of Cryptographic Data Validation rests on the rigorous application of mathematical constraints to financial state machines. In a derivative context, this involves validating that margin requirements, liquidation thresholds, and option premiums are calculated precisely according to the predefined smart contract code. Any deviation from these parameters is rejected by the consensus layer.

The validity of a decentralized derivative position is derived solely from the mathematical proof of its adherence to protocol-defined state constraints.

Risk sensitivity analysis is integrated into this validation process. For instance, in an automated margin engine, the protocol must continuously validate that the collateral value remains above the maintenance margin threshold. This is not merely a static check; it is a dynamic process where the validation logic must account for volatility-induced price fluctuations, often requiring the interaction between on-chain data and external market signals.
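The maintenance margin check described above might look like the following sketch. The `Position` fields, the function names, and the convention that the requirement equals notional times a fixed ratio are all assumptions made for illustration; real margin engines differ in how they define each term.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Position:
    collateral_units: Decimal          # amount of the collateral asset posted
    notional: Decimal                  # position size in the quote currency
    maintenance_margin_ratio: Decimal  # e.g. Decimal("0.05") for 5%


def collateral_value(position: Position, oracle_price: Decimal) -> Decimal:
    """Mark the posted collateral at the latest validated oracle price."""
    return position.collateral_units * oracle_price


def is_adequately_margined(position: Position, oracle_price: Decimal) -> bool:
    """True while collateral value covers the maintenance requirement."""
    required = position.notional * position.maintenance_margin_ratio
    return collateral_value(position, oracle_price) >= required
```

Because the check takes the oracle price as an input, it has to be re-evaluated on every price update rather than once at position open. `Decimal` is used here to avoid binary floating-point rounding in monetary comparisons.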

| Validation Mechanism | Systemic Function | Risk Mitigation |
|---|---|---|
| State Proofs | Confirming account balances | Prevents double-spending |
| Oracle Consensus | Validating external prices | Reduces price manipulation |
| Validity Rollups | Batching transaction proofs | Ensures layer-two security |
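As one illustration of the oracle consensus row, a protocol could aggregate independent price reports with a median and discard outliers. This is a sketch of a common pattern, not the method of any specific oracle network; `aggregate_price`, `min_reporters`, and `max_deviation` are hypothetical names and thresholds.

```python
from statistics import median


def aggregate_price(
    reports: dict[str, float],
    min_reporters: int = 3,
    max_deviation: float = 0.02,
) -> float:
    """Median of the reports that lie within max_deviation of the raw median."""
    prices = list(reports.values())
    if len(prices) < min_reporters:
        raise ValueError("not enough oracle reports")
    if any(p <= 0 for p in prices):
        raise ValueError("prices must be positive")
    mid = median(prices)
    # Drop reports that stray too far from the consensus value.
    accepted = [p for p in prices if abs(p - mid) / mid <= max_deviation]
    if len(accepted) < min_reporters:
        raise ValueError("oracle reports disagree too much")
    return median(accepted)
```

A single manipulated feed cannot move the median far, which is the sense in which consensus across reporters reduces price manipulation.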

The intersection of quantitative finance and protocol physics is where this validation becomes complex. The Greeks, such as Delta and Gamma, are not just theoretical constructs; they are inputs that must be validated to ensure the protocol remains solvent during extreme market stress. If the validation mechanism fails to account for non-linear price movements, the entire system faces contagion risk.
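The effect of ignoring non-linear price movements can be shown with the standard second-order (delta-gamma) approximation of a change in option value. The function names and the example figures below are illustrative assumptions, not parameters of any protocol.

```python
def delta_only_pnl(delta: float, price_move: float) -> float:
    """First-order estimate: value change is linear in the price move."""
    return delta * price_move


def delta_gamma_pnl(delta: float, gamma: float, price_move: float) -> float:
    """Second-order estimate: dV ~ delta * dS + 0.5 * gamma * dS**2."""
    return delta * price_move + 0.5 * gamma * price_move ** 2


# Hypothetical short option position: delta -0.5, gamma -0.02, underlying moves +20.
linear_loss = delta_only_pnl(-0.5, 20.0)
curved_loss = delta_gamma_pnl(-0.5, -0.02, 20.0)
```

With these inputs the gamma term adds a further loss on top of the linear estimate, and that extra loss grows with the square of the move. A margin check that validates only the linear term would therefore understate the collateral needed in exactly the large moves that matter most.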

Approach

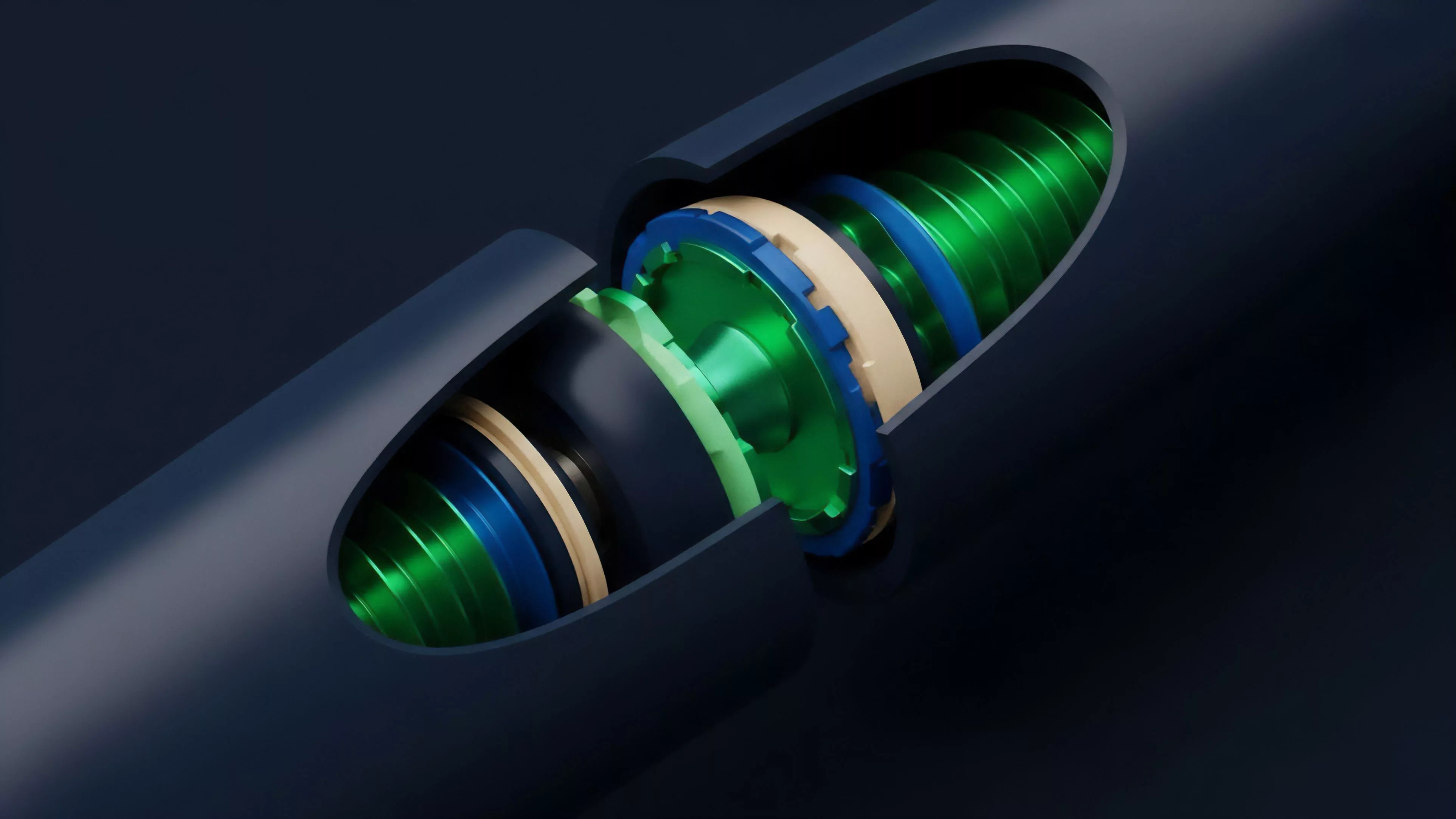

Current implementations of Cryptographic Data Validation use a layered architecture to balance security and throughput. Protocols now frequently employ optimistic or zero-knowledge rollups, where execution is moved off the base layer while its correctness remains cryptographically anchored to that layer through fraud proofs or validity proofs. This approach is one response to the scalability trilemma: it decouples transaction execution from settlement validation.

The move toward modular protocol design has introduced specialized validation layers. These layers act as decentralized clearing houses, focusing exclusively on the integrity of state transitions for specific asset classes. This separation of concerns allows for higher performance in derivative trading while ensuring that the underlying data validation remains as robust as the base layer consensus.

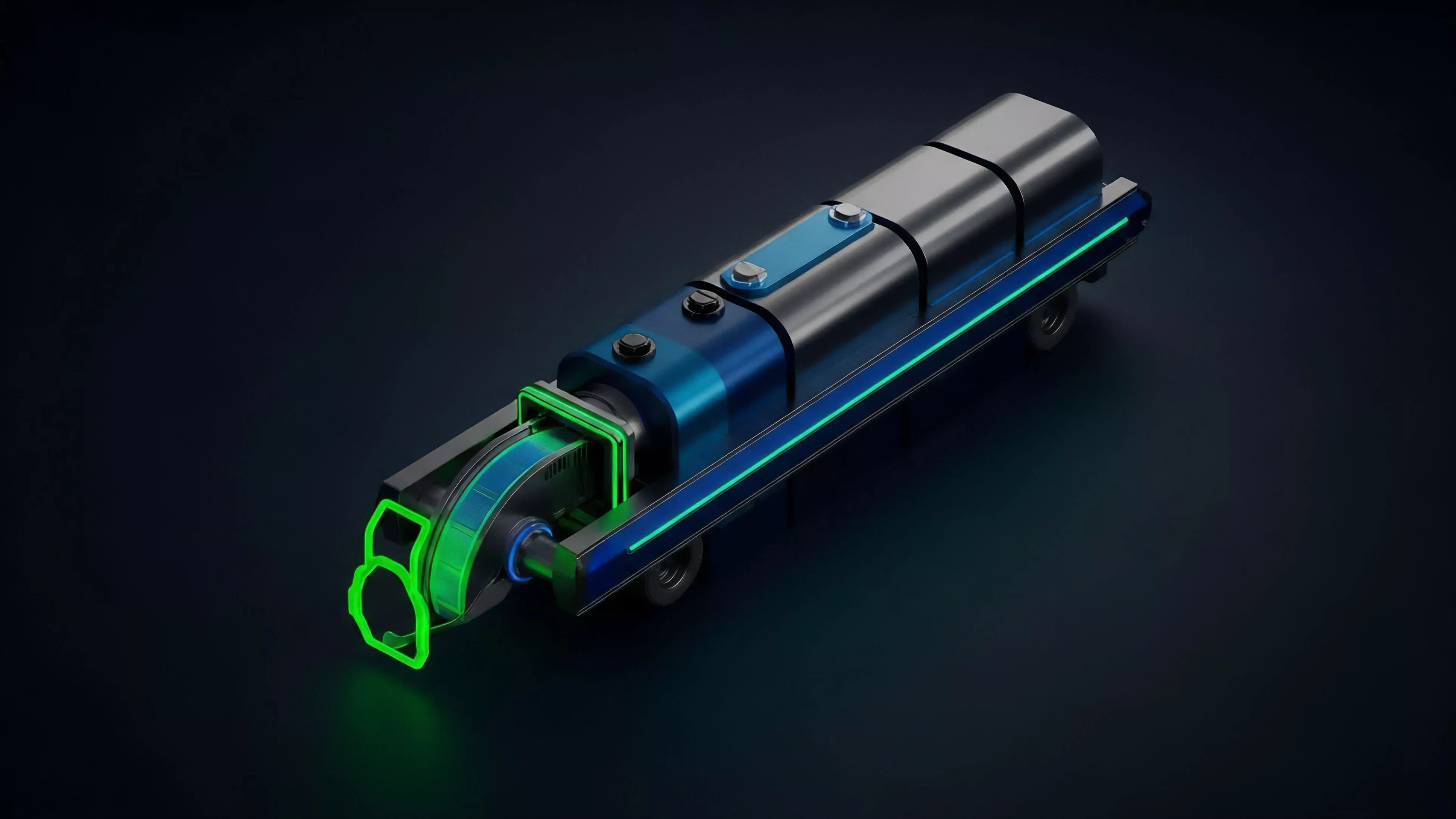

- Validator Nodes execute code to verify state transitions against the global consensus rules.

- Fraud Proofs provide a mechanism for network participants to challenge invalid state updates in optimistic rollups.

- Validity Proofs ensure that every state change is mathematically correct before being recorded on the main ledger.
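The fraud proof idea in the list above reduces to re-executing a disputed state transition and comparing the result with the claimed commitment. The sketch below assumes a toy balance-transfer state machine and a state commitment that is simply a SHA-256 hash of the serialized balances; real rollups commit to state with Merkle or similar tree structures and run the dispute on-chain. All names are hypothetical.

```python
import hashlib
import json


def state_root(balances: dict[str, int]) -> str:
    """Toy commitment: hash of the canonically serialized balances."""
    encoded = json.dumps(balances, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def apply_transfer(
    balances: dict[str, int], sender: str, recipient: str, amount: int
) -> dict[str, int]:
    """Protocol rule: positive amount, and the sender must hold enough funds."""
    if amount <= 0 or balances.get(sender, 0) < amount:
        raise ValueError("invalid transfer")
    updated = dict(balances)
    updated[sender] -= amount
    updated[recipient] = updated.get(recipient, 0) + amount
    return updated


def is_fraudulent(
    pre_state: dict[str, int],
    transfer: tuple[str, str, int],
    claimed_post_root: str,
) -> bool:
    """True when re-execution does not reproduce the claimed post-state root."""
    try:
        expected = apply_transfer(pre_state, *transfer)
    except ValueError:
        return True  # the update contains a transfer the rules reject
    return state_root(expected) != claimed_post_root
```

In an optimistic design this check runs only when someone challenges an update during the dispute window. A validity-proof design instead requires a proof of correct execution with every update, so no challenge step is needed.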

Evolution

The trajectory of Cryptographic Data Validation has moved from simple, reactive verification to proactive, system-wide state assurance. Initial iterations were limited by computational overhead, often restricting the complexity of the financial instruments that could be validated on-chain. Improvements in cryptographic primitives and hardware acceleration have since enabled the validation of increasingly complex derivative models.

Advances in validation technology have moved the ecosystem from simple transaction checking to complex, high-frequency state verification.

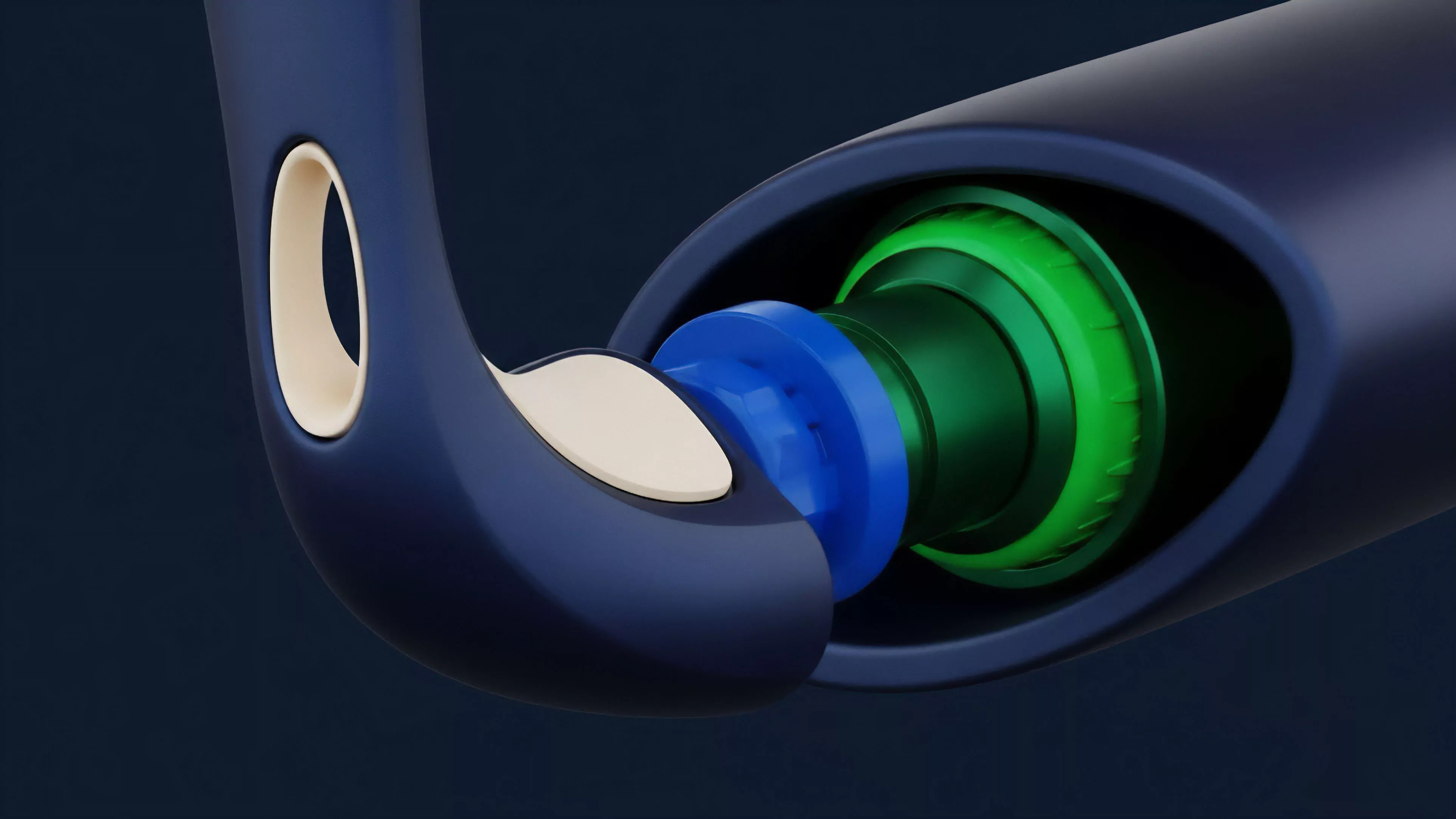

This shift has enabled the rise of sophisticated, automated risk management engines that operate with near-instantaneous validation. The integration of cross-chain communication protocols has further expanded the scope of validation, allowing derivative platforms to verify collateral status across disparate networks. This interconnectedness is essential for capital efficiency but introduces new vectors for systemic failure if the validation mechanisms across chains are not synchronized.

| Era | Validation Focus | Systemic Capability |
|---|---|---|
| Foundational | Transaction authenticity | Basic token transfers |
| DeFi Growth | Smart contract execution | Automated market making |
| Advanced | Complex state proofs | Cross-chain derivative settlement |

Horizon

The future of Cryptographic Data Validation lies in hardware-assisted validation and more advanced cryptographic proofs. Secure enclaves can perform cryptographic checks at the processor level, which could significantly reduce the latency associated with on-chain verification. Lower latency of this kind is a likely catalyst for institutional-grade derivative trading within decentralized systems.

The integration of artificial intelligence into the validation process may also enable predictive risk assessment. Protocols would move beyond validating current state data to validating the potential future states of a portfolio, adjusting margin requirements in real time based on probabilistic models of market volatility. This shift points toward fully autonomous, resilient financial infrastructure.

What fundamental paradox emerges when the speed of validation outpaces the speed of human-comprehensible risk assessment?