Essence

Protocol Data Visualization serves as the analytical bridge between opaque on-chain transaction logs and actionable market intelligence. By transforming raw event data into structured, time-series representations, it allows participants to monitor the health, liquidity, and risk parameters of decentralized derivative venues in real time. This mechanism turns disparate smart contract state changes into a cohesive narrative of market activity.
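
As a minimal sketch of the time-series step, the Python below buckets already-decoded trade events into fixed-interval OHLCV bars. The `TradeEvent` record, its fields, and the 60-second default interval are illustrative assumptions, not the schema of any particular protocol or indexer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TradeEvent:
    # Hypothetical normalized fill decoded from a contract log.
    timestamp: int  # block timestamp, Unix seconds
    price: float
    size: float     # base-asset quantity


def bucket_events(events, interval_s=60):
    """Aggregate trade events into OHLCV bars keyed by bucket start time."""
    bars = {}
    for ev in sorted(events, key=lambda e: e.timestamp):
        start = ev.timestamp - ev.timestamp % interval_s  # floor to interval boundary
        bar = bars.get(start)
        if bar is None:
            bars[start] = {"open": ev.price, "high": ev.price, "low": ev.price,
                           "close": ev.price, "volume": ev.size}
        else:
            bar["high"] = max(bar["high"], ev.price)
            bar["low"] = min(bar["low"], ev.price)
            bar["close"] = ev.price  # events are processed in time order
            bar["volume"] += ev.size
    return dict(sorted(bars.items()))
```

Once events share a time axis, metrics from different contracts can be plotted and compared against each other.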

Protocol Data Visualization translates raw blockchain state changes into legible financial metrics for assessing decentralized market health.

The primary utility lies in reducing information asymmetry. Traders rely on these visual frameworks to detect shifts in open interest, liquidation cascades, and funding rate deviations before they manifest as catastrophic price slippage. It acts as a diagnostic tool, exposing the underlying physics of automated market makers and decentralized order books to human scrutiny.

Origin

The necessity for these tools grew alongside the maturation of decentralized exchange architectures.

Early participants relied on block explorers, which presented data in a granular, non-financial format unsuitable for complex strategy execution. As liquidity protocols introduced sophisticated margin engines and option vaults, dedicated telemetry became a necessity.

- Transaction Monitoring: Initial attempts focused on simple event tracking to verify settlement integrity.

- Metric Aggregation: Developers began synthesizing events into indicators like total value locked and volume velocity.

- Derivative Complexity: The advent of decentralized options necessitated the tracking of implied volatility surfaces and delta exposure.

This evolution represents a shift from passive observation to active systemic monitoring. Financial engineers recognized that without specialized interfaces, the speed of automated liquidation processes would outpace human ability to hedge risk.

Theory

At the center of Protocol Data Visualization is the extraction and normalization of event logs from smart contracts. These logs contain the granular history of every trade, margin update, and liquidation event.
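
A sketch of the normalization step, assuming logs have already been decoded into dictionaries with hypothetical keys (`tx_hash`, `log_index`, `block_number`, `event`, `token`, `raw_amount`). It drops duplicate deliveries, rescales integer token amounts by each token's decimals, and restores chain order. The `TOKEN_DECIMALS` table is a hard-coded stand-in for values that would normally be read from each token contract.

```python
from decimal import Decimal

# Hypothetical decimals registry; real values come from each token contract.
TOKEN_DECIMALS = {"USDC": 6, "WETH": 18}


def normalize_logs(raw_logs):
    """Deduplicate decoded logs, scale integer amounts, and sort by chain position."""
    seen = set()
    out = []
    for log in raw_logs:
        key = (log["tx_hash"], log["log_index"])
        if key in seen:  # the same log may arrive twice, e.g. from overlapping queries
            continue
        seen.add(key)
        decimals = TOKEN_DECIMALS[log["token"]]
        out.append({
            "block": log["block_number"],
            "log_index": log["log_index"],
            "event": log["event"],
            "token": log["token"],
            # Decimal avoids float rounding on large integer token amounts.
            "amount": Decimal(log["raw_amount"]) / (Decimal(10) ** decimals),
        })
    # Order by block, then by position of the log within the block.
    out.sort(key=lambda r: (r["block"], r["log_index"]))
    return out
```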

Mathematical models then process this data to derive higher-order metrics, such as the Greek sensitivities of an option portfolio or the utilization rates of a lending pool.
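
To illustrate such derived metrics, the sketch below computes a lending-pool utilization rate as borrows divided by total supplied assets (one common definition; individual protocols may treat reserves differently) and aggregates Black-Scholes delta, gamma, and vega across option positions. Using Black-Scholes with a flat implied volatility per position is an assumption chosen for brevity; on-chain option venues may price with other models.

```python
import math
from dataclasses import dataclass


def utilization(total_borrows, available_liquidity):
    """Share of supplied assets currently lent out: borrows / (borrows + idle liquidity)."""
    supplied = total_borrows + available_liquidity
    return 0.0 if supplied == 0 else total_borrows / supplied


def _norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2 * math.pi)


def _norm_cdf(x):
    return 0.5 * (1 + math.erf(x / math.sqrt(2)))


@dataclass(frozen=True)
class OptionPosition:
    is_call: bool
    strike: float
    expiry_years: float
    iv: float        # annualized implied volatility
    quantity: float  # positive = long, negative = short


def bs_greeks(spot, pos, rate=0.0):
    """Black-Scholes delta, gamma, and vega (per 1.0 change in vol) for one option."""
    sqrt_t = math.sqrt(pos.expiry_years)
    d1 = (math.log(spot / pos.strike)
          + (rate + 0.5 * pos.iv ** 2) * pos.expiry_years) / (pos.iv * sqrt_t)
    delta = _norm_cdf(d1) if pos.is_call else _norm_cdf(d1) - 1.0
    gamma = _norm_pdf(d1) / (spot * pos.iv * sqrt_t)
    vega = spot * _norm_pdf(d1) * sqrt_t
    return delta, gamma, vega


def portfolio_greeks(spot, positions, rate=0.0):
    """Quantity-weighted sum of each Greek across all positions."""
    totals = [0.0, 0.0, 0.0]
    for pos in positions:
        for i, greek in enumerate(bs_greeks(spot, pos, rate)):
            totals[i] += pos.quantity * greek
    return dict(zip(("delta", "gamma", "vega"), totals))
```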

Visualizing protocol data allows for the real-time identification of systemic leverage accumulation and impending liquidity stress.

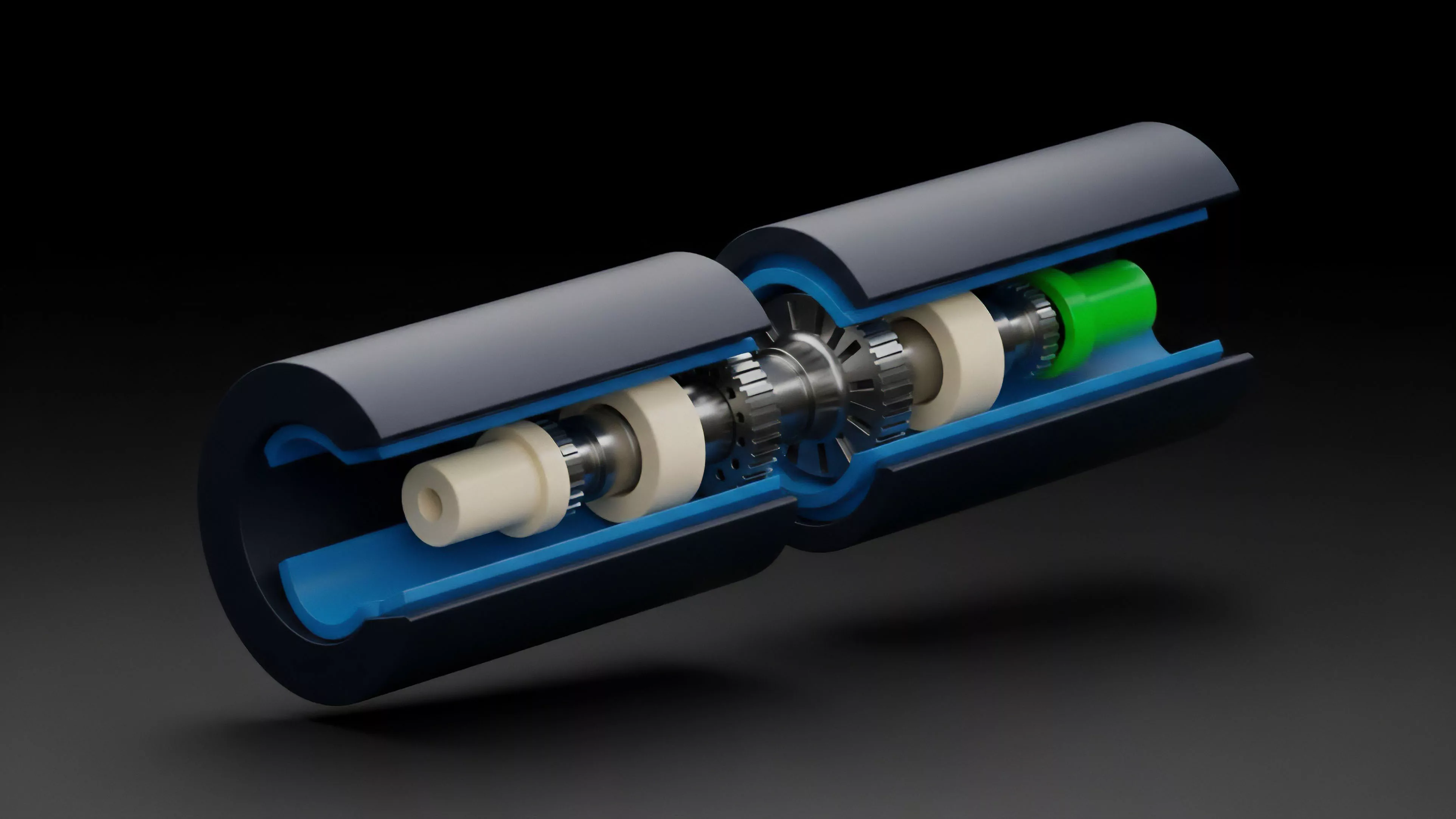

The technical architecture involves a three-tier stack:

- Indexing: High-throughput ingestion of blockchain events into relational or graph databases.

- Transformation: Applying quantitative formulas to raw logs to generate volatility surfaces and exposure profiles.

- Presentation: Rendering these metrics through interfaces that highlight anomalies in order flow or margin health.
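
The three tiers can be sketched end to end with Python's built-in `sqlite3` module standing in for the indexing database. The liquidation table schema, the per-block aggregation, and the two-standard-deviation anomaly rule are illustrative assumptions rather than a prescribed design.

```python
import sqlite3
from statistics import mean, pstdev


def index_events(conn, events):
    """Indexing tier: persist decoded liquidation events in a relational table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS liquidations (block INTEGER, account TEXT, debt_usd REAL)"
    )
    conn.executemany("INSERT INTO liquidations VALUES (?, ?, ?)", events)


def transform(conn):
    """Transformation tier: total liquidated debt per block, in block order."""
    return conn.execute(
        "SELECT block, SUM(debt_usd) FROM liquidations GROUP BY block ORDER BY block"
    ).fetchall()


def present(series, k=2.0):
    """Presentation tier: one line per block, flagging totals > k std devs above the mean."""
    values = [v for _, v in series]
    mu, sigma = mean(values), pstdev(values)
    lines = []
    for block, total in series:
        flag = " <-- anomaly" if sigma > 0 and total > mu + k * sigma else ""
        lines.append(f"block {block}: ${total:,.0f}{flag}")
    return lines
```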

These systems are often adversarial. Participants compete to identify mispriced options or inefficient liquidation thresholds, which makes the speed and accuracy of the visualization layer a competitive advantage. The work is a constant exercise in decoding the protocol's state machine to anticipate its next state transition.

Approach

Current implementations focus on modularity and low-latency updates.

Professional market participants use custom-built dashboards that aggregate data from multiple decentralized venues to gain a unified view of market exposure. Reliance has shifted from centralized indexers toward decentralized, peer-to-peer data querying protocols, which aim to ensure the integrity of the information stream.
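
At its simplest, a unified cross-venue view reduces to netting signed exposure per asset. In this sketch the `VenuePosition` record and the venue labels are hypothetical; a real dashboard would first map each venue's contract data into such a common shape.

```python
from collections import defaultdict
from typing import NamedTuple


class VenuePosition(NamedTuple):
    venue: str    # hypothetical venue label
    asset: str
    delta: float  # signed delta exposure in units of the asset


def aggregate_exposure(positions):
    """Net and gross delta per asset across all venues."""
    totals = defaultdict(lambda: {"net": 0.0, "gross": 0.0})
    for p in positions:
        totals[p.asset]["net"] += p.delta         # offsetting venues cancel out
        totals[p.asset]["gross"] += abs(p.delta)  # total size regardless of direction
    return dict(totals)
```

Tracking gross alongside net exposure matters because a flat net position can still carry venue-specific liquidation risk on each leg.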

| Metric Category | Analytical Focus |
| --- | --- |
| Liquidity Depth | Slippage modeling and order book density |
| Risk Exposure | Delta, Gamma, and Vega sensitivity analysis |
| Systemic Health | Liquidation thresholds and collateralization ratios |
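
For the Systemic Health row, here is a minimal sketch of per-account metrics. It assumes a single collateral asset and a health factor defined as collateral value times liquidation threshold divided by debt, the convention used by Aave-style lending markets; other protocols define thresholds differently. The 1.1 early-warning buffer is a hypothetical choice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    collateral_qty: float         # units of the single collateral asset
    debt_usd: float               # outstanding debt in USD
    liquidation_threshold: float  # e.g. 0.8: liquidatable once debt exceeds 80% of collateral value


def collateralization_ratio(acct, price):
    """Collateral value divided by debt; infinite for debt-free accounts."""
    if acct.debt_usd == 0:
        return float("inf")
    return acct.collateral_qty * price / acct.debt_usd


def health_factor(acct, price):
    """Threshold-weighted collateral over debt; below 1.0 the account is liquidatable."""
    if acct.debt_usd == 0:
        return float("inf")
    return acct.collateral_qty * price * acct.liquidation_threshold / acct.debt_usd


def liquidation_price(acct):
    """Collateral price at which the health factor falls to exactly 1.0."""
    return acct.debt_usd / (acct.collateral_qty * acct.liquidation_threshold)


def share_at_risk(accounts, price, buffer=1.1):
    """Fraction of accounts whose health factor is below a hypothetical warning buffer."""
    if not accounts:
        return 0.0
    return sum(health_factor(a, price) < buffer for a in accounts) / len(accounts)
```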

The strategic application of these tools requires understanding the specific constraints of each protocol. For example, visualizing the order flow of a constant product market maker differs significantly from tracking an order-book-based derivative exchange. The architect must account for gas costs and block finality when determining how frequently the visualization updates.
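
The difference between the two venue types shows up directly in how slippage is computed. The sketch below compares buying a fixed size from a constant product pool (x * y = k, fees ignored) with walking the ask side of an order book. The reserve sizes and ask levels in the usage note are made-up inputs.

```python
def cpmm_buy(base_reserve, quote_reserve, size):
    """Quote cost and slippage vs spot for buying `size` base from an x*y=k pool (no fees)."""
    if not 0 < size < base_reserve:
        raise ValueError("size must be positive and smaller than the base reserve")
    # (x - size) * (y + cost) = x * y  =>  cost = y * size / (x - size)
    cost = quote_reserve * size / (base_reserve - size)
    spot = quote_reserve / base_reserve
    return cost, (cost / size) / spot - 1.0


def book_buy(asks, size):
    """Walk ascending asks [(price, qty), ...]; return cost and slippage vs best ask."""
    remaining, cost = size, 0.0
    for price, qty in asks:
        take = min(remaining, qty)
        cost += take * price
        remaining -= take
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("insufficient depth to fill the order")
    return cost, (cost / size) / asks[0][0] - 1.0
```

With a pool of 100 base and 200,000 quote units (spot 2,000), buying 10 base costs about 22,222 quote, roughly 11.1% above spot. An order book with asks of 5 at 2,000 and 5 at 2,010 fills the same size at an average of 2,005, or 0.25% above its best ask. The pool's price impact follows from the reserve curve, while the book's depends on how resting liquidity happens to be distributed.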

Evolution

The transition from static, retrospective reports to live, predictive interfaces marks the current frontier.

Historical data analysis provided a foundation for understanding past market cycles, but the demand for predictive modeling has forced a redesign of how data is queried and rendered.

Predictive data visualization enables proactive risk mitigation in high-velocity decentralized derivative markets.

The field has moved toward integrating machine learning models directly into the visualization stack. These models detect patterns in order flow that precede significant volatility events, providing a warning system for liquidity providers. The technical barrier to entry has risen, requiring a deep integration of blockchain engineering, quantitative finance, and user experience design.
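
The text does not specify which machine learning models are used, so the sketch below substitutes a deliberately simple statistical baseline rather than a learned model: a trailing-window z-score that flags values (for example, per-block order-flow imbalance) far outside recent behavior. The window length and threshold are arbitrary assumptions.

```python
from collections import deque
from statistics import mean, pstdev


def rolling_zscore_alerts(series, window=20, threshold=3.0):
    """Return (index, value, z) for points more than `threshold` std devs from the trailing window.

    Each point is scored only against the previous `window` points,
    so a spike cannot dampen its own z-score.
    """
    history = deque(maxlen=window)
    alerts = []
    for i, x in enumerate(series):
        if len(history) == window:
            past = list(history)
            mu, sigma = mean(past), pstdev(past)
            if sigma > 0:  # a perfectly flat window has no scale to compare against
                z = (x - mu) / sigma
                if abs(z) > threshold:
                    alerts.append((i, x, z))
        history.append(x)
    return alerts
```

A baseline like this is also useful for judging whether a more complex model actually adds warning lead time.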

The human element remains a significant variable in this system. When data indicates a potential liquidation, the speed of manual reaction is often insufficient, forcing a reliance on automated execution agents that are themselves informed by these visualization protocols.

Horizon

The next phase involves the integration of decentralized identity and reputation metrics into the visualization layer. Future interfaces will allow participants to filter market data based on the behavior of specific cohorts, such as institutional liquidity providers or long-term vault strategies.

This segmentation will provide deeper insights into the strategic intent behind large-scale capital movements.
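
Cohort segmentation could be prototyped as a label lookup followed by aggregation. The address labels below are hypothetical placeholders for whatever identity or reputation source a future interface might consume.

```python
from collections import defaultdict

# Hypothetical address-to-cohort labels; a real system would source these
# from an identity or reputation layer.
COHORT_LABELS = {
    "0xaaa": "institutional_lp",
    "0xbbb": "vault_strategy",
    "0xccc": "institutional_lp",
}


def net_flow_by_cohort(transfers, labels=COHORT_LABELS, default="unlabeled"):
    """Sum signed flows per cohort; deposits are positive, withdrawals negative."""
    totals = defaultdict(float)
    for address, amount in transfers:
        totals[labels.get(address, default)] += amount
    return dict(totals)


def filter_cohort(transfers, cohort, labels=COHORT_LABELS):
    """Keep only transfers from addresses labeled with `cohort`."""
    return [t for t in transfers if labels.get(t[0]) == cohort]
```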

- Real-time Simulation: Integrating “what-if” scenarios directly into the interface to test portfolio resilience against market shocks.

- Cross-Protocol Aggregation: Synthesizing data from multiple chains to provide a holistic view of decentralized financial exposure.

- Automated Alerting: Transitioning from visual monitoring to programmatic triggers based on complex protocol state conditions.
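
The simulation and alerting items can be combined in a small sketch: apply hypothetical price shocks to a set of single-collateral positions, measure how much debt would become liquidatable, and fire a programmatic trigger when that share crosses a threshold. The health-factor rule, the shock grid, and the 25% trigger are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Position:
    collateral_qty: float
    debt_usd: float
    liquidation_threshold: float  # assumed Aave-style threshold, e.g. 0.8


def liquidatable_debt(positions, price):
    """Total debt whose health factor (collateral * price * threshold / debt) is below 1."""
    return sum(
        p.debt_usd for p in positions
        if p.debt_usd > 0
        and p.collateral_qty * price * p.liquidation_threshold / p.debt_usd < 1.0
    )


def stress_test(positions, spot, shocks=(-0.1, -0.2, -0.3)):
    """What-if scenarios: liquidatable debt after each relative price shock."""
    return {shock: liquidatable_debt(positions, spot * (1 + shock)) for shock in shocks}


def check_alert(positions, spot, shock=-0.2, max_share=0.25):
    """Trigger when more than `max_share` of total debt becomes liquidatable under `shock`."""
    total = sum(p.debt_usd for p in positions)
    at_risk = liquidatable_debt(positions, spot * (1 + shock))
    return total > 0 and at_risk / total > max_share
```

In an automated setup, `check_alert` would run on each new block and hand off to an execution agent instead of waiting for a human to read the chart.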

The ultimate goal is the creation of a transparent, self-correcting financial system where data visualization acts as the primary regulatory mechanism. As protocols become more complex, the ability to clearly represent their internal state will dictate which systems attract sustained liquidity and institutional confidence.