Essence

Pre-computation in decentralized finance represents a necessary architectural pattern for high-performance derivatives markets. The core challenge of on-chain options trading is the high computational cost and latency associated with calculating option pricing and risk parameters. Unlike traditional finance, where calculations run on dedicated, centralized servers, a decentralized environment forces every computation to compete for limited block space and resources.

This constraint makes real-time, dynamic risk management, a requirement for sophisticated strategies, impractical on the base layer. Pre-computation solves this by moving resource-intensive calculations off-chain, performing them in advance, and then submitting only the resulting data to the smart contract for verification and settlement. This approach allows protocols to offer complex products that require continuous risk monitoring, such as dynamic hedging or portfolio-level margin calculations, without incurring prohibitive gas costs for every state change.

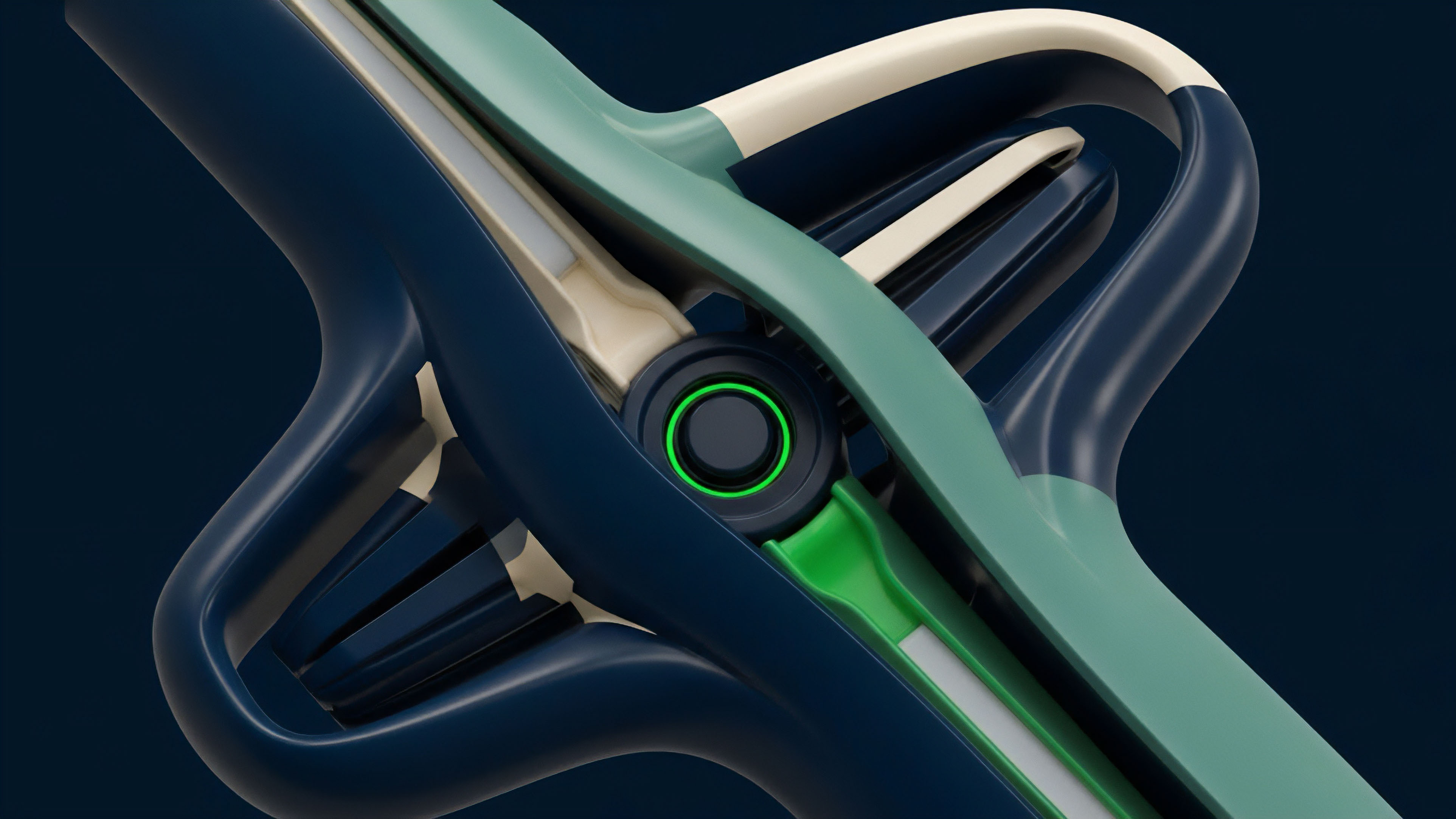

Pre-computation allows for the efficient execution of complex financial operations on a decentralized network by performing resource-intensive calculations off-chain.

The concept applies broadly to the calculation of option sensitivities, commonly known as the Greeks, and the construction of volatility surfaces. The Greeks measure how an option’s value changes with the underlying price (Delta), the passage of time (Theta), or volatility (Vega). Vanilla European options have closed-form Greeks under Black-Scholes, but more complex instruments require numerical methods such as Monte Carlo simulation or finite difference schemes for the underlying partial differential equations. Performing these calculations on a blockchain would consume vast amounts of gas, making it economically irrational for a user to execute a trade or for a protocol to maintain accurate, up-to-date risk parameters for its entire liquidity pool.
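As a point of reference, the simplest case can be sketched in a few lines. The example below computes the price and Greeks of a European call under Black-Scholes off-chain. It is a minimal sketch, not a production pricer: the function name `bs_call_greeks` and its parameter names are illustrative, the model assumes no dividends and constant volatility, and Theta and Vega are expressed per year and per unit of volatility.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def bs_call_greeks(spot: float, strike: float, rate: float, vol: float, t: float) -> dict:
    """Black-Scholes price and Greeks for a European call (no dividends).

    `t` is time to expiry in years, `vol` is annualized volatility.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discount = math.exp(-rate * t)
    return {
        "price": spot * norm_cdf(d1) - strike * discount * norm_cdf(d2),
        "delta": norm_cdf(d1),
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_t),
        "vega": spot * norm_pdf(d1) * sqrt_t,
        "theta": -spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
        - rate * strike * discount * norm_cdf(d2),
    }


# Example call with made-up inputs: at-the-money, one year to expiry.
greeks = bs_call_greeks(spot=100.0, strike=100.0, rate=0.05, vol=0.2, t=1.0)
```

Even this closed-form case needs a logarithm, an exponential, a square root, and two evaluations of the normal CDF per option, which hints at why repeating it on-chain for every strike and expiry is costly.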

Pre-computation is the mechanism that bridges the gap between the computational requirements of quantitative finance and the technical constraints of blockchain physics.

Origin

The origin of pre-computation in crypto derivatives stems directly from the limitations of early decentralized exchanges and options protocols. The initial designs for on-chain derivatives protocols often attempted to perform all necessary calculations within the smart contract logic. This “full on-chain” approach quickly proved untenable as transaction costs escalated during periods of network congestion.

The first generation of protocols struggled with two fundamental issues: first, accurately pricing options in real time, and second, managing the risk of collateralized positions without constant re-evaluation. If a protocol cannot quickly recalculate a user’s margin requirements when market conditions change, it risks becoming insolvent during rapid price movements. This led to a critical realization: a high-throughput financial system cannot be built if every calculation requires a full network consensus.

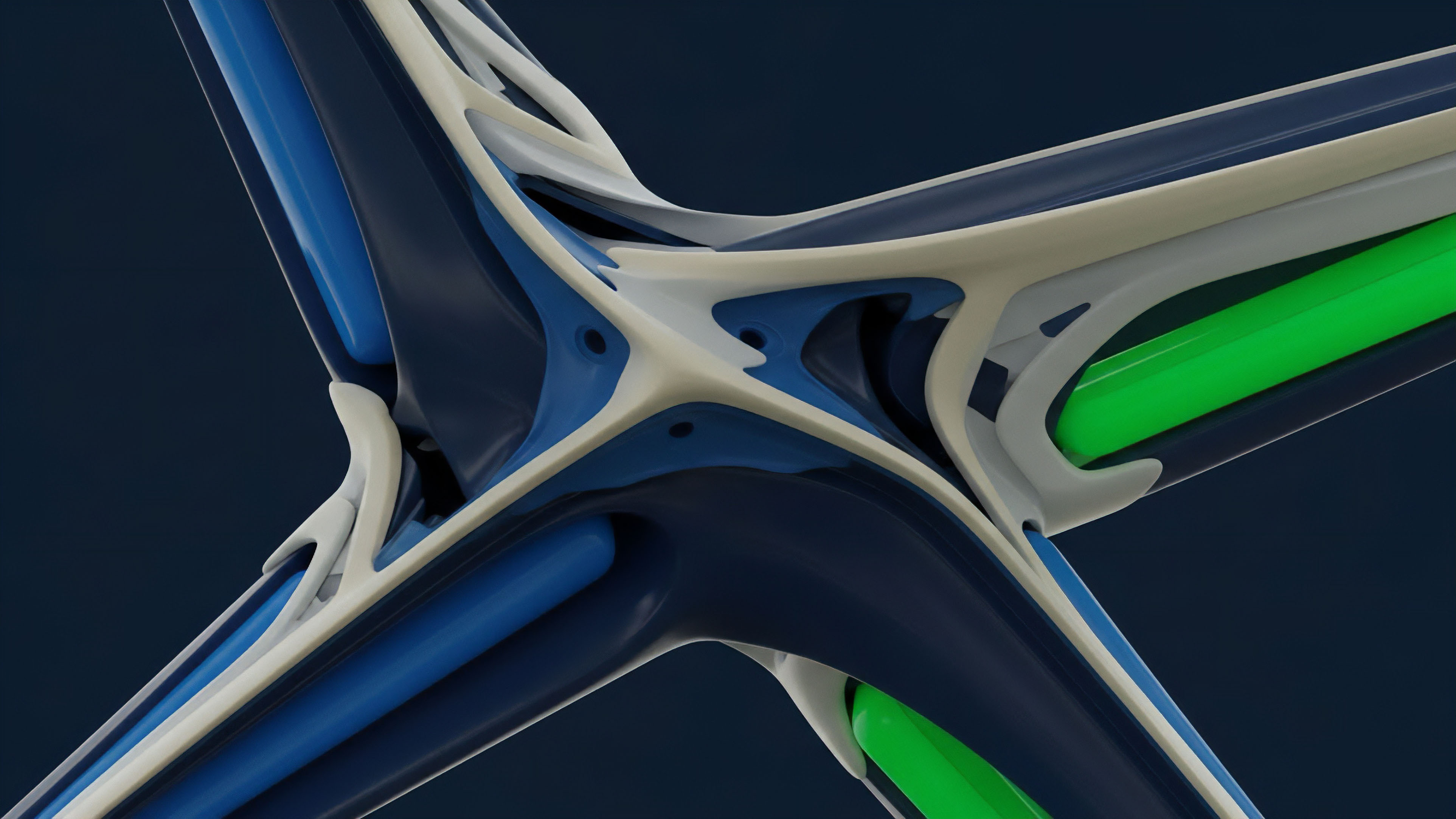

The shift to pre-computation reflects a design decision to minimize on-chain computation while maximizing off-chain efficiency. This mirrors a common pattern in decentralized architecture where “trust minimization” replaces “full decentralization” as the primary objective. The core idea is to move the heavy lifting to a more efficient off-chain environment while retaining the final verification step on-chain.

The inspiration for this approach comes from traditional finance’s high-frequency trading infrastructure, where complex calculations are performed on low-latency servers, allowing for instantaneous pricing and order matching. The challenge for DeFi was to adapt this model to a trustless environment, ensuring that the pre-computed data submitted to the blockchain was verifiably correct, rather than simply trusted due to the reputation of a centralized entity.

Theory

The theoretical foundation of pre-computation rests on the separation of computational complexity from on-chain state changes. In options pricing, the Black-Scholes model and its variations require evaluating the cumulative distribution function of the standard normal distribution, along with logarithms and exponentials. These operations are cheap on conventional hardware but costly in smart contract environments, which typically lack native floating-point arithmetic. For more complex options, numerical methods are required.

For grid-based numerical methods, the computational cost grows exponentially with the number of state variables, and higher precision demands finer grids or more simulation paths. Pre-computation recognizes that these results can be generated off-chain, so that only the result and evidence of its correctness (a signature, a consensus among reporters, or a cryptographic proof) need to be submitted on-chain. This reduces the on-chain cost from running the calculation itself to verifying the integrity of the data.
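The split between computing and verifying can be illustrated with a small sketch. An off-chain engine attaches an authentication tag to a result, and a stand-in for the contract checks the tag instead of redoing the calculation. Everything here is an assumption made for illustration: the payload fields, the key, and the use of HMAC from the Python standard library. A real protocol would use an asymmetric signature or a validity proof, since a shared secret cannot live in a public contract.

```python
import hashlib
import hmac
import json

# Hypothetical key for the demo only; a real system would use a key pair.
DEMO_KEY = b"demo-key-not-for-production"


def sign_payload(payload: dict, key: bytes) -> str:
    """Off-chain side: serialize the result deterministically and tag it."""
    message = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_payload(payload: dict, tag: str, key: bytes) -> bool:
    """Contract stand-in: a cheap integrity check, no repricing required."""
    expected = sign_payload(payload, key)
    return hmac.compare_digest(expected, tag)


# Values are fixed-point integers (scaled by 1e6), mirroring how contracts
# usually represent decimals. The numbers themselves are made up.
result = {"strike": 100_000_000, "expiry": 1_700_000_000, "price": 10_450_584}
tag = sign_payload(result, DEMO_KEY)
accepted = verify_payload(result, tag, DEMO_KEY)
```

The verification step costs one hash computation regardless of how expensive the original calculation was, which is the asymmetry the pattern relies on.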

The Greeks and Volatility Surfaces

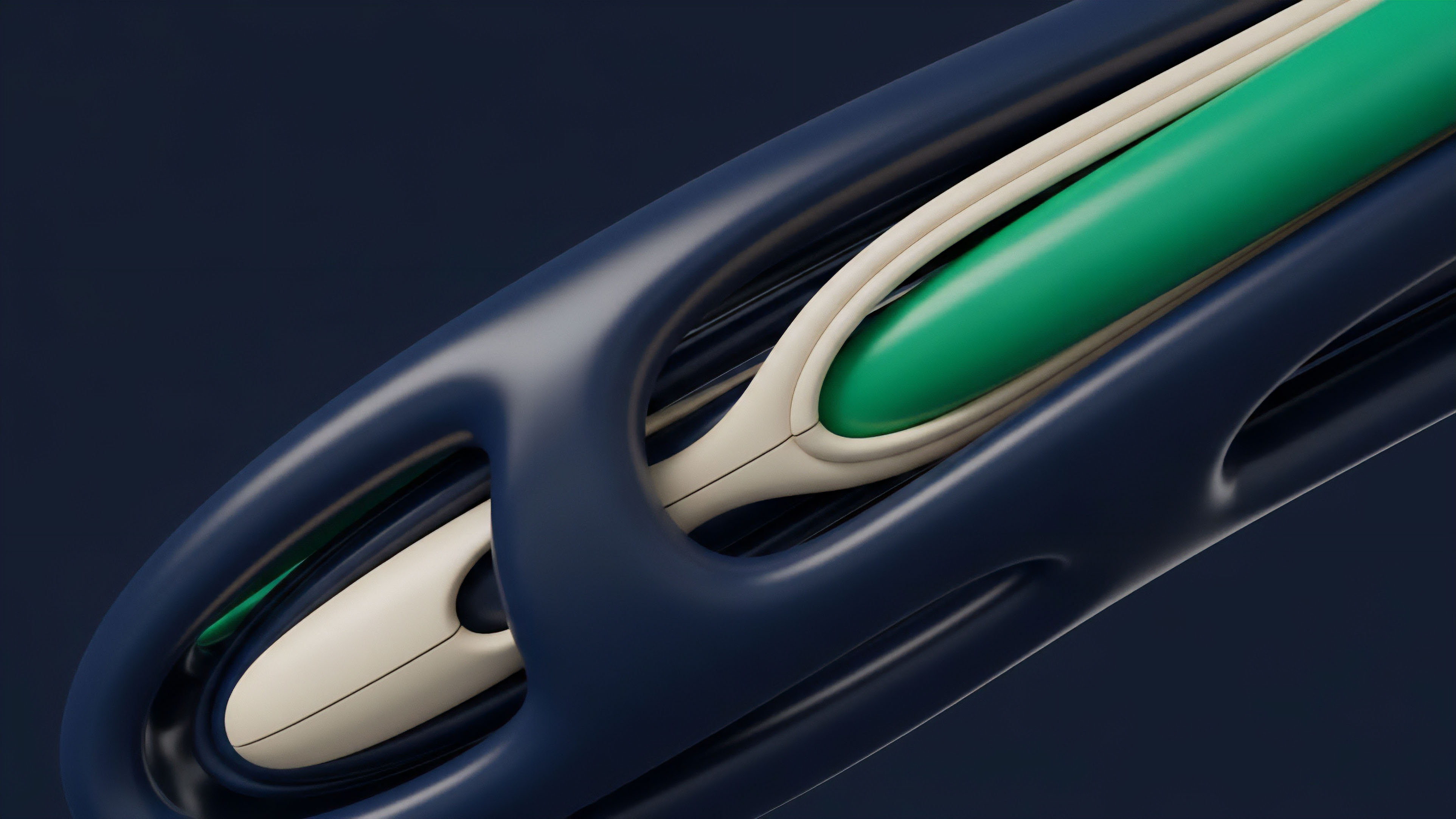

A primary application of pre-computation is the generation of a volatility surface. The implied volatility of an option changes based on its strike price and time to expiration, creating a three-dimensional surface. Market makers and risk managers require real-time access to this surface to price options accurately and manage their portfolio risk.

A pre-computation engine generates this surface off-chain by collecting market data, applying a model (such as Black-Scholes or stochastic volatility models like Heston), and calculating the implied volatility for various strikes and maturities. This data is then cached and updated continuously. The on-chain protocol simply references a specific point on this pre-computed surface when needed for a transaction or risk check.
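The surface-building step described above can be sketched as follows, assuming Black-Scholes as the pricing model and bisection as the root finder. The names `implied_vol` and `build_surface` are illustrative, and the quotes are expected as a mapping from `(strike, expiry)` to an observed call price. This is a minimal sketch that ignores bid-ask spreads, arbitrage checks, and interpolation between grid points.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price: float, spot: float, strike: float, rate: float, t: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Invert Black-Scholes by bisection; the call price is increasing in vol."""
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, rate, mid, t) > price:
            hi = mid  # model price too high, so the true vol is lower
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


def build_surface(quotes: dict, spot: float, rate: float) -> dict:
    """Map each (strike, expiry) quote to its implied volatility."""
    return {
        (strike, expiry): implied_vol(price, spot, strike, rate, expiry)
        for (strike, expiry), price in quotes.items()
    }
```

The resulting dictionary plays the role of the cached surface: a contract or risk check would look up a single `(strike, expiry)` key rather than solve for volatility itself.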

The calculation of Greeks, particularly Gamma, benefits immensely from pre-computation. Gamma measures the rate of change of Delta. High Gamma means an option’s Delta changes rapidly with price movements, making risk management challenging.

A protocol needs to know the Gamma of its positions to understand how much re-hedging is required. By pre-calculating Gamma across a range of possible underlying prices, the protocol can anticipate re-hedging requirements and adjust margin thresholds preemptively, preventing sudden liquidations or protocol insolvency. The off-chain engine acts as a continuous risk monitor, constantly feeding updated risk metrics to the on-chain system.
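Pre-calculating Gamma across a range of underlying prices might look like the sketch below. It assumes Black-Scholes Gamma and represents each position as a hypothetical `(quantity, strike, vol, expiry)` tuple; the function names are illustrative rather than taken from any protocol.

```python
import math


def norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def bs_gamma(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Black-Scholes Gamma; identical for calls and puts."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    return norm_pdf(d1) / (spot * vol * sqrt_t)


def gamma_ladder(positions: list, spots: list, rate: float) -> dict:
    """Net portfolio Gamma at each hypothetical underlying price.

    Each position is (quantity, strike, vol, expiry_in_years); a negative
    quantity denotes a short position.
    """
    return {
        spot: sum(qty * bs_gamma(spot, strike, rate, vol, t)
                  for qty, strike, vol, t in positions)
        for spot in spots
    }


# Made-up book: long one option struck at 100, three months to expiry.
ladder = gamma_ladder([(1.0, 100.0, 0.5, 0.25)], [60.0, 80.0, 100.0, 120.0, 140.0], 0.0)
```

A ladder like this shows where Gamma concentrates (near the strike in the example), so margin thresholds can be tightened before the underlying reaches that region.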

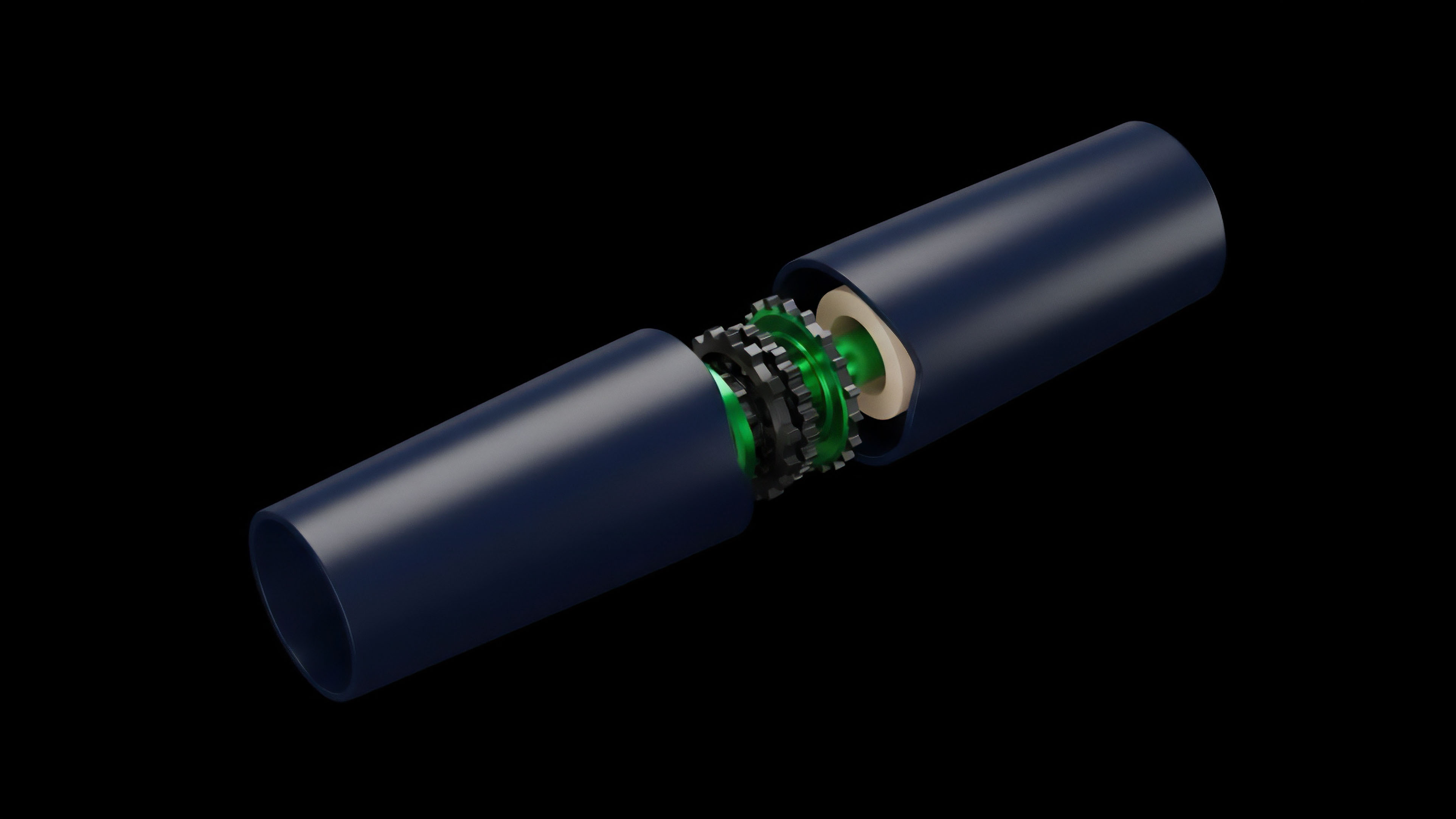

The core trade-off here is between latency and trust. Centralized pre-computation offers minimal latency, but introduces a single point of failure and requires trusting the off-chain entity. Decentralized pre-computation, often implemented through optimistic rollups or zero-knowledge proofs, adds a layer of verification to mitigate this trust requirement, though often at the cost of increased latency compared to a fully centralized system.

Approach

The implementation of pre-computation requires a structured approach to bridge the gap between off-chain calculation and on-chain verification. The current approaches vary in their level of decentralization and their reliance on different cryptographic proofs. A protocol must choose its method based on its risk tolerance and desired speed.

Off-Chain Computation Architectures

- Centralized Off-Chain Server: The simplest approach involves a single, trusted entity running the calculation engine off-chain. This entity calculates the required data (prices, Greeks, liquidation thresholds) and signs the result cryptographically. The smart contract verifies the signature before accepting the data. This method offers high speed and low cost, but introduces counterparty risk in the form of a single, trusted oracle.

- Decentralized Oracle Networks: This method distributes the pre-computation task among a network of independent nodes. Each node performs the calculation and submits its result. The smart contract then verifies a consensus among the submitted results. This reduces trust in a single entity but increases latency and complexity.

- Optimistic Pre-computation: In this model, an off-chain server submits the pre-computed data optimistically. The data is assumed to be correct unless challenged by another participant during a specified time window. If a challenge occurs, the calculation is performed on-chain to verify the result, and a submitter whose data proves incorrect is penalized. This provides a balance between efficiency and security.
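The optimistic model in the last item can be sketched as a small state machine. The class and field names below are hypothetical, timestamps are plain integers, and the "on-chain" recomputation is any deterministic function passed in by the caller; bonds and payouts are left out to keep the sketch short.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Claim:
    submitter: str
    inputs: Tuple[int, ...]
    claimed_result: int
    submitted_at: int
    status: str = "pending"  # "pending", "finalized", or "slashed"


class OptimisticRegistry:
    """Accepts results optimistically and recomputes only when challenged."""

    def __init__(self, recompute: Callable[..., int], challenge_window: int) -> None:
        self.recompute = recompute
        self.challenge_window = challenge_window

    def submit(self, submitter: str, inputs: Tuple[int, ...],
               claimed_result: int, now: int) -> Claim:
        return Claim(submitter, inputs, claimed_result, now)

    def challenge(self, claim: Claim, now: int) -> bool:
        """Return True if the challenge succeeds and the claim is slashed."""
        deadline = claim.submitted_at + self.challenge_window
        if claim.status != "pending" or now >= deadline:
            return False  # already resolved, or the window has closed
        if self.recompute(*claim.inputs) != claim.claimed_result:
            claim.status = "slashed"
            return True
        return False  # the claim was correct; the challenge fails

    def finalize(self, claim: Claim, now: int) -> bool:
        """Finalize an unchallenged claim once the window has elapsed."""
        deadline = claim.submitted_at + self.challenge_window
        if claim.status == "pending" and now >= deadline:
            claim.status = "finalized"
            return True
        return False


def intrinsic_value(spot: int, strike: int) -> int:
    """Toy deterministic calculation standing in for the expensive one."""
    return max(spot - strike, 0)
```

The expensive function runs only inside `challenge`, so in the common case where nobody disputes a claim the chain never pays for the calculation. The cost is the waiting period before `finalize` succeeds.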

Pre-Computation for Liquidation Engines

For options protocols, pre-computation is essential for efficient liquidation engines. When a user’s collateral ratio falls below a certain threshold, their position must be liquidated to protect the protocol’s solvency. Calculating this ratio in real time for every user during high market volatility is computationally intensive.

Pre-computation allows the protocol to constantly monitor all positions off-chain. When a position approaches the liquidation threshold, the off-chain engine alerts the on-chain contract, triggering the liquidation. This allows the protocol to act proactively, rather than reactively, to market movements.
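A minimal sketch of such an off-chain monitor follows. The `Position` fields, the threshold values, and the two-tier output (positions to liquidate now, positions to watch) are assumptions made for illustration, not the design of any specific protocol.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Position:
    owner: str
    collateral: float  # current value of posted collateral
    liability: float   # current mark-to-market value owed


def collateral_ratio(position: Position) -> float:
    """Collateral divided by liability; infinite when nothing is owed."""
    if position.liability == 0:
        return math.inf
    return position.collateral / position.liability


def scan(positions: List[Position], liquidation_ratio: float,
         warning_buffer: float) -> Tuple[List[str], List[str]]:
    """Split owners into those to liquidate and those close to the threshold."""
    liquidatable: List[str] = []
    at_risk: List[str] = []
    for position in positions:
        ratio = collateral_ratio(position)
        if ratio < liquidation_ratio:
            liquidatable.append(position.owner)
        elif ratio < liquidation_ratio + warning_buffer:
            at_risk.append(position.owner)
    return liquidatable, at_risk


# Made-up book scanned against a 1.2 liquidation ratio with a 0.1 buffer.
book = [
    Position("a", 200.0, 100.0),
    Position("b", 125.0, 100.0),
    Position("c", 110.0, 100.0),
]
to_liquidate, to_watch = scan(book, liquidation_ratio=1.2, warning_buffer=0.1)
```

Only the owners in `to_liquidate` would need an on-chain transaction; the contract can then re-check the ratio for that single position instead of iterating over every account.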

A comparison of pre-computation methods for derivatives protocols highlights the trade-offs in design:

| Method | Trust Model | On-Chain Cost | Latency |
|---|---|---|---|
| Centralized Oracle | High Trust Requirement | Low | Minimal |
| Decentralized Oracle Network | Consensus-based Trust | Medium | Medium |
| Optimistic Rollup | Trustless with Challenge Period | Medium/High (on challenge) | High (challenge period) |
| ZK Rollup (Future) | Trustless with Proof Verification | High (proof verification) | Low |

Evolution

The evolution of pre-computation in crypto options mirrors the increasing sophistication of decentralized financial instruments. Initially, pre-computation focused on basic pricing feeds, providing a single price point for an underlying asset to calculate simple margin requirements. The next stage involved pre-calculating simple option pricing using models like Black-Scholes.

The current state, however, moves beyond simple pricing to dynamic risk management. Protocols now pre-calculate entire volatility surfaces and a range of Greeks for multiple strikes and expirations. This allows for more precise risk modeling and enables complex strategies like portfolio margining, where the risk of multiple positions is calculated together rather than individually.
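The benefit of calculating risk jointly can be illustrated with a scenario-based margin sketch. It assumes Black-Scholes repricing under a handful of spot shocks and defines margin as the worst-case loss across scenarios; the shock grid, the position tuples, and the function names are all hypothetical, and real systems also shock volatility and time.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def scenario_pnl(position: tuple, spot: float, shock: float, rate: float) -> float:
    """Profit or loss of one (quantity, strike, vol, expiry) call under a spot shock."""
    qty, strike, vol, t = position
    base = bs_call(spot, strike, rate, vol, t)
    shocked = bs_call(spot * (1.0 + shock), strike, rate, vol, t)
    return qty * (shocked - base)


def isolated_margin(positions: list, spot: float, shocks: list, rate: float) -> float:
    """Sum of each position's own worst-case loss."""
    return sum(
        max(0.0, -min(scenario_pnl(p, spot, s, rate) for s in shocks))
        for p in positions
    )


def portfolio_margin(positions: list, spot: float, shocks: list, rate: float) -> float:
    """Worst-case loss of the combined portfolio across scenarios."""
    worst = min(sum(scenario_pnl(p, spot, s, rate) for p in positions) for s in shocks)
    return max(0.0, -worst)


# Made-up call spread: long the 100 strike, short the 110 strike.
spread = [(1.0, 100.0, 0.5, 0.25), (-1.0, 110.0, 0.5, 0.25)]
shocks = [-0.2, -0.1, 0.0, 0.1, 0.2]
joint = portfolio_margin(spread, 100.0, shocks, 0.0)
separate = isolated_margin(spread, 100.0, shocks, 0.0)
```

For the spread above, `joint` comes out below `separate` because the two legs lose money in opposite scenarios. The portfolio figure can never exceed the isolated one, since the worst case of a sum is at least the sum of the individual worst cases.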

The shift from single-point pricing to dynamic risk surface modeling represents the maturation of pre-computation in decentralized derivatives.

A significant shift in the evolution of pre-computation is the move from simple data feeds to proof-based computation. Early protocols relied on a “trusted” oracle to submit pre-computed data. The current trend is to integrate zero-knowledge proofs (ZKPs) into the pre-computation process.

A ZKP allows the off-chain computation to generate a cryptographic proof that verifies the accuracy of the calculation without revealing the input data. This removes the need for a challenge period or a trusted third party, offering a truly trustless method for off-chain calculation. The development of specialized ZK-friendly algorithms for financial modeling is a key area of research that will define the next generation of options protocols.

The increasing complexity of pre-computation has also driven a need for standardized interfaces and data formats. As protocols integrate more advanced risk models, the ability to share pre-computed data efficiently between different protocols becomes essential for liquidity and interoperability. This leads to the development of dedicated pre-computation services that function as a shared utility layer for the entire DeFi derivatives space.

Horizon

Looking ahead, pre-computation will transition from a necessary workaround for current blockchain limitations to a core component of future decentralized market infrastructure. The next frontier involves moving beyond static data pre-calculation to predictive pre-computation. This involves using machine learning models off-chain to predict future volatility or market movements.

These predictive models may generate more accurate volatility surfaces than traditional models, which would give a significant advantage to protocols that can integrate them effectively. The challenge lies in creating a trustless mechanism for verifying the results of these complex, non-deterministic models.

Another area of development is the integration of pre-computation with exotic options. Current DeFi options are primarily vanilla (calls and puts). Exotic options, such as Asian or barrier options, have payoffs dependent on complex paths or conditions.

The computational overhead for pricing and risk-managing these instruments is immense. Pre-computation will enable the creation of these products by providing the necessary computational power off-chain, opening up a new dimension of risk management and yield generation strategies within decentralized markets. The architectural challenge here is designing protocols that can efficiently handle the verification of these path-dependent calculations.
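To make the path dependence concrete, the sketch below prices an arithmetic-average Asian call by Monte Carlo simulation. It assumes geometric Brownian motion under the risk-neutral measure with equally spaced averaging dates; the function name and parameters are illustrative, and the fixed seed exists only to make runs reproducible.

```python
import math
import random


def asian_call_mc(spot: float, strike: float, rate: float, vol: float, t: float,
                  steps: int, paths: int, seed: int = 0) -> float:
    """Monte Carlo price of an arithmetic-average Asian call.

    The payoff depends on the average of the simulated price at each of the
    `steps` monitoring dates, so the whole path must be generated.
    """
    rng = random.Random(seed)
    dt = t / steps
    drift = (rate - 0.5 * vol * vol) * dt
    diffusion = vol * math.sqrt(dt)
    payoff_sum = 0.0
    for _ in range(paths):
        price = spot
        running_total = 0.0
        for _ in range(steps):
            # Exact log-normal step for geometric Brownian motion.
            price *= math.exp(drift + diffusion * rng.gauss(0.0, 1.0))
            running_total += price
        average = running_total / steps
        payoff_sum += max(average - strike, 0.0)
    return math.exp(-rate * t) * payoff_sum / paths


# Example call with made-up inputs.
estimate = asian_call_mc(100.0, 100.0, 0.05, 0.2, 1.0, steps=50, paths=4000, seed=1)
```

The work grows with `steps * paths`, and each path needs a fresh stream of random draws. Re-running a loop like this inside a contract for every trade is the overhead the paragraph above refers to, and it is also why verifying such a result is harder than verifying a closed-form price.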

The long-term vision for pre-computation sees a future where, from a user’s perspective, verified off-chain computation becomes indistinguishable from on-chain execution. As ZK technology advances, protocols will be able to prove complex calculations in near real time, effectively eliminating the trade-off between speed and trust. This will allow decentralized derivatives markets to rival traditional finance in both speed and product complexity, while retaining the core benefits of transparency and permissionless access.
