Essence

Order Book Depth Stability Monitoring Systems function as the analytical bedrock for assessing liquidity resilience within decentralized exchange environments. These systems quantify the capacity of a market to absorb significant trade volume without triggering disproportionate price slippage. By tracking the distribution of limit orders across the bid and ask sides of the order book, these tools provide a real-time diagnostic of market health.

These monitoring frameworks provide the quantitative infrastructure required to detect liquidity fragmentation and impending volatility spikes before they manifest in price action.

At their core, these systems translate raw order flow data into actionable metrics, such as market impact cost and order book slope. These metrics allow market participants to distinguish genuine liquidity from the ephemeral, synthetic depth often created by high-frequency trading bots. The primary objective is to maintain orderly price discovery, so that large-scale position adjustments do not destabilize the valuation of the underlying asset.

Origin

The genesis of Order Book Depth Stability Monitoring Systems traces back to the limitations inherent in early decentralized automated market makers.

Initial protocols relied on simplistic constant product formulas that ignored the structural realities of order flow, leading to frequent and severe price distortions during periods of high demand. Financial engineers adapted concepts from traditional electronic limit order books to address these inefficiencies.

- Liquidity Fragmentation: The primary catalyst for development, as decentralized markets struggled to aggregate disparate liquidity sources into a unified, stable trading environment.

- Slippage Mitigation: Early research focused on minimizing the adverse price impact of large trades, which directly necessitated the creation of tools to measure depth at specific price intervals.

- Automated Market Making: The transition from static algorithms to dynamic, order-book-aware protocols required continuous monitoring of the bid-ask spread and the volume available at each price tier.

These systems were built upon the foundational work of market microstructure researchers who mapped the relationship between order book density and price volatility. By applying these traditional finance principles to the unique constraints of blockchain settlement, developers created the first iteration of stability monitors designed to protect against predatory trading practices and systemic exhaustion of liquidity.
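The price distortion attributed to constant product formulas above can be illustrated with a short sketch. It assumes a fee-free pool obeying x * y = k; the function names and reserve figures are hypothetical and chosen only for illustration.

```python
def constant_product_swap(reserve_in: float, reserve_out: float, amount_in: float) -> float:
    """Output amount for a fee-free swap against an x * y = k pool (assumed model)."""
    if reserve_in <= 0 or reserve_out <= 0 or amount_in <= 0:
        raise ValueError("reserves and amount must be positive")
    # Keeping x * y constant gives: out = y * dx / (x + dx)
    return reserve_out * amount_in / (reserve_in + amount_in)


def price_impact(reserve_in: float, reserve_out: float, amount_in: float) -> float:
    """Fractional shortfall of the execution price versus the pre-trade spot price."""
    spot_price = reserve_out / reserve_in
    executed_price = constant_product_swap(reserve_in, reserve_out, amount_in) / amount_in
    return 1.0 - executed_price / spot_price


if __name__ == "__main__":
    # Hypothetical pool: 1,000 units of the input asset, 2,000 of the output asset.
    for size in (10.0, 100.0, 250.0):
        print(f"trade {size:>6}: impact {price_impact(1_000.0, 2_000.0, size):.4f}")
```

In this model the impact simplifies to amount_in / (reserve_in + amount_in), so it grows with trade size regardless of how orders would have been distributed in a limit order book.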

Theory

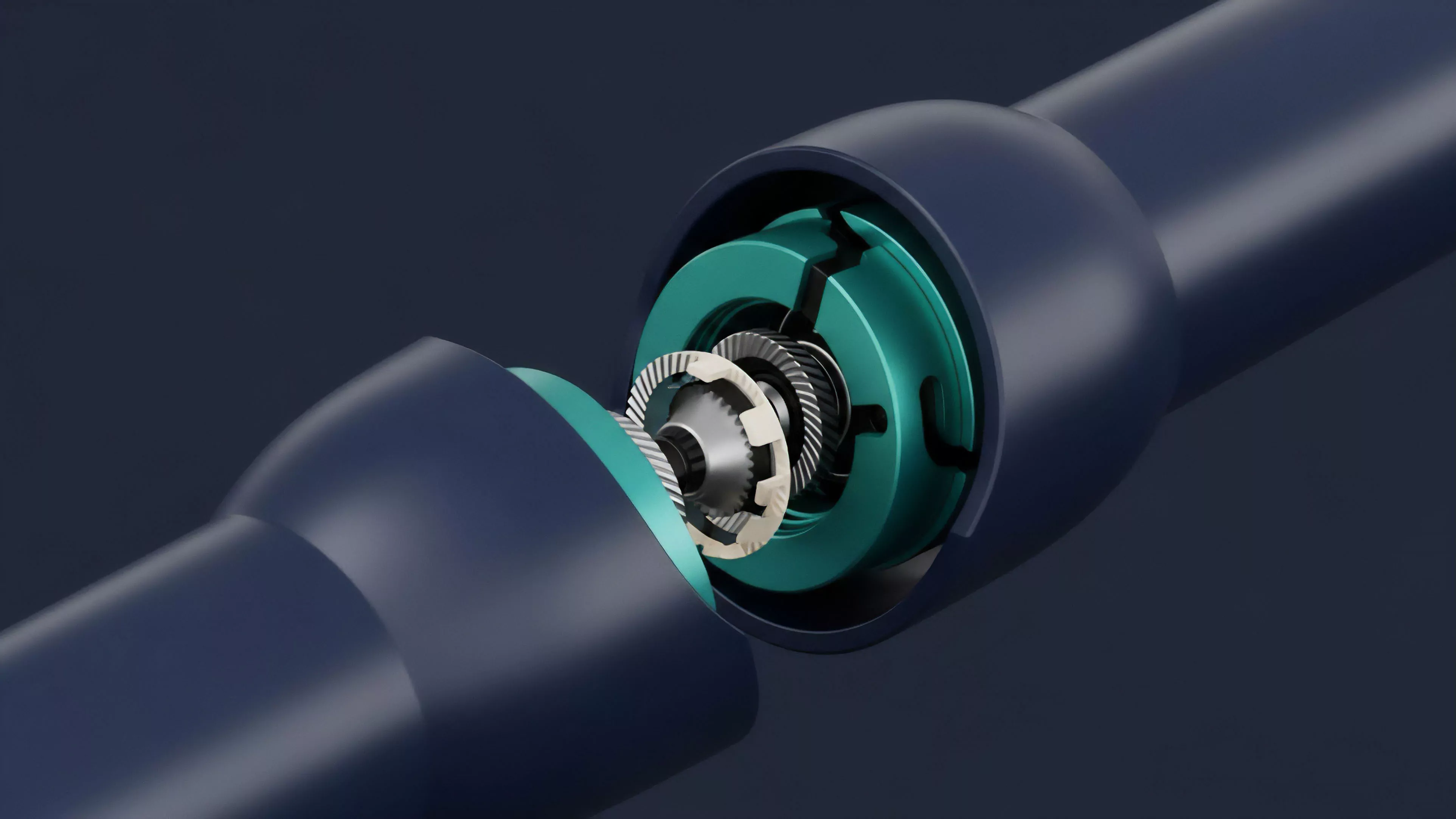

The theoretical framework governing these systems relies on the relationship between order book density and price sensitivity. Mathematically, the stability of an order book can be expressed through the elasticity of price with respect to trade volume: the smaller the price change produced by a trade of a given size, the more stable the book.

When the distribution of limit orders becomes thin, the price impact of a marginal trade increases, leading to higher volatility and potential feedback loops that can threaten the entire protocol.

The stability of an order book is inversely related to the cost of executing large orders at any given price level.
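One way to write the elasticity described above is sketched below. The notation is an assumption and does not come from the source: p is the mid-price, q the size of a marketable order, V(δ) the cumulative volume resting within a price distance δ of the mid-price, and s a constant depth slope.

$$
\varepsilon(q) = \frac{\partial p}{\partial q} \cdot \frac{q}{p}
$$

Under the simplifying assumption of linear depth, an order of size q consumes all volume out to a distance q / s, which gives, to first order:

$$
V(\delta) = s\,\delta \quad \Longrightarrow \quad \Delta p = \frac{q}{s}, \qquad \varepsilon(q) \approx \frac{q}{s\,p}
$$

In this simplified picture, a thinner book (smaller s) yields a larger elasticity for the same trade size, which matches the qualitative statement that thin order distributions amplify the price impact of a marginal trade.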

Quantitative Modeling of Liquidity

The structural integrity of an order book is often modeled using the concept of Order Book Slope, which measures the rate at which cumulative volume increases as one moves away from the mid-price. A steeper slope indicates a higher concentration of orders near the current market price, suggesting greater stability. Conversely, a shallow slope indicates dispersed liquidity, leaving the asset price vulnerable to rapid, large-scale movements.
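The slope described above can be estimated in several ways. The sketch below uses one of them, an ordinary least-squares fit of cumulative volume against distance from the mid-price on one side of the book. The function name, the `(price, size)` level format, and the sample books are assumptions made for illustration.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size), an assumed snapshot format


def order_book_slope(levels: List[Level], mid_price: float) -> float:
    """Least-squares slope of cumulative volume versus distance from the mid-price."""
    ordered = sorted(levels, key=lambda level: abs(level[0] - mid_price))
    distances: List[float] = []
    cumulative: List[float] = []
    running = 0.0
    for price, size in ordered:
        running += size
        distances.append(abs(price - mid_price))
        cumulative.append(running)

    n = len(distances)
    if n < 2:
        raise ValueError("need at least two price levels")
    mean_d = sum(distances) / n
    mean_v = sum(cumulative) / n
    var_d = sum((d - mean_d) ** 2 for d in distances)
    if var_d == 0:
        raise ValueError("levels must sit at distinct distances from the mid-price")
    cov = sum((d - mean_d) * (v - mean_v) for d, v in zip(distances, cumulative))
    return cov / var_d


if __name__ == "__main__":
    dense_asks = [(100.5, 10.0), (101.0, 10.0), (101.5, 10.0)]   # orders close to mid
    sparse_asks = [(101.0, 10.0), (102.0, 10.0), (103.0, 10.0)]  # same sizes, spread out
    print(order_book_slope(dense_asks, 100.0), order_book_slope(sparse_asks, 100.0))
```

With identical order sizes, the book whose levels sit closer to the mid-price produces the larger slope, consistent with the interpretation given in the text.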

Game Theoretic Implications

Market participants operate within an adversarial environment where information asymmetry is constant. Order Book Depth Stability Monitoring Systems serve as a deterrent against predatory strategies like quote stuffing and order book spoofing. By identifying artificial patterns in the order flow, these systems force participants to provide genuine, executable liquidity to remain competitive within the market hierarchy.

| Metric | Definition | Stability Significance |
|---|---|---|
| Market Impact Cost | Price change resulting from a trade | High impact indicates low depth stability |
| Order Book Slope | Rate of change in cumulative volume | Steep slope indicates higher resistance |
| Spread Elasticity | Spread sensitivity to order volume | Low elasticity signals stable liquidity |

The psychological dimension of trading often leads to herd behavior, where participants pull liquidity during times of stress. This creates a reflexive cycle that these systems are designed to identify, providing a sober, data-driven check against the panic-induced thinning of the order book.

Approach

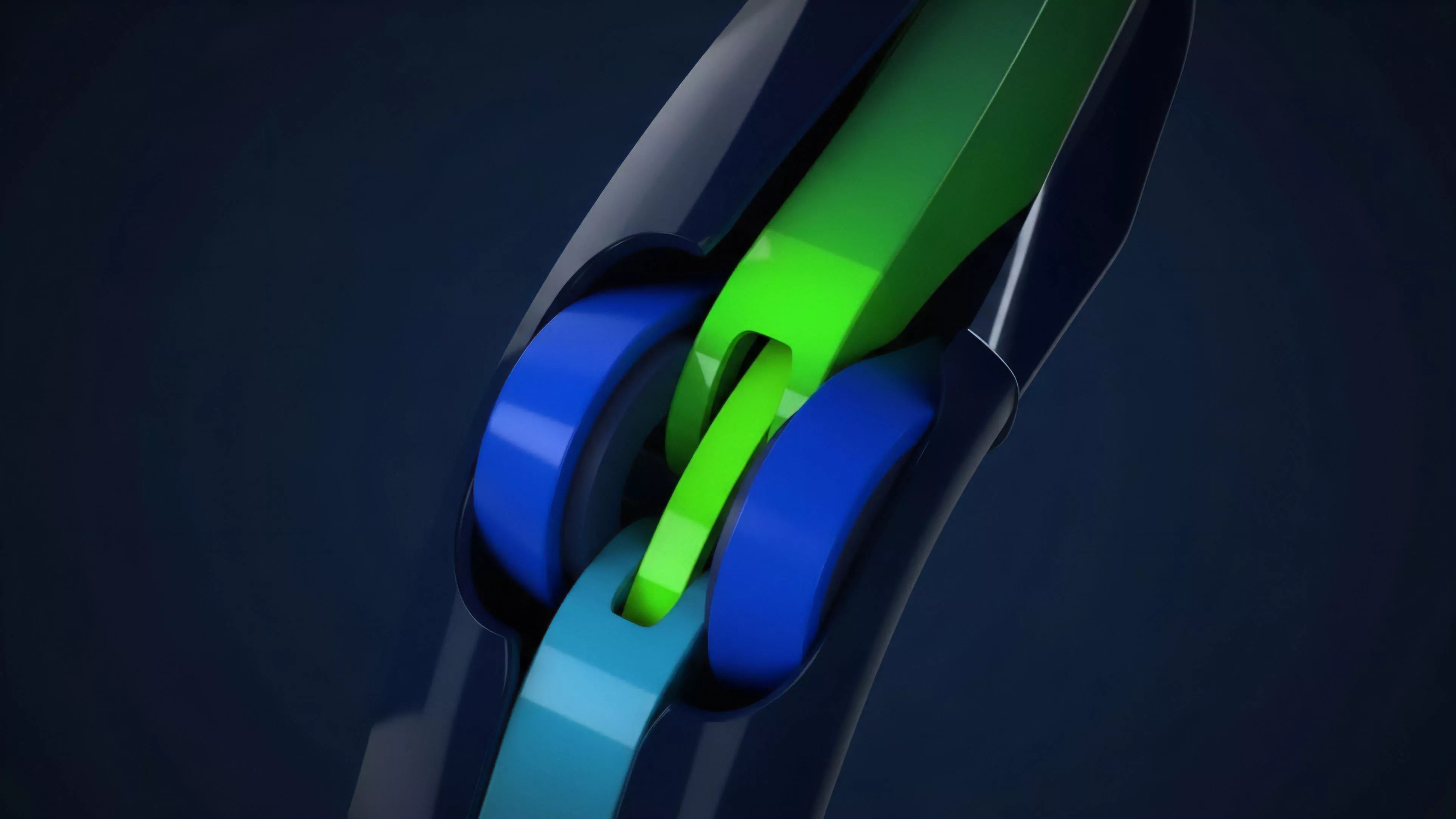

Current methodologies for monitoring order book depth involve continuous, real-time ingestion of WebSocket data from multiple exchange nodes. The resulting feeds are merged into a consolidated view of the market, which is then analyzed for anomalies or structural weaknesses.

The goal is to move beyond simple volume metrics toward a deeper understanding of the distribution and quality of liquidity.

- Consolidated Data Ingestion: Aggregating order flow from decentralized protocols and centralized gateways to establish a unified view of the market landscape.

- Latency-Sensitive Analysis: Utilizing high-performance computing environments to ensure that stability metrics are updated at speeds matching the execution frequency of automated agents.

- Anomalous Flow Detection: Employing algorithmic filters to distinguish legitimate liquidity providers from opportunistic actors who engage in temporary, high-volume quoting.
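The consolidation and filtering steps listed above can be sketched as follows. This is a minimal illustration, not a production design: the `Quote` record, the venue names, and the use of a minimum resting age as a stand-in for anomaly detection are all assumptions, and real filters would draw on much richer signals.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Quote:
    venue: str        # hypothetical venue label
    side: str         # "bid" or "ask"
    price: float
    size: float
    placed_at: float  # timestamp in seconds


def resting_quotes(quotes: Iterable[Quote], now: float, min_age_s: float) -> List[Quote]:
    """Keep quotes that have rested at least min_age_s (a crude proxy for genuine depth)."""
    return [q for q in quotes if now - q.placed_at >= min_age_s]


def consolidate(quotes: Iterable[Quote]) -> Dict[str, Dict[float, float]]:
    """Sum sizes per side and price level across all venues."""
    book = {"bid": defaultdict(float), "ask": defaultdict(float)}
    for q in quotes:
        book[q.side][q.price] += q.size
    return {side: dict(levels) for side, levels in book.items()}


if __name__ == "__main__":
    quotes = [
        Quote("dex_a", "ask", 101.0, 5.0, placed_at=0.0),
        Quote("cex_b", "ask", 101.0, 3.0, placed_at=2.0),
        Quote("dex_a", "bid", 99.0, 4.0, placed_at=1.0),
        Quote("cex_b", "ask", 101.0, 500.0, placed_at=9.9),  # very recent, very large
    ]
    print(consolidate(resting_quotes(quotes, now=10.0, min_age_s=1.0)))
```

Filtering before consolidating means the unified book reflects only quotes that pass the chosen heuristic, so the large quote placed a tenth of a second earlier does not inflate the reported depth.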

Effective monitoring systems prioritize the identification of liquidity voids before they result in significant price deviations.

This technical approach requires a rigorous understanding of protocol-specific constraints, such as gas costs and block confirmation times, which dictate the actual availability of liquidity. The data is processed through models that calculate the potential slippage for trades of varying sizes, providing traders and protocols with an accurate representation of the market’s capacity at any given moment.
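The slippage calculation for trades of varying sizes can be sketched by walking the ask side of a snapshot. The function names and the sample book are assumptions for illustration, and the sketch deliberately ignores gas costs and block confirmation times, which the text notes affect how much of the displayed liquidity is actually available.

```python
from typing import Dict, List, Optional, Tuple

Level = Tuple[float, float]  # (price, size), an assumed snapshot format


def buy_slippage(asks: List[Level], mid_price: float, quantity: float) -> Optional[float]:
    """Relative gap between the average fill price and the mid-price for a market buy.

    Returns None when the visible book cannot fill the whole quantity.
    """
    if quantity <= 0 or mid_price <= 0:
        raise ValueError("quantity and mid_price must be positive")
    remaining = quantity
    cost = 0.0
    for price, size in sorted(asks):  # cheapest asks are consumed first
        fill = min(remaining, size)
        cost += fill * price
        remaining -= fill
        if remaining <= 0:
            break
    if remaining > 1e-12:
        return None  # not enough depth: a liquidity void at this trade size
    average_price = cost / quantity
    return (average_price - mid_price) / mid_price


def slippage_curve(asks: List[Level], mid_price: float,
                   sizes: List[float]) -> Dict[float, Optional[float]]:
    """Slippage estimate for each candidate trade size."""
    return {size: buy_slippage(asks, mid_price, size) for size in sizes}


if __name__ == "__main__":
    asks = [(101.0, 5.0), (102.0, 5.0), (104.0, 10.0)]  # hypothetical snapshot
    for size, slip in slippage_curve(asks, 100.0, [5.0, 10.0, 25.0]).items():
        print(size, "insufficient depth" if slip is None else f"{slip:.4f}")
```

Returning `None` rather than a number for oversized trades keeps a liquidity void distinguishable from merely expensive execution.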

Evolution

The progression of Order Book Depth Stability Monitoring Systems has moved from reactive, post-trade analysis to proactive, predictive modeling. Early versions merely recorded historical slippage events to provide a retrospective view of market conditions.

Modern iterations utilize machine learning models to anticipate liquidity depletion based on current market trends and external volatility triggers. This shift mirrors the broader maturation of decentralized finance, where the focus has transitioned from basic protocol functionality to the optimization of complex financial strategies. As the market becomes more institutionalized, the demand for high-fidelity liquidity monitoring has grown, driving the development of more sophisticated, cross-protocol tools that can identify contagion risks across interconnected platforms.

The introduction of cross-chain liquidity aggregation has added a new layer of complexity to the monitoring process. These systems must now account for latency and settlement risks associated with bridging assets, which directly impact the perceived depth of the order book. The evolution continues toward autonomous systems that can dynamically adjust margin requirements and leverage limits in response to detected changes in order book stability, creating a self-regulating market environment.

Horizon

The future of Order Book Depth Stability Monitoring Systems lies in the integration of decentralized identity and reputation scores for liquidity providers.

By assigning reliability metrics to market participants, protocols will be able to weight liquidity based on its historical performance and commitment during market stress. This moves the market toward a merit-based liquidity model, reducing the reliance on volatile, transient capital.
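A minimal sketch of such weighting follows. It is purely illustrative, since the text describes a future direction rather than an existing mechanism: the reliability scores in the range 0 to 1, the provider identifiers, and the choice to give unknown providers a default score are all assumptions.

```python
from typing import Iterable, Mapping, Tuple


def reputation_weighted_depth(
    orders: Iterable[Tuple[str, float]],
    reliability: Mapping[str, float],
    default_score: float = 0.0,
) -> float:
    """Sum resting order sizes, each scaled by its provider's reliability score.

    orders: (provider_id, size) pairs at the price levels of interest.
    reliability: hypothetical scores in [0, 1]; out-of-range values are clamped.
    """
    total = 0.0
    for provider, size in orders:
        score = reliability.get(provider, default_score)
        score = min(max(score, 0.0), 1.0)
        total += size * score
    return total


if __name__ == "__main__":
    orders = [("lp_a", 100.0), ("lp_b", 50.0), ("lp_new", 40.0)]
    scores = {"lp_a": 0.9, "lp_b": 0.2}  # lp_new has no history yet
    print(reputation_weighted_depth(orders, scores))
```

Here 190 units of displayed size reduce to roughly 100 units of weighted depth, because capital from providers with a weak or unknown track record counts for less.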

Future stability frameworks will incorporate predictive volatility modeling to preemptively adjust market parameters before liquidity shocks occur.

Technological advancements in zero-knowledge proofs will enable the verification of liquidity depth without compromising the privacy of the participants. This balance between transparency and confidentiality will be essential for attracting institutional capital to decentralized derivatives. Furthermore, the convergence of automated, protocol-level risk management and user-facing analytical tools will create a more resilient, self-healing market structure that can withstand even the most extreme liquidity events.