Essence

Algorithmic Execution Quality measures how closely the realized market outcome of a trade matches the trader’s intent within digital asset venues. It is the primary metric for evaluating automated routing systems, specifically their ability to minimize market impact while capturing available liquidity. It goes beyond simple price tracking and covers the interplay of latency, fill probability, and slippage management across fragmented order books.

Algorithmic execution quality measures the efficacy of automated trading systems in bridging the gap between intended trade parameters and final settlement outcomes.

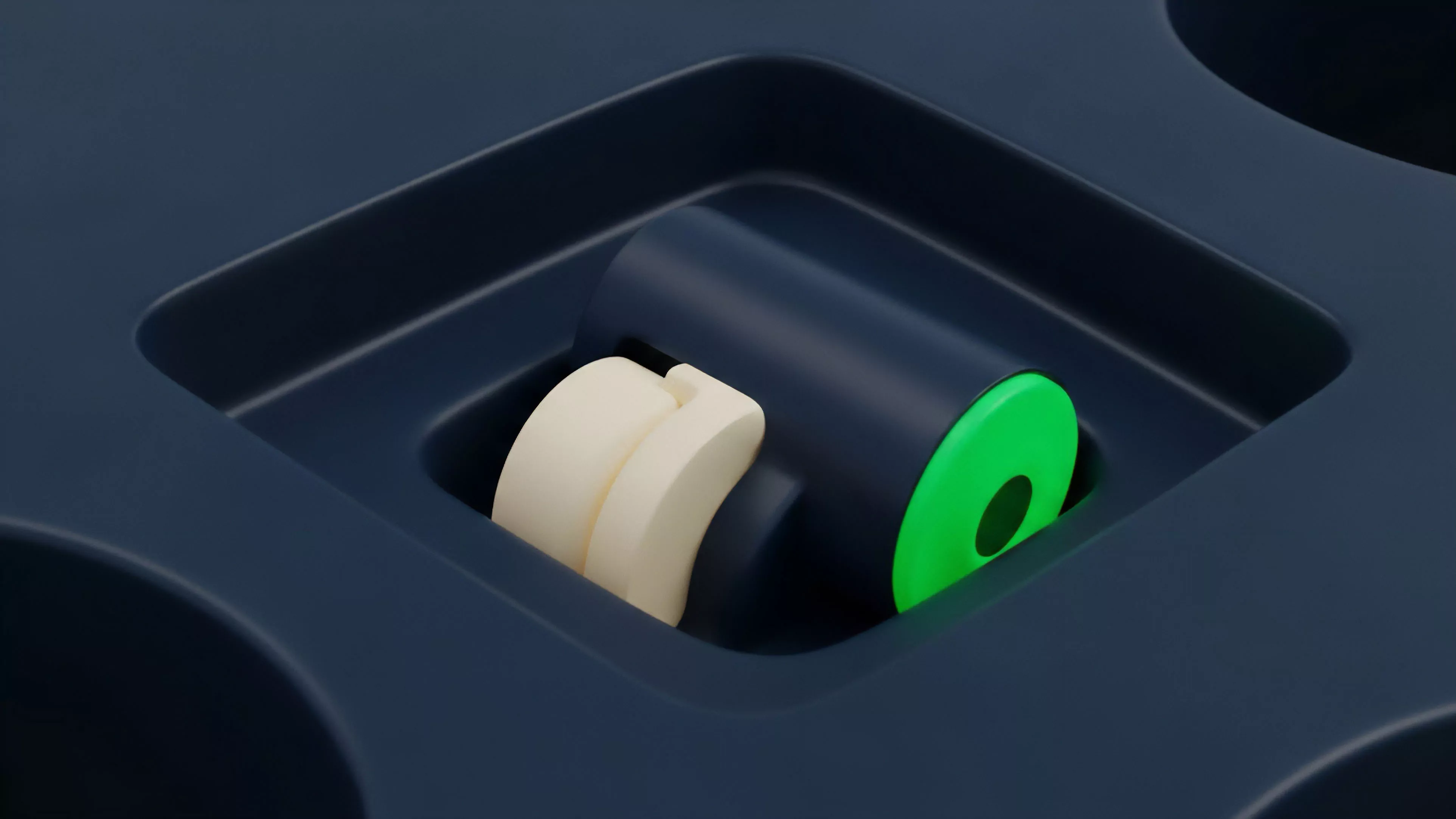

At its core, this discipline addresses the friction inherent in decentralized markets. When a participant initiates an order, the underlying liquidity engine must decompose the request into smaller, manageable fragments to avoid triggering adverse price movements. Success is determined by the capacity to maintain a neutral execution footprint, ensuring that the act of buying or selling does not fundamentally alter the price trajectory against the trader’s position.

Origin

Algorithmic Execution Quality traces back to the maturation of electronic communication networks in traditional equities. The practice later migrated into the high-volatility environment of crypto derivatives.

Early market participants relied on manual entry, leading to significant inefficiencies during periods of rapid price shifts. As decentralized exchanges proliferated, the need for sophisticated routing logic became paramount to navigate the lack of unified liquidity.

- Latency Sensitivity emerged as the primary driver for system design as block times and mempool congestion created significant arbitrage opportunities.

- Fragmented Liquidity forced developers to build meta-aggregators capable of splitting orders across multiple automated market makers.

- Adversarial Dynamics required the development of stealth-focused execution protocols to prevent predatory front-running by automated bots.

This evolution reflects a transition from simple market access to complex execution engineering. The shift was accelerated by the rise of institutional participation, which demanded rigorous standards for transaction cost analysis and predictable outcomes in an otherwise opaque, decentralized environment.

Theory

The theoretical framework governing Algorithmic Execution Quality rests upon the minimization of implementation shortfall. This concept quantifies the difference between the decision price and the actual execution price, accounting for both explicit fees and implicit costs such as slippage and opportunity loss.
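The decomposition described above can be made concrete with a short sketch. It assumes a buy order, a hypothetical `Fill` record, and a `closing_price` used to value the unfilled remainder; none of these names come from a specific library, and real transaction cost analysis tools may define the benchmark differently.

```python
from dataclasses import dataclass


@dataclass
class Fill:
    price: float     # execution price of this partial fill
    quantity: float  # units filled
    fee: float       # explicit fee paid, in quote currency


def implementation_shortfall(decision_price, target_qty, fills, closing_price):
    """Cost of a BUY order relative to the decision price, in quote currency.

    Splits the total into slippage on the filled quantity, explicit fees,
    and the opportunity cost of the quantity that never filled.
    """
    filled_qty = sum(f.quantity for f in fills)
    # Implicit cost: amount paid above the decision price on filled units.
    slippage = sum((f.price - decision_price) * f.quantity for f in fills)
    # Explicit cost: exchange fees, gas, and similar charges.
    fees = sum(f.fee for f in fills)
    # Opportunity cost: price drift on the unfilled remainder.
    opportunity = (closing_price - decision_price) * (target_qty - filled_qty)
    total = slippage + fees + opportunity
    return {
        "slippage": slippage,
        "fees": fees,
        "opportunity": opportunity,
        "total": total,
        # Total cost in basis points of the intended notional.
        "bps": 1e4 * total / (decision_price * target_qty),
    }


result = implementation_shortfall(
    decision_price=100.0,
    target_qty=10.0,
    fills=[Fill(100.5, 4.0, 0.2), Fill(101.0, 4.0, 0.2)],
    closing_price=102.0,
)
```

In this made-up example, 8 of 10 units fill above the decision price and the remaining 2 are valued at a closing price of 102, giving a total shortfall of roughly 104 basis points. For a sell order the price differences would change sign.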

Advanced models utilize stochastic calculus to estimate the probability of fill completion within specific time windows, balancing speed against the risk of signaling intent to the broader market.
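As a deliberately simple stand-in for the models mentioned above, the sketch below assumes that matching orders arrive as a Poisson process with a constant rate. Under that assumption the probability of at least one fill within a window of length T is 1 - exp(-rate * T). The function names and the constant-rate assumption are illustrative only; production models are typically far richer.

```python
import math


def fill_probability(arrival_rate, window):
    """P(at least one matching order arrives within `window`).

    Assumes Poisson arrivals with constant `arrival_rate` (events per unit time).
    """
    if arrival_rate < 0 or window < 0:
        raise ValueError("arrival_rate and window must be non-negative")
    return 1.0 - math.exp(-arrival_rate * window)


def window_for_probability(arrival_rate, target_prob):
    """Shortest window for which the fill probability reaches `target_prob`."""
    if arrival_rate <= 0:
        raise ValueError("arrival_rate must be positive")
    if not 0.0 <= target_prob < 1.0:
        raise ValueError("target_prob must be in [0, 1)")
    return -math.log(1.0 - target_prob) / arrival_rate


# Hypothetical numbers: 0.5 matching orders per second, 2-second window.
p = fill_probability(0.5, 2.0)
t = window_for_probability(0.5, 0.95)
```

The second function inverts the first, which is one way to express the speed-versus-signaling trade-off: a longer window raises the fill probability but leaves the order exposed for longer.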

Implementation shortfall serves as the foundational metric for evaluating the total cost of liquidity acquisition in fragmented digital asset markets.

Market Microstructure Mechanics

The architecture of order flow management relies on the interaction between limit order books and automated market maker pools. A robust system must account for:

- Information Leakage, which occurs when large orders are detected, allowing other participants to adjust their quotes preemptively.

- Inventory Risk, which impacts the pricing of derivatives as market makers hedge their exposure to the underlying asset.

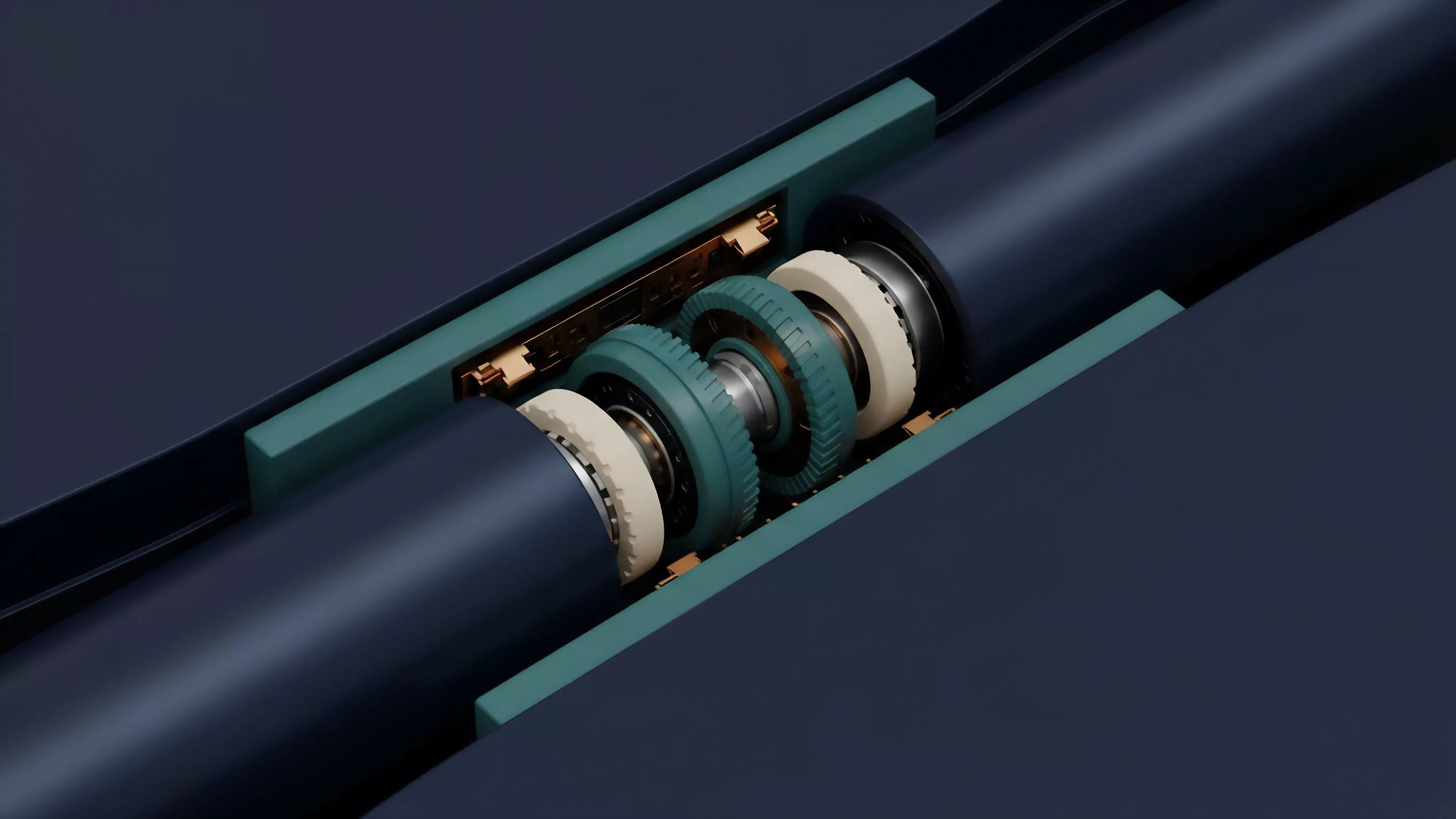

- Order Pacing, which determines the optimal distribution of trades to absorb liquidity without exhausting available depth.

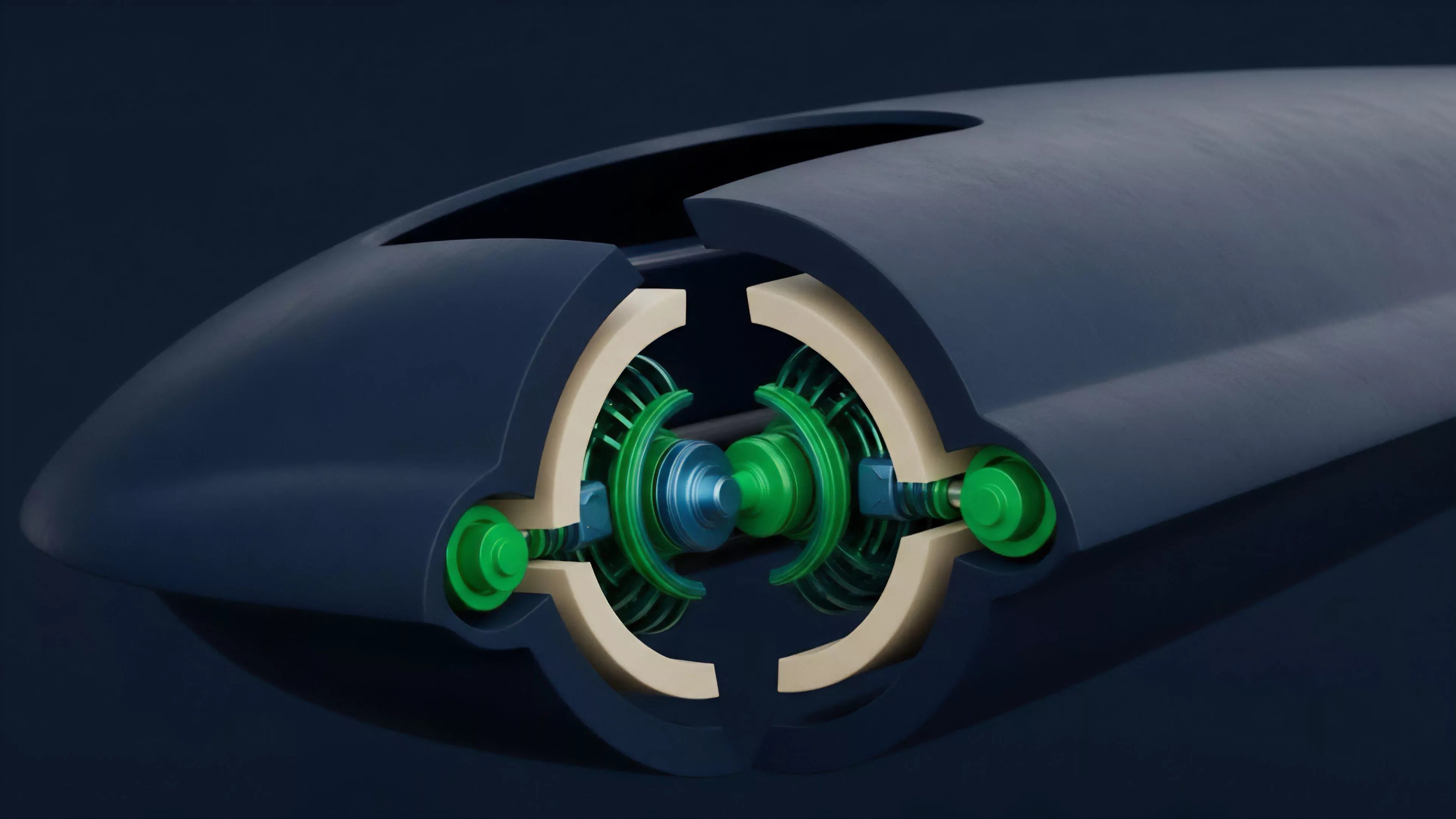

Consider the physics of a large-scale position adjustment. If an algorithm injects too much volume instantaneously, it depletes the top-of-book, forcing the trade into higher-cost liquidity tiers. The system should instead behave like a damped harmonic oscillator: it paces volume so that depth can replenish and the price can settle back toward equilibrium, rather than forcing a violent correction.
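The depletion effect described above can be sketched by walking a static order book. The ask levels below are hypothetical, and the function ignores fees and any replenishment of depth between fills.

```python
def walk_book(asks, quantity):
    """Average price paid by a market buy that consumes ask levels in order.

    asks: list of (price, size) tuples sorted from best (lowest) price upward.
    Raises ValueError if the book is too shallow to fill the full quantity.
    """
    remaining = quantity
    cost = 0.0
    for price, size in asks:
        take = min(remaining, size)  # fill as much as this level offers
        cost += take * price
        remaining -= take
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("not enough depth to fill the order")
    return cost / quantity


asks = [(100.0, 5.0), (100.5, 5.0), (102.0, 10.0)]  # hypothetical ask side

small = walk_book(asks, 5.0)   # fits in the top level -> 100.0
large = walk_book(asks, 20.0)  # sweeps all three levels -> 101.125
```

The larger order pays about 1.1% more per unit than the smaller one because it exhausts the best level and moves into deeper tiers. Pacing only helps to the extent that depth actually returns between child orders, which this static sketch does not model.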

Approach

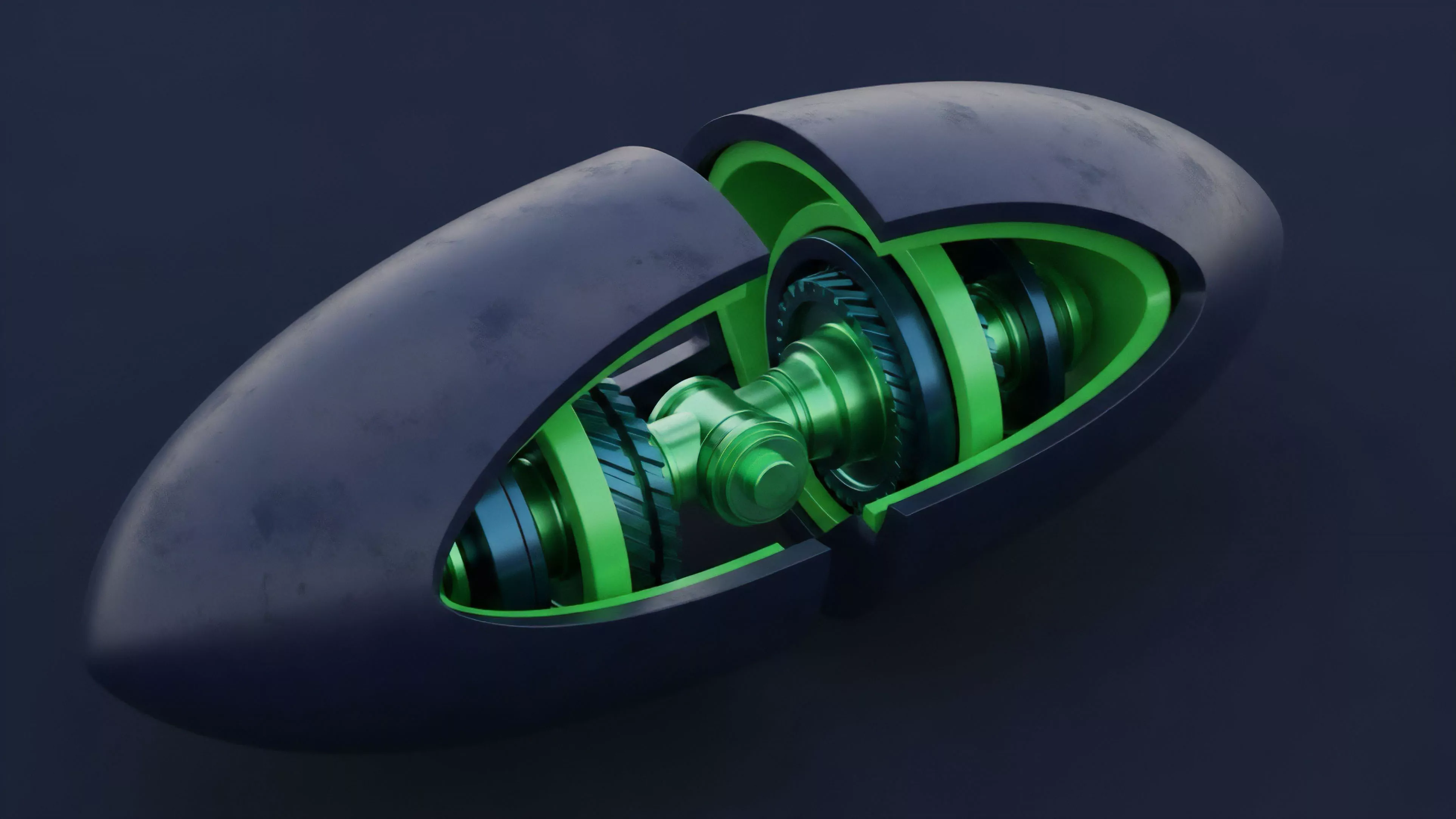

Current strategies for optimizing Algorithmic Execution Quality deploy execution algorithms such as Volume-Weighted Average Price (VWAP) or Time-Weighted Average Price (TWAP) models, adapted for the unique constraints of blockchain settlement.

These tools allow traders to decompose massive positions into smaller, non-disruptive chunks. The focus has shifted toward on-chain observability, where real-time monitoring of mempool activity informs dynamic adjustments to gas settings and routing paths.
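A minimal sketch of the slicing idea follows. It assumes the parent order is divided over a fixed number of intervals (for example blocks or fixed time buckets) and that, for the VWAP-style schedule, an expected volume profile is supplied by the caller. The function names are illustrative and not taken from any particular trading library.

```python
def twap_slices(total_qty, n_slices):
    """Split a parent order into equal child orders, one per interval."""
    if n_slices <= 0:
        raise ValueError("n_slices must be positive")
    return [total_qty / n_slices] * n_slices


def vwap_slices(total_qty, volume_profile):
    """Split a parent order in proportion to expected volume per interval."""
    total_volume = sum(volume_profile)
    if total_volume <= 0:
        raise ValueError("volume_profile must have positive total volume")
    return [total_qty * v / total_volume for v in volume_profile]


def vwap(trades):
    """Volume-weighted average price of (price, volume) pairs."""
    total_volume = sum(v for _, v in trades)
    if total_volume == 0:
        raise ValueError("no volume")
    return sum(p * v for p, v in trades) / total_volume


even = twap_slices(100.0, 4)                    # [25.0, 25.0, 25.0, 25.0]
weighted = vwap_slices(100.0, [1.0, 3.0, 1.0])  # [20.0, 60.0, 20.0]
benchmark = vwap([(100.0, 1.0), (102.0, 3.0)])  # 101.5
```

The `vwap` function computes the benchmark against which the realized average price of the child orders would be compared. Dynamic adjustments to gas settings or routing paths are outside the scope of this sketch.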

| Execution Strategy | Primary Benefit | Risk Factor |
| --- | --- | --- |
| Iceberg Orders | Reduced market impact | Extended exposure time |
| Smart Order Routing | Liquidity aggregation | Increased routing latency |
| Flash Execution | Minimized front-running | Higher gas consumption |

The strategic application of these tools requires a deep understanding of protocol physics. For instance, the timing of an execution relative to a block producer’s schedule can significantly alter the realized price. Professionals now integrate predictive modeling to anticipate liquidity spikes, ensuring that orders are positioned when market depth is highest.
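Smart order routing, listed in the table above, can be illustrated with two constant-product pools. The sketch assumes the x * y = k pricing rule with a 0.3% fee on the input amount, and it uses a plain grid search over the split fraction rather than a closed-form solution. Pool reserves and function names are hypothetical, and gas costs are ignored.

```python
def amount_out(amount_in, reserve_in, reserve_out, fee=0.003):
    """Output of a constant-product (x * y = k) pool for a given input."""
    effective_in = amount_in * (1.0 - fee)  # fee is taken from the input
    return reserve_out * effective_in / (reserve_in + effective_in)


def best_split(amount_in, pool_a, pool_b, steps=1000):
    """Grid-search the fraction routed to pool_a that maximizes total output.

    pool_a, pool_b: (reserve_in, reserve_out) tuples.
    Returns (fraction_to_a, total_out).
    """
    best_frac, best_out = 0.0, float("-inf")
    for i in range(steps + 1):
        frac = i / steps
        out = (amount_out(amount_in * frac, *pool_a)
               + amount_out(amount_in * (1.0 - frac), *pool_b))
        if out > best_out:
            best_frac, best_out = frac, out
    return best_frac, best_out


# Hypothetical pools quoting the same price, one with twice the depth.
deep_pool = (2000.0, 2000.0)
shallow_pool = (1000.0, 1000.0)
frac, total = best_split(100.0, deep_pool, shallow_pool)
single = amount_out(100.0, *deep_pool)  # everything through the deep pool
```

With equal starting prices, the search routes roughly two thirds of the order to the deeper pool, and the combined output exceeds what either pool would return alone. A real router would also weigh the extra gas and latency of touching multiple venues, which is the risk factor the table notes.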

Evolution

The trajectory of this domain has moved from basic automated routing to the current era of Intent-Centric Architecture.

Early iterations focused on simple speed, but modern systems prioritize the preservation of capital through MEV-aware routing. By utilizing private mempools and specialized relayers, participants can now shield their orders from toxic extraction, a significant leap from the exposed nature of early decentralized trading.

Intent-centric execution protocols prioritize the protection of order flow integrity against predatory extraction mechanisms.

The integration of cross-chain liquidity has further complicated the landscape. Systems must now reconcile varying consensus mechanisms and settlement finality times, creating a new layer of complexity in execution pathing. This shift necessitates a move away from static routing tables toward adaptive, machine-learning-driven agents capable of evaluating global liquidity in milliseconds.

Horizon

The future of Algorithmic Execution Quality lies in the total abstraction of liquidity management.

We anticipate the rise of autonomous execution agents that utilize cryptographic proofs to guarantee optimal routing without revealing the trader’s ultimate intent. These systems will likely incorporate decentralized oracle networks to provide real-time pricing data, allowing for sub-millisecond adjustments in a multi-venue environment.

| Development Stage | Focus Area | Systemic Impact |
| --- | --- | --- |
| Near Term | MEV mitigation | Reduced slippage |
| Mid Term | Cross-chain atomic swaps | Unified liquidity |
| Long Term | Autonomous agent orchestration | Institutional-grade efficiency |

This evolution points toward a more resilient financial architecture in which the quality of execution is a verifiable, programmable property of the protocol itself. The ultimate goal is a frictionless environment where the distinction between centralized and decentralized liquidity is invisible to the end user. The primary challenge remains the creation of protocols that can scale without sacrificing the core principles of trustless verification.