Structural Liquidity Definition

Order Book Data Processing constitutes the computational transformation of raw intent into structured liquidity. Every limit order entering the system functions as a discrete signal, a probabilistic claim on future value. This processing layer serves as the central nervous system of the exchange, sequencing these signals to establish a continuous price.

In the adversarial environment of digital asset derivatives, the fidelity of this data dictates the survival of market participants. High-frequency updates require a robust architecture to prevent stale data, which exposes liquidity providers to toxic flow and adverse selection. The systemic relevance of Order Book Data Processing lies in its ability to facilitate price discovery under extreme volatility.

By aggregating individual limit orders into a unified depth map, the system provides the necessary inputs for calculating the Greeks and managing margin requirements. Failure to account for toxic flow within the processing pipeline is a major risk to market stability. Without precise data ingestion, the liquidation engine cannot accurately assess the impact of large position closures, potentially leading to cascading failures across the protocol.

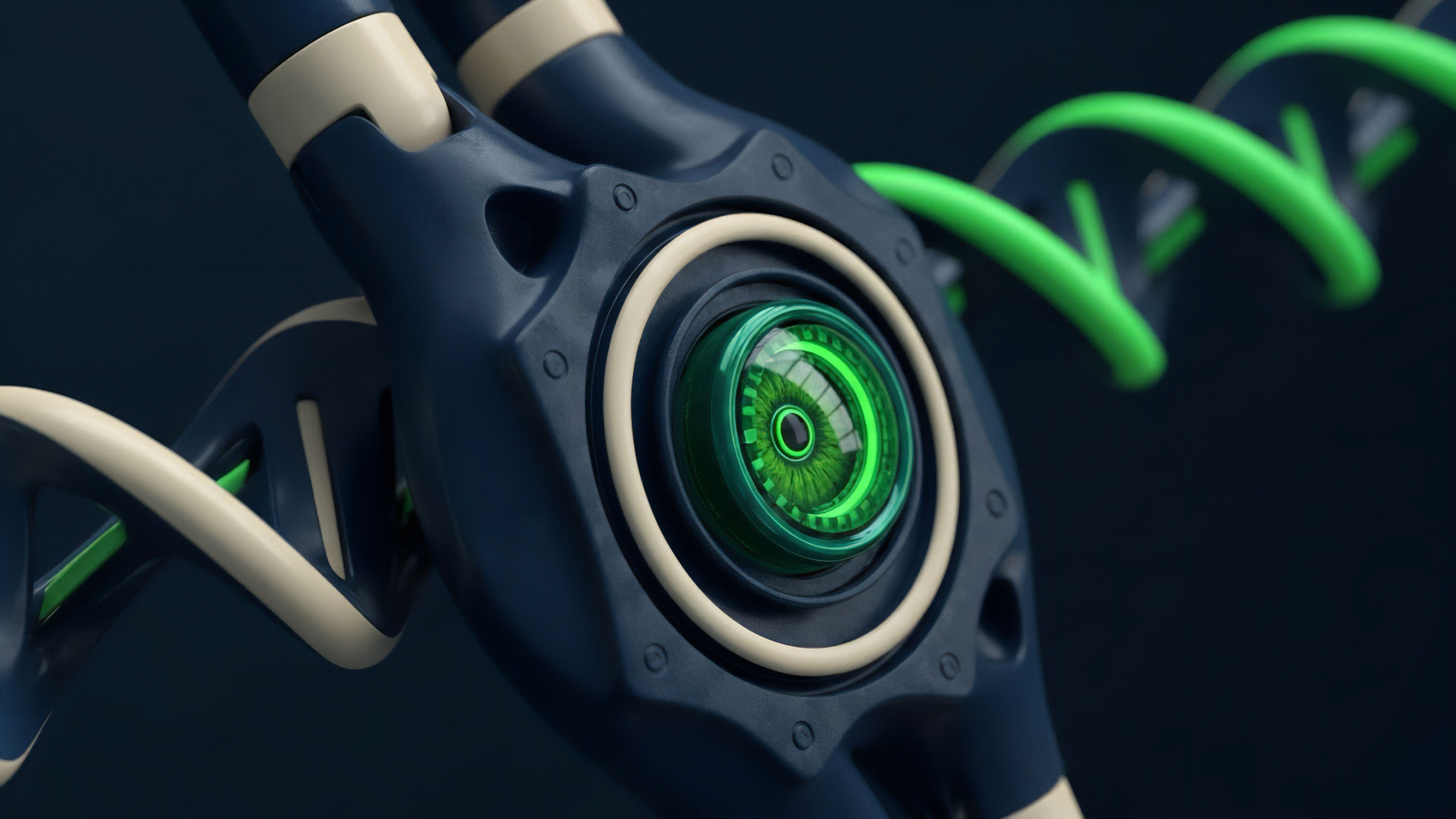

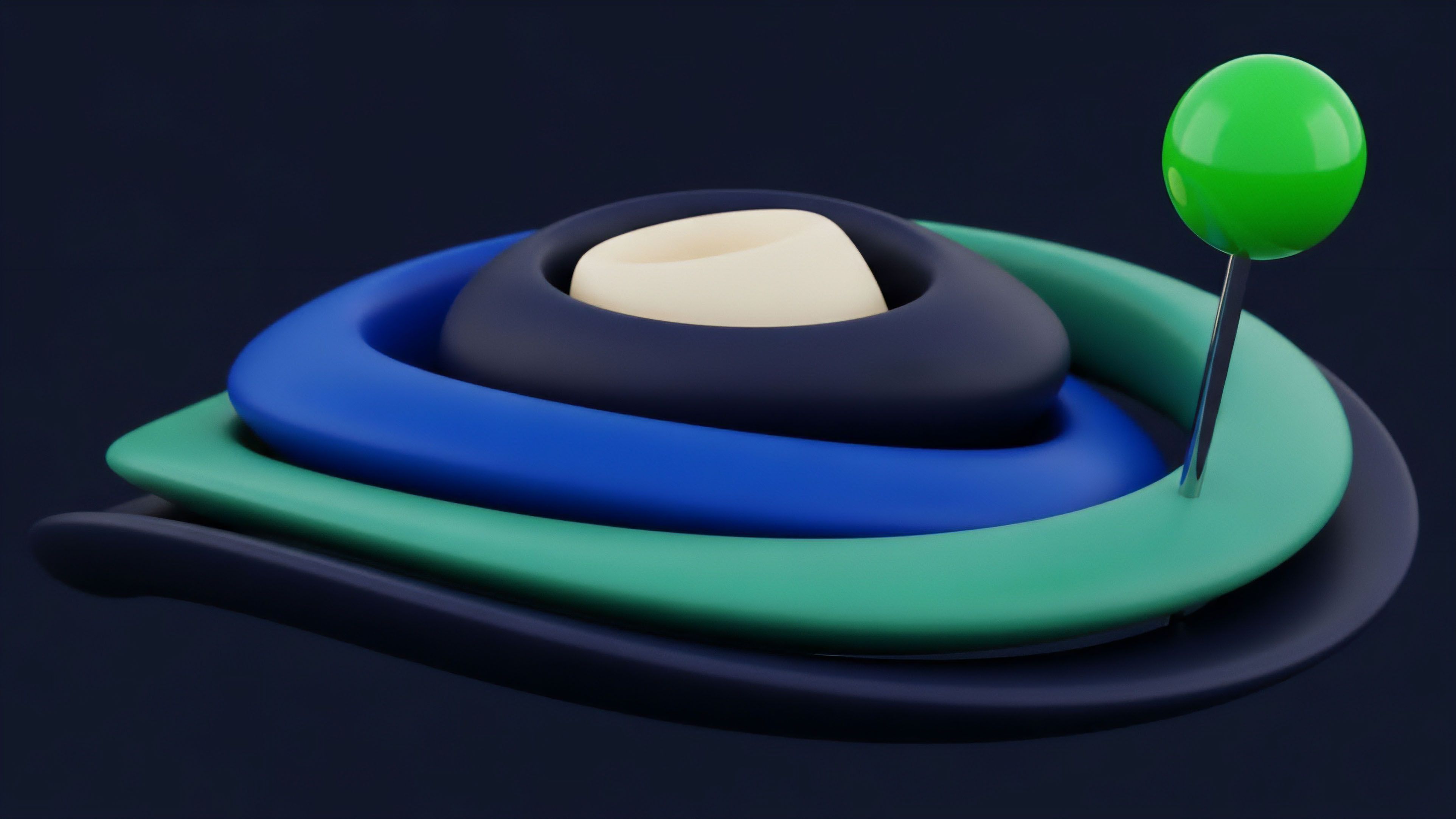

Liquidity maps transform disparate limit orders into a unified representation of market depth.

The process involves more than simple arithmetic; it requires the management of state across distributed systems. In decentralized environments, Order Book Data Processing must reconcile the trade-offs between latency and consensus. The integrity of the order book depends on the sequencer’s ability to maintain a deterministic order of operations.

Any deviation or manipulation at this level, such as front-running or sandwich attacks, compromises the financial fairness of the venue.

Electronic Matching Lineage

The transition from physical trading floors to electronic central limit order books (CLOBs) established the requirements for current digital asset architectures. Early electronic systems relied on centralized databases with single-threaded matching logic. As trading volumes expanded, the need for low-latency processing led to the adoption of in-memory data structures.

Crypto-native systems adapted these principles to operate in a global, non-stop market, where the absence of traditional closing bells necessitates a different approach to data persistence and state management. Initial crypto exchanges utilized basic SQL-based matching engines, which struggled with the bursty nature of digital asset volatility. The evolution toward specialized matching engines involved the implementation of binary protocols and high-performance languages like C++ and Rust.

These advancements allowed for the processing of millions of messages per second, a requisite for modern high-frequency trading. The shift toward transparency also mandated that Order Book Data Processing include the broadcasting of real-time depth updates to all participants via WebSockets.

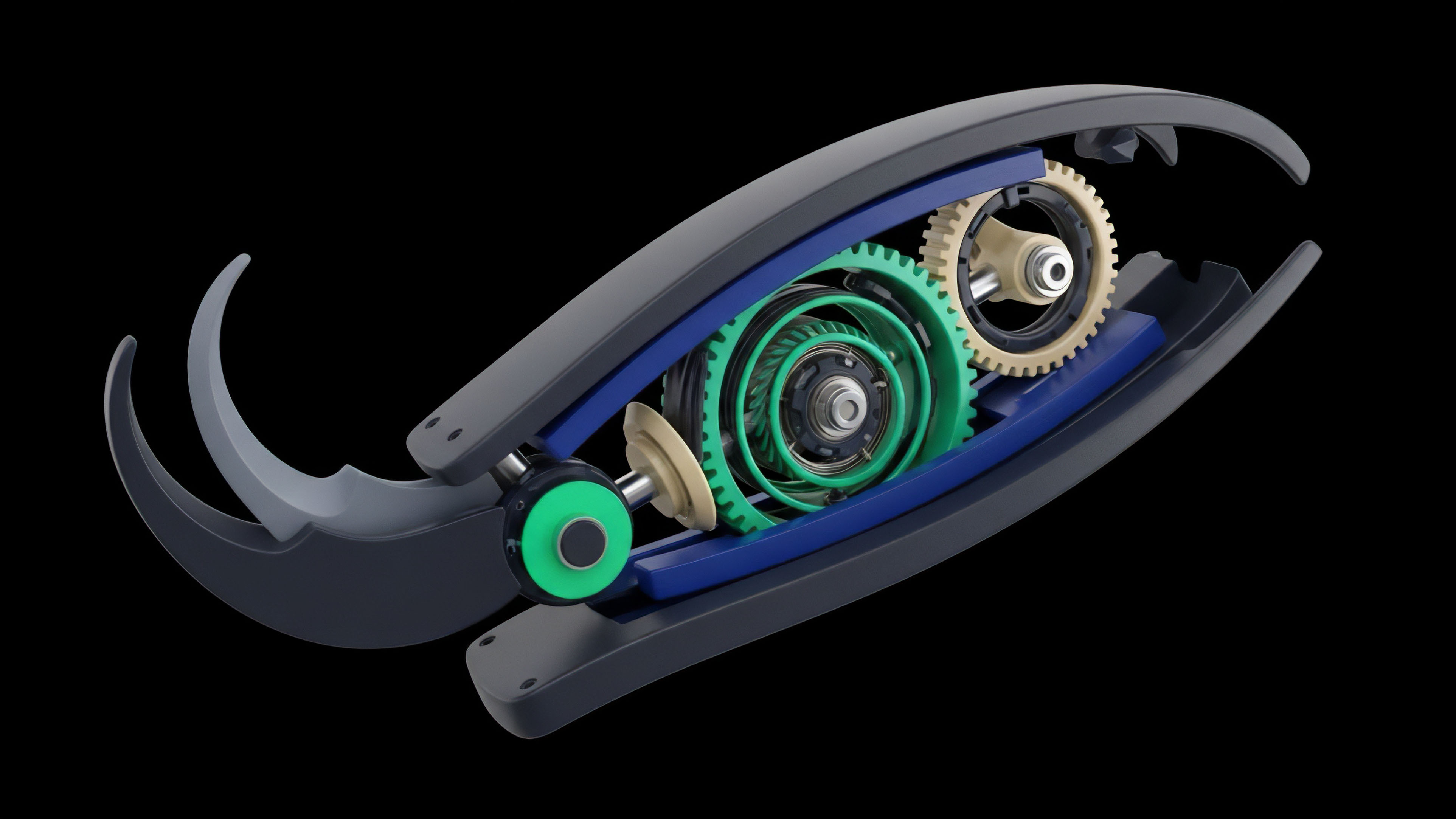

Matching engine throughput determines the upper bound of price discovery efficiency in high-frequency environments.

The emergence of decentralized finance introduced a new set of constraints. Early attempts at on-chain order books were hampered by the high cost of block space and slow settlement times. This led to the temporary dominance of Automated Market Makers (AMMs), which replaced the limit order book with a constant product formula.
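For reference, the constant product rule keeps the product of the two pool reserves unchanged across a swap. The following is a minimal sketch under stated assumptions: the function name, the two-asset pool, and the optional proportional fee are illustrative and not taken from any specific protocol.

```python
def constant_product_swap(reserve_in: float, reserve_out: float,
                          amount_in: float, fee: float = 0.003) -> float:
    """Return the output amount for a swap against an x * y = k pool.

    `fee` is a hypothetical proportional fee taken from the input amount.
    """
    if reserve_in <= 0 or reserve_out <= 0 or amount_in <= 0:
        raise ValueError("reserves and amount_in must be positive")
    effective_in = amount_in * (1.0 - fee)
    # Invariant: (reserve_in + effective_in) * (reserve_out - out)
    #            == reserve_in * reserve_out
    return reserve_out * effective_in / (reserve_in + effective_in)


# Illustrative pool: 1,000 units of asset X against 2,000,000 units of asset Y.
out_small = constant_product_swap(1_000.0, 2_000_000.0, 1.0, fee=0.0)
out_large = constant_product_swap(1_000.0, 2_000_000.0, 100.0, fee=0.0)
print(out_small / 1.0)     # average price received on a small trade
print(out_large / 100.0)   # lower average price on a trade 100x larger
```

The larger trade receives a worse average price because it moves further along the curve, which is the capital-efficiency cost that order-book designs aim to reduce.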

Yet, the demand for capital efficiency and sophisticated order types has driven the development of high-throughput Layer 2 solutions and specialized blockchains that return to the CLOB model, albeit with decentralized validation.

Deterministic Priority Logic

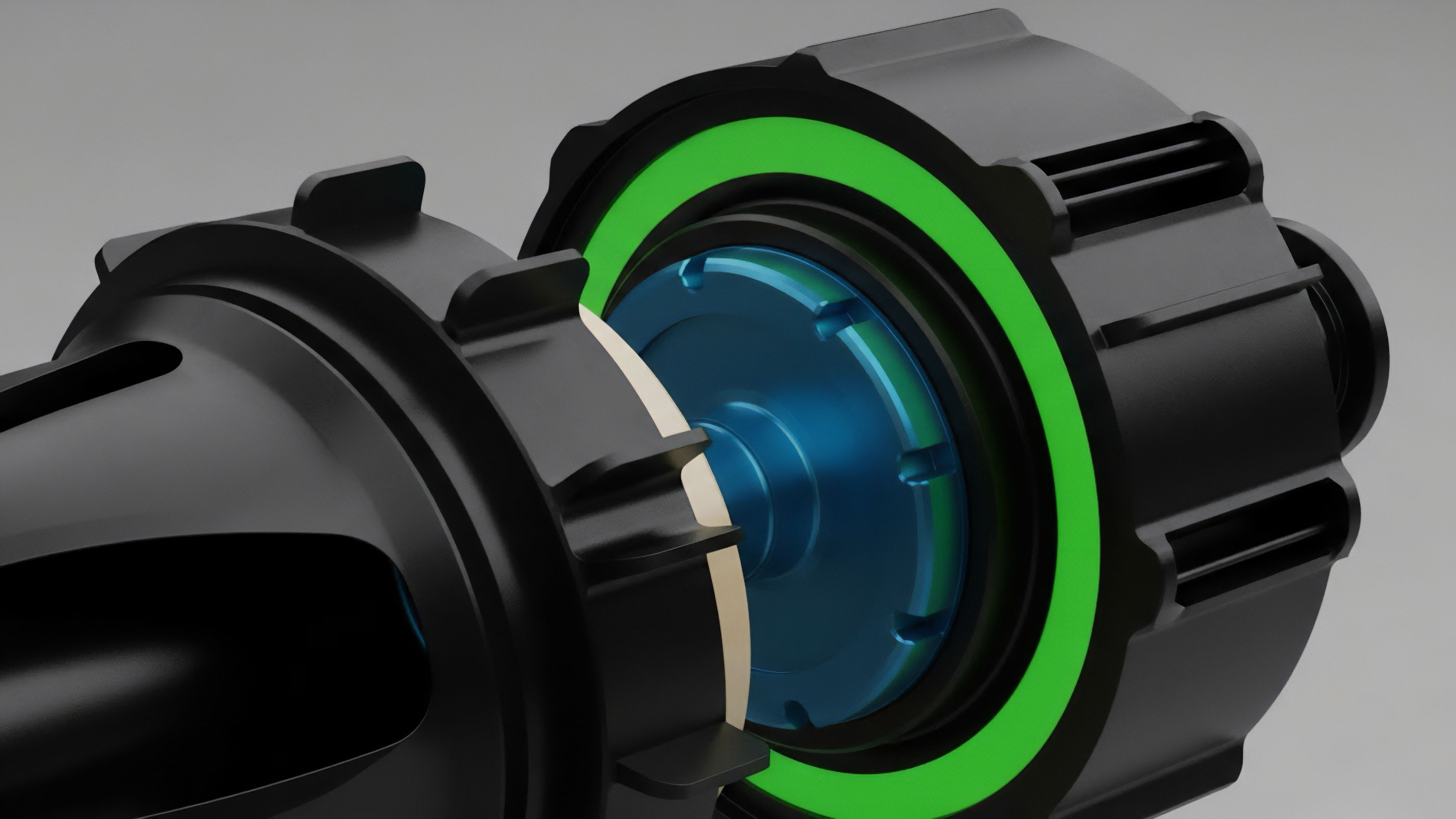

Matching engines rely on deterministic logic to ensure fairness and predictability. The most prevalent model is Price-Time priority, which fills orders at the best price first and, within a price level, in order of arrival. Order Book Data Processing must track three distinct levels of information: L1 (top of book), L2 (aggregated size at each price level), and L3 (individual order identity).
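The relationship between the three levels can be sketched as successive aggregations. This is an illustration only: the `Order` fields and the helper names `l2_from_l3` and `l1_from_l2` are assumptions, not a schema used by any particular venue.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    """One resting limit order: the L3 view (field names are illustrative)."""
    order_id: str
    side: str      # "bid" or "ask"
    price: float
    size: float


def l2_from_l3(orders):
    """Aggregate individual orders into total size per price level (L2)."""
    bids, asks = defaultdict(float), defaultdict(float)
    for o in orders:
        (bids if o.side == "bid" else asks)[o.price] += o.size
    # Bids sorted best (highest) first, asks best (lowest) first.
    return sorted(bids.items(), reverse=True), sorted(asks.items())


def l1_from_l2(bids, asks):
    """Take the best bid and best ask levels (L1); None if a side is empty."""
    return (bids[0] if bids else None, asks[0] if asks else None)


l3 = [
    Order("a1", "bid", 99.5, 2.0),
    Order("a2", "bid", 99.5, 1.0),
    Order("a3", "bid", 99.0, 4.0),
    Order("b1", "ask", 100.5, 1.5),
    Order("b2", "ask", 101.0, 3.0),
]
l2_bids, l2_asks = l2_from_l3(l3)
best_bid, best_ask = l1_from_l2(l2_bids, l2_asks)
print(l2_bids)   # [(99.5, 3.0), (99.0, 4.0)]
print(best_ask)  # (100.5, 1.5)
```

Each level discards information: L2 loses order identity and queue position, and L1 loses everything behind the best price.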

The quantitative framework for this processing involves calculating the mid-price, bid-ask spread, and cumulative volume to determine the slippage for any given trade size.
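A minimal sketch of these calculations follows, assuming each side of the book is a list of `(price, size)` tuples with the best level first. The function names and the sample book are hypothetical, and slippage is measured here relative to the mid-price, which is one of several possible conventions.

```python
def mid_and_spread(bids, asks):
    """bids/asks are lists of (price, size), best level first."""
    best_bid, best_ask = bids[0][0], asks[0][0]
    return (best_bid + best_ask) / 2.0, best_ask - best_bid


def buy_slippage(asks, mid, quantity):
    """Walk the ask side to fill `quantity`.

    Returns (average fill price, slippage as a fraction of the mid-price).
    """
    remaining, cost = quantity, 0.0
    for price, size in asks:
        take = min(remaining, size)
        cost += take * price
        remaining -= take
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("not enough depth to fill the requested quantity")
    avg_price = cost / quantity
    return avg_price, (avg_price - mid) / mid


bids = [(99.0, 5.0), (98.0, 10.0)]
asks = [(101.0, 2.0), (102.0, 3.0), (104.0, 10.0)]
mid, spread = mid_and_spread(bids, asks)
avg_price, slip = buy_slippage(asks, mid, 5.0)
print(mid, spread)       # 100.0 2.0
print(avg_price, slip)   # average fill of about 101.6, roughly 1.6% above mid
```

Buying 5 units consumes the first two ask levels, so the average fill price sits above the best ask; a larger order would reach the third level and slip further.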

| Priority Model | Logic Description | Market Impact |
|---|---|---|
| Price-Time (FIFO) | Orders are filled based on best price then arrival time. | Encourages speed and early liquidity provision. |
| Pro-Rata | Orders at the same price are filled proportionally to size. | Encourages large order sizes and depth. |
| Price-Size-Time | Priority given to price, then size, then time. | Favors institutional participants with high capital. |
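The difference between the first two models shows up when an incoming order is smaller than the size resting at a single price level. The sketch below is a simplified illustration: the function names are hypothetical, and production pro-rata rules usually add rounding and minimum-allocation logic that is omitted here.

```python
def fill_fifo(resting, incoming):
    """Price-Time at one price level.

    `resting` is a list of (order_id, size) in arrival order.
    """
    fills, remaining = {}, incoming
    for order_id, size in resting:
        if remaining <= 0:
            break
        take = min(size, remaining)
        fills[order_id] = take
        remaining -= take
    return fills


def fill_pro_rata(resting, incoming):
    """Pro-Rata at one price level: split in proportion to resting size."""
    total = sum(size for _, size in resting)
    if total <= 0:
        return {}
    fillable = min(incoming, total)
    return {order_id: fillable * size / total for order_id, size in resting}


# Two orders at the same price: a small early one and a large later one.
resting = [("early", 10.0), ("late", 30.0)]
print(fill_fifo(resting, 20.0))      # {'early': 10.0, 'late': 10.0}
print(fill_pro_rata(resting, 20.0))  # {'early': 5.0, 'late': 15.0}
```

Under FIFO the early order is filled completely before the later one receives anything; under pro-rata the larger order takes three quarters of the fill regardless of arrival time.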

The mathematical modeling of the limit order book often employs the Hawkes process to account for the clustering of order arrivals. Order Book Data Processing must handle the high dimensionality of these inputs to provide a real-time view of market pressure. In crypto options, this data is further complicated by the need to map liquidity across multiple strike prices and expiration dates.

The resulting volatility surface is a direct product of the processed order book data, making the ingestion pipeline a decisive factor in option pricing accuracy.
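The clustering of order arrivals referred to above can be made concrete with one common parameterization: a univariate Hawkes process with an exponential kernel. This form is given as an assumption for illustration; the text does not specify which variant is intended.

```latex
\lambda(t) = \mu + \sum_{t_i < t} \alpha \, e^{-\beta (t - t_i)},
\qquad \mu > 0,\ \alpha \ge 0,\ \beta > 0
```

Here $\lambda(t)$ is the instantaneous order arrival rate, $\mu$ is the baseline rate, the $t_i$ are past arrival times, $\alpha$ is the jump in intensity caused by each arrival, and $\beta$ is the rate at which that excitation decays. The process is stationary when $\alpha / \beta < 1$, meaning each arrival triggers on average fewer than one further arrival.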

Operational Ingestion Workflow

Handling high-frequency updates requires a structured pipeline that minimizes latency at every stage. The process begins with normalization, where disparate exchange formats are converted into a standard schema. This allows for the aggregation of liquidity from multiple sources, providing a more comprehensive view of the market.
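The normalization step can be sketched as a set of per-venue adapters that all emit one internal record type. Everything named below is hypothetical: `BookUpdate`, the two venue labels, and both raw message layouts are invented for illustration and do not correspond to any real exchange API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BookUpdate:
    """Hypothetical internal schema for one price-level update."""
    venue: str
    symbol: str
    side: str        # "bid" or "ask"
    price: float
    size: float      # 0.0 means the level was removed
    sequence: int


def normalize_venue_a(msg: dict) -> BookUpdate:
    # Assumed layout: short keys, numeric strings, 1/0 flag for the bid side.
    return BookUpdate("venue_a", msg["s"], "bid" if msg["b"] else "ask",
                      float(msg["p"]), float(msg["q"]), int(msg["seq"]))


def normalize_venue_b(msg: dict) -> BookUpdate:
    # Assumed layout: verbose keys, BUY/SELL side, [price, size] pair.
    price, size = msg["level"]
    symbol = msg["instrument"].upper().replace("_", "-")
    side = "bid" if msg["side"] == "BUY" else "ask"
    return BookUpdate("venue_b", symbol, side,
                      float(price), float(size), int(msg["id"]))


raw_a = {"s": "BTC-PERP", "b": 1, "p": "50000.5", "q": "0.25", "seq": 17}
raw_b = {"instrument": "btc_perp", "side": "SELL",
         "level": [50001.0, 1.5], "id": 903}
update_a = normalize_venue_a(raw_a)
update_b = normalize_venue_b(raw_b)
print(update_a)
print(update_b.symbol, update_b.side)   # BTC-PERP ask
```

Once both venues map to the same symbol and field types, downstream aggregation can treat their updates interchangeably.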

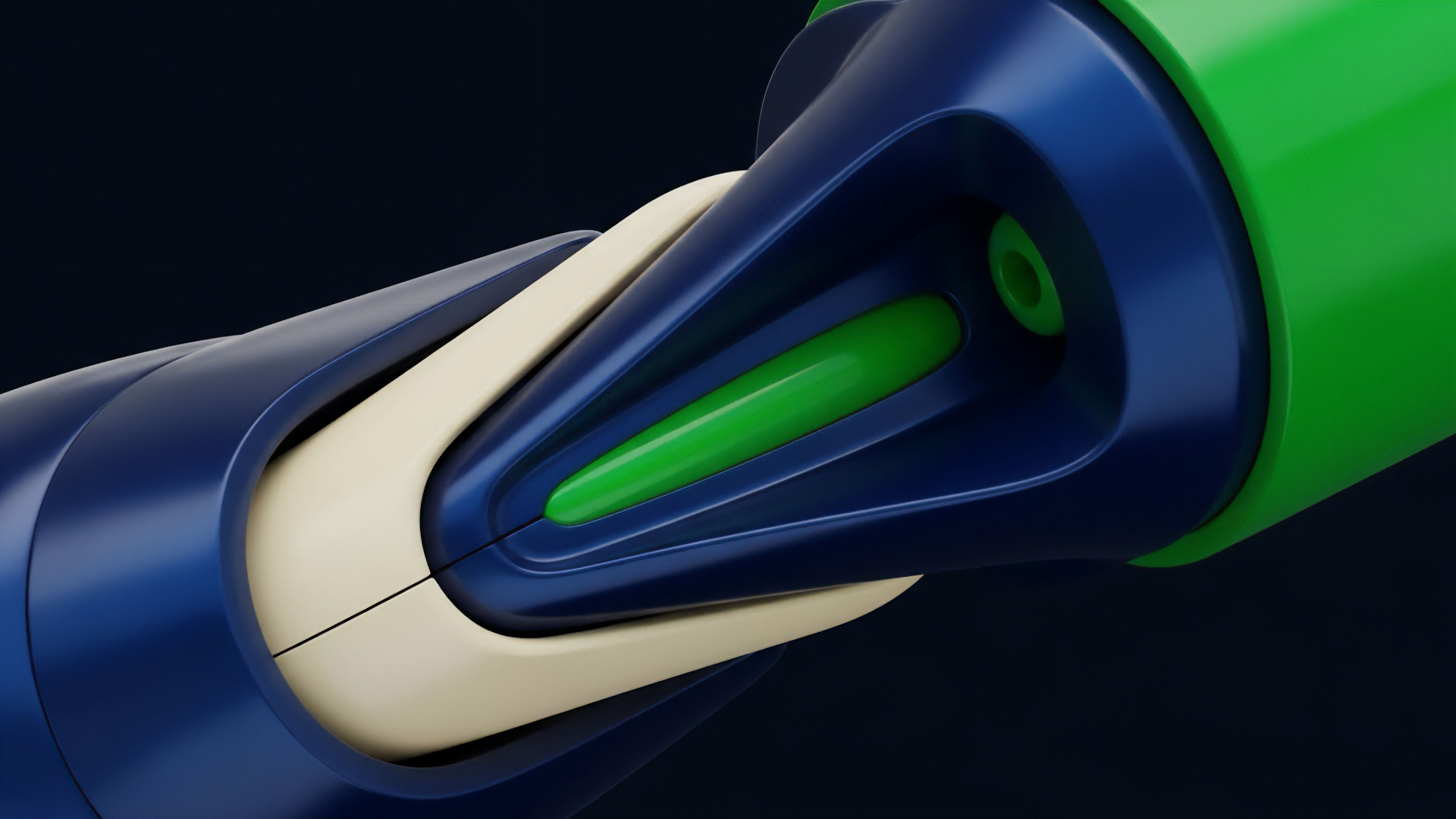

- Data Normalization: Stripping exchange-specific metadata to create a unified packet format for internal processing.

- State Synchronization: Maintaining a local mirror of the order book that is updated in real-time via delta messages.

- Conflict Resolution: Identifying and correcting sequence gaps or out-of-order messages that occur during network congestion.

- Feature Engineering: Calculating real-time metrics such as order flow imbalance and book pressure for algorithmic consumption.
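The synchronization, conflict-resolution, and feature steps above can be sketched together as a local book mirror that applies deltas in sequence. This is a simplified illustration with hypothetical names (`LocalBook`, `SequenceGap`), and it assumes the feed numbers its messages consecutively. The top-of-book imbalance is used as a simple stand-in for richer order flow metrics.

```python
class SequenceGap(Exception):
    """Raised when a delta arrives with an unexpected sequence number."""


class LocalBook:
    """Local mirror of one order book, built from a snapshot plus deltas."""

    def __init__(self, bids, asks, sequence):
        self.bids = dict(bids)   # price -> size
        self.asks = dict(asks)
        self.sequence = sequence

    def apply_delta(self, sequence, side, price, size):
        if sequence <= self.sequence:
            return False                      # stale or duplicate: ignore
        if sequence != self.sequence + 1:
            # Missing messages: the caller should re-request a snapshot.
            raise SequenceGap(f"expected {self.sequence + 1}, got {sequence}")
        levels = self.bids if side == "bid" else self.asks
        if size == 0:
            levels.pop(price, None)           # size 0 removes the level
        else:
            levels[price] = size
        self.sequence = sequence
        return True

    def top_imbalance(self):
        """(bid size - ask size) / (bid size + ask size) at the best levels."""
        bid_size = self.bids[max(self.bids)]
        ask_size = self.asks[min(self.asks)]
        return (bid_size - ask_size) / (bid_size + ask_size)


book = LocalBook({99.0: 3.0}, {101.0: 1.0}, sequence=10)
book.apply_delta(11, "bid", 99.5, 2.0)              # applied
duplicate = book.apply_delta(11, "bid", 99.5, 9.0)  # ignored, returns False
try:
    book.apply_delta(13, "ask", 101.0, 0)           # sequence 12 is missing
except SequenceGap as gap:
    print("resync needed:", gap)
print(book.top_imbalance())   # positive: more size on the best bid
```

Raising on a gap rather than applying the message keeps the mirror from silently diverging; the usual recovery is to discard the local state and rebuild from a fresh snapshot.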

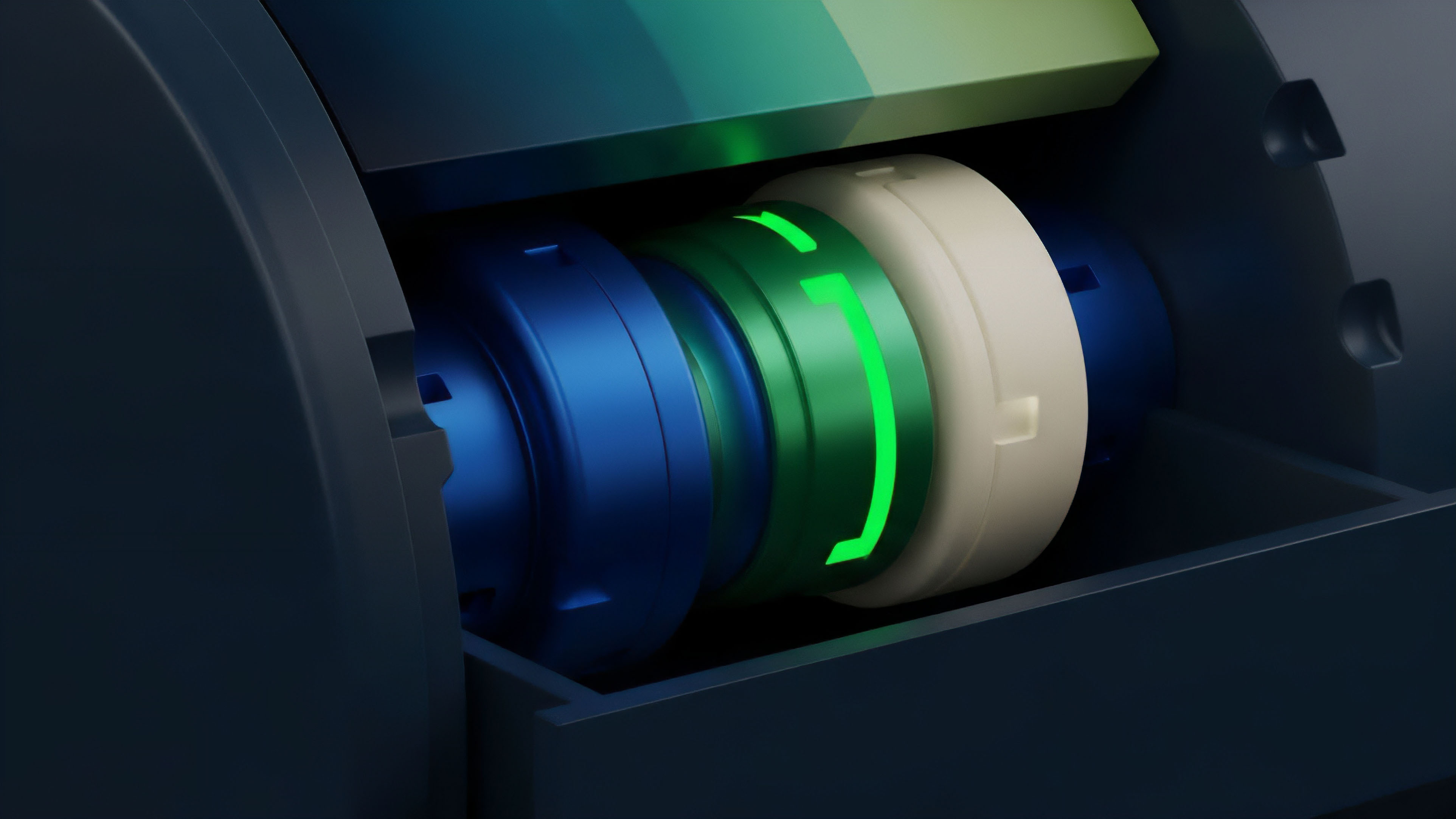

Asynchronous data ingestion remains the primary bottleneck for real-time risk management in decentralized derivatives.

The use of binary formats like SBE (Simple Binary Encoding) or FlatBuffers reduces the serialization overhead, which is vital for maintaining low-latency streams. Order Book Data Processing in a production environment often utilizes kernel bypass techniques and FPGA acceleration to shave microseconds off the ingestion time. This technical rigor is not a luxury; it is a survival requirement in an environment where the fastest actor captures the majority of the arbitrage opportunities.
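The serialization saving can be illustrated with a fixed-layout message built with Python's standard `struct` module. The layout below is a hypothetical one chosen for this example; it mimics the fixed-offset idea behind formats such as SBE but is not the SBE wire format.

```python
import json
import struct

# Hypothetical fixed layout (little-endian, no padding):
# sequence u64, price in ticks i64, size in lots i64, side u8.
UPDATE_LAYOUT = struct.Struct("<QqqB")


def encode_update(sequence, price_ticks, size_lots, side):
    """Pack one update into a fixed-width byte string."""
    return UPDATE_LAYOUT.pack(sequence, price_ticks, size_lots, side)


def decode_update(payload):
    """Unpack a fixed-width byte string back into its four fields."""
    return UPDATE_LAYOUT.unpack(payload)


binary = encode_update(17, 5_000_050, 25, 1)
text = json.dumps({"sequence": 17, "price_ticks": 5_000_050,
                   "size_lots": 25, "side": 1}).encode()
print(len(binary), len(text))   # the fixed layout is 25 bytes; JSON is larger
print(decode_update(binary))    # (17, 5000050, 25, 1)
```

Beyond the smaller payload, every field sits at a known byte offset, so a reader can decode it without scanning or parsing text. Prices and sizes are carried as integer ticks and lots to avoid floating-point representation issues.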

Architectural Decentralization Shift

The movement from centralized venues to decentralized execution environments has redefined the constraints of Order Book Data Processing.

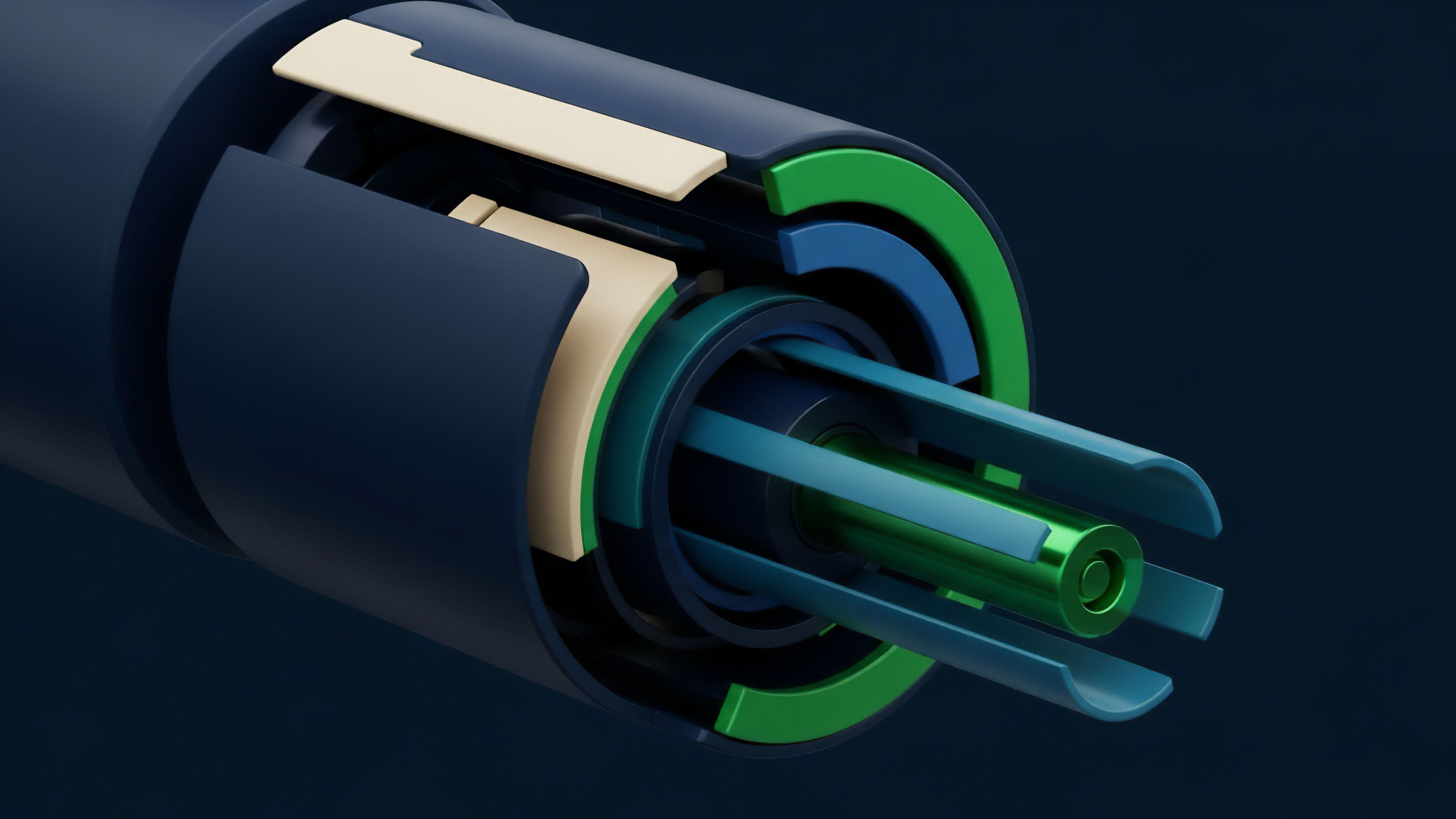

Centralized exchanges benefit from a single source of truth and sub-millisecond execution. Conversely, decentralized CLOBs must contend with the latency of distributed consensus. The rise of “App-Chains” and high-performance Layer 2s has narrowed this gap, allowing for off-chain matching with on-chain settlement.

| Feature | Centralized Exchange (CEX) | Decentralized CLOB (DEX) |
|---|---|---|
| Matching Latency | Microseconds | Milliseconds to Seconds |
| Data Transparency | Limited to API output | Full on-chain auditability |
| Custody Risk | High (Exchange held) | Low (Self-custody) |
| Order Cancellation | Free and Instant | Often requires gas or fee |

This evolution has also introduced the concept of “Just-In-Time” liquidity and MEV-aware order books. Order Book Data Processing now includes the analysis of the mempool to anticipate incoming orders and adjust quotes accordingly. The integration of zero-knowledge proofs is the next logical step, allowing for private order books that protect trader intent while still providing verifiable proof of execution.

This shift represents a move toward a more resilient and censorship-resistant financial infrastructure.

Privacy Preserving Order Discovery

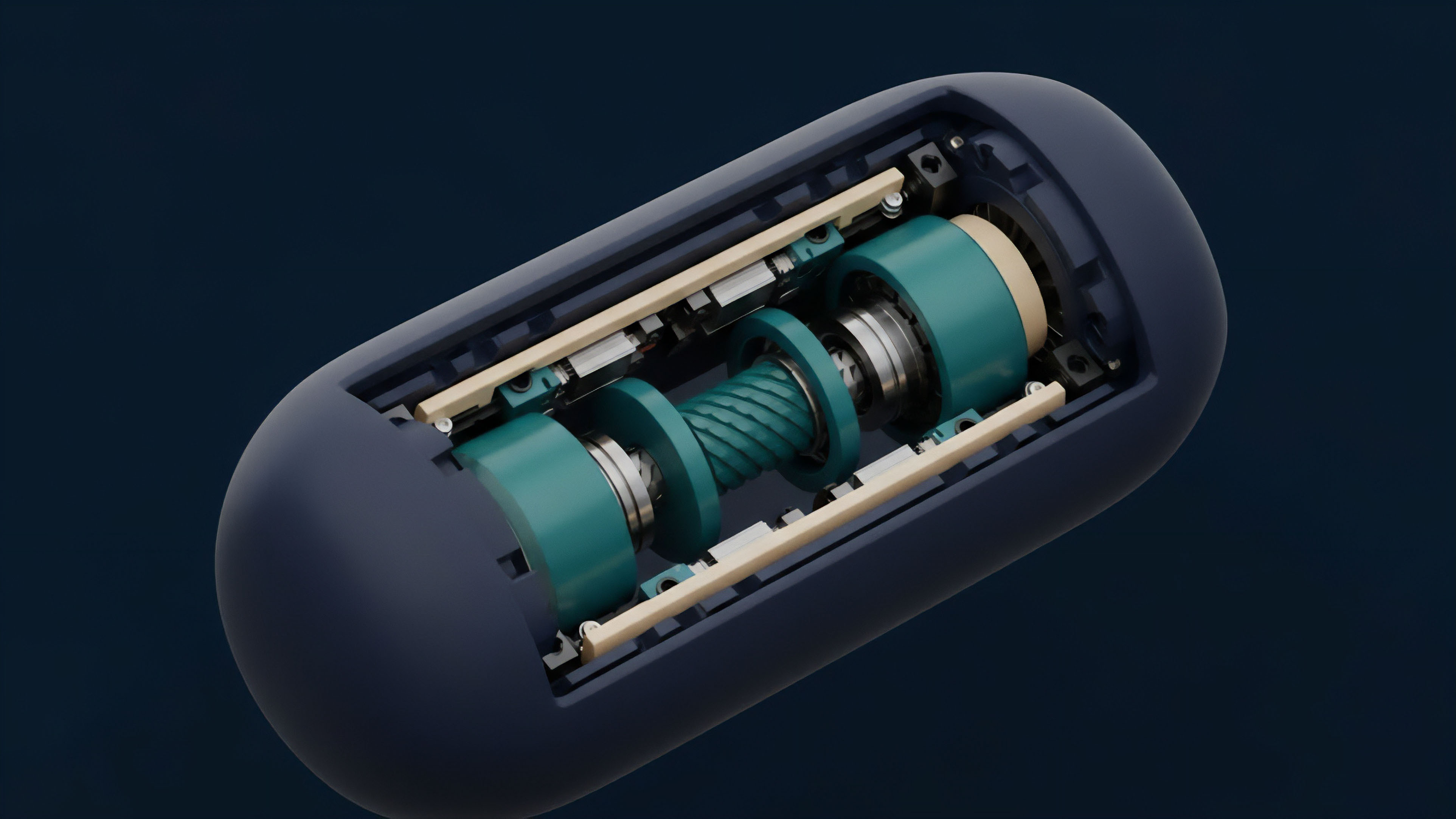

The future trajectory of Order Book Data Processing points toward a synthesis of privacy and efficiency. Asynchronous matching engines will likely replace the strict serial processing of today, allowing for greater scalability across sharded networks. This will require new mathematical models to ensure that price discovery remains robust even when the order of events is not perfectly synchronized across all nodes.

- Zero-Knowledge Dark Pools: Liquidity is visible in the aggregate, but individual order sizes and prices remain encrypted until execution.

- Cross-Chain Liquidity Aggregation: Processing engines that can unify order books across multiple sovereign blockchains in real-time.

- AI-Driven Sequencers: Matching engines that use machine learning to optimize order flow and minimize the impact of toxic arbitrage.

- Intent-Based Architectures: Moving away from explicit limit orders toward signed intents that solvers can batch and execute efficiently.

As these technologies mature, the distinction between a centralized and decentralized order book will blur. The focus will shift from where the data is processed to how the integrity of that process is guaranteed. Order Book Data Processing will remain the foundational layer upon which the next generation of crypto derivatives is built, providing the transparency and efficiency requisite for a truly open financial system. The ability to process vast amounts of data with cryptographic certainty will define the next era of market microstructure.
