Essence

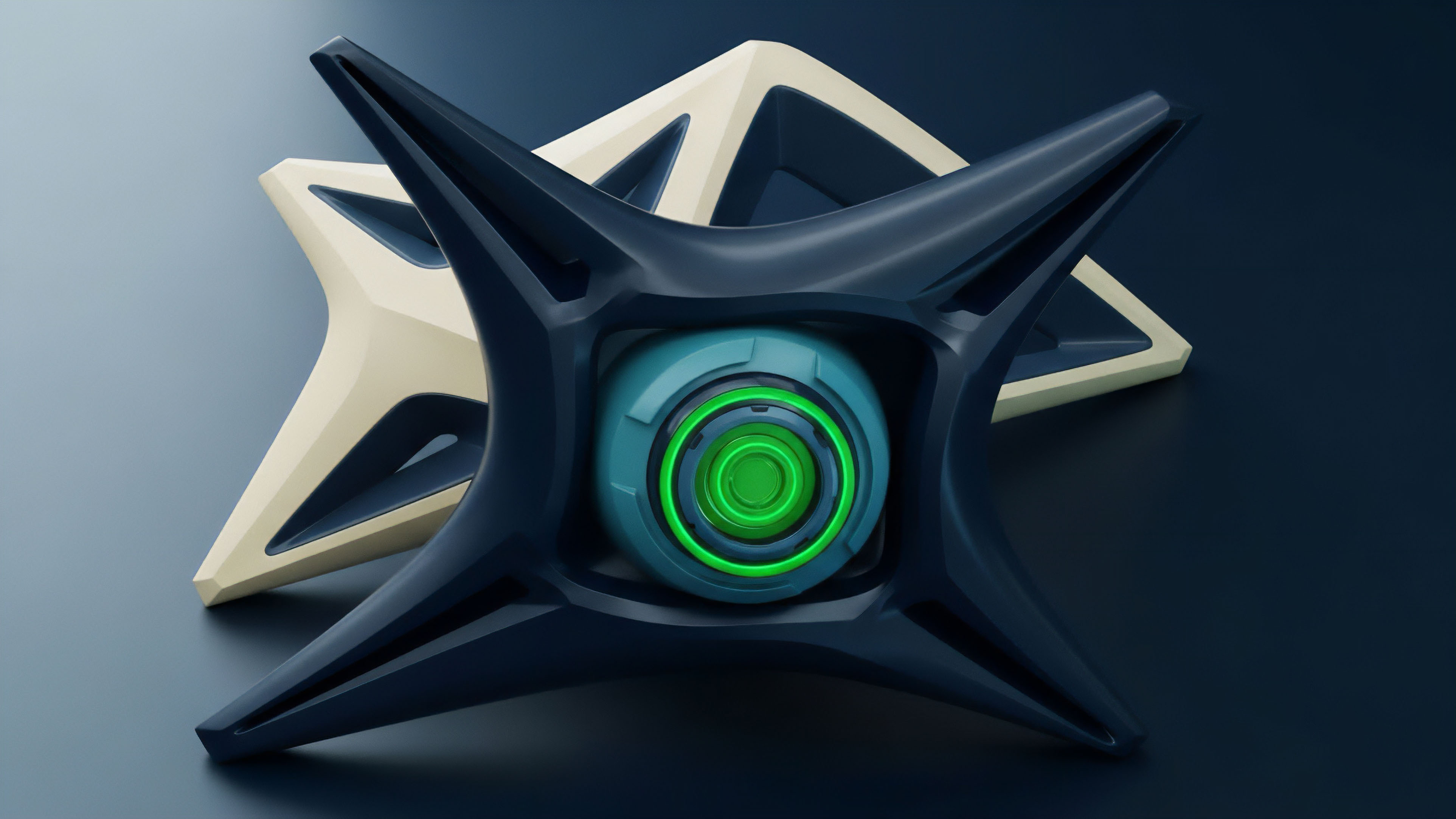

The true load-bearing capacity of any crypto options market is visible only through its order book depth, and the Liquidity Heatmap Aggregation Engine (LHAE) is the necessary tool for rendering this systemic truth. This engine is a high-frequency data pipeline that ingests, cleanses, and synthesizes limit order book snapshots and updates from multiple centralized and decentralized derivatives venues. Its output is a unified, visual representation (a heatmap) that maps available liquidity (volume) against price levels (depth) and time (latency).

The LHAE moves the analysis beyond simple top-of-book quotes, focusing instead on the actual capital required to move the options price by a defined increment, known as the effective market depth. This is a crucial distinction, as superficial liquidity can mask a market’s true fragility, particularly during high-volatility events where cascading liquidations are triggered by thin depth beyond the first few price levels.
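To make the distinction between top-of-book liquidity and effective market depth concrete, here is a minimal Python sketch. The function name `capital_to_move_price`, the `(price, size)` level layout, and the sample book are assumptions made for illustration; they are not part of any specific LHAE implementation.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size) for one ask level


def capital_to_move_price(asks: List[Level], increment: float) -> float:
    """Notional needed to lift every ask priced below best_ask + increment.

    Once those levels are consumed, the best ask sits at least
    `increment` above where it started.
    """
    if not asks:
        return 0.0
    levels = sorted(asks)                  # best (lowest) ask first
    limit = levels[0][0] + increment
    return sum(price * size for price, size in levels if price < limit)


# Illustrative, made-up ask side of an aggregated book.
asks = [(100.0, 2.0), (100.5, 1.0), (101.0, 4.0), (102.0, 10.0)]
top_of_book_notional = asks[0][0] * asks[0][1]       # 200.0
effective_depth = capital_to_move_price(asks, 1.0)   # 300.5
```

In this toy book the quote alone suggests 200.0 of resting notional, while moving the best ask by a full 1.0 takes 300.5. The gap between the two numbers is what the heatmap is meant to expose.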

The Liquidity Heatmap Aggregation Engine transforms fragmented order book data into a single, probabilistic map of systemic liquidity and price resistance.

The core function of the LHAE is to provide an accurate reading of the market microstructure across all execution venues where a specific crypto options contract is traded. Without this unified view, market participants, from sophisticated market makers to protocol risk managers, are operating with a partial and delayed understanding of their exposure. The engine’s output serves as the foundational layer for calculating Volume-Weighted Average Price (VWAP) and Time-Weighted Average Price (TWAP) for large options block trades, ensuring execution quality in environments where slippage is a primary cost of doing business.
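As a rough illustration of how aggregated depth feeds an execution estimate, the sketch below walks the ask side of a book to compute the expected volume-weighted fill price of a block buy. The function `expected_fill_vwap` and the sample levels are hypothetical, and the model assumes the visible depth is fully executable, which the filtering stages described later are meant to correct.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size)


def expected_fill_vwap(asks: List[Level], quantity: float) -> float:
    """Average price paid when buying `quantity` by walking the ask side."""
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    remaining = quantity
    cost = 0.0
    for price, size in sorted(asks):       # cheapest level first
        take = min(size, remaining)
        cost += take * price
        remaining -= take
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("not enough visible depth to fill the order")
    return cost / quantity


asks = [(100.0, 2.0), (100.5, 1.0), (101.0, 4.0)]
vwap = expected_fill_vwap(asks, 5.0)       # 100.5
slippage = vwap - asks[0][0]               # 0.5 above the best ask
```

A TWAP estimate would repeat the same calculation on successive snapshots and average the results over time.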

The systemic implication is clear: a robust LHAE acts as a decentralized financial system’s optic nerve, providing the high-definition vision required to manage risk in an adversarial, low-latency environment.

Origin

The conceptual origin of the LHAE is rooted in the long-established study of Limit Order Book (LOB) mechanics on traditional exchanges, but its modern form is a direct response to the fragmentation inherent in the crypto derivatives landscape. Traditional finance LOB analysis focused on a single, monolithic venue, allowing for straightforward modeling of queue priority and price discovery.

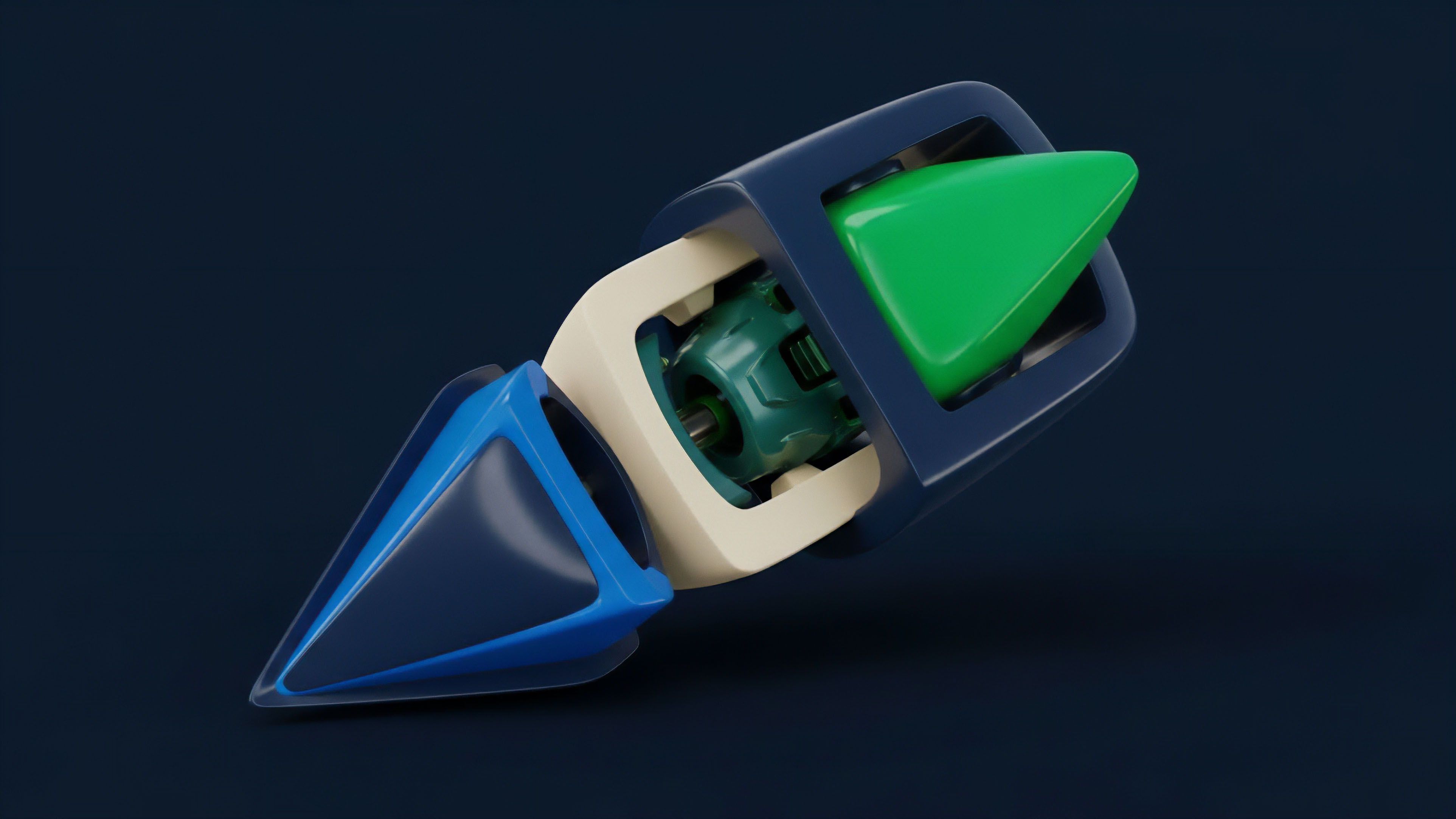

However, the crypto market introduced multiple, often siloed, execution environments: centralized exchanges, decentralized order book protocols, and automated market maker (AMM) pools for options. The LHAE was conceived to solve the fundamental problem of data heterogeneity and liquidity fragmentation. The need for this aggregation became acute with the rise of decentralized options protocols, which often lack the deep, centralized order flow of their traditional counterparts.

A market maker cannot price a BTC call option accurately on a decentralized venue if the majority of the hedging liquidity for the underlying asset resides on a separate centralized exchange. The LHAE, therefore, is an architectural necessity, a bridge between disparate settlement layers, born from the realization that price discovery is a distributed, not localized, phenomenon in this asset class. Its initial prototypes were crude, often relying on simple, time-stamped JSON data streams, but the imperative for low-latency, cross-venue correlation quickly drove the design toward sophisticated, event-driven data architectures.

Theory

The theoretical underpinnings of the Liquidity Heatmap Aggregation Engine are drawn from Market Microstructure Theory and the mathematical modeling of queue dynamics. The engine does not simply sum up visible orders; it applies a complex filtering and weighting process to estimate the true, executable depth. A key concept is the calculation of Imbalance Metrics, which quantify the pressure on a price level by comparing the aggregated volume of bids versus asks.

This metric is a direct input into short-term price prediction models, often serving as a high-frequency proxy for the collective conviction of market participants. The engine’s effectiveness hinges on its ability to manage the twin adversarial challenges of latency and intentional data manipulation. The latency component requires a time-synchronization layer that aligns event streams from sources with different clock drift and reporting speeds, a problem often solved using an NTP-synchronized, event-sourcing architecture that stamps all data with a canonical time before processing.
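One common way to express an imbalance metric is the normalized difference between bid and ask volume over the top few levels. The sketch below assumes that form; the function name `book_imbalance`, the `levels` parameter, and the sample book are illustrative choices, not something the engine prescribes.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size)


def book_imbalance(bids: List[Level], asks: List[Level], levels: int = 5) -> float:
    """Return (bid_vol - ask_vol) / (bid_vol + ask_vol) over the top `levels`.

    The result lies in [-1, 1]: positive values mean more resting
    bid volume, negative values mean more resting ask volume.
    """
    top_bids = sorted(bids, reverse=True)[:levels]   # highest bids first
    top_asks = sorted(asks)[:levels]                 # lowest asks first
    bid_vol = sum(size for _, size in top_bids)
    ask_vol = sum(size for _, size in top_asks)
    total = bid_vol + ask_vol
    return 0.0 if total == 0 else (bid_vol - ask_vol) / total


bids = [(99.5, 6.0), (99.0, 2.0)]
asks = [(100.0, 2.0), (100.5, 2.0)]
imbalance = book_imbalance(bids, asks)   # (8 - 4) / 12, roughly 0.33
```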

Data manipulation, particularly spoofing (the practice of placing large, non-bona-fide orders to trick algorithms), is mitigated through sophisticated filtering algorithms. These algorithms apply a decay function to orders that are repeatedly canceled or amended without execution, reducing their weight in the final heatmap calculation. The resulting data structure is not a simple ledger but a multi-dimensional tensor representing the probability distribution of execution depth.
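The decay idea can be sketched with a simple exponential weight. The exponential form, the `decay_rate` default of 0.5, and the function names are assumptions chosen for illustration; a production filter would fit these to observed behavior.

```python
import math


def cancel_decay_weight(unexecuted_changes: int, decay_rate: float = 0.5) -> float:
    """Weight in (0, 1] that shrinks with each cancel/amend made without a fill."""
    return math.exp(-decay_rate * max(unexecuted_changes, 0))


def weighted_size(size: float, unexecuted_changes: int, decay_rate: float = 0.5) -> float:
    """Size that an order contributes to the heatmap after the decay is applied."""
    return size * cancel_decay_weight(unexecuted_changes, decay_rate)


# An order that was never amended keeps its full size;
# one amended four times without executing contributes far less.
fresh = weighted_size(100.0, 0)
churned = weighted_size(100.0, 4)
```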

Microstructural Data Modeling

The LHAE’s processing pipeline executes several critical microstructural analyses to produce a reliable output:

- Effective Spread Calculation: Measures the true cost of execution by accounting for the depth required to fill an order, providing a more honest metric than the quoted bid-ask spread.

- Order Flow Toxicity Analysis: Assesses the likelihood that an incoming order is based on superior, non-public information, using the imbalance metrics and the rate of order book change as inputs.

- Queue Depletion Rate: Calculates the velocity at which standing limit orders are consumed, which is a direct measure of market stress and impending volatility.
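Two of the analyses above lend themselves to a short sketch. Here the depth-aware spread is modeled as the difference between the average price to buy and to sell a given quantity, and the depletion rate as executed volume per second at a level. Both definitions, along with the names `depth_aware_spread` and `queue_depletion_rate`, are simplifying assumptions; toxicity analysis is omitted because it needs a richer trade-flow model.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size)


def _fill_vwap(levels_best_first: List[Level], quantity: float) -> float:
    """Average fill price for `quantity`, walking levels from best to worst."""
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    remaining, cost = quantity, 0.0
    for price, size in levels_best_first:
        take = min(size, remaining)
        cost += take * price
        remaining -= take
        if remaining <= 0:
            return cost / quantity
    raise ValueError("not enough depth to fill the order")


def depth_aware_spread(bids: List[Level], asks: List[Level], quantity: float) -> float:
    """Round-trip cost per unit: average buy price minus average sell price."""
    buy = _fill_vwap(sorted(asks), quantity)
    sell = _fill_vwap(sorted(bids, reverse=True), quantity)
    return buy - sell


def queue_depletion_rate(executed_volume: float, seconds: float) -> float:
    """Volume consumed from a price level per second of observation."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return executed_volume / seconds


bids = [(99.5, 1.0), (99.0, 4.0)]
asks = [(100.0, 2.0), (100.5, 1.0), (101.0, 4.0)]
quoted_spread = asks[0][0] - bids[0][0]              # 0.5
spread_for_3 = depth_aware_spread(bids, asks, 3.0)   # about 1.0
```

In this made-up book the quoted spread is 0.5, yet trading three units in each direction costs about 1.0 per unit, twice what the top of the book implies.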

Adversarial Mitigation Framework

The system must be built to withstand deliberate deception, a reality in any adversarial financial system. The primary tool is a statistical model that flags and weights orders based on their historical execution probability.

| Heuristic | Description | Impact on Heatmap Weight |
|---|---|---|
| Order-to-Trade Ratio (OTR) | Ratio of order messages (new, cancel, amend) to actual trades. | High OTR results in significant weight reduction. |
| Time-in-Queue Decay | Orders that remain in the book for a short duration before cancellation. | Exponential decay of influence based on cancellation speed. |
| Size Threshold Deviation | Orders significantly larger than the venue’s historical average trade size. | Subject to increased scrutiny and cross-venue validation. |

Accurate options pricing requires moving beyond simple quote aggregation to a statistical analysis of order book queue dynamics and the detection of adversarial liquidity.

Approach

The implementation of a robust Liquidity Heatmap Aggregation Engine demands a systems engineering approach that respects the physics of information flow. A critical initial step is the standardization of the raw data feed, which is a significant technical hurdle given the variety of APIs (WebSocket, FIX, proprietary) and data formats across centralized and decentralized venues. The data must be normalized into a single, canonical schema before any aggregation can occur.

This normalization layer is the first line of defense against data heterogeneity.
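A minimal sketch of such a normalization layer follows. Both venue message layouts, the field names (`sym`, `b`, `a`, `t`, `levels`, `px`, `qty`, `ts_us`), and the instrument label are invented for illustration and do not correspond to any real exchange API. The point is only that every adapter emits the same `BookLevel` record with one timestamp unit.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class BookLevel:
    """Canonical record that every venue adapter must emit."""
    venue: str
    instrument: str
    side: str       # "bid" or "ask"
    price: float
    size: float
    ts_ns: int      # event time in nanoseconds since the Unix epoch


def normalize_venue_a(msg: Dict) -> List[BookLevel]:
    """Hypothetical venue A: string [price, size] pairs, millisecond timestamps."""
    ts_ns = int(msg["t"]) * 1_000_000
    out = []
    for side, key in (("bid", "b"), ("ask", "a")):
        for price, size in msg.get(key, []):
            out.append(BookLevel("venue_a", msg["sym"], side,
                                 float(price), float(size), ts_ns))
    return out


def normalize_venue_b(msg: Dict) -> List[BookLevel]:
    """Hypothetical venue B: one dict per level, buy/sell labels, microsecond timestamps."""
    side_map = {"buy": "bid", "sell": "ask"}
    return [
        BookLevel("venue_b", msg["instrument"], side_map[lvl["side"]],
                  float(lvl["px"]), float(lvl["qty"]), int(lvl["ts_us"]) * 1_000)
        for lvl in msg["levels"]
    ]


# Made-up messages for the same (fictional) instrument.
msg_a = {"sym": "EXAMPLE-CALL", "t": 1_700_000_000_000,
         "b": [["0.050", "10"]], "a": [["0.055", "4"]]}
msg_b = {"instrument": "EXAMPLE-CALL",
         "levels": [{"side": "sell", "px": 0.056, "qty": 7, "ts_us": 1_700_000_000_000_250}]}

unified = normalize_venue_a(msg_a) + normalize_venue_b(msg_b)
unified.sort(key=lambda lvl: lvl.ts_ns)   # one ordering across venues
```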

The Challenge of Latency Arbitrage

In the high-stakes world of crypto options, the LHAE must operate with microsecond precision. A delay of just a few milliseconds can render the heatmap obsolete, particularly around major market events. The entire system must be deployed as close as possible to the data sources, a concept known as co-location, to minimize network transit time.

This is a practical, physical constraint that often dictates the cloud architecture, and it feeds directly into the pricing model, where it is dangerous to ignore. If your input data is even slightly stale, your perceived options Delta, Gamma, or Vega will be based on a fictional liquidity profile, making your hedging strategy actively destabilizing.

The reliance on stale data is a systemic vulnerability that cannot be compensated for with more sophisticated mathematical models; the foundation must be sound.

Adversarial Data Cleaning

The most challenging aspect of the approach is the continuous, real-time cleansing of data. The LHAE must run a live Pattern Recognition Engine to identify and neutralize the impact of spoofing and wash trading. This requires training machine learning models on historical data to distinguish genuine market interest from manipulative signals.

- Venue Weighting: Assigning a credibility score to each venue based on its regulatory oversight, historical trade volume, and observed level of manipulative activity. Data from a highly regulated, high-volume venue carries a greater weight than data from an opaque, low-volume protocol.

- Cross-Book Correlation: Comparing the imbalance metrics across different exchanges for the same underlying asset. Divergence in these metrics often signals an isolated manipulative attempt on a single venue.

- Execution Probability Modeling: Using historical order-to-trade ratios and order lifetime analysis to assign a probabilistic weight to every limit order, effectively filtering out orders that are statistically unlikely to execute.
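The three techniques above can be combined in a small sketch: each order's size is scaled by its execution probability and its venue's credibility score, and venues whose imbalance strays far from the cross-venue median are flagged. The scores, probabilities, the 0.5 threshold, and the function names are all assumed values for illustration; in practice they would come from the trained models described above.

```python
import statistics
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

# (venue, price, size, execution_probability)
Order = Tuple[str, float, float, float]


def weighted_depth(orders: Iterable[Order], venue_score: Dict[str, float]) -> Dict[float, float]:
    """Aggregate size per price, discounted by execution probability and venue score."""
    depth: Dict[float, float] = defaultdict(float)
    for venue, price, size, exec_prob in orders:
        # Venues without a score contribute nothing until they are assessed.
        depth[price] += size * exec_prob * venue_score.get(venue, 0.0)
    return dict(depth)


def divergent_venues(imbalance_by_venue: Dict[str, float], threshold: float = 0.5) -> List[str]:
    """Venues whose imbalance differs from the cross-venue median by more than `threshold`."""
    if not imbalance_by_venue:
        return []
    median = statistics.median(imbalance_by_venue.values())
    return sorted(v for v, x in imbalance_by_venue.items() if abs(x - median) > threshold)


orders = [("A", 100.0, 10.0, 0.9), ("B", 100.0, 10.0, 0.2)]
scores = {"A": 1.0, "B": 0.5}
depth = weighted_depth(orders, scores)   # 20 visible units count as about 10
flagged = divergent_venues({"A": 0.10, "B": 0.15, "C": -0.80})   # ["C"]
```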

Evolution

The evolution of order book analysis software tracks the maturation of the crypto derivatives market itself, moving from a single-venue focus to a multi-protocol, multi-asset systemic view. Early systems focused primarily on centralized exchange data, where the primary challenge was high-speed ingestion and basic visualization. The true architectural shift began with the rise of decentralized finance (DeFi) and the introduction of options on AMMs and hybrid order books.

This necessitated a transition from simple data ingestion to Protocol Physics Synthesis.

The evolution of order book analysis reflects a move from simple data aggregation to a high-dimensional systemic risk modeling that accounts for protocol-specific liquidation mechanics.

The key evolutionary milestones include:

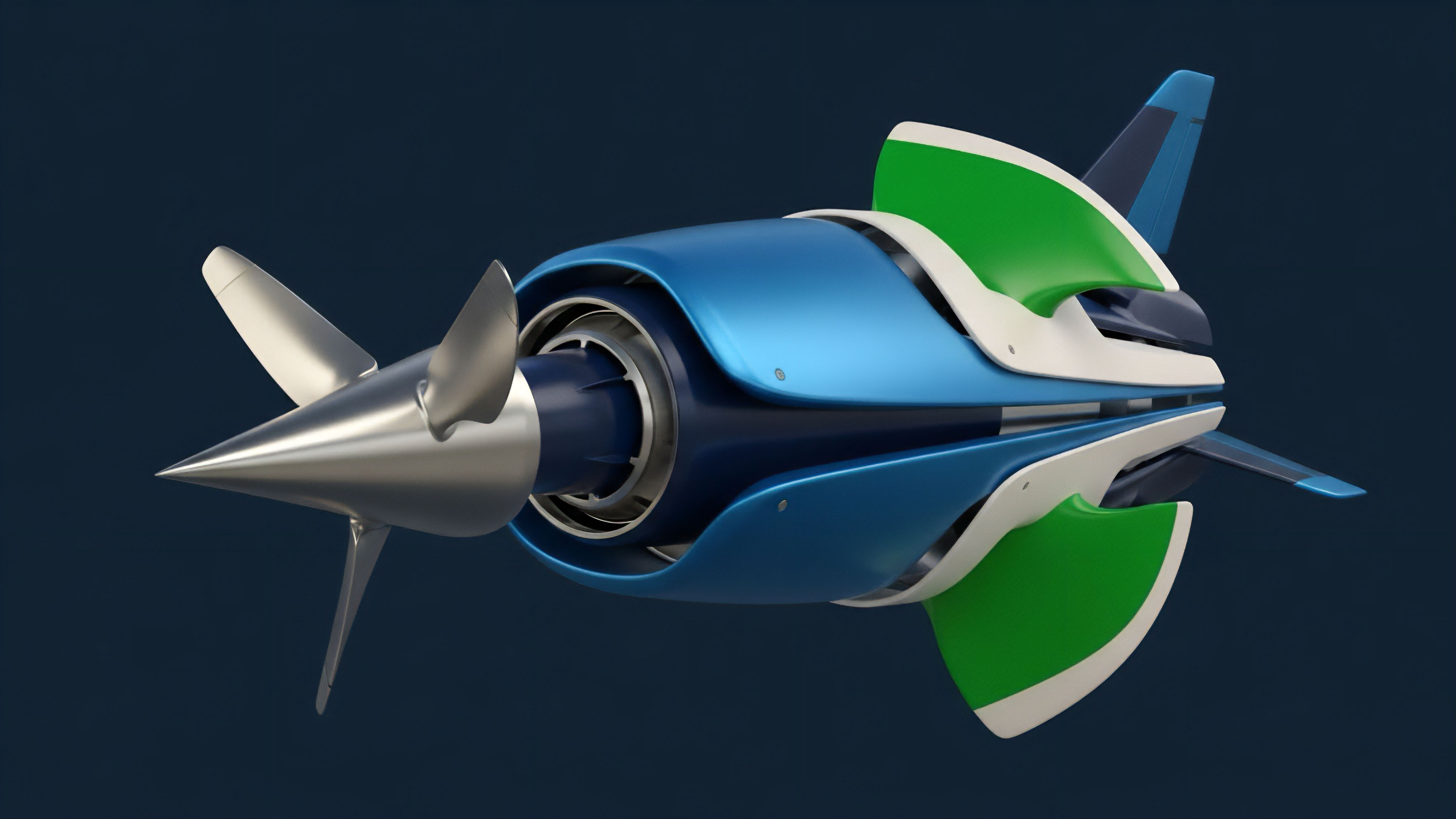

- Decentralized Venue Integration: The necessity of parsing smart contract events (e.g. Uniswap v3 ticks, options vault deposits) and translating these into an equivalent limit order book depth representation, despite the underlying mechanism being pool-based, not order-based.

- Greeks-Informed Heatmaps: The shift from purely price-volume heatmaps to visualizations that incorporate the aggregated Gamma and Vega exposure at different strike prices. This allows a risk manager to see where the market is most structurally sensitive to a small price movement or a volatility shock.

- Cross-Asset Depth Mapping: The realization that options liquidity for a specific token (e.g. ETH) is functionally linked to the liquidity of its perpetual futures and spot pairs. The LHAE evolved to map these interdependencies, providing a consolidated view of the capital required to move the entire complex, not just the option itself.

- Liquidation Cluster Identification: Advanced LHAE versions now actively scan the order book and derivatives funding rates to model potential cascading liquidation points, transforming the tool from a trading aid into a systemic risk assessment dashboard.
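For the first milestone, translating pool-based liquidity into order-book-style depth, the sketch below uses the standard concentrated-liquidity relationship from Uniswap v3-style pools: a position with liquidity L over a price range holds L × (1/√p_lo − 1/√p_hi) of the base token when the pool price is at or below the range. It assumes constant liquidity across the range, ignores fees, and assumes all bucket edges sit at or above the current pool price. Options AMMs may use different curves, so treat this as one possible mapping rather than a general one.

```python
import math
from typing import List, Tuple


def range_token0_amount(liquidity: float, price_lo: float, price_hi: float) -> float:
    """Base-token amount offered as the price moves from price_lo up to price_hi."""
    return liquidity * (1.0 / math.sqrt(price_lo) - 1.0 / math.sqrt(price_hi))


def pool_to_ask_levels(liquidity: float, price_edges: List[float]) -> List[Tuple[float, float]]:
    """Turn consecutive price buckets into synthetic (price, size) ask levels.

    Each bucket is priced at the geometric mean of its edges, which is
    the average execution price across that bucket when fees are ignored.
    """
    levels = []
    for lo, hi in zip(price_edges, price_edges[1:]):
        levels.append((math.sqrt(lo * hi), range_token0_amount(liquidity, lo, hi)))
    return levels


# Hypothetical pool with liquidity 1000 and buckets 100-121 and 121-144.
synthetic_asks = pool_to_ask_levels(1000.0, [100.0, 121.0, 144.0])
```

The resulting `(price, size)` pairs can be merged with genuine limit orders from order-book venues, which is what allows a single heatmap to cover both mechanisms.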

This evolutionary arc demonstrates a move away from simplistic visualization toward a highly complex, interconnected systems analysis. The system architect’s focus shifted from simply reporting the data to actively stress-testing the financial foundation of the underlying protocols.

Horizon

The future of the Liquidity Heatmap Aggregation Engine lies at the intersection of quantitative finance, smart contract security, and decentralized governance.

The next generation of LHAEs will not just report liquidity; they will be active, autonomous agents embedded within decentralized protocols.

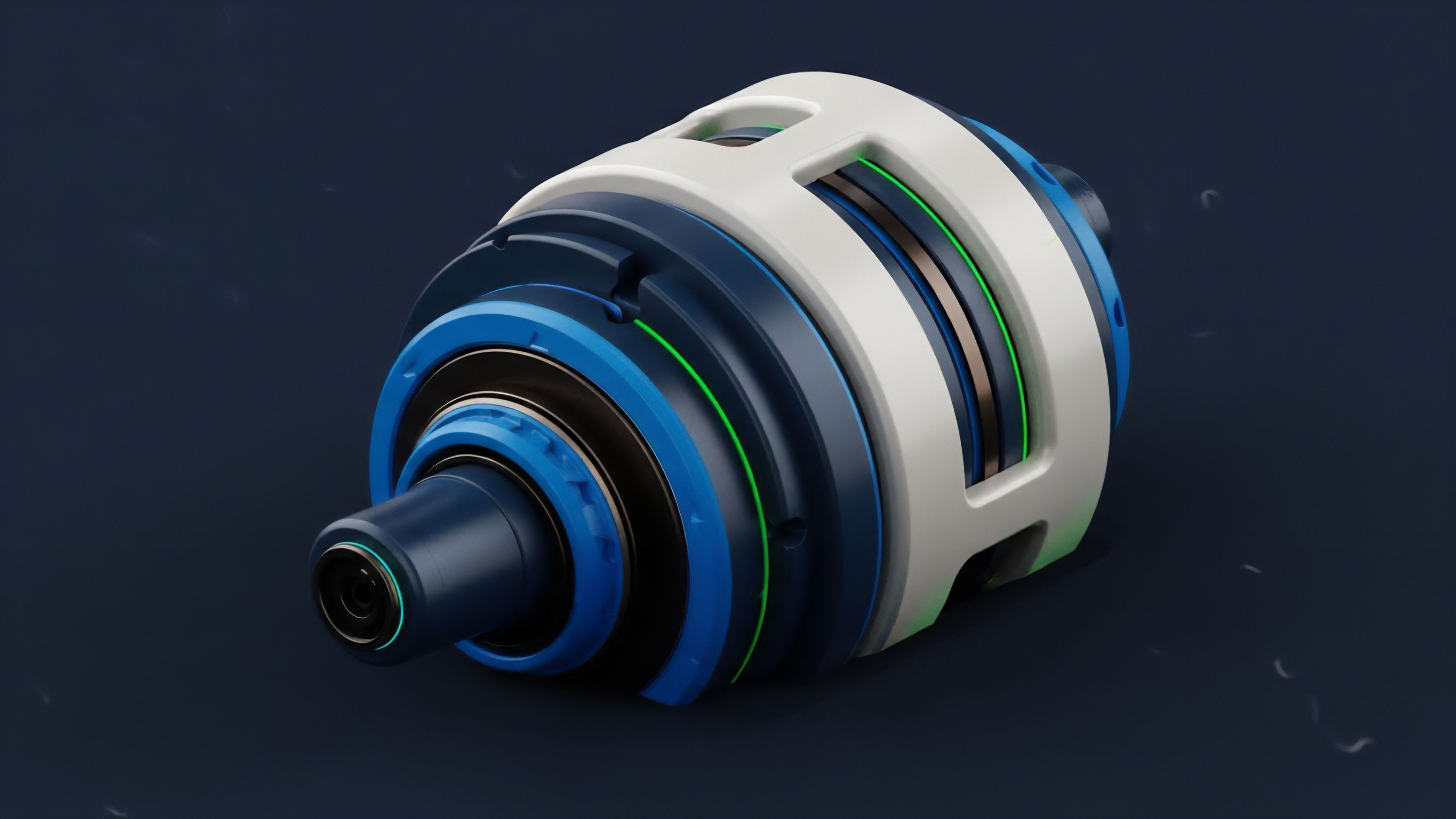

Protocol Physics and Automated Risk

The most significant leap will be the integration of LHAE output directly into the risk and margin engines of decentralized options protocols. This creates a closed-loop system where the protocol’s internal parameters (such as collateral requirements, liquidation thresholds, and fee structures) dynamically adjust based on real-time, aggregated, and adversarial-filtered liquidity data.

- Autonomous Parameter Adjustment: Governance systems will delegate limited authority to the LHAE to tighten margin requirements when the aggregated liquidity heatmap shows dangerously thin depth, effectively stress testing the foundation and pre-empting contagion.

- Decentralized Liquidity Provisioning: The LHAE will inform automated market maker strategies, directing capital to specific price and strike ranges where the heatmap indicates a structural gap or an arbitrage opportunity created by cross-venue imbalance.

- Synthetic Data Generation: Advanced models will use the aggregated depth to generate synthetic order book data for back-testing new options pricing models, allowing quantitative analysts to simulate market conditions under extreme liquidity shocks.
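As a purely speculative sketch of autonomous parameter adjustment, the function below scales a margin requirement upward as filtered depth falls below a reference level, with a hard cap standing in for the "limited authority" that governance would delegate. The inverse-depth rule, the cap of 2.0, and the name `margin_multiplier` are assumptions, not a described protocol mechanism.

```python
def margin_multiplier(depth: float, reference_depth: float, max_multiplier: float = 2.0) -> float:
    """Multiplier applied to base margin; 1.0 when depth is at or above the reference.

    Below the reference, the multiplier grows as reference_depth / depth
    and is capped at `max_multiplier`.
    """
    if reference_depth <= 0:
        raise ValueError("reference_depth must be positive")
    if depth >= reference_depth:
        return 1.0
    if depth <= 0:
        return max_multiplier
    return min(max_multiplier, reference_depth / depth)


base_margin = 0.10                                    # 10% initial margin (illustrative)
stressed = base_margin * margin_multiplier(80.0, 100.0)   # 0.10 * 1.25
```

The cap matters as much as the scaling rule: it bounds how far an automated sensor can move protocol parameters before human governance must intervene.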

The Integration of Volatility Surfaces

The LHAE will merge with the live volatility surface. Instead of viewing liquidity and volatility as separate inputs, the future system will project the Implied Volatility (IV) across the strike axis, color-coding the heatmap based on the local Gamma and Vega risk.

| Current Function | Horizon Function | Systemic Impact |
|---|---|---|
| Static Depth Visualization | Dynamic, IV-Weighted Risk Tensors | Real-time, capital-efficient risk pricing. |
| Manual Spoofing Filter | Autonomous, Zero-Latency Adversarial Filter | Enhanced market integrity and execution quality. |
| External Trading Signal | Internal Protocol Risk Governor | Closed-loop, self-regulating decentralized finance. |

The inability to respect the skew is the critical flaw in our current models, and the LHAE’s evolution will force us to confront this reality by visualizing the liquidity available to absorb a sudden shift in implied volatility. The final form of this engine is a self-aware market sensor, a necessary component for achieving true financial system resilience.
