Essence

Oracle Data Quality Control functions as the definitive mechanism for validating and sanitizing off-chain information before it influences decentralized financial protocols. At its heart, this system addresses the inherent trust gap between real-world data feeds and on-chain execution logic. By applying rigorous verification parameters, it mitigates the risk of malicious or erroneous price data triggering unintended liquidations or derivative contract settlements.

Oracle Data Quality Control acts as the necessary filtration layer ensuring that external market data maintains integrity before governing on-chain financial outcomes.

The architecture relies on multi-source aggregation and statistical deviation analysis to detect anomalies in real time. Protocols operating without these controls remain susceptible to price manipulation attacks, where artificial volatility forces insolvency in automated market makers or options vaults. Effective Oracle Data Quality Control prioritizes the consistency of data delivery over raw speed, favoring deterministic outcomes in high-stakes environments.

Origin

The requirement for Oracle Data Quality Control surfaced directly from the fragility observed in early decentralized finance iterations.

Early protocols frequently relied on single-source price feeds, creating a single point of failure that adversarial actors exploited through flash loan attacks. The financial history of the sector remains littered with instances where compromised or delayed price data led to catastrophic loss of liquidity.

- Early Decentralized Systems lacked defensive layers, making them vulnerable to direct manipulation of spot market prices.

- Price Feed Vulnerabilities triggered systemic liquidations when off-chain assets decoupled from their on-chain representations.

- Security Research established that consensus-based verification models provide superior resilience compared to centralized data reporting.

Developers recognized that relying on external data necessitates a defensive posture akin to traditional market surveillance. This realization transformed the design of oracle networks from passive conduits into active, adversarial-resistant validation engines. The transition from simplistic data delivery to complex, quality-controlled data streams marks the maturation of the decentralized derivative sector.

Theory

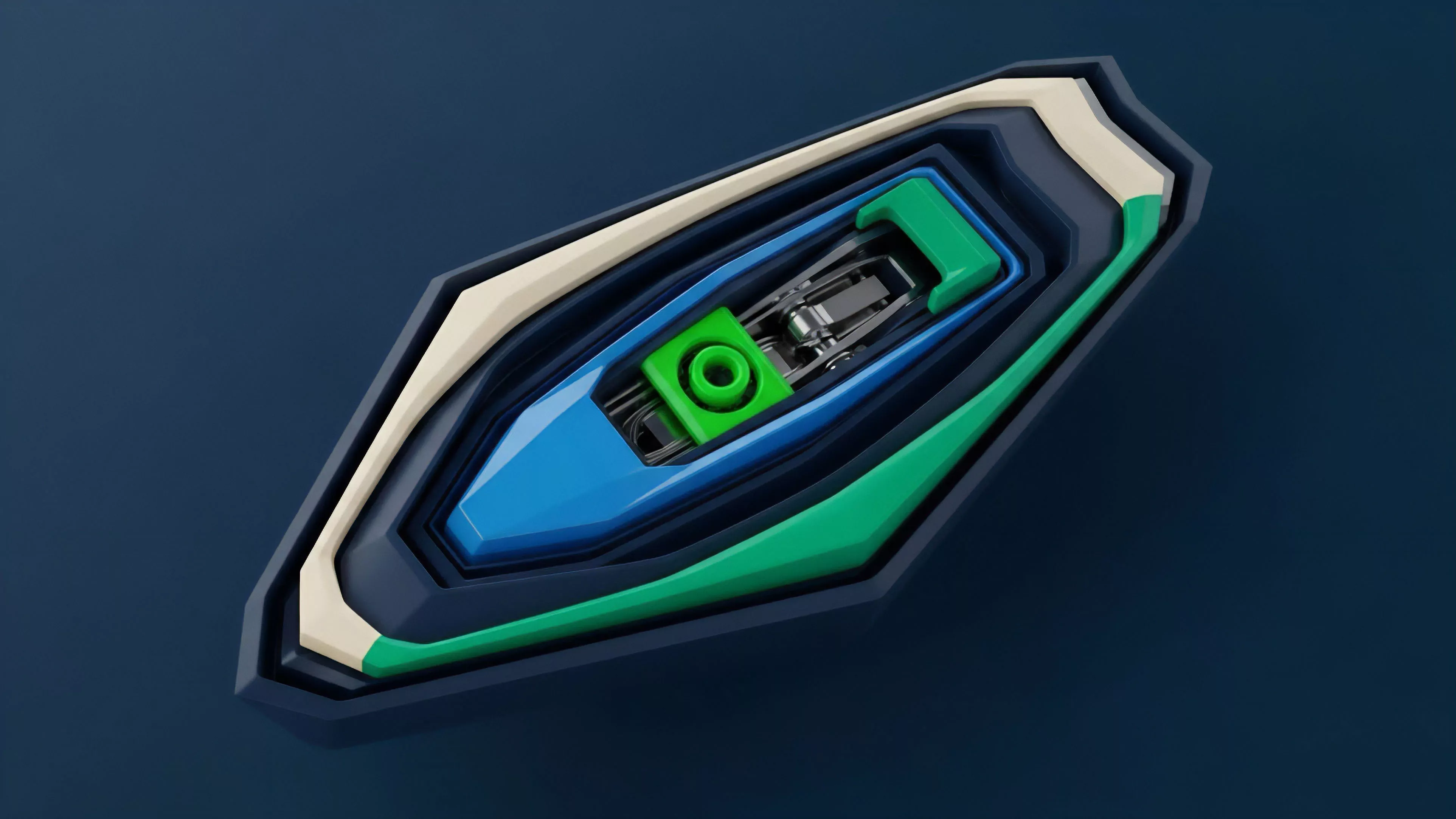

The mathematical foundation of Oracle Data Quality Control rests upon probabilistic consensus and deviation thresholds.

Protocols must model the expected variance of asset prices across multiple venues to establish a baseline of truth. When an incoming data point deviates from that baseline by more than a predefined number of standard deviations, the system must either suspend updates automatically or switch to secondary fallback feeds to maintain contract stability.
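The deviation check described above can be sketched in a few lines. This is a minimal illustration, not a production design: the function names `check_price` and `select_price`, the `max_sigma` default of 3, and the use of a simple mean over recent prices as the baseline are all assumptions made for the example.

```python
from statistics import mean, stdev

# Hypothetical helper: names and the default threshold are illustrative only.
def check_price(candidate, recent_prices, max_sigma=3.0):
    """Return True if candidate lies within max_sigma sample standard
    deviations of the mean of recent_prices."""
    if len(recent_prices) < 2:
        raise ValueError("need at least two baseline prices")
    baseline = mean(recent_prices)
    spread = stdev(recent_prices)
    if spread == 0:
        # A perfectly flat baseline only admits an identical price.
        return candidate == baseline
    return abs(candidate - baseline) / spread <= max_sigma

def select_price(primary, fallback, recent_prices, max_sigma=3.0):
    """Use the primary feed if it passes the check, otherwise the fallback.
    Returning None signals that updates should be suspended."""
    for candidate in (primary, fallback):
        if candidate is not None and check_price(candidate, recent_prices, max_sigma):
            return candidate
    return None

recent = [100.0, 101.0, 99.0, 100.5, 99.5]
print(select_price(100.8, 100.2, recent))  # primary passes
print(select_price(140.0, 100.2, recent))  # primary rejected, fallback used
print(select_price(140.0, 60.0, recent))   # both rejected, suspend
```

A fixed sigma threshold is the simplest choice; a real protocol would likely also need to decide how the baseline window is maintained once a price has been rejected.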

| Parameter | Mechanism |
| --- | --- |
| Variance Threshold | Statistical boundary for feed rejection |
| Latency Penalty | Time-weighted decay for stale information |
| Source Weighting | Reputation-based influence of individual providers |

Rigorous verification of external data points prevents the propagation of price errors into automated margin engines and settlement logic.

Adversarial environments demand that data quality systems account for non-random manipulation attempts. Strategic participants attempt to distort oracle inputs to trigger specific liquidation thresholds. By implementing weighted consensus, the protocol minimizes the impact of any single compromised node.
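One way to combine a latency penalty with source weighting is a weighted median, sketched below. The exponential half-life decay, the `(price, reputation, age_seconds)` report shape, and the names `decayed_weight` and `weighted_median` are assumptions chosen for illustration; actual oracle networks may weight and decay reports differently.

```python
# Hypothetical sketch: report format and decay rule are assumptions.
def decayed_weight(reputation, age_seconds, half_life_seconds=30.0):
    """Scale a provider's reputation by exponential decay in report age,
    halving the weight every half_life_seconds."""
    return reputation * 0.5 ** (age_seconds / half_life_seconds)

def weighted_median(reports, half_life_seconds=30.0):
    """reports: iterable of (price, reputation, age_seconds).
    Returns the lowest price at which the cumulative decayed weight
    reaches half of the total weight."""
    weighted = sorted(
        (price, decayed_weight(rep, age, half_life_seconds))
        for price, rep, age in reports
    )
    total = sum(w for _, w in weighted)
    if total <= 0:
        raise ValueError("no usable weight")
    running = 0.0
    for price, w in weighted:
        running += w
        if running >= total / 2:
            return price

# One compromised node among four equally weighted, fresh reports.
fresh = [(99.9, 1.0, 0), (100.0, 1.0, 0), (100.2, 1.0, 0), (150.0, 1.0, 0)]
print(weighted_median(fresh))

# A high-reputation source whose report is 300 seconds old loses its influence.
stale = [(99.9, 1.0, 0), (100.0, 1.0, 0), (100.2, 1.0, 0), (150.0, 5.0, 300)]
print(weighted_median(stale))
```

A median is used here rather than a weighted mean because a single extreme report shifts a mean but cannot move a median unless it controls roughly half of the total weight.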

This structural approach mirrors traditional quantitative risk management, where outliers undergo systematic investigation rather than immediate integration. As a design rule, the more a protocol relies on a single external source, the weaker its security. Sometimes the most effective protection is to pause the system entirely, an admission that human-governed intervention can be safer than automation acting on flawed inputs.

Approach

Current implementations of Oracle Data Quality Control emphasize decentralization of the ingestion layer.

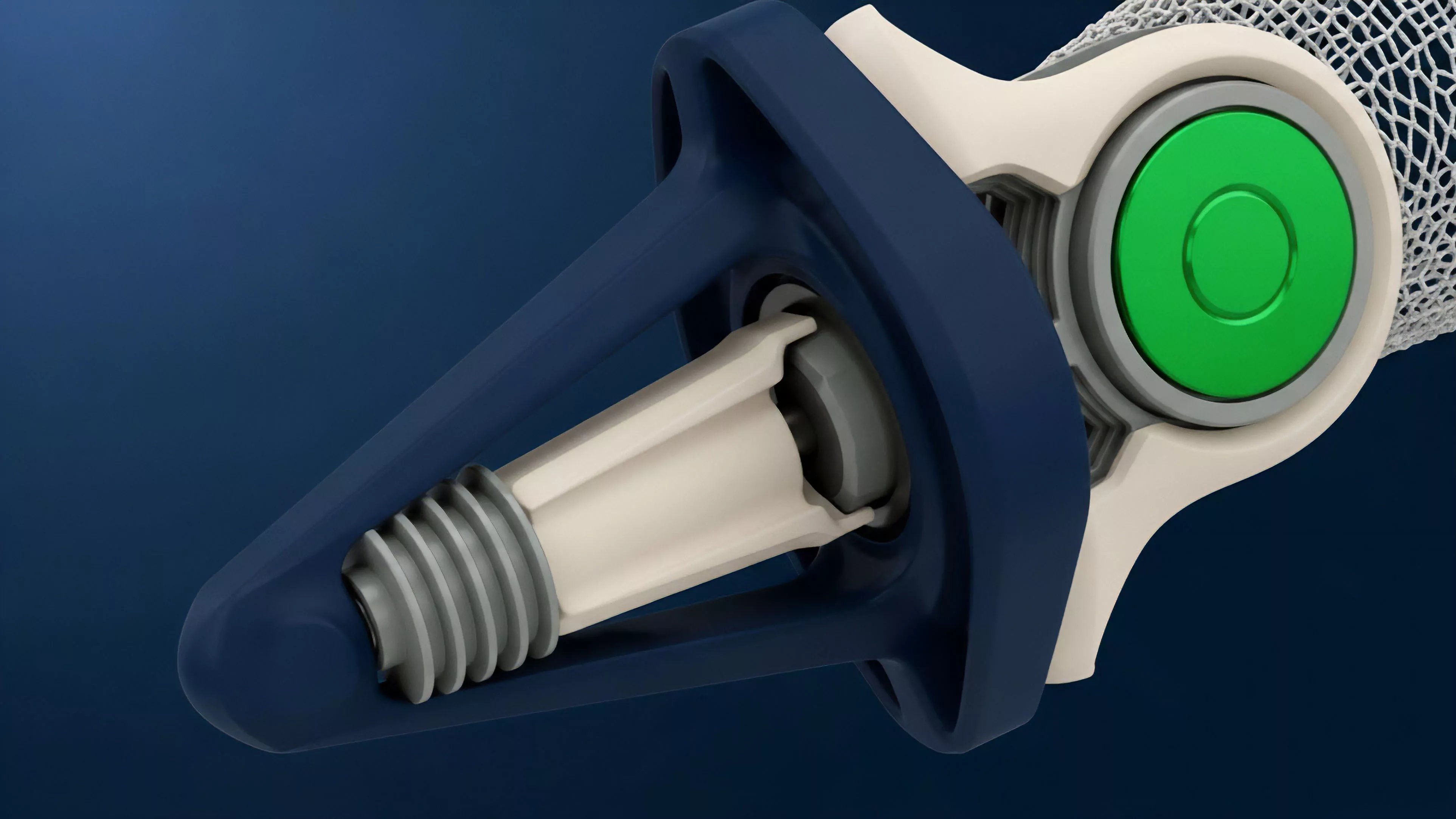

Providers use cryptographic signatures to prove the origin of data, so that any tampering during transmission can be detected. In practice, decentralized nodes independently fetch, sign, and broadcast price updates to a smart contract aggregator.

- Data Aggregation occurs through decentralized nodes that compute a median value from multiple trusted exchanges.

- Anomaly Detection algorithms monitor incoming feeds for rapid, uncharacteristic spikes that suggest market manipulation.

- Fallback Mechanisms allow protocols to switch between primary and secondary oracle providers if data quality degrades.
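The three steps in the list above can be sketched together as a small pipeline. Everything named here is hypothetical: `aggregate`, `is_spike`, `next_price`, the minimum of three sources, and the 10% move limit are illustrative assumptions, and holding the last accepted price when both providers fail is only one possible policy (a protocol might pause instead).

```python
from statistics import median

# Hypothetical pipeline: thresholds and function names are assumptions.
def aggregate(quotes, min_sources=3):
    """Median of per-exchange quotes; None if too few sources responded."""
    live = [q for q in quotes if q is not None]
    if len(live) < min_sources:
        return None
    return median(live)

def is_spike(new_price, last_price, max_move=0.10):
    """Flag a relative move larger than max_move versus the last accepted price."""
    return abs(new_price - last_price) / last_price > max_move

def next_price(primary_quotes, secondary_quotes, last_price):
    """Try the primary provider, then the secondary.
    If neither yields a usable price, hold the last accepted one."""
    for name, quotes in (("primary", primary_quotes), ("secondary", secondary_quotes)):
        price = aggregate(quotes)
        if price is not None and not is_spike(price, last_price):
            return price, name
    return last_price, "held"

print(next_price([100.1, 100.3, 99.8], [100.0, 100.2, 100.1], 100.0))
print(next_price([130.0, 131.0, 129.0], [100.0, 100.2, 100.1], 100.0))
print(next_price([None, 100.0, None], [None, None, 100.0], 100.0))
```

Returning the provider name alongside the price lets the caller log or react to degraded data quality instead of silently switching sources.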

Market makers and derivative platforms now incorporate these controls directly into their smart contract code. This creates a feedback loop where the protocol continuously assesses the reliability of its own inputs. The objective is to achieve a state where the cost of manipulating the oracle exceeds the potential profit gained from the resulting derivative settlement error.

Evolution

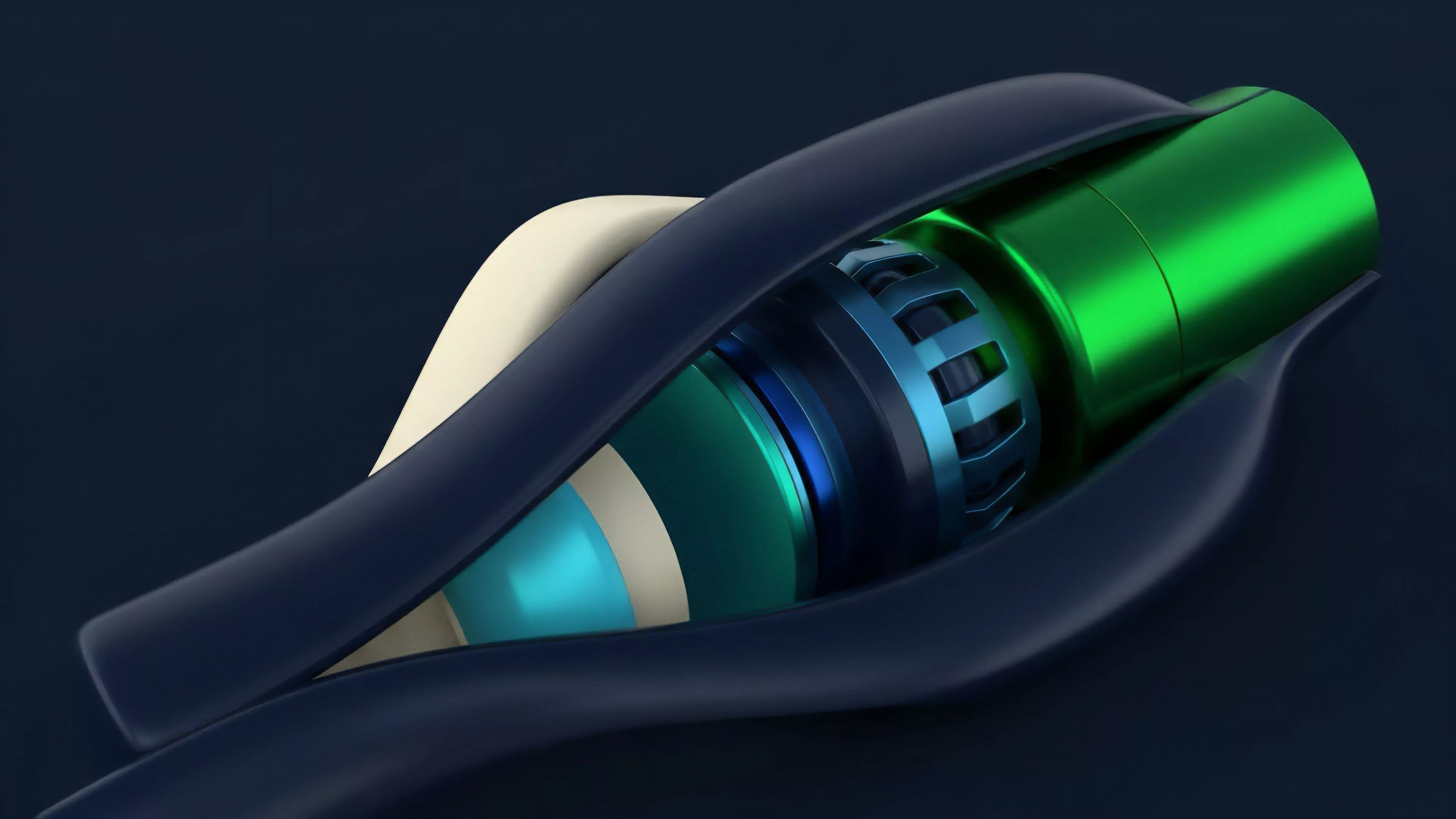

The progression of Oracle Data Quality Control reflects a shift from centralized, single-source feeds toward complex, multi-layered validation networks.

Initially, developers focused on increasing the frequency of updates to minimize latency. However, market participants quickly realized that high-frequency updates provided more opportunities for manipulation unless paired with robust validation logic.

Advanced protocols now utilize decentralized consensus and reputation systems to ensure the long-term reliability of external price inputs.

Modern systems integrate cross-chain validation, allowing protocols to verify asset prices across disparate blockchain environments. This expansion provides a wider net for detecting price discrepancies. The current trajectory suggests a movement toward hardware-level verification, using trusted execution environments to attest that data processing occurs in a secure, isolated state.

Horizon

The future of Oracle Data Quality Control lies in the integration of zero-knowledge proofs to verify data authenticity without exposing the underlying source.

This development will allow for privacy-preserving price feeds that maintain high levels of security while minimizing exposure to surveillance. Additionally, machine learning models will likely play a larger role in predictive quality control, identifying potential manipulation patterns before they manifest in on-chain price movements.

- Zero-Knowledge Integration enables verifiable data feeds while protecting the identity and strategies of data providers.

- Predictive Analytics will allow protocols to anticipate and block malicious inputs based on historical manipulation signatures.

- Cross-Chain Consensus will unify data standards, reducing the fragmentation currently seen across different decentralized networks.

As the volume of assets locked in derivative contracts grows, the pressure to refine these controls will intensify. Protocols that fail to maintain high standards of data integrity will face rapid obsolescence as capital migrates toward more resilient, audit-focused architectures. The path forward demands an uncompromising commitment to technical accuracy and adversarial defense.