Essence

Secure Data Integration functions as the cryptographic connective tissue between decentralized oracle networks and derivative settlement engines. It ensures that the price feeds, volatility surfaces, and collateral valuations consumed by smart contracts remain faithful to underlying market conditions. Without this layer, automated liquidation protocols lose their integrity as soon as an adversary can manipulate the data they consume.

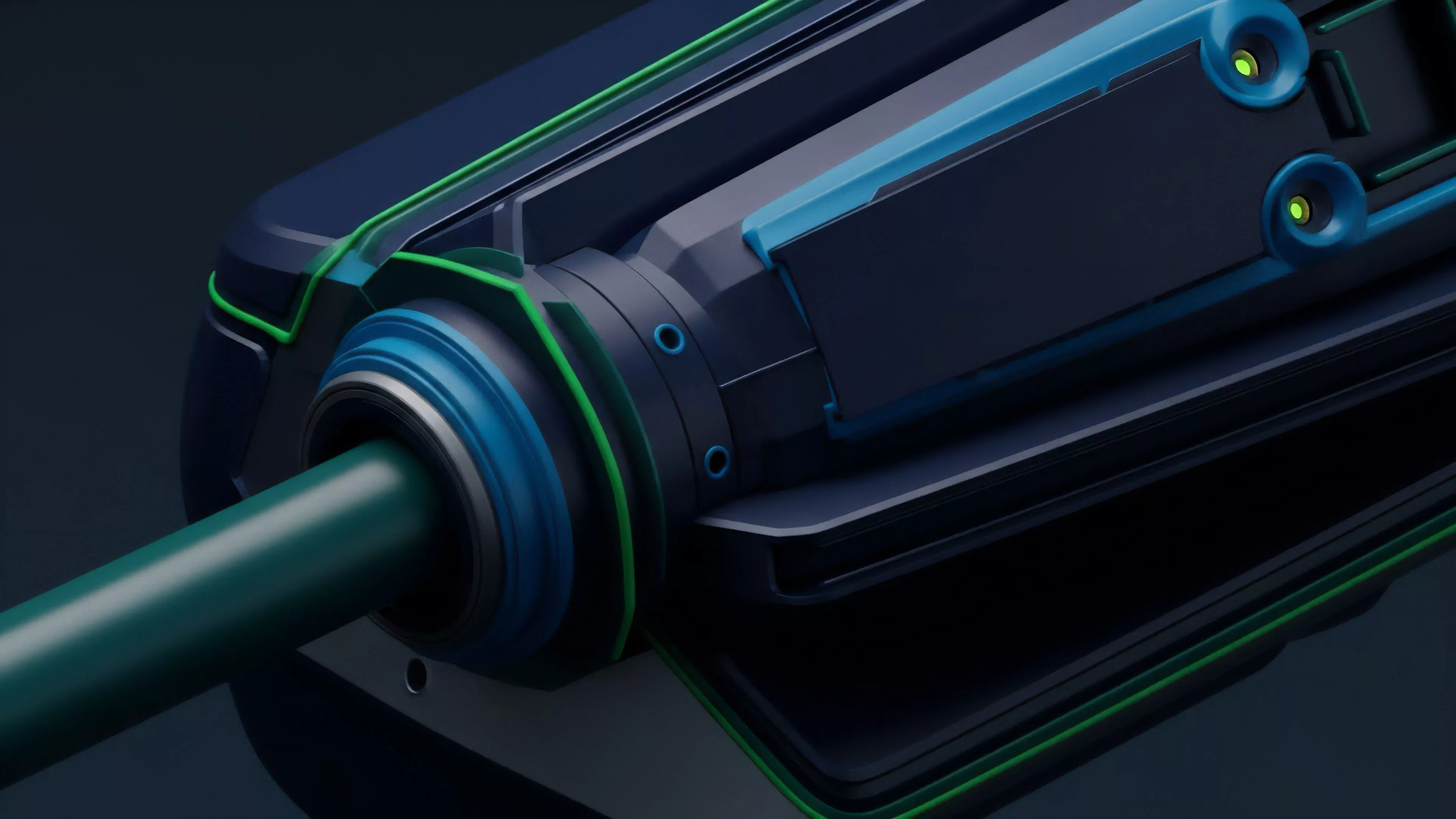

Secure Data Integration ensures cryptographic fidelity between off-chain market signals and on-chain derivative execution engines.

This architecture replaces traditional trust-based intermediaries with verifiable proofs, enabling decentralized exchanges to ingest complex financial data without compromising the non-custodial nature of the platform. It addresses a fundamental vulnerability of decentralized finance: any discrepancy between a protocol's internal state and external market reality creates opportunities for toxic order flow and systemic exploitation.

Origin

The necessity for Secure Data Integration emerged from the failure of simple, centralized price feeds to withstand the volatility of digital asset markets. Early decentralized exchanges relied on single-source APIs, which became vectors for manipulation.

Developers recognized that if the input is compromised, the entire smart contract logic operates on fraudulent premises.

- Oracle Decentralization initiated the shift toward aggregating multiple independent nodes to provide a single, tamper-resistant data point.

- Cryptographic Proofs introduced zero-knowledge mechanisms to verify that data originated from authorized sources without revealing sensitive transmission paths.

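- Time-Weighted Average Price (TWAP) algorithms were developed to smooth out instantaneous spikes. A flash loan must be repaid within the same transaction, so the price distortion it can finance is momentary, whereas a TWAP moves materially only if the distorted price persists across its averaging window. This makes flash-loan-induced liquidation events far more expensive to engineer.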
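The time-weighting idea can be sketched in a few lines of Python. This is a minimal illustration, not any protocol's implementation: the `Observation` record and `twap` function are hypothetical names, and each price is assumed to hold until the next observation. On-chain oracles typically keep a running cumulative-price accumulator instead of storing every observation, but the arithmetic is the same.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Observation:
    timestamp: int  # seconds; the price holds from here until the next observation
    price: float


def twap(observations: List[Observation], window_end: int) -> float:
    """Time-weighted average price from the first observation up to window_end.

    Each price is weighted by how long it stayed in effect, so a spike that
    lasts a single block contributes only a small share of the average.
    """
    if not observations:
        raise ValueError("need at least one observation")
    obs = sorted(observations, key=lambda o: o.timestamp)
    if window_end <= obs[0].timestamp:
        raise ValueError("window_end must be after the first observation")

    weighted_sum = 0.0
    total_time = 0
    for i, current in enumerate(obs):
        # A price is valid until the next observation or the end of the window.
        end = obs[i + 1].timestamp if i + 1 < len(obs) else window_end
        duration = max(0, min(end, window_end) - current.timestamp)
        weighted_sum += current.price * duration
        total_time += duration
    return weighted_sum / total_time


# A one-off spike to 1000 lasting 12 s inside a 600 s window priced at 100.
history = [Observation(0, 100.0), Observation(300, 1000.0), Observation(312, 100.0)]
print(twap(history, window_end=600))  # 118.0
```

The spot price briefly reads 1000, but the TWAP moves only to 118; pushing it further would require holding the distorted price for much longer, which a single-transaction flash loan cannot do.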

This transition departs from the priorities of high-frequency trading infrastructure: where HFT optimizes above all for the speed of information delivery, oracle design subordinates speed to the verifiable accuracy of each data packet. The focus moved from mere transmission to rigorous validation at every hop within the data lifecycle.

Theory

The theoretical framework governing Secure Data Integration rests on the mitigation of information asymmetry between decentralized protocols and global liquidity pools. The primary challenge involves maintaining low-latency updates while preventing data poisoning by adversarial agents seeking to trigger liquidation thresholds.

| Mechanism | Function |
| --- | --- |
| Threshold Cryptography | Distributes signing authority across nodes so that a data packet is valid only when a quorum of them signs it. |
| Proof of Stake | Aligns the economic incentives of validators with data accuracy. |
| Deviation Thresholds | Filters noise by updating on-chain state only when the price moves by more than a set percentage. |
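The deviation-threshold rule from the table can be expressed as a small update predicate. This sketch is an assumption-laden illustration: `should_update` is a hypothetical name, the 50 basis-point threshold and one-hour heartbeat are illustrative defaults rather than values from any specific network, and the heartbeat condition (refresh even when the price is flat) is a common companion to deviation thresholds that the table does not mention.

```python
def should_update(last_price: float, new_price: float,
                  last_update_ts: int, now_ts: int,
                  deviation_bps: int = 50, heartbeat_s: int = 3600) -> bool:
    """Decide whether an oracle node should publish a new price on-chain.

    deviation_bps and heartbeat_s are illustrative defaults, not values
    taken from any particular oracle network.
    """
    if last_price <= 0:
        return True  # no valid reference price has been published yet
    # Cross-multiplied form of |new - last| / last >= deviation_bps / 10_000.
    if abs(new_price - last_price) * 10_000 >= deviation_bps * last_price:
        return True
    # Heartbeat: refresh periodically even in a quiet market so consumers
    # can tell a stable price from a stalled feed.
    return now_ts - last_update_ts >= heartbeat_s


print(should_update(100.0, 100.2, last_update_ts=0, now_ts=60))    # False (20 bps move)
print(should_update(100.0, 101.0, last_update_ts=0, now_ts=60))    # True  (100 bps move)
print(should_update(100.0, 100.2, last_update_ts=0, now_ts=3600))  # True  (heartbeat)
```

Raising `deviation_bps` saves gas at the cost of staler prices, which is the same granularity trade-off discussed below.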

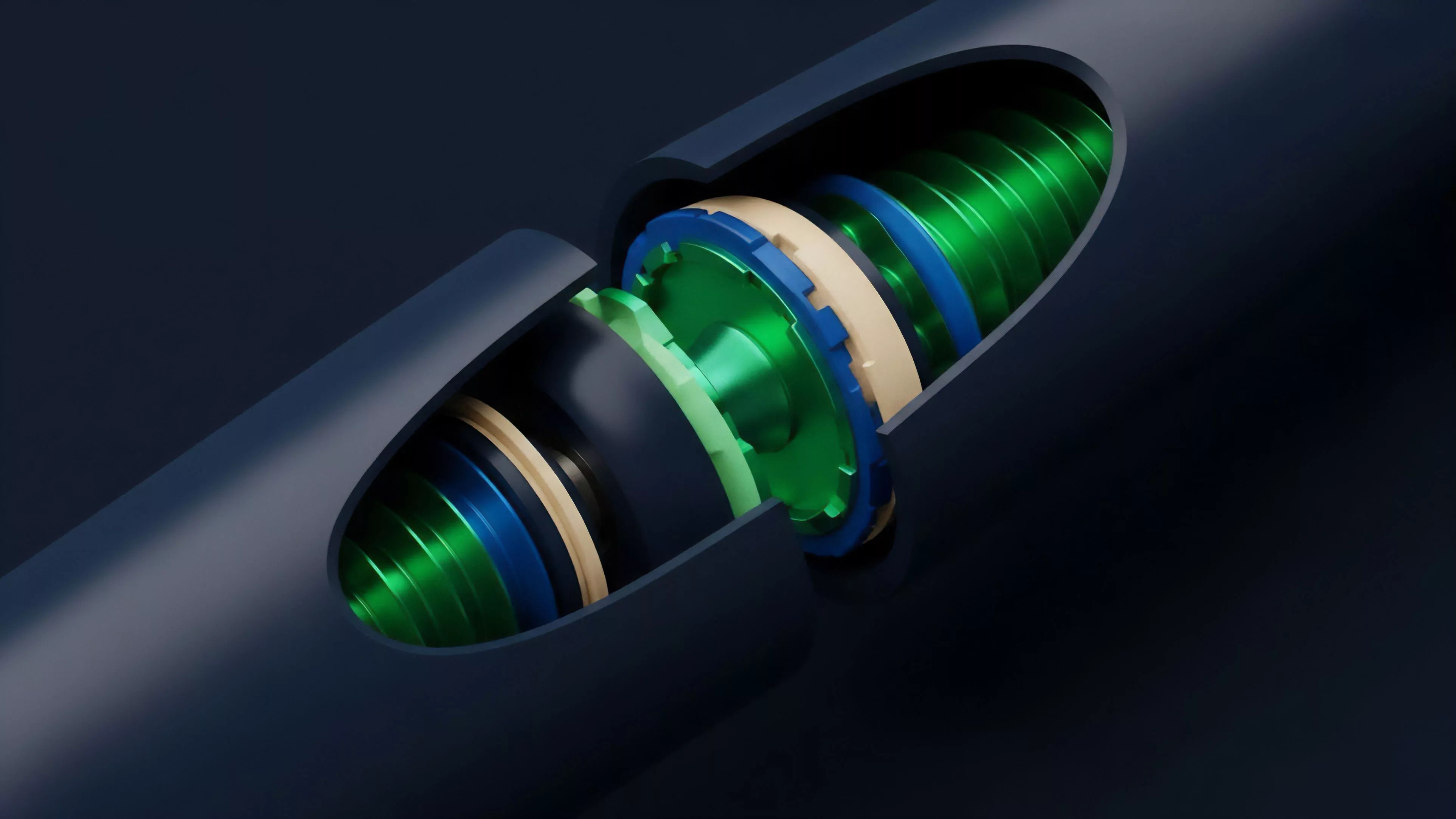

The mathematical model often uses weighted median aggregation to neutralize outliers, with each provider's report weighted, for example, by its bonded stake. As long as compromised providers control less than half of the total weight, the aggregate cannot be pushed outside the range of honest reports, so the final input remains representative of the broader market. The system functions as a decentralized consensus mechanism in which the accepted value is determined by the majority of economically bonded actors rather than by a single authority.

Data validation protocols utilize weighted median aggregation to neutralize adversarial price manipulation and maintain systemic stability.
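A weighted median over `(price, weight)` reports can be sketched as follows. The function name and the choice of stake as the weight are assumptions for illustration; this computes the lower weighted median, and real aggregators may differ in tie-breaking and in how weights are assigned.

```python
from typing import Sequence, Tuple


def weighted_median(reports: Sequence[Tuple[float, float]]) -> float:
    """Lower weighted median of (price, weight) reports.

    Returns the smallest reported price at which the cumulative weight
    reaches at least half of the total. If honest reporters hold strictly
    more than half the weight, the result always lies within the range of
    honest prices, however extreme the remaining reports are.
    """
    if not reports:
        raise ValueError("no reports")
    if any(w < 0 for _, w in reports):
        raise ValueError("weights must be non-negative")
    total = sum(w for _, w in reports)
    if total <= 0:
        raise ValueError("total weight must be positive")

    ordered = sorted(reports)  # ascending by price
    cumulative = 0.0
    for price, weight in ordered:
        cumulative += weight
        if 2 * cumulative >= total:
            return price
    return ordered[-1][0]  # only reachable through floating-point rounding


# Three honest nodes (1 unit of stake each) and one attacker with 2 units.
reports = [(100.0, 1.0), (100.2, 1.0), (99.9, 1.0), (150.0, 2.0)]
print(weighted_median(reports))  # 100.2
```

The attacker's report of 150 carries 40% of the weight yet only shifts the result to the upper end of the honest range; a mean over the same reports would land at 120.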

The protocol design requires a balance between gas efficiency and data granularity. Updating too frequently increases gas costs, while updating too rarely leaves stale prices that front-runners can exploit. The oracle is economically secure only when the cost of attacking it exceeds the profit an attacker could extract from the resulting mispricing.
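The security condition can be made concrete with a deliberately simplified model. Everything here is an assumption for illustration: the function names are hypothetical, the cost side assumes the attacker must bond just over half the stake (to move a weighted median) and loses it to slashing, and the profit side is exposed notional times the induced price distortion. Bribery, detection probability, and cross-venue profits are all ignored.

```python
def attack_cost(total_stake: float, slash_fraction: float) -> float:
    """Approximate lower bound on what an attacker forfeits to control a weighted median.

    Moving the median requires more than half of the bonded stake; we assume
    all of it is slashed at slash_fraction once the bad reports are detected.
    """
    return 0.5 * total_stake * slash_fraction


def attack_profit(exposed_notional: float, price_distortion: float) -> float:
    """Simplified estimate of extractable value: exposed positions times the
    fractional mispricing the attacker can induce (e.g. 0.05 for 5%)."""
    return exposed_notional * price_distortion


def is_economically_secure(total_stake: float, slash_fraction: float,
                           exposed_notional: float, price_distortion: float) -> bool:
    """The oracle is secure under this model when attacking costs more than it pays."""
    return attack_cost(total_stake, slash_fraction) > attack_profit(
        exposed_notional, price_distortion)


# 10M of bonded stake guarding positions against a 5% distortion:
print(is_economically_secure(10e6, 1.0, 200e6, 0.05))  # False: 5M cost < 10M profit
print(is_economically_secure(10e6, 1.0, 50e6, 0.05))   # True: 5M cost > 2.5M profit
```

The same stake that protects 50M of positions becomes insufficient at 200M, which is why the value secured by a feed has to be tracked against its bonded collateral.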

Approach

Current implementation strategies emphasize modularity and cross-chain compatibility.

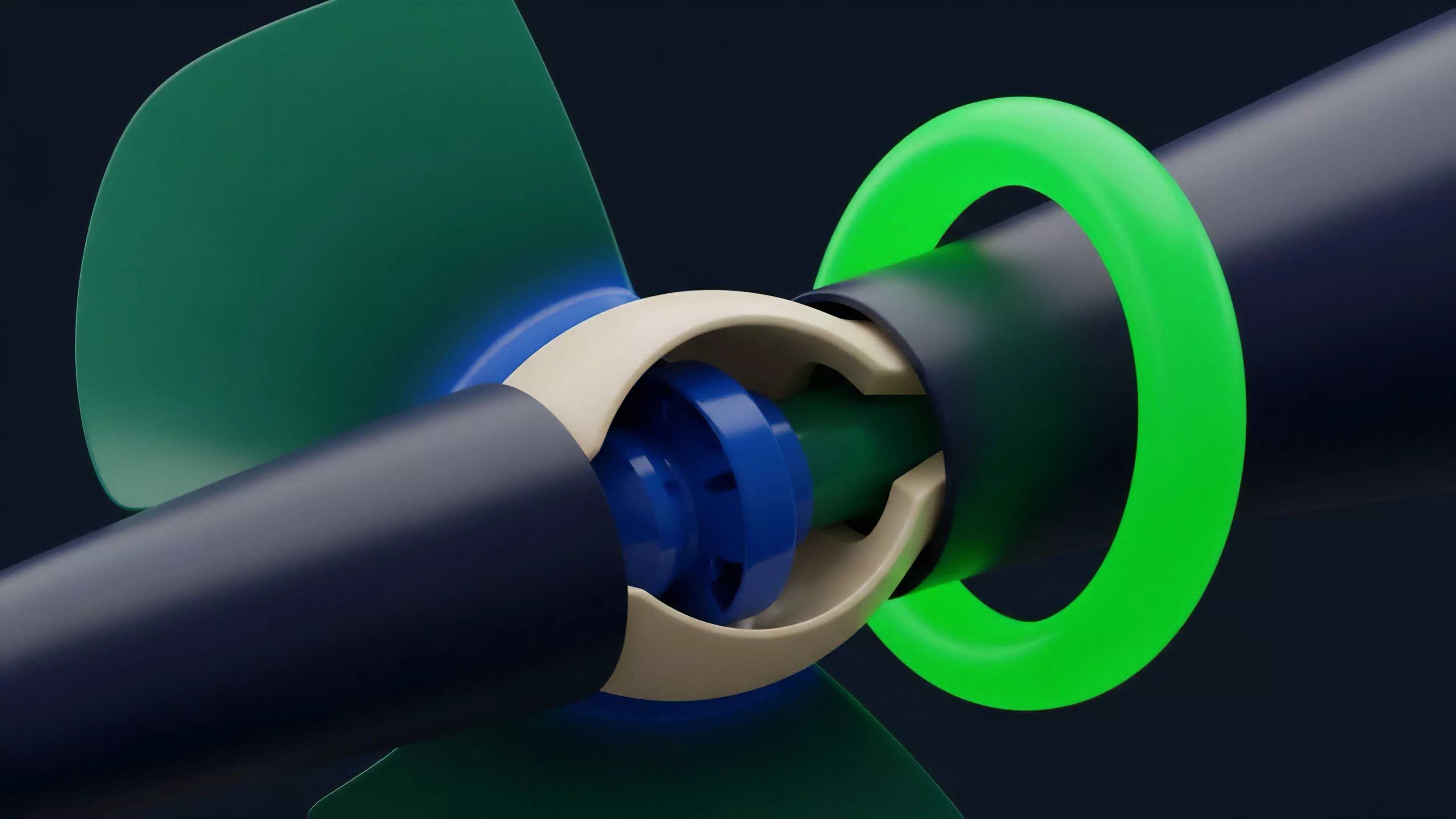

Protocols now deploy specialized Data Integration Layers that act as independent middleware, isolating the core derivative logic from the volatility of raw data ingestion.

- Modular Oracle Design separates the data fetching logic from the consensus layer, allowing for rapid updates to source aggregators.

- Verifiable Random Functions enable fair distribution of rewards and unpredictable selection of data reporting nodes, making collusion harder to arrange.

- Collateral Value Smoothing implements moving averages that adjust the effective margin requirement based on realized volatility rather than spot price alone.
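Collateral value smoothing can be sketched as an exponential moving average of price combined with a margin rate that rises with realized volatility. This is one possible construction, not a standard formula: `base_rate`, `vol_multiplier`, and `alpha` are hypothetical parameters, and an EMA stands in for whatever moving average a given protocol uses.

```python
import math
from typing import Sequence


def ema(prices: Sequence[float], alpha: float) -> float:
    """Exponential moving average; alpha in (0, 1], larger alpha reacts faster."""
    value = prices[0]
    for p in prices[1:]:
        value = alpha * p + (1 - alpha) * value
    return value


def realized_volatility(prices: Sequence[float]) -> float:
    """Sample standard deviation of log returns, per observation interval."""
    if len(prices) < 3:
        raise ValueError("need at least three prices")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance)


def margin_requirement(position_size: float, prices: Sequence[float],
                       base_rate: float = 0.05, vol_multiplier: float = 3.0,
                       alpha: float = 0.2) -> float:
    """Margin = size * smoothed price * (base rate + multiplier * realized vol).

    Valuing collateral at the EMA rather than the last print damps one-off
    spikes, while the volatility term raises margin when markets get choppy.
    """
    smoothed = ema(prices, alpha)
    rate = base_rate + vol_multiplier * realized_volatility(prices)
    return position_size * smoothed * rate


calm = [100.0, 100.1, 100.0, 100.1, 100.0]
choppy = [100.0, 110.0, 95.0, 108.0, 100.0]
print(margin_requirement(10, calm) < margin_requirement(10, choppy))  # True
```

Both series end at 100, so a margin rule based on spot price alone would treat them identically; the volatility term is what distinguishes them.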

This approach shifts the burden of security from the application layer to the infrastructure layer. By treating data as a commodity with verifiable provenance, protocols can achieve a higher degree of resilience against market shocks. The strategy prioritizes survivability, acknowledging that in an adversarial environment, a system that fails gracefully is superior to one that attempts to maintain perfect but fragile accuracy.

Evolution

The architecture has transitioned from basic price feeds to complex, state-aware data streams that include volume, open interest, and implied volatility.

This evolution reflects the maturation of decentralized markets, which now demand the same level of sophistication found in traditional institutional trading environments.

| Phase | Focus |
| --- | --- |
| Primitive | Basic spot price aggregation. |
| Intermediate | Deviation-based updates and multi-source consensus. |
| Advanced | Real-time volatility surface construction and cross-chain data synthesis. |

As liquidity fragments across multiple layers and chains, Secure Data Integration has become the primary mechanism for giving protocols a consistent view of market prices. It facilitates the creation of synthetic assets that track off-chain indices, expanding the scope of decentralized finance beyond native crypto-assets. The system is currently moving toward a model where data provenance is embedded directly into the asset transaction, creating a permanent, auditable trail for every price update.
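An auditable trail of price updates can be illustrated with a hash chain, where each entry commits to the previous entry's digest. This sketch shows only tamper-evidence: the `ProvenanceLog` and `PriceUpdate` names are hypothetical, SHA-256 over a JSON payload is an assumed encoding, and a real system would also attach source signatures and anchor the digests on-chain.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class PriceUpdate:
    feed: str
    price: float
    timestamp: int
    source_id: str
    prev_hash: str
    digest: str = ""

    def compute_digest(self) -> str:
        # Hash every field except the digest itself, in a canonical key order.
        payload = json.dumps(
            {"feed": self.feed, "price": self.price, "timestamp": self.timestamp,
             "source_id": self.source_id, "prev_hash": self.prev_hash},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode()).hexdigest()


@dataclass
class ProvenanceLog:
    entries: List[PriceUpdate] = field(default_factory=list)

    def append(self, feed: str, price: float, timestamp: int, source_id: str) -> PriceUpdate:
        prev = self.entries[-1].digest if self.entries else GENESIS
        update = PriceUpdate(feed, price, timestamp, source_id, prev)
        update.digest = update.compute_digest()
        self.entries.append(update)
        return update

    def verify(self) -> bool:
        """Recompute every digest and check each link to its predecessor."""
        prev = GENESIS
        for entry in self.entries:
            if entry.prev_hash != prev or entry.compute_digest() != entry.digest:
                return False
            prev = entry.digest
        return True


log = ProvenanceLog()
log.append("ETH/USD", 3000.5, 1_700_000_000, "node-a")
log.append("ETH/USD", 3001.0, 1_700_000_060, "node-b")
print(log.verify())  # True
log.entries[0].price = 2500.0  # rewrite history
print(log.verify())  # False
```

Because each digest covers the previous one, altering any historical update invalidates every later link, which is what makes the trail auditable.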

Horizon

Future developments in Secure Data Integration will focus on zero-knowledge hardware acceleration and decentralized identity for data providers.

The objective is to push latency for global data synchronization toward the floor set by network propagation delay, without sacrificing the cryptographic guarantees that define the ecosystem.

Cryptographic data provenance will define the next phase of decentralized financial infrastructure by embedding verification into every transaction.

The next frontier involves integrating real-time geopolitical and macroeconomic data directly into derivative pricing models. This will allow for the automated adjustment of risk parameters in response to external shocks, effectively creating self-healing financial systems. The ultimate goal is the construction of a global, permissionless, and resilient financial layer where information flow is as robust as the consensus mechanism itself. What paradox emerges when the speed of data validation becomes the limiting factor for global decentralized market efficiency?