Essence

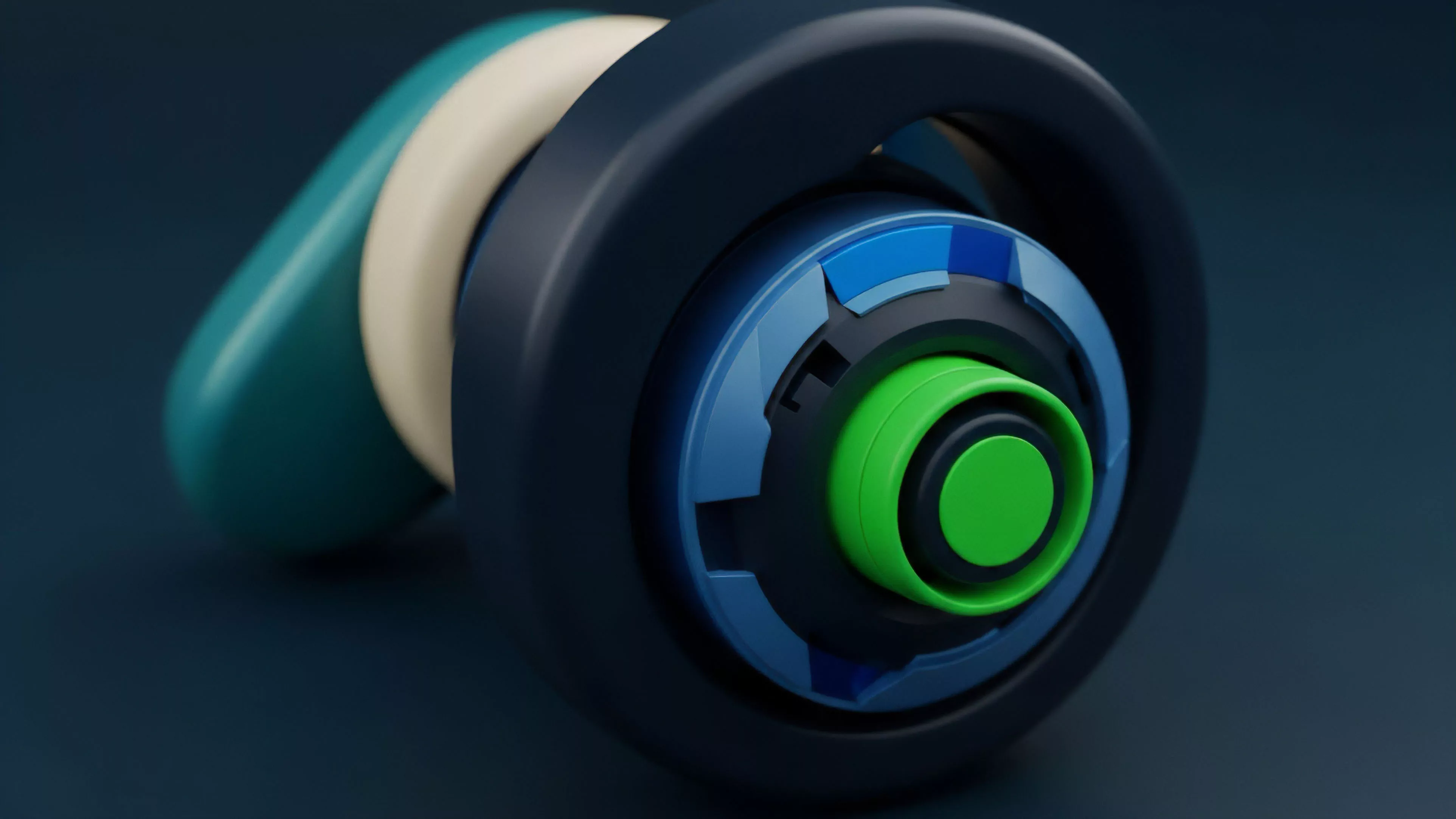

Onchain Volatility Modeling is the mathematical framework used to quantify and predict price dispersion within decentralized liquidity venues. It synthesizes real-time order flow data, block-space demand, and historical state transitions read directly from the ledger. In traditional finance, latency and centralized data feeds dictate model parameters. Decentralized systems instead call for models that derive risk premiums from the protocol state itself.

Onchain volatility modeling transforms raw blockchain transaction data into predictive measures of asset dispersion within decentralized liquidity pools.

The core utility lies in converting stochastic market movements into actionable risk metrics for automated market makers and decentralized option protocols. These models anchor volatility estimates to verifiable onchain activity, such as gas price spikes, liquidation frequency, and liquidity concentration, and in doing so create a self-referential feedback loop. The aim is to keep derivative pricing consistent with the stress levels the underlying protocol actually experiences.

Origin

The genesis of this field stems from the limitations inherent in applying Black-Scholes or GARCH models to environments where price discovery occurs via automated algorithms rather than centralized order books. Early developers recognized that standard Gaussian assumptions failed to account for the frequent, discontinuous price jumps characteristic of thin liquidity environments. Consequently, the focus shifted toward developing mechanisms that account for the unique structural risks of decentralized exchanges.

- Protocol state observability provided the first breakthrough by allowing architects to calculate realized volatility using every executed trade.

- Liquidity concentration metrics allowed for the development of models that adjust premiums based on the depth of the available pool.

- Smart contract risk premiums introduced the need for modeling volatility as a function of code-level vulnerability exposure.
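The first point above can be illustrated with a minimal sketch. It assumes that trade execution prices have already been decoded from swap events and arranged in block order; that extraction step is not shown. Realized volatility over the window is then the square root of the sum of squared log returns between consecutive trades. The result is not annualized, and the function name `realized_volatility` is hypothetical.

```python
import math
from typing import Sequence


def realized_volatility(prices: Sequence[float]) -> float:
    """Unannualized realized volatility from consecutive trade prices.

    Computes sqrt(sum(log(p_i / p_{i-1})^2)) over every adjacent pair of trades.
    """
    if len(prices) < 2:
        raise ValueError("need at least two trade prices")
    if any(p <= 0 for p in prices):
        raise ValueError("prices must be positive")
    # One log return per pair of consecutive executed trades.
    squared_returns = (math.log(b / a) ** 2 for a, b in zip(prices, prices[1:]))
    return math.sqrt(sum(squared_returns))


# Example: prices of four consecutive swaps (illustrative values).
print(realized_volatility([100.0, 101.0, 99.5, 100.2]))
```

Because every executed trade is observable onchain, the window can be defined in trades or in blocks. Both choices fit the description above.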

Theory

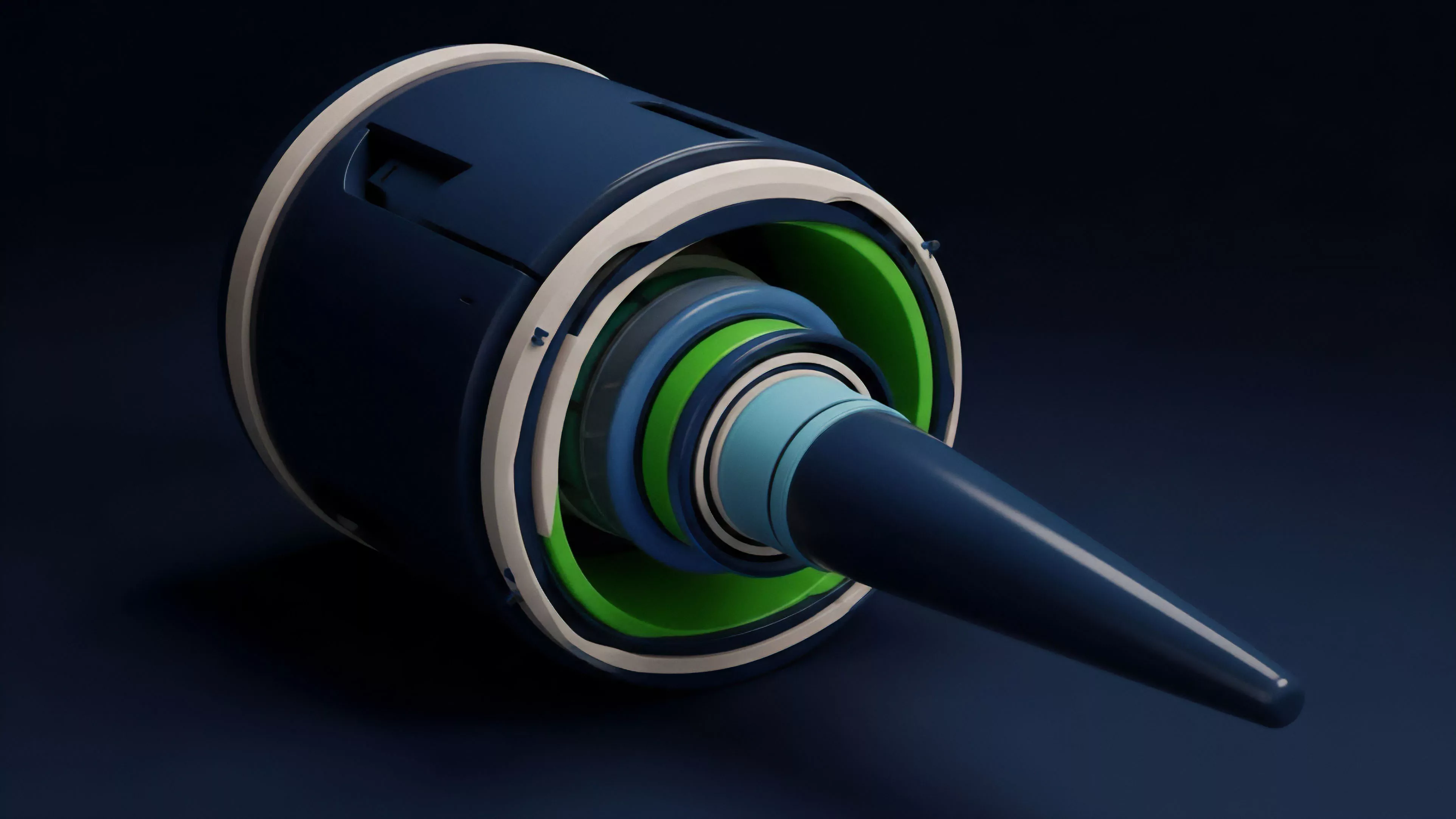

The theoretical framework for Onchain Volatility Modeling rests on the premise that protocol-specific variables can act as leading indicators of broader market shifts. By analyzing the interaction between liquidity providers and arbitrageurs, the model constructs a probability distribution of future price outcomes. This requires close attention to the mechanics of automated market makers, where price slippage serves as a direct input for measuring local volatility.
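The sketch below shows how slippage encodes pool depth. It assumes a constant-product pool (the x * y = k invariant used by Uniswap v2-style AMMs); concentrated-liquidity designs follow a different price curve. The helper `cpmm_swap` and its optional `fee` parameter are illustrative and are not a protocol API. Slippage is measured against the pre-trade spot price.

```python
from typing import Tuple


def cpmm_swap(reserve_in: float, reserve_out: float, amount_in: float,
              fee: float = 0.0) -> Tuple[float, float]:
    """Return (amount_out, slippage) for a swap against an x * y = k pool.

    Slippage is 1 - execution_price / spot_price, using the pre-trade spot price.
    """
    if reserve_in <= 0 or reserve_out <= 0 or amount_in <= 0:
        raise ValueError("reserves and amount_in must be positive")
    if not 0.0 <= fee < 1.0:
        raise ValueError("fee must be in [0, 1)")
    effective_in = amount_in * (1.0 - fee)        # only the post-fee amount trades against the curve
    amount_out = reserve_out * effective_in / (reserve_in + effective_in)
    spot_price = reserve_out / reserve_in         # marginal price before the swap
    execution_price = amount_out / amount_in      # average price actually received
    return amount_out, 1.0 - execution_price / spot_price


shallow = cpmm_swap(1_000.0, 1_000.0, 10.0)
deep = cpmm_swap(10_000.0, 10_000.0, 10.0)
print(f"slippage in shallow pool: {shallow[1]:.4%}")
print(f"slippage in deep pool:    {deep[1]:.4%}")
```

For a fee-free swap, slippage reduces to `amount_in / (reserve_in + amount_in)`. The same trade therefore moves a pool with ten times the reserves roughly ten times less. This depth dependence is what a local volatility measure built from slippage picks up.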

| Metric | Theoretical Basis |
|---|---|
| Realized Volatility | Square root of the sum of squared log returns over N blocks |
| Implied Skew | Difference in implied volatility across strike prices |
| Liquidity Decay | Rate of capital withdrawal during market stress |
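The Implied Skew row can be made concrete under one common convention, which is assumed here. Call premiums at two strikes are inverted to Black-Scholes implied volatilities, and the high-strike value is subtracted from the low-strike value. Black-Scholes serves only as a quoting convention in this sketch, not as a claim about the underlying price process. All function names are hypothetical. In practice the premiums would be read from an onchain options protocol, which is not shown.

```python
import math


def _norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call with no dividends."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * _norm_cdf(d1) - strike * math.exp(-rate * t) * _norm_cdf(d2)


def implied_vol(price: float, spot: float, strike: float, t: float, rate: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-10) -> float:
    """Invert bs_call_price by bisection; the call price increases with vol."""
    if not bs_call_price(spot, strike, t, rate, lo) <= price <= bs_call_price(spot, strike, t, rate, hi):
        raise ValueError("price is outside the bracketed volatility range")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, t, rate, mid) < price:
            lo = mid   # model price too low, so the implied vol is higher
        else:
            hi = mid
    return 0.5 * (lo + hi)


def implied_skew(price_low: float, strike_low: float, price_high: float, strike_high: float,
                 spot: float, t: float, rate: float) -> float:
    """Low-strike minus high-strike implied vol (positive when lower strikes carry higher vol)."""
    return (implied_vol(price_low, spot, strike_low, t, rate)
            - implied_vol(price_high, spot, strike_high, t, rate))
```

Bisection is used because it is simple and always converges when the bracket holds. A Newton iteration on vega would converge faster but needs more care near expiry.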

The predictive accuracy of onchain models depends on their ability to interpret the specific mechanics of decentralized liquidity provision and arbitrage.

Mathematically, these models often use jump-diffusion processes to capture the extreme, non-normal tail risks prevalent in crypto markets. The interaction between block validation times and order execution creates a latency-dependent volatility signature that standard models ignore. This is the primary divergence from legacy quantitative finance: the model must internalize the consensus-driven nature of settlement.

Approach

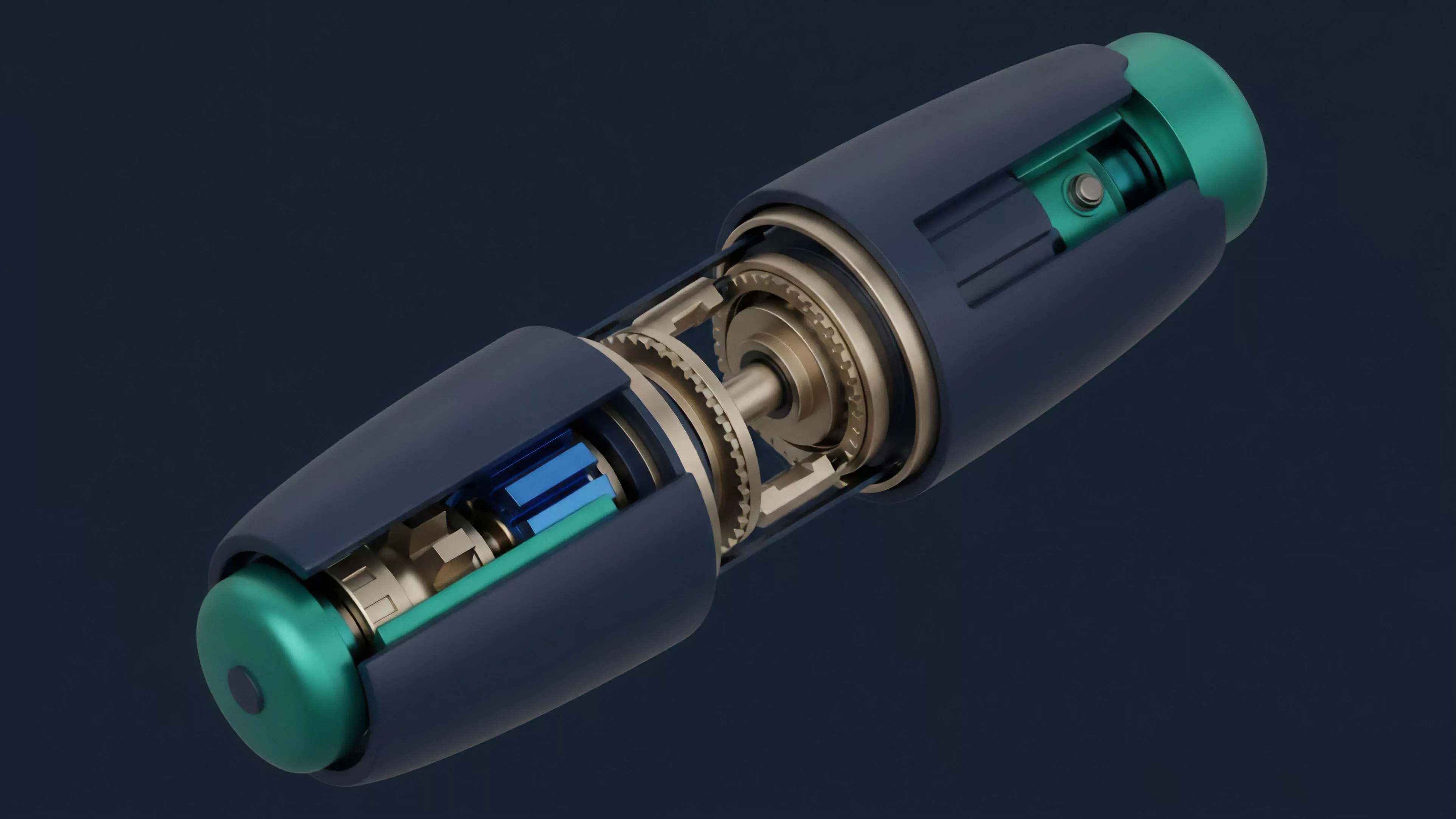

Modern implementations continuously monitor mempool activity to anticipate volatility surges before the underlying transactions settle on the ledger. Quantitative analysts now use machine learning models to identify patterns of order flow toxicity, in which informed traders exploit stale pricing, and to adjust volatility parameters dynamically. This proactive adjustment helps protect liquidity providers from adverse selection during periods of extreme market turbulence.

- Mempool scanning identifies pending transactions that may cause significant price impact.

- Parameter calibration updates the volatility surface based on observed changes in liquidity depth.

- Risk mitigation execution triggers automated rebalancing or premium adjustment to maintain solvency.
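The mempool-scanning step can be sketched under strong simplifying assumptions. Pending swaps are taken to be already decoded into a hypothetical `PendingSwap` record, to target a single fee-free constant-product pool, and to be included in descending gas-price order. Real inclusion order is not guaranteed, because block builders and searchers may reorder or insert transactions. The output is therefore a rough projection, not a forecast. The names `PendingSwap` and `scan_pending` are illustrative.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class PendingSwap:
    tx_id: str           # hypothetical identifier of the pending transaction
    gas_price: float     # used only to guess inclusion order
    zero_for_one: bool   # True: sells token0 for token1
    amount_in: float


def scan_pending(reserve0: float, reserve1: float, pending: List[PendingSwap],
                 impact_threshold: float) -> Tuple[List[str], float]:
    """Replay pending swaps against an x * y = k pool (no fees).

    Returns the ids of swaps whose own price impact exceeds the threshold and
    the projected total move in the token0 price (quoted in token1).
    """
    flagged = []
    start_price = reserve1 / reserve0
    for swap in sorted(pending, key=lambda s: s.gas_price, reverse=True):
        before = reserve1 / reserve0
        k = reserve0 * reserve1
        if swap.zero_for_one:
            reserve0 += swap.amount_in
            reserve1 = k / reserve0
        else:
            reserve1 += swap.amount_in
            reserve0 = k / reserve1
        after = reserve1 / reserve0
        if abs(after / before - 1.0) > impact_threshold:
            flagged.append(swap.tx_id)
    return flagged, (reserve1 / reserve0) / start_price - 1.0


flagged, move = scan_pending(1_000.0, 1_000.0, [
    PendingSwap("a", 10.0, True, 1.0),
    PendingSwap("b", 20.0, True, 100.0),
], impact_threshold=0.05)
print(flagged, f"{move:.2%}")
```

Flagged transactions and the projected move are the kind of inputs the calibration and risk-mitigation steps above could consume, for example by widening premiums before the block lands.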

The current state of the art emphasizes reducing model-induced latency. By offloading complex calculations to specialized oracle networks, protocols can update their volatility surfaces with minimal delay. This capability is a significant advantage of decentralized derivatives over their centralized counterparts, because it reduces reliance on delayed, centralized data feeds.
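One way to keep per-update cost low is an exponentially weighted moving-average (EWMA) variance, the recursion popularized by RiskMetrics. This technique is an assumption of the sketch and is not taken from any particular oracle network. Each new block price needs only the previous variance and the previous price, so every update is O(1) in time and memory. The class name `EwmaVolatility` and the decay values are illustrative. RiskMetrics' 0.94 was calibrated for daily returns, not block intervals.

```python
import math
from typing import Optional


class EwmaVolatility:
    """EWMA variance: var_t = decay * var_{t-1} + (1 - decay) * r_t^2."""

    def __init__(self, decay: float, initial_variance: float) -> None:
        if not 0.0 < decay < 1.0:
            raise ValueError("decay must be in (0, 1)")
        self.decay = decay
        self.variance = initial_variance
        self.last_price: Optional[float] = None

    def update(self, price: float) -> float:
        """Fold in one new block price and return the current volatility estimate."""
        if self.last_price is not None:
            r = math.log(price / self.last_price)
            self.variance = self.decay * self.variance + (1.0 - self.decay) * r * r
        self.last_price = price
        return math.sqrt(self.variance)


tracker = EwmaVolatility(decay=0.94, initial_variance=0.0)
for block_price in [100.0, 100.4, 99.8, 101.1, 100.9]:
    print(f"{tracker.update(block_price):.5f}")
```

A smaller `decay` reacts faster to recent blocks at the cost of noisier estimates.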

Evolution

The field has progressed from static, time-based volatility windows to dynamic, state-aware systems that adapt to the underlying blockchain’s congestion. Early iterations merely tracked past performance, whereas contemporary systems treat volatility as a multi-dimensional surface that changes based on network health and collateralization ratios. The shift toward modular, composable finance means these models now frequently interact with lending protocols to assess systemic contagion risks.

Onchain volatility models have evolved from simple historical trackers into sophisticated, state-aware systems that predict systemic stress.

One might observe that the progression mirrors biological evolution, where complexity increases to meet the demands of a harsher, more competitive environment. As protocols matured, robust volatility estimation became a primary driver of capital efficiency. Today, the focus centers on minimizing the cost of hedging while maximizing the transparency of the pricing mechanism.

Horizon

Future development points toward the integration of cross-chain volatility indices, where models ingest data from multiple networks to derive a global sentiment metric. This will allow for more precise pricing of cross-chain derivatives and synthetic assets that derive value from disparate sources. Furthermore, the use of zero-knowledge proofs will likely enable the verification of complex volatility models without revealing sensitive order flow data, balancing privacy with market integrity.

| Development Stage | Strategic Focus |
|---|---|
| Current | Local liquidity depth analysis |
| Intermediate | Cross-protocol contagion modeling |
| Future | Decentralized volatility oracle consensus |

The ultimate objective remains the creation of a trustless, high-frequency derivative infrastructure that operates independently of centralized intermediaries. As these models gain sophistication, they will likely become the bedrock for decentralized insurance products and complex structured finance, providing the necessary precision to manage risk in an increasingly interconnected digital economy.