Essence

Protocol Level Monitoring represents the real-time observability of state transitions, validator behavior, and cryptographic settlement integrity within a decentralized financial network. It functions as the telemetry layer for programmable money, converting opaque blockchain ledger data into actionable financial intelligence. By tracking the raw mechanics of consensus and smart contract execution, participants gain visibility into systemic health before these conditions manifest as volatility or liquidation cascades in derivative markets.

Protocol Level Monitoring acts as the diagnostic infrastructure that translates underlying consensus state into measurable risk parameters for derivative market participants.

This observability is distinct from simple block explorer queries. It requires close inspection of pending transactions in the mempool, monitoring of validator liveness, and analysis of gas price dynamics. When these metrics deviate from established baselines, synthetic asset pegs can weaken and options pricing models may need rapid recalibration.
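A deviation from an established baseline can be made concrete with a rolling z-score. The following is a minimal sketch, not a production detector: the class name `BaselineMonitor`, the window size, the threshold of three standard deviations, and the gas readings are all illustrative assumptions.

```python
from collections import deque
from statistics import mean, stdev


class BaselineMonitor:
    """Flags samples that deviate from a rolling baseline of recent values.

    Hypothetical helper for illustration; window and threshold are assumptions.
    """

    def __init__(self, window: int = 50, z_threshold: float = 3.0):
        self.samples = deque(maxlen=window)
        self.z_threshold = z_threshold

    def observe(self, value: float) -> bool:
        """Return True if `value` is anomalous relative to the prior window."""
        anomalous = False
        if len(self.samples) >= 2:
            mu = mean(self.samples)
            sigma = stdev(self.samples)
            # Compare the new sample against the baseline before adding it.
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                anomalous = True
        self.samples.append(value)
        return anomalous


# Made-up gas price readings in gwei: a stable regime followed by a spike.
gas_monitor = BaselineMonitor(window=20, z_threshold=3.0)
readings_gwei = [30, 31, 29, 30, 32, 31, 30, 29, 31, 30, 95]
flags = [gas_monitor.observe(g) for g in readings_gwei]
print(flags)
```

Note that this sketch appends anomalous samples to the baseline as well, so a sustained spike gradually becomes the new normal. Whether that is desirable depends on how quickly the monitor should adapt to a regime change.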

Effective monitoring acknowledges that the security of a financial position is tied to the physical robustness of the underlying blockchain consensus mechanism.

Origin

The necessity for Protocol Level Monitoring emerged from the maturation of decentralized exchange and lending architectures. Early market participants relied on centralized oracle feeds or superficial price tracking, which proved insufficient during periods of high network congestion or consensus instability. As derivative volumes migrated toward automated market makers and order-book protocols, the limitations of traditional, reactive risk management became apparent.

- Systemic Fragility: Early protocols lacked granular visibility into mempool bottlenecks, leading to unexpected slippage during liquidation events.

- Oracle Dependence: Over-reliance on external data sources necessitated a shift toward monitoring the internal state of protocols to verify collateralization ratios.

- Validator Behavior: The realization that validator censorship or latency directly impacts derivative settlement times spurred the development of specialized monitoring tools.

Market makers and professional liquidity providers pioneered these techniques to gain a competitive advantage in latency-sensitive environments. They recognized that the ability to detect a consensus-level delay before it hits the wider market provides a decisive edge in managing delta and gamma exposure. This transition marked the move from passive participation to active, protocol-aware risk management.

Theory

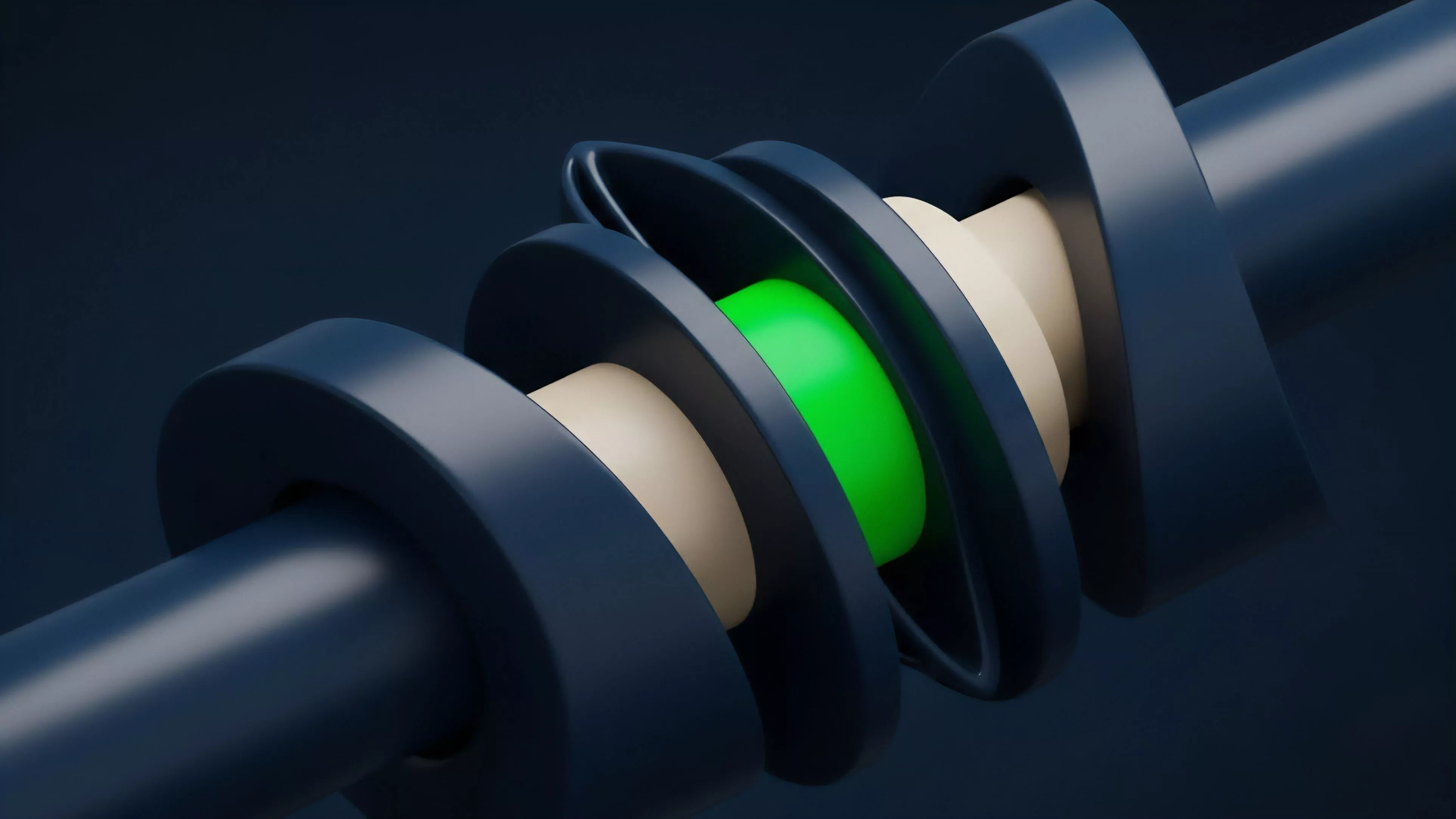

The theoretical framework for Protocol Level Monitoring rests on the principle that blockchain state is a high-frequency derivative of network-wide consensus activity.

Market participants must model the network as a dynamic system where latency, throughput, and gas volatility act as exogenous shocks to the pricing of options and futures. The primary focus involves mapping these technical constraints to the Greeks of a portfolio.

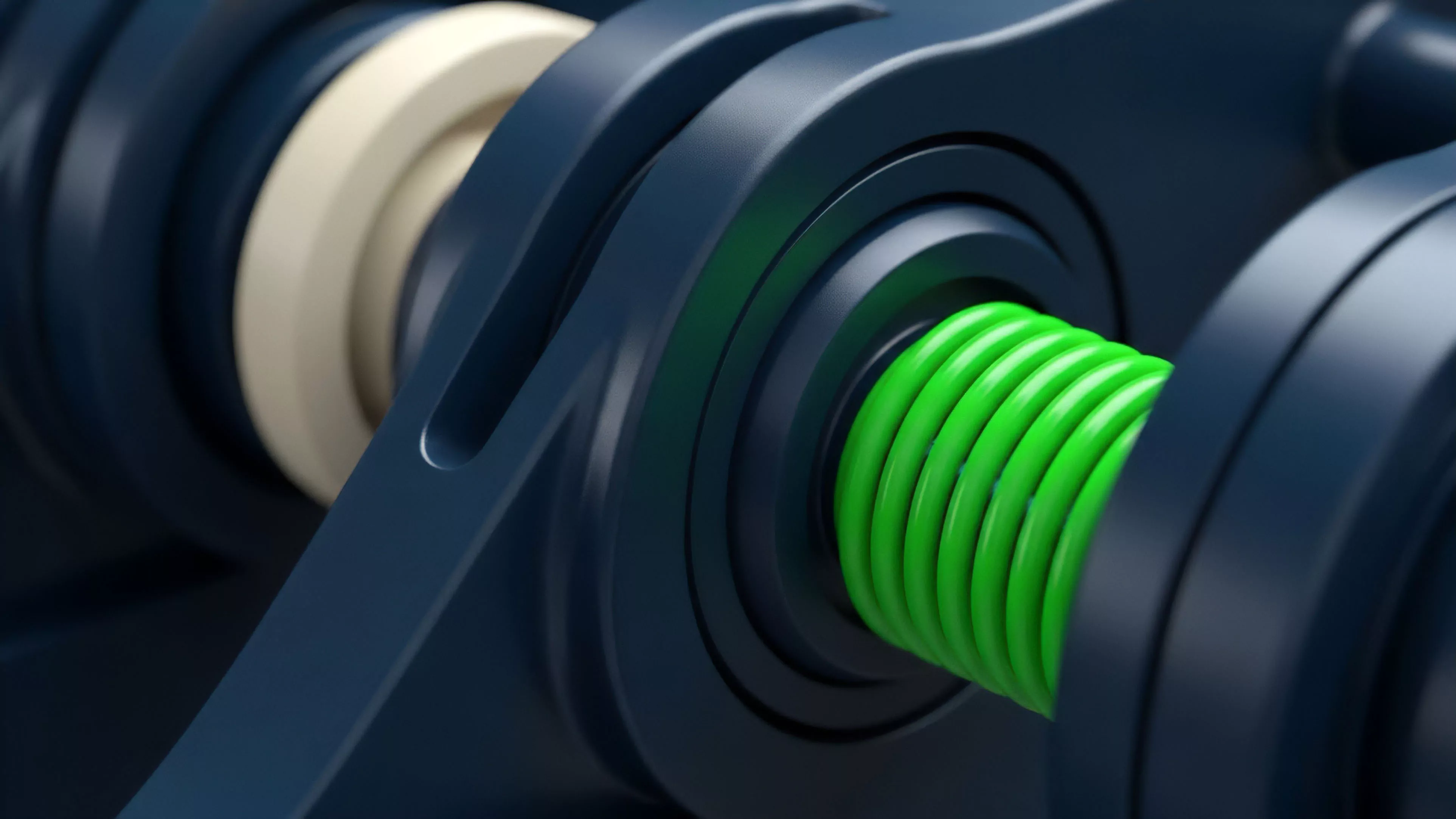

Consensus Mechanics

The stability of a derivative position relies on the deterministic finality of the underlying chain. When a chain experiences reorgs or extended block times, the theoretical value of an option contract, which assumes continuous pricing, becomes detached from the actual settlement reality. Analysts must quantify the probability of these events to adjust the risk-free rate and volatility inputs within pricing models.

Monitoring protocol state allows for the dynamic adjustment of volatility inputs in pricing models based on the real-time health of the underlying consensus engine.
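One way to picture this adjustment is to scale the volatility input of a standard Black-Scholes call price by a penalty derived from consensus health. The sketch below assumes a simple linear rule: the function `adjusted_vol`, its coefficients `k_reorg` and `k_delay`, and all sample inputs are hypothetical and would need calibration against real data.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot: float, strike: float, rate: float, vol: float, t_years: float) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


def adjusted_vol(base_vol: float, reorg_prob: float, block_time_ratio: float,
                 k_reorg: float = 2.0, k_delay: float = 0.25) -> float:
    """Hypothetical rule: inflate volatility as consensus health degrades.

    reorg_prob       -- estimated probability of a reorg over the horizon
    block_time_ratio -- observed block time divided by expected block time
    k_reorg, k_delay -- illustrative sensitivities, not calibrated values
    """
    delay_excess = max(0.0, block_time_ratio - 1.0)
    return base_vol * (1.0 + k_reorg * reorg_prob + k_delay * delay_excess)


base_vol = 0.60
stressed_vol = adjusted_vol(base_vol, reorg_prob=0.02, block_time_ratio=1.5)

healthy_price = bs_call(spot=2000, strike=2100, rate=0.03, vol=base_vol, t_years=30 / 365)
stressed_price = bs_call(spot=2000, strike=2100, rate=0.03, vol=stressed_vol, t_years=30 / 365)
print(f"vol {base_vol:.3f} -> {stressed_vol:.3f}")
print(f"call {healthy_price:.2f} -> {stressed_price:.2f}")
```

Because a call option has positive vega, the stressed price is higher than the healthy one. The linear penalty is only one possible design; a jump-diffusion model would be a more principled way to price discrete settlement failures.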

Adversarial Feedback Loops

Systems are under constant pressure from automated agents and opportunistic traders. A slight increase in block inclusion time can trigger a chain reaction of liquidations in under-collateralized protocols. By monitoring the mempool for large-scale transaction batches, participants anticipate these liquidity crunches.

| Metric | Financial Implication |
| --- | --- |
| Mempool Depth | Predicts near-term volatility spikes |
| Validator Liveness | Determines settlement certainty |
| Gas Price Variance | Affects cost of position adjustment |
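The link between inclusion delay and liquidations can be illustrated with a rough stress test: the longer a transaction waits, the further the price can move before collateral is topped up. This sketch assumes a square-root-of-time price shock; the `Position` type, the per-second volatility, the shock multiplier `z`, and the 1.52 minimum collateral ratio are invented for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Position:
    collateral_units: float
    debt_usd: float

    def ratio(self, price: float) -> float:
        """Collateralization ratio at the given collateral price."""
        return self.collateral_units * price / self.debt_usd


def stressed_price(price: float, delay_s: float,
                   vol_per_sqrt_s: float = 0.0005, z: float = 2.0) -> float:
    """Adverse price after `delay_s` seconds, assuming sqrt-time scaling.

    vol_per_sqrt_s and z are illustrative assumptions, not calibrated values.
    """
    return price * (1.0 - z * vol_per_sqrt_s * math.sqrt(delay_s))


def at_risk(positions, price: float, min_ratio: float = 1.52):
    """Positions whose ratio falls below the liquidation threshold."""
    return [p for p in positions if p.ratio(price) < min_ratio]


positions = [Position(1.0, 1300.0), Position(4.0, 5230.0), Position(1.0, 1000.0)]
spot = 2000.0

normal = at_risk(positions, stressed_price(spot, delay_s=12))
delayed = at_risk(positions, stressed_price(spot, delay_s=300))
print(len(normal), len(delayed))
```

With these made-up numbers, no position breaches the threshold under a 12-second delay, while two do when inclusion takes five minutes. The sketch ignores the feedback effect where liquidations themselves push the price lower.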

The study of these dynamics bridges computer science and quantitative finance. Occasionally, one might consider how the physical constraints of light-speed data propagation in a global validator set mirror the historical challenges of arbitrage in fragmented physical commodities markets: a constant tension between the speed of information and the speed of execution.

Approach

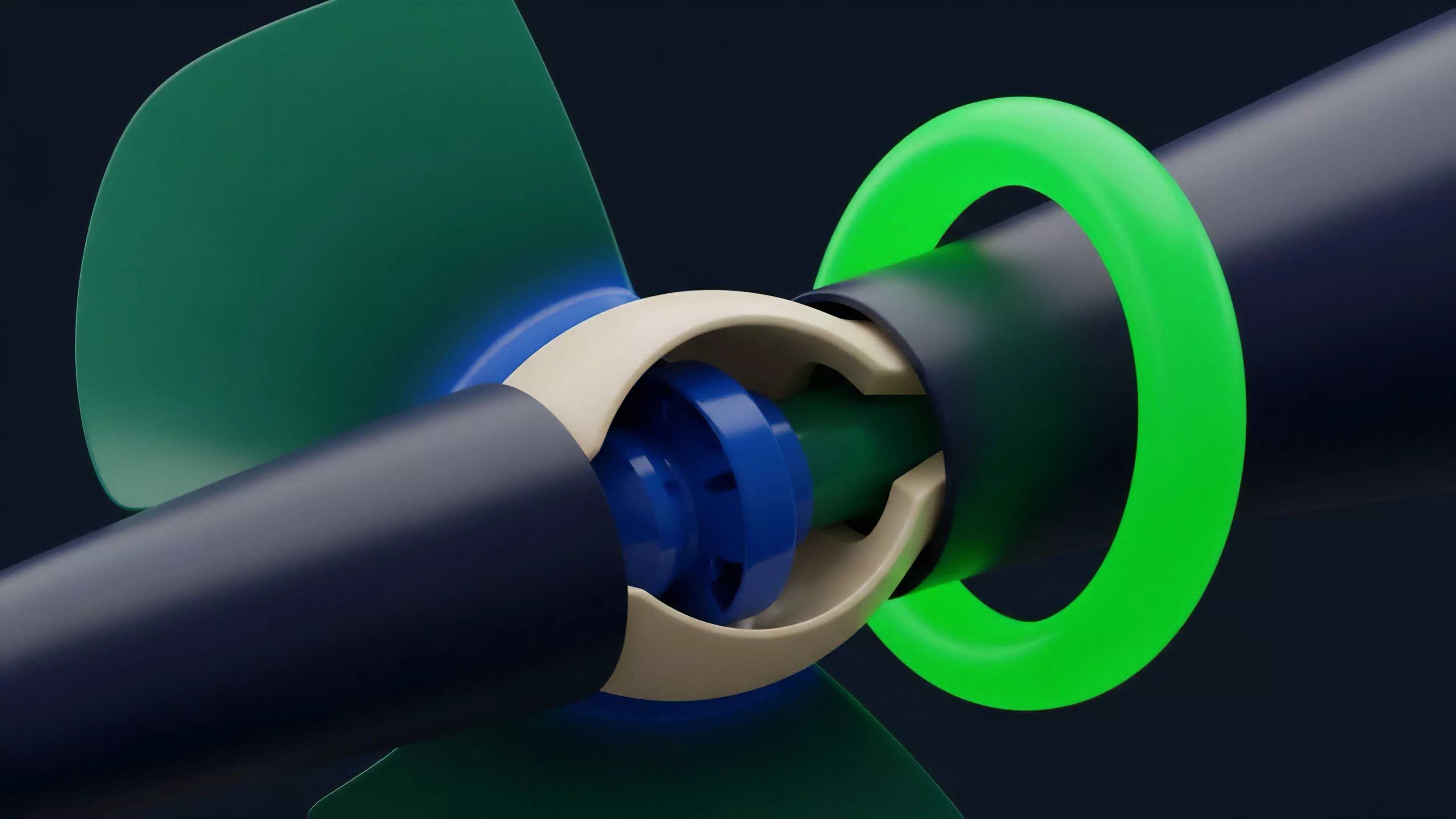

Current implementation of Protocol Level Monitoring involves deploying distributed nodes that aggregate telemetry data directly from the network layer. This data is then fed into quantitative engines that compute real-time risk sensitivities.

The focus is on reducing the time-to-signal, ensuring that adjustments to hedge ratios occur before the broader market reacts to consensus-level anomalies.

- Data Ingestion: Nodes capture raw peer-to-peer messages to map network topology and transaction propagation speed.

- State Analysis: Algorithms parse block headers and smart contract state changes to identify deviations from expected operational parameters.

- Risk Mapping: Automated systems correlate network-level telemetry with derivative Greeks to trigger hedging actions or liquidity adjustments.
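The three stages above can be sketched as a small pipeline. This is a simplified illustration under stated assumptions: the `Telemetry` and `Expected` types, the thresholds, and the rule of cutting delta by 25% per detected deviation are hypothetical, and a real system would ingest samples from live nodes rather than a hard-coded value.

```python
from dataclasses import dataclass


@dataclass
class Telemetry:
    """One ingested sample of network-level data (fields are assumptions)."""
    block_interval_s: float
    pending_tx_count: int
    validator_participation: float  # fraction between 0 and 1


@dataclass
class Expected:
    """Expected operating parameters; defaults are illustrative only."""
    block_interval_s: float = 12.0
    pending_tx_count: int = 50_000
    min_participation: float = 0.9


def analyze(sample: Telemetry, expected: Expected) -> list:
    """State analysis: list the deviations from expected parameters."""
    deviations = []
    if sample.block_interval_s > 2 * expected.block_interval_s:
        deviations.append("slow_blocks")
    if sample.pending_tx_count > 2 * expected.pending_tx_count:
        deviations.append("mempool_congestion")
    if sample.validator_participation < expected.min_participation:
        deviations.append("low_participation")
    return deviations


def map_to_action(deviations: list, portfolio_delta: float) -> dict:
    """Risk mapping: turn deviations into a hedging instruction."""
    if not deviations:
        return {"action": "hold", "target_delta": portfolio_delta}
    # Hypothetical rule: cut delta exposure by 25% per deviation, floored at zero.
    scale = max(0.0, 1.0 - 0.25 * len(deviations))
    return {
        "action": "reduce_delta",
        "target_delta": portfolio_delta * scale,
        "reasons": deviations,
    }


sample = Telemetry(block_interval_s=30.0, pending_tx_count=120_000,
                   validator_participation=0.95)
decision = map_to_action(analyze(sample, Expected()), portfolio_delta=10.0)
print(decision)
```

Keeping analysis and risk mapping as separate functions lets the thresholds and the hedging rule be tuned independently.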

This approach requires significant infrastructure investment and technical expertise. It moves the burden of risk management from the protocol level to the individual participant. Those who neglect this layer of monitoring are essentially trading with a blindfold, ignoring the physical foundations that underpin the digital assets they seek to price and hedge.

Evolution

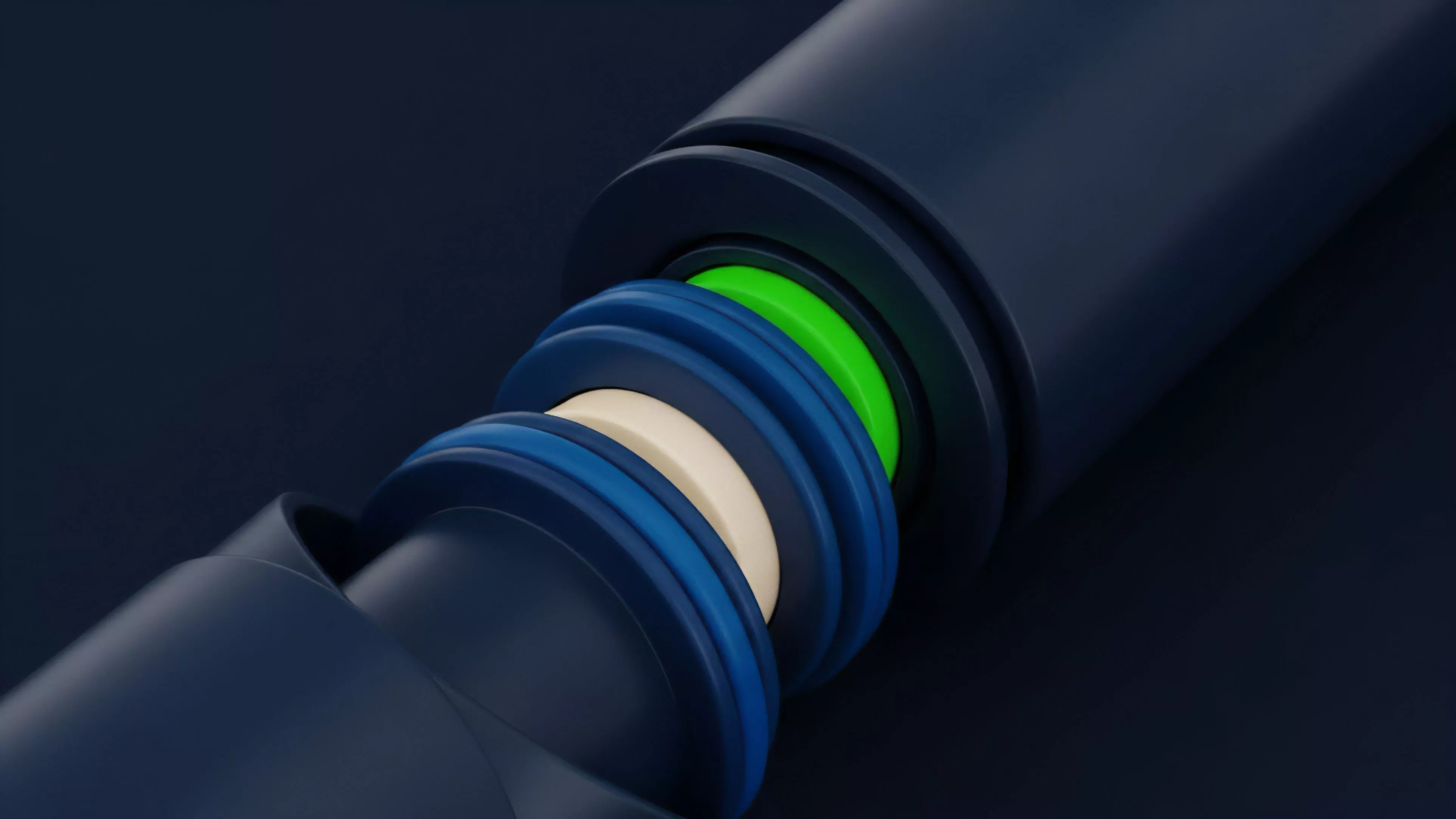

The discipline has shifted from rudimentary node health tracking to sophisticated, predictive analytics.

Initially, developers focused on simple uptime metrics. Today, the focus lies on complex event processing where machine learning models predict the impact of protocol upgrades or hard forks on derivative liquidity. This evolution mirrors the history of high-frequency trading in traditional markets, where the edge moved from basic execution to proprietary data analysis of market microstructure.

Monitoring has evolved from simple uptime tracking to complex predictive modeling of systemic risk factors.

Regulatory pressures and the growth of institutional participation have accelerated this development. As protocols become more complex, the ability to audit their state in real time is no longer a luxury but a requirement for fiduciary compliance. The current state is characterized by an arms race for faster, more accurate data ingestion that captures the nuances of validator incentives and consensus-level vulnerabilities.

Horizon

Future development will likely focus on decentralized monitoring networks that provide verifiable telemetry as a service. This will lower the barrier to entry for smaller participants, democratizing access to high-fidelity risk data. We expect to see the integration of protocol-level insights directly into smart contract execution logic, where self-correcting protocols automatically adjust collateral requirements based on network-level volatility.

The convergence of zero-knowledge proofs and Protocol Level Monitoring will allow for the verification of network state without requiring every participant to run a full node. This will significantly enhance the scalability of risk management systems. The ultimate goal is a financial architecture where the protocol itself is self-aware, constantly measuring its own stability and adjusting its economic parameters to prevent systemic failure.

What paradox emerges when the tools designed to mitigate systemic risk by providing perfect visibility simultaneously increase market fragility by enabling synchronized, automated liquidation responses?