Essence

Off-chain data aggregation is the infrastructure layer that bridges deterministic on-chain logic with the noisy real-world data required by decentralized financial derivatives. Its core function is to provide smart contracts with reliable, verified information, primarily asset prices, that originates outside the blockchain’s native environment. Without this mechanism, a decentralized options contract, for instance, cannot set a strike relative to the current market, calculate collateral requirements, or execute settlement based on the underlying asset’s market value.

The system addresses the fundamental “oracle problem,” which recognizes that a blockchain, by design, operates in a self-contained, trustless vacuum; it cannot natively access external information. The challenge in crypto options is particularly acute due to the time-sensitive nature of pricing and risk management. Unlike a simple spot trade, an options contract’s value is constantly changing based on the volatility and price of the underlying asset.

The aggregation layer must deliver this data with high frequency and low latency to ensure accurate pricing models and prevent liquidations from occurring based on stale information. The integrity of the entire derivative protocol hinges on the quality and security of this off-chain data feed.

Off-chain data aggregation is the process of sourcing and verifying external market information to enable the functionality of on-chain smart contracts.

Origin

The necessity for off-chain data aggregation emerged with the first generation of decentralized finance protocols, particularly those supporting collateralized debt positions (CDPs), such as MakerDAO. These early systems required a mechanism to determine when a user’s collateral value dropped below a certain threshold, triggering liquidation. The initial solutions were often centralized, relying on a small number of trusted entities to submit price data.

This design presented a significant vulnerability: a single point of failure where a malicious or compromised data provider could manipulate the price feed for personal gain, leading to incorrect liquidations. The evolution of data aggregation was driven by the increasing complexity of financial instruments being built on-chain. As protocols moved beyond simple lending and borrowing to create sophisticated derivatives like options, perpetual futures, and structured products, the requirements for data quality escalated dramatically.

These instruments demand not just a single price point, but a constant stream of high-frequency data to accurately calculate risk metrics, such as the Greeks and collateral ratios. The need for robust, decentralized data feeds became a systemic priority to prevent market manipulation and ensure capital efficiency. The early reliance on single-source or highly centralized oracles proved inadequate for the scale and complexity required by a mature derivatives market.

Theory

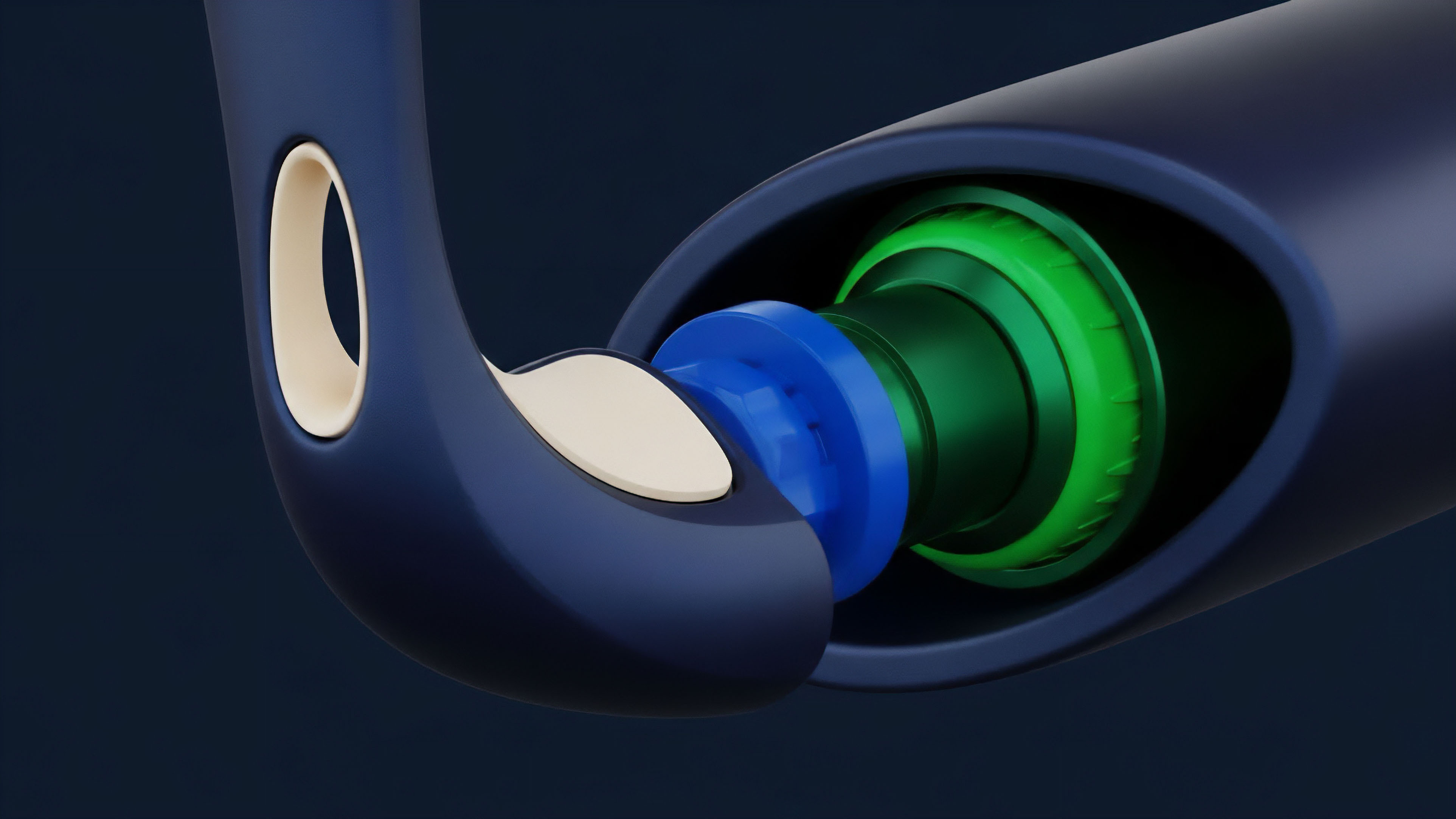

The theoretical foundation of off-chain data aggregation rests on principles of information theory, game theory, and distributed systems engineering. The core challenge is designing a system where data providers are incentivized to submit truthful information, while simultaneously disincentivizing malicious behavior through economic penalties. The aggregation process itself is a consensus mechanism for data.

Instead of trusting a single source, multiple independent nodes source data from various off-chain exchanges and aggregate it. The final, trusted value is typically calculated using a median function, which filters out outliers and malicious data points as long as fewer than half of the reports are corrupted. The security model relies on economic collateral.

Data providers must stake a certain amount of value in the system. If a provider submits incorrect data, they risk having their stake slashed, creating a powerful economic deterrent against manipulation. This model transforms data integrity from a matter of trust in a single entity to a matter of verifiable economic risk.

For the feed to be secure, the cost of manipulating it must be greater than the potential profit gained from the manipulation. When that condition holds, on-chain derivative contracts can rely on the data.
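The cost-versus-profit condition can be written compactly under some simplifying assumptions that are not stated in the source: the feed is a median over an odd number $n$ of nodes, every node stakes the same amount $S$, a dishonest report forfeits the full stake, and $P$ is the largest profit an attacker could extract by moving the feed. To control a median, the attacker must corrupt a strict majority of $k$ nodes, so a rough security condition is:

$$
k \cdot S > P, \qquad k = \frac{n + 1}{2}
$$

As an illustration with hypothetical numbers, $n = 21$ and $S = 100{,}000$ give $k = 11$ and a corruption cost of $1{,}100{,}000$, so under these assumptions the feed is only economically secure while the extractable profit $P$ stays below that figure.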

The selection of data aggregation methodology directly impacts the risk profile of a derivative protocol. Different methods offer distinct trade-offs in terms of security, cost, and latency. A simple median calculation is robust against single outliers, while more complex calculations like time-weighted average prices (TWAPs) are necessary to mitigate short-term flash loan attacks that artificially spike prices on a single exchange.

| Aggregation Method | Description | Application in Derivatives | Vulnerability Profile |
|---|---|---|---|
| Median Price Feed | Calculates the middle value from a set of data providers, discarding high and low outliers. | General collateral valuation, simple options pricing. | Requires a significant number of colluding nodes to shift the median. |
| Time-Weighted Average Price (TWAP) | Calculates the average price over a specific time window (e.g. 10 minutes), sampling data points at intervals. | Liquidation mechanisms, long-term options settlement. | Resilient to short-term price spikes (flash loans), but vulnerable to long-term price manipulation. |
| Volatility Surface Aggregation | Aggregates implied volatility data across different strike prices and expirations. | Sophisticated options pricing models (Black-Scholes variants), risk management. | Requires high-quality data from multiple sources; data sparsity can be a risk. |
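The first two methods in the table can be illustrated with a minimal Python sketch. The function names, the sample prices, and the convention that each sampled price holds until the next sample are assumptions made for this example, not details of any particular oracle network.

```python
from statistics import median


def median_price(reports):
    """Return the median of the prices reported by independent nodes."""
    if not reports:
        raise ValueError("at least one report is required")
    return median(reports)


def twap(samples, window_start, window_end):
    """Time-weighted average of (timestamp, price) samples over a window.

    Assumption: each price holds from its timestamp until the next sample
    (or until window_end for the last one). Samples must be sorted by time,
    and the first sample must be at or before window_start.
    """
    if window_end <= window_start:
        raise ValueError("window must have positive length")
    if not samples or samples[0][0] > window_start:
        raise ValueError("need a sample at or before window_start")
    weighted_sum = 0.0
    for i, (ts, price) in enumerate(samples):
        next_ts = samples[i + 1][0] if i + 1 < len(samples) else window_end
        # Clip the interval during which this price holds to the window.
        start = max(ts, window_start)
        end = min(next_ts, window_end)
        if end > start:
            weighted_sum += price * (end - start)
    return weighted_sum / (window_end - window_start)


# One node out of five reports a wildly wrong price.
reports = [2000.0, 2001.5, 1999.0, 2000.5, 9999.0]
print(median_price(reports))

# A spike to 3000 that lasts 12 seconds inside a 600-second window.
samples = [(0, 2000.0), (300, 3000.0), (312, 2000.0)]
print(twap(samples, 0, 600))
```

In this toy data the single bad report does not move the median at all, and the 12-second spike shifts the 10-minute TWAP by only 1%, which matches the trade-offs listed in the table.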

Approach

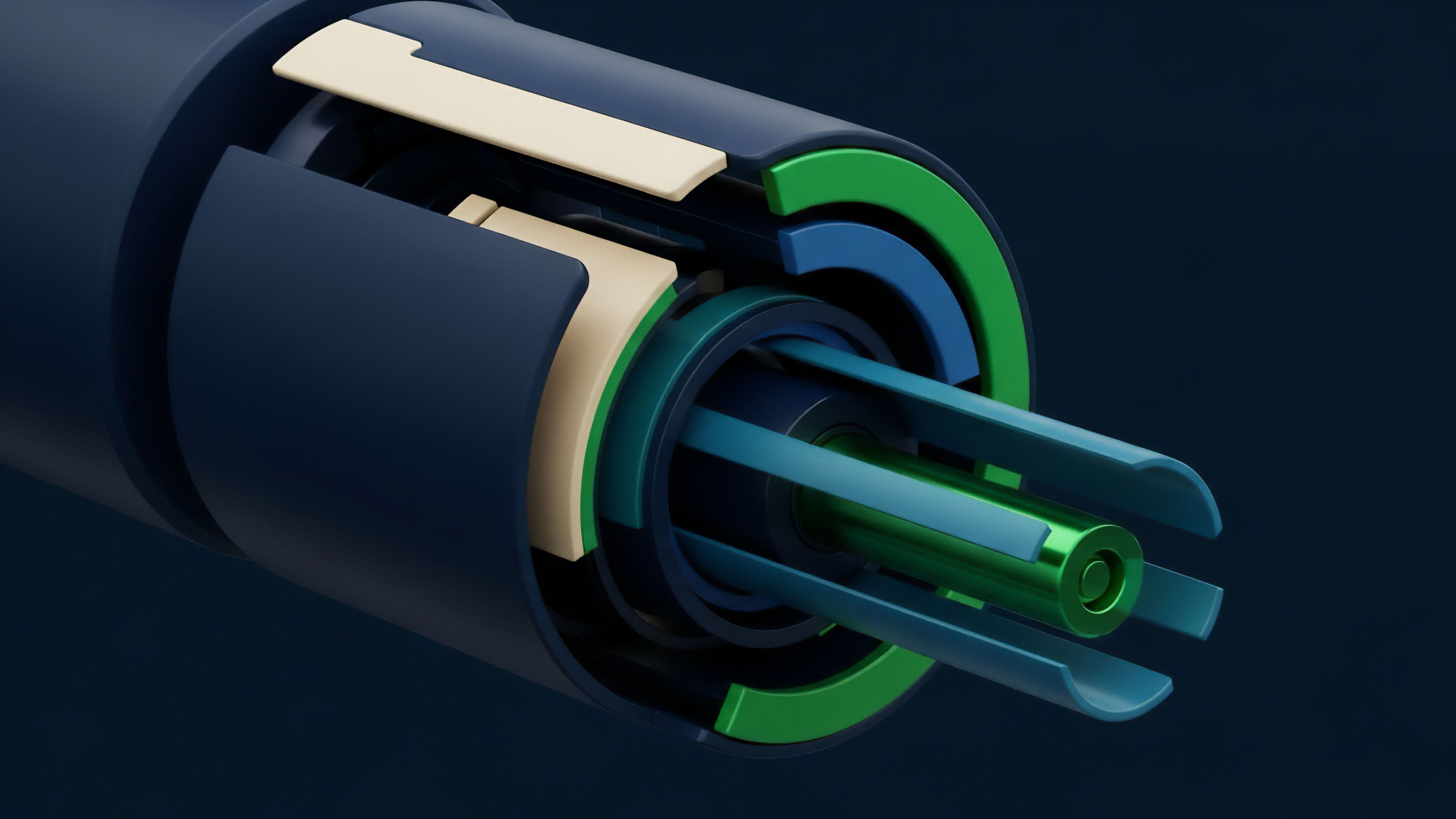

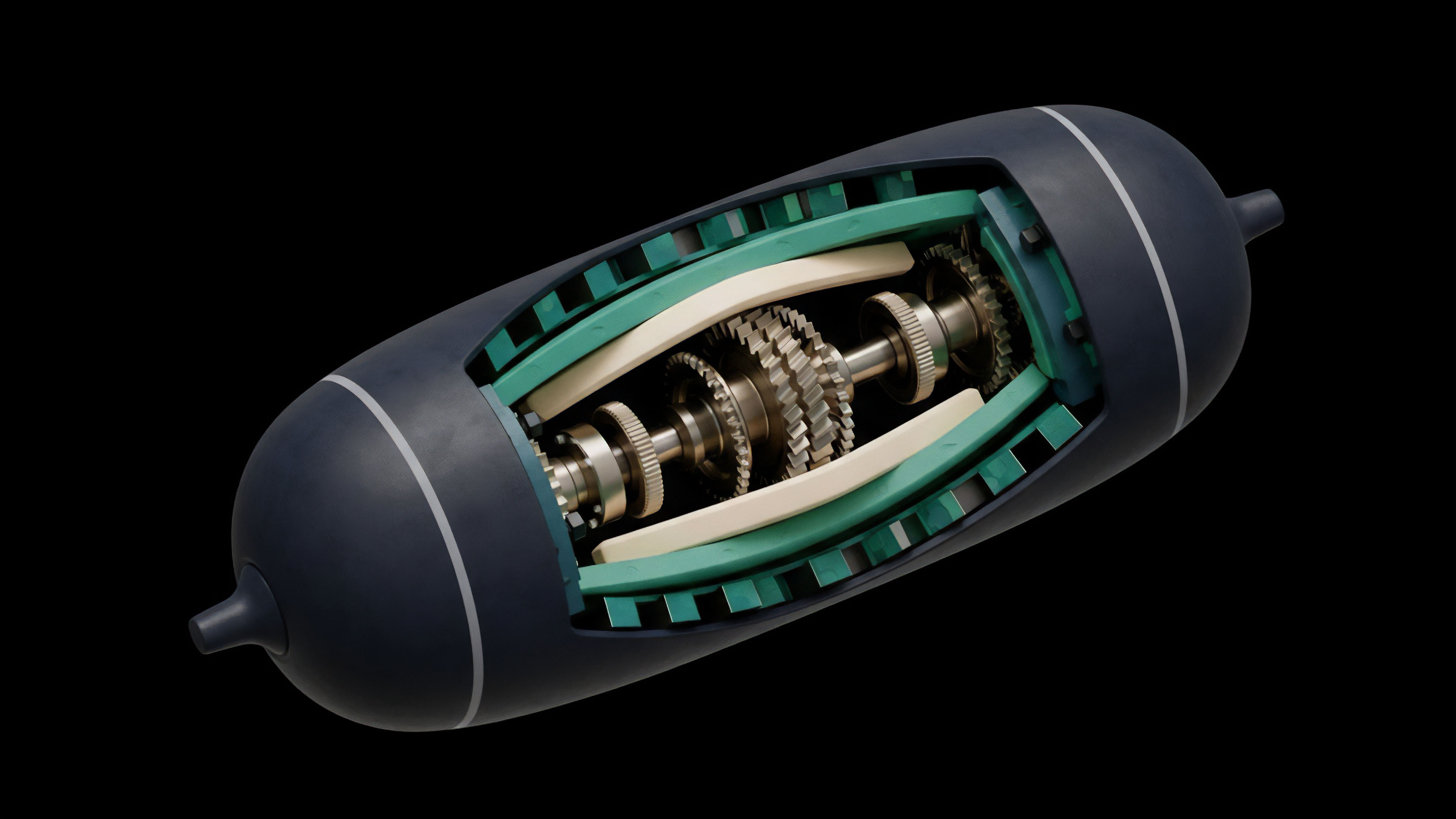

The standard approach to off-chain data aggregation utilizes a decentralized oracle network (DON). This network consists of multiple independent nodes operated by different entities. When a smart contract requires data, it issues a request to the DON.

The process then follows a structured sequence:

- Data Sourcing: Each node in the network retrieves the requested data from various off-chain sources, such as centralized exchanges, data aggregators, and market data APIs.

- Aggregation and Consensus: The nodes submit their individual data points to an aggregation contract on the blockchain. The contract then calculates a single, trusted value based on a predefined methodology, typically a median calculation.

- Update Trigger: The data feed updates when a specific threshold is met, either a price deviation percentage (e.g. a 0.5% change from the previous update) or a time interval (e.g. every 10 minutes).

- Security and Incentives: The nodes are financially incentivized to provide accurate data. They earn rewards for honest submissions and face penalties (slashing) for providing malicious or incorrect data.
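The update trigger described in the list above can be sketched in a few lines of Python. The function name `should_update` and its parameters are hypothetical; the defaults mirror the 0.5% deviation and 10-minute interval given as examples in the text.

```python
def should_update(last_price, last_update_time, new_price, now,
                  deviation_threshold=0.005, heartbeat_seconds=600):
    """Return True when the deviation or the time-interval condition is met.

    Times are in seconds; deviation_threshold is a fraction (0.005 = 0.5%).
    """
    if last_price <= 0:
        raise ValueError("last_price must be positive")
    # Relative change of the candidate price versus the last published one.
    deviation = abs(new_price - last_price) / last_price
    if deviation >= deviation_threshold:
        return True
    # Otherwise publish only if the feed has gone too long without an update.
    return (now - last_update_time) >= heartbeat_seconds


print(should_update(2000.0, 0, 2011.0, 60))   # 0.55% move after 60 seconds
print(should_update(2000.0, 0, 2005.0, 60))   # 0.25% move after 60 seconds
print(should_update(2000.0, 0, 2005.0, 600))  # 0.25% move after 600 seconds
```

Combining the two conditions keeps the feed responsive during volatile periods while bounding how stale it can become in quiet markets.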

This methodology effectively creates a “decentralized truth machine” where the data’s reliability is derived from the consensus of numerous independent sources rather than trust in a single provider. For options protocols, this robust data feed is essential for calculating the fair value of a contract, managing collateral, and ensuring that liquidations occur at the correct market price. The market microstructure of decentralized derivatives depends entirely on the timely and accurate delivery of this aggregated data.

The decentralized oracle network model secures data integrity by replacing trust in a single source with economic incentives and a consensus mechanism across multiple independent nodes.

Evolution

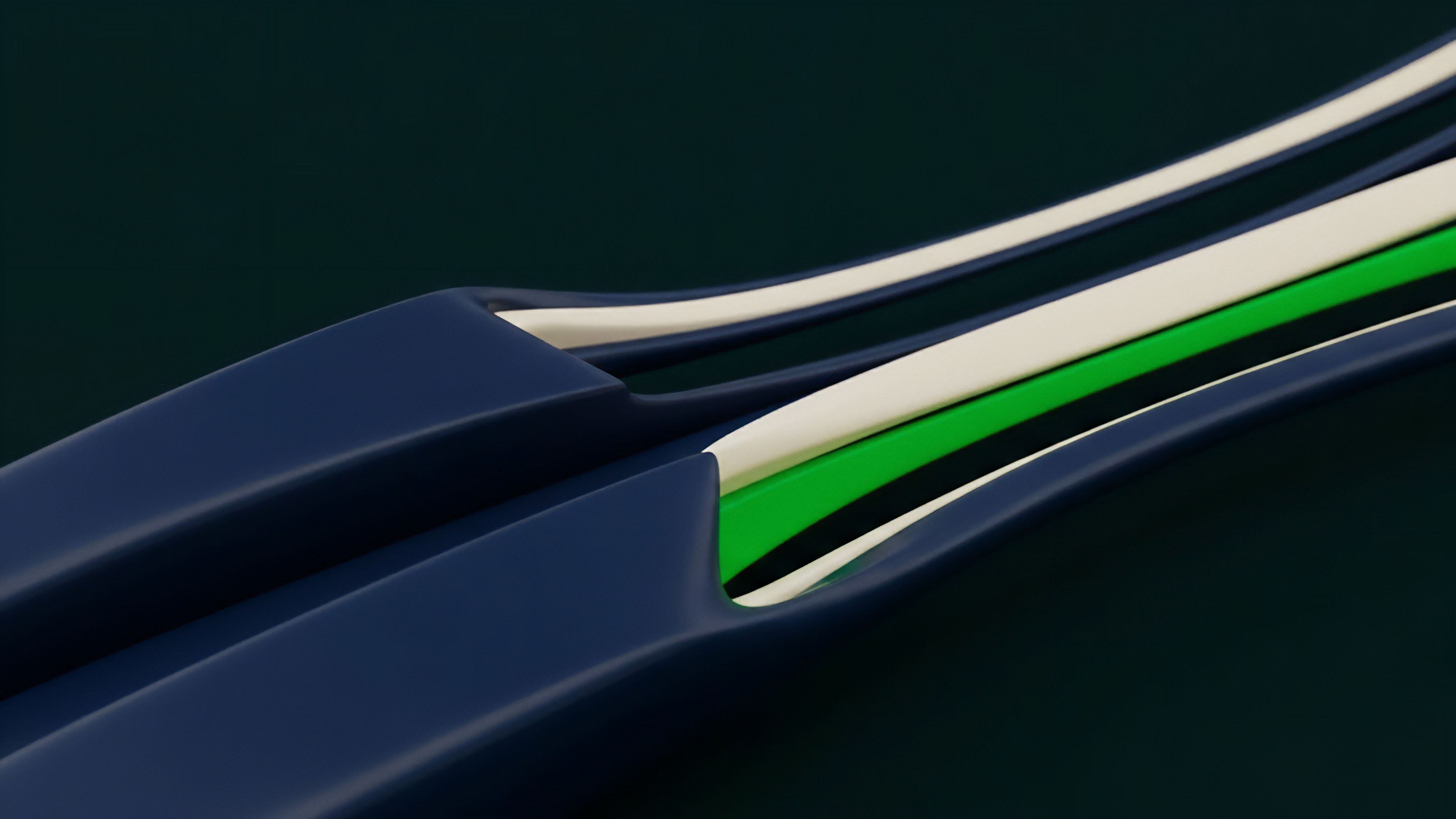

The evolution of off-chain data aggregation has progressed from simple price feeds to sophisticated computational oracles capable of delivering complex data structures. Early iterations of DeFi options struggled with data latency and a lack of necessary information beyond spot prices. The Black-Scholes model and its variants require inputs like implied volatility, which cannot be directly sourced from a single price feed.

This challenge drove the development of aggregation methods specifically designed for volatility surfaces and other complex metrics. A key development has been the shift toward more resilient aggregation methods to combat market manipulation. Early protocols that relied on simple spot price feeds were vulnerable to flash loan attacks, in which an attacker temporarily manipulates the price on a single exchange to trigger liquidations.

The response involved implementing TWAP oracles, which average prices over time to smooth out short-term spikes. The next frontier involves computational oracles that perform complex calculations off-chain before submitting the result. This allows protocols to access data that requires significant processing, such as calculating the implied volatility surface across multiple strikes and expirations.
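As a rough illustration of the kind of calculation a computational oracle might run off-chain, the sketch below recovers one point of an implied volatility surface from a quoted option price. It assumes a European call with no dividends priced by Black-Scholes, and it uses bisection purely for simplicity; the function names and sample inputs are hypothetical.

```python
import math


def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot, strike, t, rate, sigma):
    """Black-Scholes price of a European call (no dividends), t in years."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * sigma ** 2) * t) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol(price, spot, strike, t, rate, lo=1e-4, hi=5.0, tol=1e-8):
    """Solve bs_call_price(sigma) == price for sigma by bisection.

    Bisection works here because the call price increases with sigma.
    """
    if not (bs_call_price(spot, strike, t, rate, lo) <= price
            <= bs_call_price(spot, strike, t, rate, hi)):
        raise ValueError("price is outside the range reachable on [lo, hi]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, t, rate, mid) < price:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Round trip: price an option at 80% volatility, then recover that volatility.
quoted = bs_call_price(2000.0, 2100.0, 30 / 365, 0.0, 0.8)
print(round(implied_vol(quoted, 2000.0, 2100.0, 30 / 365, 0.0), 4))
```

Repeating this for each strike and expiration would produce the grid of values that makes up a surface. Only the resulting volatilities, not the intermediate iterations, would need to reach the chain.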

The increasing demand for exotic derivatives requires aggregation systems to handle more diverse data types, including:

- Custom Index Calculations: Aggregating prices from multiple exchanges and weighting them according to specific criteria to create a custom index for derivative settlement.

- Event-Based Data: Providing data on real-world events, such as election results or sports outcomes, to support exotic options and prediction markets.

- Proof of Reserve Data: Verifying the off-chain reserves of stablecoins or wrapped assets to ensure collateralization and reduce systemic risk.
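The custom index item in the list above can be sketched as a weighted average across venues. Weighting by traded volume is only one possible criterion and is an assumption of this example, as are the function name and the sample quotes.

```python
def weighted_index(quotes):
    """Volume-weighted index from (price, volume) pairs, one pair per venue."""
    total_volume = sum(volume for _, volume in quotes)
    if total_volume <= 0:
        raise ValueError("total volume must be positive")
    return sum(price * volume for price, volume in quotes) / total_volume


# Hypothetical quotes from three exchanges: (price, traded volume).
quotes = [(2000.0, 500.0), (2002.0, 300.0), (1998.0, 200.0)]
print(weighted_index(quotes))
```

A protocol could substitute other weights, such as order book depth or fixed per-venue weights, without changing the structure of the calculation.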

Horizon

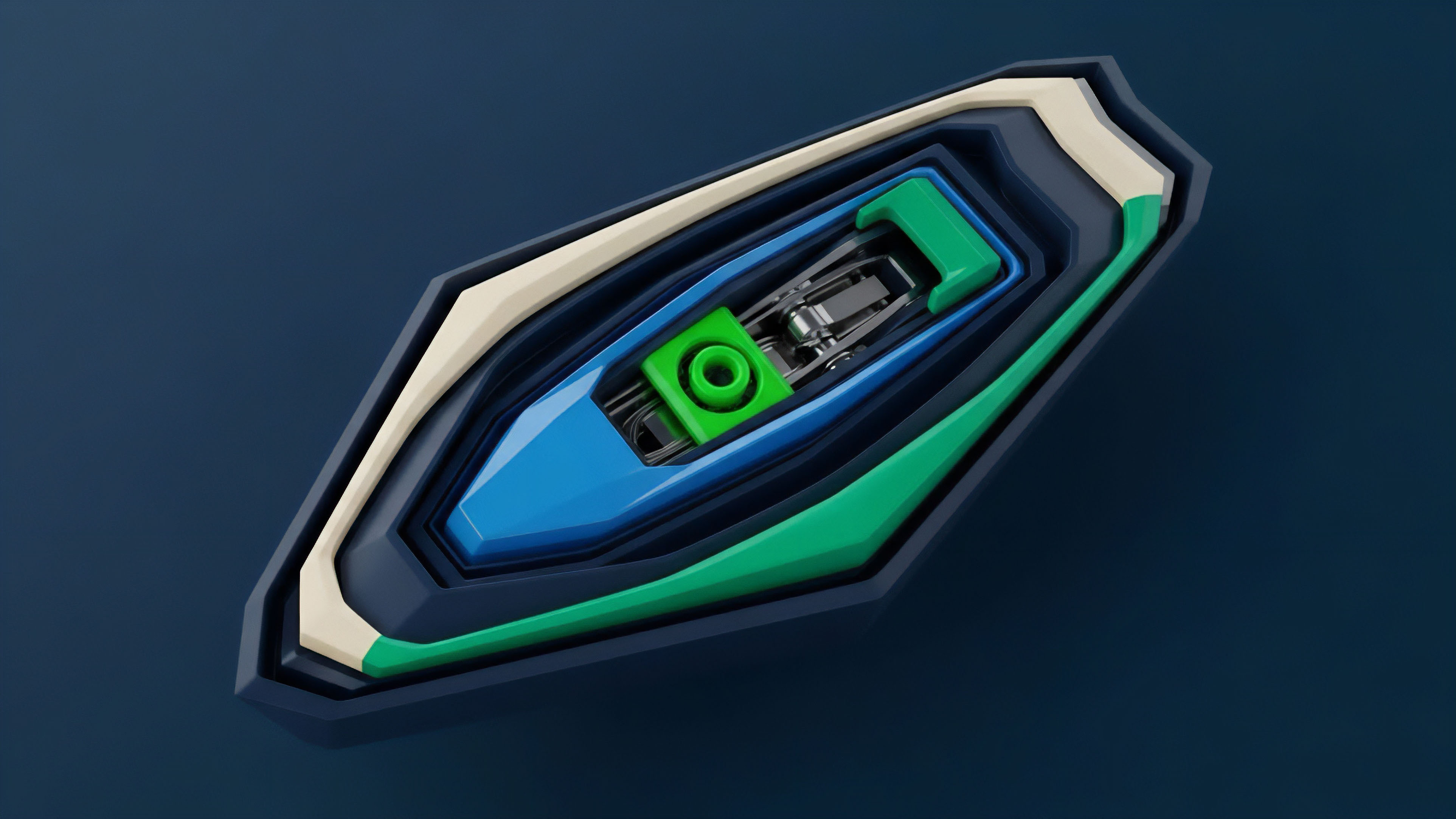

Looking ahead, the next generation of off-chain data aggregation will focus on enhancing data integrity through cryptographic proofs and increasing computational efficiency. The current model, while effective, still requires a high degree of on-chain processing and trust in the nodes’ off-chain calculations. The future direction involves a deeper integration of zero-knowledge proofs (ZKPs) into the aggregation process.

A zero-knowledge oracle would allow a data provider to prove that a specific data point was sourced from a trusted off-chain API without revealing the data itself. This enhances privacy and reduces the surface area for manipulation by preventing nodes from seeing each other’s raw data. Furthermore, decentralized verifiable computation will allow protocols to perform complex calculations off-chain, such as calculating a full options pricing model, and then submit only the verified result to the smart contract.

This significantly reduces on-chain gas costs and enables a new class of sophisticated derivatives that are currently too expensive to operate on a blockchain. The evolution of data aggregation will also move beyond price feeds to incorporate more complex market microstructure data. This includes aggregating order book depth, trading volume, and open interest to provide a more comprehensive view of market health.

This shift allows derivative protocols to move from simply reacting to price changes to proactively managing risk based on a deeper understanding of market dynamics.

| Current Aggregation Model | Horizon Model (Verifiable Computation) |
|---|---|
| Aggregates simple price data points. | Aggregates complex calculations (e.g. implied volatility surface, custom indexes). |
| Trusts nodes to calculate a median accurately. | Verifies off-chain calculations cryptographically (zero-knowledge proofs). |
| Focuses on data freshness and latency. | Focuses on data integrity and computational efficiency. |
| Nodes submit raw data to an on-chain contract. | Nodes submit a cryptographic proof of calculation to an on-chain verifier. |

The future of off-chain data aggregation lies in zero-knowledge proofs and verifiable computation, allowing complex derivatives to operate securely and cost-effectively on-chain.
