Essence

Off-Chain Computation On-Chain Verification decouples transaction execution from state finalization. This architecture allows a distributed network to delegate intensive mathematical operations to external environments while retaining the security guarantees of the underlying ledger. By shifting the burden of computation away from the limited throughput of the virtual machine, the system can approach the performance of centralized servers without reintroducing the risk of hidden insolvency or data manipulation.

Off-Chain Computation On-Chain Verification separates execution from settlement to maintain decentralization while achieving high throughput.

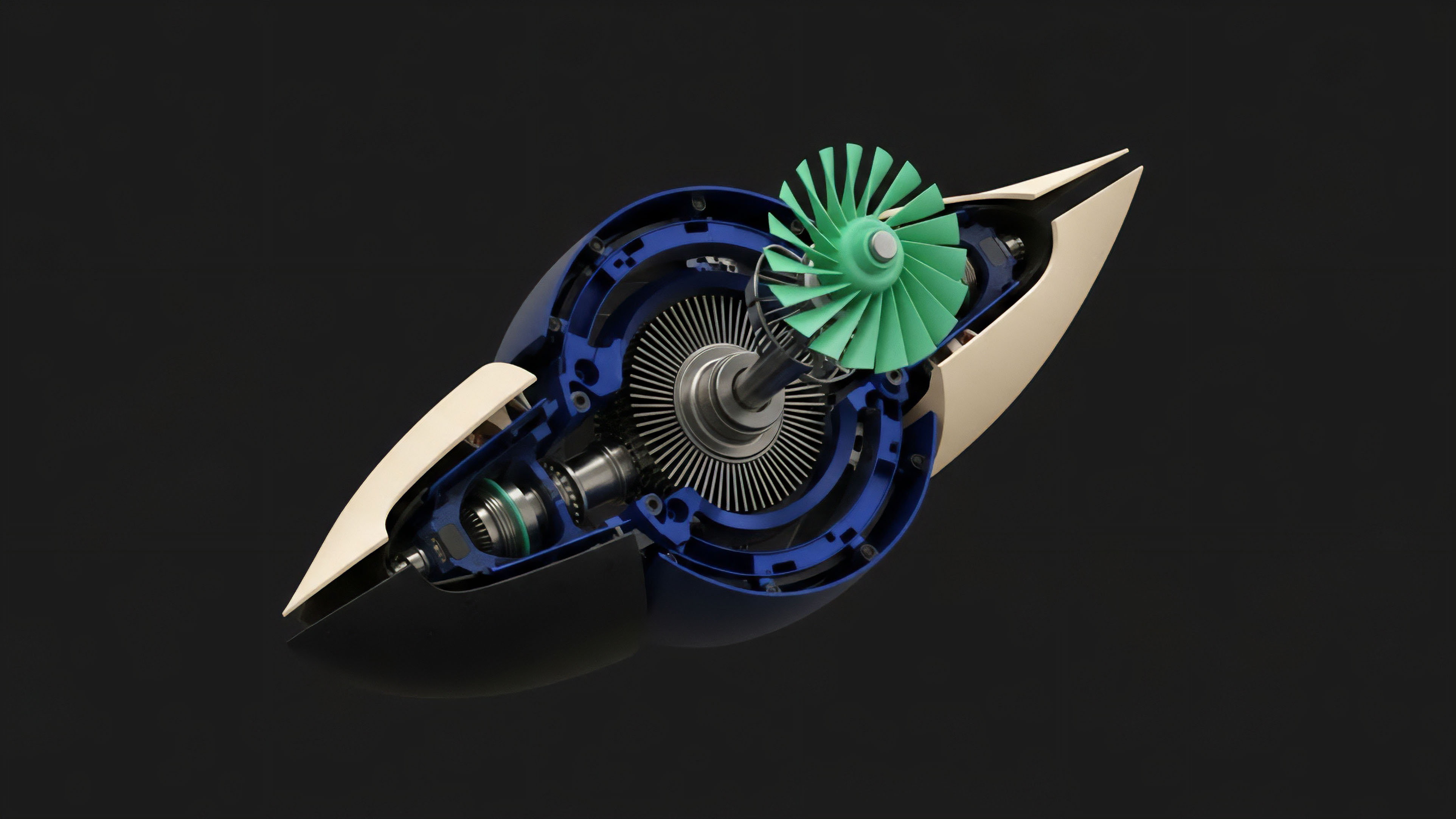

The operational identity of this mechanism rests on the mathematical certainty of the results delivered back to the chain. Instead of every node repeating a calculation, one of two things happens: a specialized prover generates a succinct cryptographic proof of the result, or the result is posted optimistically and remains open to fraud proofs during a challenge window. The chain then acts as a succinct judge, confirming the validity of the state transition rather than executing the logic itself.

This shift redefines the blockchain from a global computer into a global settlement layer, where the integrity of complex financial derivatives remains verifiable by anyone with access to the proof.
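The asymmetry between executing a calculation and checking its result can be illustrated without any cryptography. The sketch below is an illustration only, not a proof system: an off-chain solver searches for an implied volatility with many Black-Scholes evaluations, while the checker needs a single evaluation to accept or reject the claimed answer. The function names, the bisection bounds, and the tolerance are assumptions made for this example, and floating-point arithmetic stands in for the fixed-point math a real contract would use.

```python
import math


def bs_call(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Black-Scholes price of a European call option."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    cdf = lambda x: 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))
    return spot * cdf(d1) - strike * math.exp(-rate * t) * cdf(d2)


def solve_implied_vol(price, spot, strike, rate, t, lo=1e-4, hi=5.0, iters=100):
    """Off-chain work: bisection search, one pricing call per iteration."""
    for _ in range(iters):
        mid = (lo + hi) / 2.0
        if bs_call(spot, strike, rate, mid, t) < price:
            lo = mid  # call price rises with volatility, so search higher
        else:
            hi = mid
    return (lo + hi) / 2.0


def verify_implied_vol(claimed_vol, price, spot, strike, rate, t, tol=1e-6):
    """Verifier work: a single pricing call confirms or rejects the claim."""
    return abs(bs_call(spot, strike, rate, claimed_vol, t) - price) <= tol


# Usage: the solver does the heavy lifting, the verifier checks once.
market_price = bs_call(100.0, 100.0, 0.01, 0.2, 1.0)
vol = solve_implied_vol(market_price, 100.0, 100.0, 0.01, 1.0)
accepted = verify_implied_vol(vol, market_price, 100.0, 100.0, 0.01, 1.0)
```

Validity proofs generalize this idea to computations that have no such cheap self-check, which is why they require dedicated proof systems.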

Origin

The historical genesis of Off-Chain Computation On-Chain Verification lies in the inherent resource scarcity of early decentralized networks. Developers attempting to build sophisticated options markets discovered that calculating Black-Scholes variables or managing real-time risk engines on-chain resulted in prohibitive gas costs and unacceptable latency. This friction created a hard ceiling for the complexity of programmable finance, forcing a choice between the transparency of the chain and the speed of centralized order books.

Early scaling attempts, such as state channels and sidechains, sought to alleviate this pressure by moving transactions off the main ledger. These methods introduced significant trade-offs regarding capital lockups and security assumptions. The necessity for a more robust solution led to the development of validity proofs and optimistic challenge mechanisms.

These advancements allowed for the creation of Layer 2 environments where the security of the primary layer could be inherited without the computational overhead of its consensus mechanism.

Theory

The mathematical logic of Off-Chain Computation On-Chain Verification relies on two primary branches of cryptographic and game-theoretic design. Validity proofs, often built on Zero-Knowledge Succinct Non-Interactive Arguments of Knowledge (ZK-SNARKs), provide a mathematical guarantee that a specific set of inputs produced a specific output. Optimistic systems, by contrast, rely on an adversarial environment where participants can challenge incorrect state transitions within a defined timeframe.

Cryptographic Proof Systems

Validity-based systems utilize polynomial commitments and the Fiat-Shamir heuristic to compress large amounts of computational data into a small proof. This proof is easily checked by a smart contract. The prover must demonstrate knowledge of a witness (the transaction data) that satisfies the constraints of the circuit without revealing the data itself.

This succinctness ensures that the verification cost stays constant or grows only polylogarithmically relative to the complexity of the calculation, enabling the settlement of thousands of trades in a single on-chain transaction.
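The Fiat-Shamir heuristic mentioned above can be shown in miniature. The following is a minimal sketch, assuming a toy Schnorr proof of knowledge rather than a full SNARK over a circuit: the "witness" is a secret exponent, and the verifier's random challenge is replaced by a hash of the public transcript, which makes the proof non-interactive. The group parameters are deliberately tiny and insecure, and every name here is hypothetical.

```python
import hashlib
import secrets
from typing import Tuple

# Toy parameters (assumption, far too small for real use):
# G = 2 generates a subgroup of prime order Q = 11 in the integers mod P = 23.
P, Q, G = 23, 11, 2


def fiat_shamir_challenge(public_key: int, commitment: int) -> int:
    """Derive the challenge by hashing the transcript instead of asking a verifier."""
    transcript = f"{G}|{P}|{public_key}|{commitment}".encode()
    return int.from_bytes(hashlib.sha256(transcript).digest(), "big") % Q


def prove(secret: int) -> Tuple[int, int, int]:
    """Prove knowledge of `secret` such that public_key = G^secret mod P."""
    public_key = pow(G, secret, P)
    nonce = secrets.randbelow(Q)          # fresh randomness hides the secret
    commitment = pow(G, nonce, P)
    c = fiat_shamir_challenge(public_key, commitment)
    response = (nonce + c * secret) % Q
    return public_key, commitment, response


def verify(public_key: int, commitment: int, response: int) -> bool:
    """Accept iff G^response == commitment * public_key^c (mod P)."""
    c = fiat_shamir_challenge(public_key, commitment)
    return pow(G, response, P) == (commitment * pow(public_key, c, P)) % P


# Usage: the verifier learns that the prover knows the witness, not the witness itself.
pk, commit, resp = prove(secret=7)
ok = verify(pk, commit, resp)
```

Production proof systems apply the same transformation to much richer statements, with polynomial commitments taking the place of the single group element used here.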

| Proof Type | Verification Complexity | Settlement Delay | Security Model |
|---|---|---|---|
| ZK-SNARK | Constant | On proof verification | Cryptographic |
| ZK-STARK | Polylogarithmic | On proof verification | Cryptographic |
| Optimistic | Linear (re-execution, only if challenged) | ~7 days (challenge window) | Game-theoretic |

For validity proofs, verification costs remain constant or polylogarithmic regardless of the complexity of the off-chain calculation.
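Logarithmic verification cost is easiest to see in a Merkle tree, a commitment structure that hash-based proof systems such as STARKs use internally. The sketch below is not a validity proof of a computation; it only illustrates how a verifier holding a 32-byte root can check that one trade belongs to a batch using a number of hashes that grows with the logarithm of the batch size. The function names and the padding rule (duplicating the last node on odd levels) are assumptions made for this example.

```python
import hashlib
from typing import List, Tuple


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def build_levels(leaves: List[bytes]) -> List[List[bytes]]:
    """Return every level of the tree, from hashed leaves up to the root."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [h(b"\x00" + leaf) for leaf in leaves]   # 0x00 prefix marks leaves
    levels = []
    while True:
        if len(level) > 1 and len(level) % 2 == 1:
            level = level + [level[-1]]              # pad odd levels
        levels.append(level)
        if len(level) == 1:
            return levels
        level = [h(b"\x01" + level[i] + level[i + 1])  # 0x01 prefix marks inner nodes
                 for i in range(0, len(level), 2)]


def prove(levels: List[List[bytes]], index: int) -> List[Tuple[bytes, bool]]:
    """Collect one sibling hash per level: (sibling, node_is_left_child)."""
    path = []
    for level in levels[:-1]:
        path.append((level[index ^ 1], index % 2 == 0))
        index //= 2
    return path


def verify(root: bytes, leaf: bytes, path: List[Tuple[bytes, bool]]) -> bool:
    """Recompute the root from the leaf using only the sibling path."""
    node = h(b"\x00" + leaf)
    for sibling, node_is_left in path:
        node = h(b"\x01" + node + sibling) if node_is_left else h(b"\x01" + sibling + node)
    return node == root


# Usage: 1,024 trades are committed by one root; checking one needs 10 hashes.
trades = [f"trade-{i}".encode() for i in range(1024)]
levels = build_levels(trades)
root = levels[-1][0]
path = prove(levels, 777)
included = verify(root, trades[777], path)
```

Doubling the batch adds a single hash to the path, which is the scaling behaviour that lets a small on-chain check stand in for a large off-chain data set.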

Adversarial Integrity

Optimistic verification operates on the assumption that at least one honest actor will detect and report a fraudulent state transition. This system requires the sequencer to post a bond that is slashed if a challenge is successful. The theoretical strength of this model depends on the availability of transaction data, ensuring that any observer can reconstruct the state and verify the accuracy of the proposed updates.

This creates a balance between execution speed and the eventual certainty of the ledger.
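The challenge mechanism described above can be sketched as a small state machine. This is a simplified model under stated assumptions: the ledger is a dictionary of balances, the "state root" is a plain hash of that dictionary, and a challenger wins by re-executing the published transfers, whereas production systems typically narrow a dispute down to a single step. The window length, the bond handling, and all names are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass

CHALLENGE_WINDOW = 7 * 24 * 3600  # seconds; assumed 7-day window


def apply_transfers(balances: dict, transfers: list) -> dict:
    """Deterministic state transition: skip transfers the sender cannot cover."""
    state = dict(balances)
    for sender, receiver, amount in transfers:
        if amount > 0 and state.get(sender, 0) >= amount:
            state[sender] -= amount
            state[receiver] = state.get(receiver, 0) + amount
    return state


def state_root(balances: dict) -> str:
    return hashlib.sha256(json.dumps(balances, sort_keys=True).encode()).hexdigest()


@dataclass
class Claim:
    prev_balances: dict   # published data: anyone can re-execute from it
    transfers: list
    claimed_root: str     # what the sequencer asserts the new state is
    bond: int
    posted_at: int
    status: str = "pending"


def challenge(claim: Claim, now: int) -> int:
    """Return the slashed bond if the claim is fraudulent and still challengeable."""
    if claim.status != "pending" or now >= claim.posted_at + CHALLENGE_WINDOW:
        return 0
    honest_root = state_root(apply_transfers(claim.prev_balances, claim.transfers))
    if honest_root != claim.claimed_root:
        claim.status = "slashed"
        return claim.bond
    return 0


def finalize(claim: Claim, now: int) -> bool:
    """An unchallenged claim becomes final once the window has elapsed."""
    if claim.status == "pending" and now >= claim.posted_at + CHALLENGE_WINDOW:
        claim.status = "final"
    return claim.status == "final"
```

Note that a fraudulent claim nobody challenges in time would still finalize in this model, which is exactly why the design depends on at least one honest observer and on the transaction data being available.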

Approach

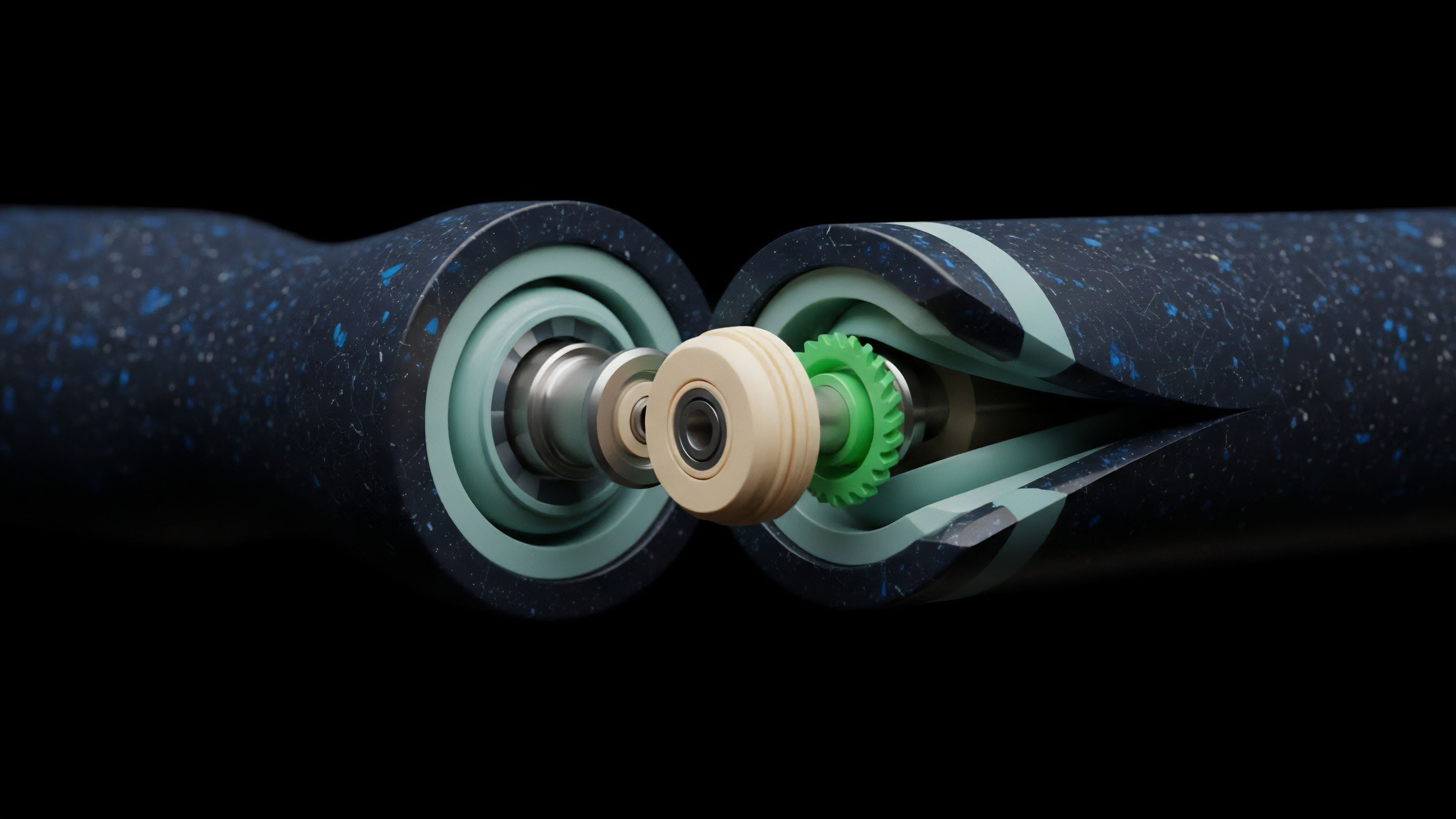

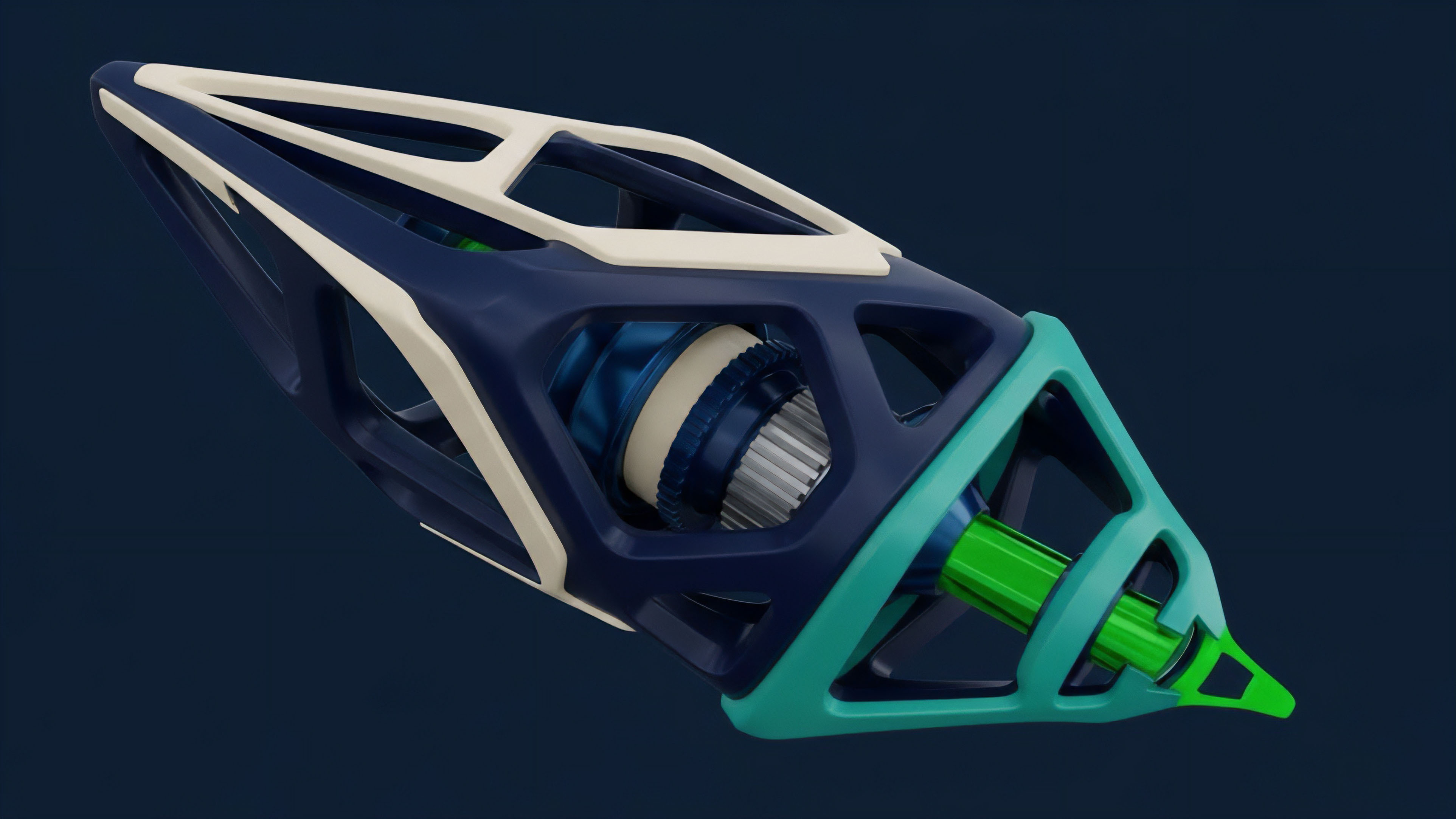

The operational procedure for implementing Off-Chain Computation On-Chain Verification involves several distinct layers of infrastructure. Modern protocols utilize specialized virtual machines designed for proof generation, such as those based on RISC-V or custom zkVM architectures. These environments execute the financial logic, such as delta-hedging algorithms or liquidation auctions, and output both the new state and the associated proof.

- Execution Environment: High-performance servers or decentralized prover networks that run the complex logic of the derivative protocol.

- Prover Nodes: Specialized hardware, often using GPU or FPGA acceleration, that generates the cryptographic proofs of state transitions.

- Verification Contract: An immutable set of rules on the base layer that accepts or rejects the submitted proofs.

- Data Availability: A secondary layer ensuring that the underlying transaction data is accessible for independent verification or challenge.

| Metric | On-Chain Only | Off-Chain, Optimistic Verification | Off-Chain, Validity Proofs |
|---|---|---|---|
| Throughput | 15–30 TPS | 2,000+ TPS | 10,000+ TPS |
| Gas Cost | Extreme | Low | Medium |
| Capital Efficiency | High | Low | High |

Implementing this system within a derivatives context requires a robust risk engine that operates off-chain. This engine monitors margin levels and price feeds, triggering liquidations when necessary. The results of these liquidations are then batched and verified on-chain, ensuring that the protocol remains solvent without clogging the main network with frequent, small-value transactions.
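The risk-engine loop described above can be sketched as follows. This is a minimal illustration, assuming a single mark price, linear positions, and a flat maintenance margin; the threshold, the field names, and the use of a SHA-256 digest as the batch commitment are all assumptions made for this example. A real engine would use fixed-point arithmetic and would accompany the batch with a validity proof or expose it to a challenge window.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import List

MAINTENANCE_MARGIN = 0.05  # hypothetical threshold: 5% of notional


@dataclass(frozen=True)
class Position:
    account: str
    collateral: float   # posted margin, in quote currency
    size: float         # signed quantity: positive is long, negative is short
    entry_price: float


def margin_ratio(p: Position, mark: float) -> float:
    """Equity (collateral plus unrealized PnL) divided by current notional."""
    equity = p.collateral + p.size * (mark - p.entry_price)
    notional = abs(p.size) * mark
    return float("inf") if notional == 0 else equity / notional


def find_liquidations(positions: List[Position], mark: float) -> List[str]:
    """Off-chain scan: accounts whose margin ratio fell below maintenance."""
    return [p.account for p in positions if margin_ratio(p, mark) < MAINTENANCE_MARGIN]


def batch_commitment(accounts: List[str], mark: float) -> str:
    """One digest summarizing the whole batch, suitable for a single on-chain post."""
    payload = json.dumps({"mark": mark, "liquidated": sorted(accounts)}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()


# Usage: many positions are scanned off-chain; one commitment goes on-chain.
book = [
    Position("a", collateral=1000.0, size=10.0, entry_price=1000.0),
    Position("b", collateral=5000.0, size=10.0, entry_price=1000.0),
    Position("c", collateral=1000.0, size=-10.0, entry_price=1000.0),
]
to_liquidate = find_liquidations(book, mark=940.0)
commitment = batch_commitment(to_liquidate, mark=940.0)
```

Batching in this way means the base layer sees one small update per cycle regardless of how many accounts the engine inspected.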

Evolution

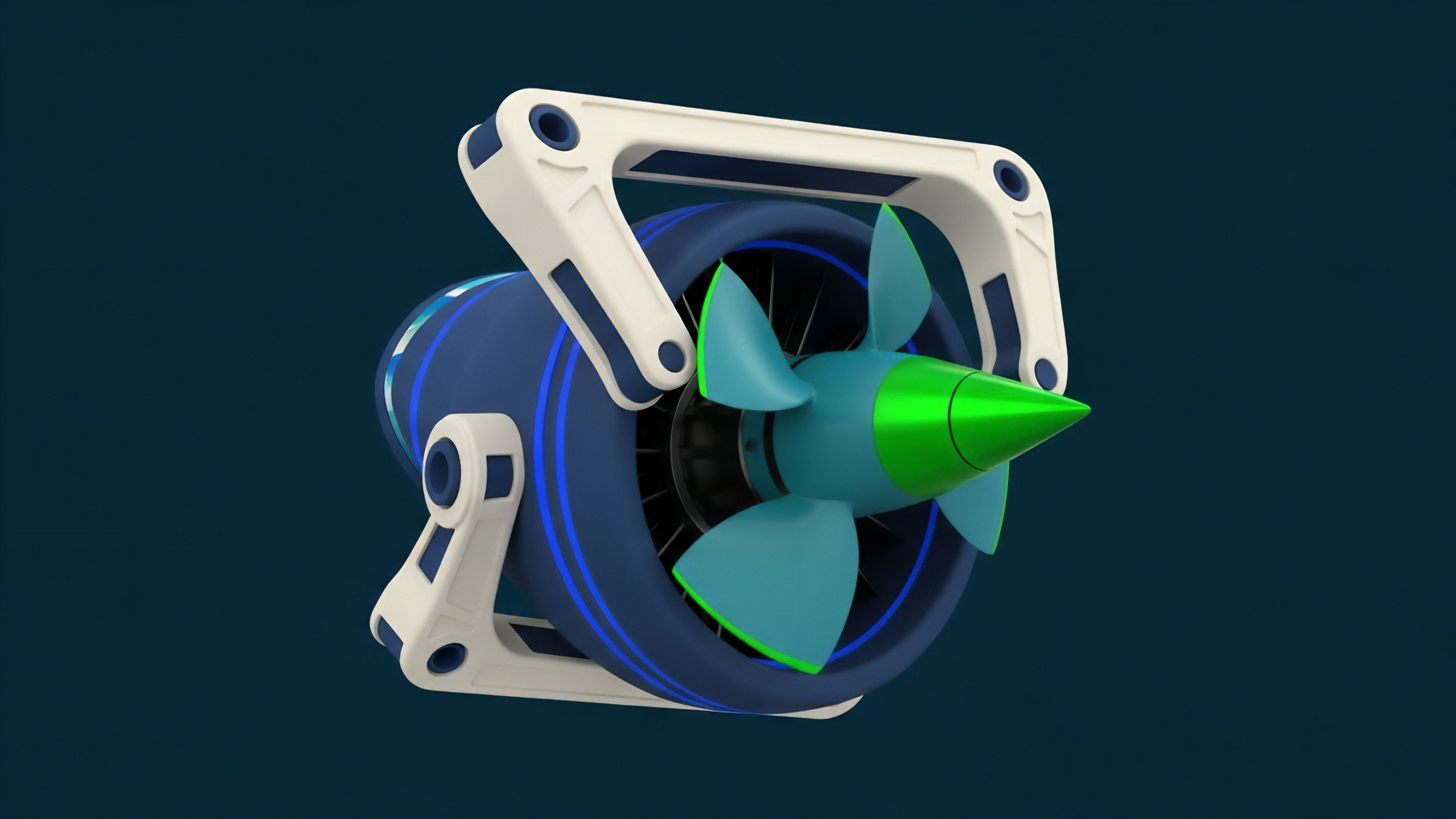

The structural transformation of Off-Chain Computation On-Chain Verification has moved from simple payment channels to general-purpose execution environments.

Initial iterations were limited to specific transaction types, but the rise of ZK-EVMs has allowed for the verification of any Ethereum-compatible smart contract logic. This progression has significantly reduced the friction for developers transitioning from traditional finance to decentralized systems.

Future financial infrastructure relies on the cryptographic certainty of external computation results.

Recent shifts have introduced the concept of coprocessors. These tools allow an on-chain contract to trigger a heavy off-chain calculation and receive the verified result as a callback. This eliminates the need for a full rollup architecture for every application, allowing existing protocols to enhance their functionality with verifiable computation on demand.

This modularity represents a departure from the monolithic scaling strategies of the past, favoring a more flexible and interoperable system.
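The request-and-callback flow of a coprocessor can be sketched in a few lines. This is a toy model under stated assumptions: the "contract" is a Python class, the heavy job is an integer square root, and the check applied in the callback is a cheap arithmetic test that stands in for verifying a succinct proof. The class, method, and function names are hypothetical and do not correspond to any particular coprocessor product.

```python
import math
from typing import Callable, Dict


class ConsumerContract:
    """Models an on-chain contract that outsources work and verifies the answer."""

    def __init__(self, check: Callable[[int, int], bool]):
        self._check = check                    # stand-in for a proof verifier
        self._pending: Dict[int, int] = {}     # job id -> input awaiting a result
        self.results: Dict[int, int] = {}      # job id -> accepted result
        self._next_id = 0

    def request(self, n: int) -> int:
        """Register a job; an off-chain coprocessor would pick this up."""
        job_id = self._next_id
        self._next_id += 1
        self._pending[job_id] = n
        return job_id

    def callback(self, job_id: int, result: int) -> bool:
        """Accept the result only if the job is pending and the check passes."""
        n = self._pending.get(job_id)
        if n is None or not self._check(n, result):
            return False
        del self._pending[job_id]
        self.results[job_id] = result
        return True


def check_isqrt(n: int, r: int) -> bool:
    """Cheap verification: r is the integer square root of n."""
    return r >= 0 and r * r <= n < (r + 1) * (r + 1)


def coprocessor(n: int) -> int:
    """Off-chain worker performing the (notionally heavy) computation."""
    return math.isqrt(n)


# Usage: request on-chain, compute off-chain, deliver through the callback.
contract = ConsumerContract(check_isqrt)
job = contract.request(10**40 + 12345)
delivered = contract.callback(job, coprocessor(10**40 + 12345))
```

The contract never runs the computation itself; it stores only the request and a result that passed verification, which is the property that lets an existing protocol add verifiable computation without migrating to a full rollup.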

Horizon

The outlook for Off-Chain Computation On-Chain Verification involves the integration of privacy-preserving technologies and autonomous agents. As Fully Homomorphic Encryption matures, it may become possible to perform verified computations on encrypted data, allowing for private dark pools and confidential margin accounts on public ledgers. Such a development could attract institutional capital that requires strict privacy for proprietary trading strategies.

- Verifiable AI Agents: Automated trading bots that provide cryptographic proof that they followed a specific strategy or risk mandate.

- Cross-Chain Atomic Settlement: Using proofs to verify state transitions across multiple blockchains simultaneously, enabling unified liquidity.

- Hardware Acceleration Standards: The commoditization of prover hardware, leading to a decentralized market for computational integrity.

The ultimate destination is a financial system where the distinction between off-chain and on-chain becomes invisible to the user. In this future, the chain serves as the ultimate source of truth, while the vast majority of economic activity occurs in high-speed, verifiable environments. This architecture ensures that the transparency and security of decentralization can scale to meet the demands of global capital markets, creating a resilient and permissionless foundation for the next century of finance.

Glossary

Automated Market Makers

Formal Verification

Fiat-Shamir Heuristic

RISC Zero

Modular Arithmetic

Privacy-Preserving Computation

GPU Acceleration

Derivative Clearing

Prover Hardware