Essence

Network Congestion Mitigation functions as the structural response to throughput limitations within decentralized ledgers. When transaction demand exceeds the block space capacity, gas auctions escalate, effectively pricing out smaller participants and rendering time-sensitive financial contracts, such as options, susceptible to execution failure. This phenomenon introduces severe latency into settlement processes, transforming predictable derivative strategies into high-risk gambles on validator prioritization.

Network Congestion Mitigation encompasses the technical mechanisms designed to maintain transaction throughput and predictable settlement during periods of extreme market volatility.

The primary objective is to decouple the execution of financial logic from the constraints of a single, saturated base layer. By shifting state updates to secondary environments or optimizing the efficiency of base layer interactions, protocols aim to keep capital fluid even when the underlying chain faces record-high traffic. The result is a more resilient market environment in which derivative positions retain their intended risk-reward profile regardless of network load.

Origin

The necessity for Network Congestion Mitigation traces back to the fundamental trade-offs inherent in blockchain design.

Early architectures prioritized decentralization and security, often at the expense of scalability. As decentralized finance protocols grew in complexity, the limitations of single-threaded execution became apparent. During peak periods, demand outran block space and pending transactions backed up in the mempool, forcing users into competitive bidding wars for inclusion.

- Transaction Sequencing: The initial reliance on first-come-first-served models proved inadequate under sustained load.

- Gas Market Dynamics: The shift toward dynamic fee markets like EIP-1559 attempted to manage demand but did not solve the underlying capacity constraints.

- Layer Two Emergence: The transition toward rollups and state channels represented a structural pivot away from total reliance on the primary settlement layer.
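The point about dynamic fee markets can be made concrete with the base fee update rule from the EIP-1559 specification. The sketch below follows the integer arithmetic of that rule. The function names and the 30,000,000 gas limit are illustrative assumptions, not values taken from this article.

```python
# Constants from the EIP-1559 specification.
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8
ELASTICITY_MULTIPLIER = 2


def next_base_fee(parent_base_fee: int, parent_gas_used: int, parent_gas_limit: int) -> int:
    """Return the next block's base fee (in wei) from the parent block's usage."""
    gas_target = parent_gas_limit // ELASTICITY_MULTIPLIER
    if parent_gas_used == gas_target:
        return parent_base_fee
    if parent_gas_used > gas_target:
        # Block was fuller than the target: raise the fee by at least 1 wei.
        gas_used_delta = parent_gas_used - gas_target
        fee_delta = max(
            parent_base_fee * gas_used_delta // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR,
            1,
        )
        return parent_base_fee + fee_delta
    # Block was emptier than the target: lower the fee.
    gas_used_delta = gas_target - parent_gas_used
    fee_delta = parent_base_fee * gas_used_delta // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR
    return parent_base_fee - fee_delta


def base_fee_after_full_blocks(start_fee: int, blocks: int, gas_limit: int = 30_000_000) -> int:
    """Base fee after a run of completely full blocks (gas_limit is an assumed value)."""
    fee = start_fee
    for _ in range(blocks):
        fee = next_base_fee(fee, gas_limit, gas_limit)
    return fee
```

Under this rule a completely full block raises the base fee by at most 12.5%. Sustained demand is priced out over successive blocks, but the gas limit, and with it the capacity, stays the same.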

These historical pressures forced architects to reconsider how financial contracts interface with consensus mechanisms. The realization that base layer capacity is a finite, premium resource drove the industry to prioritize off-chain computation and batching. This evolution reflects a broader shift toward modularity, where settlement, execution, and data availability are handled by distinct, specialized protocol layers.

Theory

The quantitative framework for Network Congestion Mitigation centers on the relationship between block space scarcity and option pricing models.

In a congested state, the cost of exercising an option, or of liquidating an undercollateralized position, rises sharply. This creates a liquidity trap in which a delta-neutral hedge cannot be adjusted in time, leading to forced losses and systemic fragility.

| Strategy | Congestion Mechanism | Financial Impact |
| --- | --- | --- |
| State Batching | Reduces base layer footprint | Lowered transaction overhead |
| Optimistic Execution | Defers finality validation | Increased capital velocity |
| ZK Proof Aggregation | Compresses state transitions | High throughput settlement |

The mathematical modeling of these systems requires incorporating a congestion premium into the Greeks. Traditional Black-Scholes formulations assume frictionless settlement; however, in a congested environment, the effective cost of the option includes a stochastic variable representing the probability of transaction failure or extreme delay.
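One simple way to express such a congestion premium is sketched below. It is an illustration under stated assumptions, not a standard pricing model. The frictionless value comes from the usual Black-Scholes call formula. The adjustment assumes that a failed or excessively delayed transaction forfeits the payoff with probability `p_fail` and that the holder pays an expected gas cost in the same units as the option value. The names `congestion_adjusted_value` and `congestion_premium` are hypothetical.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes_call(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Frictionless Black-Scholes price of a European call."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def congestion_adjusted_value(frictionless_value: float, p_fail: float, expected_gas_cost: float) -> float:
    """Assumed adjustment: payoff is lost with probability p_fail, gas is always paid."""
    if not 0.0 <= p_fail <= 1.0:
        raise ValueError("p_fail must be a probability in [0, 1]")
    return (1.0 - p_fail) * frictionless_value - expected_gas_cost


def congestion_premium(frictionless_value: float, p_fail: float, expected_gas_cost: float) -> float:
    """Value lost to congestion relative to the frictionless price."""
    return frictionless_value - congestion_adjusted_value(frictionless_value, p_fail, expected_gas_cost)
```

In this toy model the premium grows with both the failure probability and the gas cost. A fuller treatment would make `p_fail` itself a random variable correlated with volatility, which is the coupling the paragraph above describes.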

Effective derivative management requires adjusting risk parameters to account for the stochastic nature of transaction finality during high-volatility events.

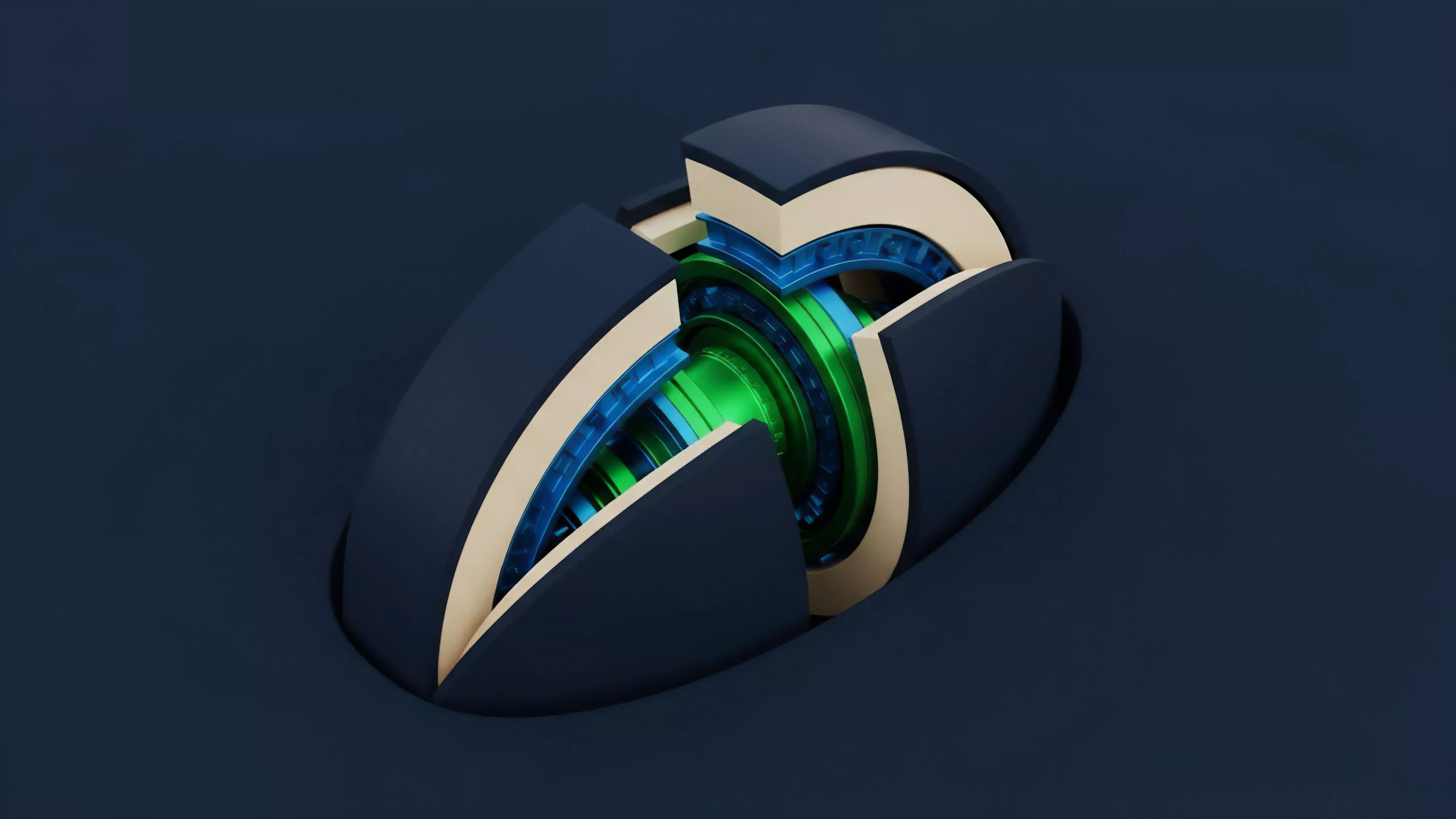

The throughput constraints of a consensus engine resemble the fluid dynamics of a high-pressure pipe system, where turbulent flow produces pressure spikes and, eventually, structural failure. The analogy applies to the mempool, where pending transactions accumulate like pressure building against the throughput limit of the validator set. In practice, these systems rely on mempool management and priority fee structures to ensure that high-value liquidations proceed even during periods of network stress.
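The role of priority fees can be illustrated with a greedy block builder that orders pending transactions by tip and fills the block until the gas limit is reached. This is a simplified sketch: real builders also consider base fees, nonces, and bundle ordering. The `PendingTx` type and `build_block` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    label: str
    gas: int           # gas the transaction consumes
    priority_fee: int  # tip per unit of gas, in gwei


def build_block(pending: list[PendingTx], gas_limit: int) -> list[PendingTx]:
    """Greedily include the highest-tipping transactions that still fit."""
    included = []
    gas_left = gas_limit
    for tx in sorted(pending, key=lambda t: t.priority_fee, reverse=True):
        if tx.gas <= gas_left:
            included.append(tx)
            gas_left -= tx.gas
    return included


pending = [
    PendingTx("swap", gas=150_000, priority_fee=2),
    PendingTx("liquidation", gas=400_000, priority_fee=50),
    PendingTx("transfer", gas=21_000, priority_fee=1),
    PendingTx("mint", gas=300_000, priority_fee=5),
]
block = build_block(pending, gas_limit=600_000)
```

With these illustrative numbers the liquidation is included first because it carries the highest tip, while the mint is left pending because it no longer fits in the remaining gas.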

Approach

Current implementations of Network Congestion Mitigation focus on architectural isolation and execution efficiency.

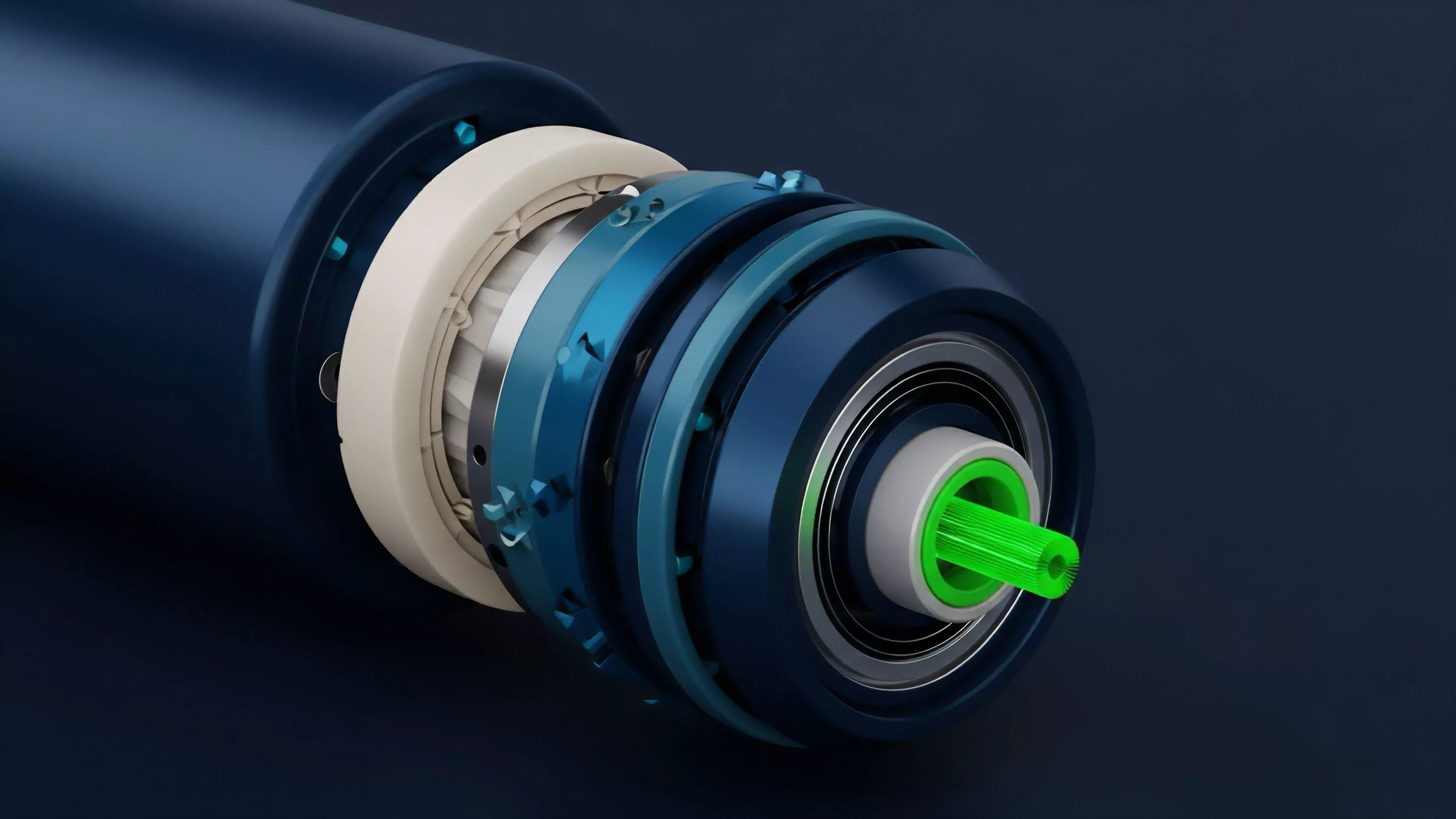

By moving derivative order books and matching engines to specialized environments, protocols isolate the financial logic from the noise of general-purpose chain traffic. This separation allows for deterministic latency, a prerequisite for institutional-grade derivative trading.

- Rollup Integration: Utilizing Layer Two solutions to batch thousands of trades into a single proof submitted to the primary chain.

- Off-chain Order Books: Decoupling the matching process from on-chain execution to eliminate the impact of mempool latency on trade entry.

- Adaptive Fee Modeling: Implementing automated fee adjustment mechanisms that protect user positions from sudden gas spikes during liquidation events.
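The economics of rollup batching in the list above can be sketched as a simple amortization: a fixed base-layer cost per batch is shared by every trade in it. The function names and all gas figures below are illustrative assumptions, not measurements from any particular rollup.

```python
def cost_per_trade(fixed_batch_gas: int, per_trade_gas: int, trades: int) -> float:
    """Base-layer gas attributed to one trade when a batch shares a fixed cost."""
    if trades <= 0:
        raise ValueError("a batch must contain at least one trade")
    return fixed_batch_gas / trades + per_trade_gas


def min_batch_size(standalone_gas: int, fixed_batch_gas: int, per_trade_gas: int) -> int:
    """Smallest batch for which batching is no more expensive than a standalone trade."""
    saving_per_trade = standalone_gas - per_trade_gas
    if saving_per_trade <= 0:
        raise ValueError("batching never pays off with these parameters")
    # Ceiling division on integers.
    return -(-fixed_batch_gas // saving_per_trade)


# Assumed figures: 500,000 gas per batch for proof verification and overhead,
# 300 gas of compressed data per trade, 150,000 gas for a standalone trade.
amortized = cost_per_trade(500_000, 300, 1_000)
break_even = min_batch_size(150_000, 500_000, 300)
```

Under these assumed figures a 1,000-trade batch costs 800 gas per trade on the base layer, and batching already pays off at four trades.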

These approaches fundamentally alter the market microstructure. Instead of every participant competing in a single global mempool, liquidity is partitioned across high-performance environments. This structure supports more complex derivative instruments, such as exotic options or multi-leg strategies, which would be economically unviable if each component required a separate, high-cost base layer transaction.

Evolution

The trajectory of Network Congestion Mitigation has moved from simple gas optimization to sophisticated, multi-layered infrastructure.

Early attempts involved basic transaction batching, which provided marginal improvements. Today, the focus lies in vertical integration, where the protocol layer itself is designed to handle high-frequency interactions through specialized sequencers and state-compression techniques.

Structural evolution in decentralized finance moves toward specialized execution environments that isolate financial settlement from general network congestion.

The shift toward modular architectures allows protocols to leverage specific consensus properties for different parts of the trade lifecycle. Settlement remains on highly secure, decentralized layers, while high-frequency order matching occurs on specialized, high-throughput environments. This evolution addresses the trade-off between speed and security, providing a robust framework for managing systemic risk in volatile markets.

Horizon

Future developments in Network Congestion Mitigation will likely center on autonomous, agent-driven transaction management.

These systems would predict congestion levels from real-time market data and dynamically route transactions across multiple chains to minimize settlement time. The integration of zero-knowledge proofs could further improve privacy and efficiency, allowing complex derivative settlements that are verifiable and inexpensive to check on-chain.
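As a rough illustration of predictive routing, the sketch below forecasts each chain's confirmation delay with an exponentially weighted moving average and routes to the chain with the lowest forecast. The forecasting method, the chain names, and the delay samples are all assumptions made for the example. A production system would draw on much richer signals.

```python
def ewma(samples: list[float], alpha: float = 0.3) -> float:
    """Exponentially weighted moving average; later samples weigh more."""
    if not samples:
        raise ValueError("need at least one sample")
    estimate = samples[0]
    for sample in samples[1:]:
        estimate = alpha * sample + (1 - alpha) * estimate
    return estimate


def pick_route(delay_history: dict[str, list[float]], alpha: float = 0.3) -> str:
    """Return the chain whose forecast confirmation delay is lowest."""
    return min(delay_history, key=lambda name: ewma(delay_history[name], alpha))


# Hypothetical confirmation delays in seconds, oldest first.
history = {
    "chain_a": [2.0, 2.0, 30.0, 40.0],  # recently congested
    "chain_b": [5.0, 5.0, 5.0, 5.0],    # slower but stable
}
route = pick_route(history)
```

Here `chain_a` was faster historically, but its recent spike pushes the forecast above that of `chain_b`, so the router selects `chain_b`.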

| Development | Systemic Implication |
| --- | --- |
| Predictive Routing | Dynamic load balancing across chains |
| Autonomous Liquidation | Reduced dependency on manual monitoring |
| Interoperable Settlement | Unified liquidity across fragmented layers |

The ultimate goal involves creating a seamless, invisible settlement layer that supports global-scale derivative trading. As these technologies mature, the barrier between centralized and decentralized exchange performance will continue to diminish, fostering a more efficient and resilient financial infrastructure.