Essence

Network Activity Metrics represent the granular observation of blockchain state transitions, serving as the raw input for evaluating the utility and velocity of decentralized capital. These indicators transcend price action, providing a window into the actual throughput, user engagement, and economic bandwidth of a protocol. By quantifying the frequency and volume of state changes, participants gain insight into the fundamental demand for block space, which directly influences the cost of execution and the sustainability of fee-based incentive models.

Network Activity Metrics quantify the raw throughput and economic velocity of blockchain protocols to determine fundamental demand for decentralized infrastructure.

The systemic relevance of these metrics lies in their capacity to distinguish between speculative interest and genuine protocol adoption. When analyzing derivatives or liquidity provisioning, one must weigh transaction density and active address counts against market capitalization to identify potential decoupling between valuation and utility. This evaluation informs the risk assessment of margin engines, as sustained high activity often correlates with lower volatility in fee-based revenue streams, while periods of stagnation may signal fragility within the underlying consensus mechanism.
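A minimal sketch of the comparison described above, weighing activity against market capitalization. The `DailySnapshot` fields and the figures are illustrative assumptions, not real market data; the point is only that a rising ratio alongside falling activity is one sign of valuation decoupling from utility.

```python
from dataclasses import dataclass


@dataclass
class DailySnapshot:
    market_cap_usd: float       # circulating supply times price
    onchain_volume_usd: float   # value settled on-chain that day
    active_addresses: int       # distinct addresses that sent or received


def valuation_to_volume(s: DailySnapshot) -> float:
    """Market cap per dollar of daily on-chain volume (higher = richer valuation)."""
    return s.market_cap_usd / s.onchain_volume_usd


def cap_per_active_address(s: DailySnapshot) -> float:
    """Market cap attributed to each active address."""
    return s.market_cap_usd / s.active_addresses


# Hypothetical snapshots: valuation doubles while activity shrinks.
before = DailySnapshot(1_000_000_000, 50_000_000, 20_000)
after = DailySnapshot(2_000_000_000, 40_000_000, 16_000)

for label, snap in (("before", before), ("after", after)):
    print(label, valuation_to_volume(snap), cap_per_active_address(snap))
```

Both ratios rise between the two snapshots, which is the pattern an analyst would flag for closer inspection rather than treat as a signal on its own.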

Origin

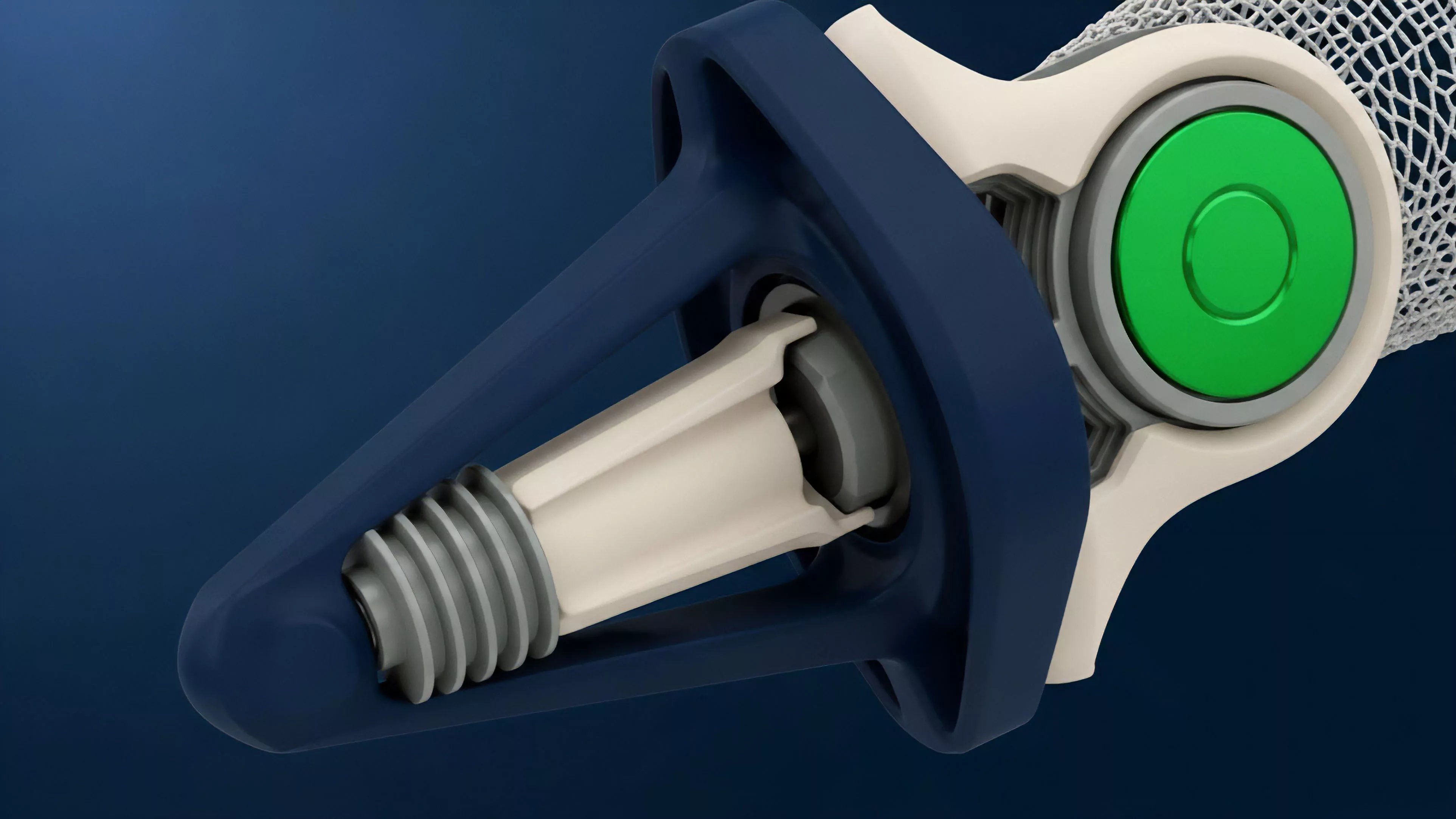

The inception of Network Activity Metrics traces back to the transparent, ledger-based architecture of Bitcoin, where the necessity to monitor hash rate and transaction throughput emerged as a requirement for verifying network health.

Early practitioners utilized basic explorers to track block propagation and mempool congestion, establishing the precedent that on-chain data constitutes the primary source of truth for digital asset assessment. This shift moved financial analysis from traditional, delayed reporting cycles toward real-time observation of protocol-level events.

The development of smart contract platforms necessitated a more sophisticated taxonomy of engagement. With the arrival of programmable money, the focus expanded to include contract invocation frequency, gas consumption patterns, and token velocity. These metrics were refined as developers sought to optimize protocol performance and minimize the latency between user intent and settlement. The evolution from simple value transfer monitoring to complex state interaction tracking marks the maturation of crypto finance as a distinct, data-intensive discipline.

Theory

The architecture of Network Activity Metrics relies on the extraction of data from block headers and transaction receipts.

Analysts focus on gas utilization, unique interaction counts, and protocol-specific state changes to build a probabilistic model of network load. This quantitative framework assumes that higher levels of state interaction reflect greater economic utility, provided that the activity is not artificial or Sybil-driven.
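As a rough illustration, two of the inputs named above can be computed from block and receipt data. The `Block` and `Tx` shapes below are hypothetical simplifications of what an indexer might return, and counting distinct senders is only a crude proxy for users, since it does nothing to detect Sybil activity.

```python
from typing import Iterable, List, TypedDict


class Tx(TypedDict):
    sender: str
    to: str
    gas_used: int      # taken from the transaction receipt


class Block(TypedDict):
    gas_limit: int     # taken from the block header
    transactions: List[Tx]


def gas_utilization(blocks: Iterable[Block]) -> float:
    """Share of available gas actually consumed across the sample (0.0 to 1.0)."""
    blocks = list(blocks)
    used = sum(tx["gas_used"] for b in blocks for tx in b["transactions"])
    limit = sum(b["gas_limit"] for b in blocks)
    return used / limit if limit else 0.0


def unique_senders(blocks: Iterable[Block]) -> int:
    """Number of distinct sending addresses in the sample."""
    return len({tx["sender"] for b in blocks for tx in b["transactions"]})


# Hypothetical two-block sample.
sample: List[Block] = [
    {"gas_limit": 30_000_000, "transactions": [
        {"sender": "0xa", "to": "0xc1", "gas_used": 10_000_000},
        {"sender": "0xb", "to": "0xc1", "gas_used": 5_000_000},
    ]},
    {"gas_limit": 30_000_000, "transactions": [
        {"sender": "0xa", "to": "0xc2", "gas_used": 30_000_000},
    ]},
]

print(gas_utilization(sample), unique_senders(sample))
```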

The structural integrity of decentralized protocols depends on the predictable relationship between state interaction frequency and the underlying cost of consensus.

Protocol Physics

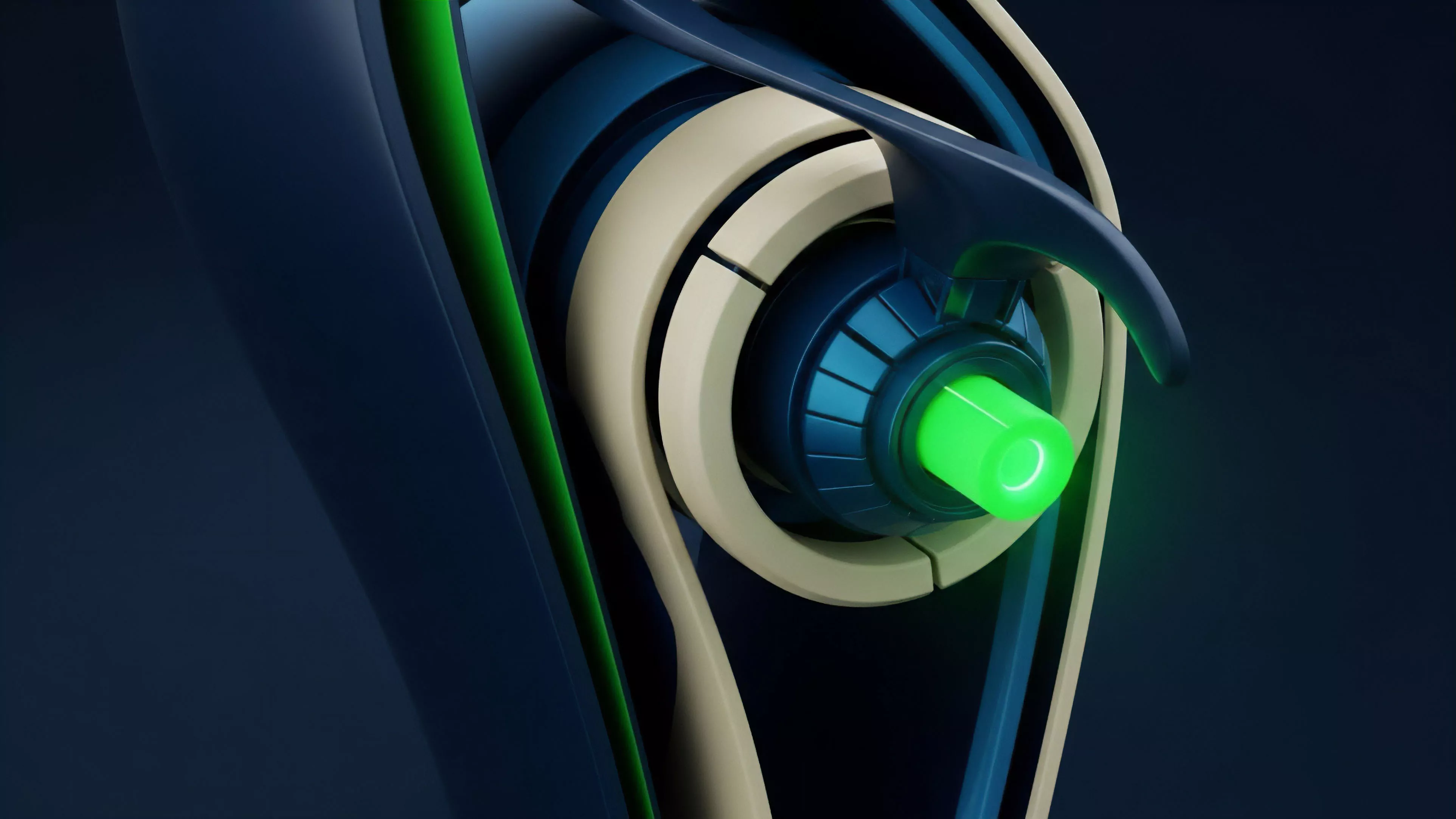

The physics of the protocol dictates the constraints under which these metrics function. Block time and per-block capacity (block size or gas limit), together with consensus latency, set the theoretical ceiling for activity, while fee markets act as the regulatory mechanism for congestion. When the network approaches these physical limits, the cost of derivative hedging rises, as the probability of failed transactions or delayed settlement increases.

This interaction between protocol constraints and market behavior creates a feedback loop where volatility is often a byproduct of congestion-induced slippage.
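One concrete instance of these constraints is an Ethereum-style chain, used here purely as an example. The sketch below computes a throughput ceiling from block capacity and block time, and applies the EIP-1559 base fee update rule as an example of a fee market reacting to congestion. The 30M gas limit and 12-second block time are illustrative parameters; other protocols use different mechanisms.

```python
SIMPLE_TRANSFER_GAS = 21_000            # gas cost of a plain value transfer on Ethereum
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8     # EIP-1559 constant: at most 12.5% change per block
ELASTICITY_MULTIPLIER = 2               # EIP-1559 constant: target is half the gas limit


def max_transfers_per_second(gas_limit: int, block_time_s: float) -> float:
    """Upper bound on simple transfers per second if every block were full."""
    return (gas_limit // SIMPLE_TRANSFER_GAS) / block_time_s


def next_base_fee(base_fee: int, gas_used: int, gas_limit: int) -> int:
    """Base fee of the next block under the EIP-1559 update rule (integer math)."""
    target = gas_limit // ELASTICITY_MULTIPLIER
    if gas_used == target:
        return base_fee
    if gas_used > target:
        delta = base_fee * (gas_used - target) // target // BASE_FEE_MAX_CHANGE_DENOMINATOR
        return base_fee + max(delta, 1)
    delta = base_fee * (target - gas_used) // target // BASE_FEE_MAX_CHANGE_DENOMINATOR
    return base_fee - delta


print(max_transfers_per_second(30_000_000, 12))
print(next_base_fee(100, 30_000_000, 30_000_000))   # full block: fee rises
print(next_base_fee(100, 0, 30_000_000))            # empty block: fee falls
```

Because the fee can compound upward by up to 12.5% per block under sustained full blocks, execution cost during congestion grows geometrically, which is why hedging and liquidation costs climb as the network nears its ceiling.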

Quantitative Modeling

Modeling these variables requires rigorous application of statistical methods to account for non-normal distribution of transaction volume. Analysts often employ:

- Time-series analysis to identify structural shifts in network throughput over different epochs.

- Correlation matrices linking specific token movements to protocol-level activity spikes.

- Sensitivity analysis of margin requirements against real-time changes in gas price volatility.
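The first two techniques in the list can be sketched with the standard library alone. Because transaction volume is heavy-tailed, this example flags shifts with a median/MAD-based modified z-score rather than a mean and standard deviation; the window length, the 3.5 threshold, and the sample series are assumptions chosen for illustration.

```python
import math
from statistics import median


def flag_shifts(series, window=7, threshold=3.5):
    """Indices whose value deviates from the trailing window's median by more
    than `threshold` modified z-scores (median/MAD based, robust to outliers)."""
    flagged = []
    for i in range(window, len(series)):
        past = series[i - window:i]
        med = median(past)
        mad = median(abs(x - med) for x in past)
        if mad == 0:
            continue  # flat window: no scale estimate available
        z = 0.6745 * (series[i] - med) / mad
        if abs(z) > threshold:
            flagged.append(i)
    return flagged


def pearson(xs, ys):
    """Pearson correlation between two equal-length series."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Hypothetical daily transaction counts with one spike at index 8.
daily_tx = [100, 102, 98, 101, 99, 103, 97, 100, 250, 101]
print(flag_shifts(daily_tx))

# Hypothetical token transfer volume against protocol activity.
print(pearson([1, 2, 3, 4], [2, 4, 6, 8]))
```

A flagged index is only a prompt for investigation: a spike may reflect genuine demand, spam, or automated testing, and the detector cannot tell these apart.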

Our reliance on these specific indicators may mimic the early obsession with telegraph line traffic in nineteenth-century financial markets, where the physical speed of information defined the limit of economic reach. Whatever the merit of that parallel, the accuracy of these models depends on the ability to filter out non-economic noise, such as spam or automated testing, which can artificially inflate activity readings.

Approach

Current methodologies prioritize the extraction of on-chain data through high-performance indexers and RPC nodes to maintain a live feed of market microstructure. The objective is to identify shifts in order flow before they manifest in price movements.

Professionals now utilize sophisticated dashboards that track liquidity concentration and collateralization ratios across decentralized exchanges, treating these as leading indicators for broader market sentiment.

| Metric | Financial Significance |
| --- | --- |
| Gas Consumption | Indicates aggregate demand for computation |
| Unique Active Addresses | Measures user base breadth and adoption |
| Transaction Latency | Reflects network congestion and execution risk |

Strategic positioning in derivatives requires a deep understanding of how these metrics impact liquidation thresholds. During periods of high network load, the inability to execute a trade can lead to systemic failures in collateralized debt positions. Consequently, the architect must build defensive strategies that account for both market volatility and the underlying network’s capacity to process emergency liquidations during high-stress events.
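One way to reason about this defensive buffer is to ask how far collateral could fall while a liquidation transaction waits for inclusion. The sketch below is an assumption-laden illustration, not a production risk model: it uses square-root-of-time volatility scaling (which understates tail risk when returns are non-normal), and the function names, the `k` multiplier, and the inputs are all hypothetical.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def adverse_move(annual_vol: float, delay_seconds: float, k: float = 3.0) -> float:
    """k-sigma fractional price drop over the delay, using sqrt-of-time scaling."""
    return k * annual_vol * math.sqrt(delay_seconds / SECONDS_PER_YEAR)


def delay_adjusted_trigger(liquidation_ratio: float, annual_vol: float,
                           delay_seconds: float, k: float = 3.0) -> float:
    """Collateral ratio at which to act so that, after the assumed adverse move
    during the settlement delay, the position still sits at `liquidation_ratio`."""
    move = adverse_move(annual_vol, delay_seconds, k)
    if move >= 1.0:
        raise ValueError("assumed move wipes out the collateral; inputs out of range")
    return liquidation_ratio / (1.0 - move)


# Hypothetical position: 150% liquidation ratio, 80% annualized volatility.
for delay in (12, 600):  # one block versus ten minutes of congestion
    print(delay, round(delay_adjusted_trigger(1.5, 0.8, delay), 4))
```

The trigger level rises with the expected delay, so a congested network effectively demands more collateral for the same market risk.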

Evolution

The transition from primitive ledger monitoring to advanced predictive analytics reflects the increasing complexity of decentralized financial instruments.

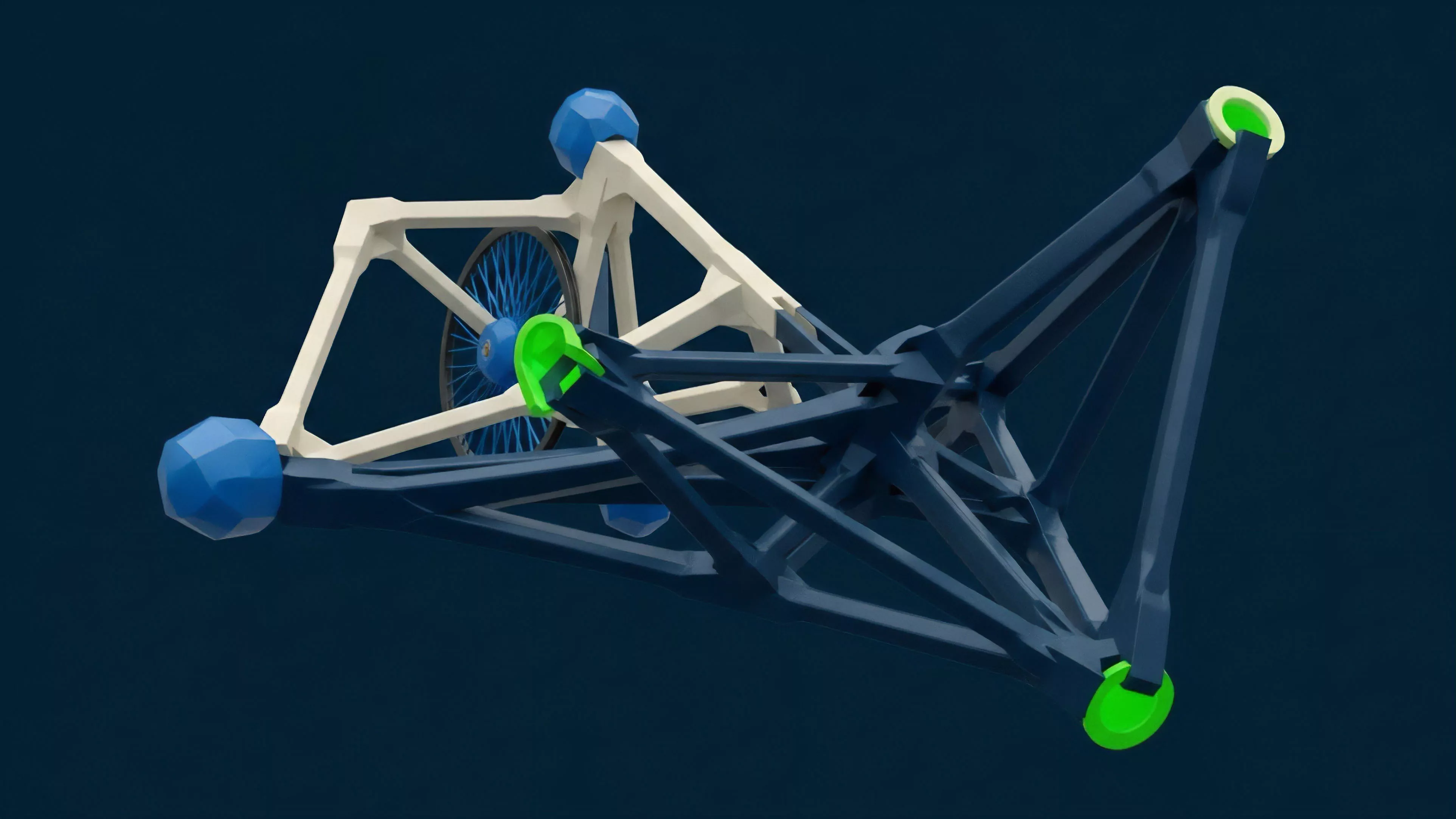

Initially, simple counters for total transactions sufficed; however, the rise of modular architectures and layer-two scaling solutions fragmented data collection across execution environments. We now track activity across these heterogeneous environments and aggregate metrics from multiple chains to form a coherent view of global liquidity.
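A minimal sketch of that aggregation step, assuming each chain's indexer already emits one row per day with fees converted to a common unit. The `ChainDay` shape and the chain labels are hypothetical, and raw transaction counts are summed as-is even though a transaction on a rollup and one on a base layer are not strictly comparable.

```python
from collections import defaultdict
from typing import NamedTuple


class ChainDay(NamedTuple):
    chain: str        # hypothetical chain label, e.g. "l1" or "rollup-a"
    day: str          # ISO date
    tx_count: int
    fees_usd: float   # fees already converted to a common unit


def aggregate_by_day(rows):
    """Sum transaction counts and fees across chains for each day."""
    totals = defaultdict(lambda: {"tx_count": 0, "fees_usd": 0.0})
    for r in rows:
        totals[r.day]["tx_count"] += r.tx_count
        totals[r.day]["fees_usd"] += r.fees_usd
    return dict(totals)


def chain_share(rows, day):
    """Each chain's share of that day's transactions (one row per chain per day assumed)."""
    day_rows = [r for r in rows if r.day == day]
    total = sum(r.tx_count for r in day_rows)
    return {r.chain: r.tx_count / total for r in day_rows} if total else {}


rows = [
    ChainDay("l1", "2024-01-01", 1000, 5000.0),
    ChainDay("rollup-a", "2024-01-01", 3000, 300.0),
    ChainDay("l1", "2024-01-02", 1200, 6000.0),
]

print(aggregate_by_day(rows))
print(chain_share(rows, "2024-01-01"))
```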

The shift toward modular network architectures requires a fundamental redesign of how we aggregate and interpret activity across fragmented liquidity pools.

This change has moved the focus toward cross-chain interoperability and the associated risks of bridging. The systemic risk profile has changed; failures in a single bridge or cross-chain messaging protocol can now propagate across previously isolated ecosystems. Current strategies must therefore incorporate contagion risk modeling, acknowledging that the activity metrics of one protocol are inextricably linked to the health of the entire interconnected infrastructure.

Horizon

The future of Network Activity Metrics involves the integration of machine learning models to identify non-linear patterns in transaction data that traditional statistical methods miss.

As protocols adopt more complex consensus mechanisms and zero-knowledge proof validation, the metrics themselves will evolve to track the cost of verification rather than just the cost of execution. This will likely lead to the emergence of new derivatives tied directly to network performance, such as block-space futures or latency-based insurance products.

| Future Trend | Strategic Implication |
| --- | --- |
| Zero-Knowledge Scaling | Reduced on-chain footprint for high-frequency trading |
| Automated Market Makers | Increased sensitivity to protocol-level slippage |
| Modular Consensus Layers | Shift toward multi-chain activity aggregation |

The ultimate goal remains the creation of a transparent, data-driven financial system where risk is priced with mathematical precision. We are moving toward an environment where the internal state of the network is perfectly observable, allowing for the development of dynamic hedging strategies that adjust in real time to the physical constraints of the blockchain. Success in this domain will belong to those who can translate these raw data streams into robust, automated systems capable of navigating the adversarial nature of decentralized markets.