Essence

Consensus Algorithm Optimization represents the technical refinement of distributed validation mechanisms to enhance transactional throughput, minimize latency, and improve economic security. At the system level, this process modifies how network participants reach agreement on the state of a ledger, directly impacting the velocity of capital and the reliability of derivative settlement. By adjusting parameters such as block production intervals, validator selection heuristics, and signature aggregation techniques, developers shift the balance between decentralization, scalability, and safety.

Consensus algorithm optimization adjusts the mathematical and game-theoretic parameters of distributed validation to maximize network performance and financial reliability.

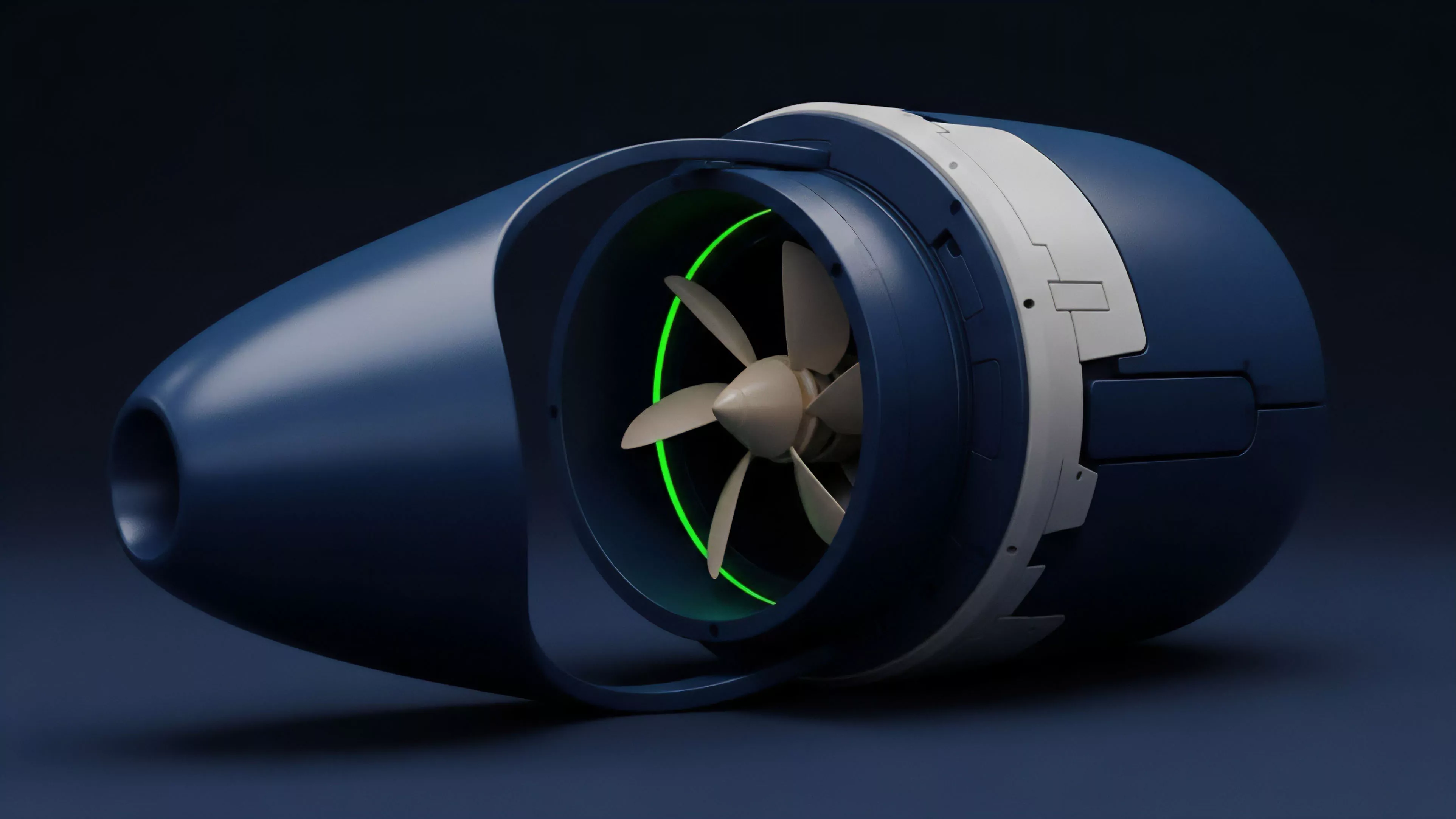

These optimizations function as the mechanical heart of decentralized finance. When validation speed increases, the duration of exposure to price volatility during the settlement phase decreases. This reduction in settlement risk permits more efficient margin management and enables higher leverage ratios without compromising the systemic integrity of the protocol.

The focus remains on achieving maximum computational efficiency while maintaining the adversarial resistance required to protect participant assets.

Origin

The necessity for Consensus Algorithm Optimization emerged from the inherent limitations of first-generation distributed systems, specifically the constraints of proof-of-work mechanisms. Early protocols struggled with high energy consumption and limited transaction capacity, which created bottlenecks for decentralized exchanges and complex financial instruments. Developers recognized that the rigid, sequential nature of these initial designs prevented the scaling that global financial markets require.

- Byzantine Fault Tolerance models provided the initial mathematical framework for ensuring agreement among untrusted nodes.

- Directed Acyclic Graph architectures introduced parallel processing to overcome the sequential bottlenecks of traditional linear blockchains.

- Proof of Stake transitions shifted the cost of consensus from electricity expenditure to economic capital at risk, allowing for faster block finality.

This evolution was driven by the desire to replicate the high-frequency trading capabilities of centralized venues within a permissionless environment. Architects moved away from purely probabilistic finality toward deterministic models, so that once a block is finalized, the transactions it contains cannot be reversed. This shift established the foundation for reliable, high-speed derivatives trading, where the predictability of state updates is as valuable as the underlying asset price itself.
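
To make the contrast with probabilistic finality concrete, the sketch below uses the standard gambler's-ruin result for longest-chain protocols: an attacker controlling a share q of block production, starting z blocks behind, eventually catches up with probability (q/p)^z, where p = 1 - q. The function name and the example shares are illustrative assumptions, and the model ignores network delay.

```python
def catch_up_probability(attacker_share: float, confirmations: int) -> float:
    """Probability that an attacker ever overtakes the honest chain.

    Simplified gambler's-ruin model for a longest-chain protocol;
    `attacker_share` is the attacker's fraction of block production.
    """
    if not 0.0 <= attacker_share <= 1.0:
        raise ValueError("attacker_share must be between 0 and 1")
    if confirmations < 0:
        raise ValueError("confirmations must be non-negative")
    if attacker_share >= 0.5:
        # With half or more of block production, the attacker always catches up.
        return 1.0
    honest_share = 1.0 - attacker_share
    return (attacker_share / honest_share) ** confirmations


if __name__ == "__main__":
    for z in (1, 3, 6, 12):
        print(z, catch_up_probability(0.10, z))
```

Under this model the reversal risk shrinks geometrically with each confirmation but never reaches zero. A deterministic-finality protocol instead finalizes a block outright once a quorum of validators has signed it.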

Theory

The mathematical structure of Consensus Algorithm Optimization relies on balancing the trilemma of security, scalability, and decentralization.

Quantitatively, this involves analyzing the latency of message propagation, the complexity of cryptographic verification, and the economic cost of malicious behavior. Systems architects model these factors using game theory to ensure that the dominant strategy for every participant remains honest cooperation.

| Mechanism | Optimization Target | Systemic Impact |
| --- | --- | --- |
| Signature Aggregation | Computational Overhead | Faster block verification |
| Validator Sharding | Network Throughput | Parallelized transaction settlement |
| Dynamic Gas Pricing | Congestion Control | Predictable trade execution costs |
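
As one illustration of the dynamic gas pricing row, the sketch below follows the base-fee update rule popularized by Ethereum's EIP-1559: the fee rises when a block uses more gas than the target and falls when it uses less, by at most one eighth per block. The function name and the sample numbers are assumptions chosen for illustration; other protocols use different congestion-control rules.

```python
def next_base_fee(base_fee: int, gas_used: int, gas_target: int,
                  max_change_denominator: int = 8) -> int:
    """Return the next block's base fee under an EIP-1559-style rule.

    All values are integers (for example, fee in wei and gas in gas units).
    """
    if gas_target <= 0:
        raise ValueError("gas_target must be positive")
    if gas_used == gas_target:
        return base_fee
    gas_delta = abs(gas_used - gas_target)
    fee_delta = base_fee * gas_delta // gas_target // max_change_denominator
    if gas_used > gas_target:
        # Congested block: raise the fee by at least one unit.
        return base_fee + max(fee_delta, 1)
    # Under-used block: lower the fee.
    return base_fee - fee_delta


if __name__ == "__main__":
    fee = 1_000
    for used in (30_000_000, 30_000_000, 5_000_000):
        fee = next_base_fee(fee, used, gas_target=15_000_000)
        print(used, fee)
```

Because the per-block change is bounded, a trader can estimate the worst-case fee a few blocks ahead, which is what makes execution costs more predictable.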

The pricing of options and other derivatives depends on the precision of the underlying network state. When the consensus process is optimized, the gap between the requested trade time and the recorded block time shrinks. This precision reduces slippage and improves the accuracy of the Greeks, the sensitivity measures traders use to manage risk.

If the consensus mechanism exhibits high jitter or latency, the resulting uncertainty forces market makers to widen their spreads, increasing the cost of capital for all participants.
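
A rough way to quantify this effect, assuming the price follows a driftless random walk, is to scale annualized volatility by the square root of the settlement window. The sketch below is a toy model, not a market-making formula: the function names, the multiplier `k`, and the choice to treat jitter as extra worst-case latency are all illustrative assumptions.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def price_uncertainty(annual_vol: float, settlement_seconds: float) -> float:
    """Standard deviation of the return over the settlement window."""
    return annual_vol * math.sqrt(settlement_seconds / SECONDS_PER_YEAR)


def latency_spread_bps(annual_vol: float, mean_latency_s: float,
                       jitter_s: float, k: float = 2.0) -> float:
    """Extra half-spread, in basis points, covering k standard deviations
    of price movement over the worst-case settlement time (hypothetical rule)."""
    worst_case_s = mean_latency_s + jitter_s
    return k * price_uncertainty(annual_vol, worst_case_s) * 10_000


if __name__ == "__main__":
    # Compare a slow, jittery chain with a fast, steady one at 80% annual vol.
    print(latency_spread_bps(0.80, mean_latency_s=12.0, jitter_s=6.0))
    print(latency_spread_bps(0.80, mean_latency_s=1.0, jitter_s=0.1))
```

Under this model, cutting worst-case settlement time by a factor of four only halves the required buffer. Jitter therefore matters as much as the mean, since the market maker must price the worst case.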

Optimized consensus mechanisms minimize settlement latency, directly improving the accuracy of risk-sensitive pricing models and reducing market maker spreads.

Market microstructure dynamics reveal that even small improvements in consensus latency alter the competitive landscape. Automated agents prioritize protocols with lower latency to capture arbitrage opportunities. This creates a feedback loop where protocols with superior consensus architectures attract more liquidity, which in turn reinforces the security and stability of the network.

The physics of these protocols determines the limits of what financial instruments can exist on-chain.

Approach

Current methods for Consensus Algorithm Optimization focus on modularity and cross-layer communication. Instead of attempting to solve all performance issues within a single consensus layer, modern architectures delegate execution to secondary layers while anchoring security to a robust, decentralized base layer. This layered approach allows for rapid iteration of execution environments without requiring frequent, risky updates to the foundational consensus protocol.

- Rollup Integration enables high-throughput processing by batching transactions before committing them to the primary consensus layer.

- Validator Set Selection algorithms now utilize reputation-based metrics to ensure that the most reliable nodes participate in critical consensus rounds.

- Zero Knowledge Proofs allow for the compression of validation data, reducing the bandwidth requirements for nodes to synchronize the ledger state.

Architects manage systemic risk by implementing rigorous stress testing of these optimized consensus pathways. They simulate high-load scenarios to identify potential failure points where latency spikes might lead to liquidation cascades or arbitrage failures. This defensive engineering mindset ensures that performance gains are not achieved by sacrificing the fundamental security properties that define decentralized markets.
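
A minimal version of such a stress test, assuming a fixed per-block capacity and a scripted burst of arrivals, tracks how many transactions are left waiting after each block. The function names and the load profile are illustrative assumptions; a realistic test would also model fee markets, network delay, and validator failures.

```python
def simulate_backlog(arrivals: list[int], capacity_per_block: int) -> list[int]:
    """Return the number of pending transactions after each block."""
    if capacity_per_block <= 0:
        raise ValueError("capacity_per_block must be positive")
    backlog = 0
    history = []
    for arriving in arrivals:
        backlog += arriving
        backlog -= min(backlog, capacity_per_block)
        history.append(backlog)
    return history


def blocks_to_clear(backlog: int, capacity_per_block: int) -> int:
    """Blocks needed to drain a backlog if no new transactions arrive."""
    return -(-backlog // capacity_per_block)  # ceiling division


if __name__ == "__main__":
    # Steady load with a single burst in the third block.
    history = simulate_backlog([100, 100, 500, 100, 100], capacity_per_block=200)
    print(history)
    print(blocks_to_clear(max(history), 200))
```

The peak backlog, multiplied by the block interval, gives a first estimate of how long a liquidation transaction could wait during a load spike. That delay is the quantity to compare against margin buffers.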

The objective is to build a financial system that is both faster than legacy infrastructure and fundamentally more resilient to systemic shocks.

Evolution

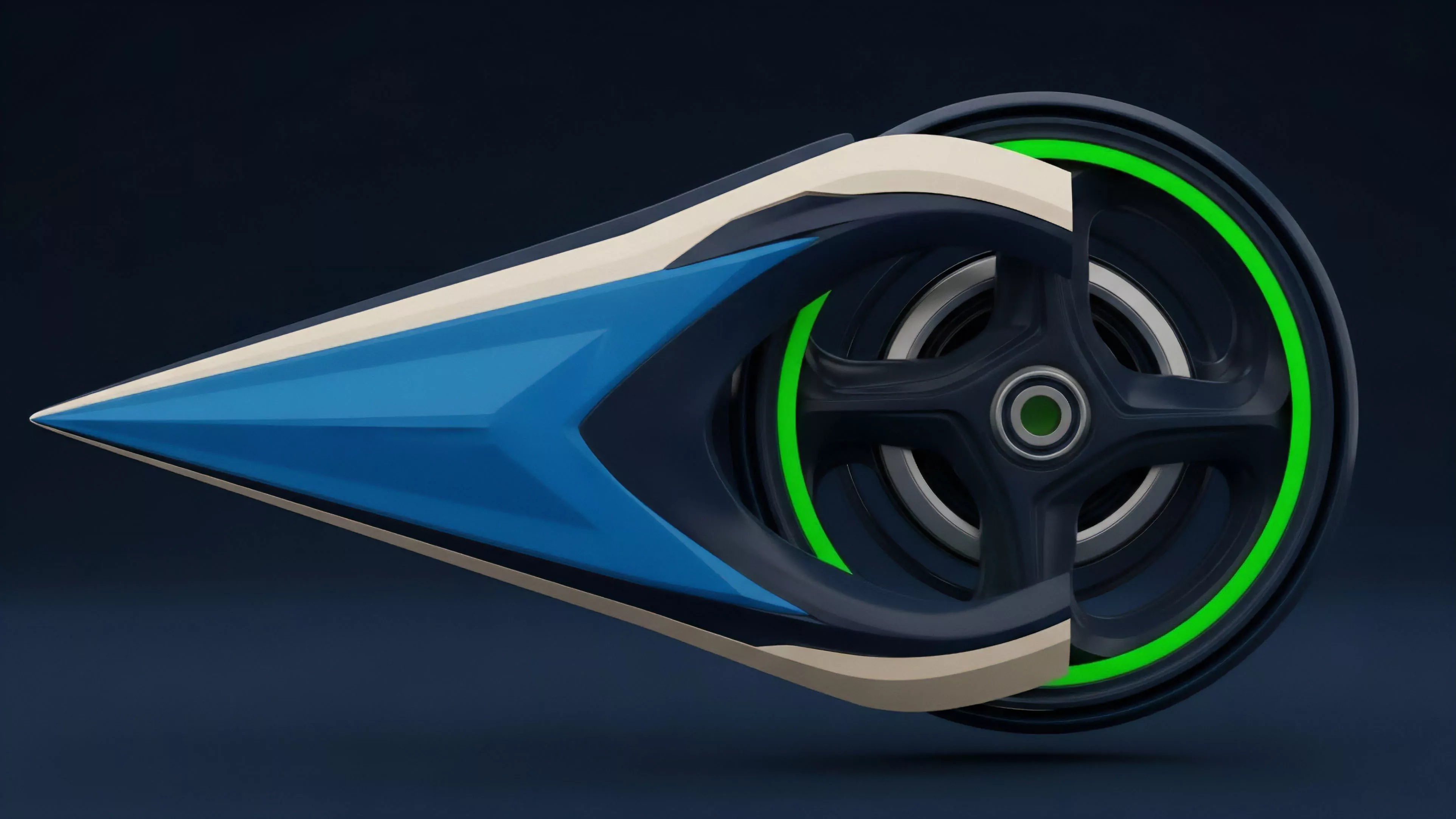

The transition from monolithic to modular consensus has been the defining shift in recent years. Early designs required every node to process every transaction, which created an unavoidable performance ceiling. As demand for decentralized derivatives grew, this architecture proved unsustainable, forcing a migration toward designs that distribute the validation load across multiple, specialized segments of the network.

The shift from monolithic to modular consensus architectures marks the transition toward scalable decentralized infrastructure capable of supporting global derivative volumes.

This evolution also encompasses the development of sophisticated incentive structures. Earlier consensus models relied on simple token rewards, whereas newer approaches utilize complex fee-burning and slashing mechanisms to align validator behavior with long-term network health. These economic designs serve as a vital constraint on adversarial behavior, ensuring that the cost of attacking the consensus process significantly outweighs the potential gains from exploitation.
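
The economic constraint described here can be written as a simple inequality: the stake an attacker expects to lose must exceed what the attack can earn. The sketch below assumes a BFT-style protocol in which violating safety requires at least one third of the total stake and that the offending stake is slashed in full. Both parameters, and the function names, are assumptions that vary by protocol.

```python
def attack_cost(total_stake: float, stake_fraction_needed: float,
                slash_fraction: float) -> float:
    """Stake the attacker expects to lose if the attack is detected and slashed."""
    return total_stake * stake_fraction_needed * slash_fraction


def attack_is_unprofitable(total_stake: float, potential_gain: float,
                           stake_fraction_needed: float = 1 / 3,
                           slash_fraction: float = 1.0) -> bool:
    """True when the slashed stake exceeds the attacker's potential gain."""
    cost = attack_cost(total_stake, stake_fraction_needed, slash_fraction)
    return cost > potential_gain


if __name__ == "__main__":
    # Hypothetical network with 9M units staked and 1M units extractable.
    print(attack_is_unprofitable(total_stake=9_000_000, potential_gain=1_000_000))
    # The same network if only 10% of the offending stake were slashed.
    print(attack_is_unprofitable(9_000_000, 1_000_000, slash_fraction=0.10))
```

The comparison makes the design lever explicit: raising either the total stake or the slashing fraction raises the attack cost, while growth in the value secured by the protocol raises the potential gain.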

The protocol now functions as a self-regulating market where performance is incentivized and security is a programmable variable.

Horizon

Future developments in Consensus Algorithm Optimization will likely prioritize asynchronous consensus and hardware-level acceleration. As protocols mature, the focus will move toward achieving near-instant finality, which is a requirement for truly efficient, real-time derivatives trading. The integration of specialized hardware, such as trusted execution environments and programmable network interface cards, will further reduce the latency of signature verification and state updates.

| Future Focus | Technological Driver | Financial Outcome |
| --- | --- | --- |
| Asynchronous Finality | Protocol Design | Elimination of settlement wait times |
| Hardware Acceleration | Cryptographic Chips | Order-of-magnitude higher throughput |
| Cross-Chain Settlement | Interoperability Standards | Unified global liquidity pools |

These advancements will enable the creation of increasingly complex financial products that currently reside exclusively on centralized exchanges. The boundary between on-chain and off-chain performance will vanish as consensus optimization renders the underlying infrastructure invisible to the user. This will create an environment where the primary differentiator between protocols is not just speed, but the depth and reliability of their liquidity, which is ultimately anchored by the robustness of their consensus architecture.