Essence

Modular blockchain efficiency refers to the throughput and cost gains obtained by decoupling the consensus, execution, data availability, and settlement layers. This architectural shift replaces monolithic constraints with a flexible stack in which specialized components interact without duplicating each other's computation. By isolating these functions, the system can achieve higher transaction density and lower overhead for decentralized applications.

Modular blockchain efficiency represents the strategic decoupling of core protocol functions to maximize throughput and reduce computational redundancy.

The primary value proposition lies in the ability to scale specific layers independently, such as increasing data availability capacity without forcing every node to execute every transaction. This granular approach enables a more resilient and performant financial infrastructure. The systemic impact is reduced friction in cross-chain interactions and less state bloat, both of which currently limit the scalability of decentralized derivatives and complex financial instruments.

Origin

The concept emerged from the inherent trade-offs described by the blockchain trilemma, where monolithic designs struggled to balance decentralization, security, and scalability simultaneously.

Early iterations of blockchain architecture bundled all functions into a single layer, leading to significant congestion during periods of high market volatility. Developers recognized that separating these responsibilities could allow each component to be optimized for its specific task.

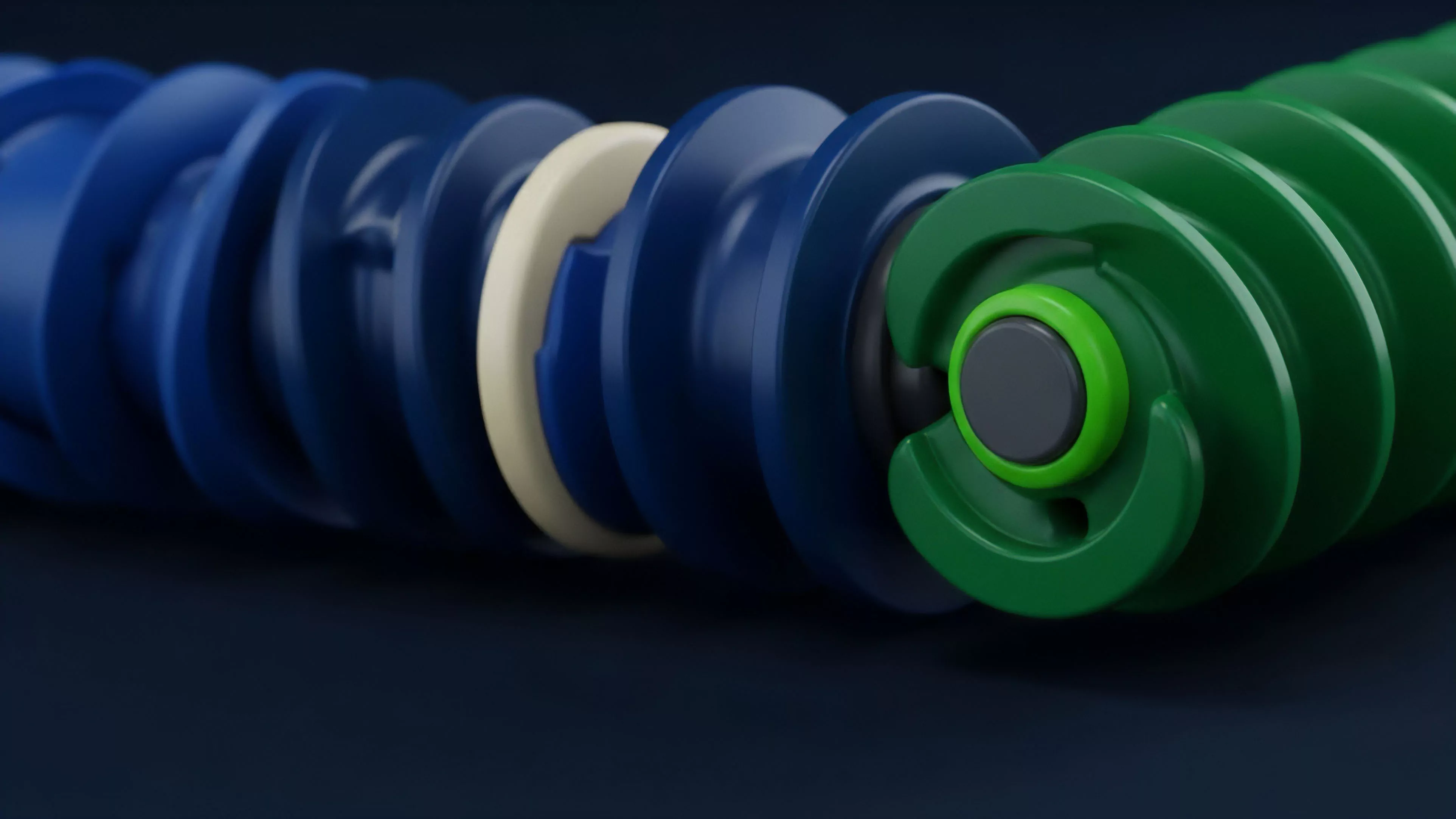

- Decoupled Architecture: The realization that consensus mechanisms need not be coupled with heavy execution environments.

- Data Availability Sampling: The technical breakthrough allowing light nodes to verify, with high probability, that a block's data has been published without downloading the entire block.

- Specialized Execution Environments: The move toward rollups and app-specific chains designed for high-frequency trading.

This transition reflects a departure from the one-size-fits-all model toward a heterogeneous ecosystem. The history of this development mirrors the evolution of traditional cloud computing, where monolithic servers were replaced by microservices and distributed storage solutions.

Theory

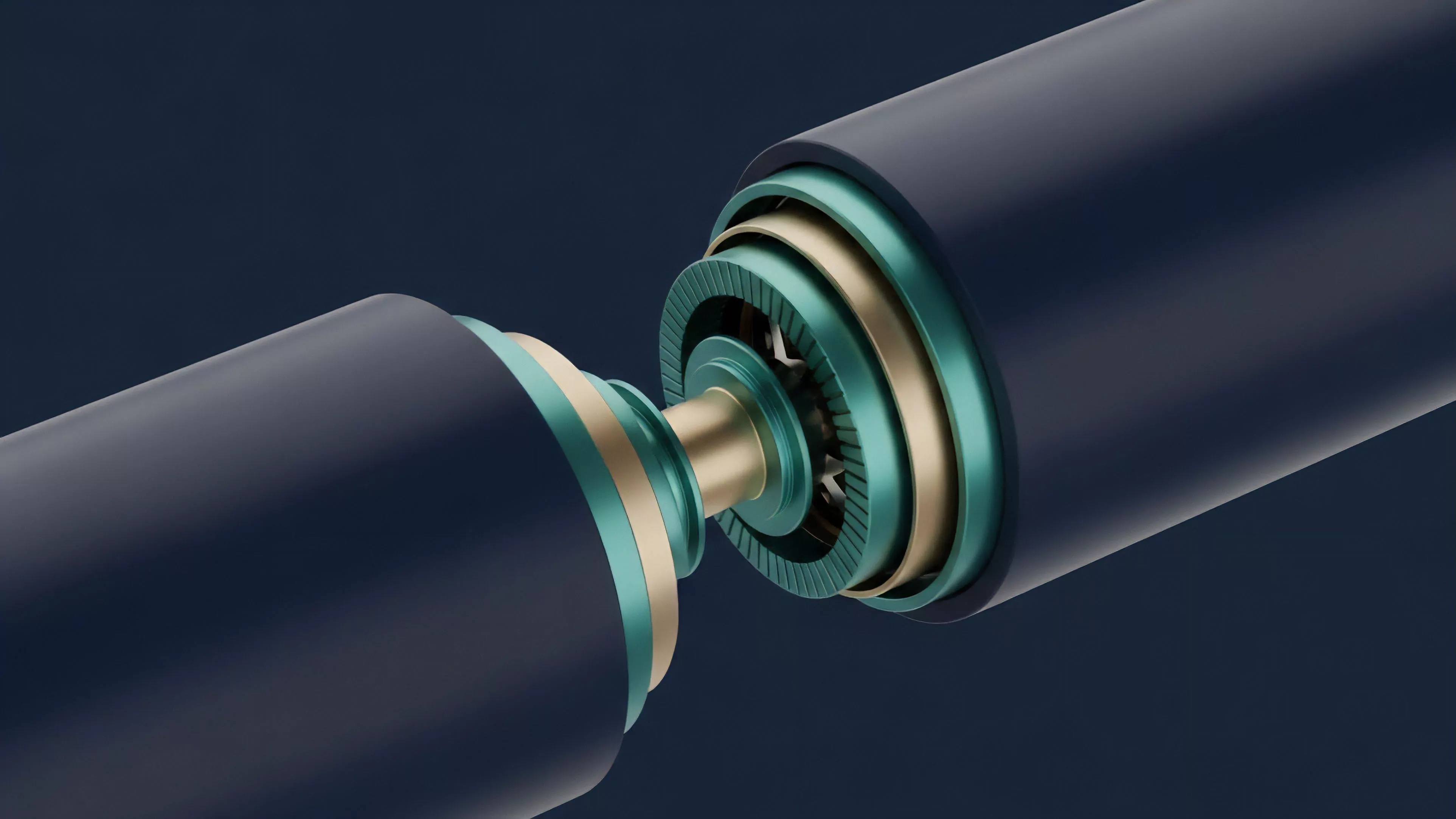

The theoretical framework rests on the principle of computational specialization. By distributing the workload across distinct layers, the system avoids having every node repeat every task, which reduces the total resources consumed to reach finality.

In the context of derivatives, this means that pricing engines and margin managers can operate within high-performance execution layers while relying on a decentralized base layer for secure settlement.
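To make the split between execution and settlement concrete, here is a minimal Python sketch. The `ExecutionLayer` and `SettlementLayer` classes are hypothetical: the execution side applies trades and keeps balances, while the settlement side only records a commitment (a hash of the batch data plus a state root). A plain SHA-256 of the sorted balances stands in for a Merkle root, and the fraud or validity proof that a real rollup would attach is deliberately omitted.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class ExecutionLayer:
    """Hypothetical execution layer: applies trades locally, off the base layer."""
    balances: dict = field(default_factory=dict)
    pending: list = field(default_factory=list)

    def submit(self, trader: str, delta: int) -> None:
        # Apply the state change immediately; the base layer does no work here.
        self.balances[trader] = self.balances.get(trader, 0) + delta
        self.pending.append({"trader": trader, "delta": delta})

    def state_root(self) -> str:
        # Hash of the sorted balances; a stand-in for a real Merkle root.
        encoded = json.dumps(sorted(self.balances.items())).encode()
        return hashlib.sha256(encoded).hexdigest()

    def seal_batch(self) -> dict:
        # Only a compact commitment leaves the execution layer.
        data = json.dumps(self.pending).encode()
        commitment = {
            "data_hash": hashlib.sha256(data).hexdigest(),
            "state_root": self.state_root(),
            "tx_count": len(self.pending),
        }
        self.pending = []
        return commitment


@dataclass
class SettlementLayer:
    """Hypothetical settlement layer: stores batch commitments, not trades."""
    commitments: list = field(default_factory=list)

    def post(self, commitment: dict) -> int:
        self.commitments.append(commitment)
        return len(self.commitments) - 1  # index of the settled batch


exec_layer = ExecutionLayer()
settle = SettlementLayer()
for trader, delta in [("alice", 100), ("bob", -40), ("alice", -10)]:
    exec_layer.submit(trader, delta)
idx = settle.post(exec_layer.seal_batch())
print(idx, settle.commitments[idx]["tx_count"])
```

Three trades execute on the execution side, but the settlement side stores a single fixed-size record, which is the redundancy reduction the paragraph describes.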

| Layer Type | Primary Function | Efficiency Metric |
| --- | --- | --- |
| Execution | Transaction processing | Latency per trade |
| Settlement | Proof verification and dispute resolution | Finality time |
| Data Availability | Publishing block data for verification | Sampling overhead |

The interplay between these layers creates a unique environment for risk management. Because the execution layer can be customized, protocols can implement specific order matching algorithms or liquidation logic that would be too costly to run on a monolithic chain. This architecture essentially shifts the burden of performance from the base layer to the specialized rollup, allowing for tighter spreads and more precise hedging strategies.
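As an illustration of custom order-matching logic on an execution layer, the sketch below implements a basic price-time priority limit order book. It is a toy under stated assumptions (a single market, integer tick prices, no cancellations or fees), not the matching engine of any particular protocol; `OrderBook` and `Fill` are hypothetical names.

```python
import heapq
import itertools
from dataclasses import dataclass


@dataclass
class Fill:
    buy_id: int
    sell_id: int
    price: int
    qty: int


class OrderBook:
    """Hypothetical price-time priority book for one market (prices in ticks)."""

    def __init__(self) -> None:
        self._bids = []  # heap of (-price, seq, order_id, qty): best bid first
        self._asks = []  # heap of (price, seq, order_id, qty): best ask first
        self._seq = itertools.count()  # arrival counter for time priority

    def submit(self, order_id: int, side: str, price: int, qty: int) -> list:
        fills = []
        if side == "buy":
            # Cross against asks priced at or below the limit.
            while qty > 0 and self._asks and self._asks[0][0] <= price:
                ask_px, seq, ask_id, ask_qty = heapq.heappop(self._asks)
                traded = min(qty, ask_qty)
                fills.append(Fill(order_id, ask_id, ask_px, traded))
                qty -= traded
                if ask_qty > traded:
                    # Remainder keeps its original seq, so it keeps time priority.
                    heapq.heappush(self._asks, (ask_px, seq, ask_id, ask_qty - traded))
            if qty > 0:
                heapq.heappush(self._bids, (-price, next(self._seq), order_id, qty))
        else:
            # Cross against bids priced at or above the limit.
            while qty > 0 and self._bids and -self._bids[0][0] >= price:
                neg_px, seq, bid_id, bid_qty = heapq.heappop(self._bids)
                traded = min(qty, bid_qty)
                fills.append(Fill(bid_id, order_id, -neg_px, traded))
                qty -= traded
                if bid_qty > traded:
                    heapq.heappush(self._bids, (neg_px, seq, bid_id, bid_qty - traded))
            if qty > 0:
                heapq.heappush(self._asks, (price, next(self._seq), order_id, qty))
        return fills


book = OrderBook()
book.submit(1, "sell", 101, 5)
book.submit(2, "sell", 100, 3)
fills = book.submit(3, "buy", 101, 6)
for f in fills:
    print(f)
```

The incoming buy first takes the better-priced ask at 100, then 3 units at 101, leaving 2 units of order 1 resting with its original time priority.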

Computational specialization within modular stacks allows for the deployment of high-frequency derivative protocols on secure, decentralized foundations.

Security in this model rests on light clients combined with fraud or validity proofs. As more nodes sample random pieces of each block, the probability that withheld data goes undetected approaches zero, providing a robust base for financial activity. Market microstructure changes accordingly, as participants shift from relying on a single chain to navigating a multi-layered environment.
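The sampling argument can be quantified. The sketch below assumes a two-dimensional Reed-Solomon scheme in which a k x k data square is extended to 2k x 2k, so an adversary must withhold at least (k + 1)^2 of the (2k)^2 shares to make a block unrecoverable. It further assumes each sample is drawn independently and uniformly with replacement, a slightly conservative simplification; the function names are illustrative.

```python
import math


def min_withheld_fraction(k: int) -> float:
    """Smallest fraction of a 2k x 2k extended square that must be withheld
    to make a 2D Reed-Solomon-encoded block unrecoverable: (k+1)^2 / (2k)^2."""
    return (k + 1) ** 2 / (2 * k) ** 2


def miss_probability(k: int, samples: int) -> float:
    """Chance that every independent uniform sample hits an available share,
    so the withholding goes unnoticed by this light node."""
    return (1 - min_withheld_fraction(k)) ** samples


def samples_for_confidence(k: int, max_miss: float) -> int:
    """Fewest samples for which the miss probability is at most max_miss."""
    f = min_withheld_fraction(k)
    return math.ceil(math.log(max_miss) / math.log(1 - f))


for s in (10, 20, 30):
    print(s, f"{miss_probability(64, s):.2e}")
print("samples for 1e-9:", samples_for_confidence(64, 1e-9))
```

Because the miss probability falls geometrically with each sample, a modest number of samples per light node already makes undetected withholding very unlikely.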

Approach

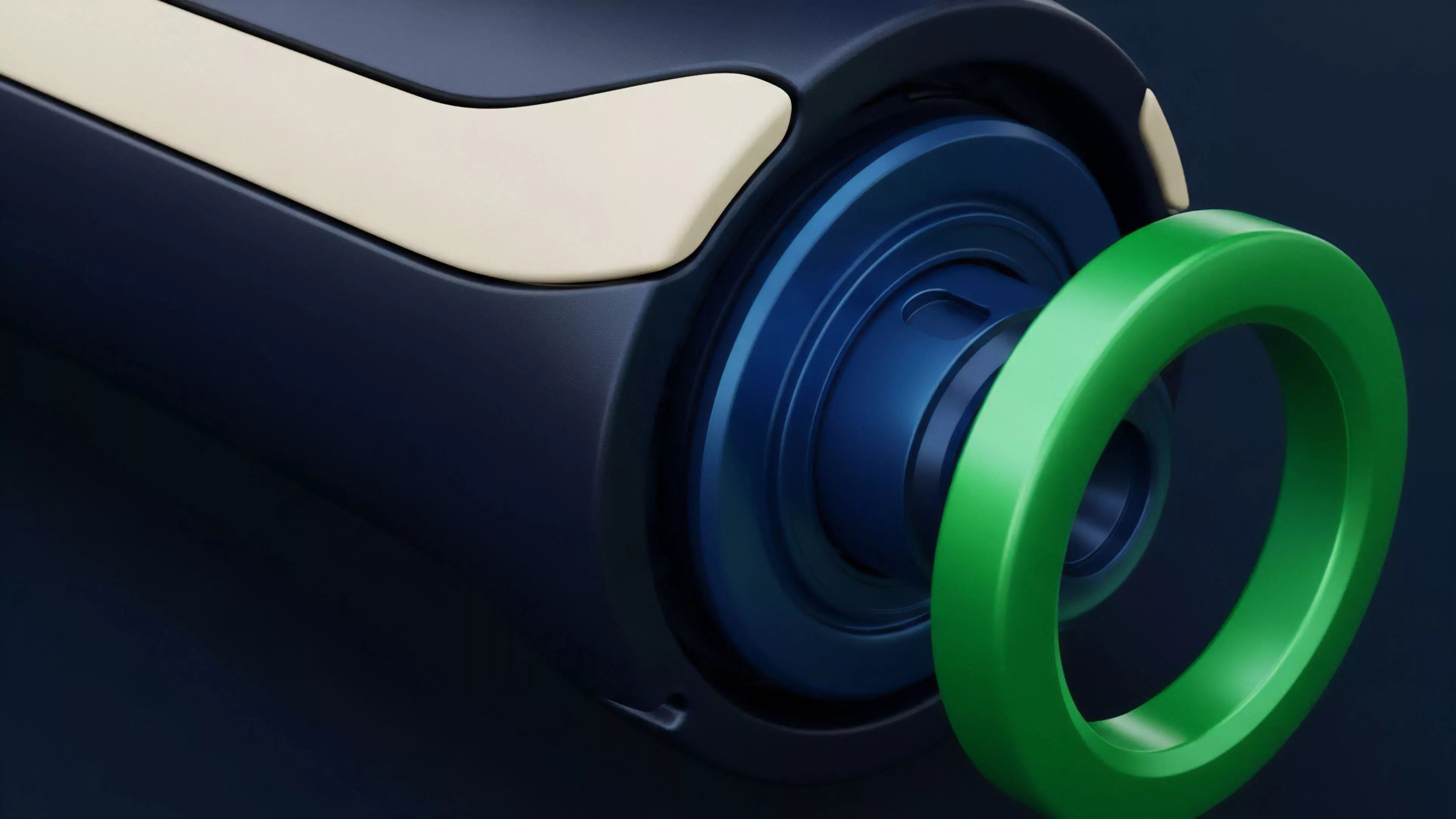

Current implementations prioritize the development of robust interoperability bridges and shared sequencing.

These mechanisms ensure that liquidity remains unified across the modular stack, preventing the fragmentation that typically accompanies multi-chain deployments. Market participants now utilize sophisticated routing protocols to execute trades across different execution environments while maintaining consistent margin requirements.
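Shared sequencing can be sketched as a single ordering service whose output each rollup filters for its own transactions. In this hypothetical Python sketch, `SharedSequencer`, `Tx`, and `rollup_view` are illustrative names, ordering is simply first come, first served, and a multi-rollup bundle is queued as one unit so both legs land in the same block. This guarantees atomic inclusion only; whether both legs execute successfully is still decided by each rollup.

```python
import itertools
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Tx:
    rollup: str
    payload: str


@dataclass
class SharedSequencer:
    """Hypothetical shared sequencer producing one order for several rollups."""
    mempool: list = field(default_factory=list)  # queued bundles (tuples of Tx)

    def submit(self, *txs: Tx) -> None:
        # A bundle is queued as one unit so all its legs land in the same block.
        self.mempool.append(tuple(txs))

    def build_block(self, height: int) -> list:
        # First come, first served across all rollups; entries are (height, position, tx).
        flat = itertools.chain.from_iterable(self.mempool)
        block = [(height, pos, tx) for pos, tx in enumerate(flat)]
        self.mempool.clear()
        return block


def rollup_view(block: list, rollup: str) -> list:
    """Each rollup derives its own ordered transactions by filtering the shared block."""
    return [(h, pos, tx.payload) for h, pos, tx in block if tx.rollup == rollup]


seq = SharedSequencer()
seq.submit(Tx("rollup-a", "deposit 10"))
seq.submit(Tx("rollup-a", "sell ETH-PERP"), Tx("rollup-b", "buy ETH-PERP"))  # hedged pair
block = seq.build_block(height=1)
print(rollup_view(block, "rollup-a"))
print(rollup_view(block, "rollup-b"))
```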

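- Shared Sequencing: Using a single sequencer (or sequencer set) to order transactions for multiple rollups, which reduces cross-rollup latency, enables atomic inclusion of multi-rollup bundles, and can limit some forms of front-running.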

- Recursive Proofs: Compressing multiple verification steps into a single proof to reduce the cost of state updates.

- Cross-Layer Liquidity: Utilizing automated market makers that operate across different execution layers to maintain price parity.
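The saving from recursive proofs is easiest to see as amortization. The sketch assumes, hypothetically, that verifying one succinct proof on the settlement layer costs roughly the same whether it attests to one transaction or to a recursive aggregate of many, and that each transaction still pays a fixed data cost. The cost figures are placeholder units, not measurements from any chain.

```python
# Placeholder cost units; hypothetical, not measured on any chain.
VERIFY_COST = 300_000      # cost to verify one succinct proof on the settlement layer
DATA_COST_PER_TX = 2_000   # data-availability cost each transaction still pays


def per_tx_cost_individual(verify_cost: float, data_cost: float) -> float:
    """Each transaction is settled with its own proof, verified separately."""
    return verify_cost + data_cost


def per_tx_cost_recursive(n_tx: int, verify_cost: float, data_cost: float) -> float:
    """One recursive proof covers n_tx transactions, so verification is paid once
    and shared; assumes the aggregate proof costs about as much to verify as one."""
    if n_tx < 1:
        raise ValueError("n_tx must be at least 1")
    return verify_cost / n_tx + data_cost


for n in (1, 10, 100, 1_000):
    print(n,
          per_tx_cost_individual(VERIFY_COST, DATA_COST_PER_TX),
          per_tx_cost_recursive(n, VERIFY_COST, DATA_COST_PER_TX))
```

In this model the per-transaction cost approaches the data-cost floor as batches grow, so data availability rather than proof verification becomes the dominant term at scale.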

This approach requires a rigorous focus on smart contract security, as the complexity of interacting with multiple layers increases the attack surface. Market makers must account for the latency differences between the execution and settlement layers, adjusting their pricing models to reflect the probabilistic nature of finality. It is a game of managing temporal risk across a distributed system.
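One simple way to express finality risk in a quote is to widen the spread by the price move that could plausibly occur before settlement becomes final. The toy rule below assumes Brownian price dynamics, so volatility over a window scales with the square root of its length; `finality_adjusted_half_spread`, the z-score multiplier, and the example volatility and finality times are all hypothetical parameters, not calibrated values.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def finality_adjusted_half_spread(base_half_spread: float, annual_vol: float,
                                  finality_seconds: float, z: float = 2.0) -> float:
    """Toy rule: add z standard deviations of the price move expected over the
    finality window to the base half-spread (both as fractions of mid)."""
    window_vol = annual_vol * math.sqrt(finality_seconds / SECONDS_PER_YEAR)
    return base_half_spread + z * window_vol


def quotes(mid: float, half_spread: float) -> tuple[float, float]:
    """Symmetric bid and ask around mid."""
    return mid * (1 - half_spread), mid * (1 + half_spread)


MID = 2_000.0
for label, seconds in [("fast soft confirmation", 2), ("slow settlement finality", 900)]:
    hs = finality_adjusted_half_spread(0.0005, annual_vol=0.8, finality_seconds=seconds)
    bid, ask = quotes(MID, hs)
    print(f"{label}: half-spread {hs:.4%}, bid {bid:.2f}, ask {ask:.2f}")
```

The longer the gap between execution and final settlement, the wider the quote must be to cover the same confidence level, which is the temporal risk the paragraph describes.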

Evolution

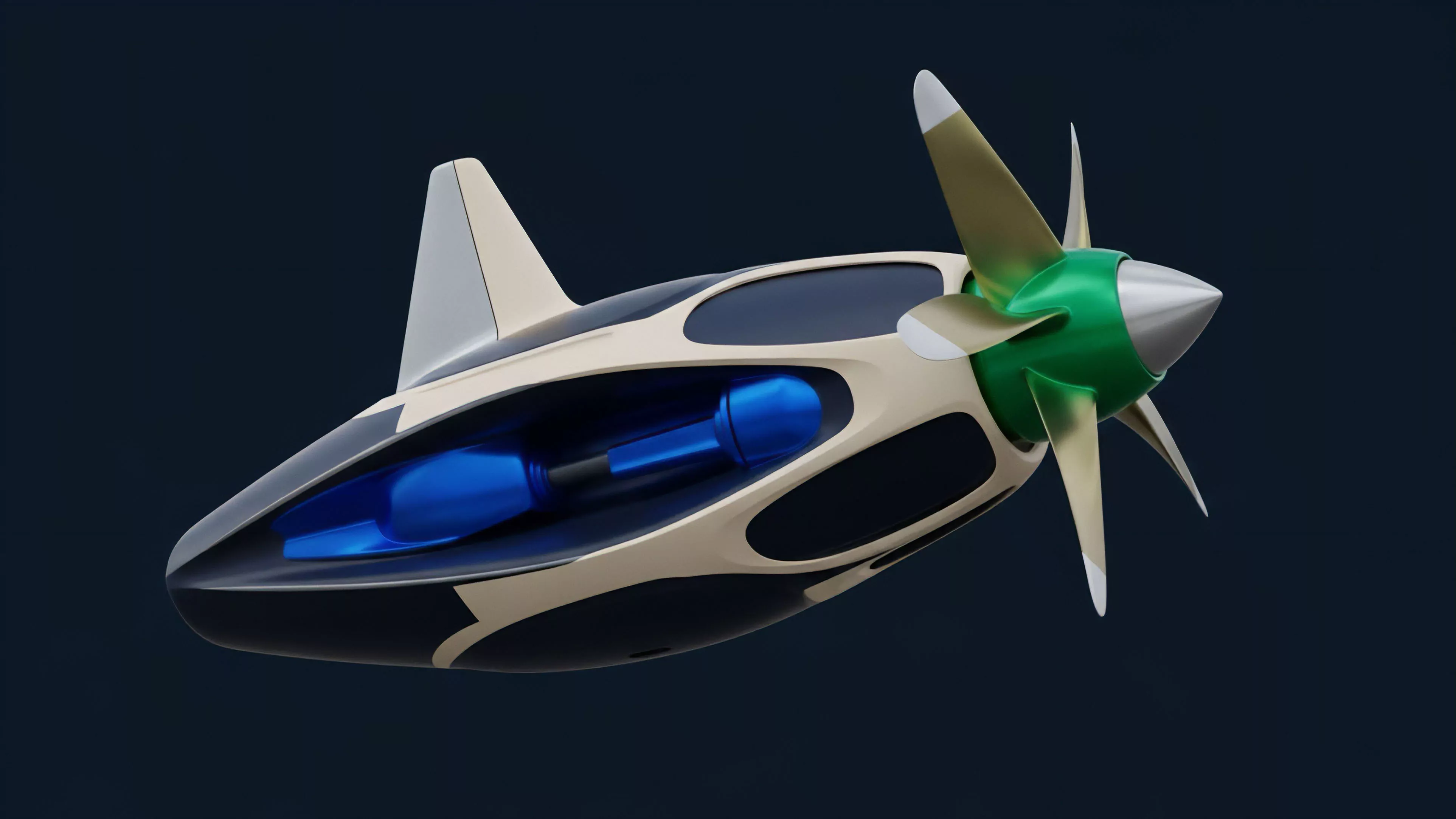

The transition from early, experimental rollups to production-grade modular systems marks a shift in market maturity.

Initial efforts focused on simple asset transfers, whereas current infrastructure supports complex derivative platforms with real-time risk engines. The integration of zero-knowledge proofs has significantly accelerated this progression: a succinct proof lets the settlement layer verify a batch of computation without re-executing it, and can do so without revealing the underlying data when privacy is required.

Modular systems evolve by replacing trust-based bridging with cryptographic proofs that ensure state consistency across fragmented execution environments.

One must consider the broader implication: as these systems become more efficient, the cost of capital within decentralized markets decreases. This trend incentivizes the migration of institutional-grade trading strategies onto decentralized venues. The shift is not purely technical; it represents a fundamental change in how market participants view the reliability and performance of decentralized infrastructure.

Horizon

Future developments will focus on the standardization of inter-layer communication protocols.

As these standards stabilize, the distinction between individual blockchains will blur, leading to a cohesive global liquidity layer. This will enable the creation of highly complex derivative instruments that leverage assets across diverse modular components without requiring manual intervention or excessive trust.
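A standardized inter-layer message can be pictured as a fixed envelope that every layer encodes and hashes the same way. In this hypothetical Python sketch, `Message` and `Inbox` are illustrative names, and the `committed_digests` set stands in for a cryptographic proof (for example, an inclusion proof against the source layer's state root) that the message was actually sent. The inbox rejects unproven messages as well as replays and nonce gaps.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Message:
    """Hypothetical standardized envelope for cross-layer messages."""
    source: str
    destination: str
    nonce: int
    payload: str

    def digest(self) -> str:
        # Canonical encoding (sorted keys) so every layer computes the same hash.
        encoded = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(encoded).hexdigest()


class Inbox:
    """Destination-side handler enforcing proof of sending and in-order nonces."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.next_nonce = {}  # source layer -> next expected nonce
        self.delivered = []

    def receive(self, msg: Message, committed_digests: set) -> bool:
        if msg.destination != self.name:
            return False
        if msg.digest() not in committed_digests:
            return False  # no evidence the source layer committed this message
        expected = self.next_nonce.get(msg.source, 0)
        if msg.nonce != expected:
            return False  # replay or gap
        self.next_nonce[msg.source] = expected + 1
        self.delivered.append(msg.payload)
        return True


m0 = Message("rollup-a", "rollup-b", 0, "open hedge")
committed = {m0.digest()}
inbox = Inbox("rollup-b")
first = inbox.receive(m0, committed)
replay = inbox.receive(m0, committed)
forged = Message("rollup-a", "rollup-b", 1, "drain vault")
forged_ok = inbox.receive(forged, committed)
print(first, replay, forged_ok, inbox.delivered)
```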

| Development Phase | Primary Objective | Market Impact |
| --- | --- | --- |
| Standardization | Unified messaging | Reduced liquidity fragmentation |
| Autonomic Scaling | Self-adjusting throughput | Lowered volatility costs |
| Universal Settlement | Cross-layer finality | Institutional adoption |

The next logical step involves the implementation of autonomous agents that manage risk and liquidity across the entire modular stack. These agents could operate with a level of precision that is difficult to achieve in current monolithic environments, improving the capital efficiency of the entire ecosystem. The intended result is a more resilient and transparent financial market that operates with the speed and reliability of traditional high-frequency venues. What remains to be determined is how regulatory frameworks will adapt to a system where the site of execution and the site of settlement are physically and logically distinct.