Essence

Market Data Transparency functions as the structural bedrock for price discovery and risk assessment within decentralized derivative environments. It denotes the availability, accessibility, and integrity of real-time order book state, trade execution history, and liquidity depth across disparate venues. When participants possess an accurate, unified view of market activity, the information asymmetry that characterizes fragmented crypto venues diminishes, allowing for more precise valuation of complex option contracts.
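As an illustration of what a "unified view" across venues can mean in practice, the sketch below merges per-venue order books into one consolidated book and reads off the best bid and ask. It is a minimal sketch. It assumes every venue already reports price levels in the same units and quote currency, and the venue names and the `consolidate` helper are hypothetical. A real aggregator would also account for fees, settlement differences, and data staleness.

```python
from collections import defaultdict

def consolidate(books):
    """Merge per-venue order books into one view keyed by price level.

    books: dict of venue -> {"bids": [(price, size)], "asks": [(price, size)]}
    Returns (bids, asks), each a list of (price, total_size) sorted best-first.
    Assumes all venues quote the same instrument in the same units.
    """
    bids = defaultdict(float)
    asks = defaultdict(float)
    for book in books.values():
        for price, size in book["bids"]:
            bids[price] += size
        for price, size in book["asks"]:
            asks[price] += size
    # Best bid is the highest price; best ask is the lowest.
    sorted_bids = sorted(bids.items(), key=lambda level: -level[0])
    sorted_asks = sorted(asks.items(), key=lambda level: level[0])
    return sorted_bids, sorted_asks

books = {
    "venue_a": {"bids": [(99.0, 2.0), (98.5, 1.0)], "asks": [(101.0, 1.5)]},
    "venue_b": {"bids": [(99.5, 1.0), (99.0, 0.5)], "asks": [(100.5, 2.0), (101.0, 1.0)]},
}
bids, asks = consolidate(books)
print(bids[0], asks[0])  # → (99.5, 1.0) (100.5, 2.0)
```

Neither venue alone shows the consolidated spread of 1.0 or the combined 2.5 units resting at 99.0; only the merged view does.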

Market Data Transparency represents the unencumbered visibility of order flow and execution data required to establish verifiable asset pricing.

Without this clarity, decentralized finance protocols operate in a state of perpetual opacity, where the true cost of hedging or speculation remains obscured by latency and siloed data structures. This transparency extends beyond simple trade reporting; it encompasses the granular details of market microstructure, such as the composition of liquidity pools and the specific mechanisms governing margin calls. By exposing these variables, protocols facilitate a more resilient financial architecture, one where market participants can audit the systemic health of the platform in real time.
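When margin-engine state is public, any participant can recompute account health independently. The sketch below shows one way this could look. It assumes a deliberately simplified model (linear contracts, a single maintenance rate applied to mark-price notional), and `Position`, `margin_ratio`, and `liquidatable` are hypothetical names. Actual protocols use their own margin rules.

```python
from dataclasses import dataclass

@dataclass
class Position:
    size: float         # signed contract quantity: positive long, negative short
    entry_price: float
    mark_price: float   # current mark taken from the public price feed

def margin_ratio(collateral, positions, maintenance_rate):
    """Equity divided by the maintenance requirement; below 1.0 is liquidatable.

    Hypothetical linear-contract model: one maintenance rate applied to
    mark-price notional. Real margin engines use protocol-specific rules.
    """
    pnl = sum(p.size * (p.mark_price - p.entry_price) for p in positions)
    equity = collateral + pnl
    notional = sum(abs(p.size) * p.mark_price for p in positions)
    requirement = maintenance_rate * notional
    return float("inf") if requirement == 0 else equity / requirement

def liquidatable(accounts, maintenance_rate):
    """IDs of accounts below maintenance, computable by anyone with public state."""
    return [acct_id for acct_id, (collateral, positions) in accounts.items()
            if margin_ratio(collateral, positions, maintenance_rate) < 1.0]

accounts = {
    "alice": (1000.0, [Position(10, 100.0, 95.0)]),
    "bob": (100.0, [Position(10, 100.0, 91.0)]),
}
print(liquidatable(accounts, maintenance_rate=0.05))  # → ['bob']
```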

Origin

The necessity for Market Data Transparency arose directly from the inefficiencies inherent in early decentralized exchange designs, which struggled to replicate the high-frequency capabilities of traditional order books.

Initial models relied on simple automated market maker (AMM) mechanics that exposed traders to heavy slippage and liquidity providers to impermanent loss, leaving derivative traders with poor execution quality and little insight into underlying volatility. The migration toward sophisticated on-chain order books and hybrid off-chain matching engines was driven by the need for more robust data signals.
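The slippage problem can be made concrete with the constant-product rule (x · y = k) used by many early AMMs. The sketch below computes how far the realized price falls short of the pre-trade spot price. The default 0.3% fee is an assumption for illustration, and the function names are hypothetical.

```python
def cp_amount_out(reserve_in, reserve_out, amount_in, fee=0.003):
    """Output of a swap against a constant-product pool (x * y = k).

    The fee is deducted from the input before the swap; 0.003 is an assumed default.
    """
    effective_in = amount_in * (1 - fee)
    return reserve_out * effective_in / (reserve_in + effective_in)

def slippage(reserve_in, reserve_out, amount_in, fee=0.003):
    """Fractional shortfall of the realized price versus the pre-trade spot price.

    With a nonzero fee, the result includes the fee as part of the shortfall.
    """
    spot = reserve_out / reserve_in
    realized = cp_amount_out(reserve_in, reserve_out, amount_in, fee) / amount_in
    return 1 - realized / spot

# A trade of 1% of pool depth versus 10% of pool depth, fee ignored:
print(round(slippage(1000, 1000, 10, fee=0.0), 4))   # → 0.0099
print(round(slippage(1000, 1000, 100, fee=0.0), 4))  # → 0.0909
```

Slippage grows with trade size relative to pool depth, so a hedger in a thin pool pays an execution cost that a spot-price quote alone does not reveal.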

- Information Asymmetry: The primary historical driver, where early participants lacked access to comprehensive trade data, leading to skewed pricing.

- Fragmented Liquidity: Liquidity split across disparate protocols, which necessitated unified data standards to ensure accurate global price discovery.

- Protocol Security: The demand for verifiable, immutable records of all transactions, so that front-running and manipulation within decentralized environments can be detected and deterred.
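One way to make a trade record verifiable is to hash-chain it, so that every entry commits to everything before it. The sketch below is a minimal, hypothetical example using SHA-256 over canonical JSON. It makes tampering evident rather than impossible, and it assumes the latest hash is published somewhere participants trust, for example on-chain.

```python
import hashlib
import json

GENESIS = "0" * 64

def link_hash(prev_hash, trade):
    # Canonical JSON (sorted keys, no whitespace) so equal trades hash equally.
    payload = json.dumps(trade, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

def build_chain(trades):
    """Return one hash per trade, each committing to every trade before it."""
    hashes, prev = [], GENESIS
    for trade in trades:
        prev = link_hash(prev, trade)
        hashes.append(prev)
    return hashes

def verify_chain(trades, hashes):
    """Recompute every link; an edited, dropped, or reordered trade fails."""
    return len(trades) == len(hashes) and build_chain(trades) == hashes

trades = [
    {"id": 1, "price": 100.5, "size": 2.0},
    {"id": 2, "price": 100.7, "size": 0.5},
]
published = build_chain(trades)
tampered = [trades[0], {"id": 2, "price": 99.0, "size": 0.5}]
print(verify_chain(trades, published), verify_chain(tampered, published))  # → True False
```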

As decentralized protocols evolved to support complex financial instruments, the reliance on transparent data streams became a survival requirement. The shift from black-box execution models to open-ledger or verifiable-off-chain systems reflects a fundamental change in how decentralized finance views the relationship between information flow and systemic trust.

Theory

The theoretical framework for Market Data Transparency rests on the principle of symmetric information access, which is essential for the accurate pricing of options and the maintenance of stable margin engines. In a perfectly transparent system, the Greeks (Delta, Gamma, Vega, and Theta) can be calculated with high precision, because their inputs, including the underlying price, the volatility implied by traded contracts, and order flow, are all observable rather than inferred from stale or partial data.
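To show how directly the Greeks depend on observed inputs, the sketch below computes them for a European call under the Black-Scholes model. The model is an assumption here, since the text does not name one. The volatility argument `sigma` is exactly the kind of input a transparent data feed must supply; a stale or distorted `sigma` shifts every output.

```python
import math

def norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2 * math.pi)

def norm_cdf(x):
    return 0.5 * (1 + math.erf(x / math.sqrt(2)))

def bs_call_greeks(S, K, T, r, sigma):
    """Black-Scholes Greeks for a European call on a non-dividend asset.

    S: spot, K: strike, T: years to expiry, r: risk-free rate, sigma: volatility.
    Theta is per year; Vega is per 1.00 (100 percentage points) of volatility.
    """
    sqrt_t = math.sqrt(T)
    d1 = (math.log(S / K) + (r + 0.5 * sigma ** 2) * T) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    delta = norm_cdf(d1)
    gamma = norm_pdf(d1) / (S * sigma * sqrt_t)
    vega = S * norm_pdf(d1) * sqrt_t
    theta = (-S * norm_pdf(d1) * sigma / (2 * sqrt_t)
             - r * K * math.exp(-r * T) * norm_cdf(d2))
    return {"delta": delta, "gamma": gamma, "vega": vega, "theta": theta}

# At-the-money, one year to expiry, zero rate, 20% volatility:
greeks = bs_call_greeks(S=100.0, K=100.0, T=1.0, r=0.0, sigma=0.2)
print({name: round(value, 4) for name, value in greeks.items()})
```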

Adversarial agents constantly probe these systems for latency advantages or liquidity imbalances, meaning that transparency is not a static state but a defensive posture against market manipulation.

| Mechanism | Transparency Impact |
| --- | --- |
| Order Book Visibility | Reduces latency arbitrage and informs liquidity providers of risk. |
| Trade Execution Logs | Enables accurate calculation of realized volatility and slippage. |
| Margin Engine State | Provides real-time indicators of systemic liquidation risk. |
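The second row of the table can be illustrated with a short calculation: given evenly sampled prices from a public trade log, realized volatility is the standard deviation of log returns, annualized. The sketch below assumes evenly spaced samples and round-the-clock trading (365 × 24 hourly periods per year). The function name is hypothetical.

```python
import math
import statistics

def realized_volatility(prices, periods_per_year):
    """Annualized realized volatility from evenly sampled trade prices.

    Uses the sample standard deviation of log returns, scaled by the square
    root of the number of sampling periods per year.
    """
    if len(prices) < 3:
        raise ValueError("need at least three prices (two returns)")
    log_returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return statistics.stdev(log_returns) * math.sqrt(periods_per_year)

# Hourly closes taken from a trade log; crypto trades around the clock, so 365 * 24.
hourly = [100.0, 100.4, 99.9, 100.2, 100.8, 100.5]
print(realized_volatility(hourly, periods_per_year=365 * 24))
```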

The mathematical modeling of these derivatives requires continuous, high-fidelity inputs. If the data feed experiences delays or lacks depth, the resulting pricing models fail to account for the true state of the market, leading to incorrect hedging strategies and increased vulnerability to contagion. This dependence on input quality is where the pricing model becomes truly elegant, and dangerous when overlooked.

The technical architecture must therefore ensure that data propagation speeds align with the requirements of the derivative instruments being traded.
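A common defensive pattern is to refuse to price or margin against data older than the instrument can tolerate. The sketch below is a minimal, hypothetical guard. `max_age_s` is an assumed per-instrument parameter (short-dated options would warrant a tighter limit). Rejecting any future-dated timestamp is a conservative choice made here for simplicity; a production system might allow a small clock-skew tolerance.

```python
import time
from dataclasses import dataclass

@dataclass
class PriceUpdate:
    price: float
    published_at: float  # Unix seconds when the source produced the value

class StaleDataError(RuntimeError):
    pass

def fresh_price(update, max_age_s, now=None):
    """Return the price only if it is recent enough to price or margin against."""
    now = time.time() if now is None else now
    age = now - update.published_at
    if age < 0:
        # Conservative: a future timestamp signals clock skew or a faulty feed.
        raise StaleDataError("timestamp is in the future")
    if age > max_age_s:
        raise StaleDataError(f"price is {age:.1f}s old, limit is {max_age_s}s")
    return update.price

update = PriceUpdate(price=2000.0, published_at=1_700_000_000.0)
print(fresh_price(update, max_age_s=5.0, now=1_700_000_003.0))  # → 2000.0
```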

Robust derivative pricing relies upon the continuous, verifiable flow of market data to maintain accurate sensitivity modeling.

Approach

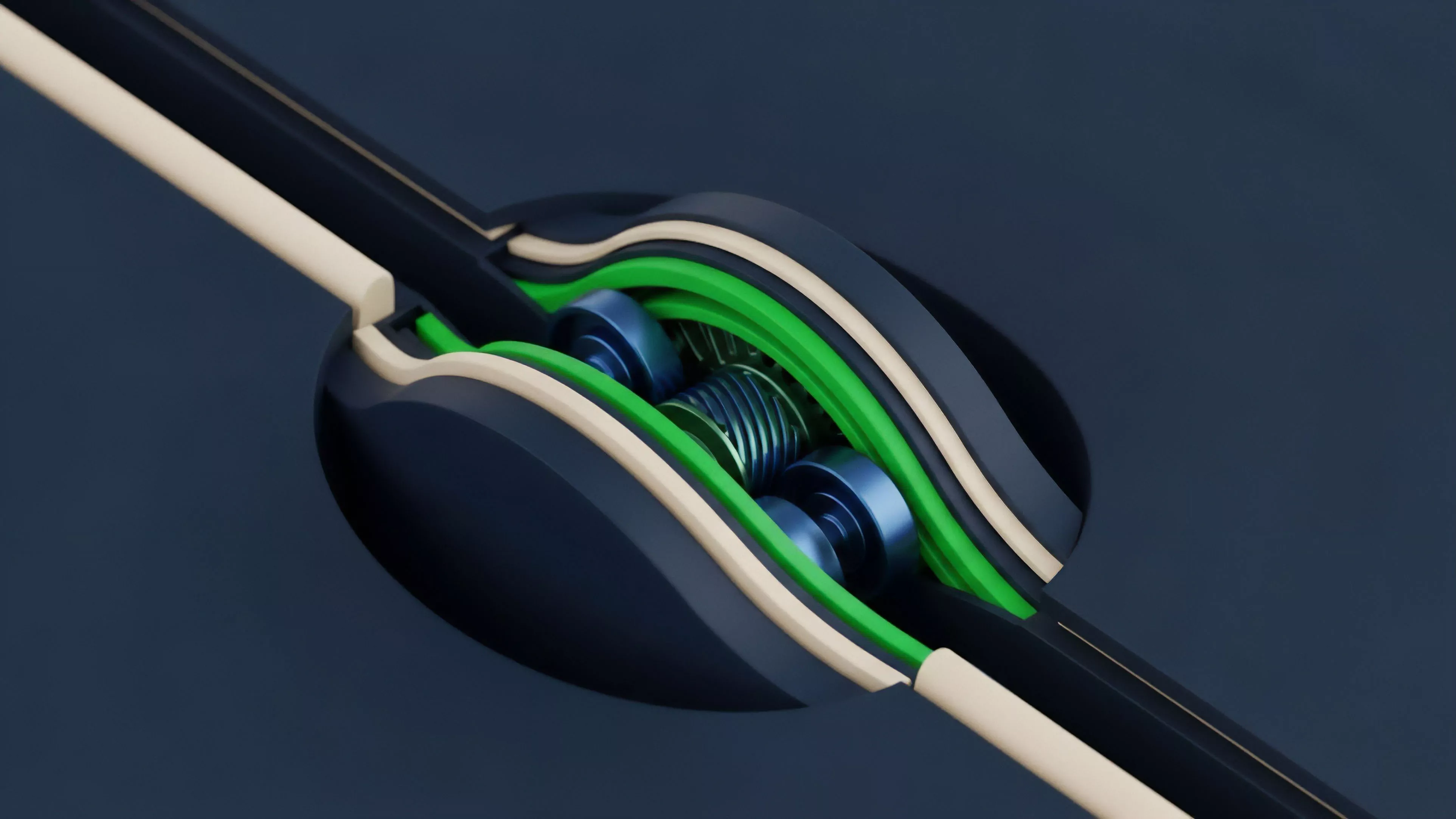

Current implementation strategies focus on the integration of decentralized oracles and high-performance indexing solutions that aggregate data from multiple chains and protocols. These systems work to normalize disparate data formats into a unified stream, providing a coherent picture of market depth and order flow. Participants now utilize advanced monitoring tools that scan the mempool and on-chain state to identify shifts in market sentiment before they manifest in price action.
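Normalizing disparate formats into a unified stream usually comes down to one adapter per source that maps into a shared schema, followed by a time-ordered merge. Both raw message formats below are invented for illustration, as are `Trade`, the adapter functions, and the venue names. Real feeds differ, and each needs its own adapter.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Trade:
    venue: str
    symbol: str   # canonical "BASE-QUOTE" form
    price: float
    size: float
    side: str     # taker side: "buy" or "sell"
    ts_ms: int    # Unix epoch milliseconds

def from_venue_a(raw):
    # Hypothetical format: numbers as strings, "B"/"S" sides, ms timestamps.
    return Trade("venue_a", raw["sym"], float(raw["p"]), float(raw["q"]),
                 "buy" if raw["s"] == "B" else "sell", int(raw["t"]))

def from_venue_b(raw):
    # Hypothetical format: "BASE/QUOTE" symbols, float-seconds timestamps.
    return Trade("venue_b", raw["market"].replace("/", "-"), float(raw["price"]),
                 float(raw["amount"]), raw["taker_side"], round(raw["timestamp"] * 1000))

NORMALIZERS = {"venue_a": from_venue_a, "venue_b": from_venue_b}

def unified_stream(batches):
    """Normalize raw messages from every venue and order them by time."""
    trades = [NORMALIZERS[venue](raw) for venue, raws in batches.items() for raw in raws]
    return sorted(trades, key=lambda t: t.ts_ms)

stream = unified_stream({
    "venue_a": [{"sym": "ETH-USD", "p": "2001.5", "q": "0.4", "s": "B", "t": 1700000000900}],
    "venue_b": [{"market": "ETH/USD", "price": 2001.2, "amount": 1.0,
                 "taker_side": "sell", "timestamp": 1700000000.5}],
})
print([(t.venue, t.symbol, t.price, t.ts_ms) for t in stream])
```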

- Decentralized Oracles: These provide the secure, tamper-resistant data feeds required for executing complex derivative contracts on-chain.

- Indexing Protocols: Specialized services that parse blockchain data into queryable formats, allowing for rapid analysis of historical and real-time trade flow.

- Latency Mitigation: Architectural choices such as batch auctions or off-chain matching engines that prioritize fairness in data distribution.
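Batch auctions address latency by clearing all orders collected in an interval at one uniform price, so arriving slightly earlier within the batch earns no better fill. The sketch below picks the price that maximizes matched volume. Its tie-break (lowest such price) is a simplifying assumption; production auctions typically add further rules such as minimizing order imbalance.

```python
def clearing_price(buys, sells):
    """Uniform-price batch auction over limit orders collected in one interval.

    buys/sells: lists of (limit_price, quantity). Every fill in the batch
    executes at the single returned price. Ties in matched volume go to the
    lowest candidate price (a simplifying assumption).
    Returns (price, matched_volume), or (None, 0.0) if the orders do not cross.
    """
    candidates = sorted({p for p, _ in buys} | {p for p, _ in sells})
    best_price, best_volume = None, 0.0
    for price in candidates:
        demand = sum(q for p, q in buys if p >= price)   # buyers willing at this price
        supply = sum(q for p, q in sells if p <= price)  # sellers willing at this price
        volume = min(demand, supply)
        if volume > best_volume:
            best_price, best_volume = price, volume
    return best_price, best_volume

buys = [(101, 5), (100, 3), (99, 2)]
sells = [(98, 4), (100, 4), (102, 5)]
print(clearing_price(buys, sells))  # → (100, 8)
```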

This approach shifts the burden of verification from a central authority to the market participants themselves. By creating open access to the raw data, protocols allow any actor to run their own risk assessment models, which creates a more competitive and efficient environment. The ability to monitor these flows is a prerequisite for any participant attempting to manage complex risk in a decentralized setting.

Evolution

The trajectory of Market Data Transparency has moved from simple, reactive trade reporting to proactive, predictive market analysis.

Early systems were limited by the bandwidth and computational constraints of blockchain networks, often resulting in stale data and limited visibility into order books. Modern protocols have transitioned to modular architectures where data availability is decoupled from transaction execution, allowing for higher throughput and more detailed reporting without sacrificing security.

The evolution of data standards directly enables the maturation of decentralized derivatives into complex, institutional-grade financial instruments.

This progress has been punctuated by significant shifts in protocol design, where the focus has moved toward creating native, transparent hooks for external auditors and quantitative traders. We have witnessed a transformation from siloed, opaque liquidity pools to interconnected systems where data flows freely across bridges and chains. The current state reflects a maturing infrastructure, one that prioritizes the integrity of the data stream as much as the security of the smart contracts themselves.

Horizon

Future developments will likely focus on the integration of zero-knowledge proofs to verify the integrity of market data without sacrificing the privacy of individual participants.

This development addresses the tension between public visibility and the need for institutional privacy in high-volume trading environments. Furthermore, the standardization of cross-protocol data streams will facilitate the emergence of global order books, effectively unifying the fragmented liquidity of the current landscape.
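Zero-knowledge proof systems are too involved for a short sketch. A Merkle commitment, a much simpler hash-based technique swapped in here purely for illustration, captures part of the idea: a venue publishes one root hash for a batch of trades, and any single participant can later be shown that their trade is included without the other records being revealed. It is not zero-knowledge, since a proof exposes sibling hashes and the tree shape. The leaf and node prefixes and the padding rule below are assumptions chosen for brevity.

```python
import hashlib

def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()

def build_levels(leaves):
    """All Merkle tree levels, leaf hashes first and root last."""
    level = [_h(b"\x00" + leaf) for leaf in leaves]  # 0x00 prefix marks leaves
    levels = [level]
    while len(level) > 1:
        # Simple padding rule chosen for brevity: duplicate an odd last node.
        padded = level + [level[-1]] if len(level) % 2 else level
        level = [_h(b"\x01" + padded[i] + padded[i + 1])  # 0x01 marks inner nodes
                 for i in range(0, len(padded), 2)]
        levels.append(level)
    return levels

def inclusion_proof(levels, index):
    """Sibling hashes from leaf to root, with a flag for our side at each step."""
    proof = []
    for level in levels[:-1]:
        sibling = index ^ 1
        sibling_hash = level[sibling] if sibling < len(level) else level[index]
        proof.append((sibling_hash, index % 2 == 0))  # True: node is the left child
        index //= 2
    return proof

def verify_inclusion(root, leaf, proof):
    node = _h(b"\x00" + leaf)
    for sibling_hash, is_left in proof:
        pair = node + sibling_hash if is_left else sibling_hash + node
        node = _h(b"\x01" + pair)
    return node == root

batch = [f"trade-{i}".encode() for i in range(5)]
levels = build_levels(batch)
root = levels[-1][0]                 # the only value the venue publishes
proof = inclusion_proof(levels, 3)   # shown to the holder of trade 3 alone
print(verify_inclusion(root, batch[3], proof))  # → True
```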

| Development | Systemic Implication |
| --- | --- |
| Zero Knowledge Verification | Verifiable data integrity without exposing private participant strategy. |
| Cross Chain Liquidity Aggregation | Unified market depth across disparate blockchain ecosystems. |
| Predictive Flow Analysis | Automated agent responses to real-time, transparent market signals. |
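As a small example of the "transparent market signals" an automated agent might consume, the sketch below computes order book depth imbalance, a widely used microstructure measure, and maps it to a coarse label. The choice of signal, the `depth` of 5 levels, and the 0.6 threshold are all illustrative assumptions, not a recommended strategy.

```python
def book_imbalance(bids, asks, depth=5):
    """Signed resting-size imbalance over the top `depth` levels, in [-1, 1].

    bids/asks: lists of (price, size) sorted best-first.
    +1 means all visible size rests on the bid side; -1 all on the ask side.
    """
    bid_size = sum(size for _, size in bids[:depth])
    ask_size = sum(size for _, size in asks[:depth])
    total = bid_size + ask_size
    return 0.0 if total == 0 else (bid_size - ask_size) / total

def flow_signal(imbalance, threshold=0.6):
    """Map imbalance to a coarse label an automated agent could act on."""
    if imbalance > threshold:
        return "bid_pressure"
    if imbalance < -threshold:
        return "ask_pressure"
    return "neutral"

imb = book_imbalance([(99.5, 9.0), (99.0, 3.0)], [(100.5, 1.0), (101.0, 1.0)])
print(round(imb, 3), flow_signal(imb))  # → 0.714 bid_pressure
```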

As these technologies mature, the barrier to entry for sophisticated market makers will lower, leading to increased efficiency and tighter spreads in decentralized options markets. The ultimate goal is a system where transparency is a native, unchangeable property of the protocol architecture, rather than an added layer of reporting. This shift will define the next phase of decentralized financial evolution, where data accuracy and accessibility dictate the success of market participants and the resilience of the entire system. What happens to market stability when the speed of data propagation surpasses the latency limits of human and automated decision-making?