Essence

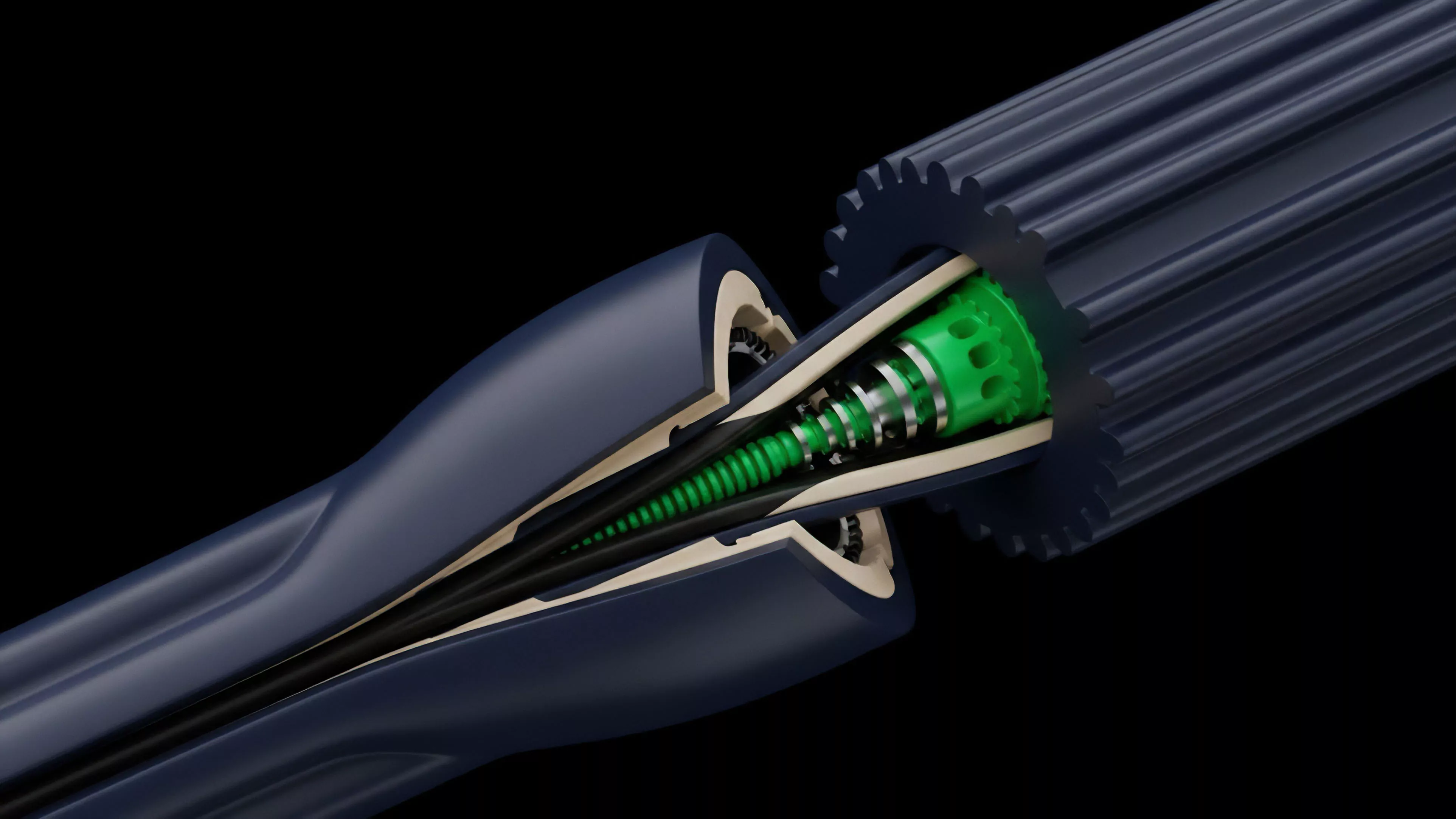

Financial Modeling Tools represent the computational substrate upon which decentralized derivatives markets function. These systems translate complex probabilistic outcomes into executable code, providing the infrastructure for pricing, risk assessment, and margin management in environments devoid of centralized clearinghouses. They act as the primary interface between raw market volatility and the structured financial instruments that allow participants to hedge or speculate with precision.

Financial modeling tools function as the digital infrastructure for pricing and risk management in decentralized derivatives markets.

The operational value of these tools resides in their ability to automate the valuation of path-dependent assets. By codifying mathematical models directly into smart contracts, these systems eliminate counterparty uncertainty regarding settlement terms. This transformation of abstract quantitative theory into immutable protocol logic ensures that every participant operates under the same set of algorithmic constraints, thereby establishing a transparent foundation for price discovery.

Origin

The genesis of these tools traces back to the adaptation of classical quantitative finance frameworks, specifically the Black-Scholes-Merton model and its binomial extensions, into the restricted environment of programmable blockchains. Early iterations sought to replicate traditional order-book dynamics on-chain, but the high latency and transaction costs of early protocols necessitated a shift toward automated market maker architectures and oracle-dependent pricing mechanisms.

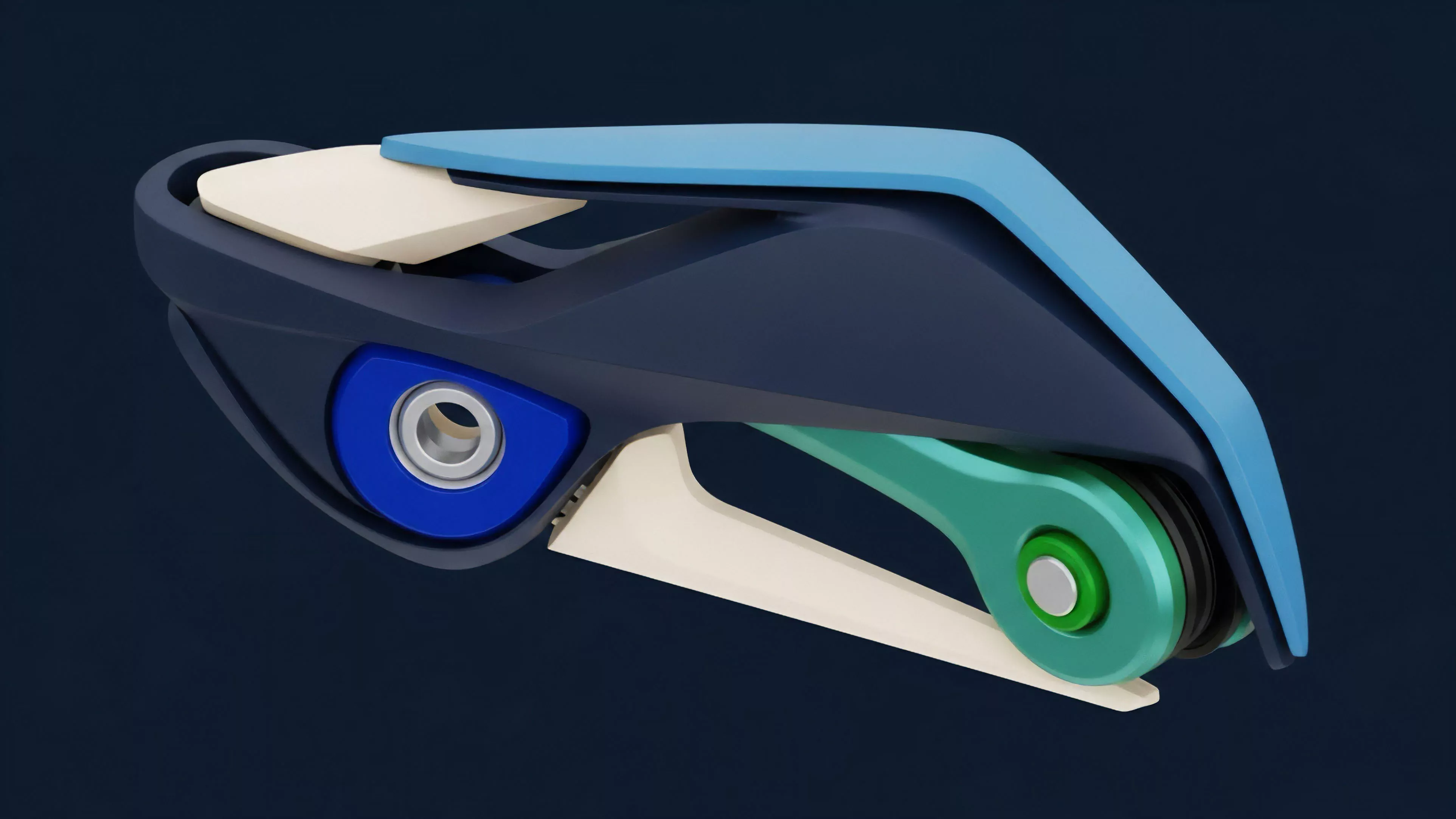

The evolution from centralized exchange architectures to decentralized protocol designs demanded a complete rethinking of margin engines. Because high-frequency updates are impractical on-chain, developers needed a new class of Financial Modeling Tools capable of handling extreme volatility without constant human intervention. This need produced the current generation of protocols, which use:

- Automated Oracles providing external price feeds to trigger liquidation events.

- Dynamic Margin Requirements that adjust based on real-time asset volatility and network congestion.

- Synthetic Collateralization mechanisms allowing for cross-asset hedging without direct exposure to the underlying spot market.
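The interaction between an oracle price feed and a volatility-sensitive margin rule can be illustrated with a minimal off-chain sketch. Everything here is an assumption made for illustration: the function names, the linear margin rule, and the parameters `base`, `sensitivity`, and `cap` are hypothetical and are not taken from any specific protocol. Network congestion is left out for simplicity.

```python
import math
import statistics


def realized_volatility(prices, periods_per_year=365):
    """Annualized sample standard deviation of log returns."""
    log_returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return statistics.stdev(log_returns) * math.sqrt(periods_per_year)


def margin_requirement(volatility, base=0.05, sensitivity=0.10, cap=0.50):
    """Hypothetical rule: base margin plus a volatility-proportional add-on, capped."""
    return min(cap, base + sensitivity * volatility)


def is_liquidatable(collateral, position_size, oracle_price, required_margin):
    """True when collateral is below the required fraction of notional value,
    with notional measured at the oracle-reported price."""
    notional = position_size * oracle_price
    return collateral < required_margin * notional


# Two illustrative daily price series (made-up numbers, not market data).
calm = [100, 101, 100, 102, 101, 102]
stressed = [100, 92, 105, 88, 110, 95]

calm_margin = margin_requirement(realized_volatility(calm))
stressed_margin = margin_requirement(realized_volatility(stressed))

# The same position can be safe in calm markets and liquidatable under stress.
safe_when_calm = not is_liquidatable(1000, 100, 100.0, calm_margin)
at_risk_when_stressed = is_liquidatable(1000, 100, 100.0, stressed_margin)
```

The design point is that the margin requirement is an output of observed market data rather than a fixed constant, so the liquidation condition tightens automatically as volatility rises.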

Theory

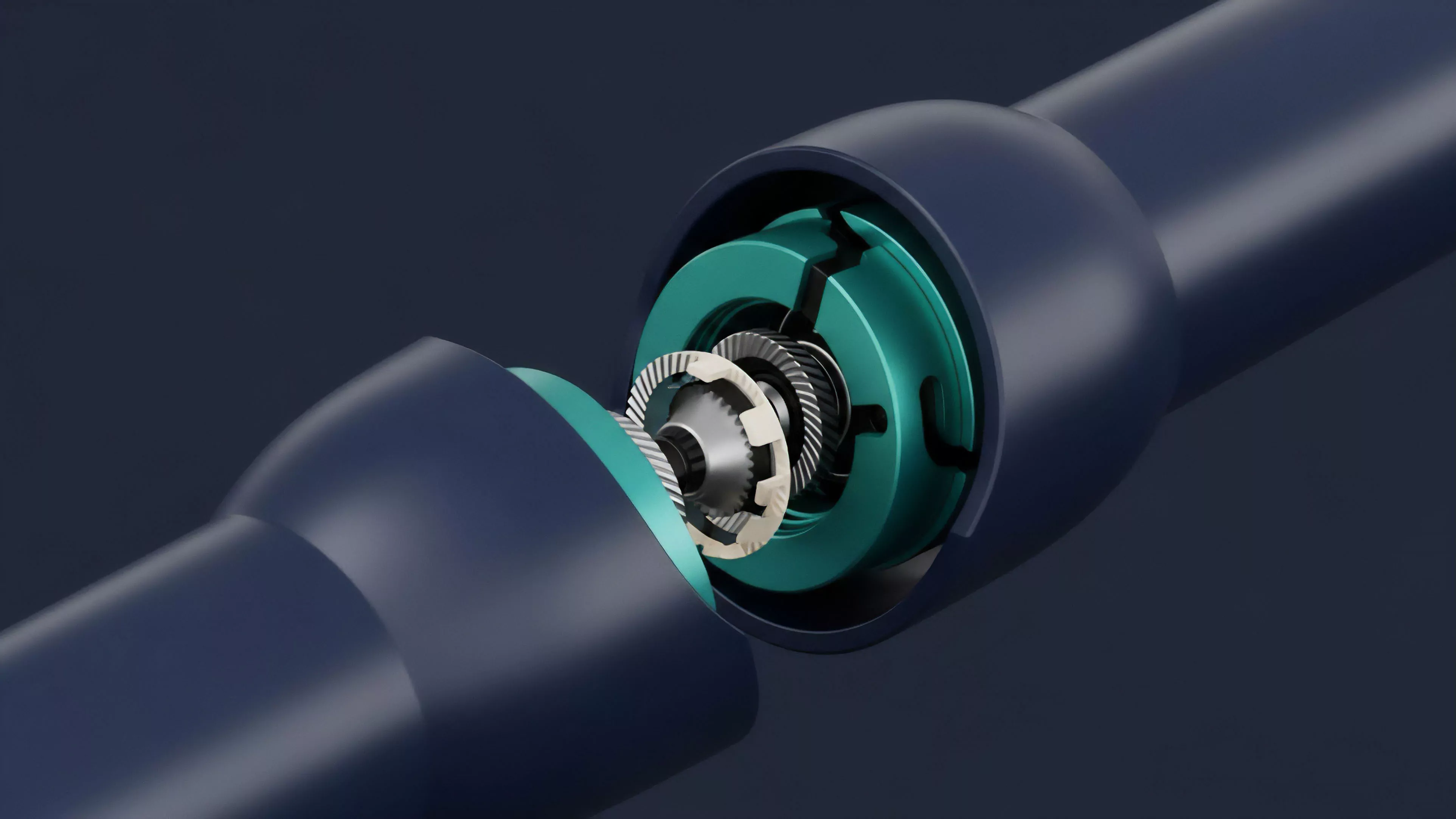

At the structural level, Financial Modeling Tools operate through the integration of stochastic calculus and game theory. The objective is to maintain a state of equilibrium where the protocol remains solvent under diverse market conditions. This involves calculating the Greeks (Delta, Gamma, Theta, Vega, and Rho) within the constraints of smart contract gas limits and execution speed.

Failing to account for these sensitivities accurately can leave a protocol insolvent during periods of rapid price movement.

| Metric | Function in Decentralized Models |
| --- | --- |
| Delta | Sensitivity of option value to underlying asset price changes |
| Gamma | Rate of change in Delta relative to underlying price |
| Theta | Time decay impact on option premiums |
| Vega | Sensitivity to changes in implied volatility |
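For reference, the sensitivities listed above have closed-form expressions under the Black-Scholes-Merton model. The sketch below computes them for a European call with no dividends, using floating-point arithmetic in an off-chain setting. The function and parameter names are illustrative assumptions. An on-chain implementation would typically need fixed-point arithmetic and approximations of the normal distribution to stay within gas limits, which this sketch does not attempt.

```python
import math


def norm_pdf(x):
    """Standard normal probability density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x):
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot, strike, rate, vol, t):
    """Black-Scholes-Merton price and Greeks for a European call (no dividends).

    rate and vol are annualized; t is time to expiry in years.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discount = math.exp(-rate * t)
    return {
        "price": spot * norm_cdf(d1) - strike * discount * norm_cdf(d2),
        "delta": norm_cdf(d1),
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_t),
        # Theta is expressed per year; divide by 365 for a per-day figure.
        "theta": (-spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
                  - rate * strike * discount * norm_cdf(d2)),
        # Vega and Rho are per 1.00 change in vol and rate respectively.
        "vega": spot * norm_pdf(d1) * sqrt_t,
        "rho": strike * t * discount * norm_cdf(d2),
    }


greeks = call_greeks(spot=100.0, strike=100.0, rate=0.05, vol=0.20, t=1.0)
```

For these at-the-money inputs, Delta is above 0.5 and Theta is negative, matching the intuition that a call gains value as the underlying rises and loses value as expiry approaches.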

The systemic implication of these models is the shift from discretionary risk management to algorithmic enforcement. Participants must interact with these tools acknowledging that code executes regardless of market context. One might argue that our reliance on these automated engines introduces a unique form of Systems Risk, where the interconnectedness of liquidity providers and liquidators creates feedback loops that exacerbate market stress rather than absorbing it.

The mathematics are sound, yet the protocol physics often clash with the chaotic reality of human-driven order flow.

Approach

Modern practitioners utilize a combination of on-chain data analysis and off-chain quantitative simulation to calibrate these tools. The current workflow emphasizes the stress-testing of protocol parameters against historical volatility cycles. By running Monte Carlo simulations, architects determine the optimal liquidation thresholds and collateral ratios required to survive tail-risk events.
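A minimal sketch of this kind of simulation follows. It assumes collateral value follows a driftless geometric Brownian motion with a single annualized volatility input, which is a strong simplification of real market behavior. All function names, parameter values, and candidate ratios are hypothetical. In practice the volatility input would be calibrated to historical stress periods rather than chosen by hand.

```python
import math
import random


def simulate_min_multiples(vol, horizon_days, n_paths, seed=7):
    """For each simulated path, return the lowest value of
    (price / initial price) observed at daily steps, including day 0."""
    rng = random.Random(seed)
    dt = 1.0 / 365.0
    step_drift = -0.5 * vol * vol * dt   # driftless GBM in price terms
    step_vol = vol * math.sqrt(dt)
    minima = []
    for _ in range(n_paths):
        log_price = 0.0
        lowest = 0.0
        for _ in range(horizon_days):
            log_price += step_drift + step_vol * rng.gauss(0.0, 1.0)
            lowest = min(lowest, log_price)
        minima.append(math.exp(lowest))
    return minima


def breach_probability(minima, collateral_ratio, liquidation_ratio):
    """Share of paths on which the collateral ratio drops below the
    liquidation ratio at some point during the horizon."""
    threshold = liquidation_ratio / collateral_ratio
    return sum(m < threshold for m in minima) / len(minima)


def minimum_safe_ratio(minima, liquidation_ratio, candidates, tolerance):
    """Smallest candidate collateral ratio whose breach probability is
    within the tolerance, or None if no candidate qualifies."""
    for ratio in sorted(candidates):
        if breach_probability(minima, ratio, liquidation_ratio) <= tolerance:
            return ratio
    return None


minima = simulate_min_multiples(vol=0.8, horizon_days=30, n_paths=2000)
candidates = [1.2, 1.5, 2.0, 3.0]
probabilities = [breach_probability(minima, c, 1.1) for c in candidates]
suggested = minimum_safe_ratio(minima, 1.1, candidates, tolerance=0.01)
```

Simulating the path minima once and reusing them for every candidate ratio keeps the comparison consistent: each candidate is tested against the same set of scenarios, so the estimated breach probability can only fall as the collateral ratio rises.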

Quantitative modeling in decentralized finance relies on the rigorous stress-testing of protocol parameters against historical volatility data.

The strategic implementation of these tools involves three primary pillars:

- Risk Sensitivity Analysis involving the continuous monitoring of portfolio exposure to ensure margin requirements remain sufficient.

- Liquidity Provision Modeling focusing on the optimization of capital efficiency for market makers in automated pools.

- Smart Contract Auditing, which serves as the ultimate constraint on the financial logic: any vulnerability in the code renders the underlying model irrelevant.

Evolution

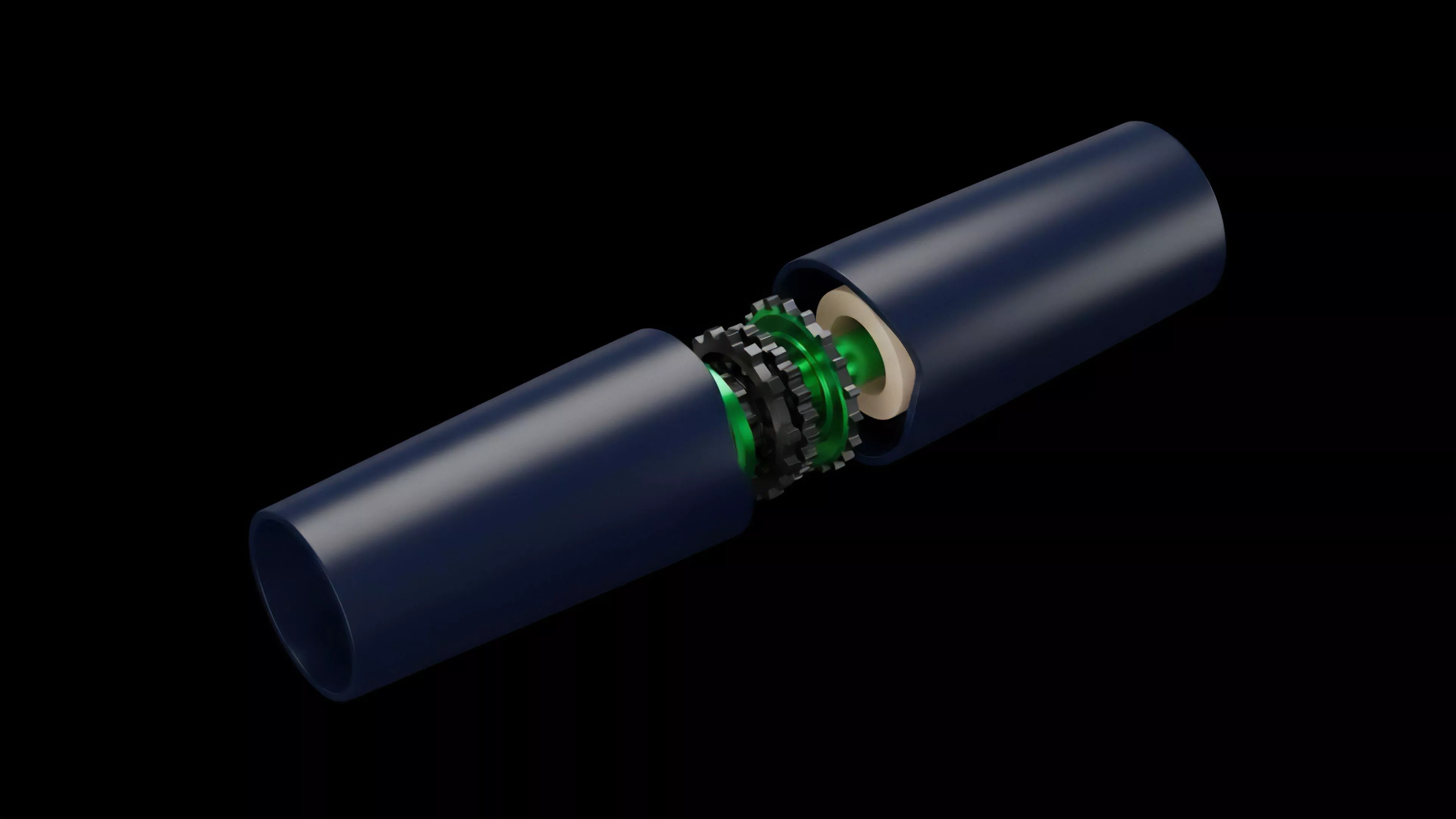

The transition of these tools from basic spot-based calculators to sophisticated multi-asset derivative engines reflects the broader maturation of the decentralized financial stack. Early versions struggled with Oracle Latency and Liquidation Slippage, which frequently led to under-collateralization. Recent advancements have moved toward hybrid off-chain computation models where complex pricing is performed in high-performance environments and only the final state transition is committed to the blockchain.

This structural shift addresses the inherent trade-off between decentralization and performance. The architecture now favors modularity, allowing protocols to swap pricing models or oracle providers without requiring a full system migration. The evolution of Financial Modeling Tools is increasingly driven by the need for capital efficiency, as participants demand higher leverage ratios and tighter spreads in a competitive, global market.
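The modularity described here can be sketched as a pair of interfaces that an engine depends on, so that either component can be replaced independently. This is an illustrative off-chain analogy only. The class names, the two toy pricing models, and the fixed oracle are all hypothetical, and a real protocol would express the same idea through contract interfaces and governance-controlled upgrades.

```python
from dataclasses import dataclass
from typing import Protocol


class PriceOracle(Protocol):
    def spot(self) -> float: ...


class PricingModel(Protocol):
    def value(self, spot: float, strike: float) -> float: ...


@dataclass
class FixedOracle:
    """Stand-in oracle that always reports the same price."""
    price: float

    def spot(self) -> float:
        return self.price


class IntrinsicCallModel:
    """Toy model: values a call at its intrinsic value only."""

    def value(self, spot: float, strike: float) -> float:
        return max(spot - strike, 0.0)


@dataclass
class PremiumCallModel:
    """Toy model: intrinsic value plus a flat premium."""
    premium: float

    def value(self, spot: float, strike: float) -> float:
        return max(spot - strike, 0.0) + self.premium


@dataclass
class DerivativeEngine:
    """Depends only on the two interfaces, not on concrete implementations."""
    oracle: PriceOracle
    model: PricingModel

    def mark(self, strike: float) -> float:
        return self.model.value(self.oracle.spot(), strike)


engine = DerivativeEngine(oracle=FixedOracle(120.0), model=IntrinsicCallModel())
intrinsic_mark = engine.mark(strike=100.0)

# Swapping the pricing model requires no change to the engine or the oracle.
engine.model = PremiumCallModel(premium=5.0)
premium_mark = engine.mark(strike=100.0)
```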

Horizon

Future development will likely prioritize the integration of machine learning models to adjust risk parameters autonomously. As protocols become more complex, the ability to predict and respond to liquidity crises will become the primary competitive advantage. The next generation of tools will need to account for Macro-Crypto Correlation alongside local protocol liquidity, so that global economic shifts do not lead to localized system failures.

| Future Trend | Anticipated Impact |
| --- | --- |
| Autonomous Risk Adjustment | Reduced manual governance intervention |
| Cross-Protocol Liquidity Aggregation | Lower slippage and deeper order books |
| Predictive Liquidation Engines | Proactive solvency management during high volatility |

The ultimate goal is the creation of a resilient financial layer that functions with the reliability of traditional banking but the transparency of open-source software. This requires a departure from rigid, static models toward adaptive systems that learn from the adversarial nature of the market. Our success depends on the ability to translate these complex mathematical requirements into interfaces that allow for intuitive risk management by a wider array of participants.