Essence

Dynamic Margin Solvency (DMS) Verification is the continuous, algorithmic audit of a derivative position's collateral adequacy against potential market movements. This mechanism functions as the financial physics engine of a protocol, dictating the precise boundary between solvent capital and systemic risk. Its primary objective is to guarantee that the collateral posted by a trader is sufficient to absorb the maximum probable loss ⎊ typically defined by a high-percentile Value-at-Risk (VaR) or Expected Shortfall (ES) calculation ⎊ over the liquidation horizon, the time the protocol needs to close out the position.

The precision of this verification directly correlates with the capital efficiency of the entire options platform. A system that over-margins is inefficient; a system that under-margins is fragile.

The core challenge in decentralized finance (DeFi) is executing this verification in a trustless, permissionless, and computationally constrained environment. Traditional finance (TradFi) systems rely on centralized clearing houses that run risk calculations in periodic batches, classically at the end of the trading day. In contrast, DeFi options protocols demand a continuous, near-instantaneous assessment of risk, a requirement born from the 24/7 nature of crypto markets and the potential for cascading liquidations.

This necessitates a fundamental shift in the computational architecture of risk modeling, moving the solvency check from a human-audited report to an immutable, verifiable smart contract function.

Dynamic Margin Solvency Verification is the core computational defense against counterparty risk in a permissionless options market.

A robust DMS verification system must account for the cross-asset nature of collateral ⎊ often a basket of different tokens ⎊ and the non-linear payoff structure of options. The complexity is compounded by the fact that options portfolios are multi-dimensional, sensitive not just to the underlying price but also to volatility, time decay, and interest rates. The system must synthesize all these factors into a single, actionable solvency score.
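The sketch below makes the "single, actionable solvency score" concrete for the collateral side. It values a multi-token collateral basket after per-asset haircuts and divides the result by the required margin. The names `CollateralAsset`, `effective_collateral` and `solvency_score` are hypothetical, and so are the haircut figures. In a real protocol, the required margin would itself come from the VaR/ES or scenario model, and haircuts would be calibrated per asset.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class CollateralAsset:
    symbol: str
    amount: float   # token units posted
    price: float    # oracle price in the margin currency (e.g. USD)
    haircut: float  # hypothetical fraction of value disregarded, 0.0 to 1.0


def effective_collateral(basket: Sequence[CollateralAsset]) -> float:
    """Haircut-adjusted value of a multi-token collateral basket."""
    return sum(a.amount * a.price * (1.0 - a.haircut) for a in basket)


def solvency_score(basket: Sequence[CollateralAsset], required_margin: float) -> float:
    """Effective collateral divided by required margin; below 1.0 means liquidatable."""
    if required_margin <= 0:
        return float("inf")  # nothing to cover
    return effective_collateral(basket) / required_margin


if __name__ == "__main__":
    basket = [
        CollateralAsset("USDC", 10_000.0, 1.0, 0.0),
        CollateralAsset("ETH", 5.0, 3_000.0, 0.15),  # volatile collateral takes a haircut
    ]
    print(solvency_score(basket, required_margin=20_000.0))
```

With these assumed numbers, the basket is worth 22,750 after haircuts, so the score is 1.1375. A protocol might choose to act slightly above 1.0 to leave room for execution slippage.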

Origin

Modern portfolio-based margin verification originates in the clearing house models of the late 20th century, specifically the Standard Portfolio Analysis of Risk (SPAN) system introduced by the Chicago Mercantile Exchange in 1988. SPAN revolutionized risk management by shifting from position-by-position margin calculation to a portfolio-based approach that nets risk across instruments. This allowed significant capital efficiency while maintaining a defined safety threshold.

The transition to the crypto derivatives space introduced two existential constraints that broke the traditional model: the absence of a central counterparty (CCP) and the speed of settlement. In a decentralized protocol, the CCP function ⎊ guaranteeing trades and managing risk ⎊ is replaced by code and collateral. Early crypto derivatives platforms, particularly perpetual futures, began with simple, isolated margin checks, typically a fixed maintenance margin ratio against the notional value.

This was crude and failed to account for portfolio diversification benefits.

The advent of crypto options and structured products forced a necessary evolution. We could not simply port the slow, monolithic SPAN algorithm onto a blockchain. The high gas costs and latency of L1 networks made running complex, multi-variable risk calculations in every block prohibitively expensive.

The initial attempts at options margining relied on off-chain risk engines and on-chain settlement, creating a critical vulnerability ⎊ the reliance on trusted oracles to report solvency status. The true origin story of modern DMS Verification in DeFi is the architectural compromise between computational cost and financial rigor. The market demanded capital efficiency, and the technology could only deliver it by outsourcing the heavy math to faster, cheaper environments, a trade-off that remains the central tension in current design.

Theory

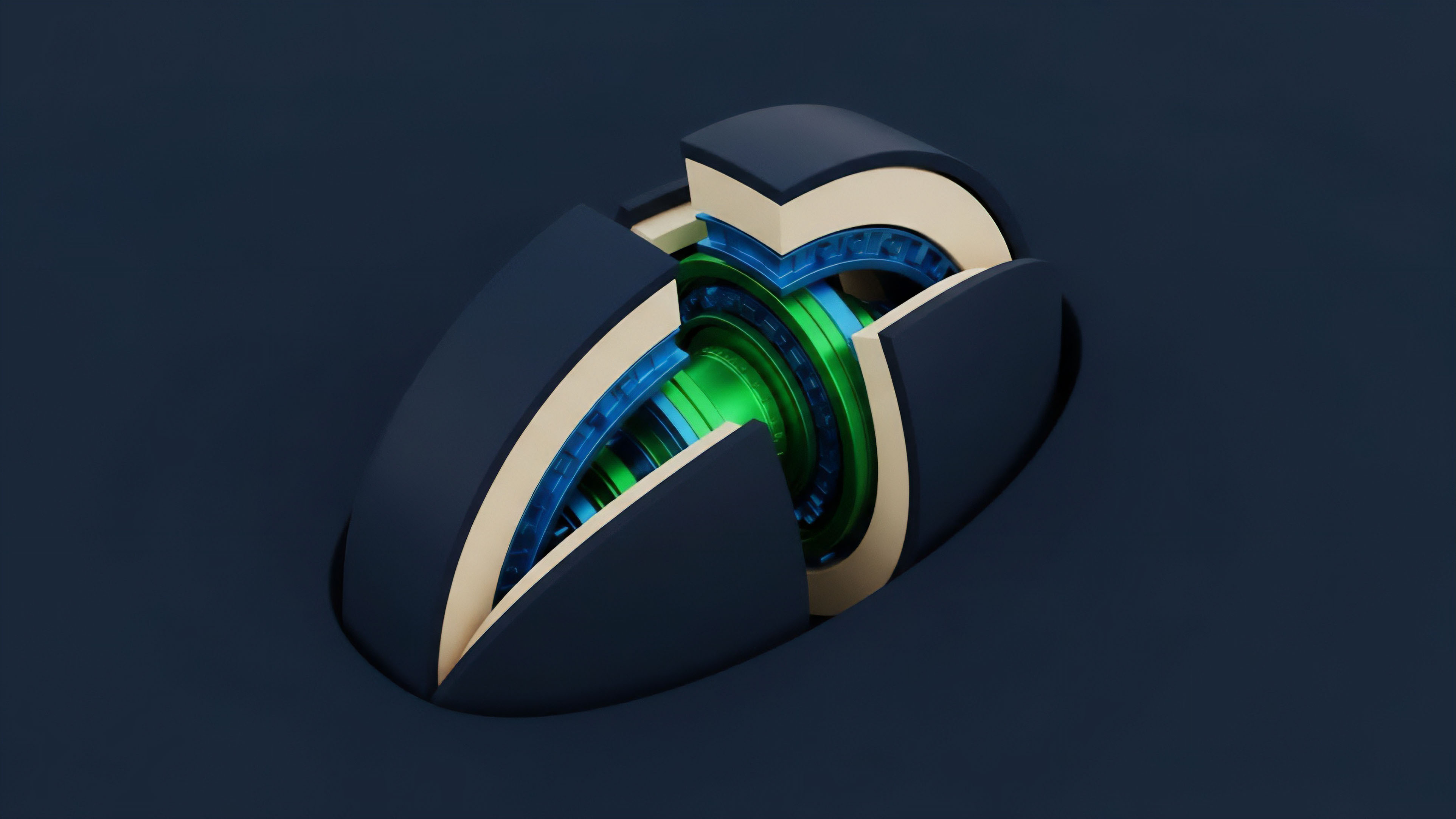

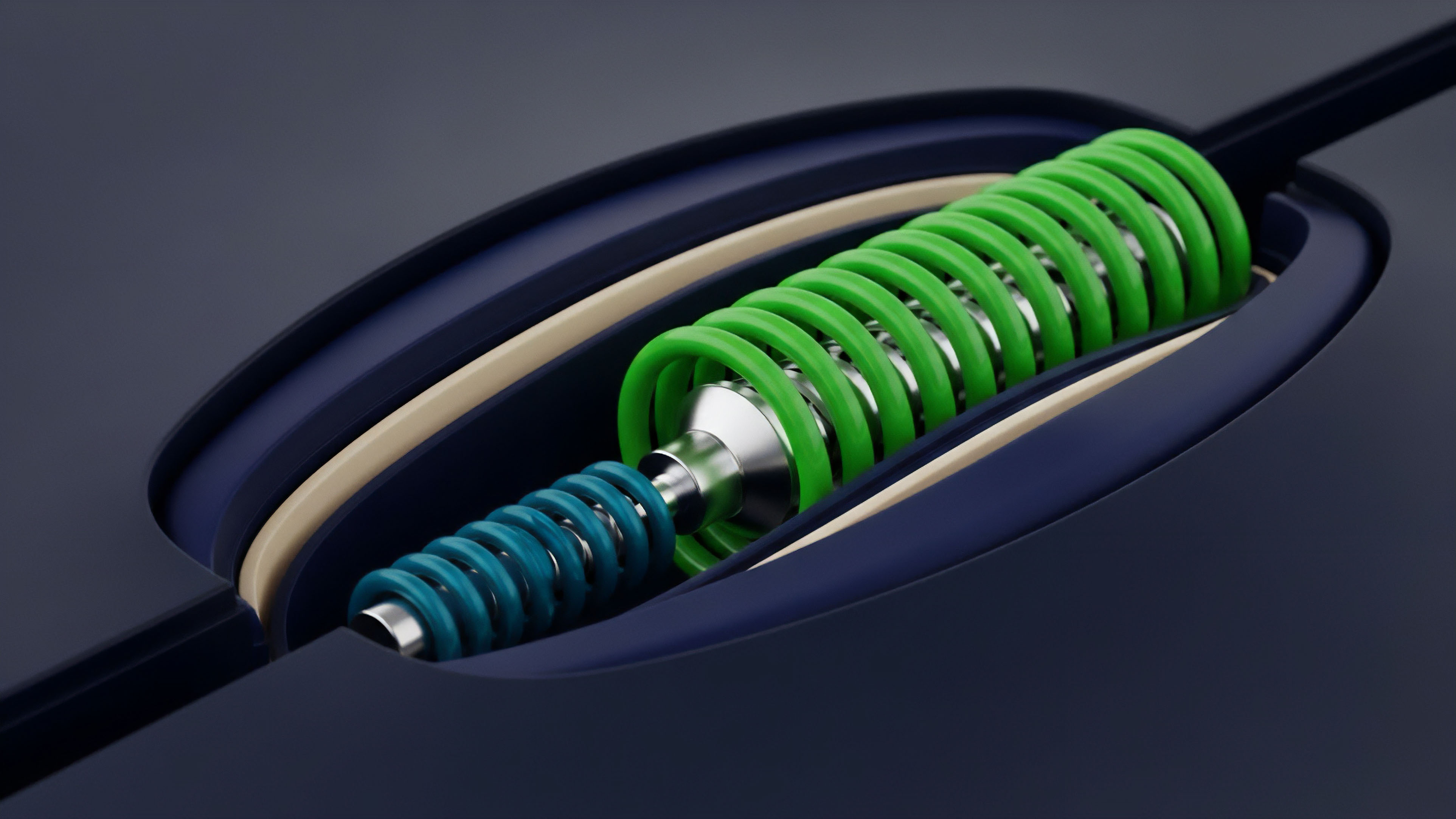

The theoretical foundation of Dynamic Margin Solvency Verification rests on the rigorous application of quantitative finance principles, primarily portfolio risk metrics adapted for non-linear instruments and adversarial market conditions. The challenge is modeling the maximum credible loss under high-volatility conditions, a task that requires continuous, multivariate sensitivity analysis. The standard approach begins with the Greeks ⎊ Delta ($\Delta$), the sensitivity to the underlying price; Gamma ($\Gamma$), the sensitivity of Delta to the underlying price; and Vega ($\mathcal{V}$), the sensitivity to volatility ⎊ but quickly extends into higher-order and cross sensitivities. The most important of these are Charm and Vanna, which describe how Delta changes with the passage of time and with shifts in volatility, respectively.
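The first-order and cross Greeks named above have closed forms under Black-Scholes. The sketch below assumes a European call on a non-dividend-paying underlying with a constant rate and constant volatility. That is a simplification: it ignores the skew and the jump risk typical of crypto. Function names are illustrative. Charm is reported as the change in Delta as calendar time passes, which is minus the derivative with respect to time to expiry.

```python
import math


def _norm_pdf(x):
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _norm_cdf(x):
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _d1_d2(S, K, T, r, sigma):
    sqrt_t = math.sqrt(T)
    d1 = (math.log(S / K) + (r + 0.5 * sigma**2) * T) / (sigma * sqrt_t)
    return d1, d1 - sigma * sqrt_t


def bs_call_price(S, K, T, r, sigma):
    """Black-Scholes price of a European call (no dividends)."""
    d1, d2 = _d1_d2(S, K, T, r, sigma)
    return S * _norm_cdf(d1) - K * math.exp(-r * T) * _norm_cdf(d2)


def bs_call_greeks(S, K, T, r, sigma):
    """Analytic sensitivities of a European call under Black-Scholes."""
    d1, d2 = _d1_d2(S, K, T, r, sigma)
    sqrt_t = math.sqrt(T)
    pdf = _norm_pdf(d1)
    return {
        "delta": _norm_cdf(d1),               # dV/dS
        "gamma": pdf / (S * sigma * sqrt_t),  # d2V/dS2
        "vega": S * pdf * sqrt_t,             # dV/dsigma, per 1.00 (100 vol points)
        "vanna": -pdf * d2 / sigma,           # dDelta/dsigma
        # Charm: -dDelta/dT, i.e. Delta drift as one unit of time passes.
        "charm": -pdf * (2 * r * T - d2 * sigma * sqrt_t) / (2 * T * sigma * sqrt_t),
    }


if __name__ == "__main__":
    print(bs_call_greeks(S=100.0, K=110.0, T=0.25, r=0.03, sigma=0.8))
```

A margin engine would sum these per-position values, weighted by quantity, to get portfolio-level sensitivities.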

A truly solvent margin system must not simply check the current mark-to-market value; it must revalue the portfolio across thousands of potential future price and volatility paths. This is typically done with historical simulation or with Monte Carlo simulation calibrated to historical or implied parameters. Either method generates a distribution of potential portfolio losses, from which the protocol selects a high percentile, typically 99.5% or 99.9%, as the Value-at-Risk (VaR). However, VaR is not subadditive in general, and it ignores the size of losses beyond its cutoff ⎊ a critical flaw in fat-tailed crypto markets. This leads many architects to favor Expected Shortfall (ES), the average loss across the scenarios beyond the VaR cutoff, which is a more conservative and coherent risk measure.
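Below is a minimal Monte Carlo sketch of the VaR/ES step. It assumes a single short call, revalued with Black-Scholes after one step of geometric Brownian motion, plus an independent lognormal shock to implied volatility. The `vol_of_vol` parameter, the seed, and all function names are hypothetical. A production engine would use the full portfolio, correlated shocks, and a calibrated volatility surface. The ES estimator averages the worst (1 - alpha) fraction of simulated losses, so it can never fall below the VaR estimate.

```python
import math
import random


def empirical_var_es(losses, alpha):
    """Empirical VaR and Expected Shortfall at confidence `alpha`.

    Losses are positive numbers (money lost). VaR is the least severe loss
    inside the worst (1 - alpha) tail; ES is the mean of that tail.
    """
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must lie in (0, 1)")
    ordered = sorted(losses, reverse=True)                # worst loss first
    tail_n = max(1, round((1.0 - alpha) * len(ordered)))  # number of tail samples
    tail = ordered[:tail_n]
    return tail[-1], sum(tail) / tail_n


def _bs_call(S, K, T, r, sigma):
    """Black-Scholes call price, used to revalue the option on each path."""
    def cdf(x):
        return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))
    sqrt_t = math.sqrt(T)
    d1 = (math.log(S / K) + (r + 0.5 * sigma**2) * T) / (sigma * sqrt_t)
    return S * cdf(d1) - K * math.exp(-r * T) * cdf(d1 - sigma * sqrt_t)


def short_call_losses(S0, K, T, r, sigma, horizon, n_paths, vol_of_vol=1.0, seed=7):
    """Simulated losses of one short call over `horizon` years (hypothetical model)."""
    if horizon >= T:
        raise ValueError("horizon must be shorter than time to expiry")
    rng = random.Random(seed)
    v0 = _bs_call(S0, K, T, r, sigma)
    losses = []
    for _ in range(n_paths):
        z_s, z_v = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        s_h = S0 * math.exp((r - 0.5 * sigma**2) * horizon + sigma * math.sqrt(horizon) * z_s)
        sig_h = sigma * math.exp(vol_of_vol * math.sqrt(horizon) * z_v - 0.5 * vol_of_vol**2 * horizon)
        v_h = _bs_call(s_h, K, T - horizon, r, sig_h)
        losses.append(v_h - v0)  # the writer loses when the call's value rises
    return losses


if __name__ == "__main__":
    losses = short_call_losses(100.0, 100.0, 30 / 365, 0.0, 0.8, 1 / 365, 10_000)
    print(empirical_var_es(losses, 0.99))
```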

This calculation must be fast enough to run within the latency window of the liquidation mechanism. That computational bottleneck forces many protocols to simplify the model, replacing expensive simulations with a standardized, parameter-based approach built on pre-calibrated risk arrays.

Failure to model the volatility skew ⎊ the observation that out-of-the-money options often trade at higher implied volatility than at-the-money options ⎊ is the critical flaw in many current models. It creates systemic risk during sharp, directional market moves that the margin should have covered.

The theoretical purity of continuous-time risk management is always constrained by the discrete, block-by-block nature of settlement. This introduces Settlement Risk ($\sigma_s$): the potential for the market to move beyond the margin threshold between the last solvency check and the next available liquidation window.
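Settlement Risk can be sized with a back-of-the-envelope model. The sketch assumes normally distributed log-returns whose volatility scales with the square root of time. That assumption understates crypto's fat tails and jumps. Under it, the code estimates the chance that an adverse move exceeds a given margin buffer during the latency window, and the buffer needed for a target confidence. The helper names and the 365-day year are assumptions.

```python
import math
from statistics import NormalDist

SECONDS_PER_YEAR = 365 * 24 * 3600  # crypto markets trade continuously


def latency_move_std(sigma_annual, latency_s):
    """Std. dev. of the log-return over the latency window (square-root-of-time scaling)."""
    return sigma_annual * math.sqrt(latency_s / SECONDS_PER_YEAR)


def gap_probability(buffer, sigma_annual, latency_s):
    """One-sided probability that an adverse log-move exceeds `buffer` before liquidation."""
    return 1.0 - NormalDist().cdf(buffer / latency_move_std(sigma_annual, latency_s))


def required_buffer(sigma_annual, latency_s, confidence):
    """Log-return buffer exceeded by an adverse move with probability 1 - confidence."""
    return NormalDist().inv_cdf(confidence) * latency_move_std(sigma_annual, latency_s)


if __name__ == "__main__":
    for latency in (12.0, 60.0):
        print(latency, required_buffer(0.8, latency, 0.999))
```

Because the standard deviation grows with the square root of latency, stretching the window from 12 to 60 seconds widens the required buffer by a factor of about 2.24.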

The distinction between the two primary theoretical models for options margining is crucial for understanding the trade-offs in capital allocation.

| Margin Model | Calculation Basis | Capital Efficiency | Computational Cost |
|---|---|---|---|
| Standardized Portfolio Analysis (SPA) | Pre-defined risk arrays for scenarios | Moderate | Low (Pre-computed) |
| Continuous Risk-Based Margining (CRBM) | Real-time VaR/ES on portfolio Greeks | High | Very High (On-demand) |
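The pre-computed risk-array approach in the SPA row can be sketched as follows. Each instrument gets a vector of P&L values over a fixed grid of price and volatility shocks. A portfolio's margin is then its worst scenario after netting the position-weighted arrays. The seven-by-two grid, the scan ranges, and the delta-gamma-vega approximation of P&L are illustrative assumptions loosely inspired by SPAN, not its actual parameters.

```python
from itertools import product

PRICE_SHOCKS = [-1.0, -2 / 3, -1 / 3, 0.0, 1 / 3, 2 / 3, 1.0]  # fractions of the price scan range
VOL_SHOCKS = [-1.0, 1.0]                                        # fractions of the vol scan range


def risk_array(delta, gamma, vega, spot, price_scan, vol_scan):
    """Per-unit P&L of one instrument in each scenario (delta-gamma-vega approximation).

    price_scan is a fractional spot move (0.10 = 10%); vol_scan is an absolute
    implied-vol change (0.10 = 10 vol points); vega is per 1.00 of volatility.
    """
    arr = []
    for p, v in product(PRICE_SHOCKS, VOL_SHOCKS):
        ds = p * price_scan * spot
        arr.append(delta * ds + 0.5 * gamma * ds**2 + vega * v * vol_scan)
    return arr


def portfolio_margin(positions):
    """positions: list of (quantity, risk_array). Margin = worst netted scenario loss."""
    if not positions:
        return 0.0
    n = len(positions[0][1])
    pnl = [sum(q * arr[i] for q, arr in positions) for i in range(n)]
    return max(0.0, -min(pnl))


if __name__ == "__main__":
    # Hypothetical Greeks for a lower-strike and a higher-strike call on the same underlying.
    call_a = risk_array(0.55, 0.01, 20.0, spot=100.0, price_scan=0.10, vol_scan=0.10)
    call_b = risk_array(0.35, 0.009, 18.0, spot=100.0, price_scan=0.10, vol_scan=0.10)
    print(portfolio_margin([(-1, call_b)]), portfolio_margin([(1, call_a), (-1, call_b)]))
```

Each array is computed once per instrument. Evaluating a portfolio is then just a weighted sum and a minimum, which is why this style is cheap enough for frequent checks.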

Approach

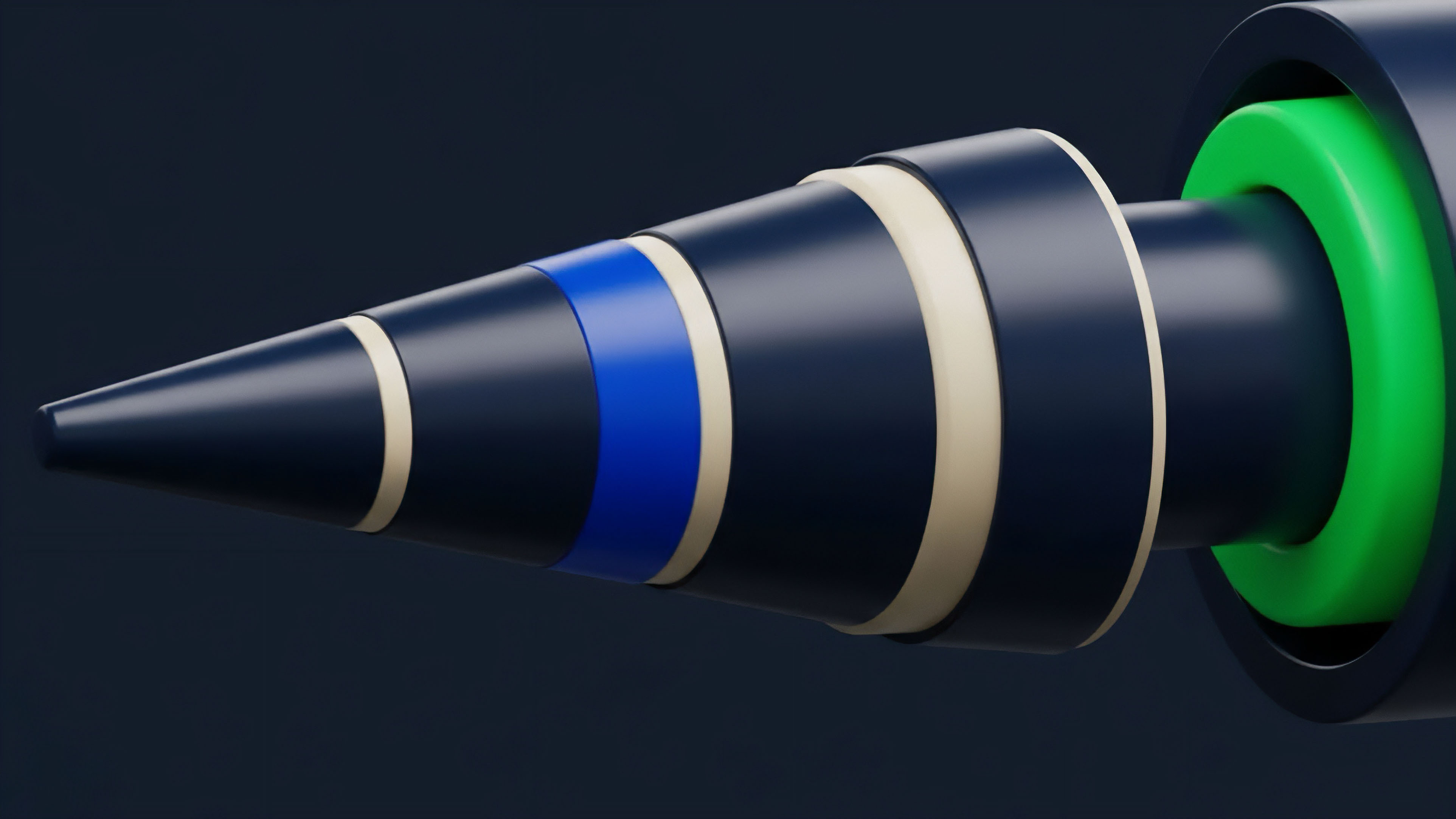

The current technical approach to Dynamic Margin Solvency Verification is a hybrid architecture, a necessary compromise between on-chain security and off-chain computational horsepower. The bulk of the complex, resource-intensive risk calculation is performed by a dedicated off-chain Margin Engine, often run by the protocol's operators or a decentralized network of specialized keepers.

Off-Chain Computation and Keeper Networks

The Margin Engine continuously monitors all open positions, calculating the portfolio’s Greeks and running the VaR/ES stress tests. Once a position’s collateral falls below the required maintenance margin, the engine generates a cryptographic proof of insolvency. This proof ⎊ a signed message or a zero-knowledge attestation ⎊ is then submitted on-chain by a Keeper Network.
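This sketch follows the signed-message path described above. The off-chain engine attests to an account's collateral and maintenance margin at a given block height. The liquidation logic then checks the attestation, its freshness, and the insolvency condition before acting. For brevity, the code uses an HMAC from Python's standard library as a stand-in for a signature. A real deployment would use an asymmetric scheme such as ECDSA, so the contract holds only a public key. `InsolvencyClaim`, the key, and the five-block freshness window are hypothetical.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass

# Hypothetical shared secret. HMAC stands in for a real signature scheme here;
# an on-chain verifier would instead hold the engine's public key.
ENGINE_KEY = b"hypothetical-engine-key"


@dataclass(frozen=True)
class InsolvencyClaim:
    account: str
    collateral: int          # integer base units, avoiding float rounding
    maintenance_margin: int  # integer base units
    block_number: int        # block height at which the engine evaluated the account


def sign_claim(claim: InsolvencyClaim) -> str:
    """Off-chain engine: authenticate a canonical JSON encoding of the claim."""
    payload = json.dumps(asdict(claim), sort_keys=True).encode()
    return hmac.new(ENGINE_KEY, payload, hashlib.sha256).hexdigest()


def verify_and_liquidate(claim, tag, current_block, max_age_blocks=5):
    """Checks a liquidation contract might run before seizing collateral."""
    if not hmac.compare_digest(tag, sign_claim(claim)):
        return False, "bad signature"
    if claim.block_number > current_block:
        return False, "claim from the future"
    if current_block - claim.block_number > max_age_blocks:
        return False, "stale claim"
    if claim.collateral >= claim.maintenance_margin:
        return False, "account solvent"
    return True, "liquidate"
```

The freshness check matters because a claim that was valid several blocks ago may no longer describe the account once prices have moved.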

These keepers are economically incentivized agents who race to execute the liquidation transaction, earning a fee for maintaining the protocol’s solvency. This separation of computation from settlement is an architectural necessity.

The latency between off-chain insolvency detection and on-chain liquidation execution is the system's primary point of failure. Fast-moving markets can outrun the keeper network, leading to Bad Debt ⎊ losses that exceed the posted margin and must be absorbed by the protocol's insurance fund or socialized across solvent traders. This exposure grows with block time and oracle latency.
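The loss waterfall in the paragraph above reduces to a few lines of arithmetic. Collateral absorbs the close-out loss first, the insurance fund covers any bad debt next, and whatever remains is socialized. The pro-rata haircut in `socialize` is one possible policy, assumed here for illustration. All names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LiquidationOutcome:
    returned_to_trader: float  # collateral left after covering the close-out loss
    bad_debt: float            # loss in excess of posted collateral
    insurance_used: float      # part of the bad debt absorbed by the insurance fund
    socialized: float          # remainder pushed onto solvent traders


def settle_liquidation(collateral, close_out_loss, insurance_fund):
    """Apply collateral, then the insurance fund, then socialization."""
    covered = min(collateral, close_out_loss)
    bad_debt = close_out_loss - covered
    insurance_used = min(bad_debt, insurance_fund)
    return LiquidationOutcome(
        returned_to_trader=collateral - covered,
        bad_debt=bad_debt,
        insurance_used=insurance_used,
        socialized=bad_debt - insurance_used,
    )


def socialize(amount, balances):
    """Hypothetical pro-rata haircut of `amount` across solvent balances."""
    total = sum(balances.values())
    if total <= 0:
        raise ValueError("no solvent balances to socialize against")
    return {account: amount * balance / total for account, balance in balances.items()}
```

For example, with 1,000 of collateral, a 1,700 close-out loss and a 500 insurance fund, the bad debt is 700. The fund absorbs 500 of it and 200 is socialized.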

The Architecture of Verification

A modern DMS verification system relies on several tightly coupled components:

- Risk Parameter Oracles: These feed real-time volatility surfaces, interest rate curves, and correlation data into the Margin Engine. The integrity of these feeds is paramount; a corrupted volatility feed can lead to widespread, systemic mispricing of margin.

- The Margin Engine Software: This proprietary or open-source software executes the complex, multi-asset risk model. It must be highly optimized for speed, often using techniques like Parallel Processing and GPU Acceleration to run thousands of scenarios per second.

- The Liquidation Smart Contract: The immutable on-chain logic that verifies the insolvency proof and executes the collateral seizure and debt repayment. Its code must be ruthlessly audited, as any vulnerability here is a direct path to protocol failure.

- The Insurance Fund: A pool of capital, often tokenized, that acts as the final systemic buffer against unexpected losses and keeper network failures.
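A corrupted volatility feed can misprice margin system-wide. One defensive pattern against this is to aggregate several sources, discard stale quotes, and reject quotes that stray too far from the median. The sketch below shows that pattern. It is not the logic of any specific oracle network, and the thresholds, `VolQuote` and `aggregate_vol` are hypothetical.

```python
import statistics
from dataclasses import dataclass


@dataclass(frozen=True)
class VolQuote:
    source: str
    implied_vol: float  # annualized, e.g. 0.65 means 65%
    timestamp: float    # unix seconds when the source published the quote


def aggregate_vol(quotes, now, max_age_s=60.0, max_rel_dev=0.20, min_sources=3):
    """Median of fresh quotes that agree with the overall median; raise instead of guessing."""
    fresh = [q for q in quotes if now - q.timestamp <= max_age_s and q.implied_vol > 0]
    if len(fresh) < min_sources:
        raise ValueError("not enough fresh volatility sources")
    center = statistics.median(q.implied_vol for q in fresh)
    agreeing = [q.implied_vol for q in fresh if abs(q.implied_vol - center) / center <= max_rel_dev]
    if len(agreeing) < min_sources:
        raise ValueError("volatility sources disagree beyond tolerance")
    return statistics.median(agreeing)
```

When no safe value exists, raising an error (and pausing new risk) is usually preferable to feeding a guessed volatility into the Margin Engine.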

The latency between off-chain insolvency detection and on-chain liquidation execution is the ultimate determinant of systemic solvency.

Evolution

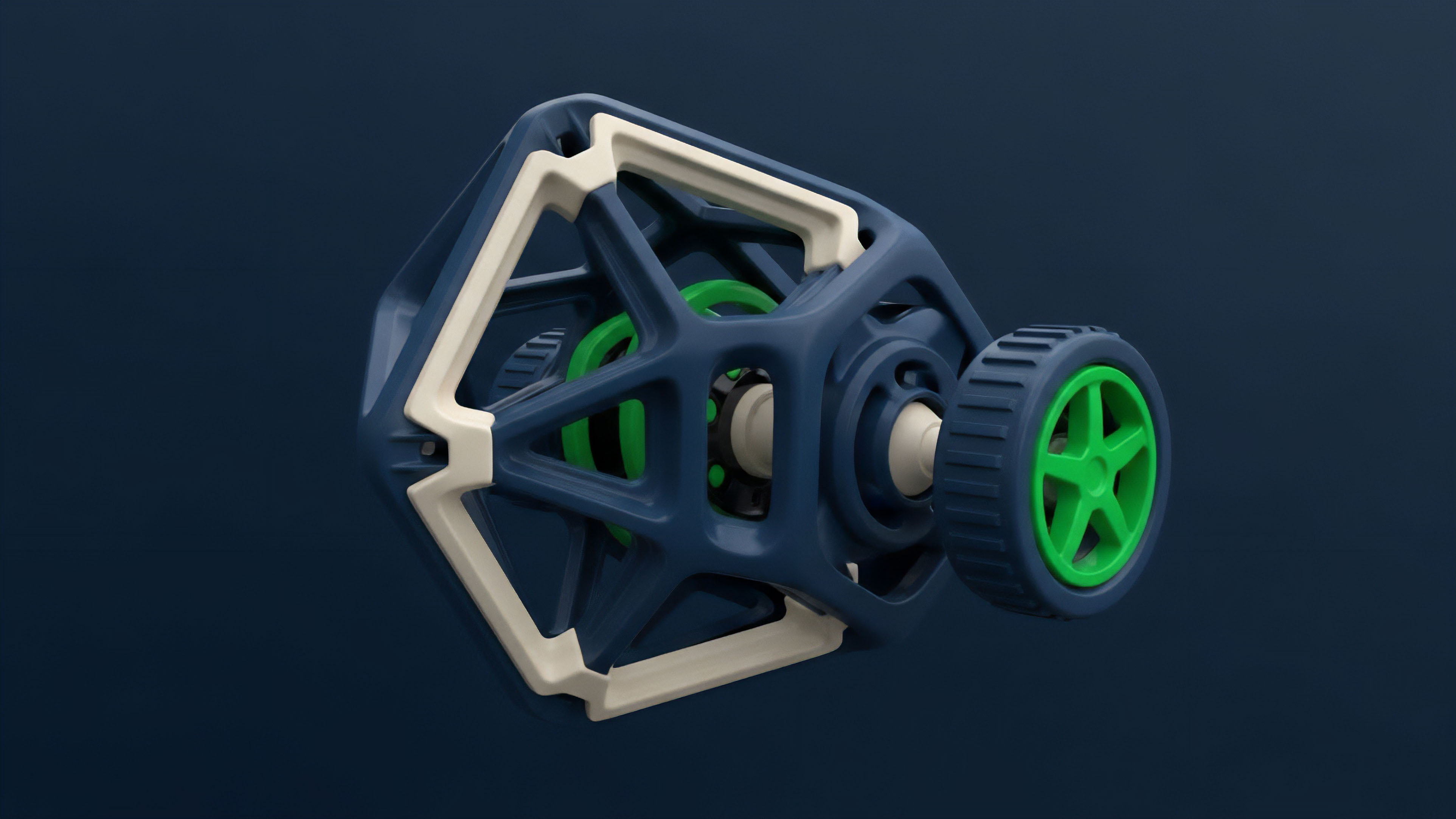

The evolution of margin verification in crypto options is a story of increasing sophistication, moving from isolated, static checks to integrated, dynamic risk management ⎊ a transition driven by the sheer volatility of the underlying assets. Initially, margin was treated as an isolated problem: a futures position was collateralized by a fixed percentage of its notional value, independent of any other position in the account. This was brittle.

Portfolio Margining and Capital Efficiency

The first major leap was the adoption of Portfolio Margining. This allowed traders to offset the risk of a short call option with a long call option at a different strike, recognizing the inherent hedging benefit. The system now verifies the solvency of the net risk of the entire portfolio, not just individual legs.
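To see the hedging benefit of portfolio margining, the sketch below evaluates a short 100-strike call and a long 120-strike call at expiry over a grid of prices. Margined leg by leg, the short call's worst loss is bounded only by the grid's upper edge. Margined as a portfolio, the spread's loss can never exceed the 20-point strike difference. Ignoring premiums and early close-out, and the choice of grid bounds, are simplifying assumptions. Function names are illustrative.

```python
def leg_payoff(qty, strike, spot):
    """Expiry payoff of `qty` calls (negative qty means written calls)."""
    return qty * max(spot - strike, 0.0)


def worst_loss(legs, grid):
    """Largest loss of the combined legs over a grid of expiry prices (premiums ignored)."""
    return max(0.0, -min(sum(leg_payoff(q, k, s) for q, k in legs) for s in grid))


def isolated_margin(legs, grid):
    """Each leg margined on its own, with no credit for offsetting legs."""
    return sum(worst_loss([leg], grid) for leg in legs)


GRID = [50.0 + 0.5 * i for i in range(601)]  # hypothetical expiry prices from 50 to 350
SPREAD = [(-1, 100.0), (1, 120.0)]           # short 100-strike call, long 120-strike call

if __name__ == "__main__":
    print(isolated_margin(SPREAD, GRID), worst_loss(SPREAD, GRID))
```

On this grid the isolated requirement is 250 (and unbounded as the grid widens), while the netted requirement stays at 20.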

This shift unlocked orders of magnitude more capital efficiency, a necessary condition for options markets to achieve meaningful depth. This architectural choice is not a technical optimization; it is a financial necessity, as capital efficiency is the gravity of a functioning market.

This is where the financial system becomes truly elegant ⎊ and dangerous if ignored. The same principles that govern the fragility of a fractional reserve bank ⎊ the mismatch between liquid assets and potential liabilities ⎊ govern the solvency of a margin engine. A protocol’s collateral pool is its reserve; the options written are its liabilities.

Protocol Physics and Speed Limits

The second major evolution is the confrontation with Protocol Physics. The speed at which a margin call can be verified and executed is fundamentally limited by the underlying blockchain’s consensus mechanism. The move from slower Layer 1s to high-throughput Layer 2s and sidechains is a direct response to this physical constraint.

This has led to the emergence of specialized risk-management sidechains that can perform complex risk calculations and pre-confirm liquidation triggers before settling the final state on the main chain.

The trade-off between centralized exchange (CEX) and decentralized exchange (DEX) margining highlights this evolution.

| Feature | CEX Margin Verification | DEX Margin Verification |
|---|---|---|
| Speed | Sub-millisecond | Block Time (Seconds to Minutes) |
| Trust Model | Centralized Counterparty (Trusted) | Smart Contract (Trustless) |
| Capital Isolation | Cross-margining across products | Often siloed by protocol (Improving) |
| Liquidation Trigger | Internal, instant, deterministic | External Keeper Network, probabilistic |

Horizon

The future of Dynamic Margin Solvency Verification lies in computational transparency and cryptographic certainty. We are moving toward a world where the solvency of a multi-asset, multi-instrument portfolio can be proven without revealing the underlying positions ⎊ a necessary step for institutional adoption.

Zero-Knowledge Proofs for Solvency

The most significant architectural shift on the horizon is the integration of Zero-Knowledge Proofs (ZKPs). Instead of revealing a user’s full portfolio to the public ledger or the off-chain margin engine, a user will be able to submit a cryptographic proof ⎊ a zk-SNARK or zk-STARK ⎊ that simply asserts: “My current collateral is greater than the required maintenance margin, as calculated by the protocol’s publicly verifiable risk function.” This transforms the solvency check from a process of public disclosure into a process of cryptographic attestation. This is the ultimate expression of trust minimization, allowing for institutional-grade privacy alongside decentralized verification.
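Writing a zk-SNARK circuit is beyond a short example, but the relation such a proof would attest to can be stated directly. The sketch below checks, in the clear, two conditions. First, a private portfolio matches a public SHA-256 commitment. Second, its collateral covers a publicly known margin function. The code is not zero-knowledge by itself: a proof system would let the verifier confirm that `solvency_relation` holds without seeing the witness or salt. The margin function, field names and salt handling are hypothetical.

```python
import hashlib
import json


def commit(witness, salt):
    """Salted hash commitment to the private portfolio (stand-in for a circuit-friendly hash)."""
    payload = json.dumps(witness, sort_keys=True).encode()
    return hashlib.sha256(salt + payload).hexdigest()


def maintenance_margin(positions, risk_params):
    """Hypothetical public risk function: |qty| * notional * per-instrument rate."""
    return sum(abs(p["qty"]) * p["notional"] * risk_params[p["instrument"]] for p in positions)


def solvency_relation(public, witness, salt):
    """The statement a zk-SNARK/STARK circuit would enforce.

    public:  {"commitment": str, "risk_params": dict}  (visible to the verifier)
    witness: {"positions": list, "collateral": float}   (kept private by the prover)
    """
    if commit(witness, salt) != public["commitment"]:
        return False
    required = maintenance_margin(witness["positions"], public["risk_params"])
    return witness["collateral"] >= required
```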

The use of Zero-Knowledge Proofs will transform solvency checks from public disclosure to cryptographic attestation, a necessity for institutional adoption.

Standardized Synthetic Stress Scenarios (SSS)

The next evolution requires industry-wide standardization of risk parameters. Currently, every options protocol uses its own proprietary VaR or ES model, making cross-protocol risk aggregation impractical. The industry must converge on a set of Synthetic Stress Scenarios (SSS) ⎊ pre-defined, shared volatility and price shocks that all margin engines must test against.

This would allow for a systemic, interoperable risk framework, a foundational requirement for a robust DeFi money market. This is the only pathway to prevent localized protocol failures from propagating contagion across the ecosystem.
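Shared scenarios make per-scenario P&L additive across protocols, whereas VaR figures from different models cannot simply be added. The sketch below applies one hypothetical set of shocks to each protocol's book and sums the results scenario by scenario. Each book is approximated by its dollar delta and dollar vega. The scenario values and book sensitivities are invented for illustration.

```python
# Hypothetical shared scenario set: name -> (spot return, absolute implied-vol change).
SHARED_SCENARIOS = {
    "crash": (-0.30, 0.40),
    "rally": (0.25, 0.15),
    "vol_crush": (0.00, -0.20),
}


def linear_book(dollar_delta, dollar_vega):
    """P&L function for a protocol's book under a first-order delta/vega approximation."""
    def pnl(spot_return, vol_change):
        return dollar_delta * spot_return + dollar_vega * vol_change
    return pnl


def aggregate_stress(books):
    """Sum each protocol's P&L scenario by scenario; meaningful only because shocks are shared."""
    return {
        name: sum(book(*shock) for book in books.values())
        for name, shock in SHARED_SCENARIOS.items()
    }


if __name__ == "__main__":
    books = {
        "protocol_a": linear_book(1_000_000.0, -200_000.0),
        "protocol_b": linear_book(-800_000.0, 100_000.0),
    }
    print(aggregate_stress(books))
```

In this made-up example the two books partly offset each other. The ecosystem-wide worst case ("crash", about -100,000) is far smaller than protocol A's stand-alone crash loss.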

The architectural requirements for this future state are clear:

- Verifiable Off-Chain Compute: The margin engine must be provable, likely running within a ZK-Rollup or similar environment, so that the expensive risk calculation is performed off-chain while its result is verified cheaply on-chain.

- Interoperable Risk Oracles: A decentralized oracle network must deliver standardized, time-series data for volatility surfaces and correlation matrices, ensuring all protocols operate on the same risk inputs.

- Automated Cross-Protocol Collateralization: Smart contracts must be able to automatically rebalance margin across different protocols based on a global, aggregated solvency score, maximizing capital utility.
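One way such automated rebalancing could work is a greedy policy that tops up the most under-collateralized protocol account first, drawing from a pool of free collateral. This is a hypothetical sketch: `rebalance`, the ratio ordering and the data layout are assumptions. A real system would also need cross-protocol messaging, withdrawal limits and its own solvency checks.

```python
def rebalance(accounts, free_collateral):
    """accounts: {protocol: (collateral, required_margin)}.

    Tops up deficits from `free_collateral`, lowest collateral ratio first.
    Returns the new collateral per protocol and the unused remainder.
    """
    def ratio(protocol):
        collateral, required = accounts[protocol]
        return collateral / required if required > 0 else float("inf")

    remaining = free_collateral
    new_balances = {}
    for protocol in sorted(accounts, key=ratio):
        collateral, required = accounts[protocol]
        top_up = min(max(0.0, required - collateral), remaining)
        new_balances[protocol] = collateral + top_up
        remaining -= top_up
    return new_balances, remaining
```

A greedy order is simple to audit, but it can leave a second account short when funds run out. Other policies, such as proportional allocation, trade that off differently.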
