Essence

Liquidity Management within crypto derivatives functions as the deliberate orchestration of capital deployment to ensure continuous order execution and price stability. It represents the structural backbone of decentralized trading venues, dictating how protocols maintain the ability to absorb trade flow without inducing catastrophic slippage. At its heart, this practice balances the requirement for deep, accessible markets against the inherent risks of impermanent loss and capital inefficiency.

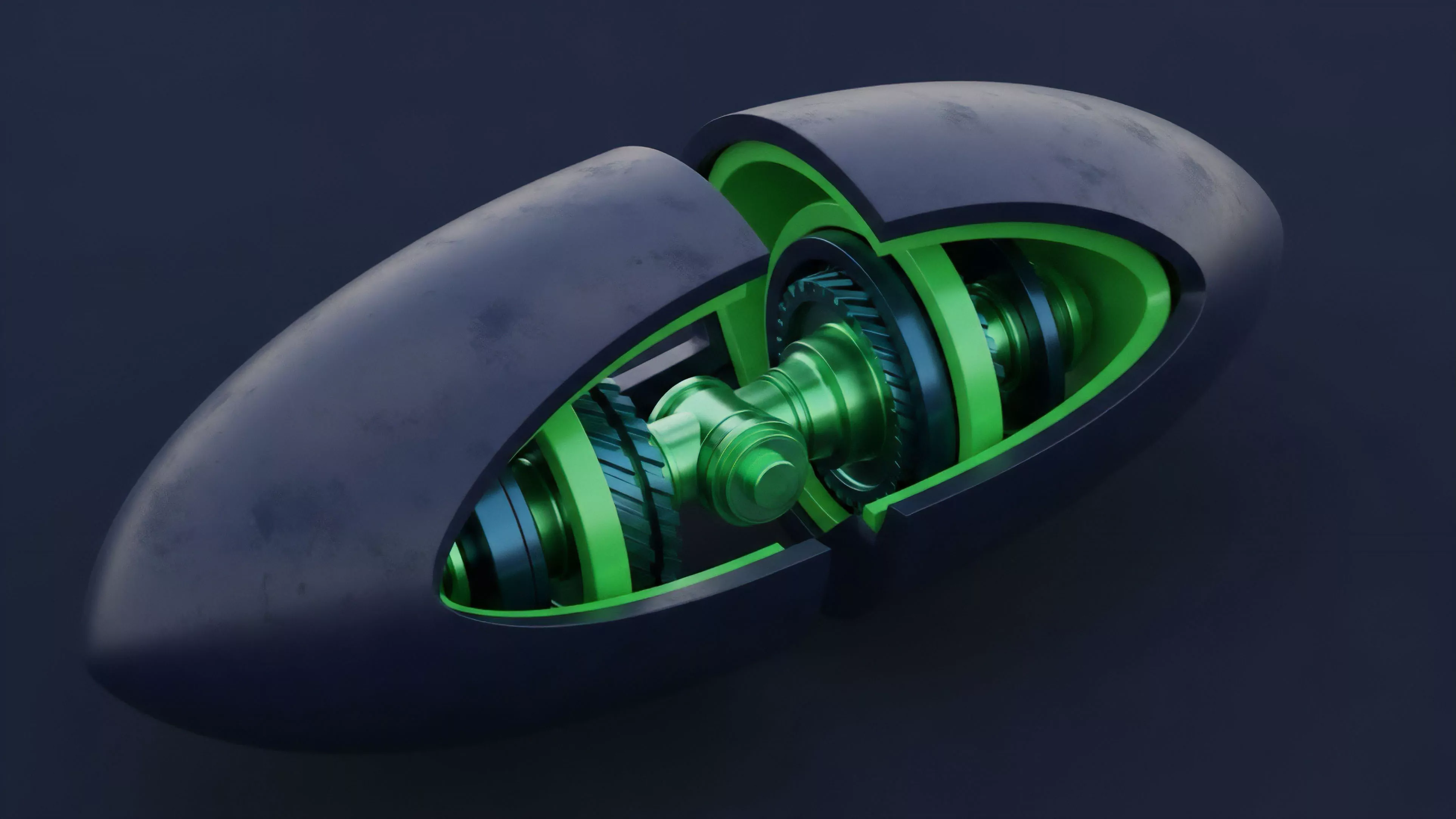

Liquidity Management acts as the mechanical governor of market depth, ensuring trade execution remains stable across volatile asset cycles.

Effective management requires a rigorous understanding of how capital is provisioned across decentralized exchanges, automated market makers, and order book protocols. Participants must navigate the tension between passive liquidity provision, which earns yield, and active market making, which requires constant hedging against directional risk. This discipline defines the survival of any financial protocol, as insufficient liquidity triggers a cascade of failed settlements and eroded trust.

Origin

The genesis of Liquidity Management lies in the shift from traditional, centralized order books to the automated, algorithmic architectures pioneered by early decentralized finance protocols.

Initially, simple constant product formulas provided the baseline for asset exchange, yet these models lacked the sophisticated risk controls necessary for high-volume derivative trading. The industry recognized that static capital allocation resulted in poor price discovery and extreme volatility during market stress.

- Automated Market Makers introduced the concept of programmatic liquidity, removing the reliance on professional intermediaries.

- Liquidity Providers emerged as the primary source of capital, assuming the burden of market risk in exchange for protocol fees.

- Concentrated Liquidity models evolved to allow providers to target specific price ranges, significantly increasing capital efficiency.

This evolution was driven by the necessity to solve for slippage, the discrepancy between the expected price of a trade and the price at which the trade is executed. As decentralized derivatives grew, the requirement for sophisticated Liquidity Management became clear: protocols needed to dynamically adjust to order flow rather than relying on the naive, indiscriminate allocation of capital.
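The slippage definition above can be made concrete with a minimal sketch of a constant product pool. The function names, the pool sizes, and the choice to take the fee from the input side are illustrative assumptions, not details drawn from any specific protocol.

```python
def constant_product_swap(reserve_in: float, reserve_out: float,
                          amount_in: float, fee: float = 0.0) -> float:
    """Output amount for a swap against a pool that keeps x * y = k."""
    effective_in = amount_in * (1.0 - fee)  # assumption: fee is taken from the input side
    return reserve_out * effective_in / (reserve_in + effective_in)


def slippage(reserve_in: float, reserve_out: float,
             amount_in: float, fee: float = 0.0) -> float:
    """Fractional gap between the pre-trade spot price and the executed price."""
    expected_price = reserve_out / reserve_in  # output units per input unit
    executed_price = constant_product_swap(
        reserve_in, reserve_out, amount_in, fee) / amount_in
    return 1.0 - executed_price / expected_price


# Hypothetical pool: 1,000 base units against 2,000,000 quote units.
small = slippage(1_000.0, 2_000_000.0, 10.0)
large = slippage(1_000.0, 2_000_000.0, 100.0)
print(f"small trade: {small:.4%}, large trade: {large:.4%}")
```

With no fee, slippage in this model reduces to `amount_in / (reserve_in + amount_in)`, so it grows with trade size relative to pool depth. That relationship is why static, thin pools perform poorly under heavy order flow.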

Theory

The theoretical framework for Liquidity Management rests on the intersection of market microstructure and quantitative finance. Protocols must solve for the optimal distribution of liquidity to maximize fee generation while minimizing the probability of liquidation or exhaustion.

This involves modeling the Greeks (specifically Delta, Gamma, and Vega) to understand how liquidity depth responds to price fluctuations and volatility spikes.
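As a rough illustration of these sensitivities, the sketch below computes Delta, Gamma, and Vega for a European call under the Black-Scholes model. The choice of Black-Scholes and the function name `call_greeks` are assumptions made for the example; the text does not specify which pricing model a given protocol uses.

```python
import math


def _norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot: float, strike: float, vol: float, t: float,
                rate: float = 0.0) -> tuple[float, float, float]:
    """Return (delta, gamma, vega) of a European call under Black-Scholes.

    vol is annualized volatility and t is time to expiry in years.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    delta = _norm_cdf(d1)                      # sensitivity to the spot price
    gamma = _norm_pdf(d1) / (spot * vol * sqrt_t)  # rate of change of delta
    vega = spot * _norm_pdf(d1) * sqrt_t       # sensitivity to volatility
    return delta, gamma, vega


# Hypothetical at-the-money option: spot 100, strike 100, 20% vol, one year.
delta, gamma, vega = call_greeks(spot=100.0, strike=100.0, vol=0.2, t=1.0)
print(delta, gamma, vega)
```

Gamma shows how quickly a hedge goes stale as price moves, and Vega shows how exposure shifts when volatility spikes. Both bear on how much depth a venue needs to keep available.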

| Metric | Definition | Systemic Impact |
|---|---|---|
| Slippage | Price impact per trade size | Determines market depth capacity |
| Capital Efficiency | Trading volume per unit of liquidity | Measures protocol profitability |
| Impermanent Loss | Value divergence from holding assets | Dictates provider participation risk |
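For the impermanent loss row, a minimal sketch follows. It assumes a 50/50 constant product pool and ignores fee income; the function name `impermanent_loss` is illustrative. Under those assumptions the loss relative to simply holding depends only on the ratio between the current and entry prices.

```python
import math


def impermanent_loss(price_ratio: float) -> float:
    """Loss of a 50/50 constant product position relative to holding.

    price_ratio is current price divided by the price at deposit.
    The result is zero or negative; fees are ignored (assumption).
    """
    if price_ratio <= 0.0:
        raise ValueError("price_ratio must be positive")
    return 2.0 * math.sqrt(price_ratio) / (1.0 + price_ratio) - 1.0


# Hypothetical price moves relative to the deposit price.
for ratio in (1.0, 2.0, 4.0):
    print(f"price x{ratio:g}: {impermanent_loss(ratio):.2%}")
```

The loss is symmetric in the sense that a price halving costs the same fraction as a price doubling, which is why providers weigh expected fee income against expected price divergence rather than direction alone.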

The mathematical architecture of these systems often employs non-linear bonding curves or dynamic fee structures that respond to real-time volatility. Behavioral game theory informs these designs, as protocols must incentivize participants to act in ways that maintain system health during periods of extreme stress. If a protocol fails to align the incentives of liquidity providers with the needs of traders, the resulting feedback loop often leads to liquidity flight and market collapse.
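One way to picture a dynamic fee structure is a fee that rises with recent realized volatility and is capped at a maximum. The sketch below is hypothetical: the linear rule, the parameter names `base_fee`, `sensitivity`, and `max_fee`, and their default values are assumptions chosen for illustration, not a description of any live protocol.

```python
import math
from statistics import pstdev


def realized_volatility(prices: list[float]) -> float:
    """Population standard deviation of log returns over the price window."""
    log_returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return pstdev(log_returns)


def dynamic_fee(prices: list[float], base_fee: float = 0.0005,
                sensitivity: float = 0.5, max_fee: float = 0.01) -> float:
    """Fee that scales linearly with realized volatility, capped at max_fee."""
    vol = realized_volatility(prices)
    return min(max_fee, base_fee + sensitivity * vol)


# Hypothetical price windows: flat, mildly noisy, and highly volatile.
calm_fee = dynamic_fee([100.0, 100.0, 100.0, 100.0])
mild_fee = dynamic_fee([100.0, 100.5, 100.0, 100.5])
stressed_fee = dynamic_fee([100.0, 110.0, 95.0, 112.0, 90.0])
print(calm_fee, mild_fee, stressed_fee)
```

Raising fees in turbulent windows compensates providers for the higher risk of adverse price moves, which is one lever for discouraging liquidity flight under stress.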

Market microstructure analysis reveals that liquidity depth is a function of incentive alignment between protocol participants and external market actors.

At times these models behave like biological systems, with the protocol reacting to external market pressure much as an organism responds to its environment. By adjusting the cost of liquidity provision or the depth of the order book, the system seeks a state of equilibrium. This process remains under constant attack from automated arbitrage agents, forcing designers to build ever more resilient mechanisms for managing capital.

Approach

Current strategies for Liquidity Management prioritize capital velocity and risk-adjusted returns.

Modern protocols utilize advanced Liquidity Aggregators to source depth from multiple venues, reducing the fragmentation inherent in decentralized systems. Participants now employ sophisticated hedging tools, such as delta-neutral strategies, to capture yield while protecting against directional moves in the underlying asset’s price.
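A delta-neutral strategy can be sketched for the simplest case: a full-range position in a constant product pool, hedged with a short position in the base asset. The pool model, the function names, and the figures are assumptions for illustration. In this model the position is worth `2 * sqrt(k * P)` in quote terms, so its delta equals the base units it currently holds, `sqrt(k / P)`.

```python
import math


def lp_position_value(k: float, price: float) -> float:
    """Quote-denominated value of a full-range x * y = k position."""
    return 2.0 * math.sqrt(k * price)


def lp_delta(k: float, price: float) -> float:
    """Derivative of position value with respect to price (base units held)."""
    return math.sqrt(k / price)


def hedge_adjustment(k: float, price: float, current_short: float) -> float:
    """Extra base units to short to stay delta neutral (negative: buy back)."""
    return lp_delta(k, price) - current_short


# Hypothetical position: 100 base units and 200,000 quote units at price 2,000.
k = 100.0 * 200_000.0
initial_short = lp_delta(k, 2_000.0)
# After the price rises to 2,500 the pool holds fewer base units.
adjustment = hedge_adjustment(k, 2_500.0, initial_short)
print(initial_short, adjustment)
```

Because the delta changes as the price moves, the hedge has to be resized repeatedly, which is the "constant hedging" cost that active providers accept in exchange for fee income.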

- Dynamic Range Adjustment allows liquidity providers to rebalance their positions as market prices move.

- Automated Hedging uses smart contracts to execute protective trades when liquidity thresholds are breached.

- Protocol Owned Liquidity shifts the burden of capital provision from individual users to the protocol treasury, enhancing long-term stability.
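Dynamic range adjustment, the first item above, can be sketched as a simple rule: leave the position alone while the price sits inside its range, and recenter the range once the price leaves it. The `PriceRange` type, the `rebalance_range` function, and the 10% half-width are hypothetical choices for the example; real strategies also weigh gas costs and realized losses before moving.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceRange:
    lower: float
    upper: float


def rebalance_range(current: PriceRange, price: float,
                    half_width: float = 0.10) -> PriceRange:
    """Keep the range while the price is inside it; otherwise recenter on price."""
    if current.lower <= price <= current.upper:
        return current  # still earning fees, no rebalance needed
    return PriceRange(lower=price * (1.0 - half_width),
                      upper=price * (1.0 + half_width))


# Hypothetical position covering 90 to 110.
position = PriceRange(lower=90.0, upper=110.0)
unchanged = rebalance_range(position, price=105.0)
recentered = rebalance_range(position, price=120.0)
print(unchanged, recentered)
```

A narrower `half_width` concentrates capital and raises fee capture per unit of liquidity, but it also forces more frequent rebalancing when prices trend.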

The focus has shifted from simple fee accumulation to the precise calibration of liquidity depth relative to open interest. Risk management engines now monitor real-time data feeds to adjust collateral requirements and liquidation thresholds, ensuring the system can withstand significant market shocks. This approach acknowledges that liquidity is not a static resource but a fluid, reactive component of the financial architecture.

Evolution

The trajectory of Liquidity Management reflects a move toward greater transparency and technical sophistication.

Early iterations relied on basic incentive structures, often resulting in “mercenary” capital that exited at the first sign of instability. The current era emphasizes long-term alignment through sophisticated governance models and tokenomic designs that reward consistent, high-quality liquidity provision.

| Era | Focus | Primary Mechanism |
|---|---|---|
| Genesis | Bootstrapping | Yield farming incentives |
| Intermediate | Efficiency | Concentrated liquidity curves |
| Advanced | Resilience | Algorithmic risk management |

These systems have become increasingly adept at handling systemic risk, incorporating cross-chain liquidity routing and multi-protocol integration. The shift from human-managed vaults to autonomous, algorithm-driven liquidity engines has drastically reduced the latency between market events and system adjustments. This transition reflects a broader maturation of the decentralized financial landscape, moving away from experimental designs toward robust, institutional-grade infrastructure.

Horizon

Future developments in Liquidity Management will likely center on predictive modeling and autonomous rebalancing engines.

Integration with off-chain data sources through decentralized oracles will allow protocols to anticipate volatility events rather than merely reacting to them. This transition to proactive risk management will redefine how capital is allocated, favoring protocols that can demonstrate verifiable resilience under extreme market stress.

Future liquidity frameworks will utilize predictive analytics to anticipate volatility and autonomously adjust capital deployment for maximum stability.

The ultimate objective involves creating self-healing liquidity structures that require minimal human intervention. As regulatory frameworks clarify, these systems will likely incorporate modular compliance layers, allowing for permissioned and permissionless liquidity to coexist. The convergence of artificial intelligence and decentralized finance will enable the creation of highly efficient, self-optimizing markets, fundamentally changing how value is transferred and price discovery is achieved globally.