Essence

Liquidation Engine Stress Testing represents the quantitative validation of automated collateral disposal mechanisms under extreme market conditions. It functions as a simulation framework designed to determine if protocol solvency remains intact when volatility spikes, liquidity evaporates, or oracle latency compromises price accuracy.

Liquidation engine stress testing provides a rigorous probabilistic assessment of protocol survival during periods of severe market dislocation.

The core objective involves modeling the feedback loops between falling asset prices, cascading liquidations, and the resulting slippage within decentralized order books. This process identifies the exact threshold where a system transitions from a self-correcting entity to an insolvent one, often characterized by the accumulation of bad debt that exceeds the capacity of insurance funds or socialized loss mechanisms.
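The feedback loop described above can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `Position` type, the `simulate_cascade` function, the 1.25 threshold, and above all the linear price-impact rule (selling `q` units moves the price down by the fraction `q / pool_depth`). Real order books and AMM curves behave differently.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral: float  # units of the collateral asset
    debt: float        # debt denominated in the quote currency


def simulate_cascade(positions, price, pool_depth, liq_threshold=1.25, max_rounds=100):
    """Liquidate until no open position breaches the threshold.

    Hypothetical linear impact model: selling q units lowers the price by
    the fraction q / pool_depth. Returns (final_price, bad_debt, rounds).
    """
    open_positions = list(positions)
    bad_debt = 0.0
    for round_no in range(max_rounds):
        # Partition positions using the price at the start of the round.
        unsafe = [p for p in open_positions
                  if p.collateral * price < liq_threshold * p.debt]
        if not unsafe:
            return price, bad_debt, round_no
        open_positions = [p for p in open_positions
                          if p.collateral * price >= liq_threshold * p.debt]
        for p in unsafe:
            impact = min(p.collateral / pool_depth, 1.0)
            exec_price = price * (1 - impact / 2)  # average fill under linear impact
            proceeds = p.collateral * exec_price
            bad_debt += max(p.debt - proceeds, 0.0)
            price *= 1 - impact  # the sale pushes the market price lower
    return price, bad_debt, max_rounds


book = [Position(10, 900), Position(10, 750), Position(10, 660)]
final_price, bad_debt, rounds = simulate_cascade(book, price=100.0, pool_depth=100.0)
print(final_price, bad_debt, rounds)
```

In this toy book only the first position is unsafe at a price of 100, yet its liquidation pushes the price low enough to trigger the second, which in turn triggers the third. Starting the same book at a price of 80 liquidates all three at once and leaves bad debt, which is the transition from self-correcting to insolvent that the stress test tries to locate.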

Origin

The necessity for this discipline arose from the inherent fragility observed in early decentralized lending protocols. Initial designs relied on simplistic liquidation logic that failed to account for the non-linear relationship between margin calls and market depth.

- Systemic Fragility surfaced during historical flash crashes where collateral value plummeted faster than the engine could execute trades.

- Oracle Failure modes became a primary concern as price feeds lagged during high-frequency volatility events.

- Insurance Fund Depletion events forced developers to reconsider the static parameters governing collateral ratios and penalty structures.

These early systemic failures highlighted the need for a more sophisticated, forward-looking evaluation of protocol mechanics. Architects shifted toward methodologies that simulate thousands of synthetic market paths, moving beyond the optimistic assumptions of continuous liquidity.

Theory

The theoretical foundation of Liquidation Engine Stress Testing draws on stochastic calculus, which describes the price paths, and behavioral game theory, which describes how liquidators and borrowers respond to them. The model treats the protocol as a state machine in which exogenous price shocks trigger a series of deterministic state changes.

Mathematical Sensitivity

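Risk sensitivity analysis focuses on the Delta and Gamma of the liquidation threshold: by analogy with options Greeks, the first- and second-order sensitivity of the protocol's exposure to the collateral price. As prices approach the liquidation point, the sensitivity of the system to further price drops rises sharply and non-linearly. The engine must account for the following variables: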

| Variable | Impact on Liquidation |
|---|---|
| Oracle Latency | Delays execution, causing deeper underwater positions |
| Market Slippage | Reduces net proceeds from collateral disposal |
| Liquidation Penalty | Influences incentive for liquidators to act |

The integrity of a liquidation engine rests on its ability to maintain solvency when the cost of execution exceeds the value of recovered collateral.

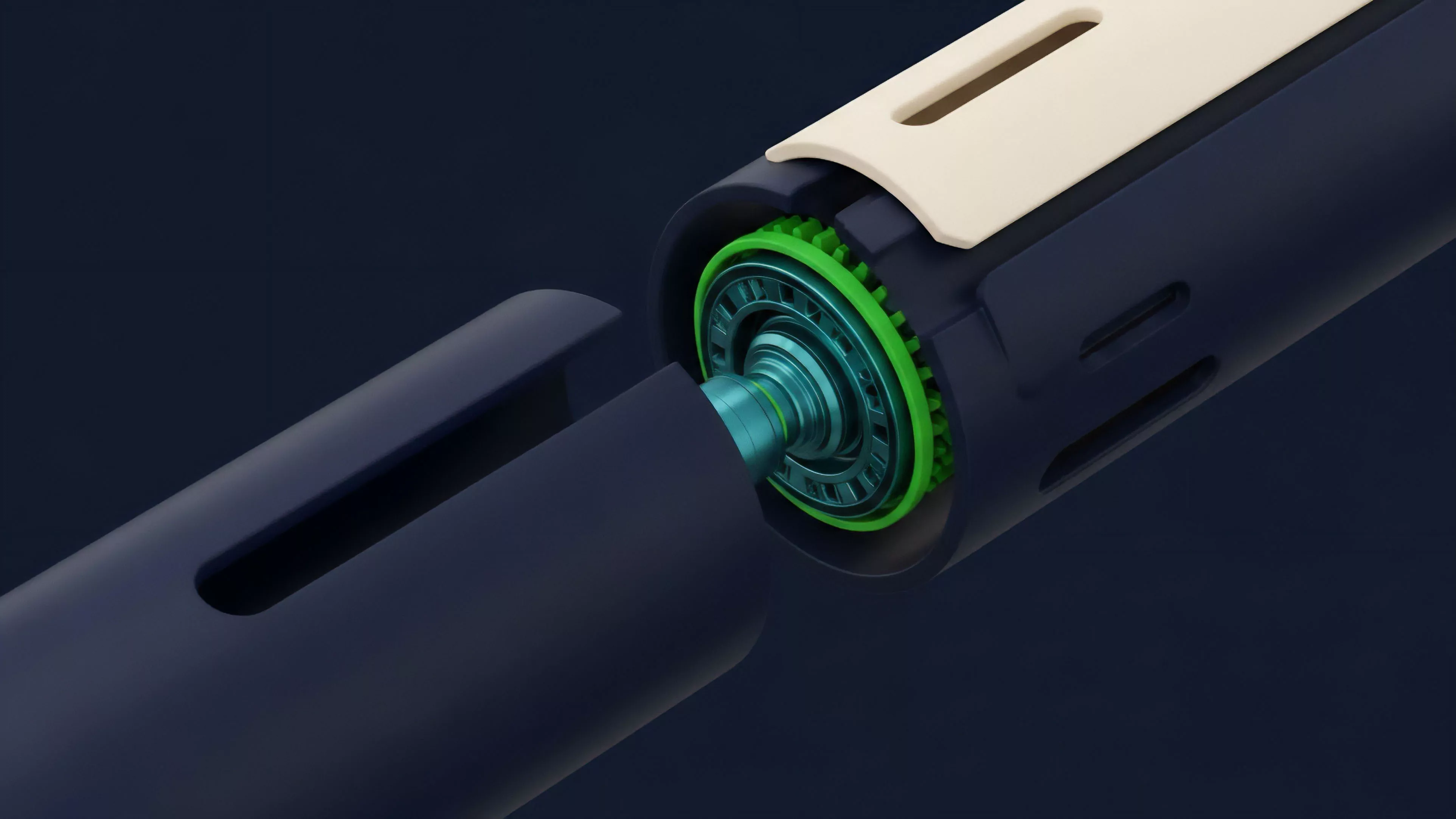

The analysis becomes harder once automated liquidators are modeled as rational agents rather than guaranteed executors. These agents participate only if the expected profit covers gas costs and the risk of adverse price movement. This creates a critical dependency on external market makers to provide liquidity at the exact moment the protocol requires it most.
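The participation condition above can be written as a simple expected-value check. The sketch below is illustrative only: the function names, the decomposition of costs, and the way adverse price risk is reduced to a probability times a fractional loss are all assumptions, not a description of any particular protocol.

```python
def liquidator_expected_profit(collateral_value, penalty, slippage, gas_cost,
                               adverse_move_prob=0.0, adverse_move_loss=0.0):
    """Expected profit of one liquidation, in the quote currency.

    penalty           -- liquidation bonus as a fraction of collateral value
    slippage          -- fraction of value lost when selling the collateral
    gas_cost          -- fixed transaction cost
    adverse_move_*    -- hypothetical probability and fractional size of a
                         price move against the liquidator before the sale
    """
    bonus = collateral_value * penalty
    slippage_cost = collateral_value * slippage
    risk_cost = collateral_value * adverse_move_prob * adverse_move_loss
    return bonus - slippage_cost - gas_cost - risk_cost


def will_liquidate(**kwargs):
    """A rational liquidator acts only when expected profit is positive."""
    return liquidator_expected_profit(**kwargs) > 0


calm = dict(collateral_value=10_000, penalty=0.05, slippage=0.01, gas_cost=50)
stressed = dict(collateral_value=10_000, penalty=0.05, slippage=0.06, gas_cost=50)
print(will_liquidate(**calm), will_liquidate(**stressed))
```

With a 5% penalty the liquidation is attractive at 1% slippage but not at 6%, so the incentive disappears in exactly the thin-liquidity conditions in which the protocol most needs liquidators to act.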

Approach

Current methodologies employ Monte Carlo simulations to stress the liquidation engine against both historical price data and synthetic scenarios, including hypothetical black swan events.

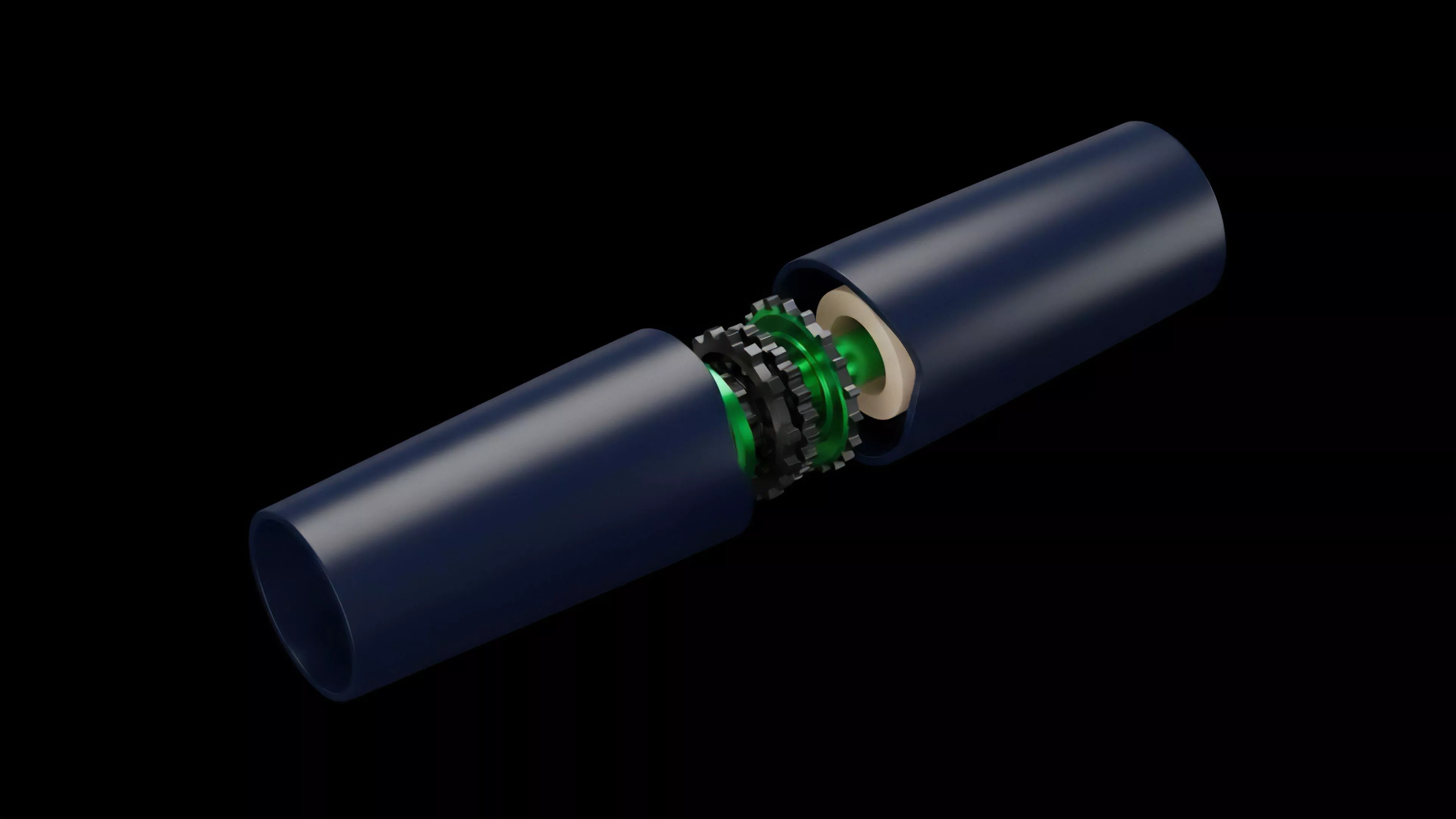

This involves creating a digital twin of the protocol that runs parallel to the mainnet.
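A minimal Monte Carlo sketch of this idea follows. It is far simpler than a full digital twin, and every modeling choice is an assumption made for illustration: a single position, prices following geometric Brownian motion, an oracle that reports the price from `oracle_lag` steps earlier, and a fixed slippage fraction on the sale. The function name and all default values are hypothetical.

```python
import math
import random


def simulate_bad_debt(n_paths=2000, n_steps=288, sigma_daily=0.08, oracle_lag=3,
                      collateral=10.0, debt=700.0, start_price=100.0,
                      liq_threshold=1.25, slippage=0.02, seed=7):
    """Estimate how often a single position ends in bad debt over one day."""
    rng = random.Random(seed)  # fixed seed keeps runs reproducible
    step_sigma = sigma_daily / math.sqrt(n_steps)
    losses = []
    for _ in range(n_paths):
        prices = [start_price]
        loss = 0.0
        for t in range(1, n_steps + 1):
            # One geometric Brownian motion step with zero drift in expectation.
            shock = rng.gauss(-0.5 * step_sigma ** 2, step_sigma)
            prices.append(prices[-1] * math.exp(shock))
            # The engine only sees a stale price.
            oracle_price = prices[max(t - oracle_lag, 0)]
            if collateral * oracle_price < liq_threshold * debt:
                # The sale executes at the true price, net of slippage.
                proceeds = collateral * prices[t] * (1 - slippage)
                loss = max(debt - proceeds, 0.0)
                break
        losses.append(loss)
    n_bad = sum(1 for x in losses if x > 0)
    return {"p_bad_debt": n_bad / n_paths,
            "mean_bad_debt": sum(losses) / n_paths}


print(simulate_bad_debt())
print(simulate_bad_debt(sigma_daily=0.30, oracle_lag=12))
```

Comparing runs with different `sigma_daily`, `oracle_lag`, and `slippage` values gives a rough picture of which input the bad-debt estimate is most sensitive to. A production-grade simulation would replace each of these simplifications with the protocol's actual state transitions.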

Simulation Parameters

Engineers isolate specific failure points by manipulating variables within the simulation environment:

- Volatility Scaling increases the magnitude of price swings to test the responsiveness of the liquidation threshold.

- Liquidity Thinning simulates order book depth reduction to observe the impact on execution price and resulting bad debt.

- Adversarial Agent Injection introduces bots that front-run liquidations or exploit latency to drain protocol resources.

This approach allows developers to identify structural weaknesses before they materialize in production. The goal is to define the boundary conditions where the protocol requires manual intervention or circuit breaker activation to prevent total failure.
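Two of the parameters above, volatility scaling and liquidity thinning, can be swept together to map the boundary where bad debt first appears. The sketch below is a deterministic toy, not a full simulation: `bad_debt_after_shock` and `stress_grid` are hypothetical names, the price shock is a single instantaneous drop, and price impact is assumed to be linear in the quantity sold. Adversarial agents are not modeled.

```python
def bad_debt_after_shock(price_drop, depth, collateral=1000.0, debt=70_000.0,
                         start_price=100.0):
    """Bad debt left after one position is sold into a shocked, thin market."""
    shocked_price = start_price * (1 - price_drop)
    impact = min(collateral / depth, 1.0)           # assumed linear impact
    exec_price = shocked_price * (1 - impact / 2)   # average fill price
    return max(debt - collateral * exec_price, 0.0)


def stress_grid(base_drop=0.10, base_depth=20_000.0,
                vol_scales=(1, 2, 3), depth_fractions=(1.0, 0.5, 0.25)):
    """Bad debt for every combination of shock multiplier and remaining depth."""
    grid = {}
    for v in vol_scales:
        for d in depth_fractions:
            drop = min(base_drop * v, 1.0)          # volatility scaling
            depth = base_depth * d                  # liquidity thinning
            grid[(v, d)] = bad_debt_after_shock(drop, depth)
    return grid


for (v, d), loss in sorted(stress_grid().items()):
    print(f"shock x{v}, depth {d:.0%}: bad debt {loss:,.0f}")
```

In this configuration bad debt appears only at the largest shock multiplier and grows as depth is removed. The cells where the result first becomes positive mark the kind of boundary condition at which manual intervention or a circuit breaker would be needed.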

Evolution

The transition from static margin requirements to dynamic risk-adjusted thresholds marks the most significant advancement in this domain. Early models utilized fixed percentages for collateralization, which ignored the reality of asset-specific volatility and correlation shifts.

Modern architectures now incorporate Volatility-Adjusted Collateralization, where the liquidation threshold updates in real time based on realized and implied volatility. This shift acknowledges that decentralized markets are not static environments but adversarial systems that respond to new information almost immediately.
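One simple way to express such a rule is to add a volatility-proportional buffer to a base collateral ratio. The sketch below is an assumption-laden illustration: it uses realized volatility only (implied volatility would need an options data source), and the names `realized_volatility` and `adjusted_threshold`, the linear form, and the `base`, `sensitivity`, and `cap` values are all hypothetical.

```python
import math


def realized_volatility(prices):
    """Sample standard deviation of log returns, per observation interval."""
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    if len(returns) < 2:
        raise ValueError("need at least three prices")
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance)


def adjusted_threshold(prices, base=1.10, sensitivity=5.0, cap=2.0):
    """Required collateral ratio: base plus a volatility buffer, capped."""
    return min(base + sensitivity * realized_volatility(prices), cap)


calm_window = [100, 101, 100, 101, 100]
wild_window = [100, 120, 90, 130, 80]
print(adjusted_threshold(calm_window), adjusted_threshold(wild_window))
```

The cap keeps a volatility spike from demanding an unbounded collateral ratio. Whether a linear buffer is appropriate, and how long the observation window should be, are design choices this sketch does not settle.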

Dynamic risk adjustment represents the shift from reactive safety measures to proactive protocol hardening against systemic contagion.

Systems now frequently employ cross-protocol stress tests, recognizing that liquidity fragmentation across different decentralized exchanges creates arbitrage opportunities that liquidation engines must account for. This evolution reflects a growing maturity in the design of digital asset derivatives, moving toward a framework that treats systemic risk as a quantifiable input rather than an exogenous variable.

Horizon

The future of Liquidation Engine Stress Testing lies in the integration of on-chain, real-time risk modeling. Protocols will likely adopt autonomous governance modules that automatically adjust liquidation parameters based on decentralized oracle data and external market conditions.

This trajectory suggests a future where the liquidation engine functions as an intelligent, self-optimizing risk manager. The next iteration will prioritize the following developments:

- Cross-Chain Liquidity Routing to ensure that liquidations occur on the most efficient venue regardless of where the position originated.

- Predictive Execution Modeling, which anticipates liquidation clusters before they occur to minimize market impact.

- Automated Circuit Breaker Integration that pauses activity when systemic volatility exceeds the capacity of the protocol to manage risk.

The challenge remains the tension between decentralization and the speed required for effective liquidation. Finding the optimal balance between these two will define the next cycle of protocol design and financial stability in the digital asset space. What happens to protocol stability when liquidation engines become perfectly efficient, thereby removing the volatility that keeps market participants active?