Essence

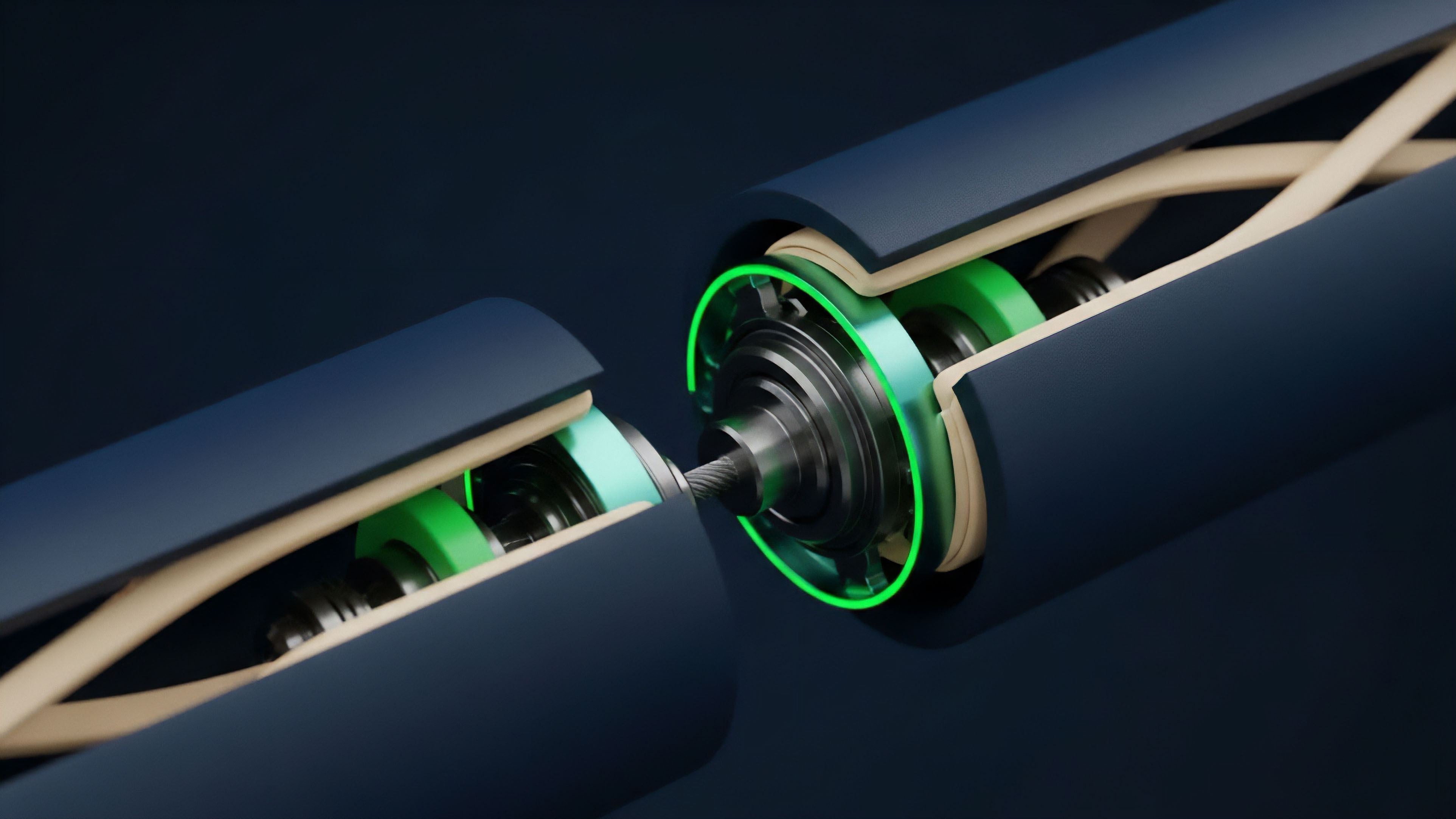

Trustless scaling demands the mathematical certainty of settlement without the prohibitive latency of global consensus. Layer Two Verification functions as the definitive security anchor for off-chain computation, establishing a verifiable link between rapid execution environments and the immutable base layer. This process shifts the burden of proof from every network participant to a specific cryptographic or game-theoretic construct, allowing the underlying protocol to maintain its integrity while processing orders of magnitude more data.

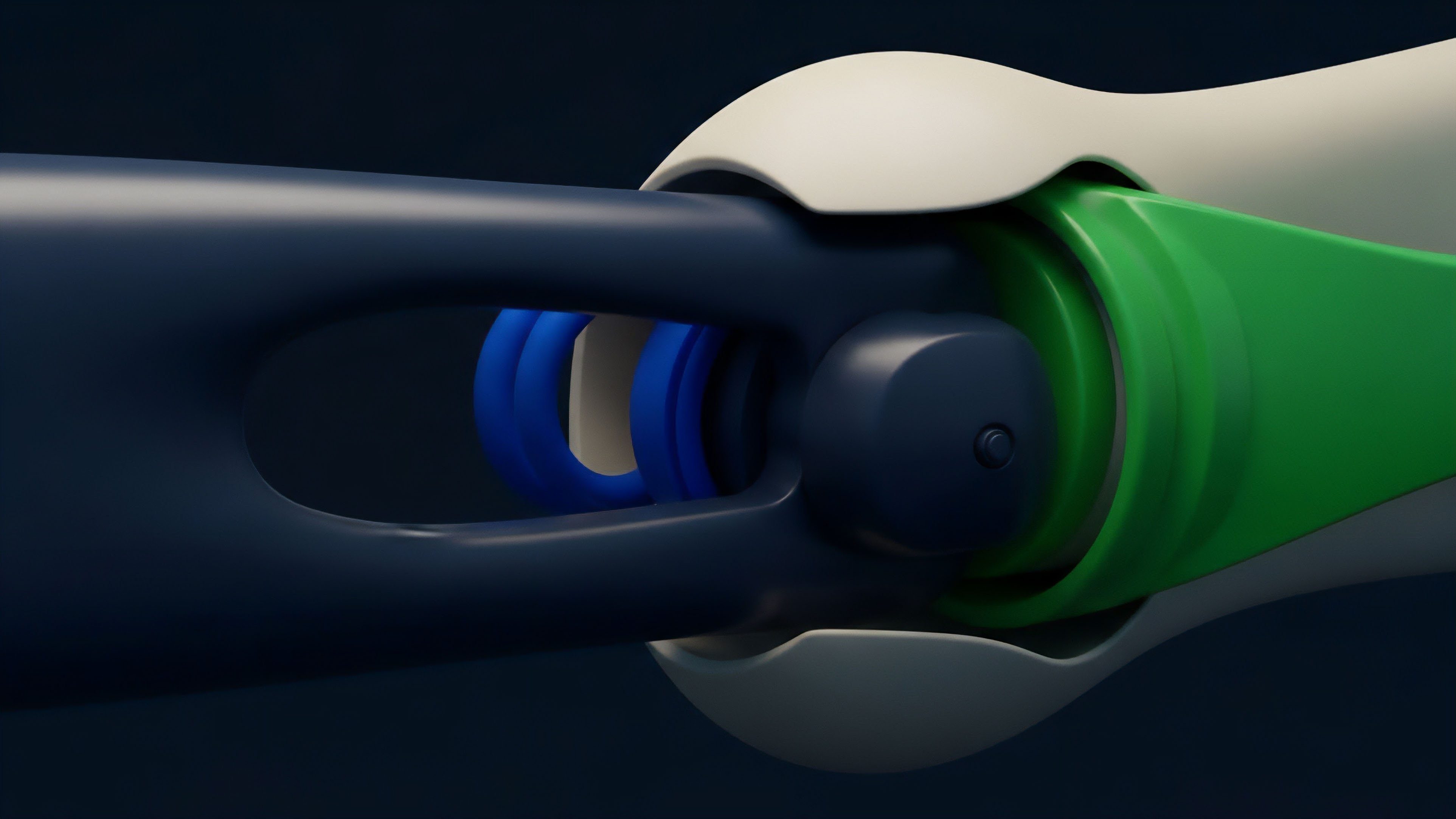

Layer Two Verification constitutes the cryptographic bridge ensuring off-chain state transitions adhere to the consensus rules of the parent blockchain.

The architecture relies on the premise that the base layer should act as a supreme court rather than a daily ledger for every minor interaction. By utilizing Layer Two Verification, developers construct environments where the validity of a transaction is either proven mathematically through succinct proofs or guaranteed by the threat of economic loss via fraud challenges. This systemic shift transforms the blockchain from a singular execution engine into a settlement layer for a vast network of specialized, high-performance execution venues.

The transition from high-entropy off-chain states to low-entropy on-chain finality resembles a thermodynamic process in which local order is bought at the price of energy, here computational energy. Within this structure, the Layer Two Verification engine ensures that no state transition occurs without satisfying the rigorous requirements of the parent network. This creates a sovereign environment where users retain the security of the main chain while benefiting from the efficiency of off-chain processing.

Origin

The necessity for off-chain validation arose from the inherent constraints of the scalability trilemma, where decentralized networks struggled to increase throughput without sacrificing security or decentralization.

Early attempts at scaling, such as state channels and Plasma, provided the initial blueprints for Layer Two Verification by moving transaction data away from the main chain. These early models faced significant hurdles regarding data availability and user exit strategies, which necessitated a more robust method for ensuring state validity.

| Validation Model | Security Mechanism | Data Availability |
|---|---|---|
| State Channels | Multi-signature Consensus | Off-chain Participants |
| Plasma | Fraud Proofs via Exits | Off-chain Operators |
| Rollups | On-chain Proof Validation | On-chain Calldata |

The emergence of rollups marked a definitive shift in the strategy for Layer Two Verification. By bundling transactions and submitting a compressed representation to the base layer, rollups solved the data availability problem that plagued previous iterations. This shift allowed the parent network to verify the correctness of the off-chain state without needing to execute every individual transaction, leading to the current dominance of optimistic and zero-knowledge validation systems.
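The bundling step described above can be sketched as follows. This is a minimal illustration, not any specific rollup's batch format: the length-prefixed encoding, the use of `zlib`, and the function names `encode_batch`, `decode_batch`, and `batch_commitment` are all assumptions made for the example.

```python
import hashlib
import zlib


def encode_batch(transactions: list[bytes]) -> bytes:
    """Length-prefix each transaction, concatenate them, and compress the result."""
    raw = b"".join(len(tx).to_bytes(4, "big") + tx for tx in transactions)
    return zlib.compress(raw)


def decode_batch(payload: bytes) -> list[bytes]:
    """Recover the original transactions from a published payload."""
    raw = zlib.decompress(payload)
    transactions, offset = [], 0
    while offset < len(raw):
        size = int.from_bytes(raw[offset:offset + 4], "big")
        transactions.append(raw[offset + 4:offset + 4 + size])
        offset += 4 + size
    return transactions


def batch_commitment(payload: bytes, new_state_root: bytes) -> bytes:
    """Bind the published data to the state root the operator claims (hypothetical format)."""
    return hashlib.sha256(payload + new_state_root).digest()
```

Because the payload is published on the base layer, any observer can call `decode_batch` and reconstruct the off-chain state independently, which is the property that state channels and Plasma lacked.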

Theory

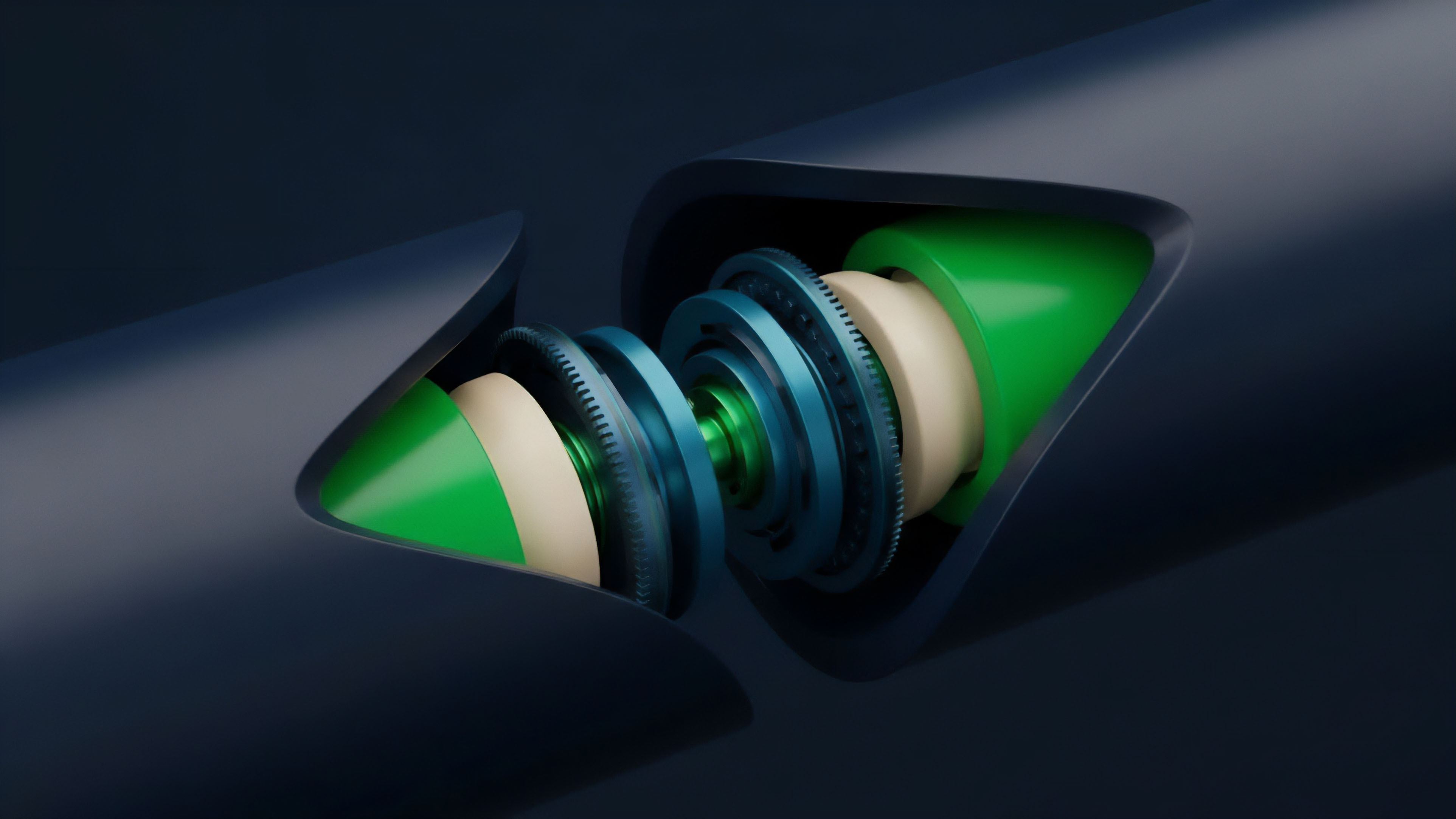

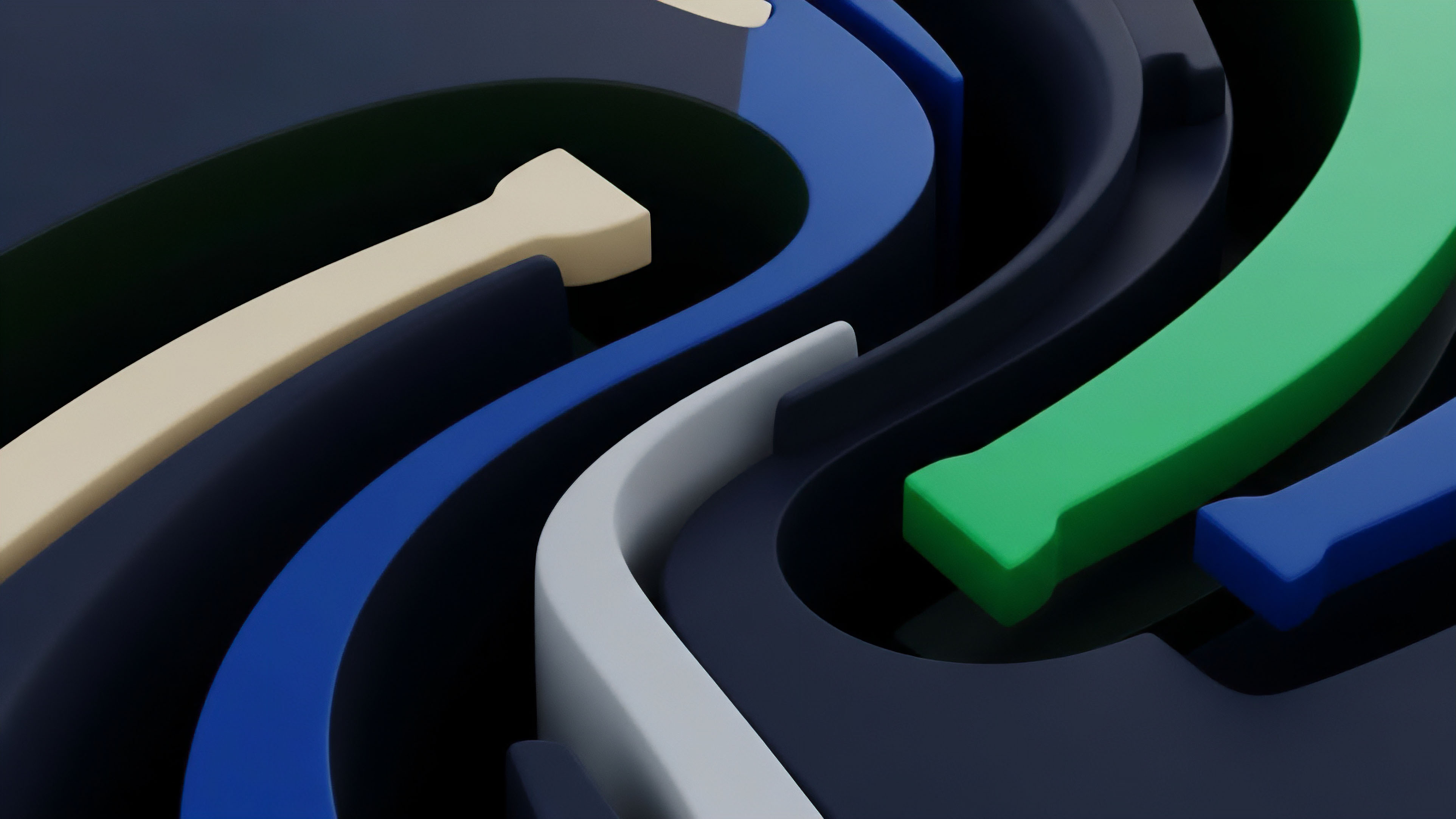

The mathematical foundation of Layer Two Verification splits into two primary methodologies: fraud proofs and validity proofs.

Fraud proofs operate on an optimistic assumption, where the state is considered valid unless a participant provides evidence of a violation within a specific challenge window. This game-theoretic model relies on the existence of at least one honest observer who can detect and prove malfeasance. Validity proofs, conversely, utilize zero-knowledge cryptography to provide a mathematical guarantee that the state transition is correct at the moment of submission.
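The optimistic model can be sketched as a small state machine. This is an illustrative sketch only: the seven-day `CHALLENGE_WINDOW_SECONDS`, the `Claim` type, and the `fraud_proven` flag (standing in for an on-chain fraud-proof check) are assumptions, not parameters of any particular rollup.

```python
from dataclasses import dataclass

# Assumed window length for illustration; real systems choose their own value.
CHALLENGE_WINDOW_SECONDS = 7 * 24 * 60 * 60


@dataclass
class Claim:
    state_root: str
    submitted_at: int          # timestamp of submission, in seconds
    challenged: bool = False   # set once a valid fraud proof lands in time


def challenge(claim: Claim, now: int, fraud_proven: bool) -> bool:
    """Record a challenge if it arrives inside the window and fraud was proven."""
    in_window = now < claim.submitted_at + CHALLENGE_WINDOW_SECONDS
    if in_window and fraud_proven:
        claim.challenged = True
    return claim.challenged


def is_final(claim: Claim, now: int) -> bool:
    """A claim is final once the window has elapsed without a successful challenge."""
    window_over = now >= claim.submitted_at + CHALLENGE_WINDOW_SECONDS
    return window_over and not claim.challenged
```

The sketch makes the trade-off visible: finality is delayed by the full window even when nobody challenges, whereas a validity proof would be checked once at submission.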

Mathematical proofs replace social consensus to achieve deterministic finality in high-throughput financial environments.

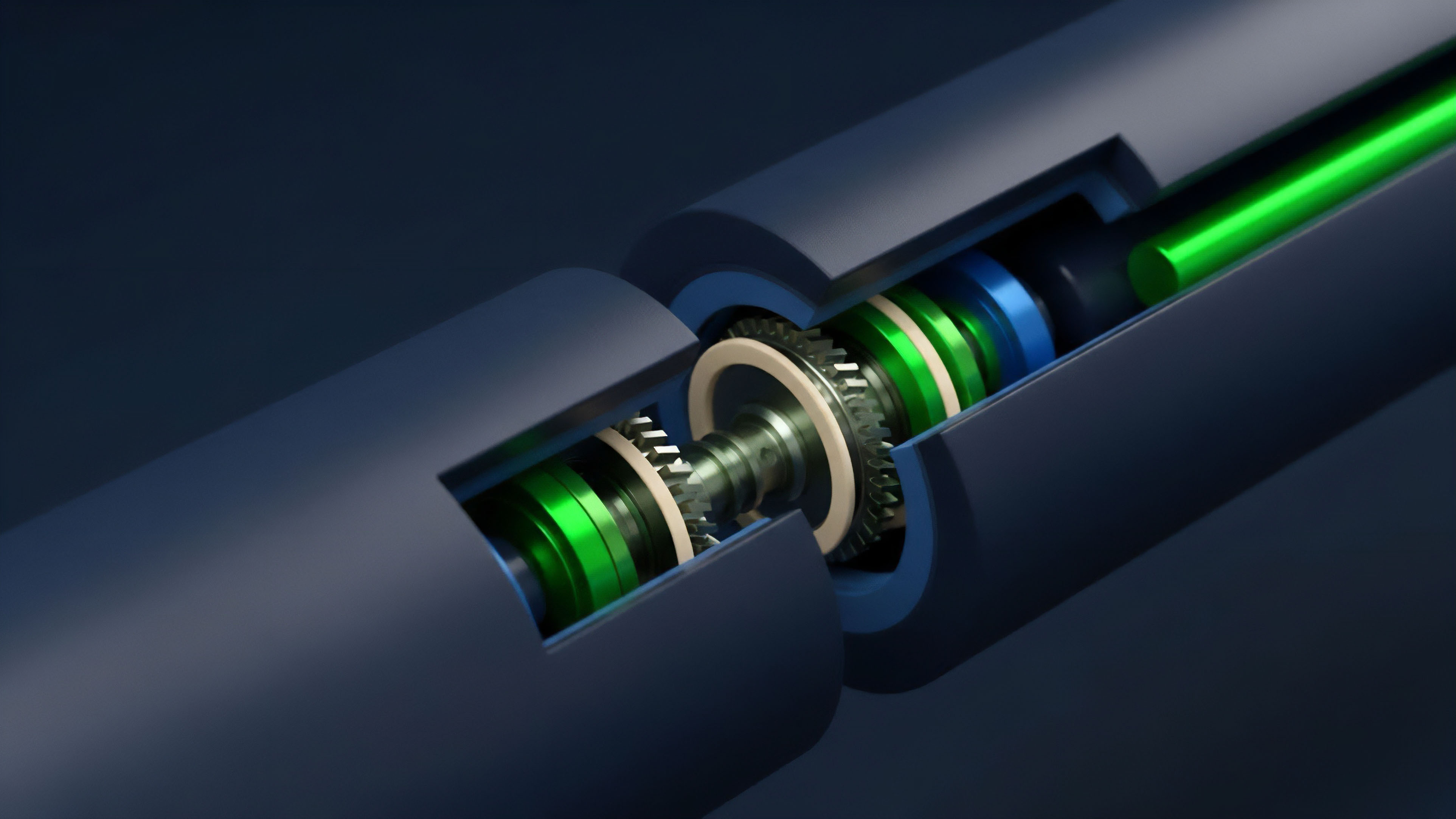

Validity proofs rely on complex arithmetic circuits to represent the logic of transaction execution. These circuits transform state transitions into polynomials, which are then compressed into a succinct proof. The verifier contract on the base layer can confirm the validity of thousands of transactions by checking a single proof, a process that requires significantly less computation than re-executing the original transactions.

- Witness Generation provides the raw data and execution trace required to construct the proof.

- Constraint Systems define the mathematical rules that every valid transaction must satisfy.

- Polynomial Commitments allow the verifier to check the proof without seeing the entire data set.

- Succinctness ensures that proof size and verification time stay small, constant or growing only polylogarithmically with the transaction volume, depending on the proof system.
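The first two items in the list above, witness generation and constraint systems, can be illustrated with a toy rank-1 constraint system (R1CS). The statement "I know `x` such that `x**3 + x + 5 == out`", the modulus `P`, and all names here are assumptions chosen for the example. The check below inspects the full witness directly, so it is neither succinct nor zero-knowledge; a real prover would commit to the witness with polynomial commitments instead.

```python
# Illustrative prime modulus (2**61 - 1); production systems use much larger fields.
P = 2**61 - 1

# Witness layout: [one, x, out, sym1, y, sym2]
# Each row i enforces (A[i] . w) * (B[i] . w) == (C[i] . w)  (mod P)
A = [
    [0, 1, 0, 0, 0, 0],  # x
    [0, 0, 0, 1, 0, 0],  # sym1
    [0, 1, 0, 0, 1, 0],  # x + y
    [5, 0, 0, 0, 0, 1],  # 5 + sym2
]
B = [
    [0, 1, 0, 0, 0, 0],  # x
    [0, 1, 0, 0, 0, 0],  # x
    [1, 0, 0, 0, 0, 0],  # 1
    [1, 0, 0, 0, 0, 0],  # 1
]
C = [
    [0, 0, 0, 1, 0, 0],  # sym1 = x * x
    [0, 0, 0, 0, 1, 0],  # y    = sym1 * x
    [0, 0, 0, 0, 0, 1],  # sym2 = (x + y) * 1
    [0, 0, 1, 0, 0, 0],  # out  = (5 + sym2) * 1
]


def dot(row: list[int], w: list[int]) -> int:
    return sum(a * b for a, b in zip(row, w)) % P


def generate_witness(x: int) -> list[int]:
    """Run the computation and record every intermediate value (the execution trace)."""
    sym1 = x * x % P
    y = sym1 * x % P
    sym2 = (y + x) % P
    out = (sym2 + 5) % P
    return [1, x, out, sym1, y, sym2]


def satisfies(w: list[int]) -> bool:
    """Check every constraint row against the witness."""
    return all(dot(a, w) * dot(b, w) % P == dot(c, w) for a, b, c in zip(A, B, C))
```

For `x = 3` the witness is `[1, 3, 35, 9, 27, 30]` and every row holds; changing any single entry, such as the claimed output, breaks at least one constraint.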

The efficiency of Layer Two Verification is measured by the ratio of execution cost to verification cost. In zero-knowledge systems, the prover incurs a high computational cost to generate the proof, but the verifier on the base layer performs a trivial amount of work. This asymmetry is the primary driver of scalability, as it allows a single powerful prover to serve a vast network of lightweight verifiers.
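The ratio described above can be made concrete with a small calculation. The function names and every gas figure used with them are hypothetical; the sketch assumes a fixed proof-verification cost per batch plus a per-transaction data cost.

```python
def verification_leverage(batch_size: int, exec_gas_per_tx: float,
                          proof_verify_gas: float) -> float:
    """Ratio of re-executing the batch on-chain to verifying one proof for it."""
    if batch_size <= 0 or proof_verify_gas <= 0:
        raise ValueError("batch_size and proof_verify_gas must be positive")
    return batch_size * exec_gas_per_tx / proof_verify_gas


def per_tx_onchain_cost(batch_size: int, proof_verify_gas: float,
                        data_gas_per_tx: float) -> float:
    """Amortized base-layer cost per transaction: shared proof cost plus own data."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return proof_verify_gas / batch_size + data_gas_per_tx
```

With an assumed 500,000 gas to verify a proof and 300 gas of data per transaction, `per_tx_onchain_cost(10, 500_000, 300)` is 50,300 gas while `per_tx_onchain_cost(1000, 500_000, 300)` is 800 gas: the fixed verification cost is amortized across the batch, so larger batches push the per-transaction cost toward the data cost alone.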

Approach

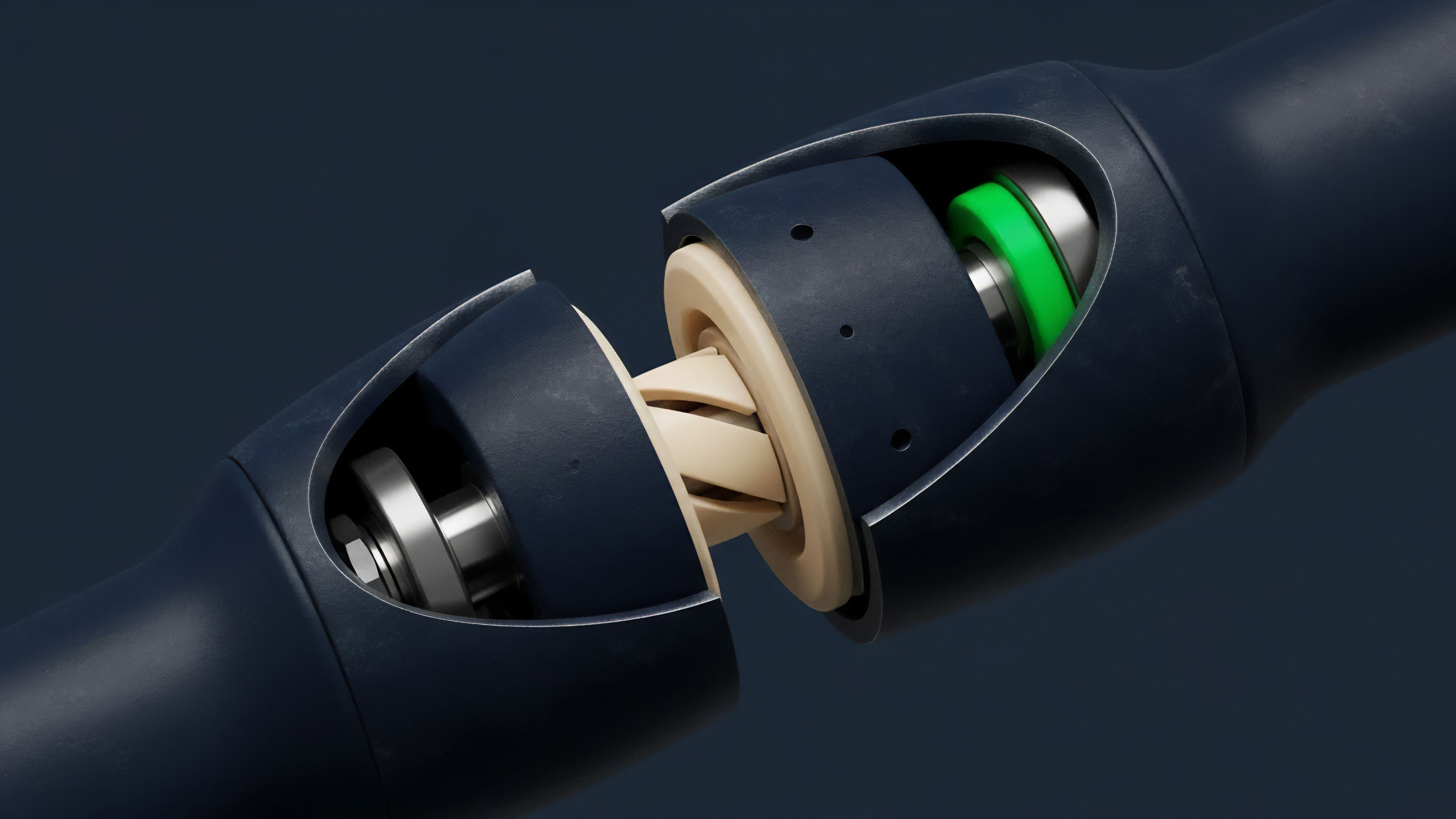

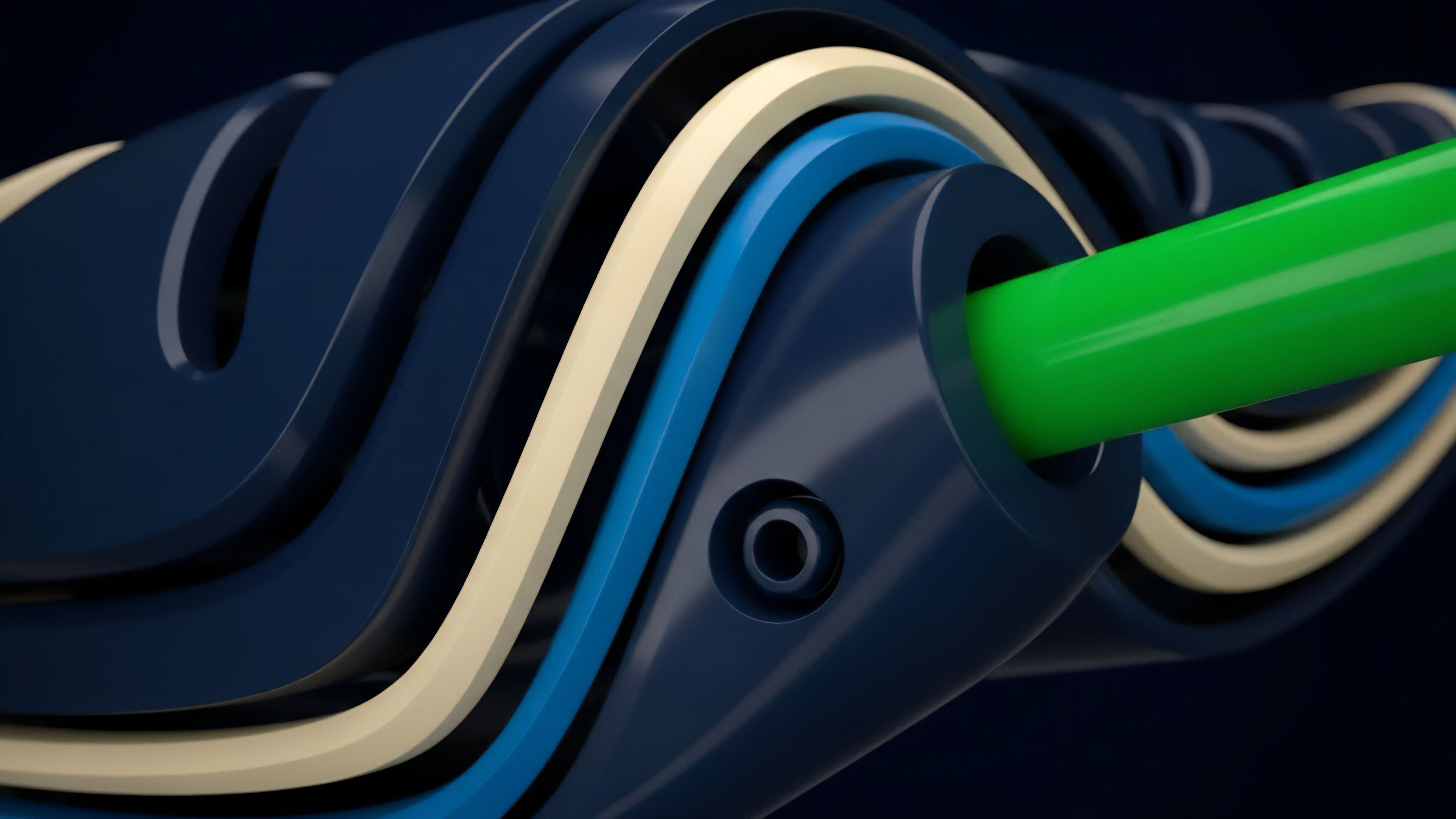

Current implementations of Layer Two Verification utilize specialized sequencers to order transactions, often paired with provers that generate the necessary proofs for the base layer.

These sequencers act as the primary gatekeepers of the off-chain environment, responsible for maintaining the state and ensuring that all transitions are submitted to the parent network. While this model provides high performance, it introduces specific risks related to sequencer centralization and potential censorship.

| Verification Parameter | Optimistic Rollup | Zero-Knowledge Rollup |
|---|---|---|
| Finality Time | About 7 Days (Challenge Window) | Minutes to Hours |
| On-chain Cost | Lower per Batch | Higher per Proof |
| Execution Complexity | EVM Compatible | Circuit Dependent |

The sequencer operates as the primary arbiter of transaction ordering, a role that introduces significant vectors for maximum extractable value while simultaneously acting as the bottleneck for censorship resistance. Current architectures often rely on a single entity to aggregate transactions, generate batches, and submit these to the base layer, creating a dependency that contradicts the decentralized ethos of the underlying network. This centralization is a temporary trade-off for performance, yet it necessitates robust fraud detection mechanisms to ensure the sequencer cannot unilaterally alter the state.

If the sequencer submits an invalid state transition, the verification logic must trigger a challenge or reject the proof immediately. The economic security of the entire rollup depends on the ability of third-party observers to monitor these submissions and provide the necessary evidence of malfeasance. This watchdog role is often under-incentivized, leading to a potential failure mode where invalid states persist due to lack of active oversight.

As the volume of off-chain transactions increases, the computational burden on these observers grows, making the verification process a race between malicious actors and the decentralized security budget.
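The watchdog role described above amounts to re-executing each batch and comparing the result with the sequencer's claim. The following is a minimal sketch under stated assumptions: the state is a plain balance map, the transition rule is a simple transfer, and `state_root`, `apply_batch`, and `should_challenge` are hypothetical names rather than part of any real client.

```python
import hashlib
import json


def state_root(balances: dict[str, int]) -> str:
    """Toy state commitment: hash of the canonically serialized balances."""
    encoded = json.dumps(balances, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def apply_batch(balances: dict[str, int],
                transfers: list[tuple[str, str, int]]) -> dict[str, int]:
    """Re-execute a batch of (sender, recipient, amount) transfers."""
    new_state = dict(balances)
    for sender, recipient, amount in transfers:
        if amount <= 0 or new_state.get(sender, 0) < amount:
            continue  # toy rule: invalid transfers are skipped
        new_state[sender] -= amount
        new_state[recipient] = new_state.get(recipient, 0) + amount
    return new_state


def should_challenge(pre_state: dict[str, int],
                     transfers: list[tuple[str, str, int]],
                     claimed_root: str) -> bool:
    """Return True when the claimed root differs from the re-executed one."""
    return state_root(apply_batch(pre_state, transfers)) != claimed_root
```

The cost of `apply_batch` grows with the batch, which is the burden the text describes: every honest observer pays the full execution cost, while the reward for catching fraud is only collected when fraud actually occurs.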

Evolution

The progression of Layer Two Verification has moved toward increasing the efficiency of data availability and the decentralization of the proving process. The introduction of blob transactions, such as those added to Ethereum by EIP-4844, has significantly reduced the cost of submitting verification data, allowing rollups to scale further without increasing fees for users. This structural shift acknowledges that the primary cost of off-chain validation is often the publication of data on the base layer rather than the computation of the proof itself.

- Centralized Proving characterized the initial phase where a single operator managed all validation tasks.

- Permissionless Observation allowed third parties to challenge optimistic state transitions, increasing security.

- Decentralized Sequencing aims to distribute the ordering and proving roles across a network of participants.

- Shared Settlement Layers enable multiple rollups to utilize a common verification architecture for improved interoperability.

Modern Layer Two Verification systems are also incorporating multi-proof architectures. These systems require a state transition to be validated by both a fraud proof and a validity proof, or by multiple different zero-knowledge circuits. This redundancy mitigates the risk of bugs in any single prover implementation, ensuring that the security of the off-chain state does not depend on the perfection of a single piece of code.
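The acceptance rule of a multi-proof design can be sketched as a conjunction over independent verifiers. Everything below is illustrative: the hash-based `make_toy_verifier` and `toy_prove` merely stand in for real proof systems and provide no security, and the all-must-agree policy is one assumed option among several (some designs might accept a threshold instead).

```python
import hashlib
from typing import Callable, Dict

# A verifier takes (claimed_state_root, proof) and returns whether it accepts.
Verifier = Callable[[bytes, bytes], bool]


def toy_prove(tag: bytes, state_root: bytes) -> bytes:
    """Stand-in for proof generation by the prover identified by `tag`."""
    return hashlib.sha256(tag + state_root).digest()


def make_toy_verifier(tag: bytes) -> Verifier:
    """Stand-in for an independent verifier implementation."""
    def verify(state_root: bytes, proof: bytes) -> bool:
        return proof == hashlib.sha256(tag + state_root).digest()
    return verify


def accept_transition(state_root: bytes, proofs: Dict[str, bytes],
                      verifiers: Dict[str, Verifier]) -> bool:
    """Accept only if every configured verifier has a proof and accepts it."""
    if not verifiers:
        return False  # never accept with no verifier configured
    return all(
        name in proofs and verify(state_root, proofs[name])
        for name, verify in verifiers.items()
    )
```

Under this rule a bug that makes one verifier accept an invalid proof is not enough on its own, since the other verifier would still have to accept the same state root.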

Horizon

The future of Layer Two Verification lies in the implementation of recursive proofs and the unification of liquidity across disparate execution layers.

Recursive proving allows a verifier to confirm the validity of a proof that itself contains other proofs, enabling the creation of multi-layered scaling architectures. This technology could allow many rollup batches, or even entire chains, to settle within a single transaction on the base layer, raising the practical ceiling on throughput, although proving time and data availability still impose limits.
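The shape of recursive aggregation can be sketched as a tree that is folded level by level until one object remains. This is a structural illustration only: `fold` uses a hash as a stand-in for "a proof that the child proofs verified" and has none of the soundness of a real recursive proof system, and the names and `fan_in` parameter are assumptions.

```python
import hashlib


def fold(children: list[bytes]) -> bytes:
    """Stand-in for producing one proof that attests to the validity of its children."""
    return hashlib.sha256(b"".join(children)).digest()


def aggregate(proofs: list[bytes], fan_in: int = 2) -> tuple[bytes, int]:
    """Fold proofs in groups of `fan_in` until one remains.

    Returns the final aggregate and the number of folding rounds performed.
    """
    if not proofs:
        raise ValueError("at least one proof is required")
    if fan_in < 2:
        raise ValueError("fan_in must be at least 2")
    level, rounds = list(proofs), 0
    while len(level) > 1:
        level = [fold(level[i:i + fan_in]) for i in range(0, len(level), fan_in)]
        rounds += 1
    return level[0], rounds
```

Eight leaf proofs fold in three rounds with `fan_in=2` and in two rounds with `fan_in=4`. The base layer checks only the final aggregate, so its work does not grow with the number of leaves, while the prover's work grows with the size of the tree.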

Future verification architectures will leverage recursive proofs to scale throughput far beyond current limits without compromising the security of the base layer.

As Layer Two Verification matures, the distinction between different rollup types will likely blur. Hybrid systems will emerge, utilizing the low cost of optimistic validation for standard transactions while switching to zero-knowledge proofs for high-value settlements or instant withdrawals. This flexibility will allow protocols to optimize for both cost and speed based on the specific requirements of the assets being traded.

The integration of Layer Two Verification into the foundational architecture of the internet of value will necessitate a shift in how market participants perceive risk. The reliance on mathematical certainty rather than institutional trust will redefine the parameters of financial settlement. In this future, the ability to verify state transitions permissionlessly will be the standard for all digital asset exchanges, ensuring that the global financial system remains transparent, resilient, and accessible to all.

Glossary

Trusted Setup

Sovereign Chains

Off-Chain State

App Chains

Prover Efficiency

Zero-Knowledge Proofs

FPGA Proving

Challenge Periods

Censorship Resistance