Essence

The computational ceiling of standard blockchain environments dictates the current boundary of decentralized financial sophistication. Traditional smart contracts operate within a shared state machine where every node must execute every transaction, a design that ensures security but imposes severe latency and cost constraints. High-frequency derivatives and complex option pricing models require a density of calculation that exceeds these on-chain limits.

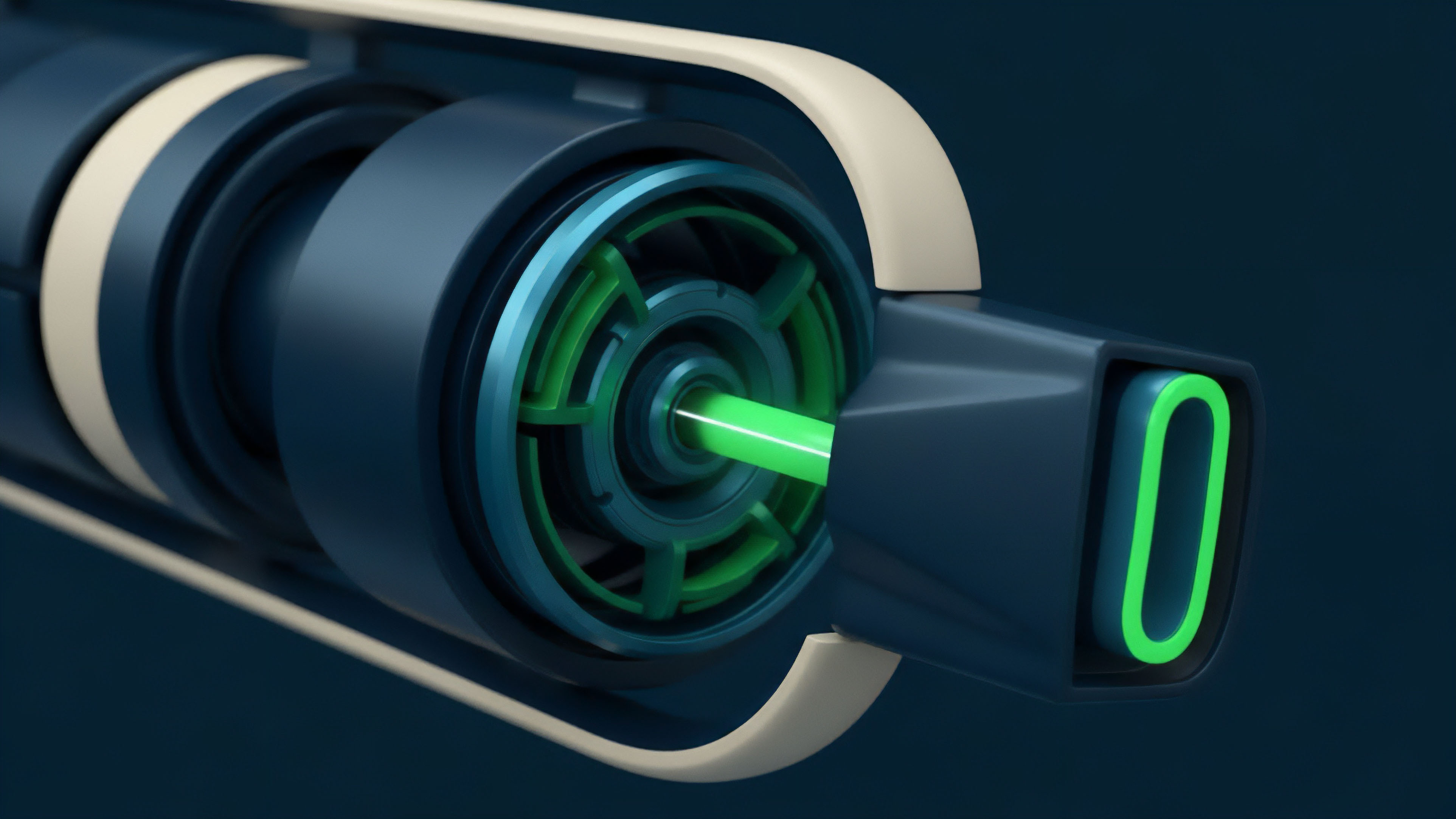

Hybrid Compute Architectures resolve this bottleneck by decoupling the execution of complex logic from the final settlement of state. This separation allows protocols to run intensive risk engines, Black-Scholes pricing, Monte Carlo simulations, and real-time liquidation monitors in optimized environments while maintaining the censorship resistance of the underlying ledger.

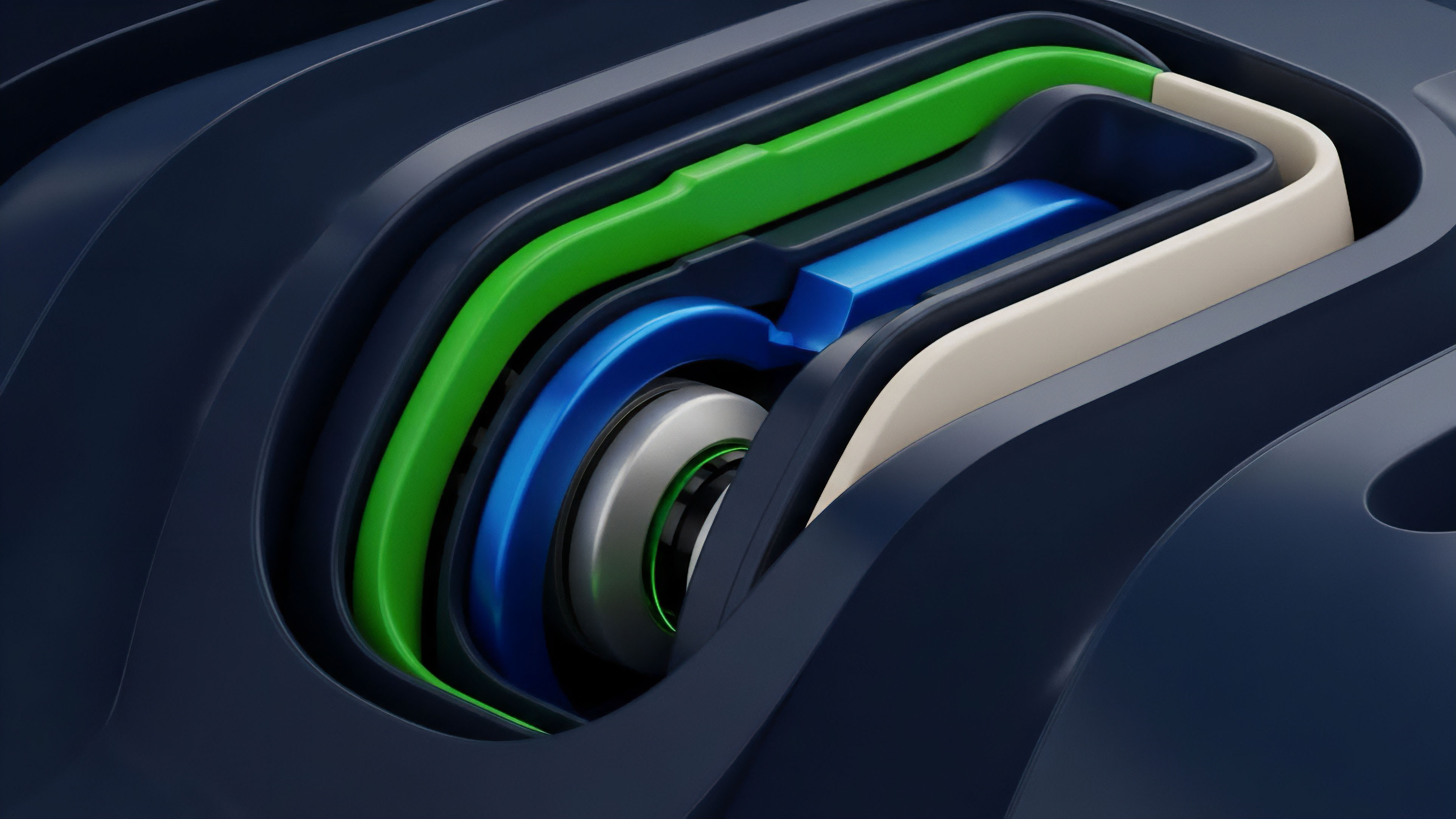

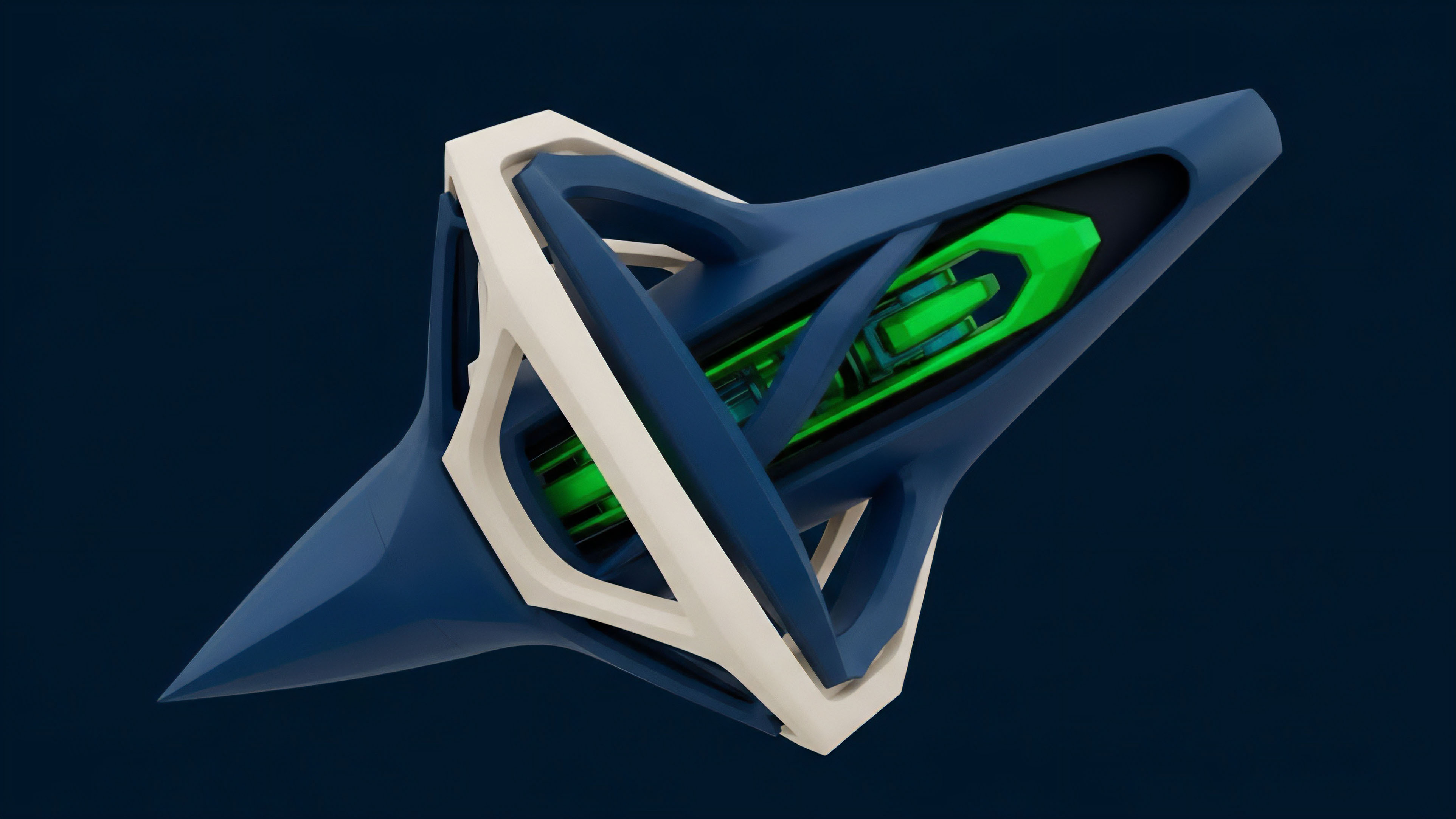

Hybrid architectures facilitate the execution of high-order financial logic by separating intensive calculation from the finality of the distributed ledger.

The primary function of this model is to offload computationally heavy tasks, which do not need to be re-executed by every node, to secondary layers. These layers return a verifiable proof of execution to the main chain. By doing so, the system achieves a level of capital efficiency and risk management previously reserved for centralized exchanges.

The architecture represents a fundamental shift from monolithic execution to a modular, specialized stack where each component performs the task for which it is most suited. The strategic advantage of this design lies in its ability to handle multi-dimensional risk parameters. In a standard automated market maker, the price of an option might only reflect a simple bonding curve.

A hybrid system allows for the integration of real-time volatility smiles, interest rate shifts, and correlation dynamics. This depth of analysis ensures that liquidity providers are protected against toxic flow and that traders receive pricing that reflects true market conditions.
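To make the contrast with a bonding curve concrete, here is a minimal Black-Scholes sketch that prices the same put off a flat volatility and off a volatility smile. The quadratic smile in `smile_vol` and its parameters (`atm_vol`, `skew`, `curvature`) are hypothetical and uncalibrated; they only show that an out-of-the-money put picks up a higher volatility, and therefore a higher price, once the smile is taken into account.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF expressed through the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_put(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European put on a non-dividend asset."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return strike * math.exp(-rate * t) * norm_cdf(-d2) - spot * norm_cdf(-d1)


def smile_vol(spot: float, strike: float, atm_vol: float = 0.60,
              skew: float = -0.10, curvature: float = 0.30) -> float:
    """Hypothetical quadratic smile in log-moneyness k = ln(K / S).

    The parameters are illustrative only, not fitted to any market.
    A negative skew raises the volatility of low strikes (k < 0).
    """
    k = math.log(strike / spot)
    return atm_vol + skew * k + curvature * k * k


spot, t, rate = 2000.0, 30 / 365, 0.0
for strike in (1600.0, 2000.0):
    flat = bs_put(spot, strike, t, rate, 0.60)
    smiled = bs_put(spot, strike, t, rate, smile_vol(spot, strike))
    print(f"K={strike:.0f}  flat-vol put={flat:.2f}  smile put={smiled:.2f}")
```

At the money the two prices coincide; the gap opens only in the wings, which is exactly where a single bonding curve would misprice the risk.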

Origin

The necessity for hybrid models arose from the systemic failures of early decentralized derivative platforms. Initial attempts to build on-chain order books or complex option vaults were met with the harsh reality of gas costs and block times.

During periods of high volatility, the very moments when risk management is most vital, the network would become congested, preventing liquidations and leading to protocol insolvency. The transition began with the introduction of oracle networks that did more than just report prices. These networks started providing external data feeds that triggered on-chain events.

Simultaneously, the development of Layer 2 scaling solutions and sidechains offered a glimpse into a world where execution could be faster and cheaper. The true breakthrough occurred with the maturation of zero-knowledge proofs and trusted execution environments. These technologies provided the missing link: a way to perform calculations off-chain that the on-chain smart contract could trust without re-executing.

This historical progression reflects a move from simple, trustless computation to complex, verifiable computation. The industry realized that decentralization does not require every node to perform every math problem; it requires that every math problem can be proven correct.
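The asymmetry this argument relies on, where checking an answer is far cheaper than producing it, shows up even without cryptography. In the sketch below, recovering an implied volatility from an option price takes a bisection loop with many Black-Scholes evaluations, while checking a claimed volatility takes a single one. This illustrates checkable computation, not a zero-knowledge proof; ZK proof systems generalize the idea so that the output of an arbitrary program can be checked cheaply, not only outputs that happen to have an easy inverse check. The function names and the tolerance are hypothetical.

```python
import math


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call(spot, strike, t, rate, vol):
    """Black-Scholes European call; strictly increasing in vol for t > 0."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def solve_implied_vol(price, spot, strike, t, rate, lo=1e-4, hi=5.0, iters=100):
    """Hypothetical prover side (off-chain): bisection, one pricing call per step."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, t, rate, mid) < price:
            lo = mid  # model price too low, so the volatility must be higher
        else:
            hi = mid
    return 0.5 * (lo + hi)


def verify_implied_vol(claimed_vol, price, spot, strike, t, rate, tol=1e-6):
    """Hypothetical verifier side (on-chain in spirit): a single pricing call."""
    return abs(bs_call(spot, strike, t, rate, claimed_vol) - price) <= tol


# The prover runs about 100 pricing calls; the verifier runs one.
market_price = bs_call(100.0, 110.0, 0.5, 0.01, 0.45)
iv = solve_implied_vol(market_price, 100.0, 110.0, 0.5, 0.01)
print(round(iv, 6), verify_implied_vol(iv, market_price, 100.0, 110.0, 0.5, 0.01))
```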

Theory

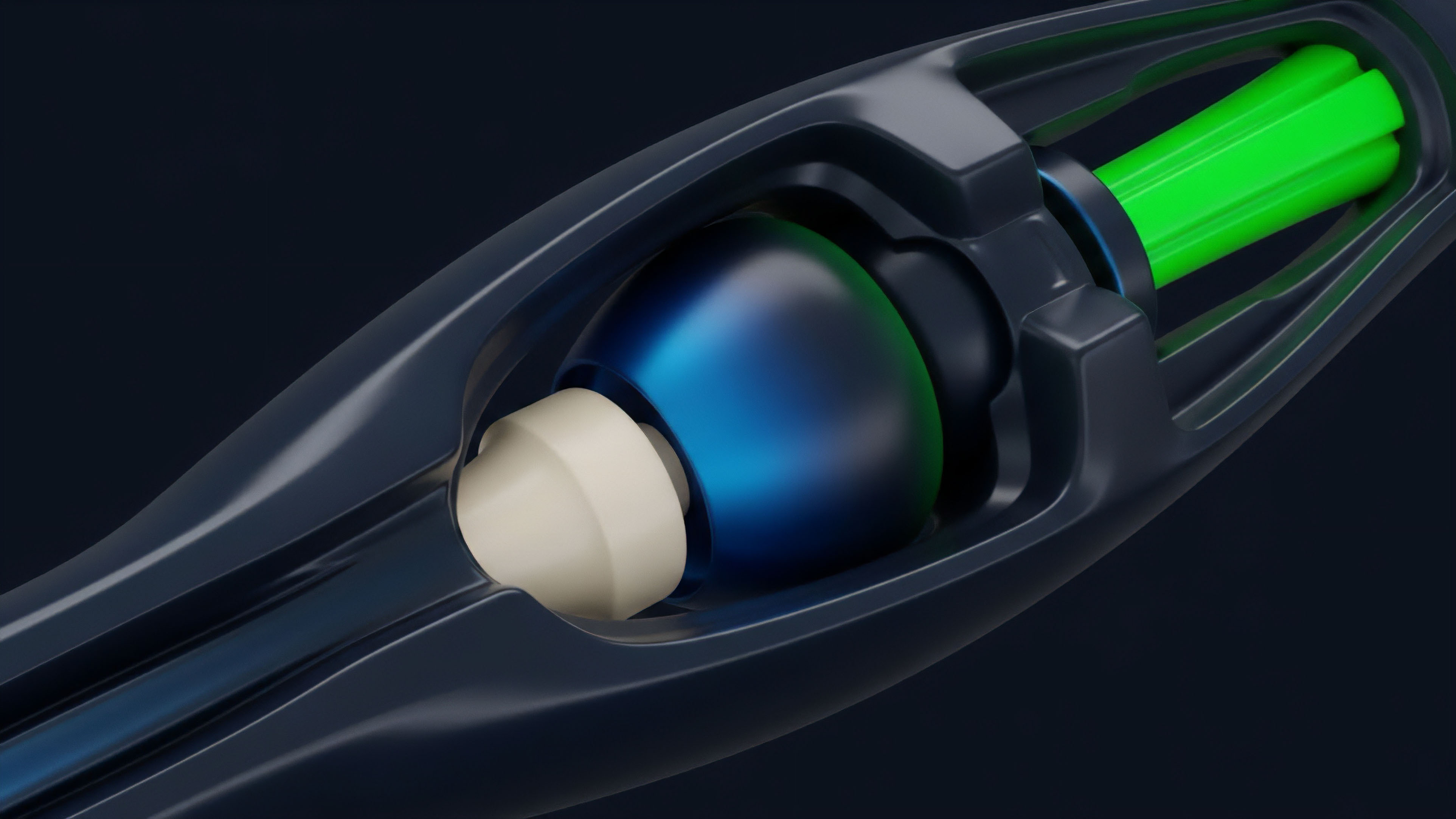

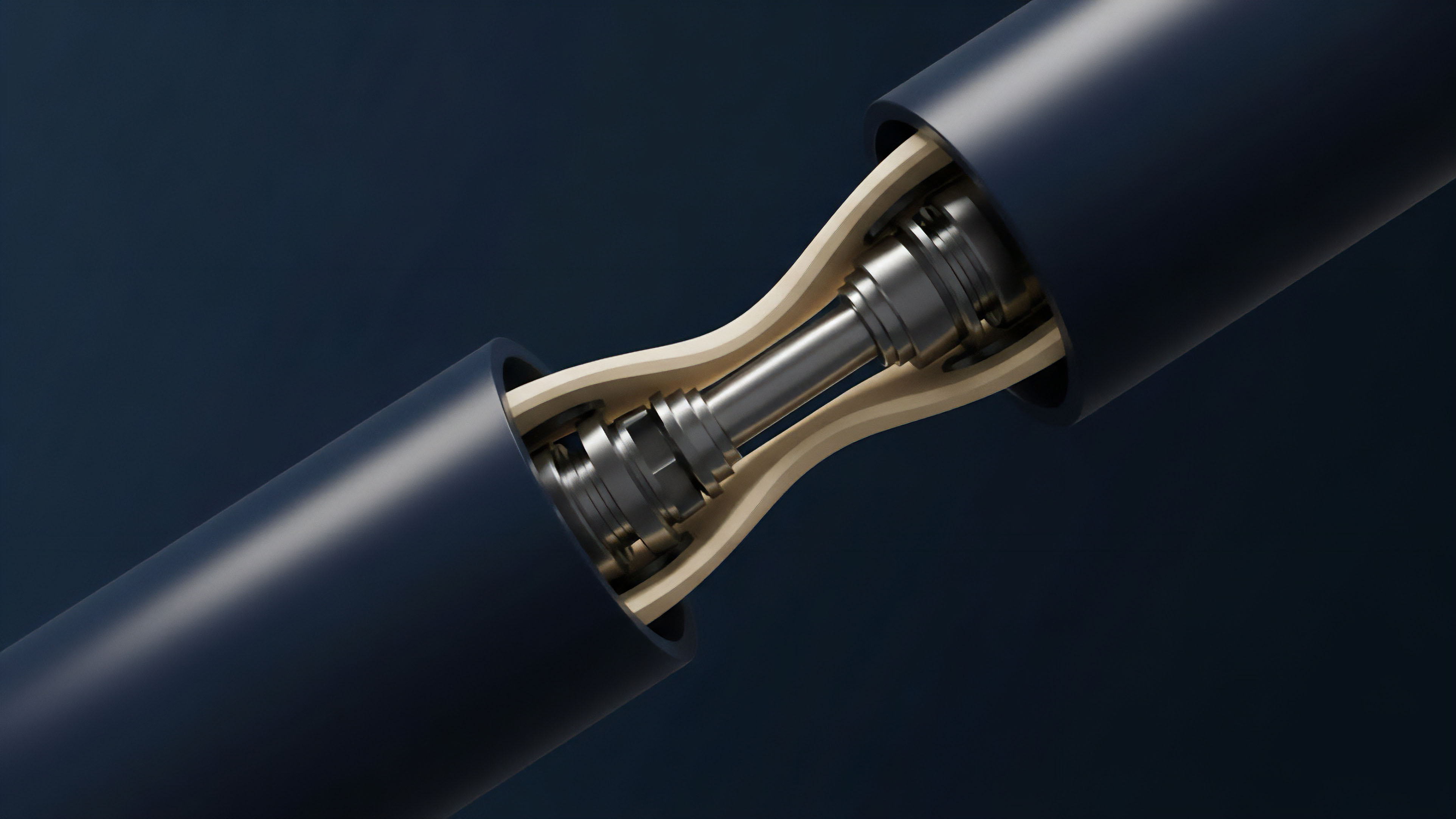

The mathematical foundation of Hybrid Compute Architectures rests on the distinction between deterministic state transitions and probabilistic or heavy-compute risk modeling. In a derivative context, the settlement of a contract is a simple state change: Alice pays Bob.

Conversely, determining the fair value of a long-dated exotic option involves solving partial differential equations or running Monte Carlo simulations over thousands of price paths.
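The gap between the two workloads is easy to see side by side. The sketch below prices a European call by Monte Carlo under geometric Brownian motion, which costs one random draw and one exponential per path, and then settles the premium with a two-line balance update. The helper names (`mc_european_call`, `settle`) and the dictionary-of-balances ledger are hypothetical stand-ins for an off-chain engine and an on-chain contract.

```python
import math
import random


def mc_european_call(spot, strike, t, rate, vol, n_paths=100_000, seed=7):
    """Risk-neutral Monte Carlo price of a European call under GBM."""
    rng = random.Random(seed)  # seeded so the off-chain result is reproducible
    drift = (rate - 0.5 * vol * vol) * t
    diffusion = vol * math.sqrt(t)
    payoff_sum = 0.0
    for _ in range(n_paths):
        terminal = spot * math.exp(drift + diffusion * rng.gauss(0.0, 1.0))
        payoff_sum += max(terminal - strike, 0.0)
    return math.exp(-rate * t) * payoff_sum / n_paths


def settle(balances, payer, payee, amount):
    """Hypothetical settlement step: a deterministic transfer, 'Alice pays Bob'."""
    if balances[payer] < amount:
        raise ValueError("insufficient balance")
    balances[payer] -= amount
    balances[payee] += amount
    return balances


premium = mc_european_call(100.0, 100.0, 1.0, 0.0, 0.2)  # heavy: 100,000 paths
ledger = settle({"alice": 10.0, "bob": 0.0}, "alice", "bob", premium)  # light
```

The Monte Carlo error shrinks only as 1/sqrt(n_paths), which is why accurate prices need so many paths, while the transfer costs the same no matter how the premium was derived.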

Asymmetric Execution Logic

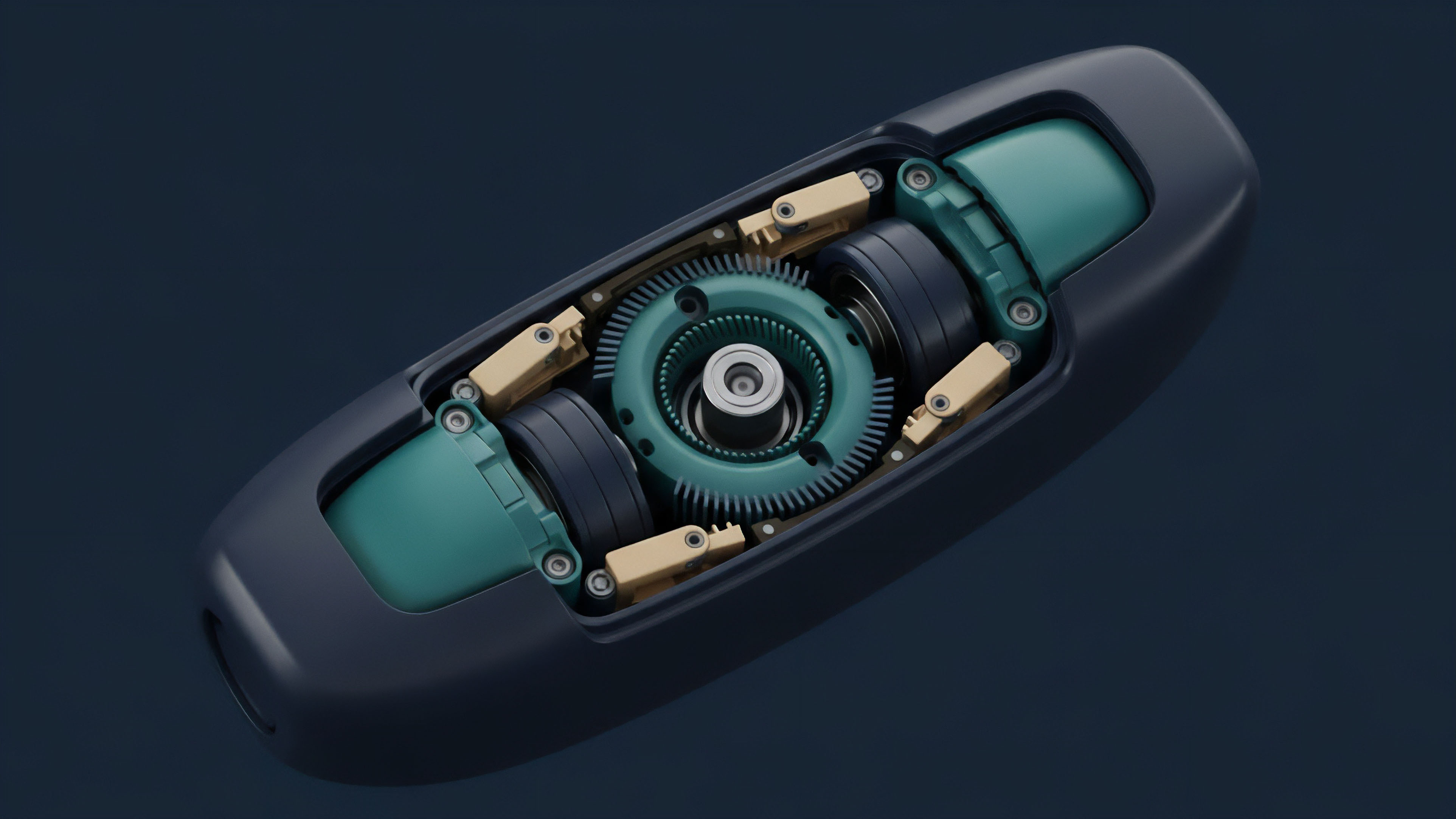

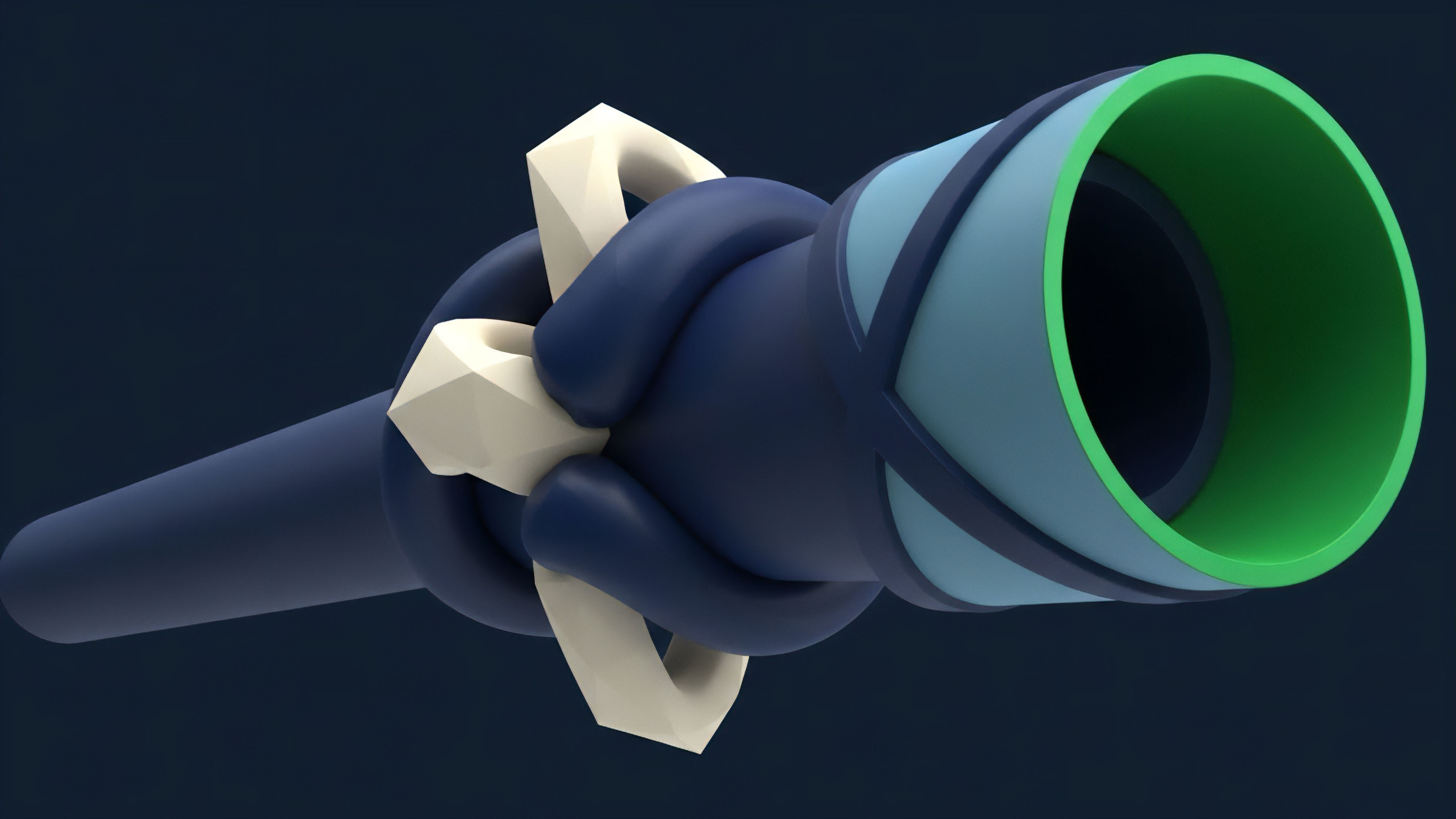

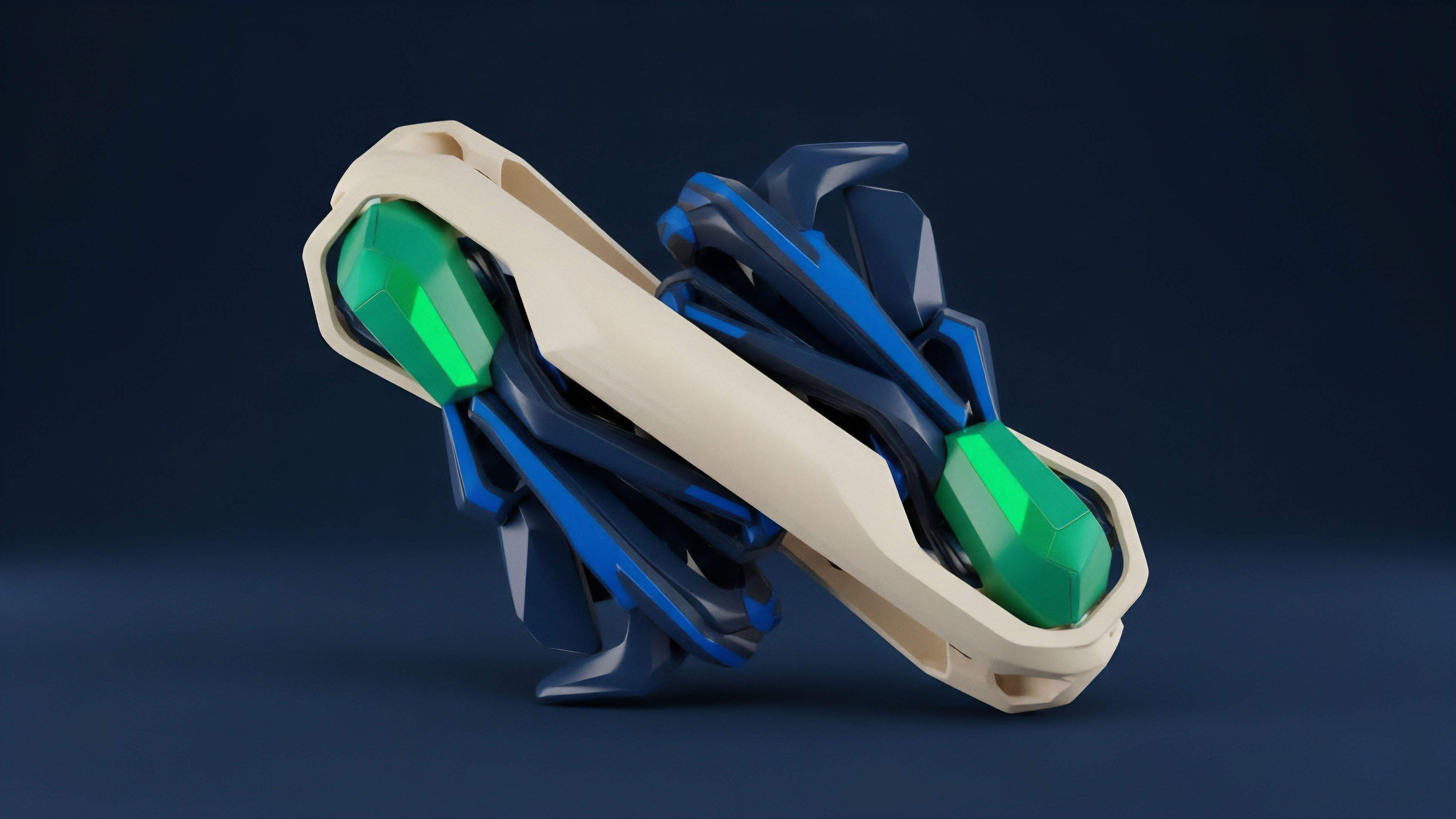

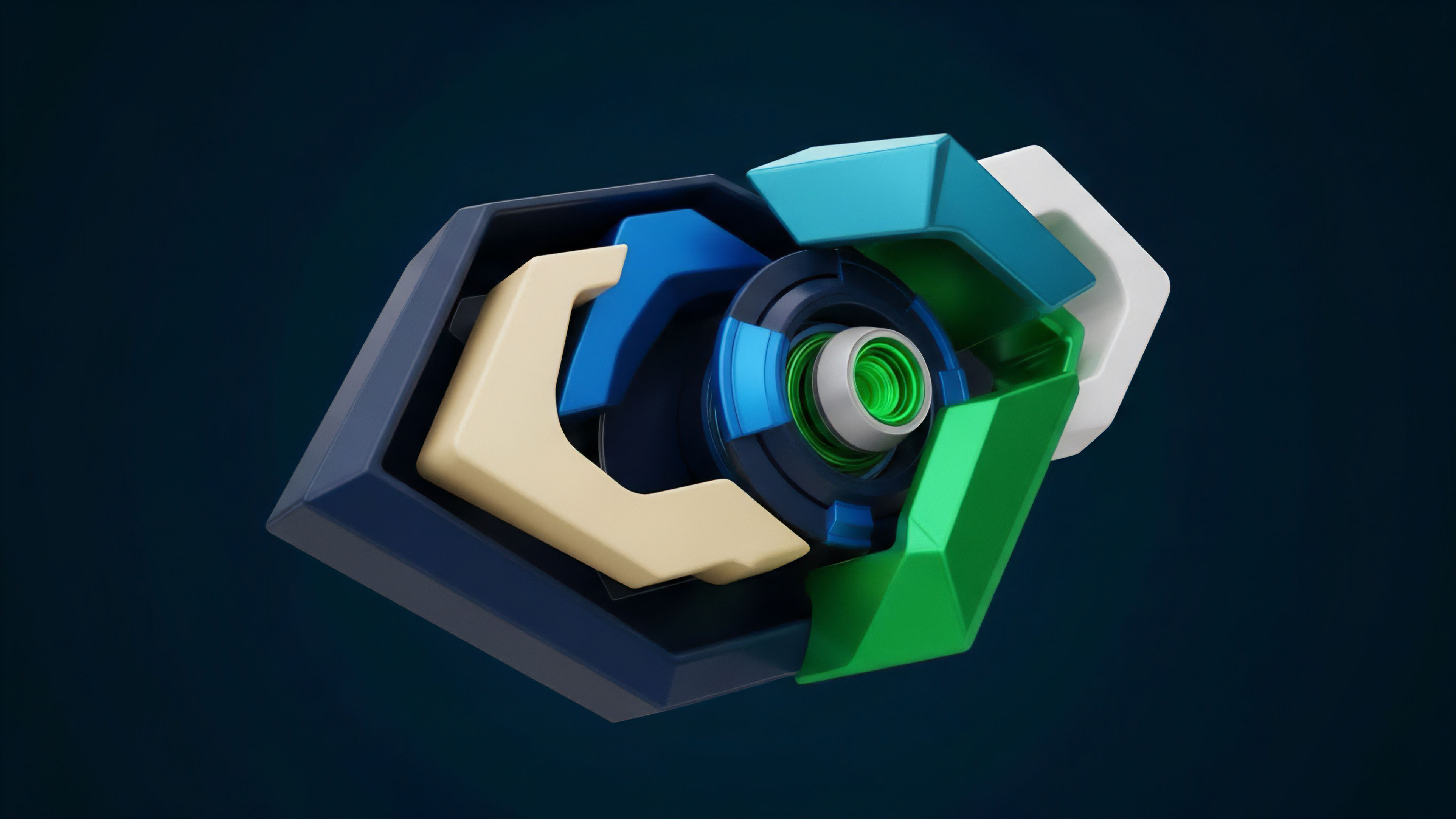

The architecture utilizes a dual-track system. Track one, the Settlement Layer, manages the custody of assets and the finality of trades. Track two, the Computation Layer, handles the heavy lifting.

The interaction between these layers is governed by a set of cryptographic or economic guarantees.

| Feature | On-Chain Execution | Hybrid Execution | Centralized Execution |
|---|---|---|---|
| Latency | High (Block-bound) | Low (Milliseconds) | Ultra-Low (Microseconds) |
| Trust Model | Trustless | Verifiable | Trusted Third Party |
| Cost Efficiency | Low (Gas-intensive) | High (Off-chain) | Maximum |
| Security | Maximum (L1 Consensus) | High (Cryptographic Proofs) | Variable (Internal Controls) |

The decoupling of state settlement from computational execution allows for the integration of institutional-grade risk modeling within decentralized frameworks.

Verifiable Compute Mechanisms

To maintain the integrity of the system, the Computation Layer must provide proof of its work. This is achieved through several primary methods:

- Zero-Knowledge Coprocessors use succinct non-interactive arguments of knowledge (SNARKs) to prove that a specific calculation was performed correctly on a given set of inputs and, in their zero-knowledge form, can do so without revealing private inputs.

- Trusted Execution Environments leverage hardware-level isolation, such as Intel SGX, to run code in a secure enclave that is inaccessible to the rest of the system, and use remote attestation to show that the expected code produced a given result.

- Optimistic Computation assumes the result is correct but opens a challenge period during which observers can submit a fraud proof if they detect an error.
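The optimistic model can be sketched as a small state machine. In this hypothetical Python model of a contract, a submitted result becomes final only after `challenge_window` blocks pass without a successful challenge, and a challenge succeeds when re-executing the computation yields a different output. Production systems usually narrow a dispute down to a single step through an interactive bisection game rather than re-running the whole computation; full re-execution is used here only for brevity.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Claim:
    inputs: tuple
    output: int
    submitted_at: int       # block height at submission
    rejected: bool = False


class OptimisticVerifier:
    """Hypothetical optimistic-compute contract modeled in Python."""

    def __init__(self, compute: Callable[..., int], challenge_window: int):
        self.compute = compute
        self.window = challenge_window
        self.claims: list = []

    def submit(self, inputs: tuple, output: int, height: int) -> int:
        """Record a result without checking it; return its claim id."""
        self.claims.append(Claim(tuple(inputs), output, height))
        return len(self.claims) - 1

    def challenge(self, claim_id: int, height: int) -> bool:
        """Fraud proof: succeeds only inside the window and only if the claim is wrong."""
        claim = self.claims[claim_id]
        if claim.rejected or height > claim.submitted_at + self.window:
            return False
        if self.compute(*claim.inputs) != claim.output:
            claim.rejected = True  # a real system would also slash the submitter's bond
            return True
        return False

    def finalized_output(self, claim_id: int, height: int) -> Optional[int]:
        """Usable on-chain only once the window has closed unchallenged."""
        claim = self.claims[claim_id]
        if claim.rejected or height <= claim.submitted_at + self.window:
            return None
        return claim.output


def required_margin(notional: int, margin_bps: int) -> int:
    """Hypothetical off-chain job; integer math keeps re-execution deterministic."""
    return notional * margin_bps // 10_000


verifier = OptimisticVerifier(required_margin, challenge_window=10)
honest = verifier.submit((1_000_000, 500), 50_000, height=100)
wrong = verifier.submit((1_000_000, 500), 10_000, height=100)
verifier.challenge(wrong, height=105)          # True: re-execution gives 50,000
verifier.finalized_output(honest, height=111)  # 50000 once the window has closed
```

If nobody challenges a wrong claim before its window closes, `finalized_output` returns it anyway, which is why optimistic designs depend on at least one honest, online observer.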

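The choice of mechanism involves a trade-off between latency and security. ZK-proofs offer the strongest guarantees but currently suffer from long proof-generation times. TEEs provide near-instant execution but introduce a dependency on hardware manufacturers. Optimistic schemes are cheap to run, but their results are not final until the challenge period ends, and they rely on at least one honest observer checking each result.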

Approach

Implementing a hybrid model requires a sophisticated orchestration layer that manages the flow of data between the user, the computation provider, and the blockchain.

The process begins when a user initiates a trade or when a risk threshold is met.

Systemic Workflow

The operational flow of a modern hybrid derivative platform follows a rigorous sequence:

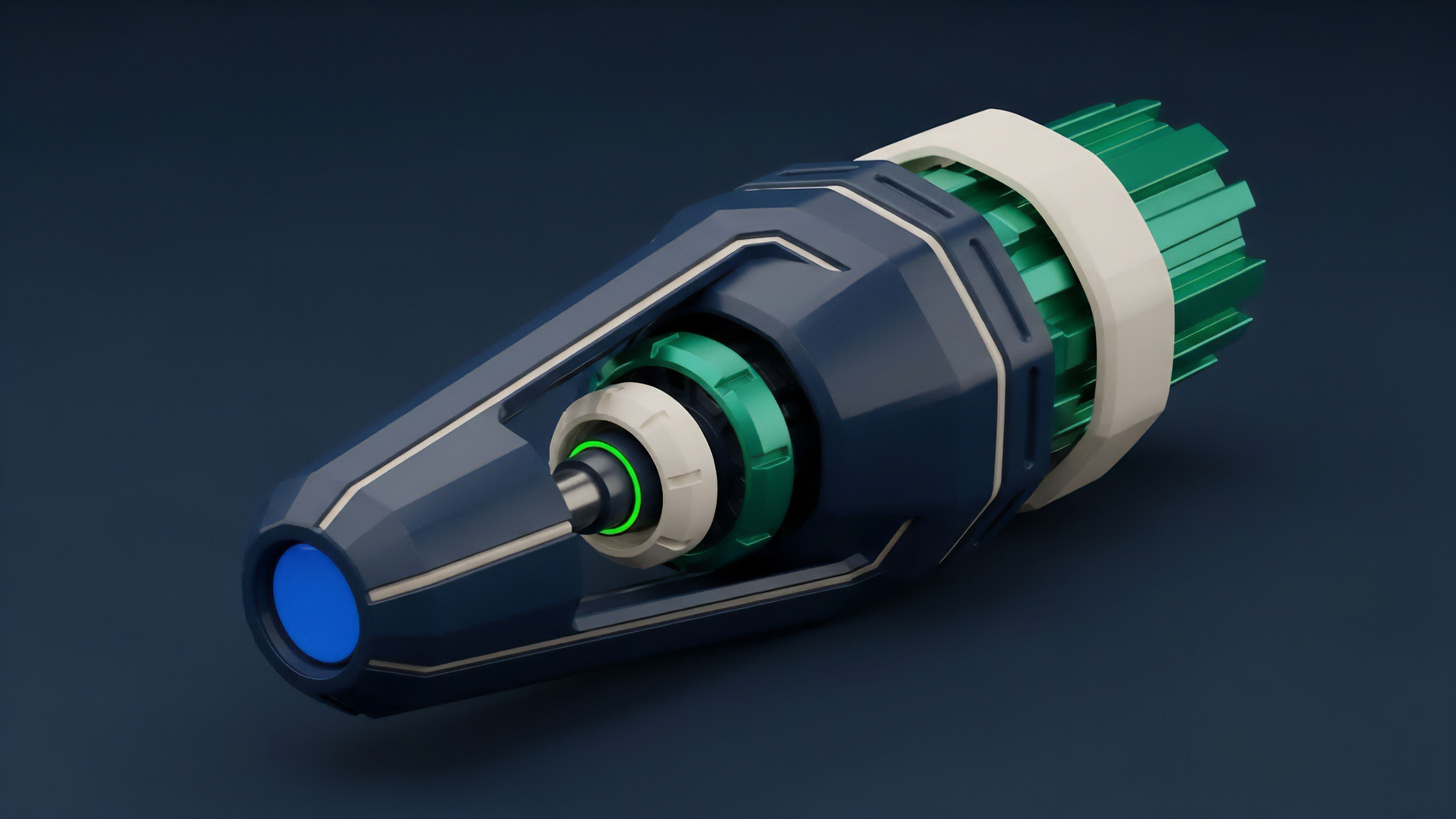

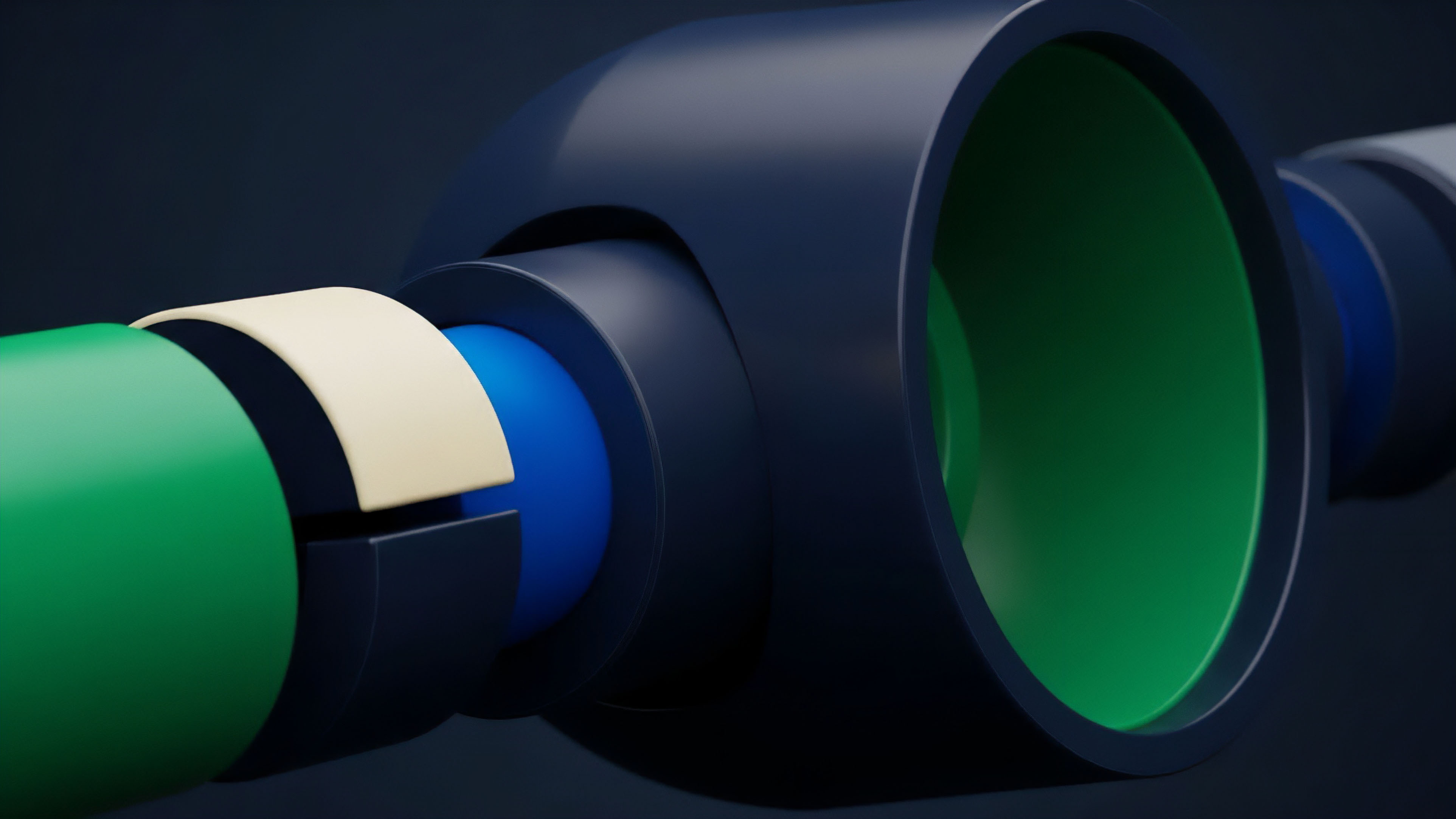

- Data Ingestion involves the collection of real-time market data from multiple sources, including centralized exchange feeds and on-chain liquidity pools.

- Off-Chain Processing occurs within the computation layer, where the risk engine calculates margin requirements, option Greeks, and liquidation prices.

- Proof Generation creates a cryptographic commitment or a signed attestation of the results produced in the previous step.

- On-Chain Verification submits the proof to the smart contract, which verifies it and executes the necessary state changes.
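The four steps can be strung together in a minimal sketch. It assumes a single linear position, a median over a few hypothetical price feeds, and an HMAC from Python's standard library as a stand-in for the attestation. An HMAC is symmetric, so the verifier must hold the same secret key, which a public blockchain cannot keep secret; a real deployment would use an asymmetric signature from a TEE or a ZK proof instead. All names here (`ingest`, `compute_risk`, `attest`, `verify_and_settle`, `ATTESTER_KEY`) are hypothetical.

```python
import hashlib
import hmac
import json
import statistics

# Hypothetical symmetric key standing in for an attested signing key.
ATTESTER_KEY = b"demo-attester-key"


def ingest(feeds: dict) -> float:
    """Step 1, data ingestion: the median tolerates one faulty source out of three."""
    return statistics.median(feeds.values())


def compute_risk(price: float, size: float, equity: float, maint_rate: float = 0.05) -> dict:
    """Step 2, off-chain processing: maintenance margin for one linear position."""
    notional = abs(size) * price
    return {"price": price, "notional": notional,
            "maintenance": notional * maint_rate, "equity": equity}


def attest(result: dict) -> tuple:
    """Step 3, proof generation: canonical encoding plus an authentication tag."""
    payload = json.dumps(result, sort_keys=True).encode()
    tag = hmac.new(ATTESTER_KEY, payload, hashlib.sha256).hexdigest()
    return payload, tag


def verify_and_settle(payload: bytes, tag: str) -> str:
    """Step 4, on-chain verification: reject tampered results, then act on valid ones."""
    expected = hmac.new(ATTESTER_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, tag):
        return "rejected"
    result = json.loads(payload)
    return "liquidate" if result["equity"] < result["maintenance"] else "healthy"


price = ingest({"feed_a": 2000.0, "feed_b": 2001.0, "feed_c": 1500.0})
payload, tag = attest(compute_risk(price, size=10.0, equity=900.0))
print(verify_and_settle(payload, tag))  # prints: liquidate (900 < 5% of 20,000)
```

Editing any field of `payload` after signing changes the expected tag, so the settlement step returns `"rejected"` instead of acting on the altered numbers.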

Risk Management Integration

Hybrid systems enable dynamic margin engines that adjust in real time. Instead of static collateral ratios, the system can implement cross-margining across multiple positions. This requires a constant stream of calculations to ensure that the total value of the account remains above the maintenance margin.
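A cross-margin check reduces to comparing account equity (collateral plus unrealized PnL across every position) with the sum of per-position maintenance requirements. The sketch below assumes linear, perpetual-style positions and hypothetical per-market maintenance rates; option positions would add Greeks-based terms that this example leaves out.

```python
from dataclasses import dataclass


@dataclass
class Position:
    market: str
    size: float         # signed: positive is long, negative is short
    entry_price: float
    maint_rate: float   # hypothetical per-market maintenance rate


def account_health(collateral: float, positions: list, marks: dict) -> tuple:
    """Cross-margin: one collateral pool backs every position in the account."""
    unrealized = sum(p.size * (marks[p.market] - p.entry_price) for p in positions)
    equity = collateral + unrealized
    maintenance = sum(abs(p.size) * marks[p.market] * p.maint_rate for p in positions)
    return equity, maintenance, equity >= maintenance


book = [Position("ETH", 10.0, 2000.0, 0.05), Position("BTC", -1.0, 60_000.0, 0.05)]
# The short BTC gain offsets the long ETH loss, so the account stays healthy.
print(account_health(3_000.0, book, {"ETH": 1_900.0, "BTC": 58_000.0}))
```

Because the check has to be rerun whenever any mark price moves, it is a natural workload for the computation layer rather than the settlement layer.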

| Risk Parameter | Implementation Method | Impact on Capital Efficiency |
|---|---|---|
| Delta Hedging | Automated Off-chain Logic | Reduces directional exposure risk |
| Gamma Scalping | High-frequency Hybrid Loops | Optimizes liquidity provider returns |
| Liquidation Engine | Real-time Monitor + Proofs | Prevents bad debt accumulation |
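The delta-hedging row can be illustrated with a short sketch that sums Black-Scholes deltas over an option book and returns the spot trade that flattens it. The book layout and the `tolerance` band, which skips trades too small to be worth the fees, are hypothetical choices for this example.

```python
import math


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_delta(kind: str, spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes delta of one European option (no dividends)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    return norm_cdf(d1) if kind == "call" else norm_cdf(d1) - 1.0


def hedge_order(book: list, spot: float, rate: float,
                spot_position: float, tolerance: float = 0.5) -> float:
    """Spot quantity to buy (+) or sell (-) so the book's net delta is near zero.

    Hypothetical layout: each entry is (kind, quantity, strike, years_to_expiry, vol),
    and a negative quantity means the options were sold.
    """
    option_delta = sum(qty * bs_delta(kind, spot, k, t, rate, vol)
                       for kind, qty, k, t, vol in book)
    net_delta = option_delta + spot_position  # each unit of spot has a delta of 1
    return 0.0 if abs(net_delta) <= tolerance else -net_delta


# A vault that sold 10 at-the-money calls needs to buy about 5.4 units of spot.
book = [("call", -10.0, 100.0, 1.0, 0.2)]
print(hedge_order(book, spot=100.0, rate=0.0, spot_position=0.0))
```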

The use of hybrid compute also enables the creation of complex structured products. These products can rebalance their underlying assets based on intricate signals that would be impractical to process on-chain. For instance, a volatility-harvesting vault can use off-chain compute to select the strike prices for its weekly option sales, aiming to capture as much risk premium as its risk limits allow.
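One simple way such a vault could select strikes is a target-delta rule: among the listed strikes, sell the call whose delta is closest to a chosen target. The sketch below assumes a flat volatility and a hypothetical 0.20 target; a production engine would read per-strike volatilities off the smile and tune the target to its own objective.

```python
import math


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_delta(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes call delta, N(d1)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    return norm_cdf(d1)


def pick_strike(spot: float, strikes: list, t: float, rate: float,
                vol: float, target_delta: float = 0.20) -> float:
    """Return the listed strike whose call delta is closest to a hypothetical target."""
    return min(strikes, key=lambda k: abs(call_delta(spot, k, t, rate, vol) - target_delta))


listed = [1900.0 + 50.0 * i for i in range(11)]  # 1900 to 2400 in steps of 50
strike = pick_strike(2000.0, listed, t=7 / 365, rate=0.0, vol=0.60)
print(strike)
```

A lower target delta moves the strike further out of the money, trading premium for a smaller chance of assignment.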

Evolution

The current state of hybrid computation represents a significant departure from the early days of simple oracles.

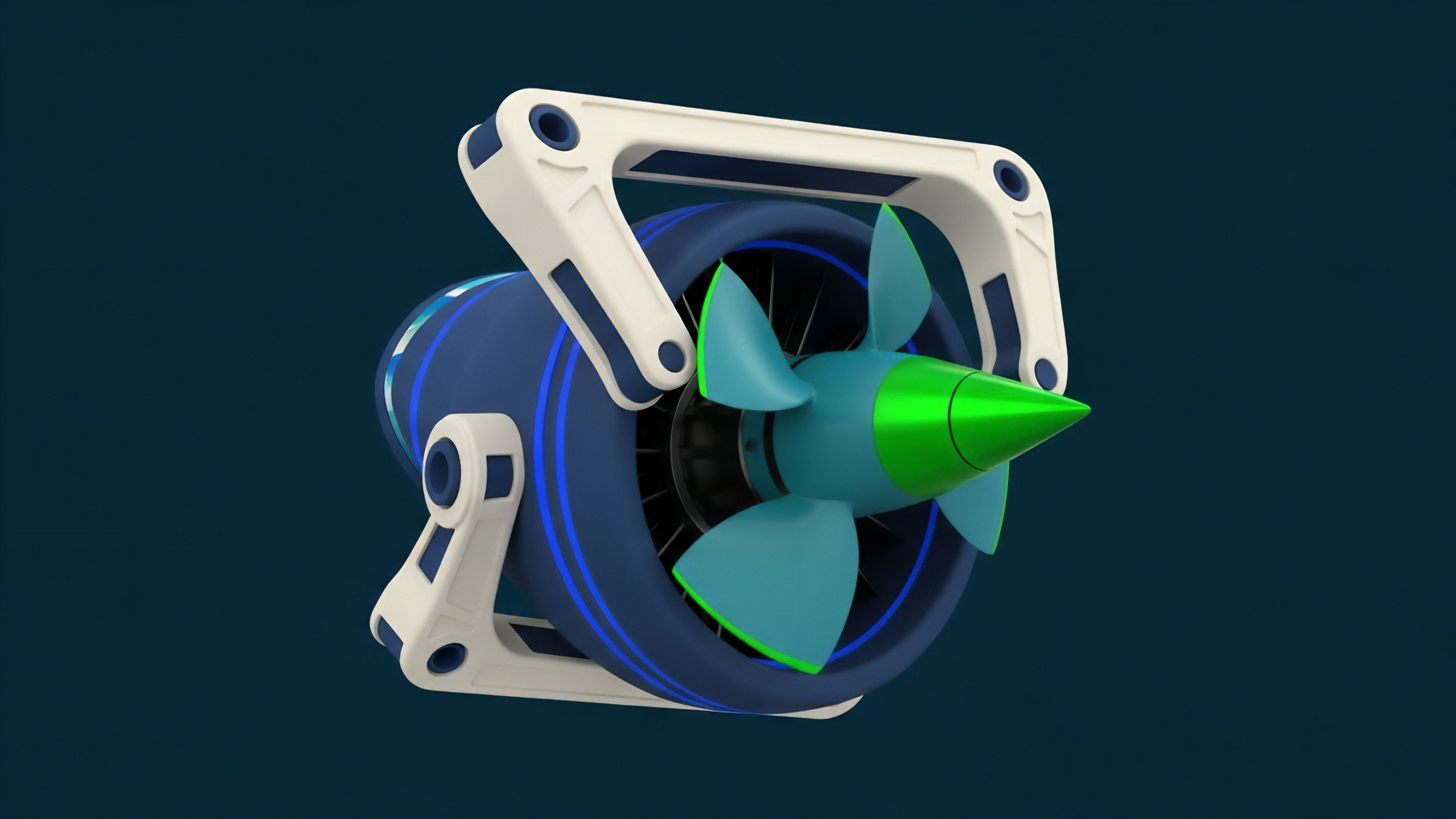

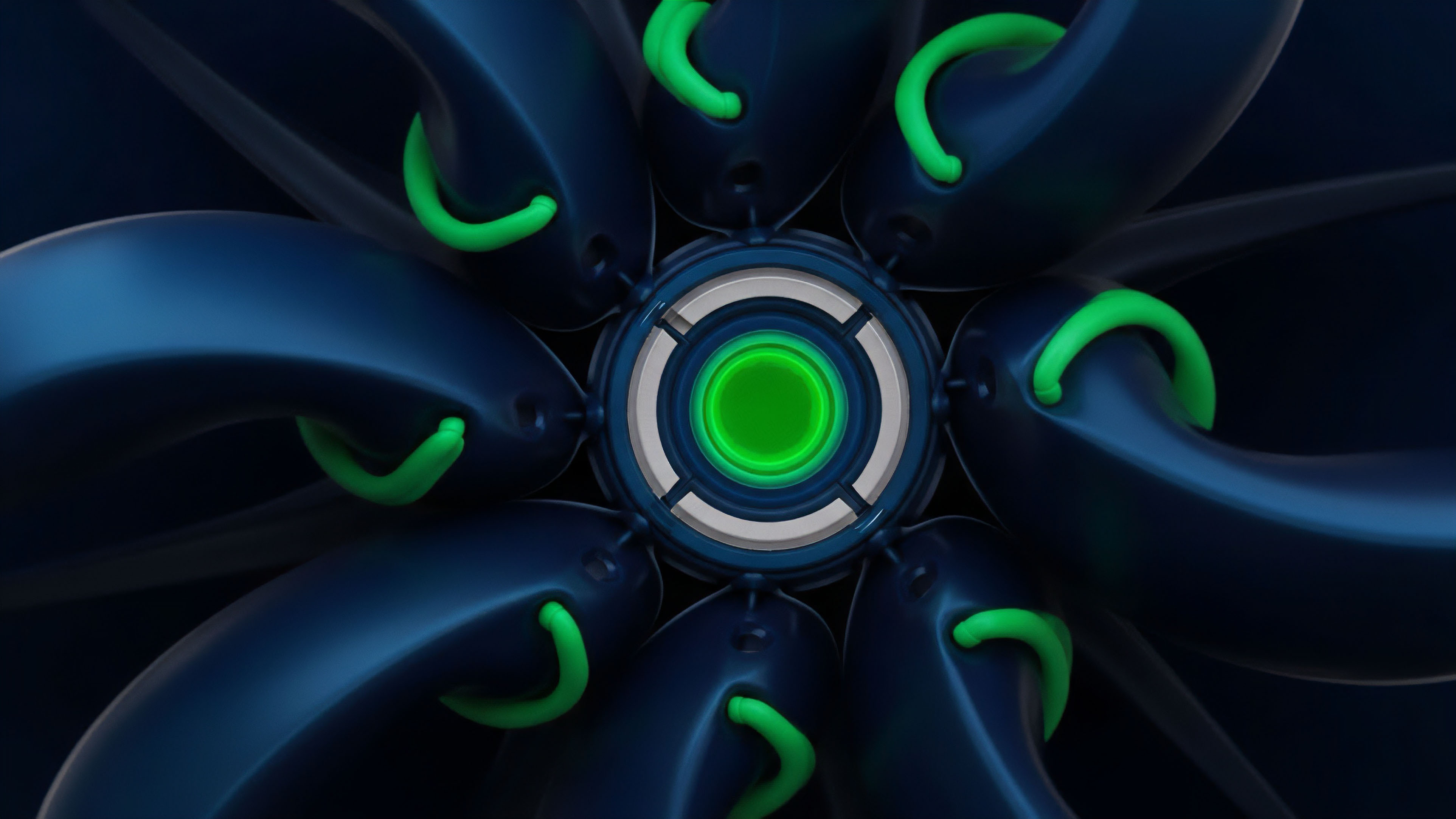

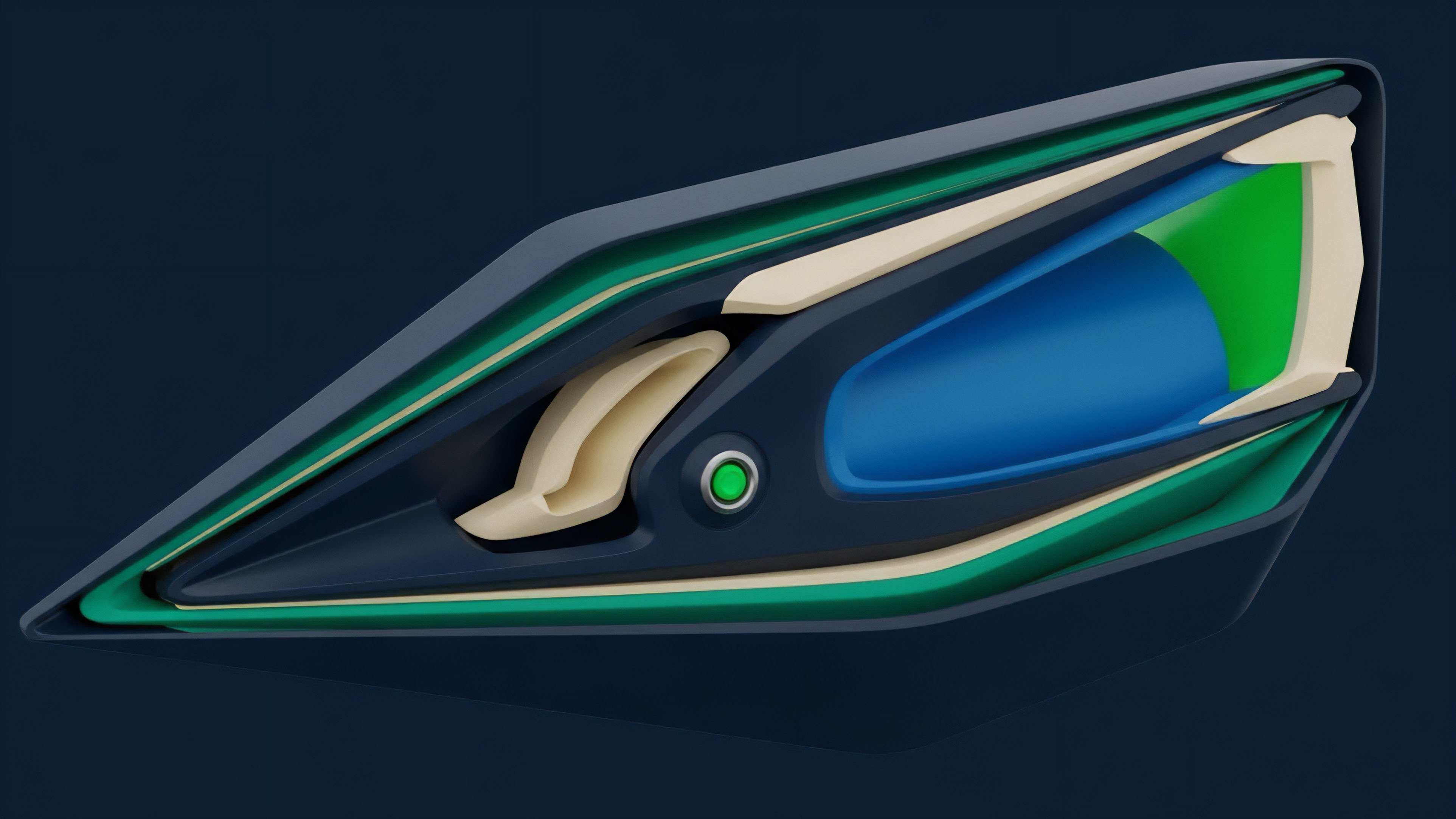

We have moved from a reactive model, where the blockchain waits for external data, to a proactive model, where off-chain agents are an integral part of the protocol’s heartbeat. The rise of modular blockchain stacks has accelerated this trend. By separating data availability, consensus, and execution, developers can now plug in specialized computation layers as needed.

This modularity allows a derivative protocol to use one chain for settlement and a completely different, high-performance network for its order book and risk engine.

The shift toward modularity enables derivative protocols to leverage specialized computation environments without sacrificing the security of established settlement layers.

Strategic shifts in the market have also been driven by the demand for professional-grade trading tools. Institutional participants require features like sub-second order cancellation and complex order types (e.g., iceberg and TWAP orders). Hybrid models currently offer the most practical path to providing these features while keeping assets under the user’s control. The evolution is marked by a relentless pursuit of performance that rivals centralized systems while maintaining the core tenets of the decentralized movement.

Horizon

The future of hybrid computation lies in the seamless integration of artificial intelligence and machine learning into the risk management stack. As ZK-proofs become more efficient, we will see the emergence of “ZK-ML,” where a protocol can prove that a machine learning model was run correctly to determine the risk parameters of a market. This will allow for hyper-adaptive protocols that can anticipate market stress and adjust collateral requirements before a crash occurs.

Another significant development is the move toward decentralized sequencer networks. Currently, many hybrid systems rely on a single computation provider or a small group of providers. The next phase involves decentralizing these providers to ensure that no single entity can censor trades or manipulate the risk engine. This will involve complex game-theoretic incentives to ensure that providers remain honest and performant.

The convergence of these technologies will eventually make the distinction between “on-chain” and “off-chain” invisible to the end user. We are building a global, permissionless financial operating system where the complexity of the math is hidden behind a veil of cryptographic certainty. The ultimate goal is a system that is as fast as a New York server room and as resilient as the Bitcoin network.

How does the inevitable centralization of high-performance hardware for Trusted Execution Environments compromise the censorship resistance of decentralized option clearing houses?

Glossary

Protocol Insolvency Prevention

Global Financial Operating System

Trusted Execution

Oracle Network Evolution

Succinct Non-Interactive Arguments

Data Availability Layers

Cryptographic Certainty

Optimistic Fraud Proofs

Modular Blockchain Stack