Essence

Historical Volatility Estimation is the statistical measurement of an asset’s realized price dispersion over a specified lookback period. It functions as the foundational metric for quantifying past market turbulence, providing a key input for derivative pricing models and risk management frameworks. By calculating the standard deviation of logarithmic returns, usually scaled to an annualized figure, market participants transform raw price action into a standardized numerical value that allows risk to be compared across disparate digital assets.

Historical volatility serves as the objective, backward-looking baseline for assessing asset risk and calibrating derivative pricing models.

This estimation process ignores forward-looking market sentiment, focusing exclusively on the variance already realized in traded prices. In the context of decentralized finance, this metric anchors the assessment of collateral health and liquidation thresholds. Without a rigorous estimate of past realized moves, participants lack the objective grounding required to evaluate whether current market premiums adequately compensate for the instability of the underlying asset.

Origin

The mathematical lineage of Historical Volatility Estimation derives from classical finance theory, specifically the application of Brownian motion to price dynamics.

Early quantitative researchers modeled logarithmic returns over short intervals as approximately normally distributed, which allowed variance to serve as a proxy for risk. Carrying these models into digital asset markets required adjustments for the continuous, 24/7 trading environment and for the heightened impact of extreme, non-Gaussian tail events.

- Logarithmic Returns: Converting raw price data into log returns yields a series that is additive across time and typically much closer to stationary than raw prices, allowing for consistent statistical comparison over varying timeframes.

- Standard Deviation: This core calculation quantifies the dispersion of returns from the mean, providing the raw unit for volatility assessment.

- Lookback Windows: The selection of the temporal window, such as 30-day or 90-day periods, dictates the sensitivity of the estimation to recent versus long-term market regimes.
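
The three ingredients above (log returns, standard deviation, lookback window) can be combined in a minimal sketch. The function names and the default annualization factor of 365 periods per year are illustrative assumptions for a market that trades every day. They are not taken from any particular library or protocol.

```python
import math

def log_returns(prices):
    """Convert a price series into logarithmic returns."""
    return [math.log(b / a) for a, b in zip(prices, prices[1:])]

def close_to_close_vol(prices, window, periods_per_year=365):
    """Annualized sample standard deviation of the last `window` log returns.

    `periods_per_year` is an assumption: 365 suits daily bars on a market
    that never closes; use a different factor for other bar sizes.
    """
    rets = log_returns(prices)[-window:]
    if len(rets) < 2:
        raise ValueError("need at least two returns inside the window")
    mean = sum(rets) / len(rets)
    # Sample variance (divide by n - 1) of returns around their mean.
    var = sum((r - mean) ** 2 for r in rets) / (len(rets) - 1)
    return math.sqrt(var * periods_per_year)
```

Calling `close_to_close_vol(prices, window=30)` and `close_to_close_vol(prices, window=90)` on the same series illustrates the sensitivity trade-off: the shorter window reacts faster to a new regime but is noisier.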

Early implementations relied on simple moving averages, but the inherent fragmentation of crypto liquidity pushed developers toward more sophisticated estimators. These initial methods sought to replicate the stability of traditional equity markets, yet they consistently struggled with the rapid, regime-shifting nature of blockchain-native assets. The evolution of these models reflects a move away from static, uniform time-weighting toward dynamic, responsive frameworks that prioritize recent price discovery.

Theory

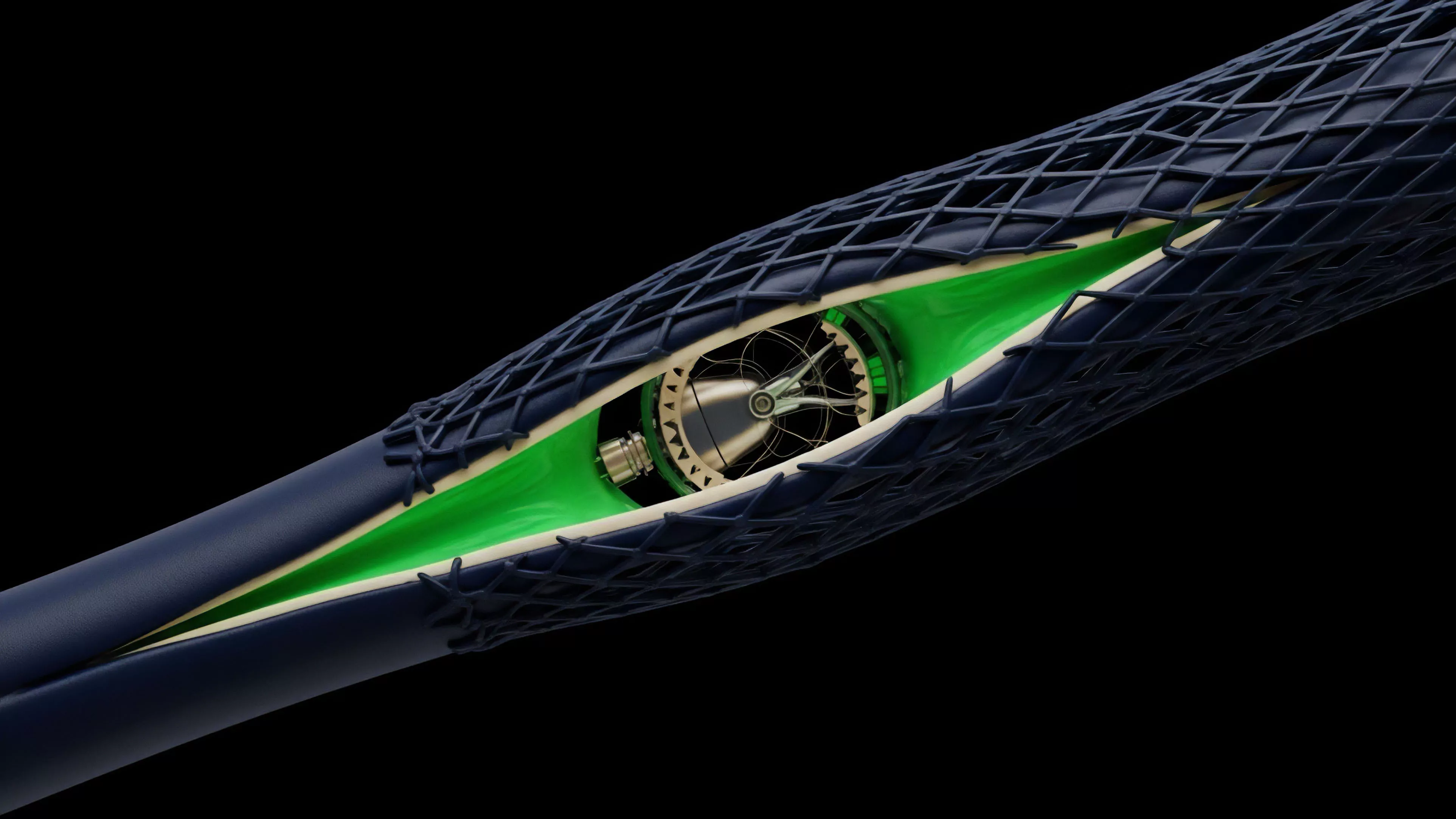

The architecture of Historical Volatility Estimation relies on the rigorous application of statistical estimators to price data, ranging from high-frequency ticks to open-high-low-close (OHLC) bars.

A widely used range-based approach, the Garman-Klass estimator, improves precision by incorporating open, high, low, and closing prices, extracting more information from each interval than simple close-to-close calculations. This methodology acknowledges that intra-day range dynamics provide significant information about market stress that a single daily closing price ignores.

| Estimator Type | Data Inputs | Primary Utility |
| --- | --- | --- |
| Close-to-Close | Closing Prices Only | Simplicity and Historical Consistency |
| Garman-Klass | Open, High, Low, Close | Improved Efficiency When Drift Is Negligible |
| Parkinson | High, Low | Maximizing Range-Based Volatility Information |
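
The two range-based estimators in the table can be sketched as follows, using their standard textbook forms. Both assume negligible drift within each bar. The `(open, high, low, close)` tuple layout, the function names, and the 365-bar annualization default are illustrative assumptions.

```python
import math

def parkinson_var(bars):
    """Per-bar variance from high/low ranges only.

    bars: list of (open, high, low, close) tuples (assumed layout).
    """
    n = len(bars)
    return sum(math.log(h / l) ** 2 for _, h, l, _ in bars) / (4 * n * math.log(2))

def garman_klass_var(bars):
    """Per-bar variance combining the high/low range with the open-to-close move."""
    n = len(bars)
    k = 2 * math.log(2) - 1  # weight on the squared open-to-close log return
    total = sum(
        0.5 * math.log(h / l) ** 2 - k * math.log(c / o) ** 2
        for o, h, l, c in bars
    )
    return total / n

def annualize(var_per_bar, bars_per_year=365):
    """Scale a per-bar variance to an annualized volatility (assumes daily bars)."""
    return math.sqrt(var_per_bar * bars_per_year)
```

For example, `annualize(garman_klass_var(bars))` gives an annualized figure comparable to a close-to-close estimate computed on the same daily bars.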

The mathematical rigor here is non-negotiable. If the estimation fails to account for the microstructure of the exchange, the resulting volatility value becomes disconnected from the actual risk of liquidation.

Robust volatility estimation requires integrating high-frequency range data to capture intra-day liquidity shocks that closing prices obscure.

One might consider how the physics of a system, like the thermal noise in an electrical circuit, mirrors the erratic, unpredictable nature of order flow in a decentralized exchange. Just as engineers must filter signal from noise to maintain system integrity, quantitative analysts must distinguish between genuine regime shifts and transient, noise-driven price spikes. The integrity of the entire derivative pricing chain rests upon this filtering process.

Approach

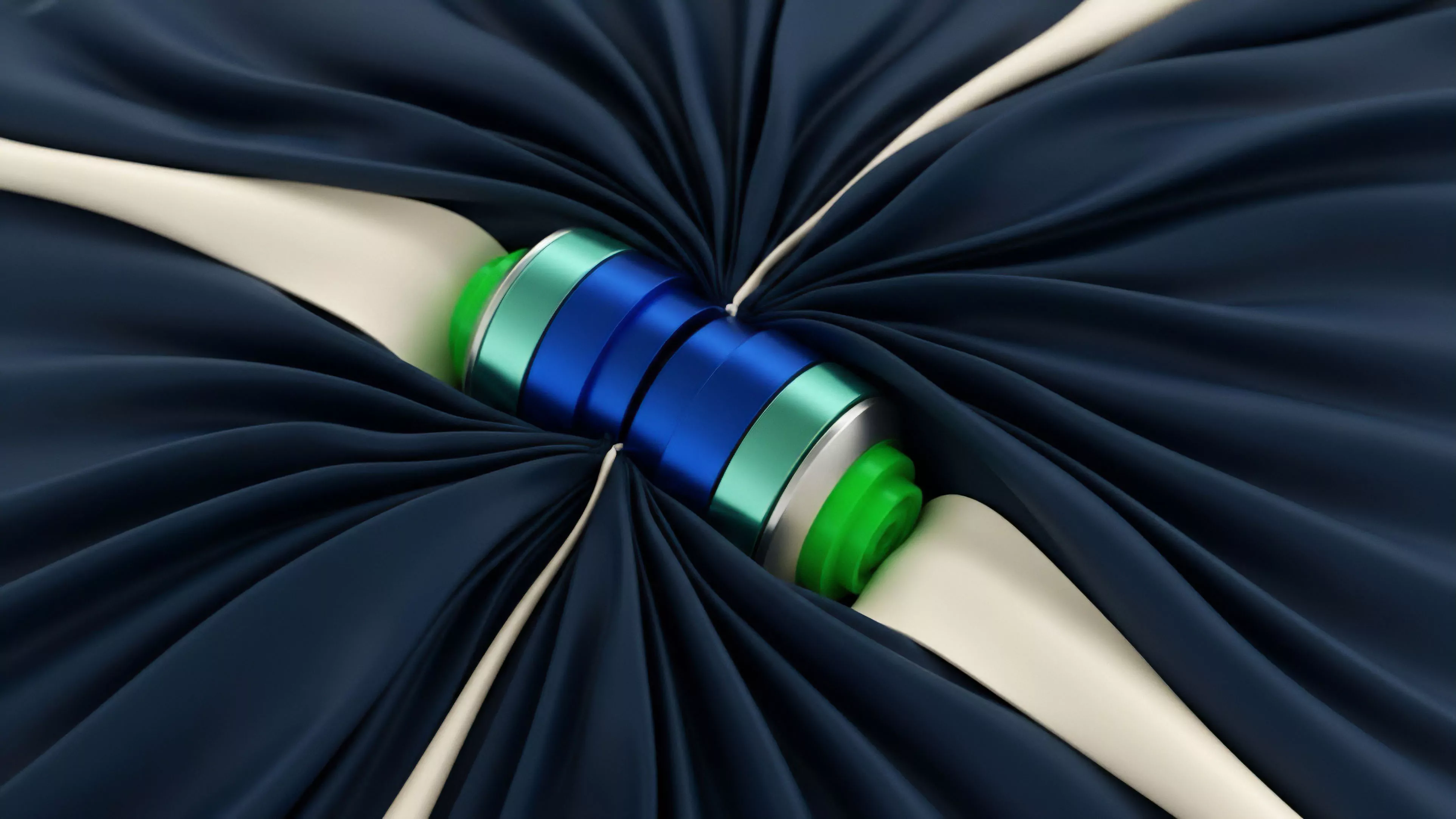

Current practices in Historical Volatility Estimation prioritize the integration of real-time on-chain data and off-chain exchange feed aggregation.

Market makers and protocol architects now employ exponentially weighted moving averages, which assign higher significance to recent price action. This adjustment acknowledges that the most recent liquidity events hold greater predictive weight for immediate risk management than data from weeks prior.

- Data Sanitization: Filtering out erroneous or unrepresentative price prints, such as those produced by flash crashes or liquidity gaps on a single venue, is a prerequisite for accurate volatility calculation.

- Time-Weighting: Applying decay factors to older data points allows the model to adapt quickly to sudden, structural changes in market volatility.

- Frequency Selection: Determining whether to use minute-by-minute or hourly snapshots significantly impacts the responsiveness of the risk engine.

This approach demands a constant, adversarial stance toward the data. Because decentralized protocols are subject to manipulation, the estimation framework must include checks against outlier-driven distortions. The goal is to produce a volatility value that is both responsive to sudden changes and resilient against individual, large-size orders that could otherwise bias the entire risk assessment model.
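
One way to combine time-weighting with an outlier check is an exponentially weighted variance in which each return is capped before it enters the update. The sketch below is a simplified illustration and is not taken from any production risk engine. The decay factor of 0.94, the cap of four standard deviations, the seeding rule, and the 365-period annualization are all assumed values chosen for readability.

```python
import math

def ewma_vol(prices, decay=0.94, clip_sigmas=4.0, periods_per_year=365):
    """Annualized EWMA volatility with returns capped at a multiple of current sigma.

    All default parameters are illustrative assumptions, not recommendations.
    """
    rets = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    if not rets:
        raise ValueError("need at least two prices")
    var = rets[0] ** 2  # simple seed: the first squared return
    for r in rets[1:]:
        limit = clip_sigmas * math.sqrt(var)
        if limit > 0:
            # Cap the return so a single print cannot dominate the estimate.
            r = max(-limit, min(limit, r))
        # Older observations decay geometrically; the newest gets weight 1 - decay.
        var = decay * var + (1 - decay) * r * r
    return math.sqrt(var * periods_per_year)
```

A lower `decay` makes the estimate more responsive, while a tighter `clip_sigmas` makes it more resistant to isolated spikes. A genuine regime shift still feeds through over several observations, because the cap widens as the variance estimate grows.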

Evolution

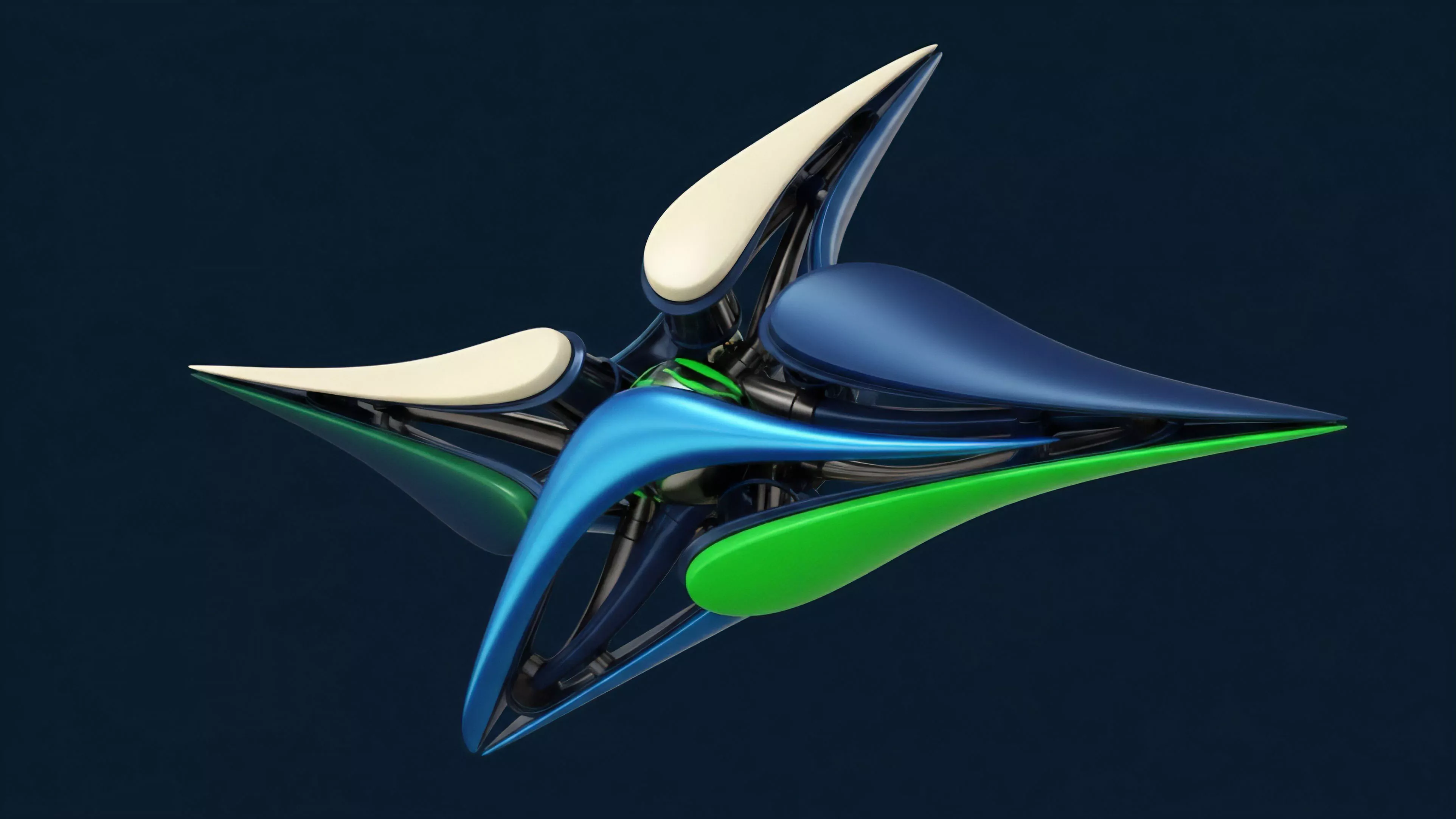

The trajectory of Historical Volatility Estimation has moved from rudimentary, static models to highly adaptive, multi-source frameworks.

Early systems relied on centralized exchange feeds, which were often prone to latency and data gaps. The emergence of decentralized oracles and direct, on-chain order flow analysis has shifted the focus toward creating a single, reliable source of truth for volatility metrics.

Modern volatility frameworks must transition from static lookback windows to dynamic, regime-aware models that detect structural market shifts.

This shift has forced a rethink of how risk parameters are set. Protocols no longer rely on fixed volatility inputs; instead, they incorporate feedback loops where realized volatility directly informs collateral requirements. This creates a self-regulating system that tightens requirements during periods of high instability and relaxes them when markets demonstrate consistent behavior.
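
The feedback loop described above can be illustrated with a hypothetical mapping from realized volatility to a required collateral ratio. The linear form, the function name, and every parameter value below are assumptions made for illustration. Actual protocols define their own risk curves.

```python
def collateral_ratio(annual_vol, floor=1.10, ceiling=2.00, sensitivity=0.50):
    """Map annualized realized volatility to a required collateral ratio.

    Hypothetical linear rule: start at `floor`, add `sensitivity` per unit
    of volatility, and never exceed `ceiling`.
    """
    if annual_vol < 0:
        raise ValueError("volatility cannot be negative")
    return min(ceiling, max(floor, floor + sensitivity * annual_vol))
```

Under these assumed parameters, a calm market with 20% annualized volatility would require roughly 120% collateral, while the requirement saturates at the 200% ceiling once volatility exceeds 180%.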

The transition marks a movement toward autonomous, code-based risk management that minimizes the need for human governance intervention during high-stress events.

Horizon

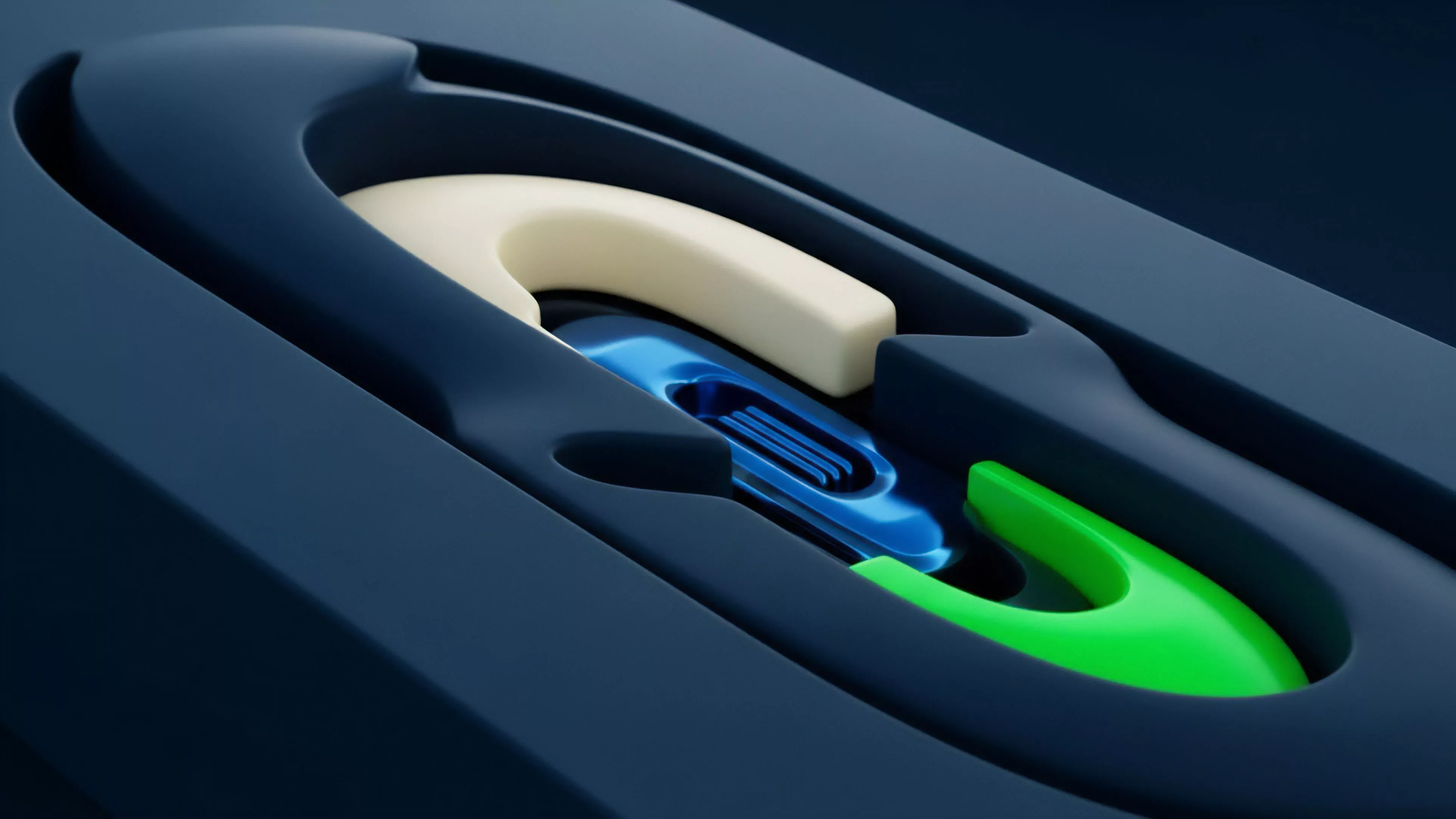

Future developments in Historical Volatility Estimation will center on the synthesis of cross-protocol data and the application of machine learning to detect latent risk patterns. As decentralized derivatives markets mature, the ability to predict volatility regimes based on underlying tokenomics and network activity will become the defining edge for sophisticated participants. The integration of order flow toxicity metrics into volatility estimation will provide a more granular understanding of why price movement occurs, not just the magnitude of the movement itself.

| Future Focus | Technological Driver | Systemic Impact |
| --- | --- | --- |
| Predictive Regimes | Machine Learning Models | Proactive Risk Mitigation |
| Cross-Protocol Analysis | Interoperable Data Oracles | Unified Liquidity Risk Management |
| Toxicity Filtering | Real-time Order Flow | Reduced False-Positive Liquidations |

The next stage of development will likely involve the creation of decentralized, community-audited volatility indices. These indices could serve as a standardized benchmark for derivative contracts, reducing the fragmentation that currently hampers cross-platform liquidity. By aligning the estimation process with the transparent, immutable nature of blockchain technology, the industry could reach a level of systemic robustness that rivals or surpasses traditional financial infrastructure.