Essence

Financial Time Series Analysis in crypto derivatives represents the systematic decomposition of price, volume, and order flow data to map the underlying stochastic processes governing digital asset markets. It functions as the primary diagnostic tool for identifying the statistical signatures of market participants, ranging from high-frequency arbitrageurs to long-term liquidity providers. By isolating deterministic patterns from white noise, practitioners gain visibility into the latent variables that dictate option pricing, volatility surfaces, and tail-risk exposure.

Financial Time Series Analysis serves as the analytical bridge between raw exchange data and the probabilistic modeling of future market states.

The field centers on the observation that crypto markets, while lacking the traditional closing bell of equity exchanges, exhibit unique periodicities driven by protocol consensus cycles, funding rate adjustments, and decentralized governance events. Understanding these temporal dependencies is the foundation for constructing robust hedging strategies and managing the non-linear risks inherent in derivative positions.

Origin

The lineage of this discipline traces back to the application of classical econometrics (specifically ARCH and GARCH models) to traditional asset classes, adapted rapidly for the 24/7 nature of blockchain liquidity. Early practitioners faced the immediate challenge of high-frequency noise and the absence of standardized settlement windows, which forced a transition toward non-parametric estimation techniques.

- Stochastic Calculus provided the foundational framework for pricing contingent claims in environments characterized by discontinuous price jumps.

- Signal Processing techniques migrated from engineering into finance to filter high-frequency volatility from the structural trend.

- Limit Order Book reconstruction allowed researchers to move beyond trade-only data, incorporating the depth and intention of market participants into time series models.

This evolution occurred in tandem with the rise of decentralized exchanges, where the transparency of the mempool introduced a new dimension of pre-trade data. The shift from centralized black-box venues to open-ledger settlement created a laboratory for observing market microstructure in real time, shifting the starting point of modern analysis from retroactive reporting to proactive, stream-based observation.

Theory

Mathematical rigor defines the validity of time series models within the derivative space. The central challenge lies in the non-stationarity of crypto assets, where variance and mean reversion properties shift under the influence of leverage cascades and macro-economic shocks.

Volatility Dynamics

Option pricing relies heavily on the estimation of local volatility, which is rarely constant. Analysts use the spread between Implied Volatility and Realized Volatility to identify volatility arbitrage opportunities. The breakdown of standard Black-Scholes assumptions in crypto necessitates the use of jump-diffusion models to account for the frequent, severe liquidity gaps observed during market deleveraging events.
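The two ideas in this paragraph can be sketched in a few lines. The example below is a minimal illustration, not a production estimator: it computes annualized realized volatility from a price series and simulates a Merton-style jump-diffusion path (without the jump compensator in the drift, for brevity). All parameter values, the hourly sampling, and the quoted `implied_vol` are hypothetical.

```python
import numpy as np

def realized_volatility(prices, periods_per_year):
    """Annualized realized volatility from a series of prices."""
    log_returns = np.diff(np.log(np.asarray(prices, dtype=float)))
    # Mean squared log return, scaled to one year.
    realized_var = np.sum(log_returns ** 2) * periods_per_year / len(log_returns)
    return np.sqrt(realized_var)

def simulate_jump_diffusion(s0, mu, sigma, jump_rate, jump_mean, jump_std,
                            n_steps, dt, seed=0):
    """Simulate one jump-diffusion price path with n_steps + 1 points."""
    rng = np.random.default_rng(seed)
    # Diffusive part of each log return.
    diffusion = ((mu - 0.5 * sigma ** 2) * dt
                 + sigma * np.sqrt(dt) * rng.standard_normal(n_steps))
    # Jump count per step is Poisson; the sum of k normal jumps is N(k*m, k*s^2).
    n_jumps = rng.poisson(jump_rate * dt, size=n_steps)
    jumps = (n_jumps * jump_mean
             + np.sqrt(n_jumps) * jump_std * rng.standard_normal(n_steps))
    log_path = np.concatenate(([0.0], np.cumsum(diffusion + jumps)))
    return s0 * np.exp(log_path)

# Hypothetical inputs: one year of hourly steps, 50 jumps per year on average.
path = simulate_jump_diffusion(s0=100.0, mu=0.0, sigma=0.8, jump_rate=50.0,
                               jump_mean=-0.05, jump_std=0.1,
                               n_steps=8760, dt=1 / 8760)
rv = realized_volatility(path, periods_per_year=8760)
implied_vol = 0.90             # hypothetical quoted implied volatility
vol_spread = implied_vol - rv  # positive: options are rich relative to realized
```

Because the jumps add variance on top of the diffusive `sigma`, the realized figure will generally sit above 0.8 here, which is the effect a pure Black-Scholes calibration would miss.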

The integrity of derivative pricing models depends on the accurate estimation of higher-order moments within the time series distribution.

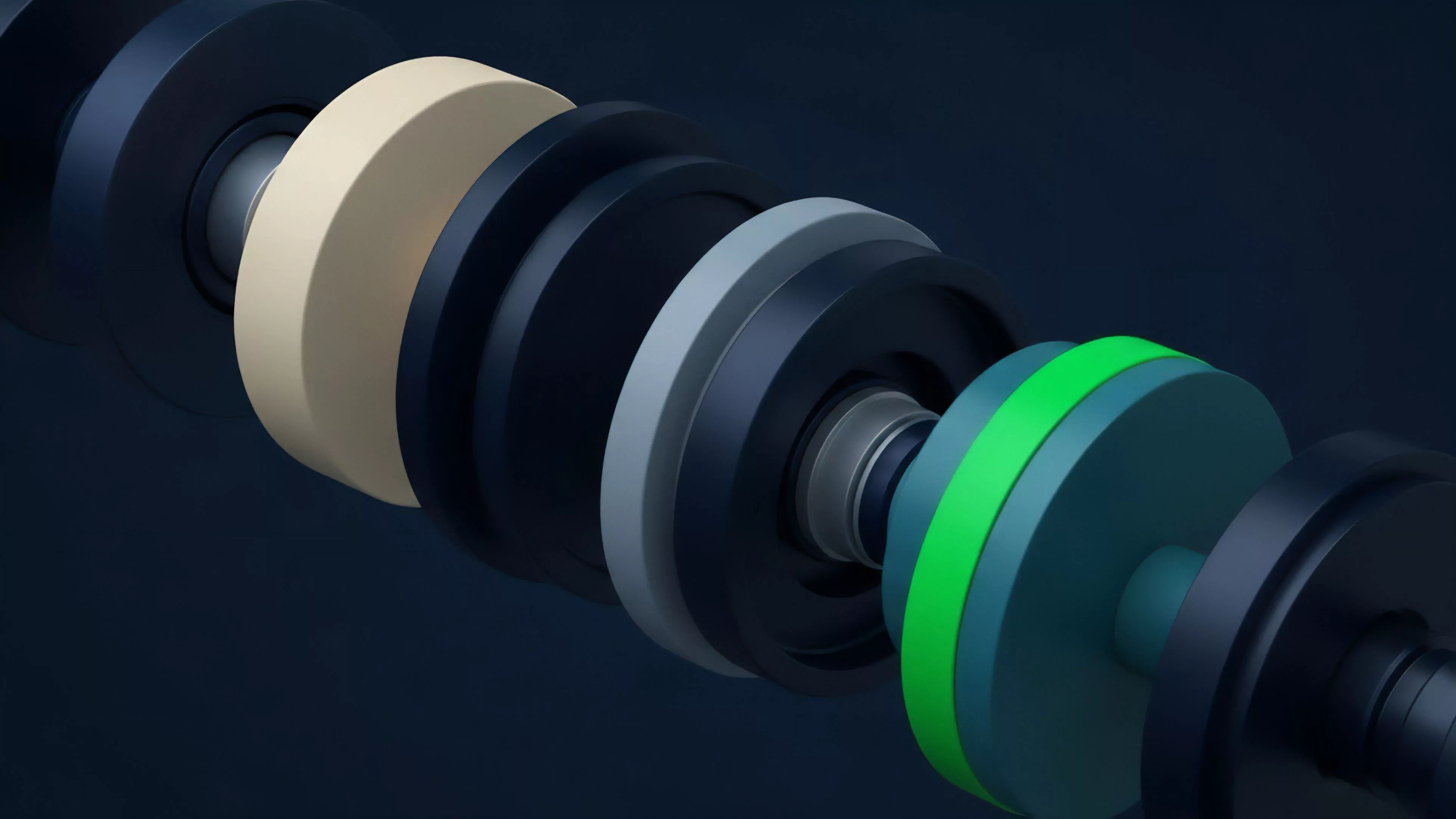

Structural Components

| Component | Analytical Significance |
| --- | --- |
| Autocorrelation | Measures the persistence of price movements over defined temporal intervals. |
| Heteroskedasticity | Quantifies the clustering of volatility, critical for margin engine calibration. |
| Cointegration | Identifies long-term equilibrium relationships between correlated digital assets. |
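As a rough sketch of how these three components might be measured, the snippet below computes a sample autocorrelation (applied to returns for persistence, and to squared returns as a simple check for volatility clustering) and the residual spread from an ordinary least squares regression, which is the first step of an Engle-Granger style cointegration check. The function names are illustrative, and a full cointegration test would still need a unit-root test on the residuals.

```python
import numpy as np

def autocorrelation(x, lag):
    """Sample autocorrelation of x at a positive integer lag."""
    x = np.asarray(x, dtype=float)
    centered = x - x.mean()
    return np.sum(centered[lag:] * centered[:-lag]) / np.sum(centered ** 2)

def ols_spread(y, x):
    """Regress y on x with an intercept; return (residuals, slope)."""
    y = np.asarray(y, dtype=float)
    x = np.asarray(x, dtype=float)
    design = np.column_stack([np.ones_like(x), x])
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    return y - design @ beta, beta[1]

# Synthetic returns, used only to show the calls.
rng = np.random.default_rng(1)
returns = 0.01 * rng.standard_normal(500)

persistence = autocorrelation(returns, lag=1)      # autocorrelation of returns
clustering = autocorrelation(returns ** 2, lag=1)  # heteroskedasticity proxy
```

For two log-price series, a spread from `ols_spread` that reverts to a stable mean suggests cointegration; `statsmodels.tsa.stattools.coint` provides a formal test if that library is available.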

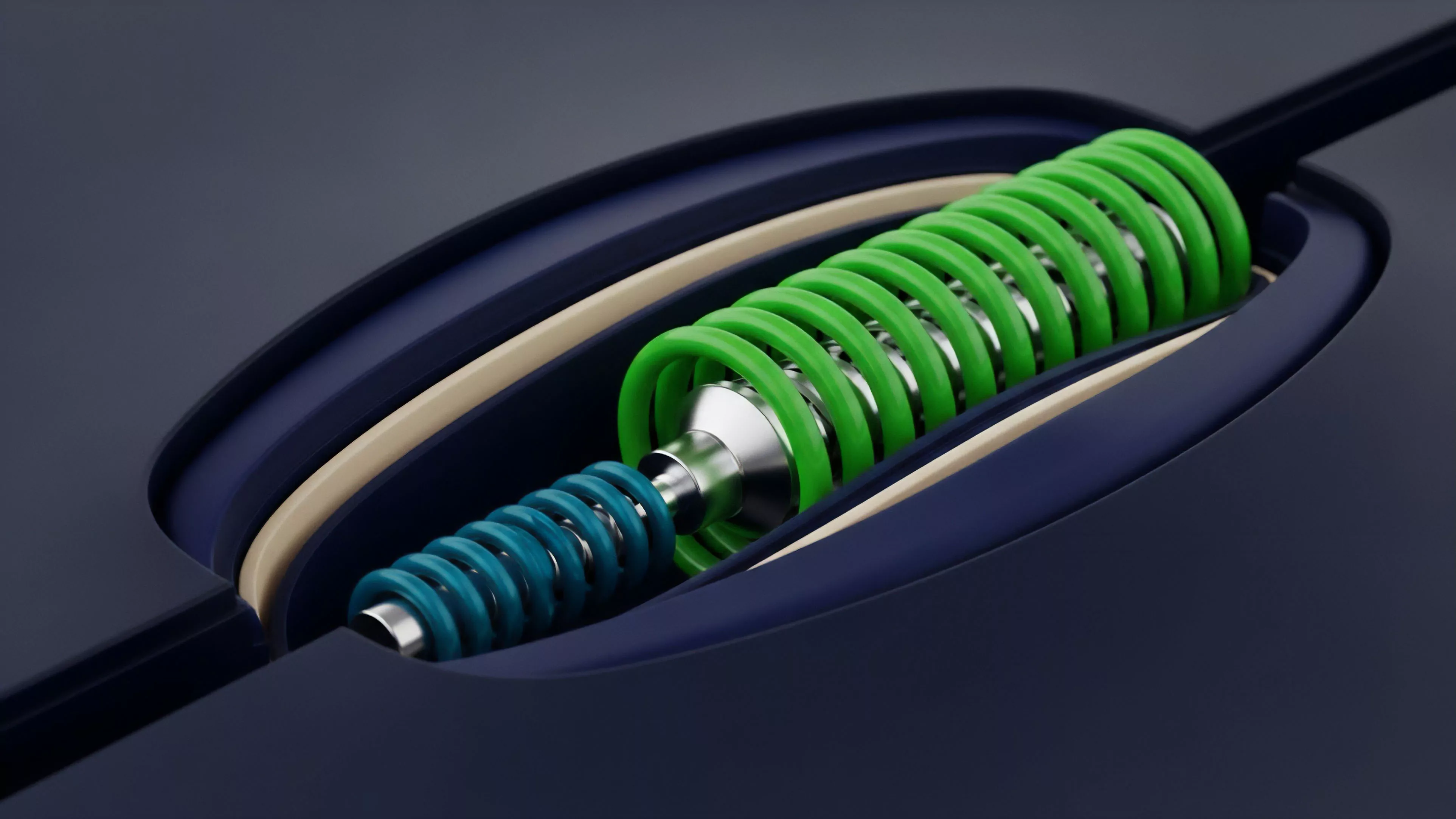

The analysis must account for the Feedback Loop created by liquidation engines. When price drops trigger automated liquidations, the resulting sell pressure alters the time series distribution, creating a recursive dependency between the model output and the market reality it attempts to predict. A pricing model that ignores this loop may look elegant, but it is dangerous in practice.
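A toy model makes the recursion concrete. The sketch below assumes a book of leveraged long positions, each with a liquidation price and a size, and a linear price impact per liquidated unit. Both the position list and the impact coefficient are hypothetical, and real liquidation engines are considerably more involved.

```python
def liquidation_cascade(price, shock, positions, impact_per_unit):
    """
    Apply an initial price shock, then liquidate longs in sequence.

    positions: list of (liquidation_price, size) tuples for leveraged longs.
    A long is liquidated once price <= liquidation_price, and every liquidated
    unit pushes the price down by impact_per_unit (a linear-impact assumption).
    Returns (final_price, total_liquidated_size).
    """
    price = price - shock
    # Process the positions closest to the current price first.
    remaining = sorted(positions, key=lambda p: p[0], reverse=True)
    liquidated = 0.0
    while remaining and price <= remaining[0][0]:
        _, size = remaining.pop(0)
        liquidated += size
        price -= size * impact_per_unit  # forced selling moves the price again
    return price, liquidated

book = [(96.0, 10.0), (94.0, 10.0), (80.0, 10.0)]
small = liquidation_cascade(100.0, 3.0, book, impact_per_unit=0.2)
large = liquidation_cascade(100.0, 5.0, book, impact_per_unit=0.2)
```

In this setup a shock of 3 leaves every position intact, while a shock of 5 crosses the first liquidation level and the forced selling carries the price through the second one as well. The response to the shock is therefore non-linear, which is the property a model fitted only to calm-period data will not capture.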

A useful analogy is celestial mechanics: systemic leverage, like gravity, bends the trajectory of assets in ways that defy simple linear extrapolation. In practical terms, the time series model must include exogenous variables such as exchange-specific funding rates to capture the full spectrum of risk.

Approach

Modern practitioners prioritize Order Flow Analysis over simple price action. By tracking the migration of liquidity across different strikes and expiries, analysts construct a dynamic view of market sentiment.

This involves processing raw websocket data to reconstruct the state of the order book at microsecond intervals, identifying institutional positioning before it manifests in price.

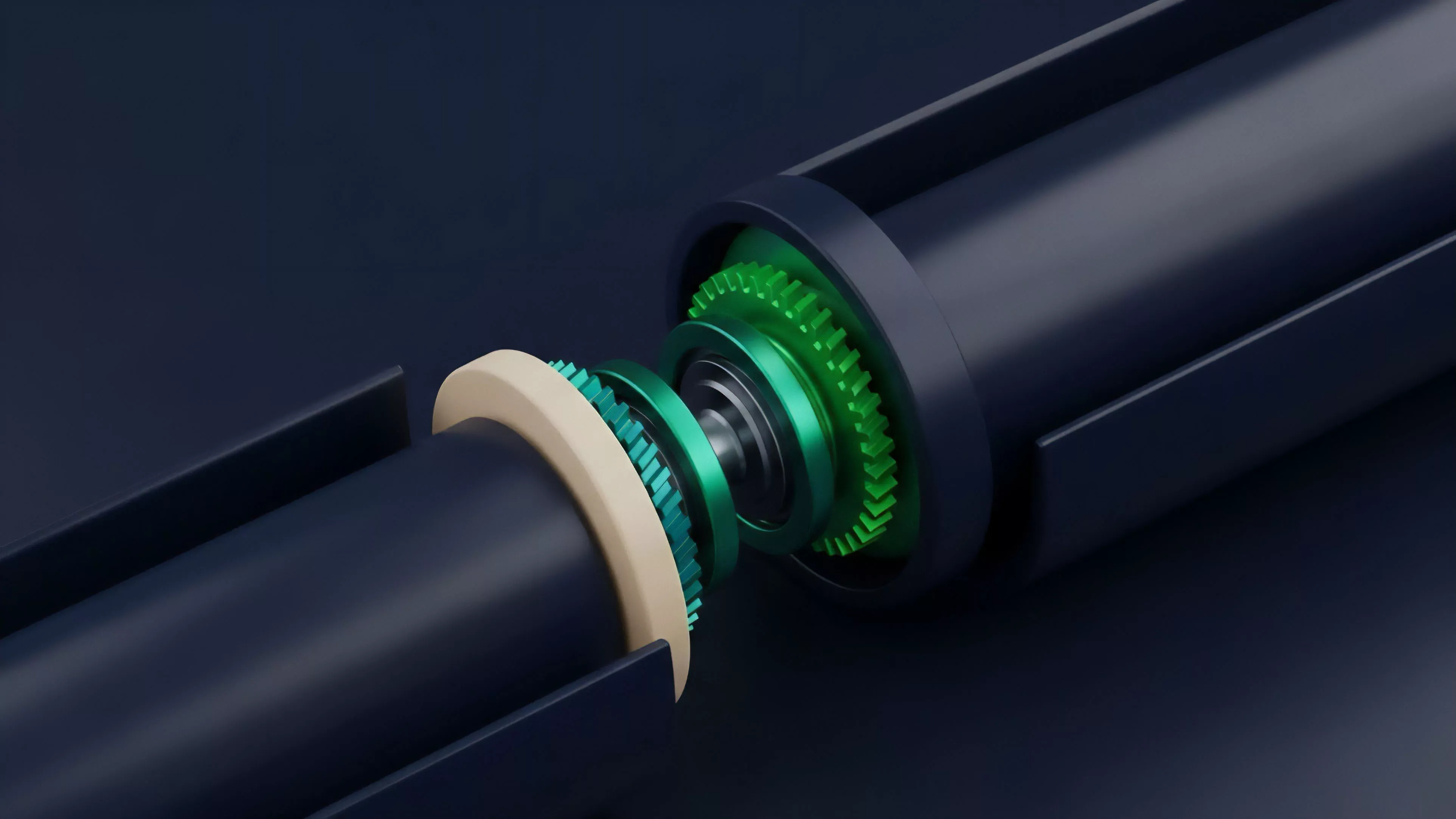

- Feature Engineering involves transforming raw tick data into meaningful inputs like trade intensity, bid-ask spread variance, and order imbalance ratios.

- Model Validation requires rigorous backtesting against historical liquidity crises to ensure the strategy survives extreme tail-risk events.

- Real-time Inference allows for the automated adjustment of delta-hedging parameters based on the current volatility regime.
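The feature engineering step above can be illustrated with three small functions, one for each input named in the list. This is a sketch under simple assumptions: top-of-book snapshots arrive as arrays of best bid and ask prices and sizes, and trade timestamps are in seconds. The function names and input layout are illustrative and not tied to any exchange API.

```python
import numpy as np

def order_imbalance(bid_size, ask_size):
    """(bid - ask) / (bid + ask), in [-1, 1]; positive means more resting bids."""
    bid_size = np.asarray(bid_size, dtype=float)
    ask_size = np.asarray(ask_size, dtype=float)
    return (bid_size - ask_size) / (bid_size + ask_size)

def spread_variance(best_bid, best_ask):
    """Population variance of the quoted bid-ask spread over the sample."""
    spread = np.asarray(best_ask, dtype=float) - np.asarray(best_bid, dtype=float)
    return spread.var()

def trade_intensity(trade_times, window_start, window_end):
    """Trades per second within the half-open window [window_start, window_end)."""
    t = np.asarray(trade_times, dtype=float)
    count = np.count_nonzero((t >= window_start) & (t < window_end))
    return count / (window_end - window_start)

# Hypothetical snapshots and trade timestamps.
imbalance = order_imbalance([3.0, 1.0], [1.0, 1.0])
spread_var = spread_variance([99.0, 99.0], [100.0, 101.0])
intensity = trade_intensity([0.1, 0.5, 1.2, 2.5], 0.0, 2.0)
```

In a live system these would be computed over rolling windows and fed to the validation and inference stages described in the other two bullets.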

Data-driven strategies succeed only when the model accounts for the adversarial nature of liquidity provision in permissionless protocols.

The focus remains on the Delta, Gamma, and Vega sensitivities. A precise approach requires continuous monitoring of the skew, as market participants often overpay for downside protection during periods of systemic uncertainty, creating a mispricing that informed strategies exploit through systematic volatility selling or protective put construction.
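For reference, the three sensitivities can be computed in closed form under Black-Scholes. The sketch below uses that model only as a baseline, since its assumptions are known to fit crypto markets poorly; the inputs are hypothetical, and evaluating the function with a different implied volatility at each strike is one simple way to see how skew changes the hedge.

```python
import math

def _norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def _norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def bs_call_greeks(spot, strike, vol, t, rate=0.0):
    """Black-Scholes delta, gamma, and vega of a European call (no dividends)."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    delta = _norm_cdf(d1)                         # sensitivity to spot
    gamma = _norm_pdf(d1) / (spot * vol * sqrt_t) # sensitivity of delta to spot
    vega = spot * _norm_pdf(d1) * sqrt_t          # sensitivity to volatility
    return delta, gamma, vega

# Hypothetical at-the-money call: one year to expiry, 20% volatility.
delta, gamma, vega = bs_call_greeks(spot=100.0, strike=100.0, vol=0.2, t=1.0)
```

Gamma and vega are identical for a put with the same strike and expiry, while the put delta is the call delta minus one when the rate and dividends are zero.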

Evolution

The transition from simple trend-following indicators to complex Machine Learning architectures marks the current state of the field. Early iterations relied on moving averages, which proved inadequate against the non-linear volatility of crypto.

Today, deep learning models analyze multi-dimensional data sets, including on-chain transaction volumes and social sentiment, to predict short-term shifts in derivative demand. The industry has moved toward Protocol-Native Analysis, where the consensus mechanism itself becomes a data point. For instance, the timing of validator reward distributions or governance proposal deadlines now impacts liquidity provision and derivative premiums.

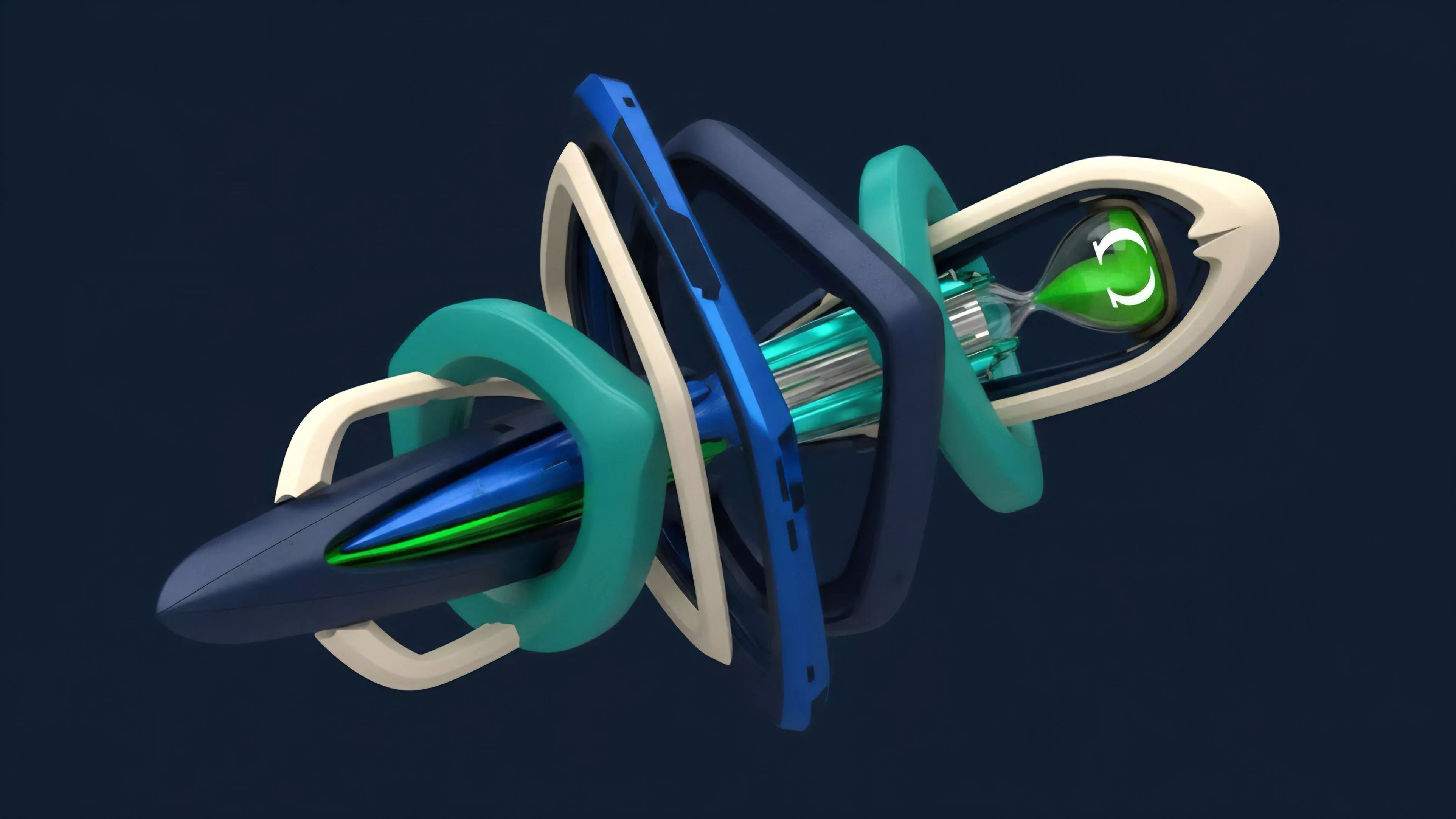

This integration of protocol physics with financial modeling is the defining characteristic of the current era.

| Stage | Primary Driver | Analytical Focus |
| --- | --- | --- |
| Foundational | Exchange Data | Price and Volume |
| Intermediate | Order Flow | Book Depth and Imbalance |
| Advanced | Protocol State | On-chain Latency and Consensus |

Horizon

The future of Financial Time Series Analysis lies in the convergence of decentralized identity, privacy-preserving computation, and autonomous market makers. As protocols evolve, the ability to analyze private order flow through zero-knowledge proofs will redefine the edge available to market participants. Strategic focus will shift toward Systemic Risk Mapping, where models treat the entire decentralized financial stack as a single, interconnected graph. The ability to predict contagion pathways between lending protocols and derivative exchanges will become the most valuable skill for capital preservation. The goal is no longer just profit, but the construction of resilient architectures capable of weathering the inevitable cycles of extreme leverage and subsequent deleveraging.