Essence

Financial Data Preprocessing constitutes the structural transformation of raw, asynchronous blockchain event streams into deterministic, time-indexed formats suitable for quantitative analysis. This layer is the primary filter between the noisy, high-frequency data of decentralized exchange order books and the precision that option pricing models require.

Without this rigorous normalization, the erratic price movements and irregular latency inherent in distributed ledgers make derivative valuation models inaccurate. Systems architects treat this process as building a clean state, in which disparate data points (on-chain settlement events, off-chain order matching, and oracle updates) are aligned into a single, consistent, tradable history.
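The alignment step can be sketched in Python. This minimal sketch assumes every input is an on-chain log that carries a block number and a log index; off-chain order matches would need their own sequencing key. The `ChainEvent` type, its fields, and the `kind` labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChainEvent:
    # Hypothetical event record; field names are illustrative, not a real schema.
    block_number: int
    log_index: int        # position of the log within its block
    block_timestamp: int  # block header timestamp, in seconds
    kind: str             # e.g. "trade", "settlement", "oracle_update"
    price: float


def to_time_indexed(events):
    """Return events in a deterministic order keyed by (block_number, log_index).

    On a single chain this pair identifies a log uniquely, so the result does not
    depend on the order in which events arrived from the network.
    """
    return sorted(events, key=lambda e: (e.block_number, e.log_index))


arrived = [
    ChainEvent(11, 0, 1012, "trade", 101.0),
    ChainEvent(10, 3, 1000, "oracle_update", 100.5),
    ChainEvent(10, 1, 1000, "settlement", 100.0),
]
for e in to_time_indexed(arrived):
    print(e.block_number, e.log_index, e.kind, e.price)
```

Sorting on `(block_number, log_index)` rather than on arrival time is what makes the output deterministic: two indexers that receive the same logs in different orders produce the same history.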

Origin

The necessity for Financial Data Preprocessing emerged from the fundamental mismatch between traditional financial time-series requirements and the event-driven architecture of early decentralized exchanges. Initial attempts to price options relied on simple, uncleaned snapshots, leading to catastrophic miscalculations in delta hedging and liquidation logic.

- Oracle Inefficiency: Early protocols struggled with stale price feeds, which forced the development of staleness checks and filtering to remove outlier data.

- Latency Arbitrage: Market participants exploited the discrepancy between block confirmation times and off-chain execution, forcing developers to build deterministic sequencing mechanisms.

- Data Fragmentation: The rise of cross-chain liquidity required unified ingestion layers to reconcile varying block times and finality guarantees.
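A staleness check of the kind the first point describes can be sketched as follows. This is an illustration under stated assumptions: each reading is a `(price, updated_at)` pair in seconds, and the `max_age_s` limit and feed names are hypothetical; in practice the limit would be set from each feed's documented update interval.

```python
def fresh_prices(feeds, now, max_age_s):
    """Drop oracle readings that are too old or timestamped in the future.

    feeds: mapping of feed name -> (price, updated_at), timestamps in seconds.
    max_age_s: hypothetical staleness limit for this sketch.
    """
    fresh = {}
    for name, (price, updated_at) in feeds.items():
        age = now - updated_at
        # Negative age means the reading claims to come from the future: reject it.
        if 0 <= age <= max_age_s:
            fresh[name] = price
    return fresh


readings = {"feed_a": (100.0, 990), "feed_b": (101.0, 900), "feed_c": (99.0, 1005)}
print(fresh_prices(readings, now=1000, max_age_s=60))
```

Here only `feed_a` survives: `feed_b` is 100 seconds old and `feed_c` carries a timestamp later than `now`.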

These historical failures forced a shift toward modular, robust data pipelines. The evolution from naive scrapers to high-fidelity, node-level indexing reflects the industry’s maturation from experimental protocols to sophisticated financial infrastructure.

Theory

The theoretical foundation of Financial Data Preprocessing rests on the transition from event-based logs to state-based snapshots. By applying rigorous filtering, normalization, and interpolation, architects build a synthetic, regularly sampled time series from discrete, asynchronous blockchain updates.
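The move from event logs to state snapshots amounts to replaying deltas and recording the state after each block. A minimal sketch, assuming events arrive as hypothetical `(block_number, key, delta)` tuples (for example, changes to pool reserves):

```python
def snapshots_by_block(deltas, initial_state):
    """Replay (block_number, key, delta) events into per-block state snapshots.

    Events are applied in block order; Python's sort is stable, so events within a
    block keep their original order. The snapshot for a block is the state after
    its last event. Blocks with no events get no snapshot.
    """
    state = dict(initial_state)
    snapshots = {}
    for block, key, delta in sorted(deltas, key=lambda d: d[0]):
        state[key] = state.get(key, 0.0) + delta
        snapshots[block] = dict(state)  # overwritten until the block's last event
    return snapshots


events = [(1, "reserve_eth", 10.0), (1, "reserve_usdc", -20000.0), (3, "reserve_eth", -2.0)]
start = {"reserve_eth": 100.0, "reserve_usdc": 200000.0}
for block, snap in snapshots_by_block(events, start).items():
    print(block, snap)
```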

Data Normalization Mechanics

The core challenge is reconciling the irregular arrival of events with the fixed-interval time series on which Black-Scholes or local volatility models are typically calibrated. This requires a deterministic rule for handling gaps in liquidity, such as intervals in which no trade or oracle update occurs.
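One deterministic gap rule is last observation carried forward (LOCF) with an explicit staleness cutoff. The sketch below assumes sorted integer timestamps; the `max_gap` cutoff is a hypothetical parameter, and points that fall past it are left as `None` rather than silently filled.

```python
import bisect


def resample_locf(timestamps, prices, start, end, step, max_gap):
    """Sample an irregular price series on the grid start, start+step, ..., <= end.

    Each grid point takes the last price observed at or before it. Points that
    precede the first observation, or whose latest observation is older than
    max_gap, are returned as None so gaps stay explicit.
    timestamps must be sorted ascending and aligned with prices.
    """
    if step <= 0:
        raise ValueError("step must be positive")
    out = []
    t = start
    while t <= end:
        i = bisect.bisect_right(timestamps, t) - 1  # index of last obs <= t
        if i >= 0 and t - timestamps[i] <= max_gap:
            out.append((t, prices[i]))
        else:
            out.append((t, None))
        t += step
    return out


print(resample_locf([0, 7, 30], [100.0, 101.0, 99.0], start=0, end=30, step=10, max_gap=15))
```

LOCF is one choice among several. Linear interpolation between neighbouring observations is smoother, but it uses a price that was not yet known at the grid time, which leaks future information into backtests.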

| Data Issue | Preprocessing Strategy | Systemic Goal |
|---|---|---|
| Latency | Timestamp adjustment via node synchronization | Achieve temporal accuracy |
| Noise | Median filtering and outlier rejection | Stabilize price inputs |
| Finality | State verification against consensus rules | Prevent invalid trade execution |
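The Noise row can be illustrated with a trailing-median filter that flags prices far from recent history, scaled by the median absolute deviation (MAD). A minimal sketch; the `window`, `k`, and `min_rel_scale` defaults are hypothetical tuning choices, not values from any particular protocol.

```python
import statistics


def flag_outliers(prices, window=5, k=3.0, min_rel_scale=0.001):
    """Return one bool per price: True if it deviates from the trailing median
    by more than k times the trailing MAD.

    min_rel_scale puts a floor under the MAD (as a fraction of the median) so a
    perfectly flat window does not flag every tiny move. The first `window`
    prices are never flagged because there is no history to compare against.
    """
    flags = []
    for i, p in enumerate(prices):
        if i < window:
            flags.append(False)
            continue
        recent = prices[i - window:i]  # past observations only
        med = statistics.median(recent)
        mad = statistics.median([abs(x - med) for x in recent])
        scale = max(mad, min_rel_scale * abs(med))
        flags.append(abs(p - med) > k * scale)
    return flags


ticks = [100.0, 101.0, 99.0, 100.0, 102.0, 100.5, 250.0, 101.0]
print(flag_outliers(ticks))
```

Because the window holds raw observations, a single spike barely moves the median, while a sustained level shift stops being flagged once it makes up a majority of the window.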

The system must account for adversarial conditions in which actors deliberately manipulate latency to create false price signals. By validating data against multiple independent nodes, the preprocessing layer enforces a single consistent view of chain state, limiting the impact of localized network congestion on derivative valuations.
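Cross-node validation can be as simple as requiring a quorum of independent nodes to report the same value, for example the block hash at a given height. In this sketch the node identifiers and hash strings are hypothetical, and the threshold is required to be a strict majority so that two conflicting values can never both reach quorum.

```python
from collections import Counter


def quorum_value(responses, min_agree):
    """Return the value reported by at least min_agree nodes, or None.

    responses: mapping node_id -> reported value (e.g. a block hash).
    min_agree must exceed half the number of responses.
    """
    if not responses:
        return None
    if 2 * min_agree <= len(responses):
        raise ValueError("min_agree must be a strict majority of responses")
    value, count = Counter(responses.values()).most_common(1)[0]
    return value if count >= min_agree else None


views = {"node_1": "0xabc", "node_2": "0xabc", "node_3": "0xdef"}
print(quorum_value(views, min_agree=2))
```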

Approach

Current methodologies emphasize decoupling data ingestion from derivative execution. By relying on specialized indexing services and high-performance caching layers, modern protocols keep heavy computation away from the consensus layer while still serving data with low latency.

Execution Architecture

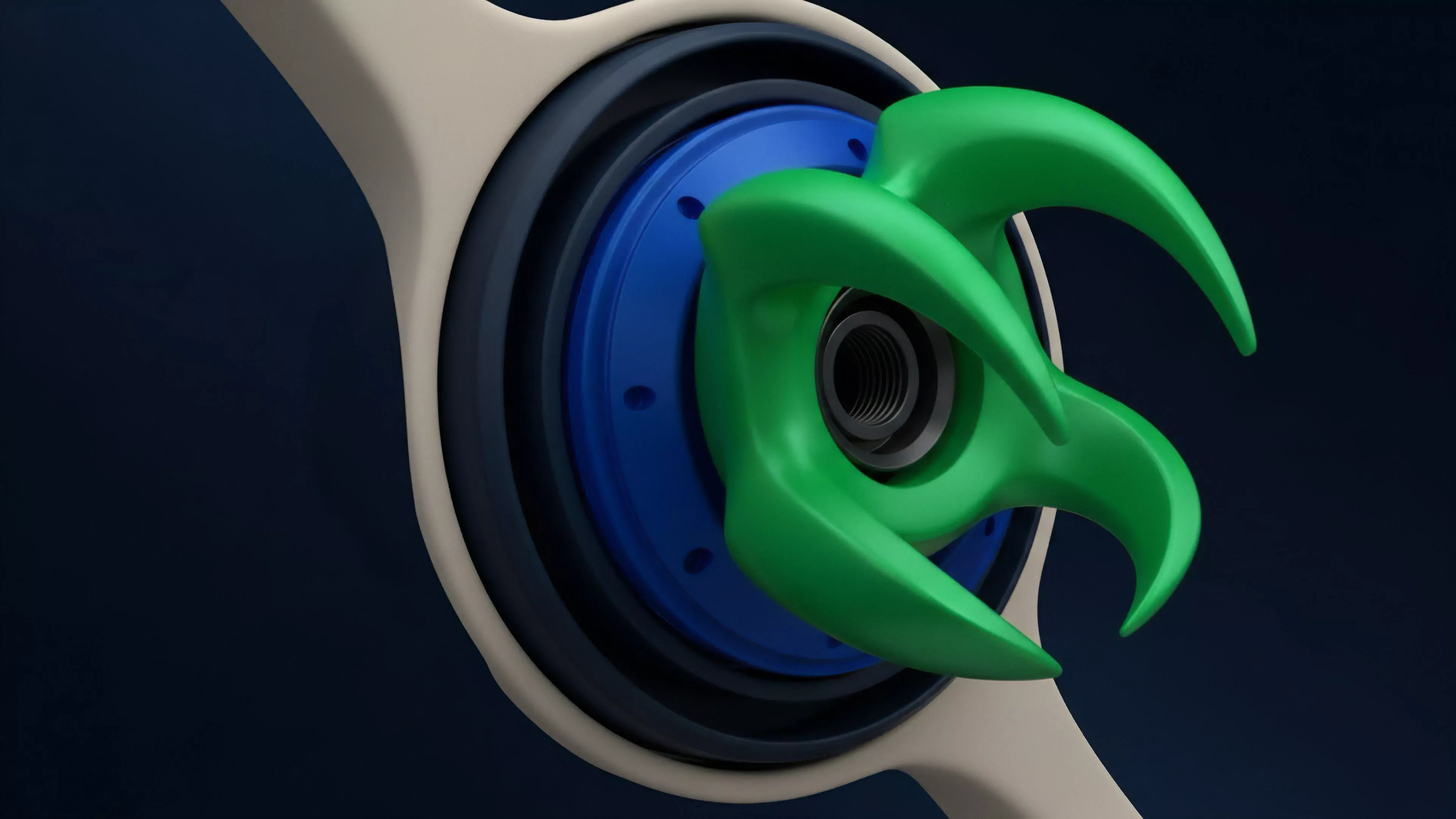

The pipeline follows a distinct, multi-stage progression:

- Ingestion: Capturing raw logs directly from RPC endpoints or mempool streams.

- Transformation: Converting event data into structured, relational schemas that reflect order flow dynamics.

- Validation: Cross-referencing price data against multiple decentralized oracles to improve resistance to flash-loan price manipulation.

- Calibration: Updating volatility surfaces and Greeks in real time to reflect the cleaned, processed input.
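The Validation stage can be sketched as a median across independent oracle prices with a fail-closed disagreement check. The three-source minimum and the `max_deviation` threshold are assumptions for illustration, and prices are assumed positive.

```python
import statistics


def validated_price(oracle_prices, max_deviation):
    """Combine independent oracle prices, refusing to publish if they disagree.

    oracle_prices: prices from independent sources (assumed positive).
    max_deviation: largest allowed relative distance of any source from the median.
    """
    if len(oracle_prices) < 3:
        raise ValueError("need at least three independent sources")
    med = statistics.median(oracle_prices)
    worst = max(abs(p - med) / med for p in oracle_prices)
    if worst > max_deviation:
        raise ValueError(f"oracle disagreement {worst:.2%} exceeds {max_deviation:.2%}")
    return med


print(validated_price([100.0, 100.2, 99.9], max_deviation=0.01))
```

The median means one manipulated source cannot move the output on its own, and the deviation check halts instead of guessing when sources diverge, which is the conservative choice in front of a margin engine.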

This approach treats the data stream as an adversarial environment. The system does not assume the integrity of incoming packets, instead employing strict schema validation to discard malformed data before it reaches the margin engine.
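Strict schema validation can be illustrated with a parser that returns `None` for anything malformed. The three-field schema below is hypothetical; the point is the strictness: unknown fields, wrong types, booleans posing as integers, and non-finite or non-positive prices are all rejected.

```python
import math

# Hypothetical schema for this sketch: field name -> required Python type.
REQUIRED_FIELDS = {"block_number": int, "log_index": int, "price": float}


def parse_event(raw):
    """Return a validated copy of the event dict, or None if it is malformed."""
    if not isinstance(raw, dict) or set(raw) != set(REQUIRED_FIELDS):
        return None  # missing or unexpected fields
    for field, typ in REQUIRED_FIELDS.items():
        value = raw[field]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, typ):
            return None
    if raw["block_number"] < 0 or raw["log_index"] < 0:
        return None
    if not math.isfinite(raw["price"]) or raw["price"] <= 0:
        return None
    return dict(raw)


print(parse_event({"block_number": 10, "log_index": 2, "price": 100.5}))
print(parse_event({"block_number": 10, "log_index": 2, "price": "100.5"}))
```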

Evolution

Development has shifted from centralized, off-chain scraping to fully decentralized, verifiable computation. The move toward zero-knowledge proofs for data validation marks the current frontier, aiming to make the preprocessing itself as trustless as the settlement layer.

Market participants now demand transparency in how prices are derived. The days of relying on proprietary, opaque indexing solutions are fading as protocols adopt open-source, community-governed data pipelines. This structural shift improves the robustness of margin engines by reducing the risk of cascading liquidations caused by faulty data input.

Sometimes I wonder if our obsession with perfect data is a reaction to the inherent chaos of the physical world: a desperate attempt to impose order on a system that is, by definition, entropy-driven. Anyway, returning to the architecture, the integration of real-time volatility tracking directly into the preprocessing pipeline has allowed for more dynamic margin requirements, adapting to market stress before it reaches a critical state.
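Volatility-linked margin can be sketched with an exponentially weighted moving average (EWMA) of squared returns feeding a margin rule. The decay factor 0.94 is the RiskMetrics daily value, used here only as a placeholder; the margin rule (`base_rate`, `vol_multiplier`) is entirely hypothetical.

```python
import math


def ewma_volatility(returns, lam=0.94):
    """EWMA volatility: var_t = lam * var_{t-1} + (1 - lam) * r_t**2.

    The first squared return seeds the variance.
    """
    if not returns:
        raise ValueError("need at least one return")
    var = returns[0] ** 2
    for r in returns[1:]:
        var = lam * var + (1 - lam) * r * r
    return math.sqrt(var)


def margin_requirement(notional, vol, base_rate=0.05, vol_multiplier=3.0):
    """Hypothetical rule: a floor rate that rises once volatility is high enough."""
    return notional * max(base_rate, vol_multiplier * vol)


calm = ewma_volatility([0.01] * 20)
stressed = ewma_volatility([0.01] * 20 + [0.08] * 5)
print(margin_requirement(1000.0, calm), margin_requirement(1000.0, stressed))
```

Because the EWMA weights recent returns most heavily, a few large moves raise the margin within a handful of updates, which is the "adapting before a critical state" behavior described above.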

Horizon

The future of Financial Data Preprocessing involves the integration of machine-learning-driven anomaly detection directly into the protocol’s consensus layer. As the complexity of crypto derivatives increases, the ability to identify and neutralize malicious data patterns in real time will determine the survival of decentralized financial venues.

| Future Trend | Impact on Derivatives | Risk Mitigation |
|---|---|---|
| ZK proofs | Verifiable data integrity | Proves feeds were computed correctly from their source data |
| ML anomaly detection | Proactive volatility filtering | Reduces flash-crash impact |
| Cross-chain aggregation | Unified global liquidity | Minimizes fragmentation risk |
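Cross-chain aggregation can be illustrated with a volume-weighted average price (VWAP) that only counts finalized quotes. The quote dictionaries and their keys (`chain`, `price`, `volume`, `finalized`) are hypothetical; how finality is judged per chain is left outside this sketch.

```python
def aggregate_vwap(quotes):
    """Volume-weighted average price across venues, using only finalized quotes.

    quotes: list of dicts with hypothetical keys "chain", "price", "volume", "finalized".
    Returns None when no finalized volume is available.
    """
    usable = [q for q in quotes if q["finalized"] and q["volume"] > 0]
    total = sum(q["volume"] for q in usable)
    if total == 0:
        return None
    return sum(q["price"] * q["volume"] for q in usable) / total


quotes = [
    {"chain": "A", "price": 100.0, "volume": 2.0, "finalized": True},
    {"chain": "B", "price": 103.0, "volume": 1.0, "finalized": True},
    {"chain": "C", "price": 150.0, "volume": 5.0, "finalized": False},  # excluded
]
print(aggregate_vwap(quotes))
```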

The ultimate goal is the complete automation of the data pipeline, where the protocol itself detects and repairs inconsistencies without human intervention. This vision necessitates a move toward higher-order cryptographic primitives, ensuring that the preprocessing layer maintains the same security guarantees as the underlying settlement logic.
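As one concrete instance of automated detect-and-repair, the sketch below handles a chain reorganization, an example chosen here because the text does not name a specific inconsistency. The `BlockIndex` class is hypothetical. It detects a mismatch between a new block's parent hash and the stored hash one height below, discards the affected heights, and returns them so derived prices can be recomputed after re-fetching those blocks.

```python
class BlockIndex:
    """Hypothetical canonical-chain tracker: block_number -> block hash."""

    def __init__(self):
        self.hashes = {}

    def add_block(self, number, block_hash, parent_hash):
        """Record a block; return the heights invalidated by a detected reorg.

        Returned heights must be re-fetched and any data derived from them rebuilt.
        """
        prev = self.hashes.get(number - 1)
        if prev is not None and prev != parent_hash:
            cutoff = number - 1  # our stored parent sits on an abandoned fork
        elif self.hashes.get(number, block_hash) != block_hash:
            cutoff = number      # same parent, different block at this height
        else:
            cutoff = None        # consistent with what we already have
        stale = sorted(h for h in self.hashes if cutoff is not None and h >= cutoff)
        for h in stale:
            del self.hashes[h]
        self.hashes[number] = block_hash
        return stale


idx = BlockIndex()
for n, h, parent in [(1, "a1", "a0"), (2, "a2", "a1"), (3, "a3", "a2")]:
    idx.add_block(n, h, parent)
print(idx.add_block(3, "b3", "b2"))  # new block 3 builds on a different block 2
```

A production indexer would walk back further by fetching ancestors until the hashes agree; this sketch only invalidates the heights it can prove are inconsistent.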