Essence

Transaction Processing Efficiency Evaluation Methods are the quantitative frameworks used to measure the throughput, latency, and resource consumption of decentralized ledgers. These metrics provide the data needed to assess whether a network architecture can sustain high-frequency financial operations or is likely to bottleneck during periods of intense market volatility.

Evaluation methods quantify the technical capacity of a blockchain to handle transactional volume while maintaining stability and speed.

The evaluation focuses on the interaction between consensus mechanisms and computational overhead. When assessing efficiency, analysts compare the time a transaction needs to reach deterministic finality, after which the protocol no longer permits a reversal, with the time needed for probabilistic confirmation, where the chance of reversal shrinks with each additional block but never reaches zero. This distinction shapes the risk profile of derivative instruments, where rapid settlement limits slippage and reduces counterparty exposure.
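To make the idea of probabilistic confirmation concrete, the following minimal sketch uses the simplified random-walk (gambler's ruin) model, in which an attacker controlling a share `q` of block production eventually overtakes a chain that is `z` blocks ahead with probability `(q / (1 - q)) ** z` when `q < 0.5`. The function names and the risk tolerance are illustrative assumptions, and the model ignores network delay and other real-world effects.

```python
def catch_up_probability(attacker_share: float, confirmations: int) -> float:
    """Chance an attacker ever overtakes a chain leading by `confirmations` blocks.

    Simplified gambler's-ruin model; an assumption, not a full security analysis.
    """
    if not 0.0 <= attacker_share <= 1.0:
        raise ValueError("attacker_share must be between 0 and 1")
    if confirmations < 0:
        raise ValueError("confirmations must be non-negative")
    honest_share = 1.0 - attacker_share
    if attacker_share >= honest_share:
        return 1.0  # a majority attacker always catches up in this model
    return (attacker_share / honest_share) ** confirmations


def confirmations_needed(attacker_share: float, risk_tolerance: float) -> int:
    """Smallest confirmation depth whose reversal probability is within tolerance."""
    if not 0.0 <= attacker_share < 0.5:
        raise ValueError("attacker_share must be in [0, 0.5) for a finite answer")
    if not 0.0 < risk_tolerance < 1.0:
        raise ValueError("risk_tolerance must be strictly between 0 and 1")
    depth = 0
    while catch_up_probability(attacker_share, depth) > risk_tolerance:
        depth += 1
    return depth
```

Under these assumptions, a desk that tolerates a one-in-a-thousand reversal risk against a 10% attacker would call `confirmations_needed(0.1, 0.001)` and wait for that many blocks, whereas a deterministically final chain replaces this calculation with a fixed finality time.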

Origin

The requirement for these evaluation methods grew from the limitations observed in early Proof of Work networks.

As decentralized finance expanded, the inability of base-layer protocols to process thousands of transactions per second led to the development of alternative consensus models and layer-two scaling solutions. Early efforts to measure efficiency relied on simple metrics like transactions per second, which often failed to account for network congestion or the cost of data availability. The shift toward more robust methodologies followed the maturation of institutional interest in crypto derivatives, where reliable performance data became necessary for risk management and margin engine calibration.

- Throughput Metrics establish the raw capacity of a protocol under peak load conditions.

- Latency Benchmarks measure the duration from transaction broadcast to confirmed inclusion in a block.

- Resource Utilization tracks the computational cost relative to the value settled on the chain.
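The three metric families above can be sketched from a sample of transaction records. The `TxRecord` fields, the function names, and the choice of a nearest-rank percentile are assumptions made for illustration; a real pipeline would read these values from node or indexer data.

```python
from dataclasses import dataclass
from math import ceil


@dataclass(frozen=True)
class TxRecord:
    broadcast_time: float  # seconds, when the transaction was first broadcast
    inclusion_time: float  # seconds, when it was included in a block
    gas_used: int          # computational cost charged for the transaction
    value_settled: float   # value moved, in any consistent unit


def throughput_tps(records, window_seconds):
    """Included transactions per second over the observation window."""
    if window_seconds <= 0:
        raise ValueError("window_seconds must be positive")
    return len(records) / window_seconds


def latency_percentile(records, fraction):
    """Nearest-rank percentile of broadcast-to-inclusion latency, in seconds."""
    if not records:
        raise ValueError("at least one record is required")
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    latencies = sorted(r.inclusion_time - r.broadcast_time for r in records)
    rank = ceil(fraction * len(latencies))
    return latencies[rank - 1]


def gas_per_unit_value(records):
    """Computational cost relative to the value settled."""
    total_value = sum(r.value_settled for r in records)
    if total_value <= 0:
        raise ValueError("total settled value must be positive")
    return sum(r.gas_used for r in records) / total_value
```

Reporting a high percentile alongside the median matters because the tail of the latency distribution, not its centre, is what determines whether time-sensitive trades execute.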

Theory

The theoretical foundation of these methods relies on the study of network throughput versus consensus finality. A protocol must balance decentralization, security, and scalability, a trade-off often analyzed through the lens of protocol physics. Quantitative models evaluate efficiency by measuring the entropy of the mempool and the variance in block production times.

High variance introduces unpredictability into the pricing of options, as traders cannot guarantee execution within specific windows.
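One simple way to quantify this variance is the coefficient of variation of block intervals, together with the share of intervals that exceed an execution deadline. The sketch below assumes a list of block timestamps in seconds; the function names and the deadline parameter are illustrative.

```python
from statistics import mean, pstdev


def block_intervals(timestamps):
    """Gaps in seconds between consecutive blocks."""
    ordered = sorted(timestamps)
    return [later - earlier for earlier, later in zip(ordered, ordered[1:])]


def interval_variability(timestamps):
    """Mean, standard deviation and coefficient of variation of block intervals."""
    intervals = block_intervals(timestamps)
    if not intervals:
        raise ValueError("at least two timestamps are required")
    mu = mean(intervals)
    sigma = pstdev(intervals)
    cv = sigma / mu if mu > 0 else float("inf")
    return {"mean": mu, "std": sigma, "cv": cv}


def deadline_miss_rate(timestamps, deadline_seconds):
    """Fraction of block intervals longer than the execution deadline."""
    intervals = block_intervals(timestamps)
    if not intervals:
        raise ValueError("at least two timestamps are required")
    missed = sum(1 for gap in intervals if gap > deadline_seconds)
    return missed / len(intervals)
```

A coefficient of variation near zero indicates slot-like, predictable block production, while values near one are typical of memoryless, lottery-style block discovery.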

| Methodology | Focus Area | Financial Impact |
| --- | --- | --- |
| Mempool Latency Analysis | Transaction propagation delay | Execution risk and slippage |
| Finality Time Modeling | Deterministic settlement speed | Collateral release efficiency |
| Gas Cost Sensitivity | Computational resource pricing | Trading strategy profitability |

Effective evaluation requires modeling the relationship between network congestion and the probability of failed or delayed trade execution.
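As a starting point for that relationship, an empirical failure rate can be tabulated per congestion level before fitting any parametric model. The sketch assumes each observation pairs a block-space saturation value in the range 0 to 1 with a flag for whether the trade failed or was delayed; the bucketing scheme is an illustrative choice.

```python
from collections import defaultdict


def failure_rate_by_congestion(observations, buckets=4):
    """Empirical failure rate per saturation bucket.

    `observations` is an iterable of (saturation, failed) pairs, where
    saturation lies in [0, 1]. Returns {bucket_lower_bound: failure_rate}.
    """
    if buckets < 1:
        raise ValueError("buckets must be at least 1")
    totals = defaultdict(int)
    failures = defaultdict(int)
    for saturation, failed in observations:
        if not 0.0 <= saturation <= 1.0:
            raise ValueError("saturation must be between 0 and 1")
        # Clamp so that a saturation of exactly 1.0 falls in the top bucket.
        index = min(int(saturation * buckets), buckets - 1)
        totals[index] += 1
        if failed:
            failures[index] += 1
    return {index / buckets: failures[index] / totals[index] for index in sorted(totals)}
```

Buckets with few observations produce noisy rates, so sample counts should be inspected before the output is used to price execution risk.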

Systems theory suggests that efficiency is not a static property but an emergent feature of participant behavior. When the cost of computation rises, agents optimize their interaction with the network, which alters the throughput profile and forces a reassessment of the protocol performance metrics.

Approach

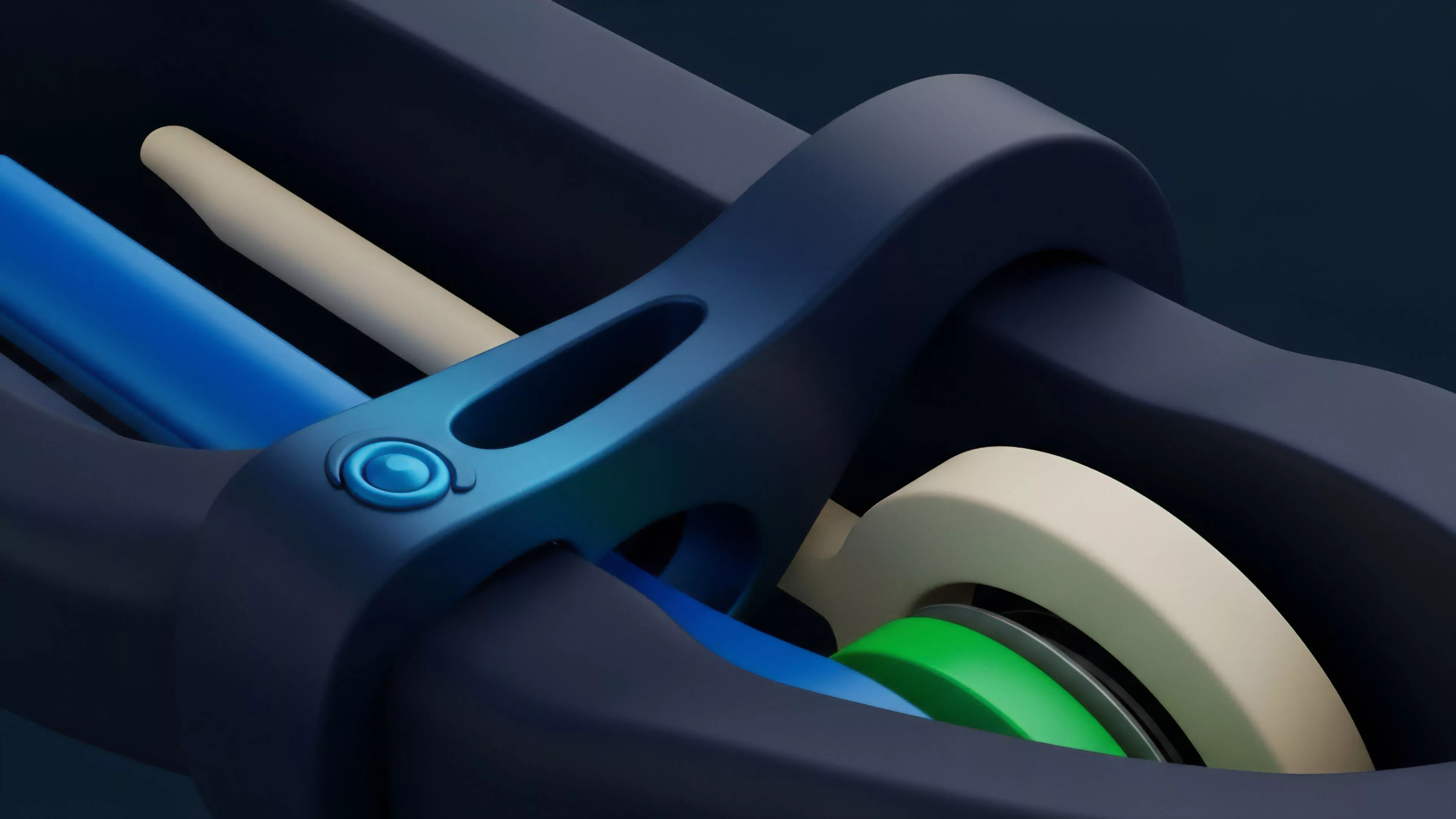

Current evaluation involves real-time monitoring of on-chain telemetry combined with stress testing of node infrastructure. Analysts deploy synthetic transaction sets to simulate high-load environments, observing how the network manages resource contention and consensus pauses.

The approach centers on identifying the maximum sustainable throughput before the network incurs significant latency penalties. This involves tracking the following parameters:

- Validation Delay represents the time validators take to reach consensus on a state transition.

- Block Space Saturation indicates the percentage of available block capacity used during peak demand.

- Reorg Probability quantifies the likelihood of chain reorganizations or splits under high network stress.
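The parameters above, and the maximum sustainable throughput they help locate, can be sketched as follows. The `BlockRecord` fields, the use of gas as the capacity unit, and the latency budget are assumptions for illustration, and the reorg figure is an observed frequency that only approximates the underlying probability.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class BlockRecord:
    gas_used: int       # capacity consumed by the block
    gas_limit: int      # capacity available in the block
    proposed_at: float  # seconds, when the block was proposed
    agreed_at: float    # seconds, when validators agreed on it
    reorged: bool       # True if the block was later replaced


def validation_delay(blocks):
    """Mean time from proposal to validator agreement, in seconds."""
    return mean(b.agreed_at - b.proposed_at for b in blocks)


def block_space_saturation(blocks):
    """Share of available capacity that was actually used."""
    return sum(b.gas_used for b in blocks) / sum(b.gas_limit for b in blocks)


def reorg_frequency(blocks):
    """Observed share of blocks that were reorganized out of the chain."""
    return sum(1 for b in blocks if b.reorged) / len(blocks)


def max_sustainable_tps(load_points, latency_budget):
    """Highest offered load whose measured latency stays within the budget.

    `load_points` is an iterable of (offered_tps, p95_latency_seconds) pairs
    taken from a stress test. Returns None if no point meets the budget.
    """
    within = [tps for tps, latency in load_points if latency <= latency_budget]
    return max(within) if within else None
```

For example, stress-test points of `(100, 1.0)`, `(200, 1.5)` and `(400, 6.0)` with a two-second budget would place the sustainable limit at 200 transactions per second.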

This data informs the design of margin engines. If the evaluation shows that the network experiences frequent reorgs or high latency, the margin requirements for derivative positions must be adjusted upward to compensate for the inability to liquidate collateral during volatile windows.
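One hypothetical way to express this adjustment is to lengthen the assumed liquidation horizon by the expected settlement delay and scale the margin by the square root of the ratio, following the common assumption that price risk grows with the square root of time. Every parameter name and the formula itself are illustrative assumptions, not a description of any particular margin engine.

```python
from math import sqrt


def adjusted_margin(base_margin, base_horizon_s, p95_latency_s,
                    reorg_probability, reorg_delay_s):
    """Scale a base margin rate for settlement delay (illustrative only).

    base_margin       -- margin rate assuming instant settlement, e.g. 0.05
    base_horizon_s    -- liquidation horizon the base margin was calibrated for
    p95_latency_s     -- measured tail latency to confirmed inclusion
    reorg_probability -- estimated chance a liquidation is reorganized out
    reorg_delay_s     -- assumed extra delay when a reorg occurs
    """
    if base_horizon_s <= 0:
        raise ValueError("base_horizon_s must be positive")
    if not 0.0 <= reorg_probability <= 1.0:
        raise ValueError("reorg_probability must be between 0 and 1")
    # Expected extra time before collateral can actually be liquidated.
    expected_extra_delay = p95_latency_s + reorg_probability * reorg_delay_s
    # Square-root-of-time scaling of price risk (a modeling assumption).
    scale = sqrt((base_horizon_s + expected_extra_delay) / base_horizon_s)
    return base_margin * scale
```

With no measured delay the function returns the base margin unchanged, and the requirement rises smoothly as latency or reorg risk increases, which matches the upward adjustment described above.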

Evolution

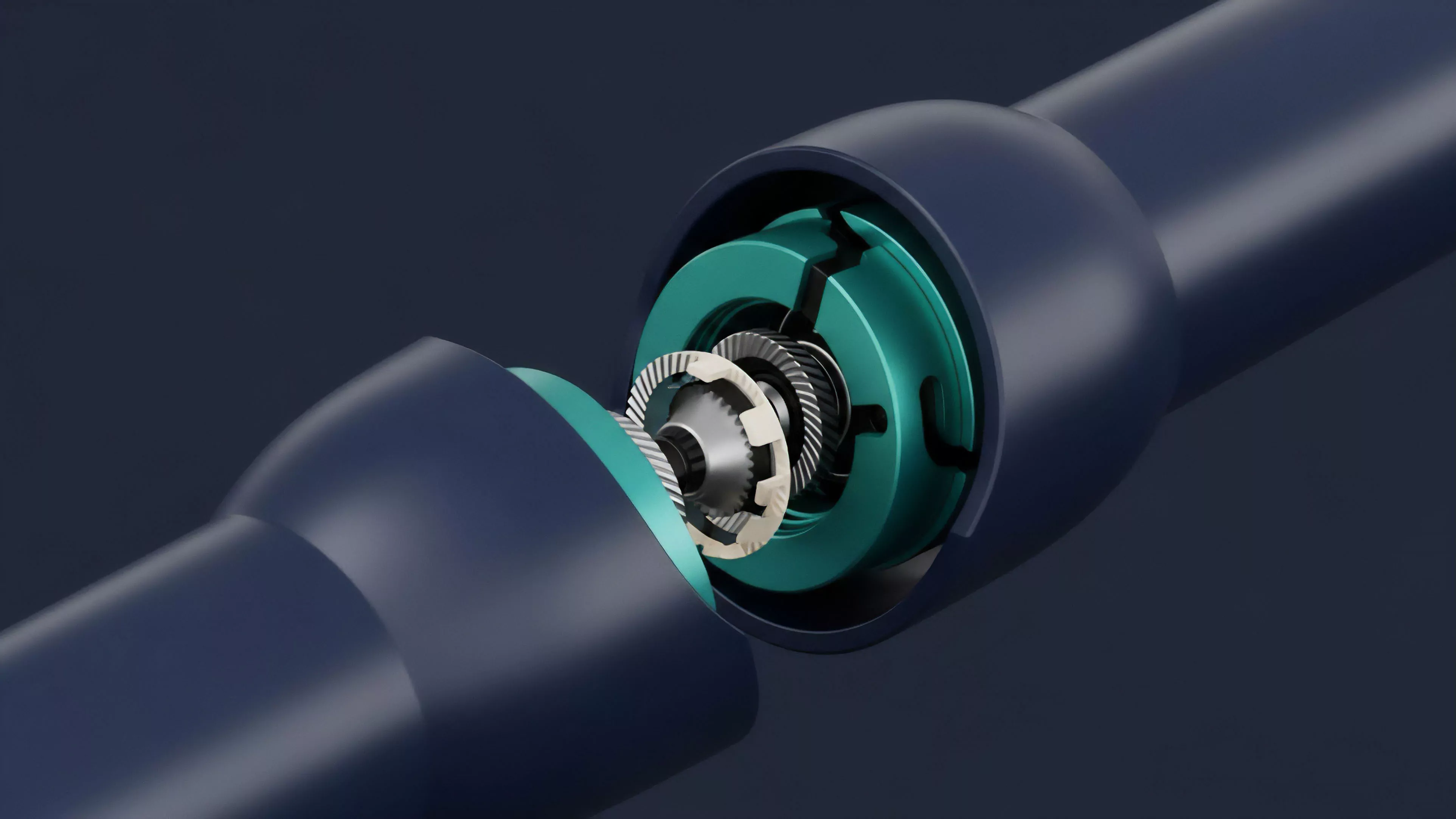

The transition from monolithic architectures to modular designs has forced a change in how efficiency is measured. Where previously researchers focused on single-chain throughput, the current landscape necessitates evaluating cross-chain interoperability and the efficiency of shared security models.

This evolution reflects the move toward asynchronous communication between protocol layers. As liquidity fragments across different execution environments, the metrics for success now include the efficiency of asset bridging and the speed of cross-chain state verification.

Modular blockchain architectures require new evaluation metrics that account for inter-layer communication latency and data availability bottlenecks.

This shift mirrors developments in high-frequency trading where the focus moved from raw exchange speed to the optimization of co-location and order routing. The next phase of development involves automated, protocol-level adjustments to throughput based on real-time demand, moving away from static capacity limits toward dynamic resource allocation.
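A widely deployed example of demand-responsive resource pricing is the base fee rule introduced by Ethereum's EIP-1559, which the text above does not name and which is offered here only as an illustration. The sketch follows the integer arithmetic of that rule: the fee moves by at most one eighth per block, in proportion to how far the parent block's usage was from its target.

```python
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8  # caps the change at 12.5% per block
ELASTICITY_MULTIPLIER = 2            # gas limit is twice the gas target


def next_base_fee(parent_base_fee, parent_gas_used, parent_gas_limit):
    """Base fee for the next block, following the EIP-1559 update rule."""
    target = parent_gas_limit // ELASTICITY_MULTIPLIER
    if parent_gas_used == target:
        return parent_base_fee
    if parent_gas_used > target:
        # Demand above target: raise the fee by at least 1 unit.
        delta = max(
            parent_base_fee * (parent_gas_used - target)
            // target // BASE_FEE_MAX_CHANGE_DENOMINATOR,
            1,
        )
        return parent_base_fee + delta
    # Demand below target: lower the fee proportionally.
    delta = (
        parent_base_fee * (target - parent_gas_used)
        // target // BASE_FEE_MAX_CHANGE_DENOMINATOR
    )
    return parent_base_fee - delta
```

Because blocks may temporarily exceed the target up to the gas limit, capacity flexes with demand in the short term while the fee steers average usage back toward the target.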

Horizon

Future developments in evaluation methods will likely prioritize predictive modeling over retrospective analysis. By integrating machine learning to anticipate network congestion before it occurs, protocols may implement proactive fee adjustments or validator incentives to maintain throughput stability.

The convergence of formal verification and performance testing will enable the creation of “performance-aware” smart contracts. These contracts will programmatically adjust their logic based on the current efficiency metrics of the underlying network, ensuring that execution remains reliable regardless of the load.

The ultimate objective is to reach a state where network throughput is no longer a variable that traders must account for, but a constant, reliable foundation for global financial markets.

The limitation of current evaluation methods remains the reliance on historical data which cannot fully account for black-swan events or coordinated adversarial attacks on the consensus layer. How will the next generation of autonomous protocols differentiate between organic demand spikes and intentional congestion attacks to maintain operational efficiency?