Essence

External Data Feeds are the conduits through which decentralized protocols ingest real-world information, enabling financial contracts that depend on state beyond the ledger's own data. They bridge deterministic blockchain environments and the stochastic behavior of global asset markets, translating off-chain events into inputs for on-chain logic. Without them, smart contracts remain isolated: they can act on internal token balances but cannot serve as derivative engines that track indices, interest rates, or physical commodity values.

External data feeds function as the bridge between deterministic smart contracts and the stochastic reality of global asset price discovery.

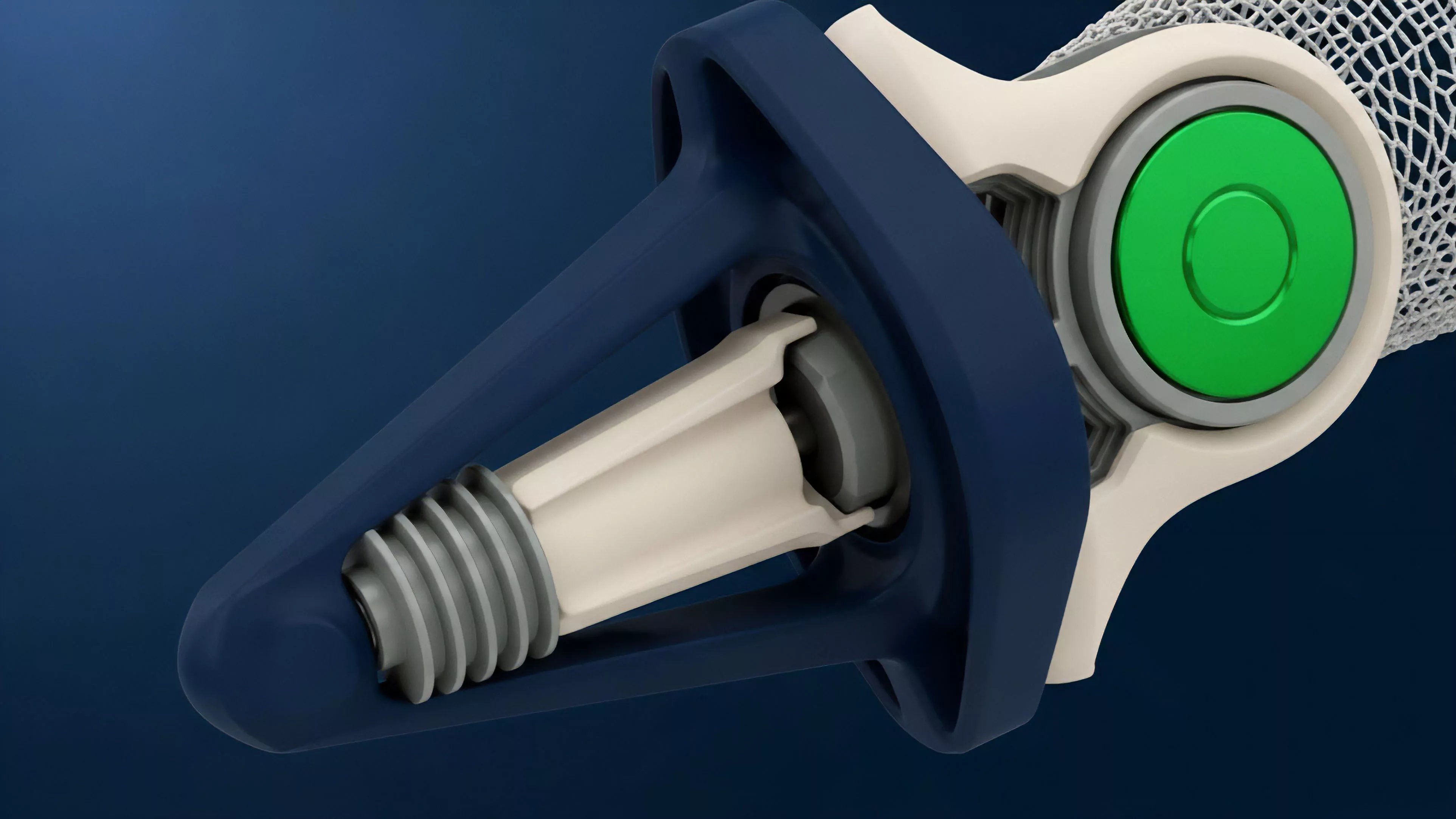

The operational necessity of these systems arises from the fundamental design of distributed ledgers. Consensus requires every node to reach an identical state from the same inputs, so a node cannot simply query an external API whose response may differ between callers or over time. External Data Feeds work around this constraint by using decentralized oracle networks or trusted API aggregators to fetch, sign, and broadcast data points into the protocol state, where every node reads the same recorded value. Settlement calculations and collateral management then depend on the integrity of that recorded value.

Origin

The development of External Data Feeds stems from the limitations of early decentralized finance implementations, which struggled to incorporate non-native assets or real-time market data. Initial attempts relied on a single entity to provide price updates, creating a central point of failure with significant counterparty risk and susceptibility to manipulation. This era underscored the fragility of relying on a single source in an adversarial environment where code dictates the terms of engagement.

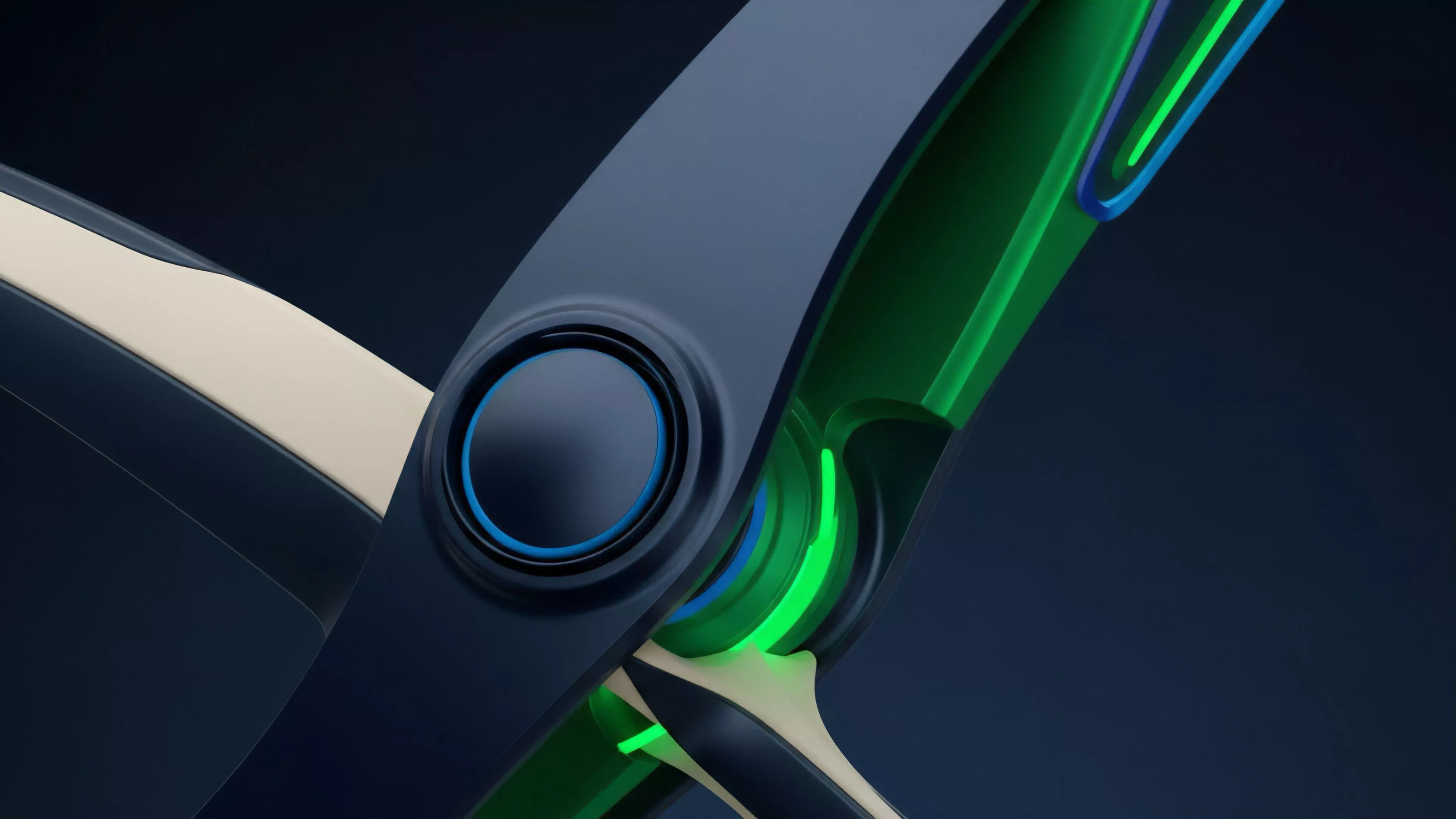

The evolution toward robust infrastructure necessitated a shift from monolithic data providers to decentralized architectures. By distributing the source of truth across multiple independent nodes, the system gains resilience against localized failures and malicious data injection. This structural pivot reflects the broader movement toward trust-minimized systems where the validity of an External Data Feed is verified by cryptographic proof rather than institutional reputation.

Theory

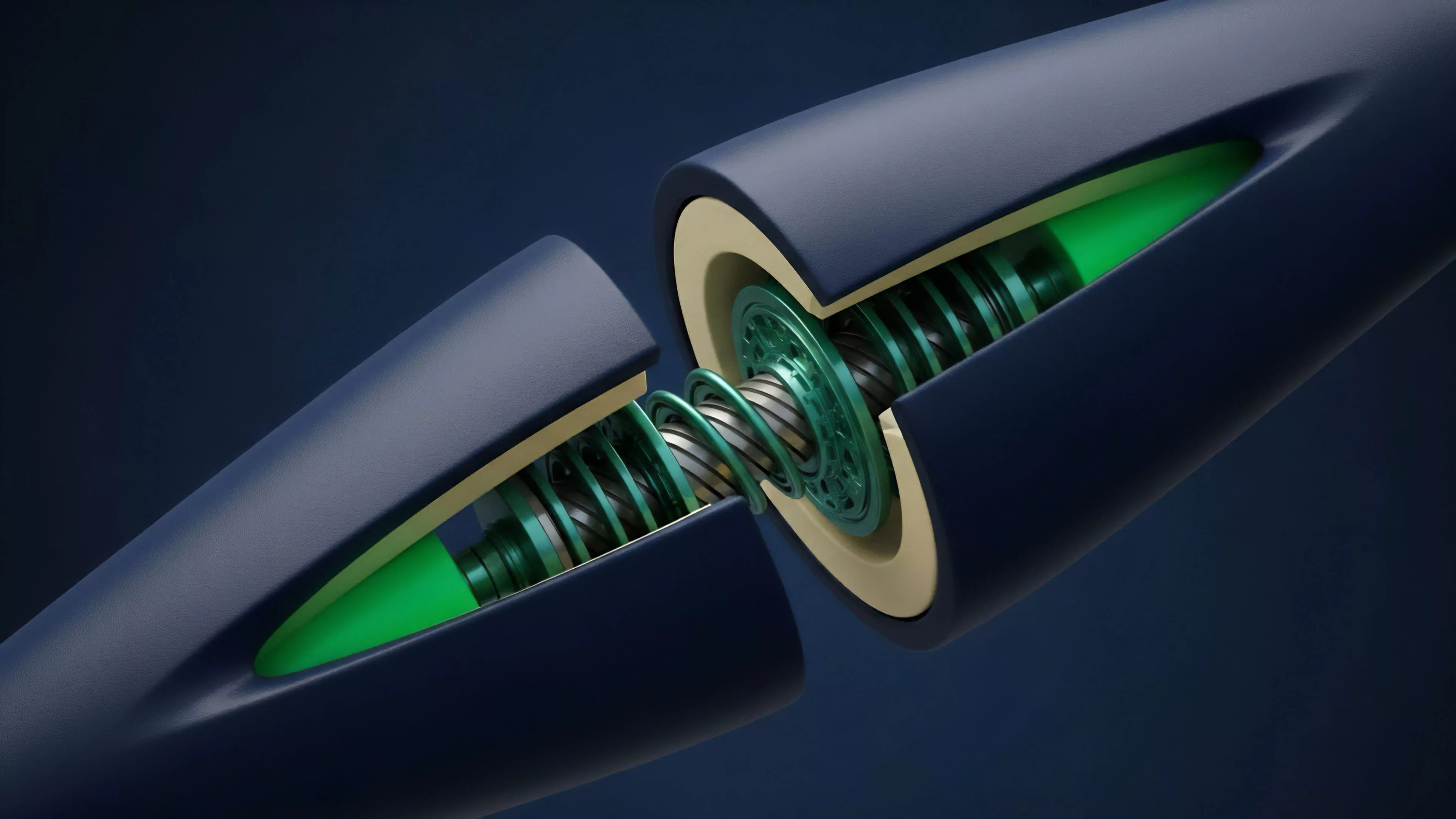

The architecture of External Data Feeds relies on the precise calibration of data aggregation, consensus, and transmission protocols. At the technical level, the process involves fetching raw pricing information from multiple high-volume exchanges, filtering outliers to mitigate flash-crash volatility, and committing the final aggregated value to the blockchain via a smart contract.

Component Architecture

- Data Aggregators collect price samples from geographically dispersed and liquidity-dense trading venues.

- Consensus Mechanisms filter the raw input to generate a single, verifiable value that resists corruption of individual nodes.

- Update Triggers determine the frequency and conditions under which new data is pushed to the target protocol, balancing cost against precision.

Data aggregation and consensus mechanisms ensure the integrity of off-chain inputs before they influence on-chain margin and liquidation logic.
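The aggregation and outlier-filtering steps described above can be sketched as a median with a tolerance band. This is a minimal illustration, not any specific oracle network's algorithm: the function name `aggregate_price`, the venue names, and the 5% `max_deviation` default are all assumptions made for the example.

```python
from statistics import median


def aggregate_price(samples, max_deviation=0.05):
    """Collapse per-venue price samples into one value.

    samples: mapping of venue name -> reported price (hypothetical inputs).
    max_deviation: fraction of the raw median beyond which a sample
        is treated as an outlier and dropped (assumed default).
    """
    if not samples:
        raise ValueError("no price samples supplied")

    prices = list(samples.values())
    raw_median = median(prices)

    # Keep only samples inside the tolerance band around the raw median.
    band = max_deviation * raw_median
    kept = [p for p in prices if abs(p - raw_median) <= band]
    if not kept:
        # Samples disagree too much to produce a trustworthy value.
        raise ValueError("all samples fall outside the tolerance band")

    # The median of the surviving samples is the aggregated price.
    return median(kept)


# One venue reports a flash-crash style print; it is filtered out.
quotes = {"venue_a": 100.0, "venue_b": 101.0, "venue_c": 99.0, "venue_d": 150.0}
print(aggregate_price(quotes))
```

A median is used here because it stays within the range of honest samples as long as fewer than half of the inputs are corrupted, which is the property the Sample Depth metric is meant to capture.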

Quantitatively, the risk profile of an External Data Feed is dictated by its latency and deviation tolerance. High-frequency options markets require near-instantaneous updates to prevent arbitrageurs from exploiting discrepancies between the oracle price and the true market value. Any lag in the feed introduces systemic risk, potentially triggering incorrect liquidations or allowing under-collateralized positions to persist during rapid market shifts.
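One common way a consuming protocol can limit the damage from feed lag is to refuse to act on a price that is older than some maximum age. The sketch below assumes a hypothetical `PricePoint` record and a 60-second `max_age_seconds` default; neither comes from a specific protocol.

```python
from dataclasses import dataclass


class StalePriceError(Exception):
    """Raised when a price is too old to use for settlement."""


@dataclass(frozen=True)
class PricePoint:
    value: float
    updated_at: float  # Unix timestamp in seconds (assumed convention)


def is_fresh(point, now, max_age_seconds=60.0):
    """Return True if the price was updated within the allowed window."""
    age = now - point.updated_at
    # A negative age means the timestamp is in the future; reject it too.
    return 0 <= age <= max_age_seconds


def price_or_halt(point, now, max_age_seconds=60.0):
    """Return the price, or raise so that liquidation logic does not run on stale data."""
    if not is_fresh(point, now, max_age_seconds):
        raise StalePriceError(f"price is {now - point.updated_at:.0f}s old")
    return point.value
```

Halting on stale data trades availability for safety: positions cannot be liquidated while the guard is tripped, but they also cannot be liquidated at a price the market has already left behind.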

| Metric | Implication |
| --- | --- |
| Update Latency | Sensitivity to arbitrage and slippage |
| Sample Depth | Resistance to price manipulation |
| Deviation Threshold | Responsiveness to volatility |
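The trade-off between cost and precision in update triggers is often expressed as two conditions: push a new value when the price has moved by more than a deviation threshold, or when a maximum interval (a heartbeat) has elapsed. The following is a sketch under assumed parameters; the 0.5% threshold and one-hour heartbeat are illustrative defaults, not values taken from the source.

```python
def should_update(last_price, new_price, seconds_since_update,
                  deviation_threshold=0.005, heartbeat_seconds=3600):
    """Decide whether a new on-chain write is warranted.

    deviation_threshold: relative move that forces an update (assumed 0.5%).
    heartbeat_seconds: maximum time between updates (assumed one hour).
    """
    if last_price <= 0:
        # No valid previous value to compare against: always write.
        return True

    relative_move = abs(new_price - last_price) / last_price
    moved_enough = relative_move >= deviation_threshold
    heartbeat_due = seconds_since_update >= heartbeat_seconds
    return moved_enough or heartbeat_due


print(should_update(100.0, 100.2, 60))    # small move, recent update
print(should_update(100.0, 101.0, 60))    # 1% move exceeds the threshold
print(should_update(100.0, 100.0, 3600))  # heartbeat interval reached
```

A tighter threshold makes the feed more responsive to volatility at the price of more frequent writes, which is the balance the Deviation Threshold row describes.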

Approach

Current implementations prioritize decentralized node operation and multi-source verification to minimize the impact of individual exchange outages or localized data tampering. By aggregating data across diverse venues, protocols reduce the probability that any single market manipulation event will distort the global settlement price of an option. This statistical approach treats the market as a probabilistic entity, where the External Data Feed acts as a filter for noise while capturing the signal of underlying price movement.

Market participants now evaluate these feeds based on their historical reliability during extreme volatility. The ability of a feed to maintain accuracy during periods of massive order flow is the true measure of its technical robustness. Traders often adjust their risk models based on the specific oracle provider used by a protocol, acknowledging that the underlying data quality directly influences the execution of their derivative strategies.

Evolution

The trajectory of External Data Feeds has moved from static, low-frequency updates to dynamic, event-driven architectures. Early iterations were restricted by the gas costs of writing data to the blockchain, forcing infrequent updates that were unsuitable for high-frequency trading. Modern solutions utilize off-chain computation and layer-two scaling to provide continuous, low-latency streams that match the performance requirements of sophisticated derivative markets.

Furthermore, cryptographic primitives such as Zero-Knowledge Proofs can allow protocols to verify the provenance of data and the correctness of its aggregation without requiring the entire network to process every raw input. This shift toward modular data layers marks a departure from monolithic oracle designs, allowing protocols to customize their data requirements based on the specific asset class or risk tolerance of their derivative products.

Modular data layers enable protocols to achieve higher update frequency and reduced latency through advanced cryptographic verification.

The financial industry has witnessed a convergence in which traditional market makers now contribute pricing data to these decentralized feeds, bringing institutional-grade data management practices into the crypto space. This institutional participation acts as a check against weak design, pushing protocols to adopt more rigorous testing and fail-safe mechanisms for their External Data Feeds.

Horizon

Future advancements will focus on the automation of feed-switching based on real-time health monitoring of data sources. Protocols will dynamically re-weight their inputs from different providers based on latency and accuracy metrics, creating self-healing data pipelines. This transition toward autonomous infrastructure reduces the burden on governance participants to manually intervene during market disruptions.
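Dynamic re-weighting of this kind could take many forms. As one hedged illustration, the sketch below scores each provider from its observed latency and error rate and normalizes the scores into weights. The scoring formula, the `latency_scale_ms` parameter, and the provider names are all hypothetical choices for the example rather than a description of any deployed system.

```python
def reweight(sources, latency_scale_ms=1000.0):
    """Turn per-source health metrics into normalized weights.

    sources: mapping of name -> (latency_ms, error_rate), where
        error_rate is a fraction in [0, 1] (assumed metric definitions).
    latency_scale_ms: latency at which the latency score halves (assumed).
    """
    scores = {}
    for name, (latency_ms, error_rate) in sources.items():
        # Lower latency gives a score closer to 1.
        latency_score = 1.0 / (1.0 + latency_ms / latency_scale_ms)
        # A source that is always wrong scores 0 and is effectively switched off.
        accuracy_score = max(0.0, 1.0 - error_rate)
        scores[name] = latency_score * accuracy_score

    total = sum(scores.values())
    if total == 0:
        raise ValueError("no healthy sources available")
    return {name: score / total for name, score in scores.items()}


health = {
    "provider_fast": (100.0, 0.0),
    "provider_slow": (900.0, 0.0),
    "provider_failing": (100.0, 1.0),
}
for name, weight in reweight(health).items():
    print(name, round(weight, 3))
```

Raising an error when every source is unhealthy, instead of falling back to equal weights, keeps the pipeline from silently publishing a value that no input supports.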

The integration of cross-chain data interoperability will also define the next phase of development. As liquidity fragments across multiple chains, the ability to securely move verified price data between environments without introducing new attack vectors will become a primary competitive advantage. The architecture of these feeds will ultimately dictate the scalability and efficiency of the entire decentralized derivative sector, as market participants demand higher precision and lower friction for complex hedging strategies.

| Development Stage | Strategic Focus |
| --- | --- |
| Current | Decentralized Aggregation |
| Intermediate | Zero-Knowledge Verification |
| Future | Autonomous Source Switching |