Essence

Ethereum Network Congestion is the state in which demand for computation on the blockchain exceeds the capacity set by the per-block gas limit, leading to delayed transaction inclusion and elevated gas fees. It acts as a fundamental throughput constraint, forcing market participants to bid for priority in scarce block space.

Ethereum Network Congestion functions as an automated market for computational priority where gas prices serve as the clearing mechanism.

The systemic implication is that gas stops behaving like a stable utility cost and starts behaving like a volatile, market-priced resource. When block space reaches maximum utilization, the network shifts from a collaborative ledger to an adversarial environment where transaction inclusion depends on willingness to pay rather than on chronological arrival.

Origin

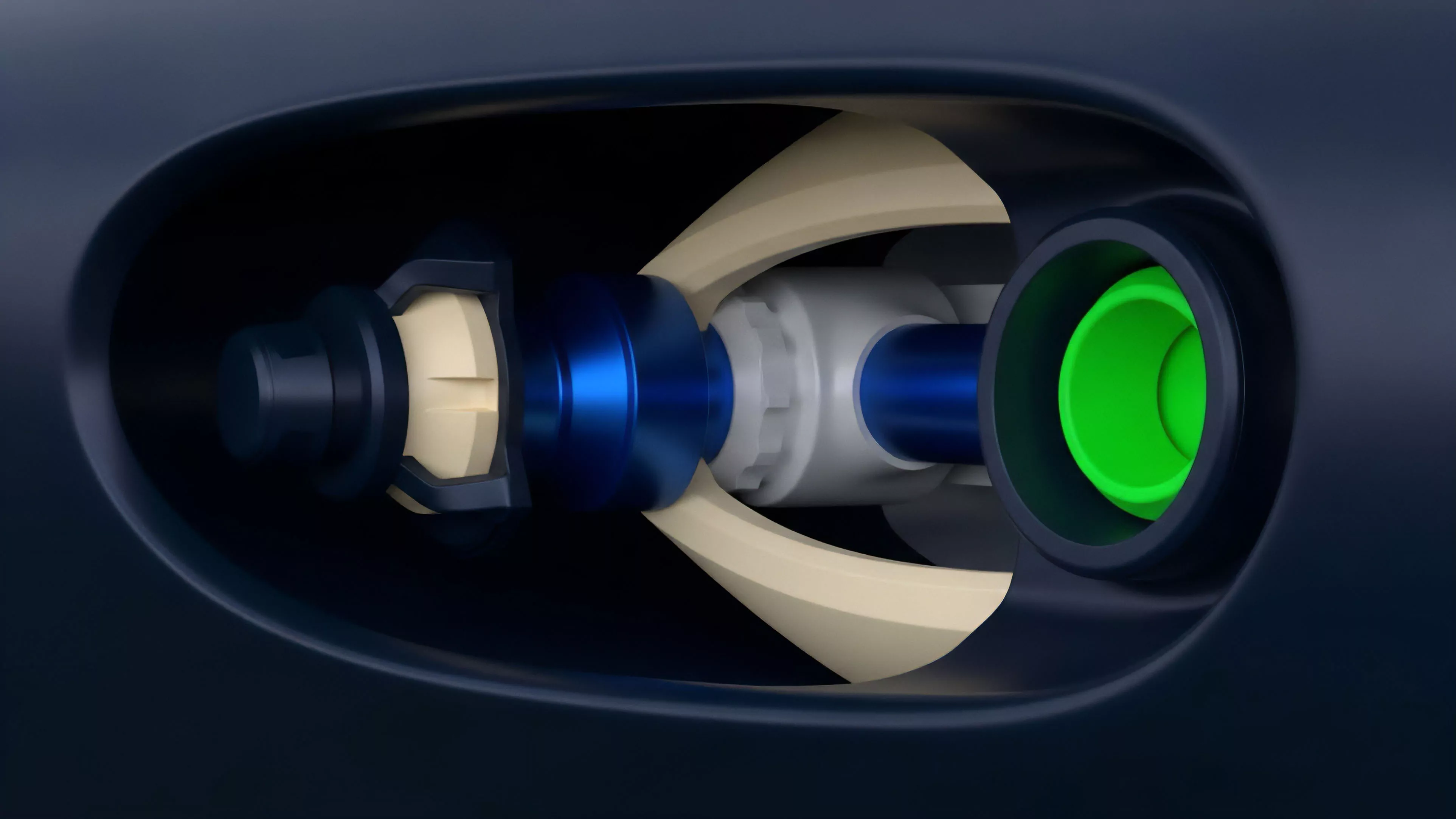

The genesis of this bottleneck resides in the architectural decision to prioritize decentralization and security over absolute transaction throughput. By design, the protocol imposes a strict gas limit per block, preventing excessive hardware requirements for node operators.

- Gas Limit establishes the maximum computational work allowed per block.

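- EIP-1559 introduced the base fee, a protocol-set price per unit of gas that rises or falls by up to 12.5% per block depending on whether the previous block was above or below its gas target, and that is burned rather than paid to the block producer.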

- Block Space Scarcity emerges as the direct result of fixed supply meeting variable demand.

This scarcity creates a feedback loop. As decentralized applications gain adoption, the demand for atomic settlement on the base layer increases, pushing usage toward the protocol-imposed gas limit and driving fees upward.
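
The feedback between block utilization and price can be made concrete with the base fee update rule from EIP-1559. The sketch below follows the integer arithmetic of that specification; the function name `next_base_fee` and the 30,000,000 gas limit in the usage lines are illustrative assumptions, not values taken from this article.

```python
ELASTICITY_MULTIPLIER = 2            # the gas target is half of the gas limit
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8  # caps the change at 1/8 (12.5%) per block


def next_base_fee(parent_base_fee: int, parent_gas_used: int, parent_gas_limit: int) -> int:
    """Return the base fee (wei per gas) for the block that follows the parent."""
    gas_target = parent_gas_limit // ELASTICITY_MULTIPLIER
    if parent_gas_used == gas_target:
        return parent_base_fee
    if parent_gas_used > gas_target:
        # Block was fuller than the target: raise the fee by at least 1 wei.
        delta = max(
            parent_base_fee * (parent_gas_used - gas_target)
            // gas_target
            // BASE_FEE_MAX_CHANGE_DENOMINATOR,
            1,
        )
        return parent_base_fee + delta
    # Block was emptier than the target: lower the fee.
    delta = (
        parent_base_fee * (gas_target - parent_gas_used)
        // gas_target
        // BASE_FEE_MAX_CHANGE_DENOMINATOR
    )
    return parent_base_fee - delta


# Illustrative usage: ten consecutive full blocks (assumed 30M gas limit).
fee = 20 * 10**9  # 20 gwei, an arbitrary starting point
for _ in range(10):
    fee = next_base_fee(fee, 30_000_000, 30_000_000)
```

Under these assumptions, ten consecutive full blocks a little more than triple the base fee, which is why sustained demand spikes translate into steep fee increases within minutes.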

Theory

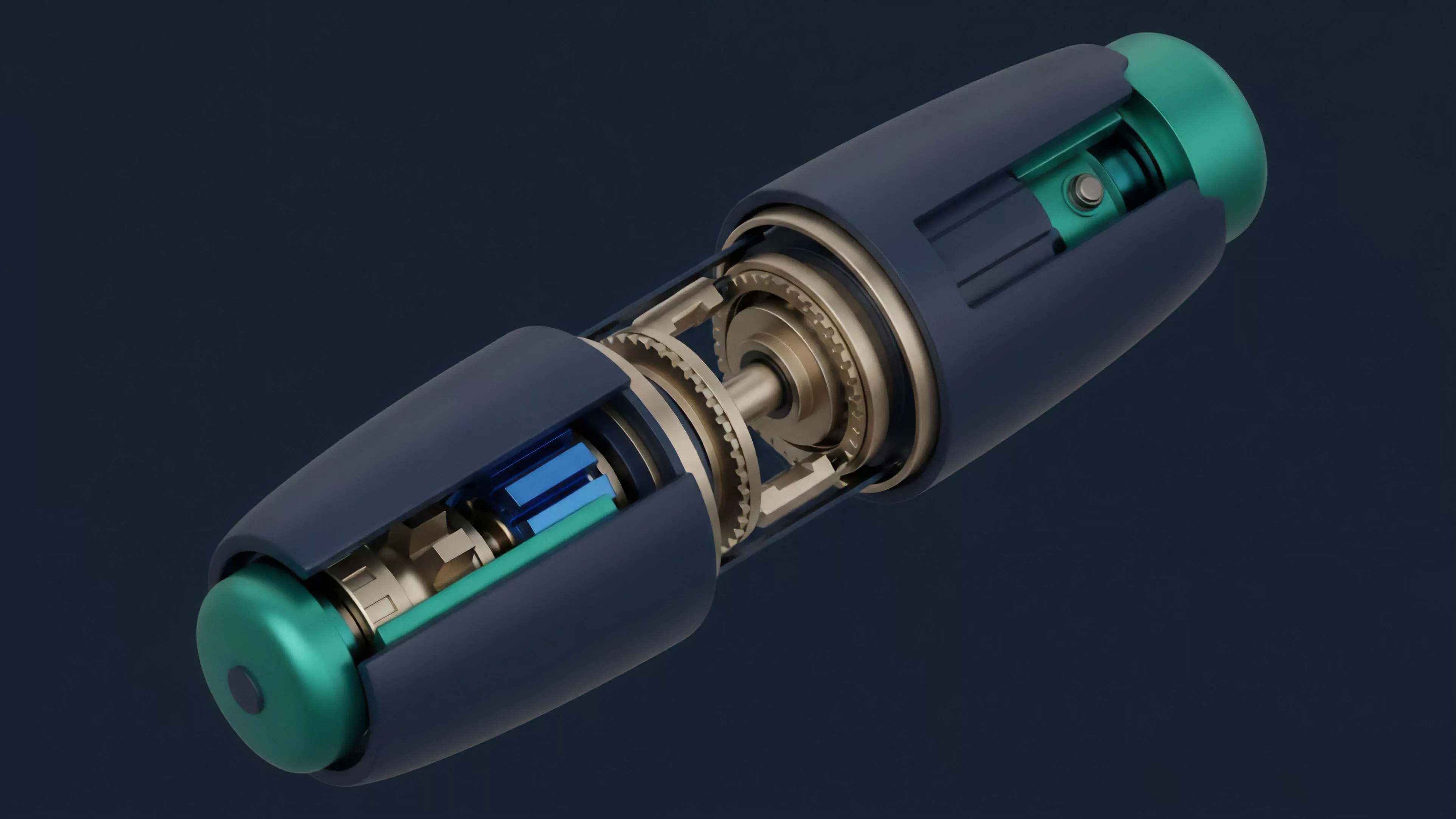

The mechanics of congestion rely on the interaction between the mempool, the block builder, and the proposer. Since EIP-1559, every transaction must cover the protocol-set base fee, and ordering among eligible transactions is decided by a first-price-style auction on the priority fee, effectively creating a tiered access structure.

Market Microstructure

The mempool functions as an open auction for block space. Participants seeking rapid inclusion must pay a priority fee to incentivize block builders to select their transactions over others. This dynamic mirrors high-frequency trading environments where latency carries a direct monetary premium.
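
A minimal sketch of that selection process, assuming a builder that simply ranks pending transactions by the tip it would actually receive and fills the block greedily. The `Tx` type and `build_block` function are hypothetical names for illustration; real builders also respect per-account nonce ordering and optimize for extractable value, which this sketch ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    sender: str
    gas_limit: int
    max_fee_per_gas: int           # most the sender will pay per gas, base fee included
    max_priority_fee_per_gas: int  # most the sender will tip per gas


def effective_tip(tx: Tx, base_fee: int) -> int:
    """Tip per gas the builder receives once the base fee is covered."""
    return min(tx.max_priority_fee_per_gas, tx.max_fee_per_gas - base_fee)


def build_block(mempool: list[Tx], base_fee: int, block_gas_limit: int) -> list[Tx]:
    """Greedy selection: highest effective tip first, until the gas limit is reached."""
    # Transactions that cannot cover the base fee are not eligible at all.
    eligible = [tx for tx in mempool if tx.max_fee_per_gas >= base_fee]
    eligible.sort(key=lambda tx: effective_tip(tx, base_fee), reverse=True)

    included, gas_left = [], block_gas_limit
    for tx in eligible:
        if tx.gas_limit <= gas_left:
            included.append(tx)
            gas_left -= tx.gas_limit
    return included


# Illustrative usage with made-up numbers (fees in gwei for readability).
pending = [
    Tx("a", 60_000, max_fee_per_gas=50, max_priority_fee_per_gas=5),
    Tx("b", 60_000, max_fee_per_gas=22, max_priority_fee_per_gas=10),
    Tx("c", 40_000, max_fee_per_gas=30, max_priority_fee_per_gas=3),
    Tx("d", 21_000, max_fee_per_gas=10, max_priority_fee_per_gas=1),
]
block = build_block(pending, base_fee=20, block_gas_limit=100_000)
```

Note that transaction `b` advertises the largest priority fee but is capped by its low maximum fee, so it ranks last among the eligible transactions and is left out once the block is full.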

Transaction priority fees create an auction-based barrier to entry that disproportionately affects low-value or latency-insensitive operations.

Protocol Physics

The consensus mechanism imposes temporal constraints on settlement, because blocks are produced only at fixed slot intervals. During high-traffic periods, the time to inclusion increases as pending transactions accumulate in the mempool, causing a divergence between submission time and execution time. This lag creates significant risk for automated derivative engines relying on oracle price feeds, since price updates and the liquidations that depend on them may land later than intended.
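
The gap between submission and execution can be illustrated with a toy queue model. It assumes a fixed 12-second slot, a fixed gas capacity per slot, and a transaction that bids no more than the rest of the queue, so it waits for the whole backlog to clear. The function name and the demand figures are hypothetical, and the model ignores fee-based reordering entirely.

```python
SLOT_SECONDS = 12  # proof-of-stake slot time


def inclusion_delays(gas_demand_per_slot: list[int], gas_capacity_per_slot: int) -> list[int]:
    """Seconds until a transaction arriving last in each slot would be included."""
    backlog = 0
    delays = []
    for demand in gas_demand_per_slot:
        backlog += demand
        # Ceiling division: number of slots needed to drain the current backlog.
        slots_to_clear = -(-backlog // gas_capacity_per_slot)
        delays.append(slots_to_clear * SLOT_SECONDS)
        # One slot's worth of capacity is consumed before the next arrivals.
        backlog = max(0, backlog - gas_capacity_per_slot)
    return delays


# Illustrative usage: demand in millions of gas per slot against 30M of capacity.
delays = inclusion_delays([10, 50, 50, 10], gas_capacity_per_slot=30)
```

In this example the delay keeps growing for as long as demand exceeds capacity and only starts to shrink after demand falls back, which is the lag that a time-sensitive oracle update would experience.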

Approach

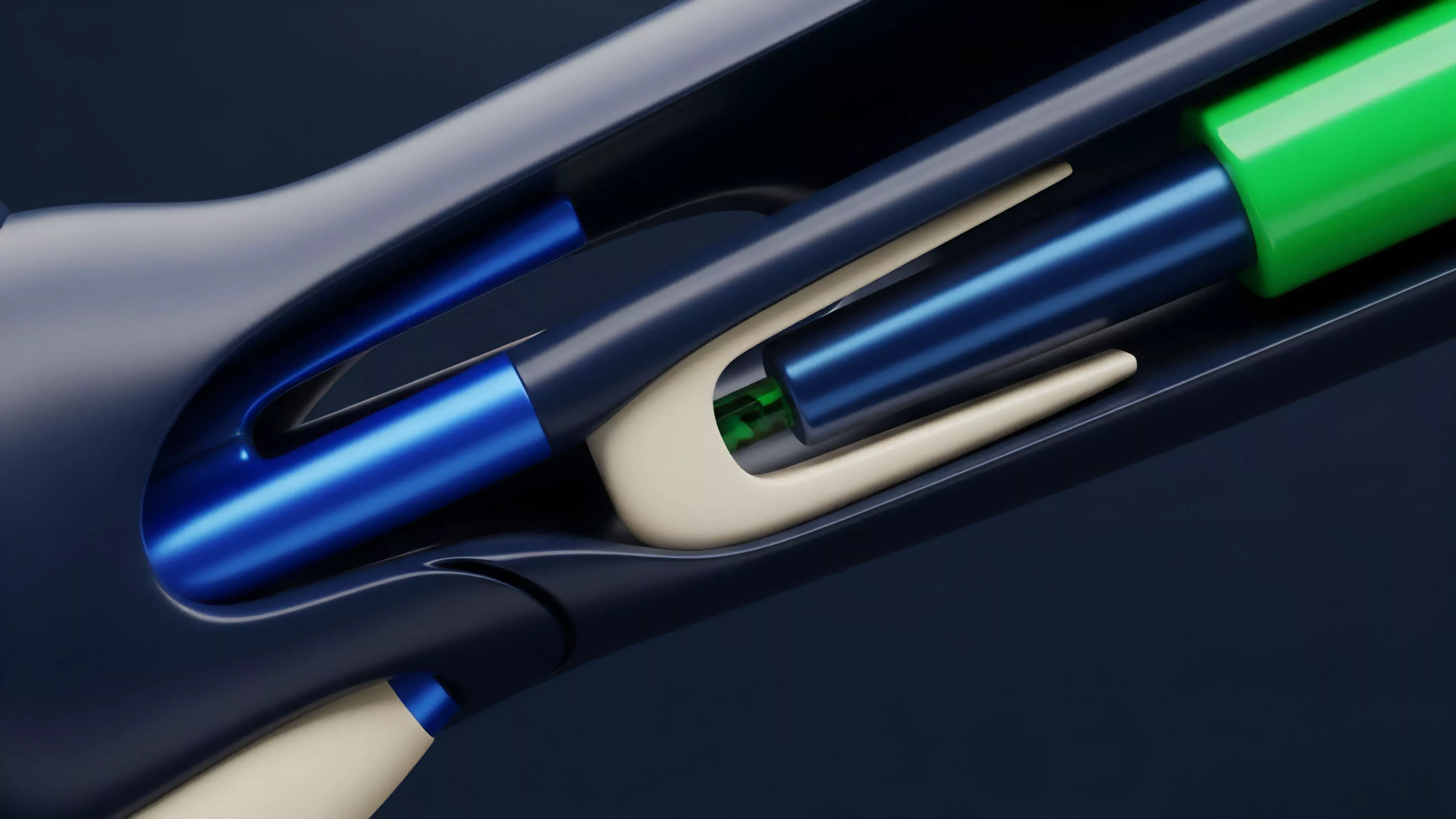

Current strategies for mitigating the impact of congestion focus on off-chain scaling and optimized transaction construction.

Market participants deploy sophisticated middleware to manage the volatility of execution costs.

| Strategy | Mechanism | Risk Profile |
| --- | --- | --- |
| Layer 2 Rollups | Batching transactions off-chain | Bridge security |
| Gas Token Hedging | Locking gas prices via derivatives | Counterparty risk |
| Flashbots Bundles | Direct builder submission | Mempool exclusion |

Professional entities use gas-prediction algorithms to avoid overpaying for inclusion and to keep transactions from stalling or failing. These tools analyze historical block utilization and mempool depth to estimate optimal bid prices, so that execution costs stay controlled even when base layer fees spike.
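
As a rough sketch of how such an estimator might work, the function below derives a bid from recent history only: it takes a percentile of recently paid tips and adds headroom for the base fee rising at its maximum rate of 12.5% per block. The name `suggest_fees`, its parameters, and the default values are assumptions for illustration; production tools also weigh mempool depth, which is omitted here.

```python
def percentile(values: list[int], pct: int) -> int:
    """Nearest-rank percentile using integer arithmetic only."""
    ordered = sorted(values)
    rank = max(1, (pct * len(ordered) + 99) // 100)  # ceiling of pct% of n
    return ordered[rank - 1]


def suggest_fees(
    recent_base_fees: list[int],
    recent_tips: list[int],
    tip_percentile: int = 60,
    headroom_blocks: int = 6,
) -> dict[str, int]:
    """Suggest EIP-1559 fee fields (wei per gas) from recent history."""
    if not recent_base_fees or not recent_tips:
        raise ValueError("need at least one base fee and one tip sample")

    tip = percentile(recent_tips, tip_percentile)

    # Worst case: every upcoming block is full, so the base fee grows by 1/8 each time.
    worst_base_fee = recent_base_fees[-1]
    for _ in range(headroom_blocks):
        worst_base_fee += worst_base_fee // 8

    return {
        "max_priority_fee_per_gas": tip,
        "max_fee_per_gas": worst_base_fee + tip,
    }


# Illustrative usage with small made-up numbers.
fees = suggest_fees([70, 75, 80], [1, 2, 3, 4, 5], tip_percentile=60, headroom_blocks=2)
```

A higher `tip_percentile` buys faster inclusion at a higher price, while `headroom_blocks` controls how long the transaction stays valid if the base fee keeps climbing. Any unused part of the maximum fee is not charged, so generous headroom costs nothing when fees stay flat.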

Evolution

The transition from proof-of-work to proof-of-stake shifted the congestion landscape by replacing energy-intensive mining with validator-driven block production. The change did not by itself raise base layer throughput, but it altered the incentive structures for transaction ordering and created new avenues for extractable value.

Protocol evolution moves the bottleneck from energy expenditure to validator-controlled sequencing power.

Historical cycles demonstrate that congestion acts as a catalyst for innovation. Every major period of extreme fee pressure accelerated the adoption of scaling solutions. The ecosystem has moved from reliance on the base layer for all activities toward a modular architecture where the base layer provides only settlement and security, while execution migrates to specialized environments.

Horizon

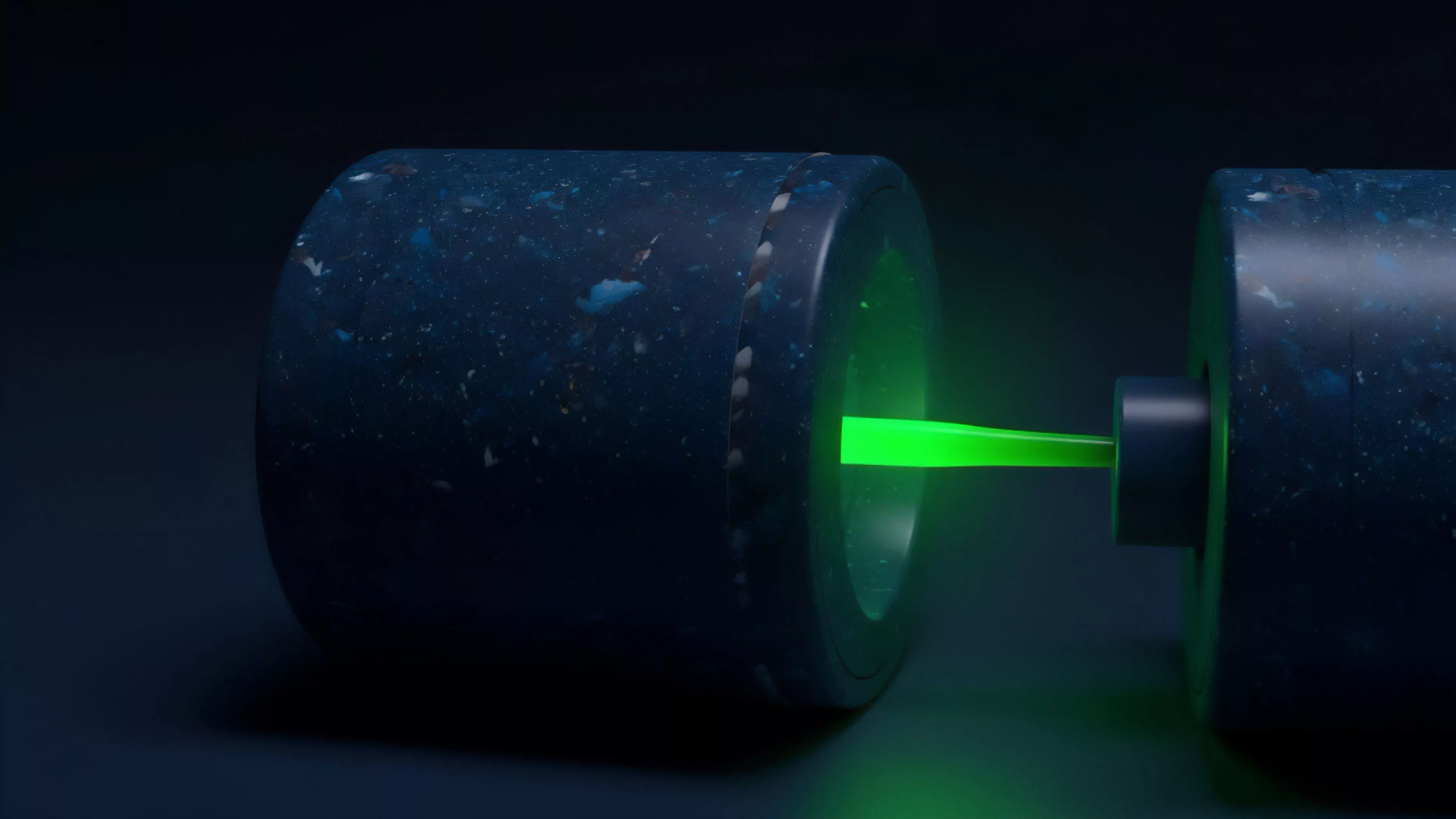

Future developments center on danksharding and the implementation of more efficient data availability layers.

These architectural changes aim to decouple the cost of data storage from the cost of execution, fundamentally altering the economics of network usage.

- Danksharding promises massive increases in data throughput for rollups.

- Account Abstraction allows for sophisticated gas management logic at the wallet level.

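- Execution Sharding, which would split transaction execution across parallel shards, represents the theoretical limit of horizontal scaling for the network, though the rollup-centric roadmap has deprioritized it.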

The long-term trajectory suggests a future where base layer congestion becomes a specialized market for high-value settlement, while routine activity occurs within highly optimized, scalable environments. The ultimate challenge remains the maintenance of censorship resistance while expanding throughput.