Essence

Distributed Systems Resilience is the structural capacity of a decentralized financial network to maintain operational continuity, data integrity, and deterministic state transitions under adversarial conditions or systemic shocks. It is the inverse of fragility in programmable money environments.

Distributed Systems Resilience defines the ability of a decentralized protocol to sustain core financial functions despite node failures or malicious interference.

The architecture relies on a high degree of fault tolerance, so that the consensus mechanism continues to finalize transactions even when some participants drop offline or act in bad faith. Within crypto derivatives, this keeps margin calls, liquidations, and settlement processes executable and reduces the risk of the cascading failures that can follow when a centralized intermediary fails.
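The fault-tolerance claim above can be made concrete with the classical bound from Byzantine fault tolerance research: a network of n validators can tolerate at most f Byzantine validators when n ≥ 3f + 1. The sketch below is illustrative only. The function names are hypothetical and do not come from any particular protocol, and real consensus engines usually weight votes by stake rather than counting validators.

```python
def max_byzantine_faults(n: int) -> int:
    """Largest f satisfying n >= 3f + 1 for a set of n validators."""
    if n < 1:
        raise ValueError("validator count must be positive")
    return (n - 1) // 3


def quorum_size(n: int) -> int:
    """Smallest vote count such that any two quorums share an honest validator.

    Two quorums of size q overlap in at least 2q - n validators; requiring
    that overlap to exceed f gives q = floor((n + f) / 2) + 1.
    """
    f = max_byzantine_faults(n)
    return (n + f) // 2 + 1


def can_finalize(n: int, responsive_honest: int) -> bool:
    """True if enough honest validators are online to form a quorum."""
    return responsive_honest >= quorum_size(n)


if __name__ == "__main__":
    for n in (4, 7, 100):
        print(n, max_byzantine_faults(n), quorum_size(n))
```

With 100 equally weighted validators, this model tolerates 33 faulty nodes and needs 67 matching votes to finalize, so settlement can proceed as long as 67 honest validators stay responsive.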

Origin

The genesis of this concept traces back to Byzantine Fault Tolerance research in distributed computing. Developers recognized that in an environment lacking a trusted central authority, the system must achieve consensus through peer validation.

- Byzantine Fault Tolerance: The requirement for a network to reach agreement even when some nodes provide conflicting information.

- Decentralized Infrastructure: The move from siloed data centers to distributed validator sets, reducing single points of failure.

- Financial Cryptography: The integration of cryptographic proofs into transaction validation, ensuring non-repudiation and state consistency.

These foundations evolved as decentralized exchanges and lending protocols sought to mitigate risks inherent in automated market makers and smart contract-based margin engines. The objective remains the elimination of central points of control that historically acted as systemic bottlenecks during periods of extreme volatility.

Theory

Mathematical modeling of Distributed Systems Resilience involves analyzing the probability of state divergence across a validator set. Protocols employ game-theoretic mechanisms, such as slashing conditions and staking requirements, to align validator incentives with network stability.

Systemic robustness depends on the mathematical probability of network consensus persisting despite arbitrary node failure or malicious collusion.
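As a rough illustration of this kind of modeling, the sketch below estimates the probability that a validator set contains more Byzantine members than the classical n ≥ 3f + 1 bound allows. It assumes each validator is independently faulty with probability p, a simplification that ignores stake weighting and coordinated collusion. The function name is hypothetical.

```python
from math import comb


def prob_too_many_faults(n: int, p: float) -> float:
    """Probability that more than f = (n - 1) // 3 of n validators are faulty.

    Assumes each validator is faulty independently with probability p,
    so the number of faulty validators follows a Binomial(n, p) distribution.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be a probability")
    f = (n - 1) // 3
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(f + 1, n + 1)
    )


if __name__ == "__main__":
    # Compare set sizes at the same per-validator fault probability.
    for n in (10, 100, 400):
        print(n, prob_too_many_faults(n, 0.2))
```

Under this independence assumption, and with p below one third, larger validator sets make it less likely that the fault threshold is exceeded. Correlated failures, such as many validators sharing one cloud provider or client implementation, would break that assumption.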

The following table illustrates the trade-offs inherent in different consensus designs:

| Architecture | Failure Tolerance | Latency Impact |
| --- | --- | --- |
| Synchronous BFT | High | High |
| Asynchronous BFT | Very High | Variable |
| Probabilistic Consensus | Moderate | Low |

The theory assumes an adversarial environment where participants maximize their own utility. Consequently, the protocol must encode rules that penalize non-compliant behavior, ensuring that the cost of attacking the system exceeds the potential gain. This dynamic maintains the integrity of the derivative settlement layer.
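The deterrence condition described above, that the cost of an attack should exceed its potential gain, can be written as a simple inequality. The sketch below is a toy model under stated assumptions: the only cost to the attacker is slashed stake, and the class and field names are hypothetical rather than taken from any specific protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AttackScenario:
    total_stake: float      # value of all stake securing the network
    attacker_share: float   # fraction of stake the attacker must control
    slash_fraction: float   # fraction of the attacker's stake that is slashed
    expected_gain: float    # value the attacker expects to extract


def attack_cost(s: AttackScenario) -> float:
    """Stake the attacker expects to lose to slashing."""
    return s.total_stake * s.attacker_share * s.slash_fraction


def is_deterred(s: AttackScenario) -> bool:
    """True when the slashing cost exceeds the expected gain."""
    return attack_cost(s) > s.expected_gain


if __name__ == "__main__":
    strict = AttackScenario(1_000_000, 0.34, 1.0, 100_000)
    lenient = AttackScenario(1_000_000, 0.34, 0.1, 100_000)
    print(is_deterred(strict), is_deterred(lenient))
```

In this model, full slashing makes the example attack unprofitable, while a 10% penalty does not. A fuller analysis would also account for the cost of acquiring stake, the chance of detection, and gains captured outside the protocol, for example through derivative positions.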

Approach

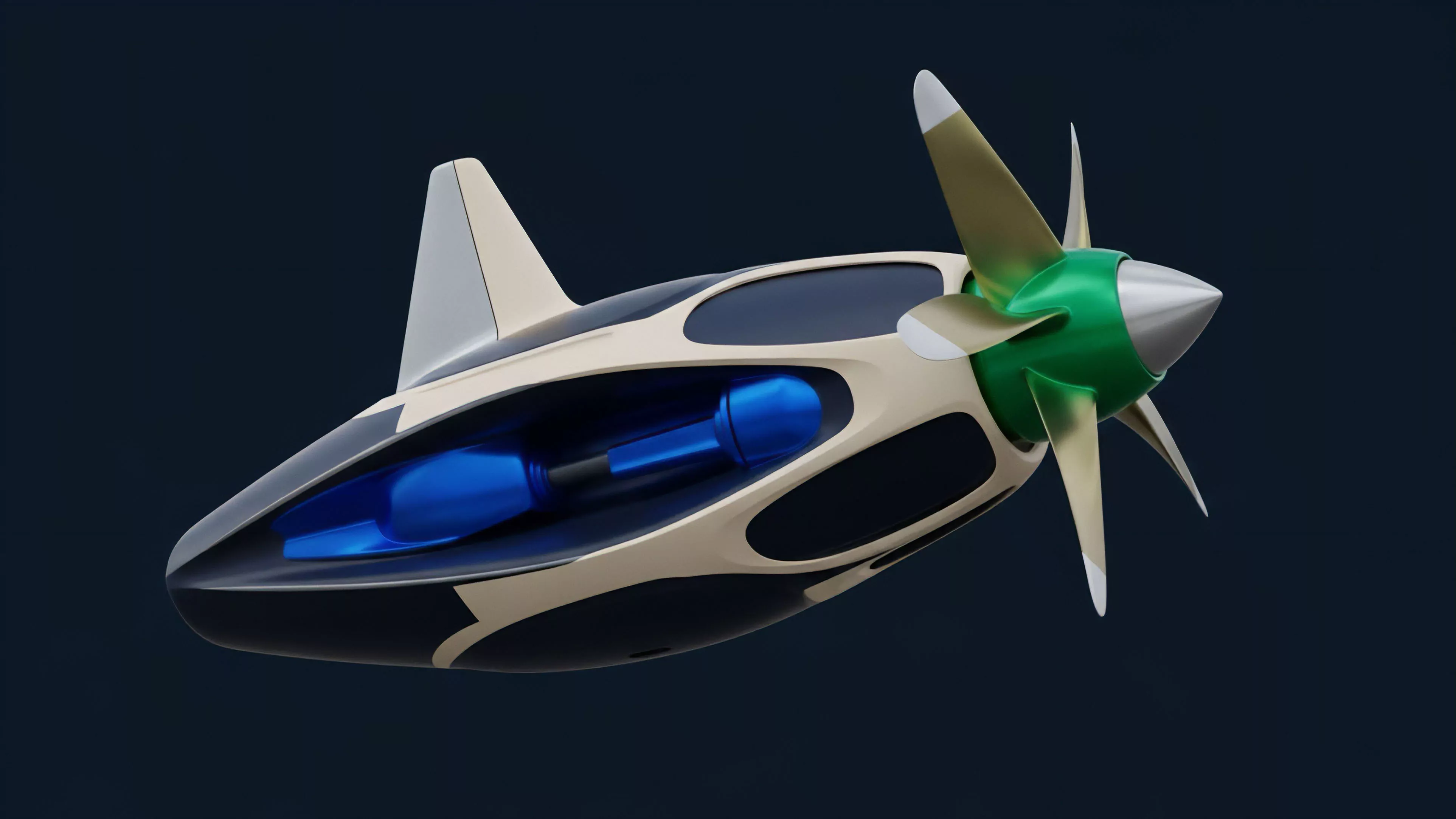

Current implementations prioritize modularity and redundancy.

Protocols split critical functions, such as order matching, price discovery, and collateral management, across different layers or specialized validator sets. This prevents a localized failure in one component from halting the entire derivative pipeline.

- State Sharding: Dividing the network state into smaller, manageable pieces to increase throughput and limit the impact of node failures.

- Redundant Validation: Requiring multiple independent attestations for every derivative contract update, ensuring settlement accuracy.

- Automated Liquidation Engines: Using decentralized oracles that supply continuous, tamper-resistant price feeds so that margin calls and liquidations trigger without human intervention.
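The redundant-validation and automated-liquidation ideas in the list above can be combined in a small sketch: several independent price reports are aggregated with a median, and the resulting mark price drives a maintenance-margin check. This is a minimal illustration under stated assumptions. The function names, the three-report minimum, and the 5% maintenance ratio are all hypothetical, not taken from any specific protocol.

```python
from statistics import median
from typing import Sequence


def aggregate_price(reports: Sequence[float], min_reports: int = 3) -> float:
    """Median of independent oracle reports; refuses to act on too few."""
    if len(reports) < min_reports:
        raise ValueError("not enough independent price reports")
    return float(median(reports))


def equity(collateral: float, size: float, entry: float, mark: float) -> float:
    """Account equity: collateral plus unrealized PnL (size > 0 means long)."""
    return collateral + size * (mark - entry)


def should_liquidate(
    collateral: float,
    size: float,
    entry: float,
    mark: float,
    maintenance_ratio: float = 0.05,  # assumed 5% of position notional
) -> bool:
    """True when equity falls below the maintenance margin requirement."""
    required = maintenance_ratio * abs(size) * mark
    return equity(collateral, size, entry, mark) < required


if __name__ == "__main__":
    # One report is wildly off; the median is not dragged toward it.
    mark = aggregate_price([100.0, 101.0, 500.0])
    print(mark, should_liquidate(10.0, 1.0, 100.0, mark))
```

The median is used here because a minority of faulty or manipulated reports cannot move it arbitrarily, which mirrors the fault-tolerance reasoning applied to consensus. Production oracle networks typically add staleness checks and deviation limits on top of this.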

Market makers now use these resilient infrastructures to manage complex Greek exposures, on the expectation that the underlying settlement protocol will keep executing during network congestion. The focus is on keeping liquidation thresholds reliable regardless of broader market conditions.

Evolution

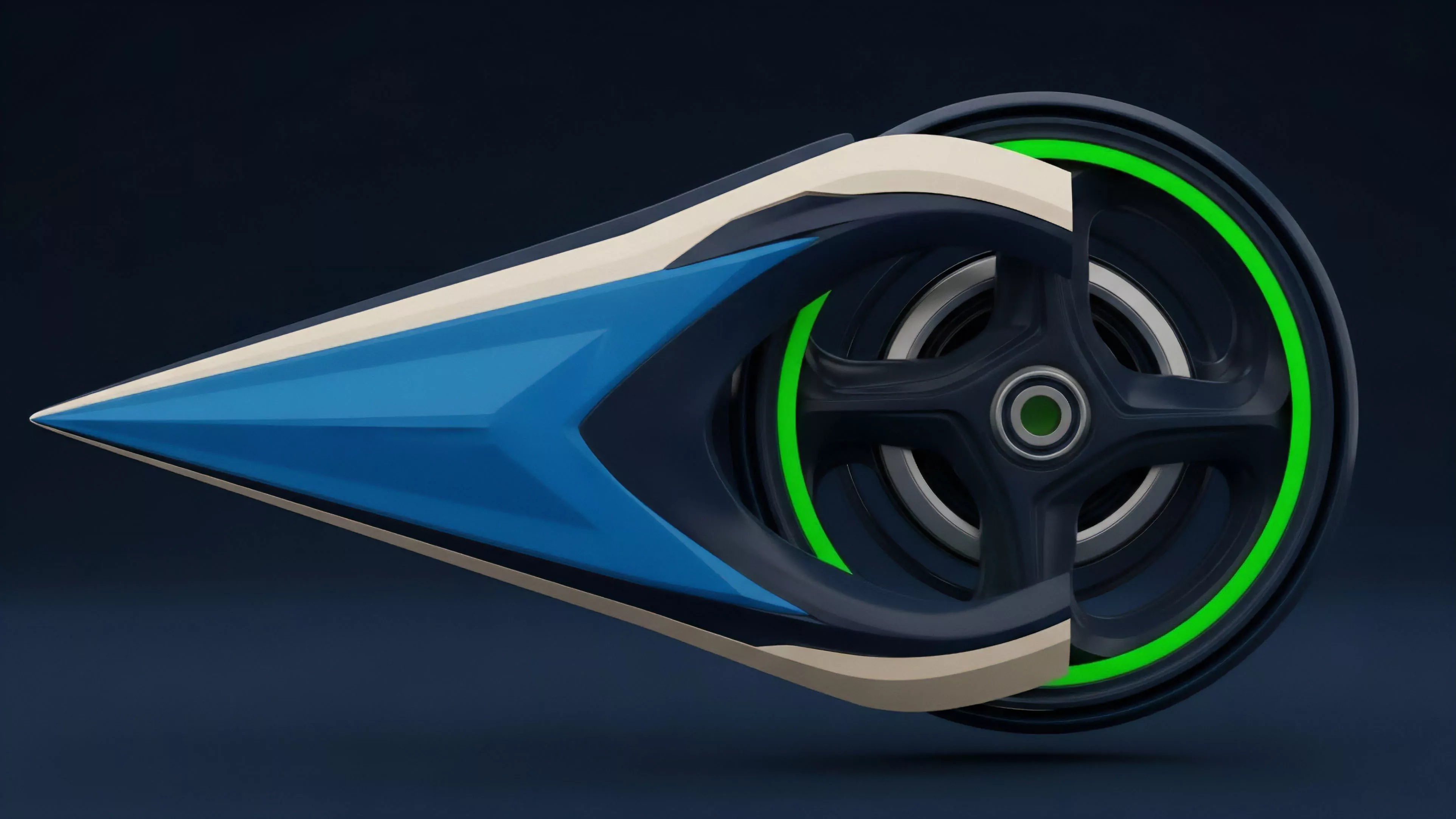

Early decentralized systems suffered from significant downtime and throughput limitations. Improvements in consensus algorithms, such as the shift from proof-of-work to proof-of-stake, drastically increased the economic cost of network disruption.

Evolution of network design has moved from basic uptime requirements to sophisticated economic finality and adversarial resistance.

The transition reflects a maturation where protocols now treat latency as a critical component of risk. The industry moved from simplistic, single-chain designs to multi-chain interoperability, where assets and derivative positions can migrate across protocols to maintain liquidity. This shift allows for a more fluid allocation of capital, reducing the risk of contagion when one segment of the decentralized market experiences technical strain.

Horizon

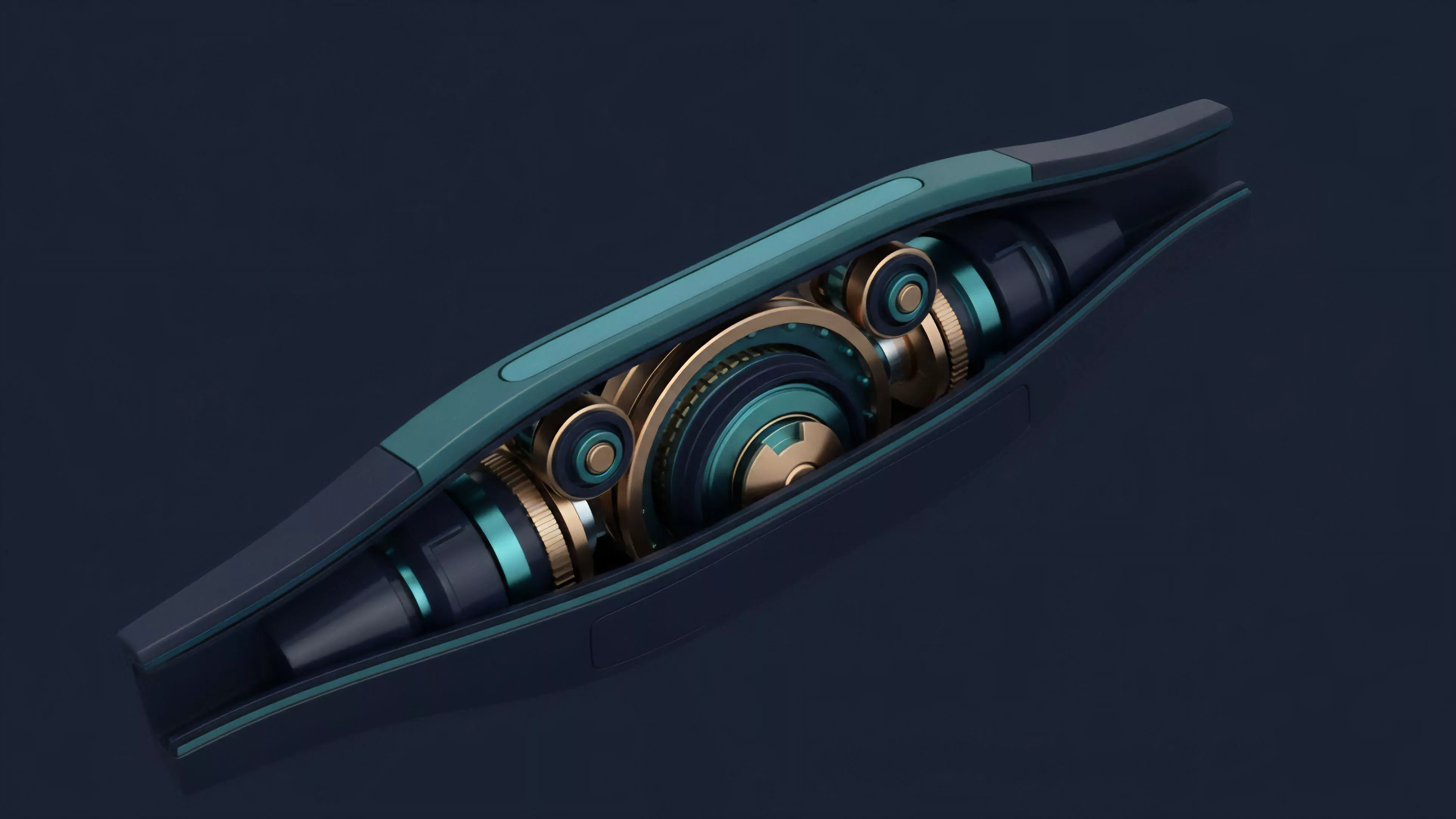

Future developments center on zero-knowledge proof integration to enhance privacy without sacrificing the transparency required for auditability.

Researchers are building protocols capable of self-healing, where the system autonomously reconfigures its validator set in response to detected attacks or prolonged inactivity.

- Zero-Knowledge Rollups: Executing transactions off-chain and posting compressed transaction data together with a validity proof, which maintains state integrity while reducing the computational load on the base layer.

- Self-Healing Protocols: Algorithmic responses to network stress that adjust parameter thresholds dynamically.

- Cross-Chain Atomic Settlement: Ensuring derivative contracts remain valid even when the underlying assets exist on disparate, independent blockchains.
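The self-healing idea in the list above can be sketched as a reconfiguration rule: validators that have been silent for too long are evicted and standby validators are promoted to restore the set size. This is a hypothetical illustration, not a description of any deployed protocol. The function name, the epoch-based inactivity measure, and the promotion order are all assumptions.

```python
from typing import Dict, List, Tuple


def reconfigure(
    last_seen: Dict[str, int],   # validator id -> last epoch it participated
    standby: List[str],          # ordered queue of replacement validators
    current_epoch: int,
    max_idle_epochs: int,
) -> Tuple[Dict[str, int], List[str], List[str]]:
    """Evict validators idle for more than max_idle_epochs, then refill.

    Returns (new active set, evicted ids, remaining standby queue).
    """
    evicted = [
        v for v, seen in last_seen.items()
        if current_epoch - seen > max_idle_epochs
    ]
    active = {v: seen for v, seen in last_seen.items() if v not in evicted}
    remaining = list(standby)  # copy so the caller's queue is not mutated
    # Promote standby validators until the set is back to its original size.
    while len(active) < len(last_seen) and remaining:
        active[remaining.pop(0)] = current_epoch
    return active, evicted, remaining


if __name__ == "__main__":
    seen = {"a": 10, "b": 10, "c": 3, "d": 9}
    print(reconfigure(seen, ["e", "f"], current_epoch=10, max_idle_epochs=5))
```

Keeping the set size constant preserves the fault-tolerance margin after an eviction. A real design would also need the reconfiguration itself to be agreed through consensus, so that an attacker cannot use it to remove honest validators.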

The trajectory points toward a global, permissionless derivative market that functions with the reliability of legacy systems but without the structural vulnerabilities of centralized clearinghouses. This evolution will define the next phase of institutional participation in decentralized finance.

How can decentralized systems maintain performance under extreme load without sacrificing the security guarantees that justify their existence?