Essence

Distributed Ledger Technology functions as a decentralized state machine that maintains a synchronized, immutable record of transactions across a peer-to-peer network. It eliminates the requirement for a central authority by distributing the validation process among multiple participants, ensuring that the ledger remains consistent even in adversarial environments. This architecture relies on cryptographic primitives to secure data integrity and establish a canonical history of state transitions.

Distributed Ledger Technology establishes a shared reality in trustless environments through algorithmic verification.

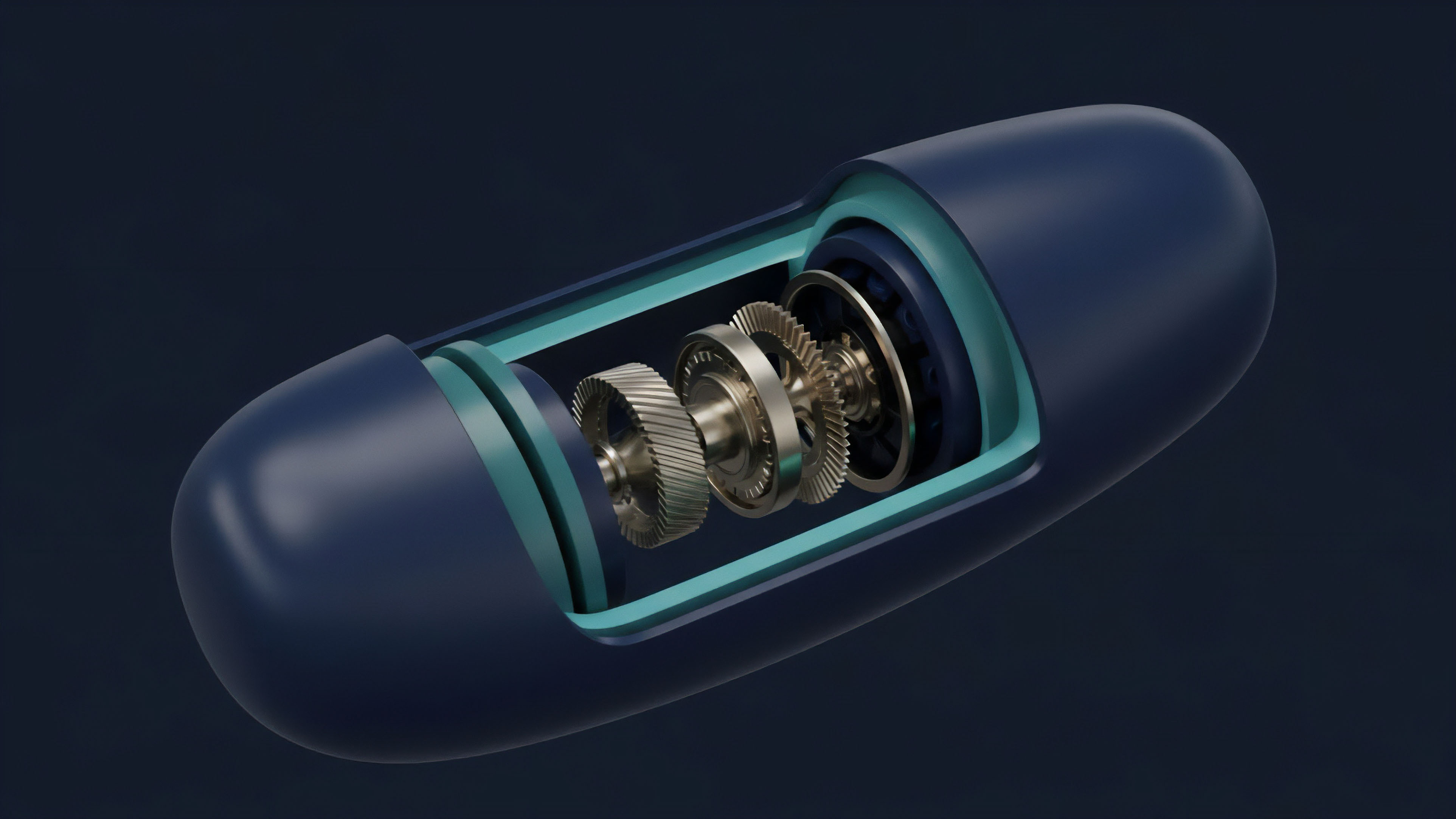

The system operates through a continuous cycle of broadcasting, validating, and committing data. Each full node maintains a local copy of the ledger and updates it as new entries are approved through a consensus mechanism. This structure makes the ledger resistant to tampering and censorship: a rewritten record is rejected by honest nodes unless the attacker controls a majority of the network's validating power (hash power or stake, depending on the protocol), which is designed to be computationally or economically prohibitive.
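The tamper-evidence property can be illustrated with a hash-linked list of blocks. This is a minimal sketch, assuming SHA-256 and a JSON encoding; the function names (`block_hash`, `append_block`, `is_valid`) and the record format are hypothetical and not taken from any specific protocol.

```python
import hashlib
import json


def block_hash(index: int, prev_hash: str, entries: list[str]) -> str:
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "prev_hash": prev_hash, "entries": entries},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode()).hexdigest()


def append_block(chain: list[dict], entries: list[str]) -> None:
    """Commit a new block that points at the current chain tip."""
    prev_hash = chain[-1]["hash"] if chain else "0" * 64
    index = len(chain)
    chain.append(
        {
            "index": index,
            "prev_hash": prev_hash,
            "entries": entries,
            "hash": block_hash(index, prev_hash, entries),
        }
    )


def is_valid(chain: list[dict]) -> bool:
    """Recompute every hash and check each link to the previous block."""
    prev_hash = "0" * 64
    for block in chain:
        if block["prev_hash"] != prev_hash:
            return False
        expected = block_hash(block["index"], block["prev_hash"], block["entries"])
        if block["hash"] != expected:
            return False
        prev_hash = block["hash"]
    return True


ledger: list[dict] = []
append_block(ledger, ["alice pays bob 5"])
append_block(ledger, ["bob pays carol 2"])
valid_before = is_valid(ledger)

# Tamper with an already committed entry without recomputing any hashes.
ledger[0]["entries"][0] = "alice pays bob 500"
valid_after = is_valid(ledger)
```

Any node that re-validates the chain detects the edit, because the stored hash of the first block no longer matches its contents. An attacker who also recomputes the hashes produces a different chain tip, which is where the consensus mechanism decides which history the network accepts.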

The financial significance of this technology lies in its ability to facilitate atomic settlement and reduce counterparty risk. By embedding the rules of exchange directly into the protocol, Distributed Ledger Technology enables the creation of programmable assets and self-executing contracts. This shift from institutional trust to algorithmic proof allows for the development of complex financial instruments that operate with transparency and efficiency, independent of traditional clearinghouses.

Origin

The conceptual foundations of Distributed Ledger Technology trace back to the early developments in distributed computing and cryptography during the late 20th century.

Researchers sought methods to secure digital communications and create electronic cash systems that did not rely on a single point of failure. The 1982 paper on the Byzantine Generals Problem by Leslie Lamport, Robert Shostak, and Marshall Pease provided the mathematical framework for achieving agreement in networks where some participants might be malicious or unreliable. Subsequent work in the late 1990s built on this: Hashcash by Adam Back used computational work to deter spam, and B-money by Wei Dai proposed a decentralized electronic cash scheme secured in a similar way.

These early attempts at creating decentralized value transfer systems laid the groundwork for the synthesis of linked data structures and consensus algorithms. The integration of Merkle trees, a method for efficiently verifying the integrity of large datasets, allowed for the creation of a chain of blocks that could be audited by any participant. The practical realization of these theories occurred with the deployment of the first blockchain, Bitcoin, in 2009, which combined Proof of Work with a decentralized ledger to solve the double-spending problem.
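How a Merkle tree lets a participant audit one entry without the full dataset can be sketched as follows. The code assumes SHA-256 and the convention of pairing an odd node with itself; production systems differ in hashing and padding details, and the helper names here are illustrative.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    """Root of a binary Merkle tree; an odd node is paired with itself."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [h(leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True if the sibling sits on the right."""
    level = [h(leaf) for leaf in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1  # neighbour within the same pair
        proof.append((level[sibling], sibling > index))
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return proof


def verify_proof(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    """Rebuild the path to the root using only the leaf and its sibling hashes."""
    node = h(leaf)
    for sibling, sibling_is_right in proof:
        node = h(node + sibling) if sibling_is_right else h(sibling + node)
    return node == root


txs = [b"tx-a", b"tx-b", b"tx-c", b"tx-d", b"tx-e"]
root = merkle_root(txs)
proof = merkle_proof(txs, 2)  # inclusion proof for b"tx-c"
```

The proof grows with the logarithm of the number of entries, so a verifier holding only `root` can check `verify_proof(b"tx-c", proof, root)` with three hashes here rather than rehashing all five transactions.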

This event marked the transition from theoretical research to a functional financial operating system. Since then, the technology has diversified into various forms, including private, permissioned ledgers and public, permissionless networks, each tailored to specific security and performance requirements.

Theory

The theoretical framework of Distributed Ledger Technology is constrained by the CAP Theorem, which states that a distributed system cannot simultaneously guarantee Consistency, Availability, and Partition Tolerance. Because network partitions cannot be ruled out in practice, the real choice arises during a partition: the system must give up either consistency or availability. In the context of financial ledgers, consistency is often prioritized so that all participants see the same state of the ledger, even if this means some nodes stop accepting transactions while the network is divided.

This necessitates a rigorous consensus protocol that defines how nodes agree on the validity of new transactions.

Consensus and Security

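Mathematical models of consensus focus on the probability of reaching a stable state within a specific timeframe. Probabilistic Finality, common in systems using Proof of Work, means that the likelihood of a transaction being reversed decreases exponentially as more blocks are added, provided the attacker controls a minority of the network's hash power. In contrast, Deterministic Finality, found in many Proof of Stake systems, treats a transaction as settled once it is included in a finalized block; the guarantee holds as long as the protocol's fault assumption is met (typically that less than one third of the stake is controlled by faulty validators), and reverting a finalized block would expose the responsible validators to slashing.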
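The exponential decay behind Probabilistic Finality can be made concrete with the attacker catch-up formula from the Bitcoin whitepaper. In this sketch, `q` (the attacker's share of hash power) and `z` (the number of confirmations) follow the whitepaper's notation rather than anything defined in this article, and the model assumes a static attacker share.

```python
import math


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hash-power share q ever overtakes
    the honest chain after the transaction has z confirmations."""
    if not 0.0 <= q <= 1.0 or z < 0:
        raise ValueError("q must be in [0, 1] and z must be non-negative")
    p = 1.0 - q  # honest share of hash power
    if q >= p:
        return 1.0  # a majority attacker eventually catches up
    lam = z * (q / p)  # expected attacker progress while z blocks are mined
    total = 1.0
    for k in range(z + 1):
        poisson = math.exp(-lam) * lam**k / math.factorial(k)
        total -= poisson * (1.0 - (q / p) ** (z - k))
    return total


# Reversal probability for a 10% attacker at increasing confirmation depth.
risk_by_depth = {z: attacker_success_probability(0.10, z) for z in range(0, 7)}
```

With a 10% attacker the probability falls from about 0.20 at one confirmation to well under 0.001 at six, which is why exchanges and payment processors commonly wait for several blocks before treating a Proof of Work payment as settled.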

The security of a ledger relies on the economic or computational cost of altering historical state transitions.

| Mechanism Type | Security Model | Finality Type |
|---|---|---|
| Proof of Work | Computational Resource Expenditure | Probabilistic |
| Proof of Stake | Economic Collateral and Slashing | Deterministic |
| Directed Acyclic Graph | Transaction Referencing Topology | Asynchronous |

Protocol Physics

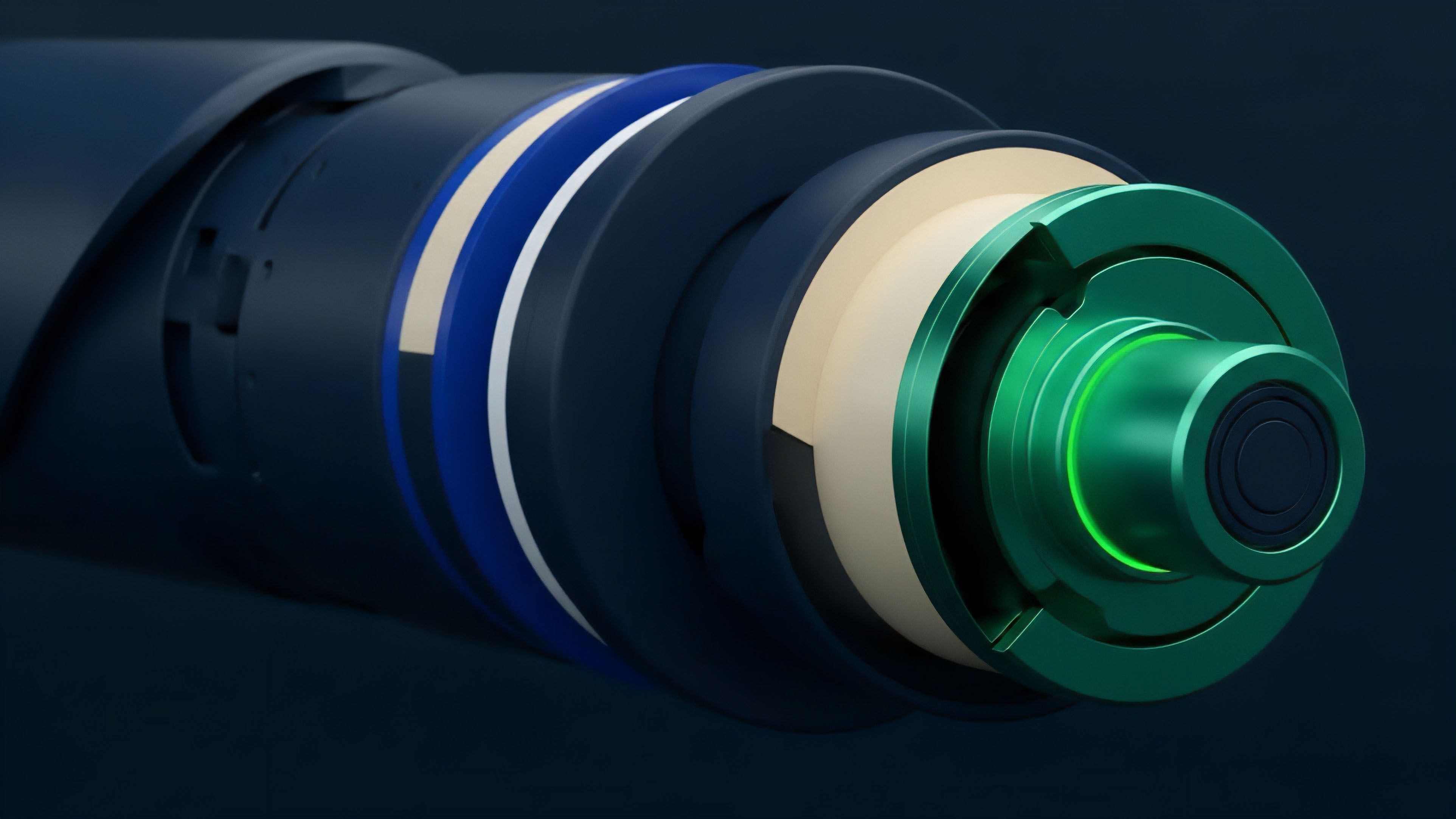

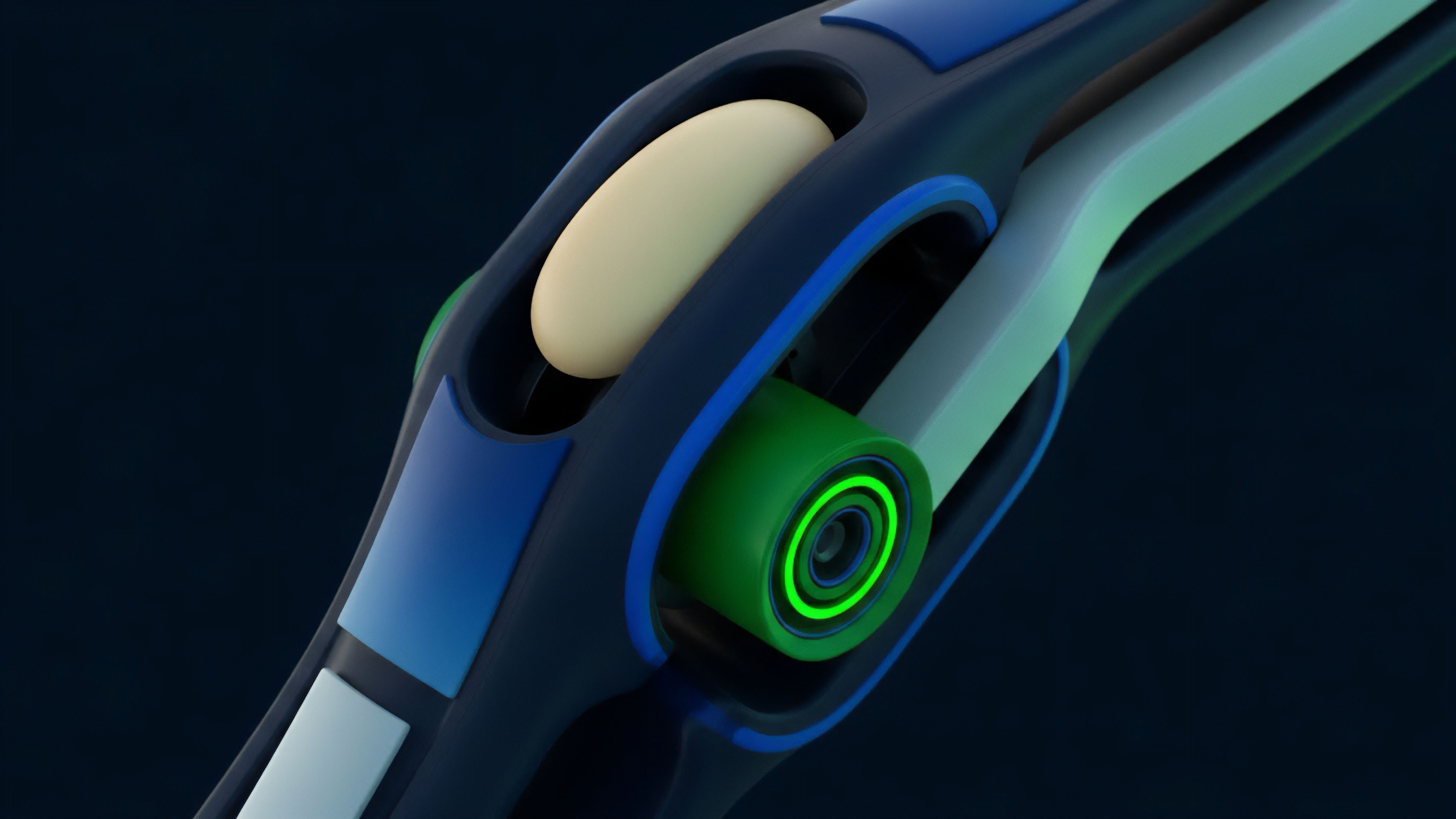

The physical constraints of the network, such as latency, bandwidth, and processing power, determine the throughput and scalability of the ledger. The State Transition Function defines the logic that moves the system from one valid state to the next. Every transaction must be verified against the current state to ensure that the sender has sufficient balance and that the cryptographic signatures are valid.

This process creates a bottleneck in monolithic architectures, where every node must process every transaction.
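A State Transition Function of this kind can be sketched as a pure function from a state and a transaction to a new state. The example below is a simplification under stated assumptions: balances live in a plain dictionary, and the `signature_ok` flag is a hypothetical stand-in for real signature verification, which would require a cryptographic library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    sender: str
    recipient: str
    amount: int
    signature_ok: bool  # stand-in for verifying a real cryptographic signature


def apply_transaction(state: dict[str, int], tx: Transaction) -> dict[str, int]:
    """Return the next state, or raise ValueError if the transaction is invalid.

    The input state is never mutated, so a rejected transaction leaves no trace.
    """
    if not tx.signature_ok:
        raise ValueError("invalid signature")
    if tx.amount <= 0:
        raise ValueError("amount must be positive")
    if state.get(tx.sender, 0) < tx.amount:
        raise ValueError("insufficient balance")
    new_state = dict(state)
    new_state[tx.sender] = new_state[tx.sender] - tx.amount
    new_state[tx.recipient] = new_state.get(tx.recipient, 0) + tx.amount
    return new_state


genesis = {"alice": 10}
after_payment = apply_transaction(genesis, Transaction("alice", "bob", 4, True))

try:
    apply_transaction(after_payment, Transaction("bob", "carol", 50, True))
    overspend_rejected = False
except ValueError:
    overspend_rejected = True
```

In a monolithic design every node runs this function for every transaction, which is the bottleneck described above: throughput is bounded by what a single node can verify.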

Approach

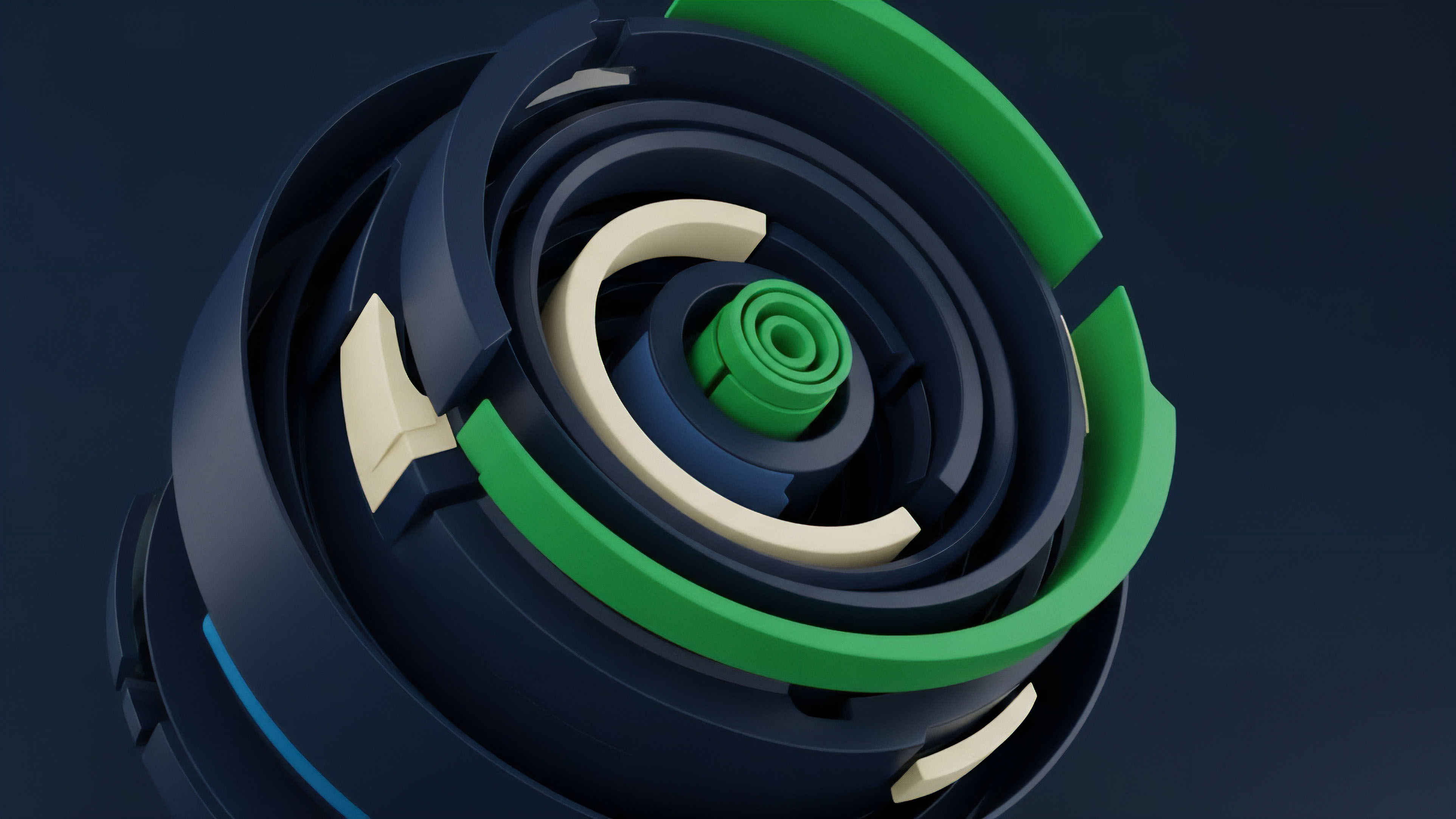

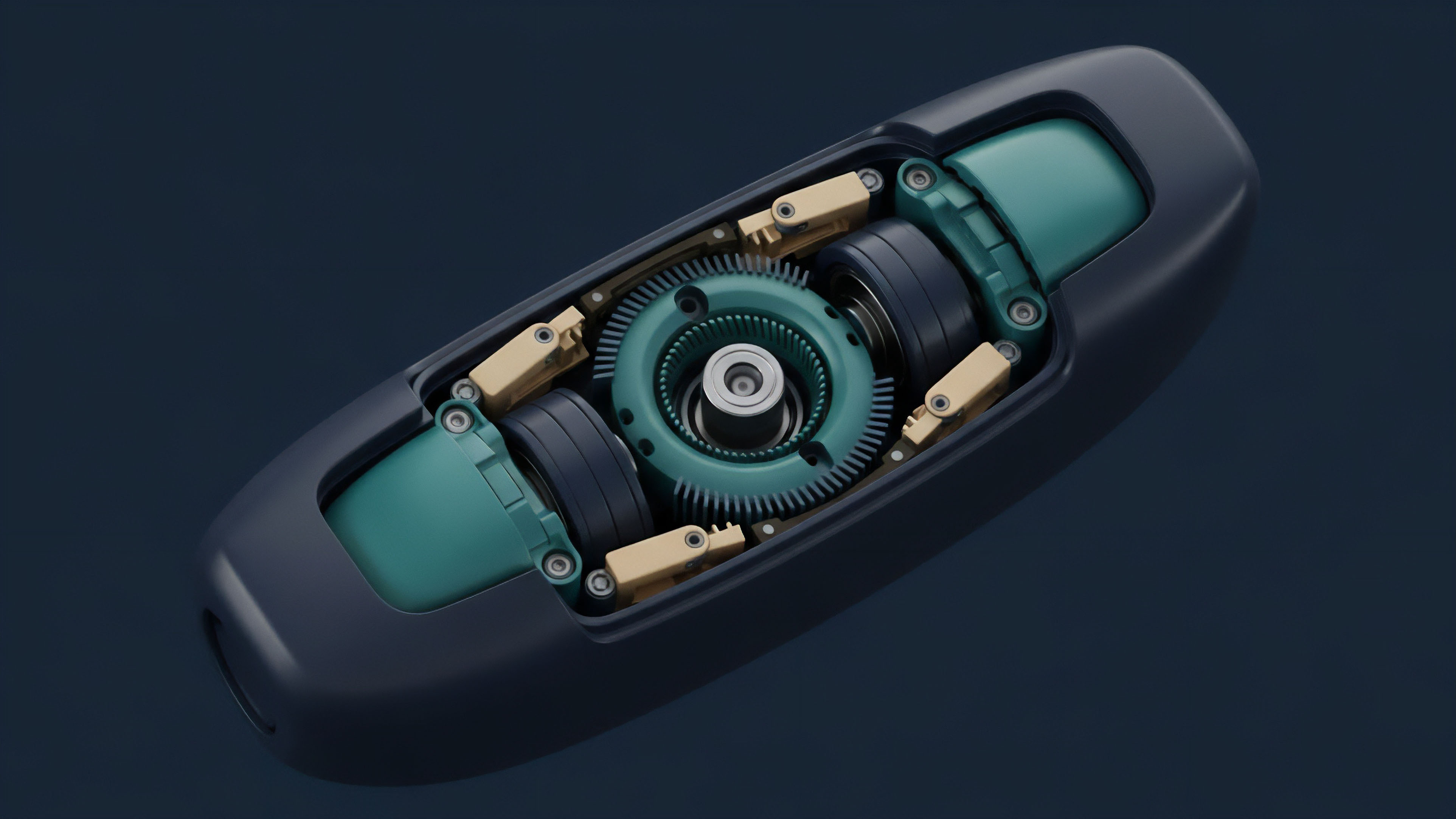

Modern implementations of Distributed Ledger Technology utilize a layered architecture to optimize performance and security. The Execution Layer handles the processing of transactions and the updating of the state, while the Settlement Layer provides the finality and security for the network. By decoupling these functions, developers can create specialized environments that cater to high-frequency trading or complex derivative logic.

Data Availability and Verification

Ensuring that all participants have access to the data required to verify the ledger is a primary challenge. Data Availability Sampling allows nodes to confirm, with high probability, that a block’s data has been published without downloading the entire block. This technique uses erasure coding to add redundancy, so that the full block can be reconstructed from a sufficient fraction of its chunks; a party that wants to withhold data must therefore hide a large share of the chunks, which random sampling is very likely to detect.
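The confidence a sampling node gains can be estimated with a short calculation. This sketch assumes a simple one-dimensional erasure code in which any half of the extended chunks is enough to reconstruct the block, so an attacker must withhold more than half of them; the 0.5 threshold and the function names are assumptions for illustration, and real schemes (for example two-dimensional codes) use different thresholds.

```python
import math


def undetected_withholding_probability(samples: int, available_fraction: float = 0.5) -> float:
    """Upper bound on the chance that every random sample succeeds even though
    the block cannot be reconstructed (fewer than `available_fraction` of chunks published)."""
    if samples < 0 or not 0.0 < available_fraction < 1.0:
        raise ValueError("samples must be >= 0 and available_fraction in (0, 1)")
    return available_fraction**samples


def samples_needed(target: float, available_fraction: float = 0.5) -> int:
    """Smallest number of samples that pushes the bound to `target` or below."""
    if not 0.0 < target < 1.0 or not 0.0 < available_fraction < 1.0:
        raise ValueError("target and available_fraction must be in (0, 1)")
    return math.ceil(math.log(target) / math.log(available_fraction))


bound_after_10 = undetected_withholding_probability(10)
samples_for_one_in_a_billion = samples_needed(1e-9)
```

Under these assumptions, 30 successful samples already bound the chance of being fooled below one in a billion, regardless of block size. That independence from block size is what makes the approach attractive for light nodes.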

- Gossip Protocols: The method by which nodes propagate transaction and block information across the network.

- State Bloom Filters: Probabilistic data structures used to efficiently check whether an element may exist in the ledger state. They can return false positives but never false negatives.

- Light Clients: Nodes that verify the ledger using only block headers, relying on cryptographic proofs for transaction validity.
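The Bloom filter mentioned in the list above can be sketched in a few lines. The sizes, the number of hash functions, and the use of salted SHA-256 are arbitrary choices for illustration, not parameters of any particular ledger.

```python
import hashlib
from collections.abc import Iterator


class BloomFilter:
    """Set-membership filter: may report false positives, never false negatives."""

    def __init__(self, size_bits: int = 1024, num_hashes: int = 3) -> None:
        self.size_bits = size_bits
        self.num_hashes = num_hashes
        self.bits = 0  # a Python int used as a bit array

    def _positions(self, item: bytes) -> Iterator[int]:
        # Derive several bit positions by salting the hash with a counter.
        for i in range(self.num_hashes):
            digest = hashlib.sha256(i.to_bytes(4, "big") + item).digest()
            yield int.from_bytes(digest, "big") % self.size_bits

    def add(self, item: bytes) -> None:
        for pos in self._positions(item):
            self.bits |= 1 << pos

    def might_contain(self, item: bytes) -> bool:
        return all((self.bits >> pos) & 1 for pos in self._positions(item))


seen_addresses = BloomFilter()
seen_addresses.add(b"addr-alice")
seen_addresses.add(b"addr-bob")
```

A `False` result from `might_contain` is definitive, so a node can skip an expensive state lookup; a `True` result only means the element is possibly present and still has to be confirmed against the actual state.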

Quantitative Risk Management

Financial strategies within Distributed Ledger Technology environments must account for Smart Contract Risk and Liquidity Fragmentation. Quantitative models use Value at Risk (VaR) and Expected Shortfall to assess the potential for losses due to protocol failures or market volatility. The transparency of the ledger allows for real-time monitoring of collateral ratios and liquidation thresholds, providing a level of visibility that is absent in traditional finance.
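As a rough illustration of the two risk measures, the following sketch computes historical-simulation Value at Risk and Expected Shortfall from a list of period returns. The tail-size convention (rounding the number of tail observations, with a minimum of one) is one of several in use, and the return series is made-up sample data.

```python
def historical_var_es(returns: list[float], confidence: float = 0.95) -> tuple[float, float]:
    """Historical-simulation VaR and Expected Shortfall, reported as positive losses.

    VaR is the smallest loss within the worst (1 - confidence) share of
    observations; Expected Shortfall is the average loss across that tail.
    """
    if not returns:
        raise ValueError("returns must not be empty")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be in (0, 1)")
    losses = sorted((-r for r in returns), reverse=True)  # worst loss first
    tail_size = max(1, round(len(losses) * (1.0 - confidence)))
    tail = losses[:tail_size]
    value_at_risk = tail[-1]
    expected_shortfall = sum(tail) / len(tail)
    return value_at_risk, expected_shortfall


# Hypothetical daily returns of a collateral asset.
sample_returns = [0.02, -0.01, 0.03, -0.08, 0.01, -0.03, 0.00, 0.015, -0.15, 0.005]
var_80, es_80 = historical_var_es(sample_returns, confidence=0.80)
```

Here the two worst days form the tail, giving an 80% VaR of 8% and an Expected Shortfall of 11.5%. Expected Shortfall is the more conservative figure because it averages over the tail instead of reporting only its edge, which matters for assets prone to sudden liquidation cascades.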

Evolution

The progression of Distributed Ledger Technology has moved from simple, single-purpose ledgers to complex, multi-functional platforms.

The early phase focused on UTXO-based models, which treat transactions as a series of inputs that consume earlier outputs and create new ones. This was followed by the rise of Account-based models, which track balances and contract storage directly and were paired with Turing-complete smart contract languages, allowing for the creation of decentralized applications and autonomous financial protocols.

Modular Architectures

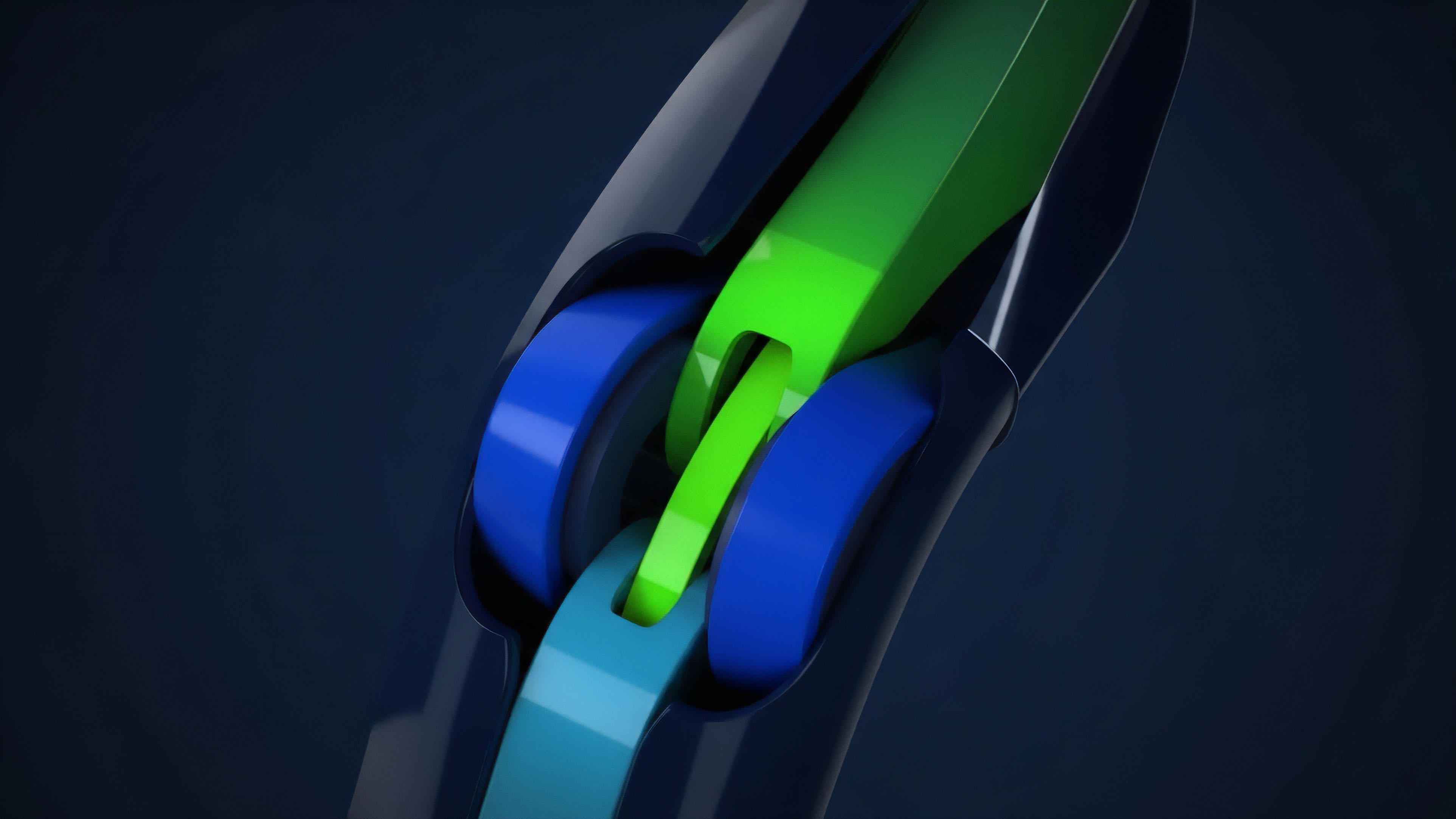

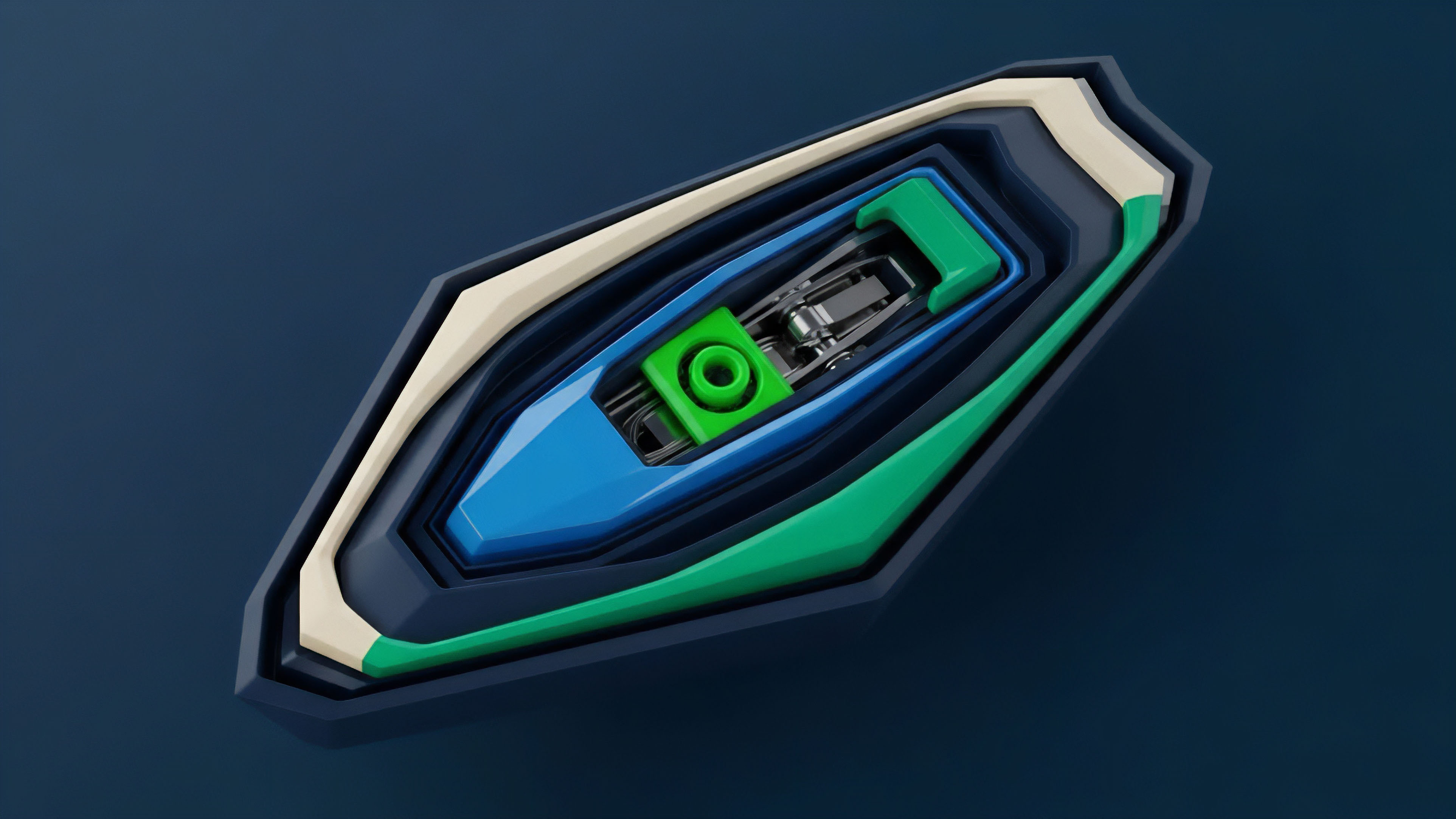

The current state of the technology is defined by a shift toward Modularity. Instead of a single blockchain handling all tasks, the stack is divided into specialized layers for execution, settlement, and data availability. This allows for greater scalability and flexibility, as different protocols can be optimized for specific use cases (such as high-speed derivatives trading or long-term asset storage) while still sharing the security of a robust base layer.

Modular blockchain designs decouple execution from data availability to optimize for scalability and security.

| Architecture | Primary Advantage | Trade-off |
|---|---|---|
| Monolithic | Simple Integration | Limited Scalability |
| Modular | High Throughput | Increased Complexity |
| App-Specific | Customized Logic | Liquidity Isolation |

The emergence of Layer 2 Scaling Solutions, such as Rollups, has further refined the technology. These protocols execute transactions off-chain and then submit compressed transaction data, together with a commitment to the resulting state, to the main ledger. The base layer can then check the result through fraud proofs or validity proofs instead of re-executing every transaction. This reduces the burden on the base layer while inheriting much of its security, enabling a new generation of high-performance financial instruments that aim to compete with centralized exchanges.
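The batching idea can be sketched as follows. This is a toy model under several assumptions: the state commitment is a flat SHA-256 hash instead of a Merkle-style tree, `zlib` stands in for the compression scheme, and verification simply re-executes the batch, which is closer to what a fraud-proof challenger does than to a validity proof. All names are hypothetical.

```python
import hashlib
import json
import zlib


def state_root(state: dict[str, int]) -> str:
    """Commitment to the full state (a flat hash here, for simplicity)."""
    return hashlib.sha256(json.dumps(state, sort_keys=True).encode()).hexdigest()


def execute_batch(state: dict[str, int], txs: list) -> dict[str, int]:
    """Apply (sender, recipient, amount) transfers off-chain, skipping invalid ones."""
    new_state = dict(state)
    for sender, recipient, amount in txs:
        if amount <= 0 or new_state.get(sender, 0) < amount:
            continue
        new_state[sender] -= amount
        new_state[recipient] = new_state.get(recipient, 0) + amount
    return new_state


def build_batch(state: dict[str, int], txs: list) -> tuple[dict, dict[str, int]]:
    """Produce what the rollup would post to the base layer, plus the new state."""
    new_state = execute_batch(state, txs)
    batch = {
        "prev_root": state_root(state),
        "new_root": state_root(new_state),
        "data": zlib.compress(json.dumps(txs).encode()),
    }
    return batch, new_state


def verify_batch(state: dict[str, int], batch: dict) -> bool:
    """Re-execute the posted data and compare against the claimed state root."""
    if batch["prev_root"] != state_root(state):
        return False
    txs = json.loads(zlib.decompress(batch["data"]))
    return state_root(execute_batch(state, txs)) == batch["new_root"]


l1_state = {"alice": 10, "bob": 0}
batch, l2_state = build_batch(
    l1_state, [("alice", "bob", 4), ("bob", "alice", 1), ("bob", "carol", 99)]
)
forged = dict(batch, new_root="00" * 32)
```

Because the transaction data is published alongside the claimed root, anyone can run `verify_batch` and reject a batch such as `forged` whose root does not follow from the posted data.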

Horizon

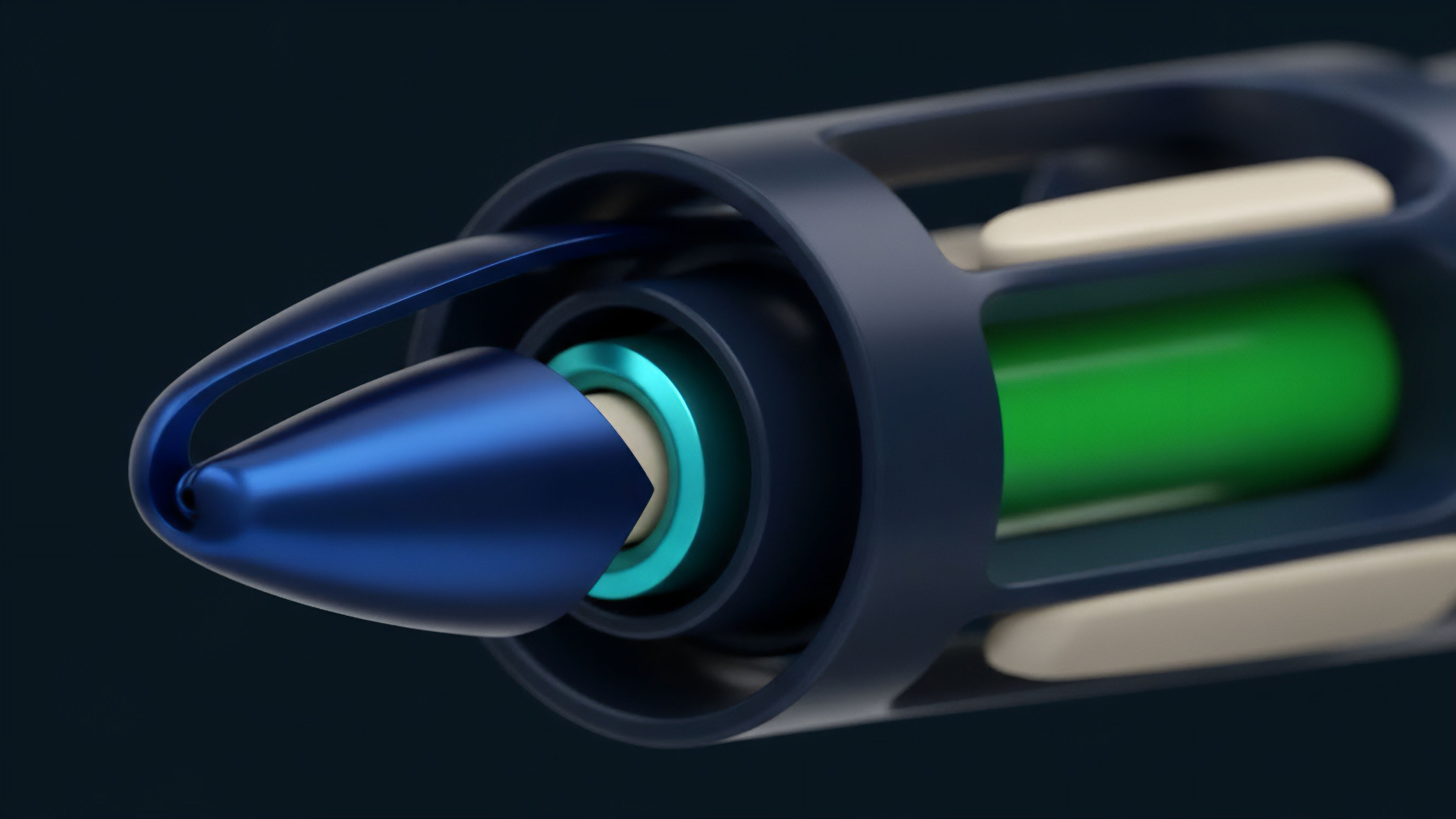

The future of Distributed Ledger Technology lies in the integration of Zero-Knowledge Proofs (ZKP) and the development of Interoperability Protocols.

ZKPs allow for the verification of transactions without revealing the underlying data, providing a solution to the tension between transparency and privacy. This capability is likely to be a prerequisite for the adoption of decentralized ledgers by institutional actors who require confidentiality for their trading strategies and client data.

Systemic Integration

As the technology matures, Distributed Ledger Technology is expected to converge with legacy financial infrastructure. This will likely involve the Tokenization of traditional assets, such as bonds, equities, and real estate, allowing them to be traded and settled on-chain. The resulting Global Liquidity Layer could enable 24/7 trading and near-instant settlement, reducing the capital requirements and operational risks associated with current financial systems.

The development of Cross-Chain Communication standards will allow value and data to flow between different ledgers. This would mitigate the problem of liquidity fragmentation and enable the creation of complex, multi-chain financial products.

The ultimate goal is the creation of a Resilient Financial Operating System that is open, transparent, and accessible to all, providing a robust foundation for the next century of global economic activity.

Glossary

Sidechains

Cryptographic Primitives

Economic Security

Order Book Protocols

Jurisdictional Frameworks

Permissioned Ledgers

Sybil Resistance

Counterparty Risk

Slashing