Essence

Centralized matching engines operate as opaque silos where execution integrity remains an article of faith rather than a verifiable mathematical proof. Order Book Validation represents the transition from this legacy reliance on institutional trust toward a regime of computational certainty. Within the digital asset derivative landscape, this verification mechanism ensures that every bid, ask, and subsequent match adheres to a predefined set of rules without the possibility of surreptitious interference or preferential treatment of specific participants.

The architecture of a validated book demands that the state of the market, comprising all active orders and their relative priority, be auditable and reproducible by any external observer.

Order Book Validation functions as the mathematical guarantee that market matching logic remains immune to operator manipulation or unauthorized order prioritization.

The systemic relevance of this process lies in its ability to mitigate counterparty and platform risk simultaneously. By employing cryptographic proofs or deterministic state transitions, protocols provide a transparent ledger of intent. This transparency is the primary defense against “ghost orders” or wash trading, which frequently plague unvalidated centralized venues.

In a high-stakes environment where derivative leverage amplifies the consequences of execution errors, the presence of a robust Order Book Validation layer becomes the prerequisite for institutional-grade capital allocation.

- Deterministic Matching ensures that given the same set of inputs, the matching engine produces the identical output across all nodes in the network.

- Sequence Integrity prevents the retroactive insertion or deletion of orders, maintaining the sanctity of the time-priority queue.

- Execution Transparency allows participants to verify that their trades occurred at the best available price according to the current book state.
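Execution Transparency can be checked mechanically. Given a snapshot of the ask side and a buy order's size, a participant recomputes the fills that a best-price walk of the book would produce and compares them with what the venue reported. The sketch below assumes integer tick prices, aggregated price levels, and a market buy. The function names are hypothetical, and the sell side would mirror the same walk against the bids.

```python
from typing import List, Tuple

# A price level is (price in integer ticks, resting quantity).
Level = Tuple[int, int]


def expected_fills(asks: List[Level], qty: int) -> List[Level]:
    """Walk the ask side from the best (lowest) price and return the fills a buy of `qty` should receive."""
    fills: List[Level] = []
    remaining = qty
    for price, available in sorted(asks):
        if remaining == 0:
            break
        take = min(remaining, available)
        fills.append((price, take))
        remaining -= take
    if remaining > 0:
        raise ValueError("book snapshot lacks liquidity for the requested quantity")
    return fills


def verify_execution(asks: List[Level], qty: int, reported: List[Level]) -> bool:
    """True only if the venue's reported fills equal a best-price walk of the same snapshot."""
    try:
        return expected_fills(asks, qty) == reported
    except ValueError:
        return False


book = [(101, 10), (100, 5), (102, 20)]
print(verify_execution(book, 8, [(100, 5), (101, 3)]))  # True
print(verify_execution(book, 8, [(101, 8)]))            # False: the better level at 100 was skipped
```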

Financial strategies in decentralized markets rely on the assumption that the underlying plumbing is not actively working against the participant. When Order Book Validation is absent, the risk of “latent toxicity” (where the exchange operator or a privileged actor front-runs user flow) rises sharply. The architectural choice to validate the book on-chain or via zero-knowledge proofs shifts the burden of proof from the user to the protocol, creating a more resilient financial ecosystem.

Origin

The necessity for verifiable order books arose from the catastrophic failures of early digital asset exchanges which operated with zero oversight and significant internal conflicts of interest.

Legacy financial systems rely on a dense web of regulatory audits and legal threats to ensure fair play, yet even these systems suffer from “dark pool” opacity and high-frequency trading advantages that remain hidden from the public. The 2014 collapse of Mt. Gox served as the primary catalyst for a movement toward “Proof of Solvency” and, eventually, “Proof of Execution.”

The historical shift from trusted centralized matching to verifiable decentralized books was driven by the repeated failure of opaque exchange architectures.

Early decentralized exchange attempts utilized simple Automated Market Makers (AMMs) to bypass the need for order book management entirely, but these models proved capital inefficient for sophisticated derivative strategies. As the demand for limit order functionality grew, developers began experimenting with off-chain matching combined with on-chain settlement. This hybrid model, while faster, still lacked full Order Book Validation until the introduction of Layer 2 scaling solutions and specialized AppChains.

These advancements allowed for the high-throughput requirements of a Central Limit Order Book (CLOB) while maintaining the cryptographic security of the underlying blockchain.

| Era | Validation Model | Primary Limitation |
|---|---|---|
| Centralized (2011-2017) | Internal Database Only | Total Operator Dependency |
| Early DEX (2018-2020) | Atomic On-Chain AMM | Extreme Capital Inefficiency |
| Modern Hybrid (2021-Present) | ZK-Proofs / AppChains | Intricate Technical Overhead |

Theory

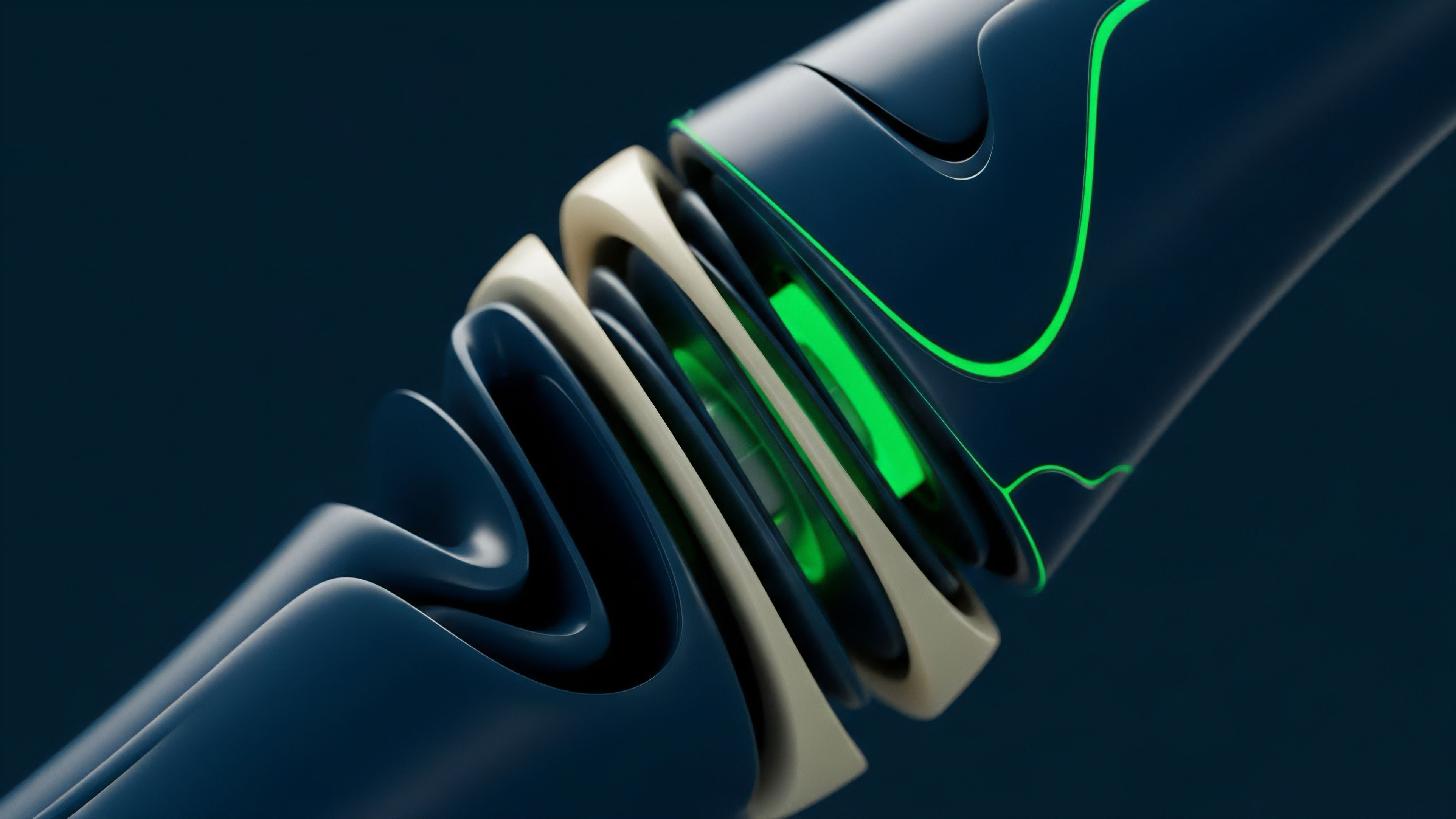

The quantitative framework for Order Book Validation rests upon the formalization of the matching engine as a pure function whose state transitions are governed by strict price-time priority. In this model, the “state” consists of two price-ordered queues, one for bids and one for asks, and each new message (a limit order, a cancellation, or a modification) triggers a deterministic update to those queues. The mathematical challenge arises when this logic must be executed across a distributed network where latency and message ordering are not naturally synchronized.
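The pure-function model can be sketched in a few lines. This is a minimal illustration, not any protocol's engine: `Order`, `Trade`, `apply_limit`, and `apply_cancel` are hypothetical names, prices are integer ticks, trades print at the resting (maker) order's price, and a modification is assumed to be handled as a cancel followed by a new order. Because the book is an immutable tuple and the transition never mutates its input, replaying the same messages always yields the same book and the same trades.

```python
from dataclasses import dataclass, replace
from typing import List, Tuple


@dataclass(frozen=True)
class Order:
    oid: int
    side: str   # "buy" or "sell"
    price: int  # integer ticks
    qty: int
    seq: int    # sequence number assigned before matching (time priority)


@dataclass(frozen=True)
class Trade:
    maker: int
    taker: int
    price: int
    qty: int


Book = Tuple[Tuple[Order, ...], Tuple[Order, ...]]  # (bids, asks), immutable


def priority(o: Order) -> Tuple[int, int]:
    # Bids: highest price first; asks: lowest price first; ties go to the earlier sequence number.
    return (-o.price if o.side == "buy" else o.price, o.seq)


def apply_limit(book: Book, taker: Order) -> Tuple[Book, List[Trade]]:
    """Pure transition: returns a new book and the trades; the input book is never mutated."""
    bids, asks = book
    opposite = sorted(asks if taker.side == "buy" else bids, key=priority)
    same = list(bids if taker.side == "buy" else asks)
    trades: List[Trade] = []
    kept: List[Order] = []
    remaining = taker.qty
    for maker in opposite:
        crosses = taker.price >= maker.price if taker.side == "buy" else taker.price <= maker.price
        if remaining > 0 and crosses:
            fill = min(remaining, maker.qty)
            trades.append(Trade(maker.oid, taker.oid, maker.price, fill))  # trade at the maker's price
            remaining -= fill
            if maker.qty > fill:
                kept.append(replace(maker, qty=maker.qty - fill))
        else:
            kept.append(maker)
    if remaining > 0:
        same.append(replace(taker, qty=remaining))  # unfilled remainder rests on the book
    same_t, kept_t = tuple(sorted(same, key=priority)), tuple(kept)
    return ((same_t, kept_t) if taker.side == "buy" else (kept_t, same_t)), trades


def apply_cancel(book: Book, oid: int) -> Book:
    bids, asks = book
    return (tuple(o for o in bids if o.oid != oid), tuple(o for o in asks if o.oid != oid))
```

For example, with asks resting at 100 (sequence 2), 100 (sequence 3), and 101, a buy of 7 at 100 fills the earlier 100-tick order first and then part of the later one, leaving the 101 order untouched.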

To achieve Order Book Validation, the protocol must implement a sequencing layer that assigns a global timestamp or sequence number to every message before it reaches the matching engine. This ensures that every validator in the network, regardless of its geographical location, processes the same sequence of events and arrives at an identical final book state. From a risk perspective, validating this sequence is what allows “Greeks” and margin requirements to be computed identically on every node.
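The role of the sequencing layer can be illustrated with a toy replay. In this sketch a hypothetical `Sequencer` stamps each message with a global number. Each validator sorts by that number before applying a deterministic add/cancel transition (a stand-in for the full matching engine) and hashing a canonical encoding of the result. Two validators that hear the same messages in different network orders agree on the state hash only when they respect the sequence numbers.

```python
import hashlib
import json
from typing import Dict, List, Tuple

Message = Dict[str, object]
State = Dict[int, Tuple[str, int, int]]  # oid -> (side, price, qty)


class Sequencer:
    """Hypothetical sequencer: stamps each message with a global, strictly increasing number."""

    def __init__(self) -> None:
        self.next_seq = 0

    def stamp(self, msg: Message) -> Message:
        stamped = dict(msg, seq=self.next_seq)
        self.next_seq += 1
        return stamped


def apply(state: State, msg: Message) -> None:
    # Deterministic stand-in for the matching engine: add or cancel a resting order.
    if msg["type"] == "add":
        state[msg["oid"]] = (msg["side"], msg["price"], msg["qty"])
    elif msg["type"] == "cancel":
        state.pop(msg["oid"], None)


def replay(received: List[Message], use_seq: bool = True) -> str:
    """Replay messages (by sequence number, or in raw arrival order) and hash the final state."""
    ordered = sorted(received, key=lambda m: m["seq"]) if use_seq else received
    state: State = {}
    for msg in ordered:
        apply(state, msg)
    canonical = json.dumps(sorted(state.items()), separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


seq = Sequencer()
msgs = [seq.stamp(m) for m in (
    {"type": "add", "oid": 1, "side": "buy", "price": 100, "qty": 5},
    {"type": "cancel", "oid": 1},
    {"type": "add", "oid": 2, "side": "sell", "price": 101, "qty": 3},
)]
arrived_at_b = list(reversed(msgs))  # validator B hears the messages in a different order
print(replay(msgs) == replay(arrived_at_b))                 # True
print(replay(msgs) == replay(arrived_at_b, use_seq=False))  # False
```

The second comparison shows why arrival order alone is not enough: validator B hears the cancel before the add it refers to, so without sequence numbers its book keeps order 1 alive.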

If the order of execution were fluid or manipulable, the Delta and Gamma of an options portfolio would become unstable, as the underlying price discovery mechanism would be subject to arbitrary jumps. The protocol physics of this environment dictate a trade-off between “Finality Latency” and “Validation Depth”: the more rigorous the verification process, the longer it takes for a trade to be considered immutable. Quantitative analysts must therefore model the “Probability of Reversion” when designing high-frequency strategies on validated books.

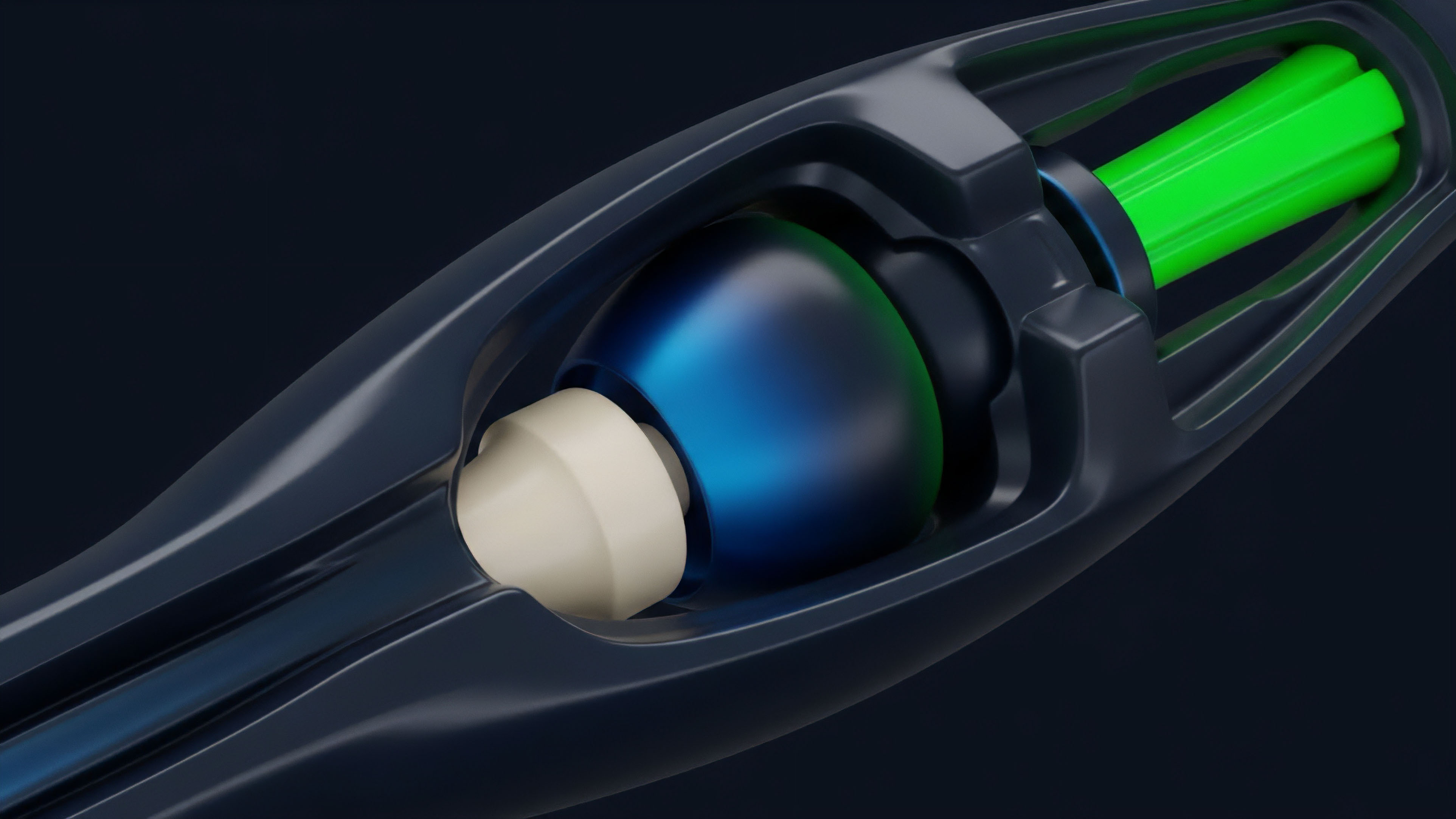

This involves analyzing the Byzantine Fault Tolerance (BFT) of the sequencing layer and the economic cost of a successful “double-spend” or “re-ordering” attack. In a perfectly validated system, the matching engine is decoupled from the settlement layer, allowing for rapid execution while the cryptographic proof of the match is generated asynchronously. This separation of concerns is what enables the high-speed performance required for derivative markets without sacrificing the security of the Order Book Validation.
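For a sequencing committee running a classical PBFT-style protocol (an assumption; the text does not name a consensus algorithm), the standard fault-tolerance bound relates the committee size n to the number of Byzantine sequencers f it can tolerate, with quorums of size q:

```latex
n \ge 3f + 1 \iff f \le \left\lfloor \frac{n-1}{3} \right\rfloor,
\qquad q = 2f + 1,
\qquad |Q_1 \cap Q_2| \ge 2q - n \overset{n = 3f+1}{=} f + 1
```

With n = 4 the committee tolerates f = 1 and needs q = 3 votes; with n = 10 it tolerates f = 3 and needs q = 7. Because any two quorums share at least f + 1 members, at least one of whom is honest, a re-ordering attack that breaks safety requires corrupting at least f + 1 sequencers, which is the quantity the economic-cost analysis has to price.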

The margin engine, which is often integrated directly with the validated book, uses the verified price data to trigger liquidations. If the book validation fails, the margin engine may trigger “false positive” liquidations, leading to systemic contagion. Therefore, the integrity of the Order Book Validation is the primary anchor for the entire financial stack, ensuring that the price used for valuation is the result of legitimate, verified market activity rather than an artifact of a compromised matching process.
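The guard described above can be sketched as follows: the margin engine derives its price only from a book snapshot that matches the published commitment, and refuses to act otherwise instead of liquidating on unverified data. Everything here is an illustrative assumption. `commit`, `verified_mark_price`, and `should_liquidate` are hypothetical names, the commitment is a plain SHA-256 over canonical JSON, the mark is the best-bid/best-ask midpoint, and the maintenance requirement is a flat fraction of notional.

```python
import hashlib
import json
from typing import List, Tuple

Level = Tuple[int, int]  # (price in ticks, quantity)


def commit(bids: List[Level], asks: List[Level]) -> str:
    # Hypothetical state commitment: SHA-256 over a canonical JSON encoding of the book.
    payload = json.dumps({"asks": asks, "bids": bids}, separators=(",", ":"))
    return hashlib.sha256(payload.encode()).hexdigest()


def verified_mark_price(bids: List[Level], asks: List[Level], published_root: str) -> float:
    """Mid-price of the book, but only if the snapshot matches the published commitment."""
    if commit(bids, asks) != published_root:
        raise ValueError("book snapshot does not match the published commitment")
    best_bid = max(price for price, _ in bids)
    best_ask = min(price for price, _ in asks)
    return (best_bid + best_ask) / 2


def should_liquidate(qty: int, entry: float, collateral: float, mark: float, maint_ratio: float) -> bool:
    # Equity = collateral + unrealized PnL (qty > 0 is long, qty < 0 is short).
    equity = collateral + qty * (mark - entry)
    # Maintenance requirement as a fraction of position notional at the mark price.
    return equity < maint_ratio * abs(qty) * mark


bids, asks = [(99, 5), (98, 10)], [(101, 4), (102, 8)]
root = commit(bids, asks)
mark = verified_mark_price(bids, asks, root)         # 100.0
print(should_liquidate(10, 110.0, 50.0, mark, 0.05))  # True: equity -50 < requirement 50
```

Raising on a commitment mismatch is the design choice that matters here: a failed validation halts liquidations rather than producing the “false positive” liquidations described above.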

Systemic stability in derivative markets is a direct function of the deterministic nature of the matching engine and its associated validation proofs.

Verification Parameters

The robustness of a validation system is measured by its resistance to adversarial re-ordering and its ability to provide real-time proofs of execution. Order Book Validation must address the following technical components to be considered secure:

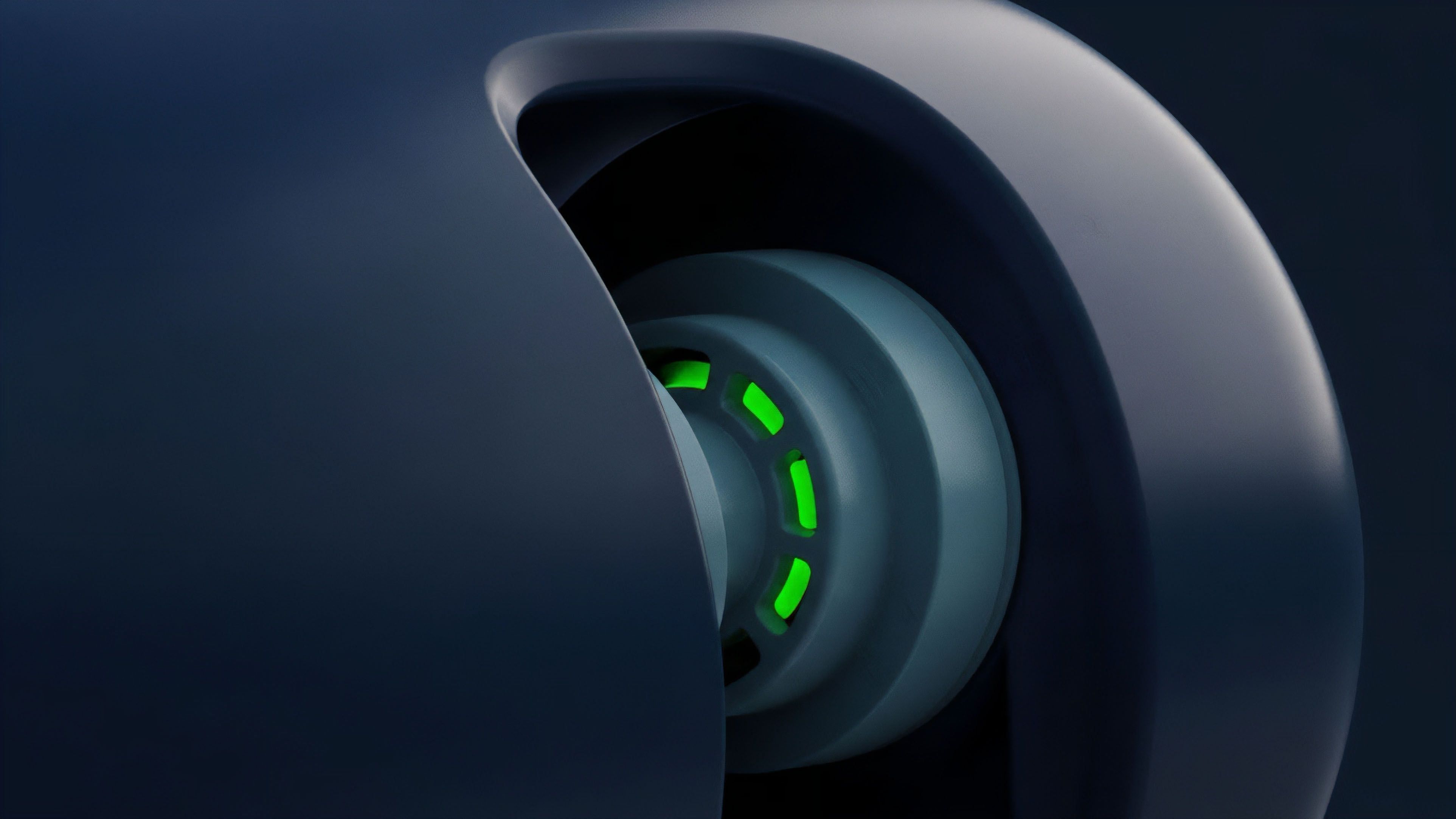

- State Commitment involves creating a cryptographic hash of the entire order book after every match, which is then published to the base layer.

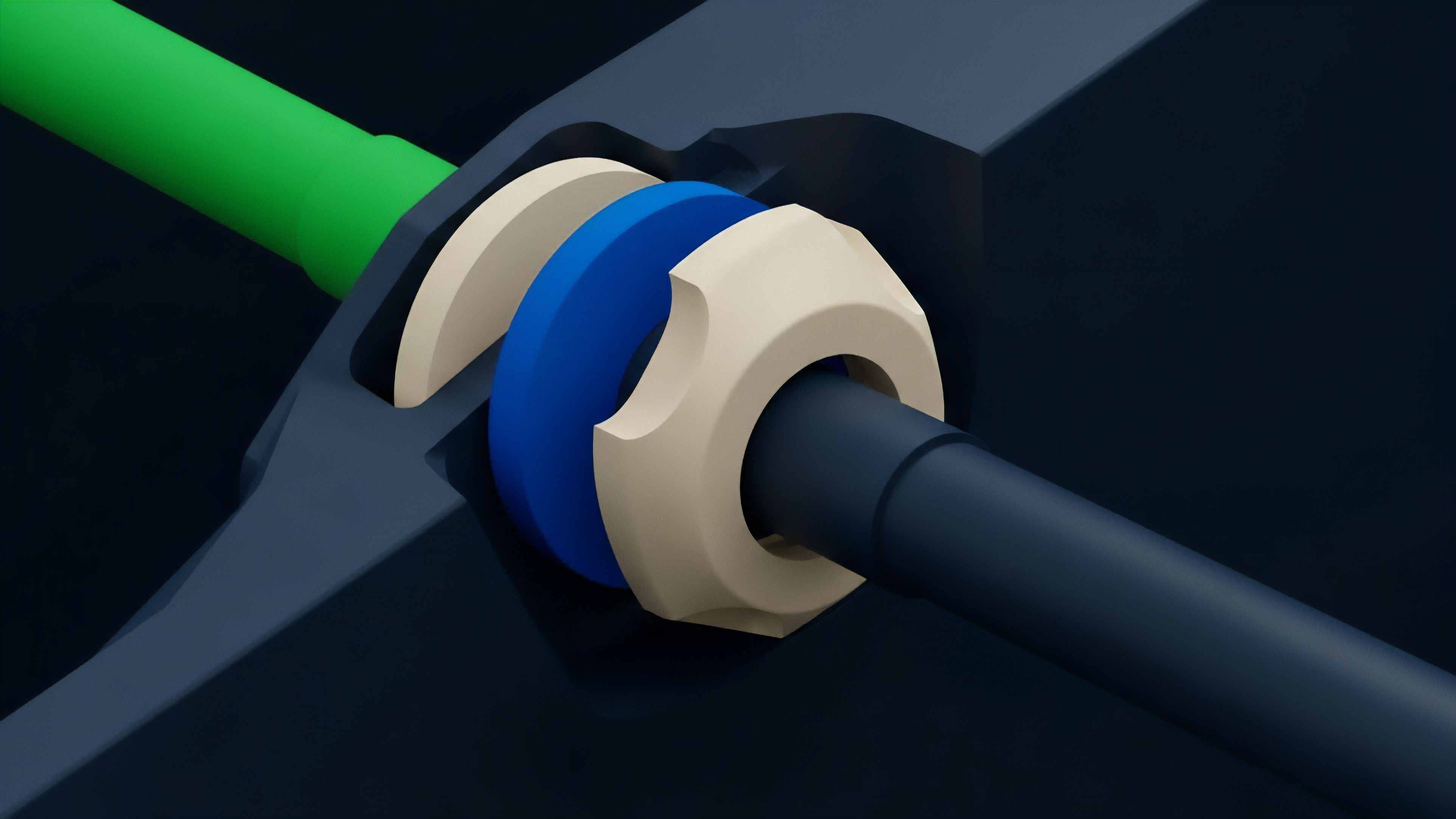

- Inclusion Proofs allow a user to verify that their specific order was correctly placed in the queue and not ignored by the sequencer.

- Anti-Frontrunning Logic uses commit-reveal schemes or threshold encryption to hide order details until they are sequenced, preventing validators from stealing alpha.
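A Merkle tree is one common way to provide both a State Commitment and Inclusion Proofs (the text does not prescribe a specific structure), and a salted hash is the simplest form of the commit step in a commit-reveal scheme. In the sketch below, orders are encoded as hypothetical strings such as `"buy:1:100:5"`, leaves and interior nodes are domain-separated with different prefix bytes, and odd levels duplicate their last node. All helper names are illustrative.

```python
import hashlib
import os
from typing import List, Tuple

Proof = List[Tuple[bytes, bool]]  # (sibling hash, sibling is on the right)


def sha(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf(order: str) -> bytes:
    return sha(b"\x00" + order.encode())  # 0x00 prefix: leaf hash


def node(left: bytes, right: bytes) -> bytes:
    return sha(b"\x01" + left + right)  # 0x01 prefix: interior hash


def pad(level: List[bytes]) -> List[bytes]:
    return level + [level[-1]] if len(level) % 2 else level  # duplicate last node on odd levels


def parents(level: List[bytes]) -> List[bytes]:
    return [node(level[i], level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: List[bytes]) -> bytes:
    level = leaves
    while len(level) > 1:
        level = parents(pad(level))
    return level[0]


def inclusion_proof(leaves: List[bytes], index: int) -> Proof:
    proof: Proof = []
    level = leaves
    while len(level) > 1:
        level = pad(level)
        sibling = index ^ 1
        proof.append((level[sibling], sibling > index))
        level = parents(level)
        index //= 2
    return proof


def verify_inclusion(order: str, proof: Proof, root: bytes) -> bool:
    acc = leaf(order)
    for sibling, sibling_on_right in proof:
        acc = node(acc, sibling) if sibling_on_right else node(sibling, acc)
    return acc == root


def commit_order(order: str) -> Tuple[bytes, bytes]:
    salt = os.urandom(16)  # random salt hides the order until it is revealed
    return sha(salt + order.encode()), salt


def reveal_matches(commitment: bytes, order: str, salt: bytes) -> bool:
    return sha(salt + order.encode()) == commitment


orders = ["buy:1:100:5", "sell:2:101:3", "buy:3:99:7"]
leaves = [leaf(o) for o in orders]
root = merkle_root(leaves)
proof = inclusion_proof(leaves, 2)
print(verify_inclusion("buy:3:99:7", proof, root))  # True
print(verify_inclusion("buy:3:99:8", proof, root))  # False: the order was altered
```

Only the root needs to be published to the base layer; a user holding their order and its proof can check inclusion without seeing the rest of the book.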

Approach

Current implementations of Order Book Validation utilize a variety of architectural patterns to balance speed and security. The most prominent method involves the use of specialized Layer 2 environments, often referred to as AppChains, that are optimized specifically for high-throughput order matching. These systems move the matching logic off the main Ethereum execution layer but maintain Order Book Validation by submitting periodic proofs of the matching engine’s state to the Layer 1.

This allows for sub-second execution speeds while inheriting the security of the larger network.

| Implementation Strategy | Validation Mechanism | Latency Profile |
|---|---|---|
| Fully On-Chain CLOB | Consensus Layer Validation | High (Seconds) |
| ZK-Rollup Matching | Validity Proofs (SNARKs/STARKs) | Medium (Milliseconds) |
| Off-Chain with On-Chain Settlement | Optimistic Fraud Proofs | Low (Microseconds) |

Another sophisticated tactic involves the use of Zero-Knowledge (ZK) proofs to validate the book without revealing the individual orders. This “Private Order Book Validation” is particularly attractive to institutional market makers who wish to provide liquidity without exposing their proprietary strategies or inventory levels to competitors. By proving that the matching was done correctly without revealing the inputs, the protocol maintains market integrity while providing a level of privacy that exceeds traditional centralized exchanges.

- Sequencing: Orders are received and timestamped by a decentralized set of sequencers to establish a definitive order of arrival.

- Matching: The engine executes trades based on the verified sequence, following price-time priority rules.

- Proof Generation: A cryptographic proof is generated, demonstrating that the matching logic was followed perfectly.

- Settlement: The proof is verified on-chain, and funds are moved between accounts according to the trade results.
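The settlement step can be illustrated with the re-execution check behind the “Optimistic Fraud Proofs” row of the earlier table, used here as a stand-in because a SNARK or STARK prover does not fit in a short example. In this sketch the operator publishes a claimed post-state root for a batch of fills, and any verifier recomputes the settlement and flags a mismatch. Account names, the `BASE`/`QUOTE` asset labels, and the JSON-based state root are hypothetical.

```python
import hashlib
import json
from typing import Dict, List, Tuple

Balances = Dict[str, Dict[str, int]]  # account -> asset -> amount
Fill = Tuple[str, str, int, int]      # (buyer, seller, qty of BASE, price in QUOTE per unit)


def settle(balances: Balances, fills: List[Fill]) -> Balances:
    """Deterministically apply a batch of fills to a copy of the balances."""
    out = {acct: dict(assets) for acct, assets in balances.items()}
    for buyer, seller, qty, price in fills:
        cost = qty * price
        if out[buyer]["QUOTE"] < cost or out[seller]["BASE"] < qty:
            raise ValueError("fill exceeds available balance")
        out[buyer]["QUOTE"] -= cost
        out[buyer]["BASE"] += qty
        out[seller]["BASE"] -= qty
        out[seller]["QUOTE"] += cost
    return out


def state_root(balances: Balances) -> str:
    # Hypothetical commitment: SHA-256 over canonical JSON (sorted keys).
    return hashlib.sha256(json.dumps(balances, sort_keys=True).encode()).hexdigest()


def is_fraudulent(pre: Balances, fills: List[Fill], claimed_root: str) -> bool:
    """A verifier re-executes the batch; any disagreement with the claim is grounds for a challenge."""
    try:
        honest_root = state_root(settle(pre, fills))
    except ValueError:
        return True  # the batch itself is invalid
    return honest_root != claimed_root


pre = {"alice": {"BASE": 0, "QUOTE": 1000}, "bob": {"BASE": 10, "QUOTE": 0}}
fills = [("alice", "bob", 4, 100)]
honest = settle(pre, fills)
print(is_fraudulent(pre, fills, state_root(honest)))   # False
skimmed = {"alice": {"BASE": 4, "QUOTE": 600}, "bob": {"BASE": 6, "QUOTE": 390}}
print(is_fraudulent(pre, fills, state_root(skimmed)))  # True
```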

Evolution

The transition from basic Automated Market Makers to sophisticated, validated limit order books represents a significant leap in the technical maturity of the digital asset space. Early AMMs were a response to the inability of blockchains to handle the high message volume of a traditional book. However, the “slippage” and “impermanent loss” associated with AMMs made them unsuitable for the precise hedging required in derivative markets.

This led to the development of “Concentrated Liquidity” models, a primitive step toward order-book-like behavior in which liquidity was binned into specific price ranges rather than spread across the entire price curve.

The move toward Central Limit Order Books on-chain marks the end of the AMM era for professional derivative execution.

As scaling technology improved, the industry moved toward “Hyper-Performant Sequencers” that can handle thousands of orders per second. The focus shifted from simply “making a trade possible” to “ensuring the trade is fair.” The rise of Maximal Extractable Value (MEV) as a concept highlighted the vulnerabilities in unvalidated or poorly sequenced books. Modern Order Book Validation now incorporates MEV-resistance as a core feature, using techniques like “Fair Sequencing Services” or “Batch Auctions” to ensure that no single participant can profit from re-ordering transactions within a block.

- Batch Auctions aggregate orders over a short window and execute them at a single clearing price, eliminating the advantage of micro-latency.

- Recursive SNARKs allow for the compression of thousands of Order Book Validation proofs into a single, tiny proof that can be verified cheaply on-chain.

- Cross-Chain Atomic Settlement enables a validated book on one chain to settle trades using assets located on a completely different blockchain.
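A uniform-price batch auction can be sketched as a search over candidate prices. For each limit price in the batch, the code computes demand (buy quantity willing to pay at least that price) and supply (sell quantity willing to accept it) and picks the price that maximizes matched volume. The tie-breaking rules (smallest imbalance, then lowest price) and the name `clear_batch` are assumptions; venues differ, and the allocation of fills among orders at the clearing price is not shown.

```python
from typing import List, Optional, Tuple

Order = Tuple[int, int]  # (limit price in ticks, quantity)


def clear_batch(buys: List[Order], sells: List[Order]) -> Optional[Tuple[int, int]]:
    """Return (clearing_price, matched_volume) for one batch, or None if nothing crosses."""
    best: Optional[Tuple[Tuple[int, int, int], int, int]] = None
    for p in sorted({price for price, _ in buys + sells}):
        demand = sum(q for limit, q in buys if limit >= p)   # buyers willing to pay at least p
        supply = sum(q for limit, q in sells if limit <= p)  # sellers willing to accept p
        volume = min(demand, supply)
        # Maximize volume, then minimize imbalance, then prefer the lower price (assumed tie-breaks).
        key = (volume, -abs(demand - supply), -p)
        if best is None or key > best[0]:
            best = (key, p, volume)
    if best is None or best[2] == 0:
        return None
    return best[1], best[2]


buys = [(102, 5), (101, 5), (100, 5)]
sells = [(99, 4), (100, 6), (101, 10)]
print(clear_batch(buys, sells))  # (100, 10)
```

Because demand and supply are sums over the whole batch, shuffling the order in which messages arrived within the window does not change the result, which is the sense in which batching removes the micro-latency advantage.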

Horizon

The future of Order Book Validation is trending toward a world of “Invisible Infrastructure” where the verification happens instantaneously and silently in the background. We are moving away from monolithic exchanges toward fragmented liquidity that is unified by a single validation layer. This “Universal Liquidity Layer” would allow any front-end to tap into a global, validated book, ensuring that a trader in Tokyo and a market maker in New York are interacting with the same verified state.

The integration of AI-driven market making agents will further stress these validation systems. As machines begin to trade at speeds approaching the physical limits of fiber optics, the “Validation Gap” (the time between execution and verification) must shrink to near zero. Protocols that can provide “Real-Time Order Book Validation” will become the dominant venues for derivative liquidity, as they offer the only environment where high-speed execution does not come at the cost of systemic transparency.

| Future Trend | Technological Driver | Market Effect |
|---|---|---|
| Instant Settlement | Atomic Cross-Chain Bridges | Elimination of Gap Risk |
| Privacy-Preserving Books | Fully Homomorphic Encryption | Institutional Anonymity |
| AI-Native Execution | Sub-Millisecond ZK-Proofs | Machine-to-Machine Markets |

Ultimately, the goal is the total elimination of the “Exchange Operator” as a source of risk. In this future, the code is the exchange, and the Order Book Validation is the regulator. This shift will likely trigger a massive regulatory realignment, as traditional oversight bodies move from auditing human behavior to auditing smart contract code. The sovereign nature of these validated systems ensures that as long as the underlying math remains sound, the market will remain open, fair, and functional, regardless of the external geopolitical or economic climate.
