Essence

Decentralized Data Ownership is the principle of cryptographic sovereignty over information: users control their digital footprint through private keys rather than through accounts held by a platform. This paradigm shifts the architecture of value from centralized silos to permissionless, verifiable ledgers, so that data generation, storage, and monetization remain tethered to the individual rather than the platform.

Decentralized data ownership establishes a framework where information remains the property of the creator, secured by cryptographic proofs rather than institutional trust.

The systemic relevance lies in the elimination of intermediary rent-seeking behavior. By tokenizing data rights, protocols enable a liquid market for information, allowing participants to stake, lease, or sell access to their data without sacrificing ownership. This structural change alters the risk profile of digital assets, transforming passive user activity into active, yield-bearing capital.
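To illustrate leasing access without giving up ownership, the sketch below models a tokenized data right as a plain Python object standing in for a smart contract. The class `DataAsset` and its `lease`, `sell`, and `has_access` methods are hypothetical names, not any protocol's interface, and staking is left out. It only shows that a lease grants time-limited access while ownership stays with the creator until an explicit sale.

```python
from dataclasses import dataclass, field


@dataclass
class DataAsset:
    """Hypothetical record for a tokenized data right (not a real protocol's schema)."""
    owner: str
    leases: dict[str, int] = field(default_factory=dict)  # grantee -> expiry timestamp

    def lease(self, caller: str, grantee: str, expires_at: int) -> None:
        # Only the owner may grant access; granting never transfers ownership.
        if caller != self.owner:
            raise PermissionError("only the owner can lease access")
        self.leases[grantee] = expires_at

    def sell(self, caller: str, buyer: str) -> None:
        # A sale transfers ownership; existing leases simply run until they expire.
        if caller != self.owner:
            raise PermissionError("only the owner can sell")
        self.owner = buyer

    def has_access(self, who: str, now: int) -> bool:
        # The owner always has access; a lessee only until its lease expires.
        return who == self.owner or self.leases.get(who, -1) > now


asset = DataAsset(owner="alice")
asset.lease("alice", "analytics_dao", expires_at=1_000)
print(asset.has_access("analytics_dao", now=500))    # True: lease still active
print(asset.has_access("analytics_dao", now=1_500))  # False: lease expired
print(asset.owner)                                    # alice: leasing kept ownership
```

In a real deployment the same checks would live in contract code and `now` would come from the chain's block timestamp; here it is passed in explicitly to keep the sketch deterministic.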

Origin

The genesis of Decentralized Data Ownership resides in the early cypherpunk movement and the subsequent maturation of distributed ledger technology.

Initial efforts focused on censorship resistance and anonymous communication, which gradually coalesced into robust frameworks for self-sovereign identity and verifiable computation.

- Cypherpunk Manifestos established the moral and technical imperative for cryptographic privacy and user-controlled digital assets.

- Blockchain Foundations introduced the technical mechanisms necessary to create immutable, decentralized ledgers for data provenance.

- Smart Contract Proliferation provided the programmable logic required to automate the enforcement of data access and compensation agreements.

These historical threads converged when the limitations of centralized cloud storage became clear. The systemic failure of monolithic platforms to protect user privacy acted as the primary catalyst for the development of decentralized storage networks and identity protocols.

Theory

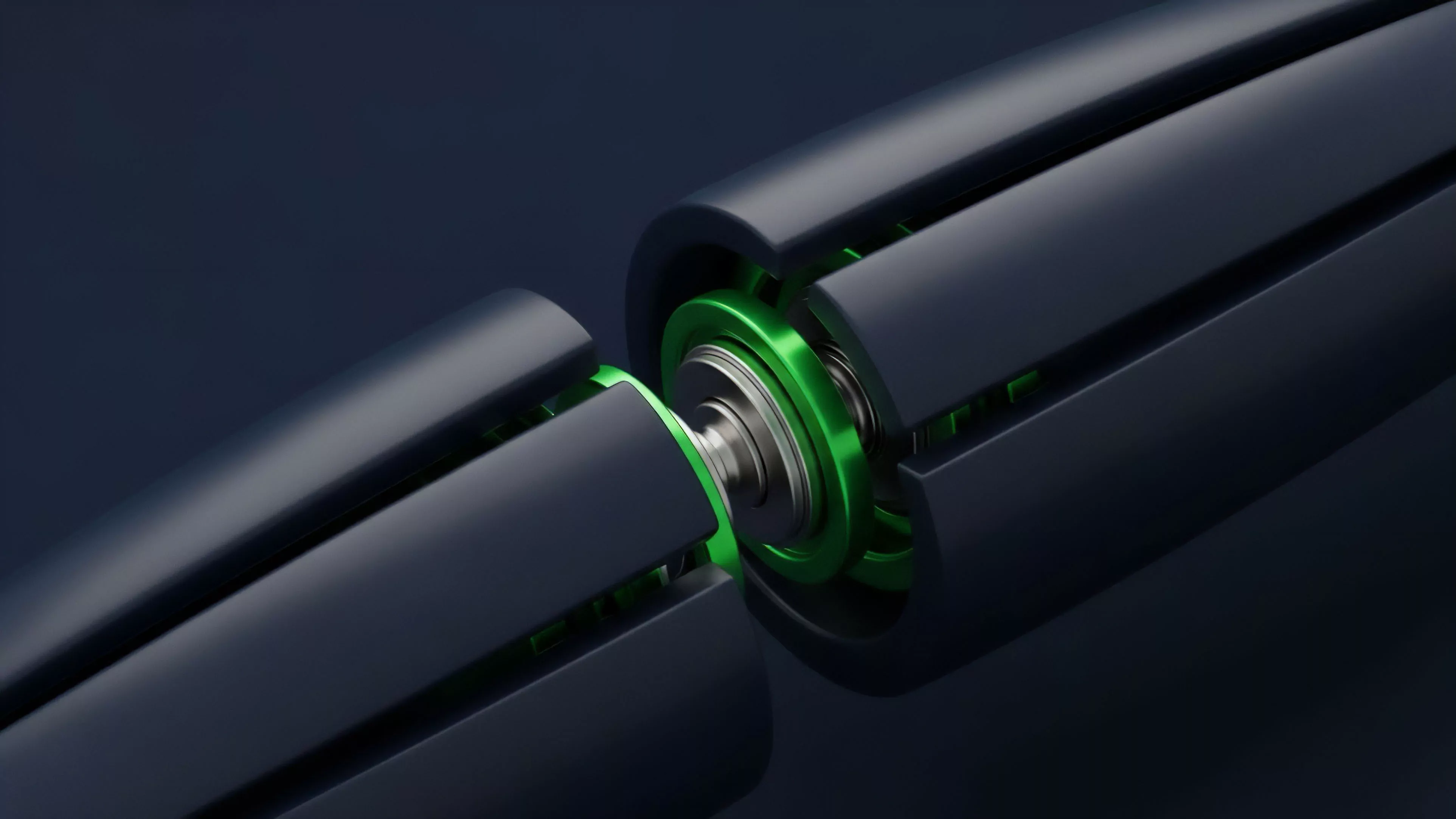

The mechanical structure of Decentralized Data Ownership relies on the integration of Zero-Knowledge Proofs and Decentralized Identifiers. Together, these components allow a participant to prove that a data attribute is valid without revealing the underlying sensitive information, decoupling what a participant can prove from the raw data and identity behind it.

| Component | Systemic Function |
|---|---|
| Zero-Knowledge Proofs | Verifiable computation without data exposure |
| Decentralized Identifiers | Self-sovereign, non-custodial user identity |
| Data Tokenization | Monetization of information through smart contracts |

The mathematical rigor of zero-knowledge proofs provides the foundation for private, yet verifiable, data exchange in open financial systems.
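A minimal sketch of the idea, assuming the Schnorr identification protocol made non-interactive with the Fiat-Shamir heuristic. This is a classic zero-knowledge proof of knowledge chosen here for brevity, not the construction used by any particular data-ownership protocol. It proves knowledge of a secret key `x` behind a public value `y = g^x mod p` without revealing `x`; proving richer data attributes would need general-purpose proof systems that this does not show. The group parameters are deliberately tiny and insecure, and `prove`/`verify` are hypothetical helper names.

```python
import hashlib
import secrets

# Toy group parameters, far too small for real use: p = 2q + 1 is a safe prime,
# and g = 4 (a quadratic residue) generates the subgroup of prime order q.
P, Q, G = 2039, 1019, 4


def challenge(*values: int) -> int:
    # Fiat-Shamir: derive the verifier's challenge by hashing the public transcript.
    data = b"|".join(str(v).encode() for v in values)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret: int) -> tuple[int, int, int]:
    """Prove knowledge of `secret` such that public = G**secret mod P."""
    public = pow(G, secret, P)
    r = secrets.randbelow(Q)            # one-time random nonce
    commitment = pow(G, r, P)           # t = g^r
    c = challenge(G, public, commitment)
    response = (r + c * secret) % Q     # s = r + c*x mod q
    return public, commitment, response


def verify(public: int, commitment: int, response: int) -> bool:
    # Accept iff g^s == t * y^c (mod p); the secret itself is never transmitted.
    c = challenge(G, public, commitment)
    return pow(G, response, P) == (commitment * pow(public, c, P)) % P


x = secrets.randbelow(Q - 1) + 1        # prover's secret key, never revealed
y, t, s = prove(x)
print(verify(y, t, s))                  # True
print(verify(y, t, (s + 1) % Q))        # False: a tampered response fails
```

The verifier learns only `y`, `t`, and `s`; because `s` is masked by the random nonce `r`, it reveals nothing useful about `x` on its own.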

From a quantitative perspective, this creates a new class of Data-Backed Derivatives. If data access rights are tokenized, the volatility of demand for that data, expressed in the market price of its access tokens, becomes a tradable quantity. Market makers could then price the probability that specific data segments become high-demand assets, applying traditional option pricing models to non-financial information flows.
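As a sketch of what "traditional option pricing" could mean here, the block below prices a European call on a data-access token with the standard Black-Scholes formula. This assumes the token price follows a lognormal process with constant volatility, which thin, demand-driven data markets may well violate; the spot, strike, and volatility inputs are hypothetical.

```python
from math import erf, exp, log, sqrt


def norm_cdf(z: float) -> float:
    # Standard normal CDF via the error function.
    return 0.5 * (1.0 + erf(z / sqrt(2.0)))


def black_scholes_call(spot: float, strike: float, vol: float,
                       rate: float, years: float) -> float:
    """European call price under Black-Scholes assumptions (lognormal price, constant vol)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * years) / (vol * sqrt(years))
    d2 = d1 - vol * sqrt(years)
    return spot * norm_cdf(d1) - strike * exp(-rate * years) * norm_cdf(d2)


# Hypothetical inputs: an access token for a dataset trades at 10.0, the option
# pays off if it exceeds 12.0 in six months, and demand-driven price moves
# are assumed to have 80% annualized volatility.
price = black_scholes_call(spot=10.0, strike=12.0, vol=0.8, rate=0.03, years=0.5)
print(round(price, 4))
```

The hard part in practice is not the formula but estimating `vol` for a dataset with little trading history.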

The adversarial nature of these systems means that fragile data structures do not last: protocols are continuously stress-tested by agents seeking to extract value from information asymmetries, and only the more resilient designs survive.

Approach

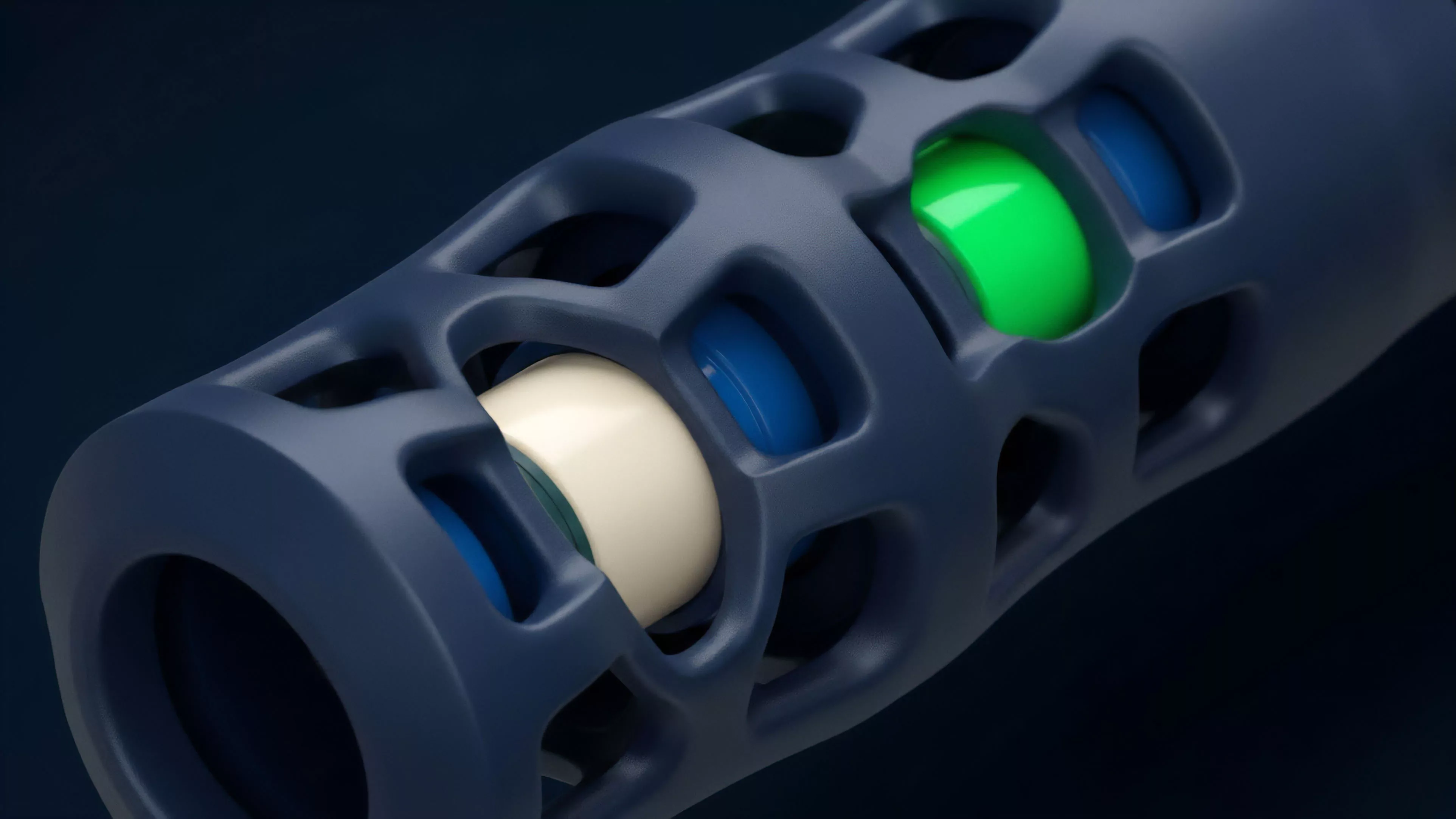

Current implementations focus on the creation of Data DAOs and decentralized compute networks. These structures manage the collective interests of data providers, pooling information to increase its market value while distributing the resulting revenue according to transparent, on-chain governance rules.
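One simple distribution rule a Data DAO might encode is a pro-rata split by contribution. The sketch below assumes that rule, works in integer token units as on-chain code would, and adds a hypothetical `fee_bps` parameter standing in for an intermediary or protocol cut; the function name and contributor weights are illustrative only.

```python
def distribute_revenue(revenue: int, contributions: dict[str, int],
                       fee_bps: int = 0) -> dict[str, int]:
    """Split `revenue` (smallest token units) pro rata to contribution weights.

    `fee_bps` is a hypothetical fee in basis points taken before distribution.
    Integer division leaves rounding dust, which goes to the largest contributor
    so that payouts always sum exactly to the distributable amount.
    """
    distributable = revenue - revenue * fee_bps // 10_000
    total = sum(contributions.values())
    payouts = {who: distributable * weight // total for who, weight in contributions.items()}
    dust = distributable - sum(payouts.values())
    top = max(contributions, key=contributions.get)
    payouts[top] += dust
    return payouts


weights = {"alice": 600, "bob": 300, "carol": 100}
print(distribute_revenue(1_000_000, weights))
# {'alice': 600000, 'bob': 300000, 'carol': 100000}
print(distribute_revenue(1_000_000, weights, fee_bps=2_000))
# {'alice': 480000, 'bob': 240000, 'carol': 80000}
```

Comparing the two calls shows how any intermediary cut directly reduces what reaches the data generators.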

- Liquidity Provision involves staking data tokens to incentivize high-quality, verified information input.

- Margin Engines for data derivatives utilize oracle feeds to adjust collateral requirements based on the real-time demand for specific datasets.

- Governance Models enable token holders to vote on protocol upgrades and data access parameters.
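The margin-engine idea can be sketched with a deliberately simple, hypothetical rule: the margin ratio starts at a base level and rises with the relative deviation of oracle-reported demand from a baseline, up to a cap. The functions, parameters, and numbers below are assumptions for illustration, not a documented protocol's formula.

```python
def margin_ratio(demand: float, baseline: float, base: float = 0.10,
                 sensitivity: float = 0.5, cap: float = 0.50) -> float:
    """Hypothetical rule: margin grows with the relative deviation of
    oracle-reported demand from its baseline, starting at `base`, capped at `cap`."""
    deviation = abs(demand - baseline) / baseline
    return min(base + sensitivity * deviation, cap)


def required_collateral(notional: float, demand: float, baseline: float) -> float:
    # Collateral a position must hold at the current oracle reading.
    return notional * margin_ratio(demand, baseline)


def is_liquidatable(collateral: float, notional: float,
                    demand: float, baseline: float) -> bool:
    # A position becomes eligible for liquidation once it falls below the requirement.
    return collateral < required_collateral(notional, demand, baseline)


# The oracle reports demand for a dataset (e.g. queries per hour); baseline is 100.
print(round(required_collateral(1_000.0, demand=100.0, baseline=100.0), 2))  # 100.0
print(round(required_collateral(1_000.0, demand=140.0, baseline=100.0), 2))  # 300.0
print(round(required_collateral(1_000.0, demand=400.0, baseline=100.0), 2))  # 500.0 (capped)
print(is_liquidatable(250.0, 1_000.0, demand=140.0, baseline=100.0))          # True
```

A demand spike raises the requirement immediately, so a position that was adequately collateralized a moment earlier can become liquidatable without any change in its own balance.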

The strategy hinges on Capital Efficiency. By reducing the overhead of intermediaries, the protocol allows higher returns to flow to the actual data generators. However, this environment is fraught with Smart Contract Risk: any vulnerability in the underlying logic can lead to the instantaneous leakage of private data or the draining of liquidity pools.

Evolution

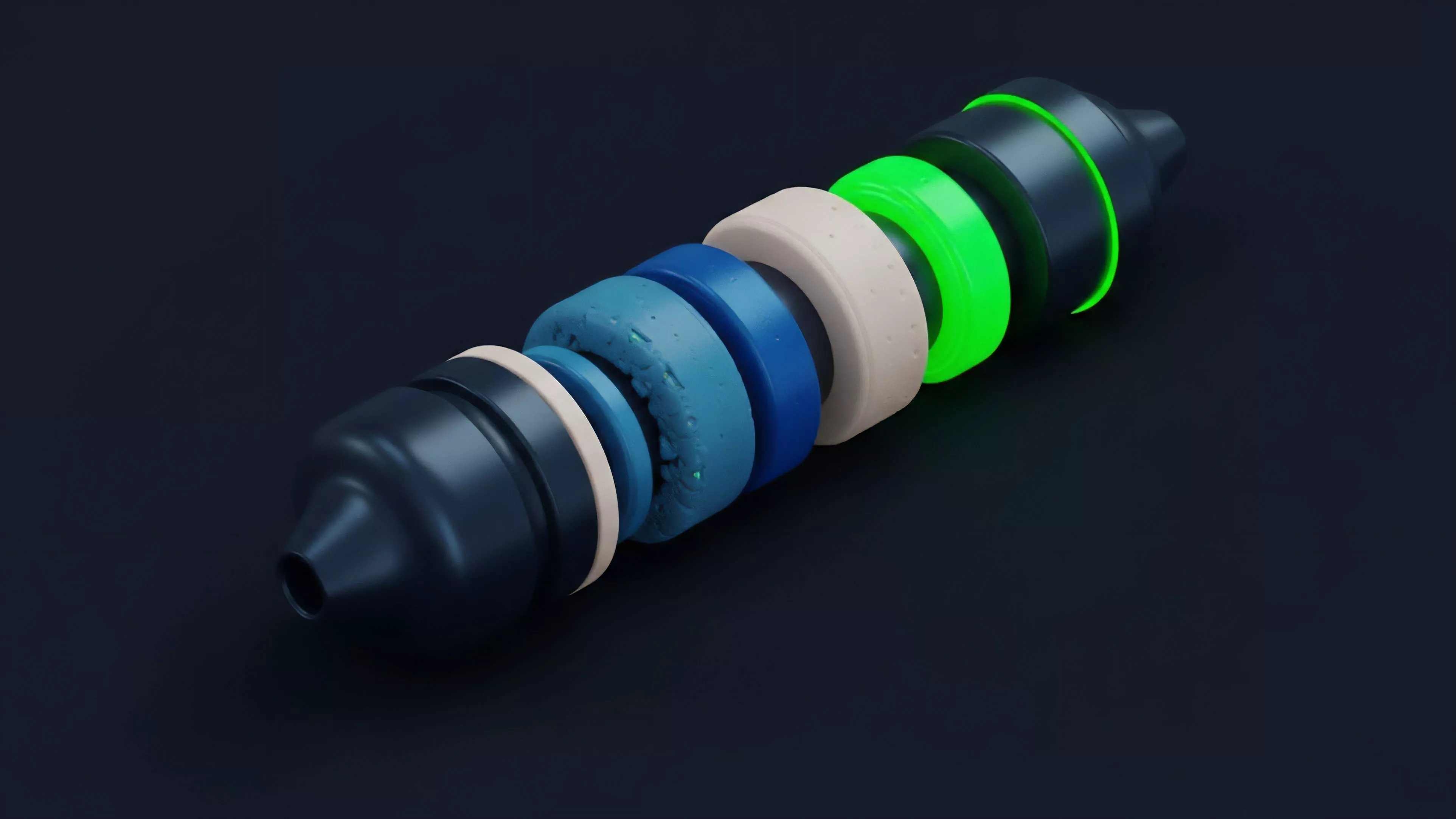

The trajectory of Decentralized Data Ownership has moved from simple file storage to complex, compute-heavy ecosystems.

Early iterations merely provided a distributed repository for static files, whereas current architectures facilitate the execution of arbitrary code on encrypted datasets.

The evolution of data ownership is marked by the transition from passive storage to active, verifiable computation on private datasets.

This shift mirrors the broader history of financial markets, which moved from physical exchange to high-frequency, automated trading venues. Early banking offers a closer parallel: just as paper ledgers gave way to electronic databases, current systems are shedding their centralized layers in favor of cryptographic consensus. The transition also raises the Systemic Risk of contagion, because protocols become increasingly interconnected through shared liquidity and data dependencies, so a failure in one can propagate to others.
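The contagion mechanism can be illustrated with a toy threshold-cascade model: each protocol has exposures to others and a loss buffer, and it fails once its losses from failed counterparties exceed that buffer. The protocol names, exposures, and buffers below are hypothetical, and real interdependencies are far less tidy; the sketch only shows how interconnection turns one failure into several.

```python
def cascade(exposures: dict[str, dict[str, float]], buffers: dict[str, float],
            initial_failure: str) -> set[str]:
    """Return every protocol that fails once `initial_failure` fails.

    exposures[a][b] is the value protocol `a` loses if protocol `b` fails
    (via shared liquidity or a data dependency). A protocol fails when its
    total losses from failed counterparties exceed its buffer.
    """
    failed = {initial_failure}
    changed = True
    while changed:  # repeat until no new failures appear (a fixed point)
        changed = False
        for protocol, deps in exposures.items():
            if protocol in failed:
                continue
            losses = sum(amount for dep, amount in deps.items() if dep in failed)
            if losses > buffers.get(protocol, 0.0):
                failed.add(protocol)
                changed = True
    return failed


exposures = {
    "lending": {"oracle_net": 40.0},
    "amm": {"lending": 30.0, "oracle_net": 5.0},
    "data_dao": {"amm": 10.0},
}
buffers = {"lending": 25.0, "amm": 50.0, "data_dao": 20.0}
print(sorted(cascade(exposures, buffers, "oracle_net")))
# ['lending', 'oracle_net']
thin = {**buffers, "amm": 30.0}
print(sorted(cascade(exposures, thin, "oracle_net")))
# ['amm', 'lending', 'oracle_net']
```

Lowering a single buffer extends the cascade by one more protocol, which is the sense in which tighter coupling raises systemic risk.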

Horizon

The future involves the integration of Decentralized Data Ownership into the core of decentralized finance, creating a global, permissionless market for intelligence.

We are moving toward a state where machine learning models are trained on private, decentralized datasets, with providers earning continuous, automated royalties for their contributions.

| Phase | Strategic Focus |
|---|---|
| Infrastructure | Scaling decentralized storage and compute |
| Monetization | Standardizing data-backed derivative contracts |
| Integration | Embedding data rights into automated market makers |

The critical hurdle remains the interface between off-chain physical data and on-chain verification. Achieving a robust, tamper-resistant link between real-world information and decentralized ledgers will define the success of this domain. What is the ultimate limit of verifiable computation when the underlying hardware itself remains susceptible to physical compromise?
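One common mitigation for the off-chain link is to aggregate reports from several independent reporters and take the median. The sketch below assumes that approach and a quorum of at least `2f + 1` reports: with at most `f` faulty reporters, honest reports are a strict majority, so the median cannot leave the range spanned by honest values. Reporter names, values, and the function name are hypothetical. This addresses dishonest or broken reporters, not the compromised-hardware problem raised above.

```python
from statistics import median


def aggregate_reports(reports: dict[str, float], max_faulty: int) -> float:
    """Combine off-chain readings from independent reporters into one on-chain value.

    With at least 2f + 1 reports and at most f faulty ones, the median always
    lies between the smallest and largest honest report.
    """
    if len(reports) < 2 * max_faulty + 1:
        raise ValueError("not enough reports to tolerate the configured faults")
    return median(reports.values())


reports = {"a": 101.0, "b": 99.5, "c": 100.2, "d": 100.0, "e": 10_000.0}
print(aggregate_reports(reports, max_faulty=2))  # 100.2: the outlier has no effect
```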