Essence

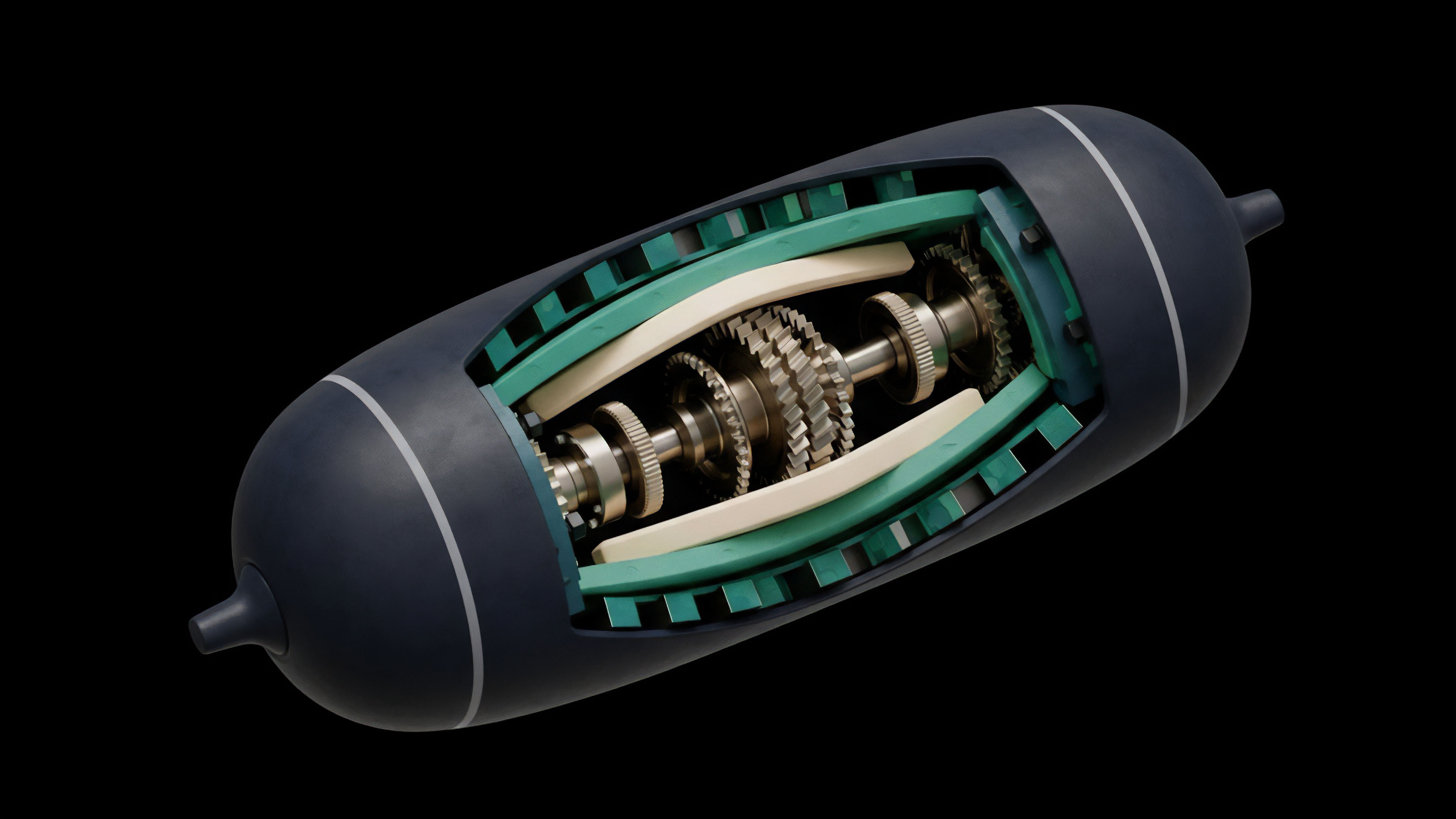

A decentralized clearinghouse serves as the central counterparty for derivatives contracts within a decentralized financial system. Its primary function is to eliminate counterparty risk between two trading parties by acting as a trustless intermediary. In traditional finance, institutions like the Options Clearing Corporation (OCC) perform this role, guaranteeing settlement and managing collateral for options contracts.

The decentralized version achieves this through smart contracts, replacing human-operated institutions with automated code. The architecture focuses on collateral management, margin calculation, and automated liquidation to maintain systemic solvency. This infrastructure is essential for building a robust options market where participants can trade without relying on the creditworthiness of a centralized entity.

A decentralized clearinghouse manages the lifecycle of a derivative contract from initiation to expiration or settlement. It ensures that both the buyer and seller of an options contract post sufficient collateral to cover potential losses. The system continuously monitors the risk profile of each participant’s portfolio, adjusting margin requirements dynamically based on market volatility and price changes.

This automated risk management process prevents cascading defaults and protects the integrity of the market. The core innovation lies in disintermediating the risk transfer process, allowing capital to be managed transparently on-chain.

The decentralized clearinghouse is a trust-minimized risk management layer that ensures the solvency of derivatives markets through automated collateral and liquidation mechanisms.

Origin

The concept of a central clearing counterparty originates from the need to manage systemic risk in traditional financial markets. Before central clearing became standard practice, a single default by a large counterparty could trigger a chain reaction of failures throughout the system, a phenomenon known as contagion. The development of clearinghouses in traditional markets provided a necessary layer of stability by standardizing contracts and guaranteeing performance.

The advent of decentralized finance (DeFi) presented a new challenge: how to replicate this stability without reintroducing centralized trust. Early DeFi derivatives protocols often struggled with capital efficiency. Many systems required full collateralization of positions, meaning a user had to lock up the entire potential value of the contract.

This approach, while simple and safe, significantly limited capital utilization and market participation. The development of a decentralized clearinghouse was driven by the necessity to move beyond this overcollateralized model toward a more capital-efficient design. The goal was to create a system where margin requirements could be dynamically calculated based on portfolio risk, similar to traditional financial models, but executed entirely on-chain.

This required protocols to design complex smart contract architectures capable of handling sophisticated risk calculations and automated liquidations.

Theory

The theoretical foundation of a decentralized clearinghouse rests on the principles of margin calculation and risk modeling. Unlike simple overcollateralized systems, a sophisticated clearinghouse calculates margin requirements based on the probability distribution of potential price movements.

The core challenge lies in translating complex quantitative finance models into deterministic smart contract logic. The system must accurately assess the risk of a user’s entire portfolio, which includes multiple long and short positions across different assets and expiration dates. A key theoretical approach involves portfolio margining.

This model recognizes that certain positions can offset each other’s risk. For example, a short call option paired with a long call option at a higher strike on the same asset (a call spread) has a capped maximum loss, whereas the short call held in isolation has unbounded downside. A clearinghouse calculates a single margin requirement for the entire portfolio, rather than summing separate requirements for individual positions.

This allows for significantly greater capital efficiency. The system relies heavily on accurate pricing data from external oracles. The risk calculation uses pricing models, often variants of the Black-Scholes formula, to determine the value of options and the sensitivity of the portfolio to changes in underlying asset price and volatility (Greeks).

The calculation of Greeks, specifically Delta, Gamma, Vega, and Theta, is essential for understanding the risk profile.

- Delta Exposure: Measures the change in an option’s price relative to a $1 change in the underlying asset’s price. The clearinghouse uses this to estimate how much collateral is required to cover losses from small price movements.

- Gamma Risk: Measures the change in Delta for a $1 change in the underlying price. This second-order risk is critical during periods of high volatility, as it indicates how quickly the required hedge amount changes.

- Vega Sensitivity: Measures the change in an option’s price relative to a one-percentage-point change in implied volatility. This is a primary driver of risk in options portfolios, as volatility shocks can rapidly increase margin requirements.

- Theta Decay: Measures the time decay of an option’s value. The clearinghouse accounts for this predictable decay to adjust margin requirements over time.
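The four sensitivities listed above can be sketched with the closed-form Black-Scholes expressions for a European call. This is a minimal illustration, not a description of any particular protocol: the function name `call_greeks`, the absence of dividends, and the scaling choices (Vega per one volatility point, Theta per calendar day) are assumptions made for the example.

```python
import math


def _norm_pdf(x: float) -> float:
    """Standard normal probability density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot: float, strike: float, rate: float, vol: float, t_years: float) -> dict:
    """Black-Scholes Greeks for a European call on a non-dividend-paying asset.

    Hypothetical helper for illustration; vol and rate are annualized decimals.
    """
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t

    delta = _norm_cdf(d1)
    gamma = _norm_pdf(d1) / (spot * vol * sqrt_t)
    # Vega scaled to a one-percentage-point move in implied volatility.
    vega = spot * _norm_pdf(d1) * sqrt_t / 100.0
    # Theta scaled to one calendar day.
    theta = (
        -(spot * _norm_pdf(d1) * vol) / (2.0 * sqrt_t)
        - rate * strike * math.exp(-rate * t_years) * _norm_cdf(d2)
    ) / 365.0
    return {"delta": delta, "gamma": gamma, "vega": vega, "theta": theta}


# Example: at-the-money call, 20% volatility, one year to expiry, zero rate.
greeks = call_greeks(spot=100.0, strike=100.0, rate=0.0, vol=0.20, t_years=1.0)
```

An on-chain implementation would need fixed-point arithmetic and an approximation of the normal distribution, which is part of why translating these models into deterministic contract logic is difficult.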

The liquidation mechanism is a critical component of the clearinghouse’s theoretical design. When a user’s portfolio equity falls below the required margin, the system must liquidate positions to restore solvency. The design must ensure that liquidations occur quickly and efficiently, before the account’s equity turns negative and the loss falls on other participants.

This process involves a trade-off between allowing enough time for users to add collateral and ensuring immediate action during extreme market movements.
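One way to picture this trade-off is a warning band above the maintenance margin: accounts inside the band are asked for more collateral, and accounts below the maintenance level are liquidated immediately. The sketch below is hypothetical; the `Account` fields, the `margin_status` function, and the 10% buffer are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Account:
    collateral: float          # posted collateral, in the quote currency
    unrealized_pnl: float      # mark-to-market PnL of open positions
    maintenance_margin: float  # minimum equity required by the risk model


def equity(account: Account) -> float:
    """Collateral plus unrealized profit or loss."""
    return account.collateral + account.unrealized_pnl


def margin_status(account: Account, warning_buffer: float = 0.10) -> str:
    """Classify an account as healthy, in margin call, or liquidatable.

    warning_buffer is an assumed parameter: the fraction above the
    maintenance margin inside which the user is warned but not liquidated.
    """
    eq = equity(account)
    if eq < account.maintenance_margin:
        return "liquidate"
    if eq < account.maintenance_margin * (1.0 + warning_buffer):
        return "margin_call"
    return "healthy"
```

A wider buffer gives users more time to respond but ties up more of their capital; a narrower one is more capital-efficient but leaves less room before liquidation.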

| Model Type | Risk Calculation Basis | Capital Efficiency | Systemic Risk Profile |
|---|---|---|---|
| Standard Margin (Individual Position) | Each position calculated independently | Low | Lower for individual, higher for market-wide inefficiency |
| Portfolio Margin (Cross-Position) | Net risk of all positions in portfolio | High | Lower overall capital requirements, but higher potential for cascading failure during extreme events |
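The difference between the two rows can be made concrete with a simplified scenario-based calculation. The sketch below assumes margin equals the worst-case loss at expiry across a fixed grid of underlying prices; real risk engines also stress volatility and time. The `OptionPosition` type, the function names, and the example premiums are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class OptionPosition:
    kind: str        # "call" or "put"
    strike: float
    quantity: float  # positive = long, negative = short
    premium: float   # premium paid (long) or received (short) per unit


def pnl_at_expiry(pos: OptionPosition, spot: float) -> float:
    """Profit or loss of one position if the underlying settles at `spot`."""
    if pos.kind == "call":
        intrinsic = max(spot - pos.strike, 0.0)
    else:
        intrinsic = max(pos.strike - spot, 0.0)
    return pos.quantity * (intrinsic - pos.premium)


def worst_loss(pnls: List[float]) -> float:
    """Largest loss across scenarios, floored at zero."""
    return max(0.0, -min(pnls))


def standard_margin(positions: List[OptionPosition], scenarios: List[float]) -> float:
    """Sum of each position's own worst-case loss (no offsets recognized)."""
    return sum(worst_loss([pnl_at_expiry(p, s) for s in scenarios]) for p in positions)


def portfolio_margin(positions: List[OptionPosition], scenarios: List[float]) -> float:
    """Worst-case loss of the netted portfolio across the same scenarios."""
    return worst_loss([sum(pnl_at_expiry(p, s) for p in positions) for s in scenarios])


# Example: a call spread (short the 100 call, long the 110 call).
spread = [
    OptionPosition("call", 100.0, -1.0, 8.0),
    OptionPosition("call", 110.0, 1.0, 4.0),
]
scenarios = [80.0, 90.0, 100.0, 110.0, 120.0, 130.0]
per_position = standard_margin(spread, scenarios)  # 26.0 on this grid
netted = portfolio_margin(spread, scenarios)       # 6.0 on this grid
```

On this grid the netted requirement is less than a quarter of the per-position figure, which is the capital-efficiency gain the table describes. The per-position figure also depends on how far the scenario grid extends, since the uncovered short call has no loss cap.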

Approach

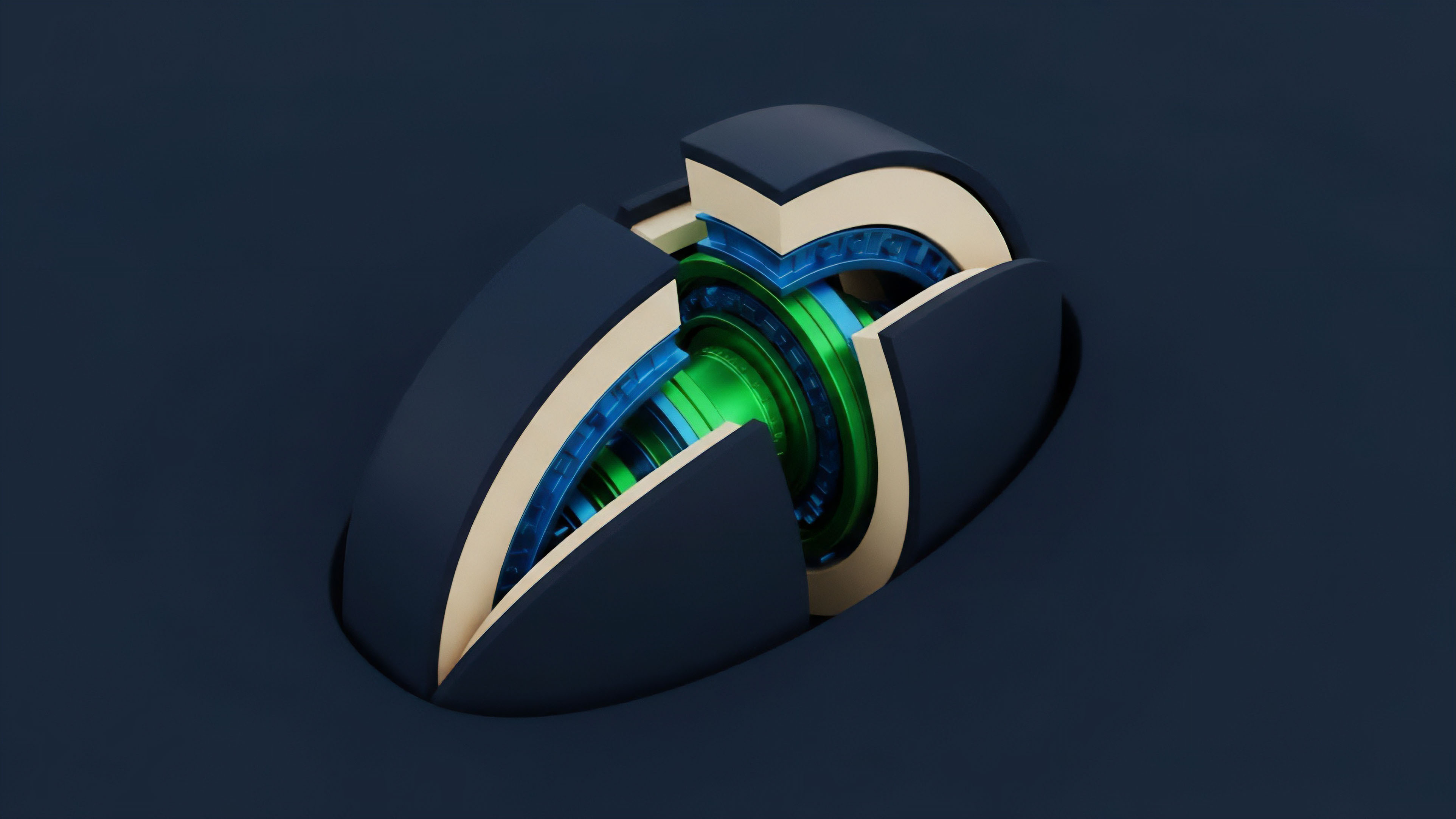

The implementation of a decentralized clearinghouse requires specific architectural decisions to manage risk effectively. A common approach involves a pooled collateral model where all participants contribute to a shared insurance fund. This fund absorbs the losses that remain when an underwater position cannot be fully liquidated in a volatile market.

The design must carefully balance the size of this fund against the capital efficiency of the system. If the fund is too small, a single large default could deplete it. If it is too large, it represents locked capital that could be used elsewhere.
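The sizing problem can be illustrated with a small sketch of how a fund might absorb a liquidation shortfall. The function name `absorb_shortfall` and the idea of returning the uncovered remainder (which a protocol would have to handle some other way, for example by socializing losses) are assumptions for the example.

```python
from typing import Tuple


def absorb_shortfall(fund_balance: float, shortfall: float) -> Tuple[float, float]:
    """Apply a liquidation shortfall to the insurance fund.

    Returns (new_fund_balance, uncovered_loss). Hypothetical helper.
    """
    if fund_balance < 0 or shortfall < 0:
        raise ValueError("fund_balance and shortfall must be non-negative")
    covered = min(fund_balance, shortfall)
    return fund_balance - covered, shortfall - covered


# A well-funded pool covers the loss in full.
balance, uncovered = absorb_shortfall(1_000.0, 300.0)

# An undersized pool is depleted and leaves a residual loss.
small_balance, small_uncovered = absorb_shortfall(100.0, 300.0)
```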

The choice of liquidation mechanism defines the system’s resilience. Some protocols rely on automated bots or “keepers” that monitor portfolios and trigger liquidations when necessary. The efficiency of these liquidations depends on gas costs and network congestion.

If the network slows down during high volatility, liquidations may fail, leaving the clearinghouse exposed. Other approaches involve a more complex auction mechanism where liquidators bid on the underwater positions. The selection of a risk model is central to the approach.

Protocols must decide whether to use a fixed volatility assumption or to incorporate real-time volatility data. A static model simplifies calculation but fails to account for market changes, potentially leading to inaccurate margin requirements. A dynamic model requires more complex on-chain calculations and relies on high-quality, low-latency data feeds.
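A rough sketch of the two options: a static model uses a fixed volatility, while a dynamic model estimates volatility from recent prices. The example below combines them by treating the fixed value as a floor under the realized estimate. The function names, the daily sampling assumption (`periods_per_year=365`), and the 50% floor are illustrative assumptions, not a recommended calibration.

```python
import math
import statistics
from typing import Sequence


def realized_volatility(prices: Sequence[float], periods_per_year: int = 365) -> float:
    """Annualized standard deviation of log returns over the price window."""
    if len(prices) < 3:
        raise ValueError("need at least three prices to estimate volatility")
    log_returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return statistics.stdev(log_returns) * math.sqrt(periods_per_year)


def margin_volatility(prices: Sequence[float], floor_vol: float = 0.50) -> float:
    """Volatility input for margining: the realized estimate, never below a fixed floor."""
    return max(floor_vol, realized_volatility(prices))
```

The floor keeps margin from collapsing during quiet periods, while the realized component lets requirements rise when the market becomes turbulent.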

The architecture must also account for the potential manipulation of oracle feeds, which could be exploited to game the margin system.
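One common defensive pattern is to aggregate several independent feeds with a median and to reject updates that jump too far from the last accepted price. The sketch below is an assumption-laden illustration: `aggregate_price`, the three-feed minimum, and the 10% deviation limit are hypothetical, and the quotes are plain numbers rather than calls to any real oracle API.

```python
import statistics
from typing import Sequence, Tuple


def aggregate_price(
    quotes: Sequence[float],
    last_price: float,
    max_deviation: float = 0.10,
) -> Tuple[float, bool]:
    """Return (price, accepted).

    The median tolerates a minority of manipulated feeds. If the median
    moves more than max_deviation from last_price, the update is rejected
    and the previous price is kept.
    """
    if len(quotes) < 3:
        raise ValueError("need at least three independent quotes")
    candidate = statistics.median(quotes)
    if abs(candidate - last_price) / last_price > max_deviation:
        return last_price, False
    return candidate, True


# One outlier feed (250) does not move the median.
price, accepted = aggregate_price([100.0, 101.0, 250.0], last_price=100.0)
```

Rejecting large moves also has a cost: in a genuine crash the guard would hold a stale price, so a real system would need a separate path for confirming and accepting legitimate jumps.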

The operational success of a decentralized clearinghouse depends on its ability to execute liquidations swiftly and fairly, balancing capital efficiency with systemic risk protection.

Evolution

The evolution of decentralized clearinghouses has moved through several distinct phases, each driven by lessons learned from market stress. Early protocols often implemented a simple, overcollateralized design where the clearinghouse function was tightly coupled with a specific options exchange. These initial systems were robust against individual defaults but were highly capital inefficient, limiting their ability to scale.

The next phase involved the introduction of portfolio margin and cross-collateralization. This innovation allowed users to post a mix of assets as collateral and to offset risks between different positions. The challenge in this phase was to accurately calculate the risk of complex portfolios on-chain, which led to a trade-off between computational cost and accuracy.

Protocols began to experiment with off-chain risk calculation, where a trusted oracle or centralized service calculates the margin requirements and then submits the result on-chain for verification. This hybrid approach sacrifices some decentralization for efficiency. The current stage of development focuses on creating specialized clearinghouses that act as a shared risk layer for multiple exchanges.

This allows liquidity to be aggregated across different venues, increasing capital efficiency for all participants. The challenge now shifts to managing risk across different blockchains. The concept of a cross-chain clearinghouse requires new protocols to ensure atomicity and finality of transactions across separate networks, a complex problem given the asynchronous nature of different blockchains.

Horizon

Looking ahead, the future development of decentralized clearinghouses will be defined by three primary challenges: cross-chain interoperability, regulatory pressure, and the integration of new risk models. The ability to manage risk across multiple blockchains is essential for creating a truly global derivatives market. This requires a new generation of protocols capable of handling complex state transitions between chains, potentially through zero-knowledge proofs or other cryptographic techniques.

The regulatory environment presents a significant challenge. As decentralized clearinghouses gain scale, they will inevitably attract the attention of regulators who view them as critical financial market infrastructure. The decentralized nature of these protocols complicates traditional regulatory approaches.

Future architectures may need to incorporate mechanisms for compliance, such as whitelisting specific users or implementing circuit breakers, while maintaining the core principles of decentralization. The next generation of risk models will likely move beyond traditional quantitative finance. Protocols are beginning to explore the use of machine learning models to predict volatility and calculate margin requirements dynamically.

These models, trained on real-time market data, could offer greater precision than static formulas. The challenge lies in ensuring transparency and verifiability of these complex models on-chain. The clearinghouse of the future will need to adapt to a rapidly changing market structure, potentially integrating with real-world assets and new forms of collateral.

The evolution of decentralized clearinghouses will hinge on their ability to manage cross-chain risk and adapt to a complex regulatory landscape without compromising their core principles of trust minimization.
