Essence

Data Source Synthesis in crypto options is the systematic aggregation and validation of disparate information streams to generate accurate inputs for pricing and risk management engines. A decentralized options protocol cannot function effectively without a reliable mechanism to establish the fair value of its underlying assets and, critically, the volatility expectations of the market. The core challenge lies in translating off-chain market dynamics (the real-time price action and implied volatility from centralized exchanges) into a format that can be securely consumed by on-chain smart contracts.

This synthesis process bridges the gap between the chaotic, high-frequency nature of off-chain trading and the deterministic, state-based logic of the blockchain. The objective is to produce a singular, canonical data point for a given option parameter at a specific moment in time.

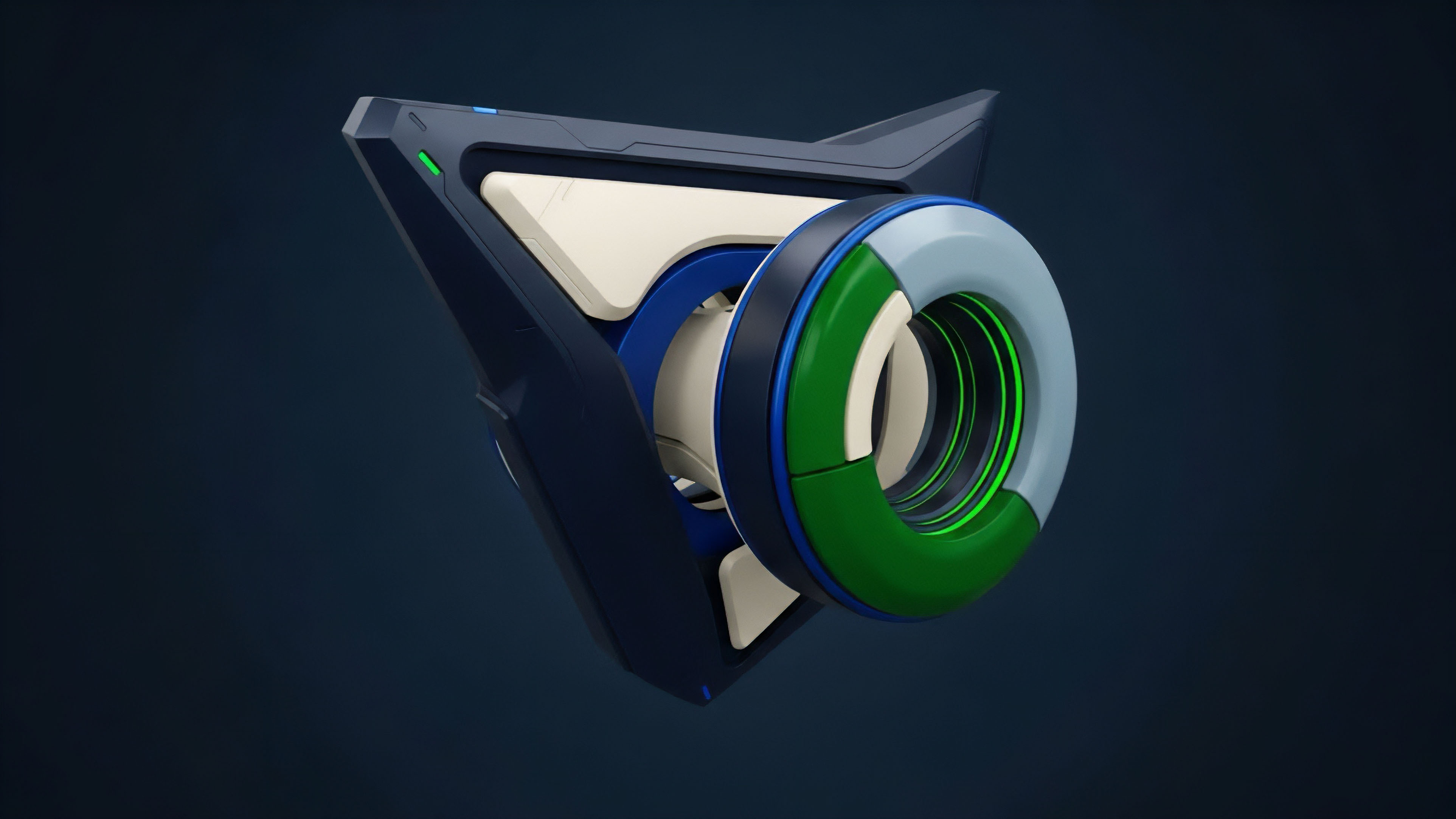

Data Source Synthesis for options protocols creates a single, canonical data point by aggregating real-time market data and volatility metrics from disparate sources, ensuring accurate pricing and risk management.

The synthesis must account for both the spot price of the underlying asset and the more complex data required for options pricing models. This includes the volatility surface, which maps implied volatility across different strikes and maturities. The integrity of this synthesized data directly dictates the solvency of the protocol’s margin system and the accuracy of its liquidations.

A failure in data synthesis leads directly to arbitrage opportunities and systemic risk, as demonstrated by early protocol failures where single-source price feeds were manipulated.

Origin

The necessity of Data Source Synthesis in decentralized options stems directly from the “oracle problem,” which emerged as early DeFi protocols attempted to execute financial logic based on external market data. Traditional finance (TradFi) relies on a long-established chain of trust, where data providers like Bloomberg and Refinitiv are assumed to be reliable, and a central clearinghouse manages counterparty risk.

The crypto space, by design, eliminates this central trust. Early attempts at decentralized options and lending protocols quickly learned that a single price feed, or even a simple time-weighted average price (TWAP) from one decentralized exchange, was insufficient and easily exploitable.

The origin of Data Source Synthesis in crypto options lies in the “oracle problem,” where early DeFi protocols found single-source data feeds insufficient and vulnerable to manipulation.

The first generation of options protocols struggled with this vulnerability. If an options contract required a price feed for settlement, and that feed could be manipulated through flash loans or concentrated liquidity, the entire protocol became a target. The initial solution involved a transition from single-source oracles to multi-source aggregation.

This evolution began by synthesizing data from multiple decentralized exchanges (DEXs) and eventually incorporated data from centralized exchanges (CEXs) to achieve a more robust representation of global market prices. The focus shifted from simply getting data on-chain to verifying the provenance and statistical integrity of that data. The synthesis process evolved from simple averaging to complex weighting mechanisms that account for source liquidity and historical reliability.

Theory

The theoretical foundation for Data Source Synthesis in options pricing rests on the requirements of quantitative finance models. The Black-Scholes model, for instance, requires five inputs: strike price, time to expiration, underlying asset price, risk-free interest rate, and volatility. The strike price is a fixed contract term, and time to expiration follows deterministically from the expiry date. The risk-free rate can be derived from on-chain lending protocols. The underlying price and volatility inputs, by contrast, require continuous synthesis.
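To make the five inputs concrete, the following is a minimal sketch of the standard Black-Scholes formula for a European call on a non-dividend-paying asset. The function and parameter names are illustrative assumptions, not taken from any particular protocol; `spot` and `vol` are the two inputs that a synthesis layer would need to supply continuously.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes_call(spot: float, strike: float, t_years: float,
                       rate: float, vol: float) -> float:
    """European call price under Black-Scholes (no dividends).

    spot    -- underlying asset price (synthesized input)
    strike  -- strike price (fixed contract term)
    t_years -- time to expiration in years
    rate    -- continuously compounded risk-free rate
    vol     -- annualized volatility as a decimal (synthesized input)
    """
    if t_years <= 0 or vol <= 0:
        # At expiry (or with no volatility input) fall back to intrinsic value.
        return max(spot - strike, 0.0)
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


# Example: the same contract priced under two different volatility inputs.
low_vol_price = black_scholes_call(100.0, 100.0, 1.0, 0.05, 0.20)
high_vol_price = black_scholes_call(100.0, 100.0, 1.0, 0.05, 0.40)
```

Comparing `low_vol_price` with `high_vol_price` shows how strongly the output depends on the volatility input, which is why a stale or manipulated volatility feed translates directly into mispriced options.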

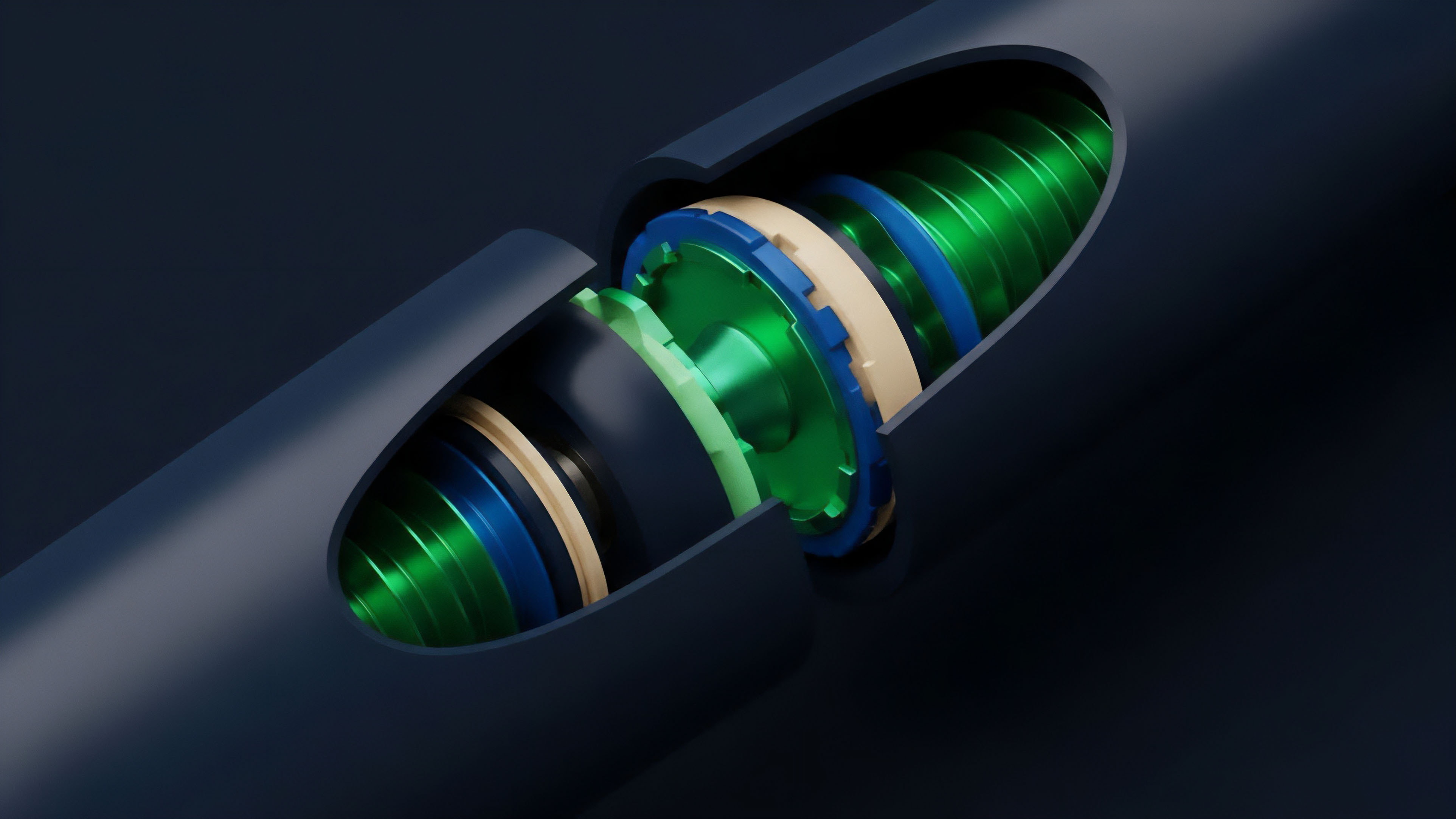

The most complex theoretical challenge is synthesizing the volatility surface. A volatility surface is a three-dimensional plot that represents the implied volatility of an option across various strike prices and maturities. In TradFi, this surface is derived from a high-frequency analysis of options order books.

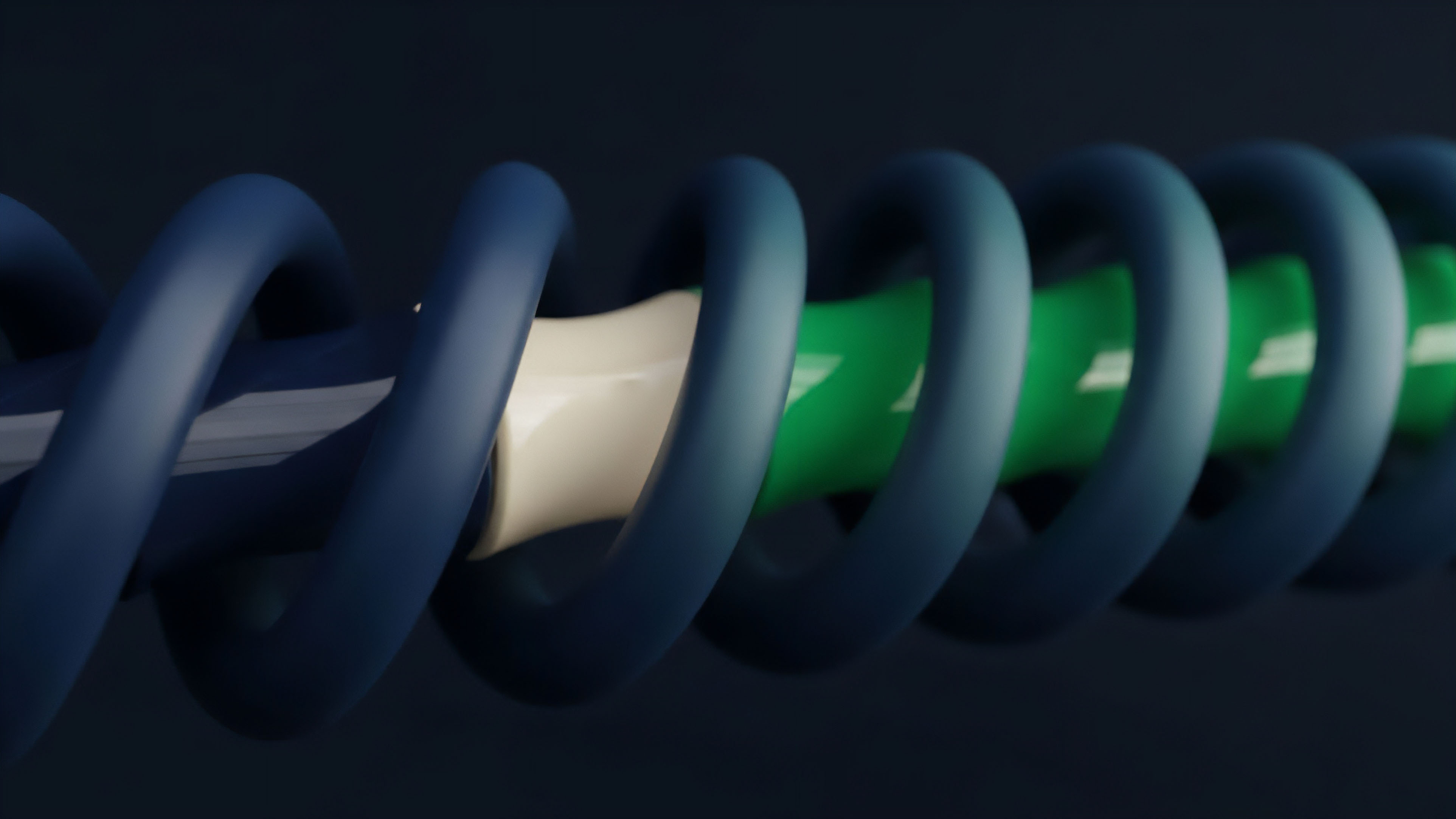

In decentralized markets, this data is often fragmented or non-existent for specific strikes and expiries. The synthesis process must therefore construct this surface by combining:

- On-chain implied volatility: Data derived from decentralized options order books or AMMs, which can be thin or illiquid.

- Off-chain implied volatility: Data sourced from large, liquid centralized exchanges, where the majority of options trading volume occurs.

- Historical volatility: Statistical calculations based on past price movements of the underlying asset.
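Of the three inputs listed above, historical volatility is the simplest to compute. A minimal sketch, assuming evenly spaced price observations and a hypothetical `periods_per_year` annualization factor (365 for daily crypto prices, since the market trades every day):

```python
import math


def realized_volatility(prices, periods_per_year=365):
    """Annualized sample standard deviation of log returns.

    prices           -- chronologically ordered, evenly spaced prices
    periods_per_year -- number of observation intervals in one year (assumed)
    """
    if len(prices) < 3:
        raise ValueError("need at least three prices to estimate volatility")
    log_returns = [math.log(later / earlier)
                   for earlier, later in zip(prices, prices[1:])]
    mean = sum(log_returns) / len(log_returns)
    variance = sum((r - mean) ** 2 for r in log_returns) / (len(log_returns) - 1)
    return math.sqrt(variance * periods_per_year)
```

This is a backward-looking estimate only. It says nothing about market expectations, which is why it is usually treated as a fallback or sanity check alongside implied volatility rather than as a replacement for it.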

The synthesis process must reconcile these disparate data points, often by applying weighting algorithms that prioritize data based on liquidity and recency. A key theoretical consideration is the trade-off between latency and security. High-frequency updates provide accurate real-time pricing but increase the risk of manipulation during brief windows of market stress, because a short-lived distorted quote is more likely to be captured and acted upon.

Conversely, lower-frequency updates reduce risk but result in stale pricing.
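One way to picture the weighting described above is a blend in which each volatility quote counts in proportion to its liquidity and decays with age. The sketch below is an illustration under stated assumptions: the `VolQuote` fields, the exponential decay, and the `half_life_seconds` parameter are hypothetical choices, not a scheme documented by any specific protocol.

```python
from dataclasses import dataclass


@dataclass
class VolQuote:
    source: str         # hypothetical label, e.g. "cex", "onchain_amm", "historical"
    implied_vol: float  # annualized volatility as a decimal (0.65 means 65%)
    liquidity: float    # quoted size or open interest, in any consistent unit
    age_seconds: float  # time elapsed since the quote was observed


def blend_volatility(quotes, half_life_seconds=60.0):
    """Weighted average of volatility quotes.

    Each weight is liquidity multiplied by an exponential time decay, so a
    quote loses half its influence every `half_life_seconds`.
    """
    weights = [q.liquidity * 0.5 ** (q.age_seconds / half_life_seconds)
               for q in quotes]
    total = sum(weights)
    if total <= 0:
        raise ValueError("no usable quotes")
    return sum(w * q.implied_vol for w, q in zip(weights, quotes)) / total
```

A shorter half-life tracks the market more closely but gives a single fresh quote more influence, while a longer one smooths over brief distortions at the cost of staleness. That is the latency and security trade-off expressed as one parameter.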

The theoretical challenge of Data Source Synthesis centers on constructing a robust volatility surface by reconciling on-chain implied volatility from thin AMMs with off-chain data from liquid centralized exchanges.

The following table outlines the key data components required for options pricing models and their source types:

| Data Component | Source Type | Synthesis Challenge |
|---|---|---|
| Underlying Asset Price | Off-chain (CEXs), On-chain (DEXs) | Latency and manipulation resistance during high volatility events. |
| Implied Volatility Surface | Off-chain (CEX options books), On-chain (AMMs) | Reconciling data from different venues and filling gaps for illiquid strikes. |
| Risk-Free Rate | On-chain (lending protocols) | Identifying a reliable, decentralized interest rate benchmark. |
| Liquidation Thresholds | On-chain (protocol state) | Combining synthesized price data with protocol-specific collateral ratios. |

Approach

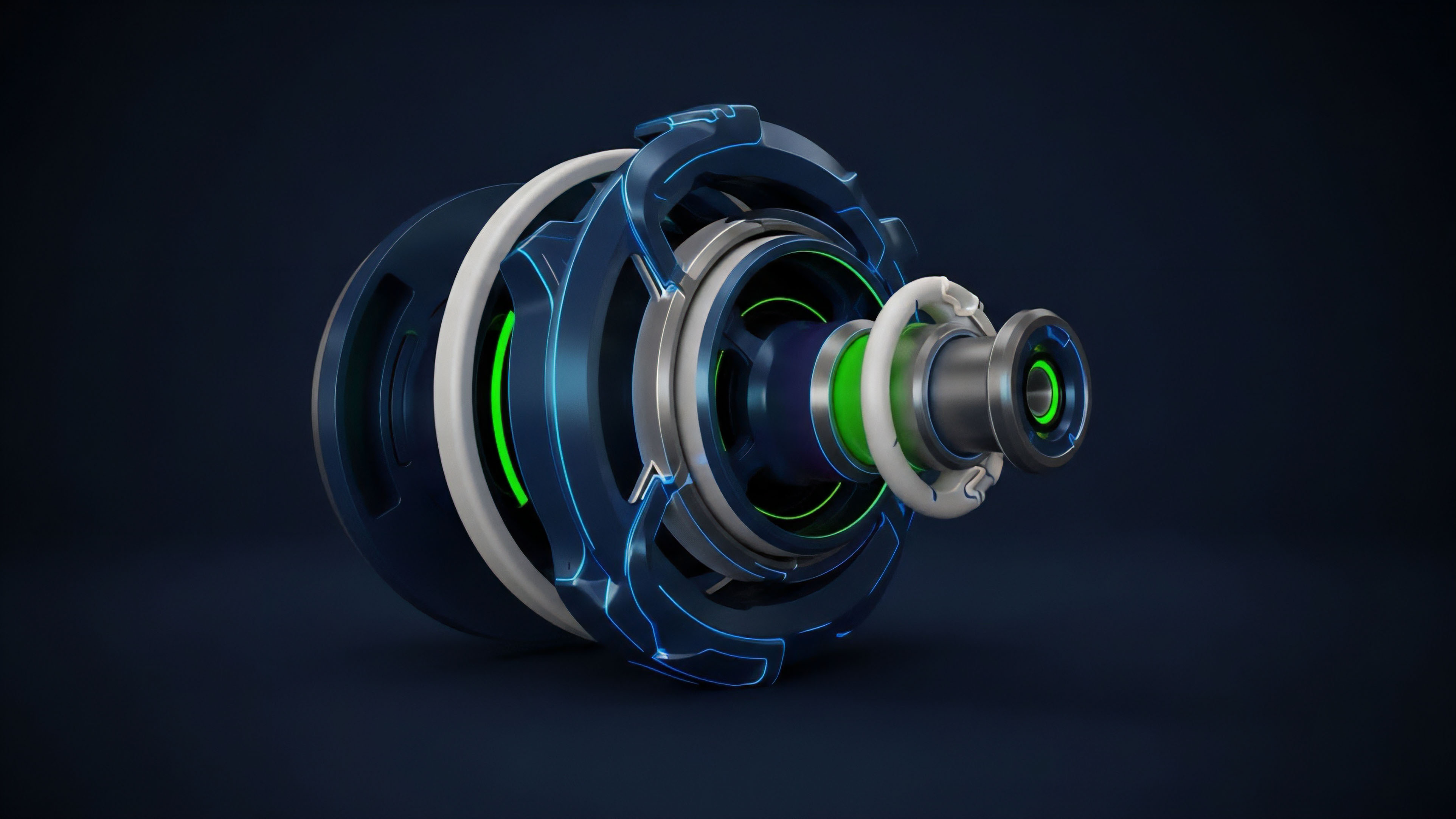

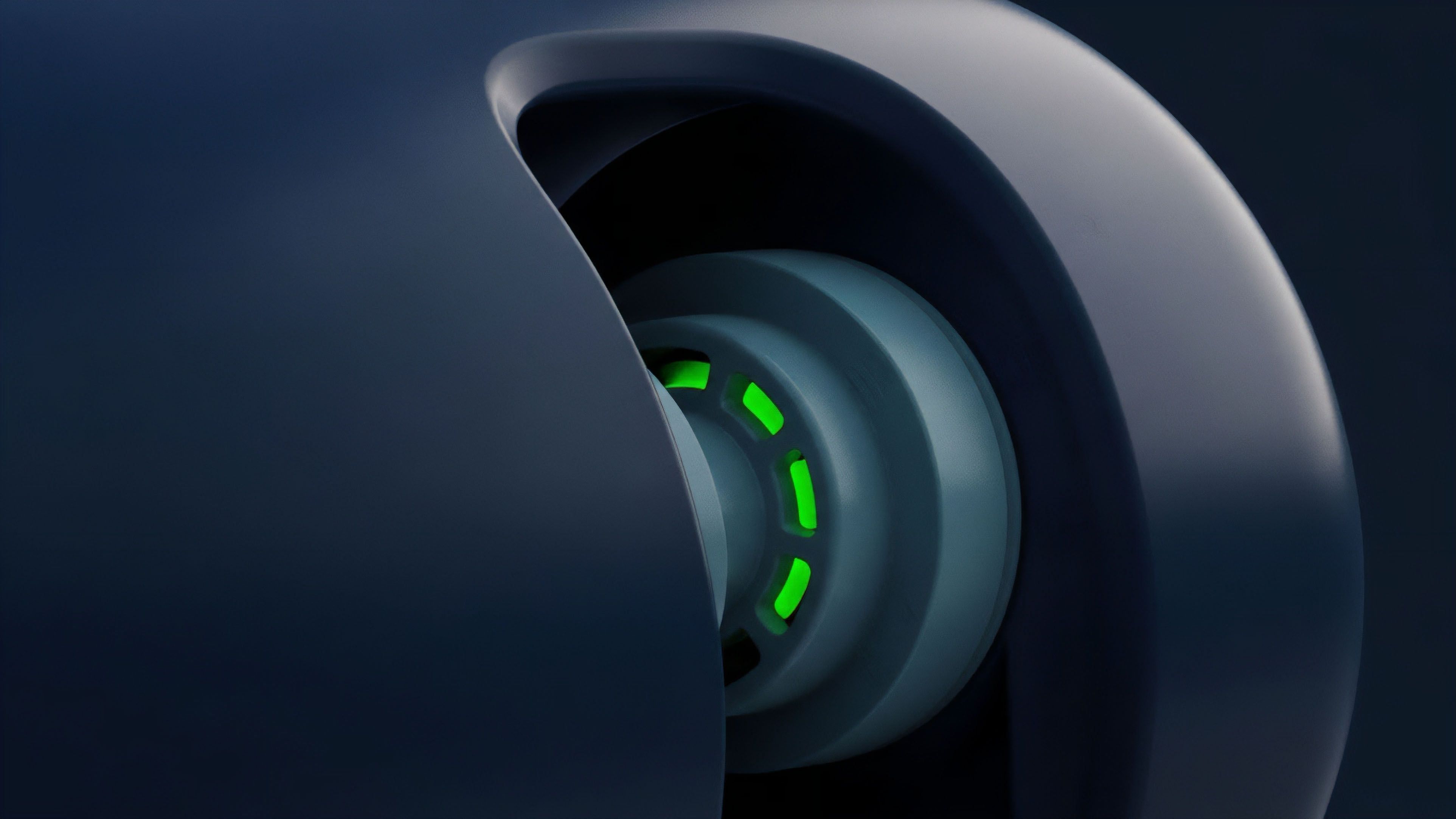

The practical approach to Data Source Synthesis involves a multi-layered architecture designed to mitigate specific risks. The core strategy is decentralized data aggregation, which involves combining data from numerous independent sources to eliminate single points of failure. This approach relies on a network of oracles, where each oracle node fetches data from different centralized exchanges, decentralized exchanges, and data aggregators.

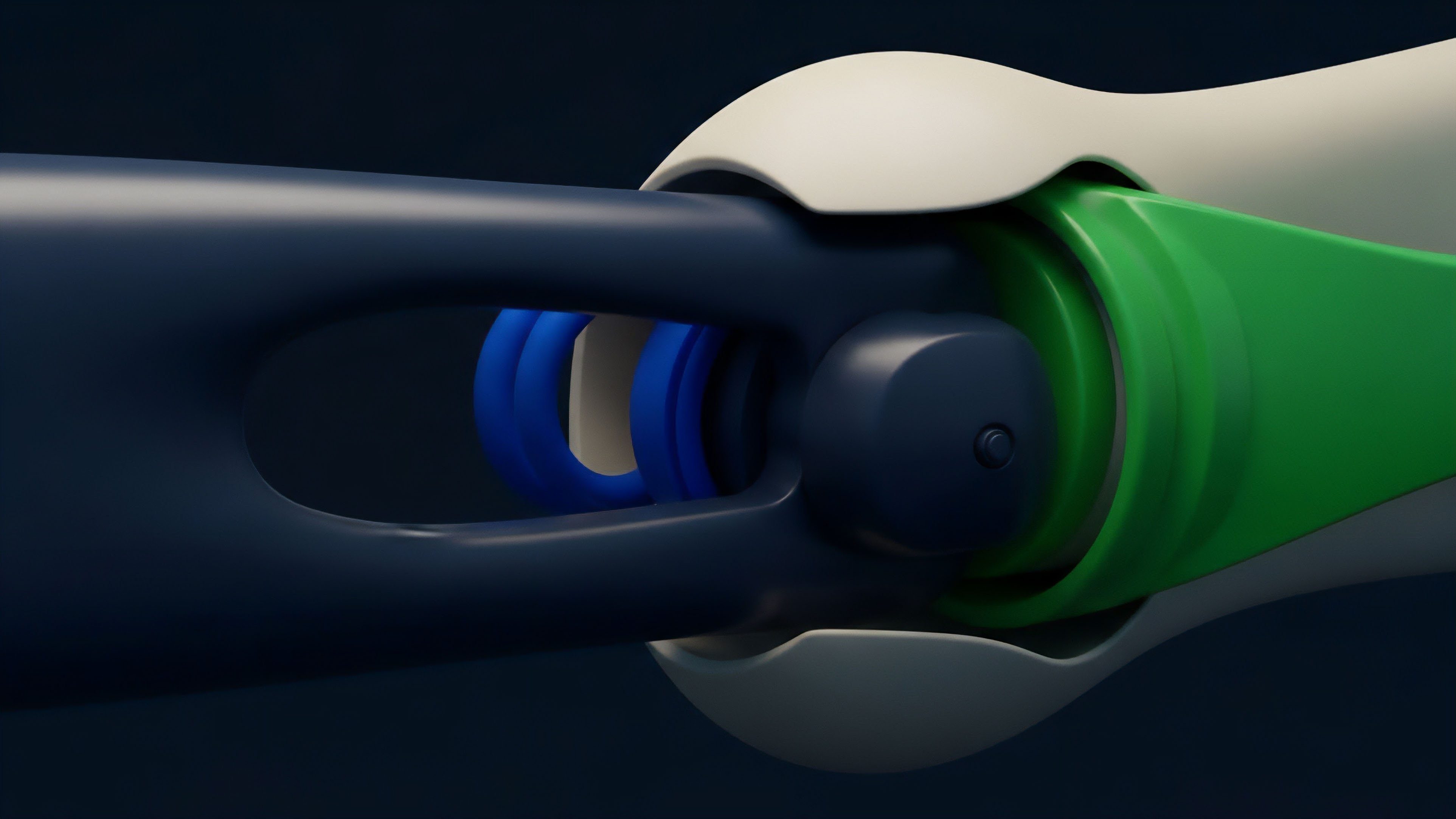

The first step in this process is data collection from various endpoints. The second step is data validation and filtering. This is where the synthesis occurs: the aggregated data points are analyzed for outliers.

A common technique involves calculating a weighted average or median of all reported prices, discarding data points that deviate significantly from the consensus. The weights assigned to each data source often reflect its liquidity or historical reliability. A critical component of this approach is the design of the data update mechanism.
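The filter-then-aggregate step can be sketched as follows. This is a minimal illustration, assuming each oracle report arrives as a `(price, weight)` pair with a positive weight and that a fixed `max_deviation` fraction defines an outlier. Both are hypothetical simplifications of what a production oracle network would do.

```python
from statistics import median


def consensus_price(reports, max_deviation=0.02):
    """Weighted median of reports that sit close to the unweighted median.

    reports       -- list of (price, weight) pairs; weight might reflect the
                     source's liquidity or historical reliability
    max_deviation -- maximum allowed relative distance from the plain median
    """
    if not reports:
        raise ValueError("no reports")
    mid = median([price for price, _ in reports])
    # Discard outliers before applying any weights, so that a heavily
    # weighted but manipulated source cannot drag the result.
    kept = sorted((price, weight) for price, weight in reports
                  if abs(price - mid) <= max_deviation * mid)
    total = sum(weight for _, weight in kept)
    if not kept or total <= 0:
        raise ValueError("no reports survived filtering")
    cumulative = 0.0
    for price, weight in kept:
        cumulative += weight
        if cumulative >= total / 2:
            return price


# The 150.0 report carries the largest weight but is filtered as an outlier.
price = consensus_price([(100.0, 5), (100.5, 3), (99.8, 4), (150.0, 10)])
```

Filtering against the unweighted median first is a deliberate choice in this sketch: if weights were applied before filtering, compromising the single most trusted source would be enough to move the consensus.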

Options protocols require more frequent data updates than typical lending protocols, especially during periods of high market activity. The cost of these updates, paid in gas fees, creates a fundamental trade-off. To manage this, protocols often use a hybrid approach where data updates are triggered only when a significant price deviation occurs or when a liquidation event is imminent.

This reduces operational costs while maintaining sufficient accuracy for risk management.
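The hybrid trigger described above might look like the following sketch. The threshold values, the `liquidation_buffer` parameter, and the idea of passing in a list of open positions' liquidation prices are all assumptions made for illustration. A real protocol would derive these from its own margin rules.

```python
def should_push_update(last_onchain_price, observed_price, liquidation_prices,
                       deviation_threshold=0.005, liquidation_buffer=0.01):
    """Decide whether paying gas for an on-chain price update is justified.

    last_onchain_price  -- price currently stored on-chain
    observed_price      -- latest synthesized off-chain price
    liquidation_prices  -- liquidation prices of open positions (assumed input)
    deviation_threshold -- relative price move that forces an update
    liquidation_buffer  -- relative distance at which a liquidation counts
                           as imminent
    """
    deviation = abs(observed_price - last_onchain_price) / last_onchain_price
    if deviation >= deviation_threshold:
        return True
    # Even a small move matters if it brings a position close to liquidation.
    return any(abs(observed_price - liq) / observed_price <= liquidation_buffer
               for liq in liquidation_prices)
```

With these defaults, a 0.2% move with no positions near liquidation would not trigger an update, while the same move with a position 0.7% from its liquidation price would.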

The current approach relies on decentralized data aggregation, where multiple oracle nodes gather data from diverse sources, validate it by filtering outliers, and apply weighting algorithms to create a robust consensus price.

The synthesis process must also account for the difference between price data and volatility data. Volatility surfaces are not single data points; they are complex structures that require continuous recalculation. Current approaches often rely on external services that specialize in calculating implied volatility surfaces from CEX data and then provide a simplified feed to the on-chain protocol.

This introduces a necessary, but centralized, component into the synthesis chain.

Evolution

The evolution of Data Source Synthesis for options protocols mirrors the broader progression of decentralized finance from simple, fragile systems to more robust, complex architectures. Early options protocols often relied on simplistic TWAP oracles or even manual updates, which proved disastrous during high-volatility events.

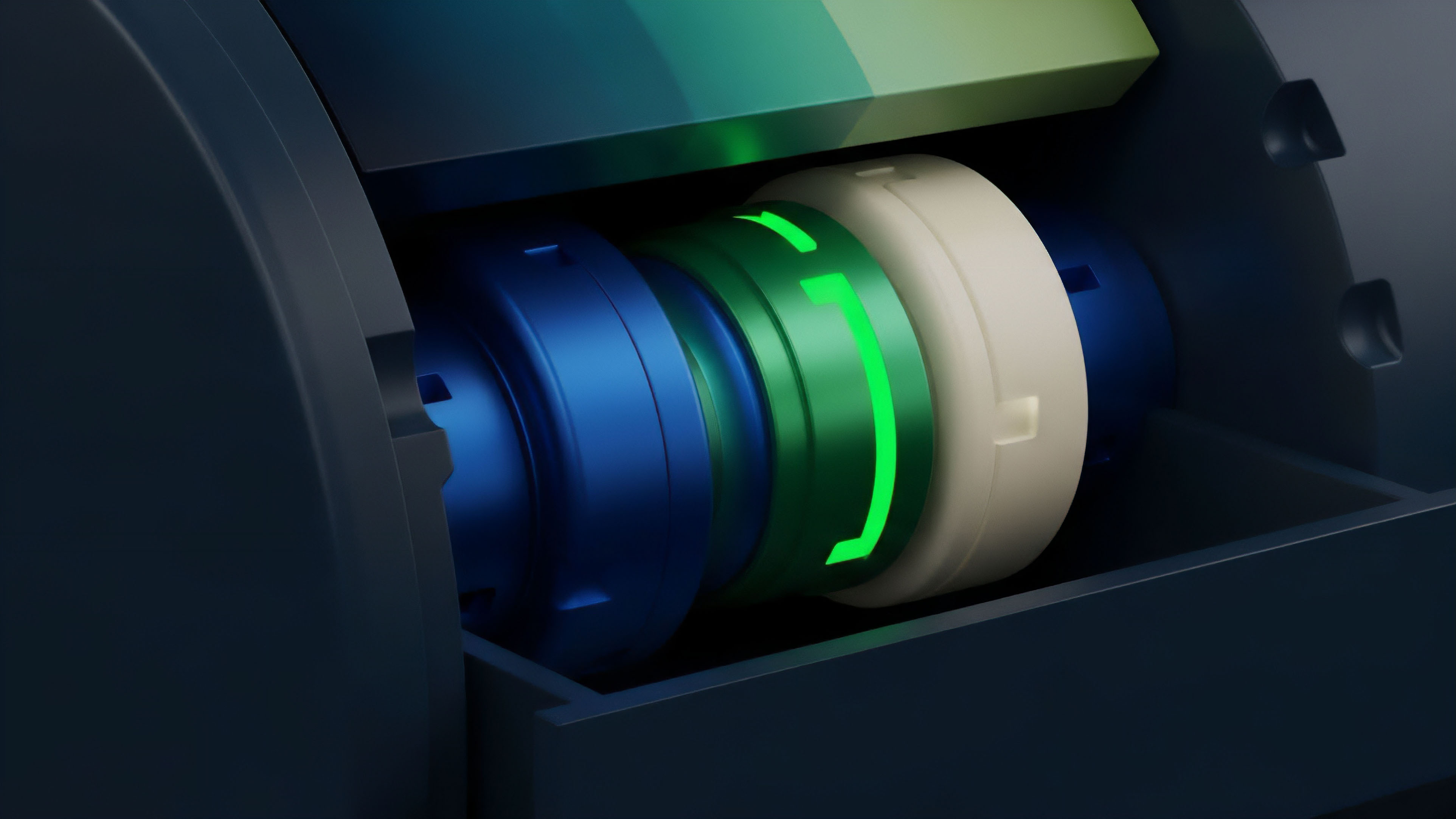

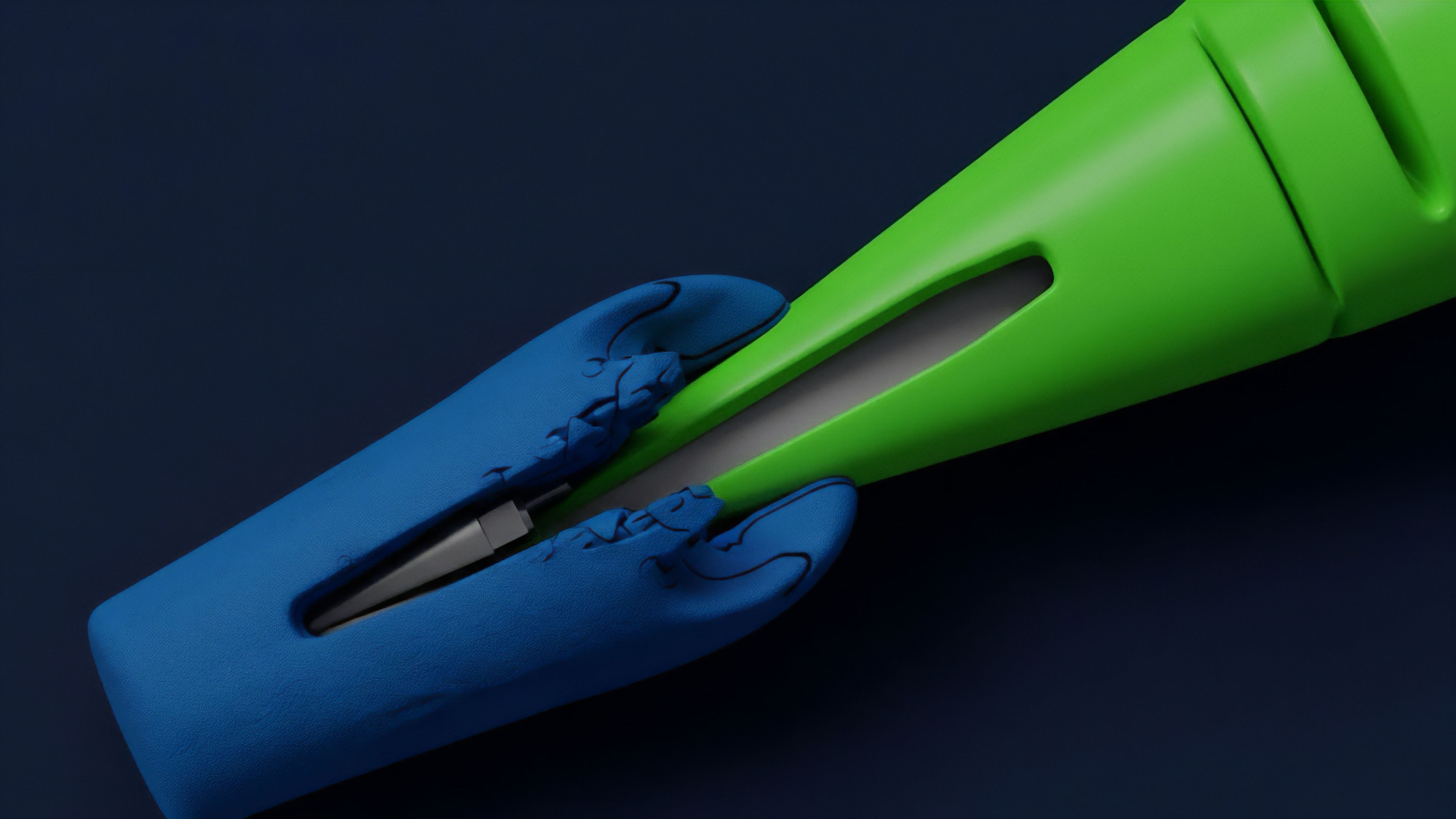

The transition to decentralized oracle networks marked a significant step forward. These networks, such as Chainlink, introduced a layer of economic security where data providers are incentivized to report accurate data and penalized for providing false information. The next stage of evolution involved addressing the “last-mile problem” of data fragmentation.

As the crypto ecosystem expanded to include multiple Layer 1 blockchains and Layer 2 solutions, data sources became fragmented across different networks. A protocol on an L2 solution cannot easily access real-time data from a DEX on another L1 without a cross-chain communication mechanism. This created new latency challenges and increased the complexity of synthesis.

A major recent shift has been the move from synthesizing simple price feeds to synthesizing more complex volatility feeds. The current generation of options protocols recognizes that a single price feed is insufficient for robust risk management. The synthesis now involves calculating and delivering real-time volatility data, including the skew (the difference in implied volatility across strikes, commonly measured between out-of-the-money puts and out-of-the-money calls).

This allows protocols to adjust margin requirements dynamically based on market sentiment, leading to more capital-efficient systems. The following table compares early and current approaches to data synthesis:

| Feature | Early Synthesis Approach (2019-2021) | Current Synthesis Approach (2022-Present) |
|---|---|---|
| Primary Data Source | Single DEX or simple TWAP from a few sources. | Multi-source aggregation across CEXs and DEXs, specialized oracle networks. |
| Volatility Data | None or static historical volatility. | Dynamic, real-time implied volatility feeds; synthesis of volatility surfaces. |
| Security Model | Trust in a single data source or simple economic incentives. | Decentralized network consensus, economic collateralization, and data filtering. |
| Update Frequency | Low frequency (e.g. once per hour) or event-triggered. | Higher frequency updates, often triggered by price deviation thresholds. |

Horizon

The future of Data Source Synthesis for crypto options lies in a move toward truly autonomous and predictive systems. The current synthesis model is reactive: it aggregates already-observed data to determine the current state. The next generation will be predictive, incorporating advanced models to forecast future volatility.

This involves synthesizing a wider range of data, including social sentiment, fundamental network metrics, and predictive models. The primary goal is to move beyond simply synthesizing data for pricing and toward creating a fully autonomous risk management engine. This engine will use synthesized data to dynamically adjust collateral requirements, manage protocol liquidity, and automate risk-off actions during periods of extreme market stress.

This requires a significant upgrade in data processing capabilities, potentially utilizing zero-knowledge proofs to verify data integrity without revealing underlying sources.

Future synthesis will shift from reactive data aggregation to predictive modeling, utilizing ZK-proofs for data verification and incorporating fundamental network metrics to create truly autonomous risk management engines.

The ultimate horizon involves integrating Data Source Synthesis with advanced quantitative strategies. This includes synthesizing real-time skew and term structure data to enable automated trading strategies that capitalize on volatility arbitrage opportunities. The goal is to create a system where options protocols can function with the efficiency of centralized exchanges, but with the transparency and security of decentralized infrastructure. This requires solving the remaining challenges of cross-chain data transfer and data provenance. The focus will shift from simply reporting a price to delivering a complete, verified, and statistically sound risk profile of the underlying asset.
